Abstract

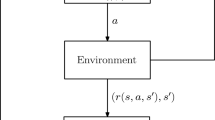

Value-based, policy gradient, and actor-critic methods are all widely used to solve reinforcement learning problems, which raises the question of whether one of them is superior in general, or at least better suited to particular circumstances. In this paper, we compare these methods and identify their advantages and disadvantages. We illustrate the resulting insights with three example algorithms: REINFORCE, DQN, and DDPG. Finally, we give brief suggestions on which approach to use under which conditions.

Copyright information

© 2021 The Editor(s) (if applicable) and The Author(s), under exclusive license to Springer Nature Switzerland AG

About this chapter

Cite this chapter

Scharf, F., Helfenstein, F., Jäger, J. (2021). Actor vs Critic: Learning the Policy or Learning the Value. In: Belousov, B., Abdulsamad, H., Klink, P., Parisi, S., Peters, J. (eds) Reinforcement Learning Algorithms: Analysis and Applications. Studies in Computational Intelligence, vol 883. Springer, Cham. https://doi.org/10.1007/978-3-030-41188-6_11

DOI: https://doi.org/10.1007/978-3-030-41188-6_11

Publisher Name: Springer, Cham

Print ISBN: 978-3-030-41187-9

Online ISBN: 978-3-030-41188-6

eBook Packages: Intelligent Technologies and Robotics (R0)