Abstract

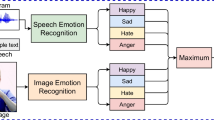

In this paper we present a multimodal approach to recognizing eight emotions that integrates information from facial expressions, body movement and gestures, and speech. We trained and tested a model with a Bayesian classifier on a multimodal corpus covering eight emotions and ten subjects. First, individual classifiers were trained for each modality. Then the data were fused at the feature level and at the decision level. Fusing multimodal data substantially increased recognition rates compared with the unimodal systems: the multimodal approach improved on the most successful unimodal system by more than 10%. Furthermore, fusion performed at the feature level gave better results than fusion performed at the decision level.
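The difference between the two fusion schemes can be illustrated with a minimal sketch. Everything in it is an assumption made for illustration: the abstract says only that a Bayesian classifier was used, so a small Gaussian naive Bayes stands in for it, the data are synthetic, and the function names (`fit_gnb`, `feature_level_fusion`, `decision_level_fusion`, `make_toy_data`) are hypothetical rather than taken from the paper. Feature-level fusion concatenates the per-modality feature vectors and trains one classifier; decision-level fusion trains one classifier per modality and combines their posteriors, here with a product rule.

```python
import math
import random


def fit_gnb(X, y):
    """Fit a Gaussian naive Bayes model (a stand-in, not the paper's classifier)."""
    model = {}
    for label in sorted(set(y)):
        rows = [x for x, t in zip(X, y) if t == label]
        n = len(rows)
        means = [sum(col) / n for col in zip(*rows)]
        # Small constant keeps variances strictly positive.
        variances = [sum((v - m) ** 2 for v in col) / n + 1e-6
                     for col, m in zip(zip(*rows), means)]
        model[label] = (n / len(X), means, variances)
    return model


def log_posteriors(model, x):
    """Return normalised log P(class | x) for a single sample."""
    scores = {}
    for label, (prior, means, variances) in model.items():
        s = math.log(prior)
        for v, m, var in zip(x, means, variances):
            s += -0.5 * math.log(2 * math.pi * var) - (v - m) ** 2 / (2 * var)
        scores[label] = s
    top = max(scores.values())
    log_norm = top + math.log(sum(math.exp(s - top) for s in scores.values()))
    return {label: s - log_norm for label, s in scores.items()}


def predict(model, x):
    """Pick the class with the highest posterior."""
    post = log_posteriors(model, x)
    return max(post, key=post.get)


def feature_level_fusion(modalities):
    """Concatenate the feature vectors of all modalities, sample by sample."""
    n_samples = len(modalities[0])
    return [sum((list(m[i]) for m in modalities), []) for i in range(n_samples)]


def decision_level_fusion(models, sample_per_modality):
    """Sum log posteriors of the unimodal models (product rule, an assumption)."""
    total = {}
    for model, x in zip(models, sample_per_modality):
        for label, lp in log_posteriors(model, x).items():
            total[label] = total.get(label, 0.0) + lp
    return max(total, key=total.get)


def make_toy_data(n_per_class=30, seed=0):
    """Synthetic two-class data; the feature values carry no real meaning."""
    rng = random.Random(seed)
    face, gesture, speech, labels = [], [], [], []
    for label, centre in (("anger", 0.0), ("joy", 3.0)):
        for _ in range(n_per_class):
            face.append([rng.gauss(centre, 1.0), rng.gauss(centre, 1.0)])
            gesture.append([rng.gauss(centre, 1.0)])
            speech.append([rng.gauss(-centre, 1.0)])
            labels.append(label)
    return face, gesture, speech, labels


if __name__ == "__main__":
    face, gesture, speech, labels = make_toy_data()
    # Feature level: one classifier trained on the concatenated vectors.
    fused_model = fit_gnb(feature_level_fusion([face, gesture, speech]), labels)
    print(predict(fused_model, face[0] + gesture[0] + speech[0]))
    # Decision level: one classifier per modality, posteriors combined afterwards.
    unimodal = [fit_gnb(m, labels) for m in (face, gesture, speech)]
    print(decision_level_fusion(unimodal, (face[0], gesture[0], speech[0])))
```

With naive Bayes and a product rule the two schemes are nearly equivalent (they differ only in how often the class prior is counted), so this toy setup is not expected to reproduce the gap between feature-level and decision-level fusion reported above. It only shows where in the pipeline each kind of fusion takes place.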

Copyright information

© 2007 International Federation for Information Processing

About this paper

Cite this paper

Caridakis, G. et al. (2007). Multimodal emotion recognition from expressive faces, body gestures and speech. In: Boukis, C., Pnevmatikakis, A., Polymenakos, L. (eds) Artificial Intelligence and Innovations 2007: from Theory to Applications. AIAI 2007. IFIP The International Federation for Information Processing, vol 247. Springer, Boston, MA. https://doi.org/10.1007/978-0-387-74161-1_41

DOI: https://doi.org/10.1007/978-0-387-74161-1_41

Publisher Name: Springer, Boston, MA

Print ISBN: 978-0-387-74160-4

Online ISBN: 978-0-387-74161-1

eBook Packages: Computer Science (R0)