Abstract

The selection of the image focus discrimination function is the basis for obtaining high-quality images in automatic image measurement. This paper comprehensively compares the performance of several digital image processing algorithms for automatic image focus discrimination, and quantitatively analyzes the calculation speed, uniqueness, accuracy and sensitivity of the different algorithms. Firstly, the paper briefly summarizes the imaging principle and focusing principle of automatic image focusing in digital image processing, and then analyzes and compares the image information entropy function, gray gradient function, frequency domain evaluation function, and other evaluation functions. Finally, the window area selection and focus search algorithms are described from the aspects of depth of field and focal depth, algorithm selection, and the direction of algorithm improvement. The analysis of the characteristics of the image focusing discriminant functions provides guidance for the automatic focusing control required by automatic image measurement.

1 Introduction

Automatic image measurement technology can replace the human eye in measuring target parameters with the image as the carrier, and automatic focusing technology is what allows the image measurement to be completed automatically with accurate and reliable results. As a key topic in visual instrument research, it is valued by researchers in many fields at home and abroad. Image auto focusing is an important technology in digital image processing. If the level of image auto focusing is low, the capability of the digital image processing system is greatly reduced. Only by improving the sensitivity of image auto focusing and reducing its complexity and dispersion can the digital image processing system be used to full effect.

The focus evaluation operation using the digital image processing method is based on the clarity of the object in the captured image. An ideal focus evaluation function should have high sensitivity, a single value, no deviation, a small amount of calculation and a high signal-to-noise ratio (SNR). High sensitivity means that the evaluated value should change markedly near the focus; single value means that the function should have a single extreme point; no deviation means that the calculated focus position is consistent with the actual measured position; a small amount of calculation means simple operation or short operation time; high SNR means that the result of the function is little affected by irrelevant content. The characteristics of a focus evaluation function depend on the function selected. In this paper, several common image focus evaluation algorithms based on image processing are analyzed and compared, and their discrimination time, sensitivity and single-value results are given. The paper consists of the following parts. The first part introduces the background and significance of the work, the second part covers related work, the third part is data analysis, the fourth part is example analysis, and the fifth part is the conclusion.

2 Related Work

The soot particles produced from diffusion flames burning biodiesel fuel were thermophoretically sampled, and the carbon nanostructure of the soot particles was imaged using high resolution transmission electron microscopy (HRTEM) [1]. Lei et al. report a new quantitative measurement method for polarization direction based on the polarization axis finder (PAF) and digital image processing [2]. Choodowicz et al. present an application of a hybrid algorithm for the detection and recognition of railway signaling [3]. Various applications of the firefly algorithm in image analysis are also discussed [4]. A digital image processing algorithm based on sampling aerosol inhomogeneities was developed for the applied problem of laser remote sensing of wind velocity [5]. Charu et al. focus on the outstanding features of FPGA technology [6]. The Prewitt algorithm serves to identify the lines and edges of an image object in order to highlight the boundary lines of the image information [7]. Tan et al. [8] presented a calculation method based on a digital image processing algorithm, with which many images can be handled by the same algorithm. Some image analysis tasks, however, call for different algorithms; for example, some images contain considerable noise, so functional analysis is necessary [9].

Because this design uses the opening and closing operations to denoise the binary image and an edge extraction function to obtain image edge feature data, the discussion concentrates on the theory behind the opening and closing operations and edge extraction.

Automatic image focusing can be realized by the ranging method, that is, by measuring the distance between the measured object and the imaging surface, or by the image gray contrast analysis method. The former is called the active mode and the latter the passive mode. The judgment of image gray contrast can be realized by an optical method or by the focusing evaluation function method of digital image processing. Automatic focusing based on the ranging method and on optical contrast judgment is quite mature, but cameras using these two methods are structurally complex and cannot be used in some special automatic image measurement settings, such as short-range image measurement beyond the resolution of the ranging method and image analysis of small objects.

2.1 Related Theories and Tools of Image Processing

Firstly, the structural elements are introduced. A structural element can be regarded as a small pattern much smaller than the image to be processed. Structural elements are widely used in morphological operations, mainly dilation (expansion), erosion (corrosion), and the opening and closing operations. Dilation is defined as follows:

$$D = X \oplus B = \left\{ {\left( {x,y} \right)\;|\;B_{xy} \cap X \ne \emptyset } \right\}$$ (1)

Here B is the structural element used for dilation, \(B_{xy}\) is B with its origin placed at the point (x, y), X is the image to be dilated, and D is the binary image obtained by dilating X with B. Every point (x, y) in D meets one requirement: when the origin of the structural element B coincides with (x, y), the intersection of the set of points covered by B and the binary image X is not empty.

In more popular terms, dilation (expansion) replaces the pixel value at the origin of the structural element with the largest pixel value in the set of pixels the element covers. The image used for the dilation operation is a binary (black-and-white) image, so its pixel values are only 0 and 255. Therefore, if a white pixel appears anywhere in the area covered by the structural element, the pixel at the origin is set to 255, that is, it becomes white; otherwise it remains 0 (black). Since the pixels just outside the edge of an object are usually black (0), dilation is equivalent to an outward expansion of the edge position.

The role of dilation (expansion) is therefore clear. On a binary image, small foreground areas in contact with a large foreground area are merged into it, the boundary of the large area expands outward, and holes inside the large area are filled.

Erosion (corrosion), described in easily understood terms, replaces the pixel value at the origin of the structural element with the smallest pixel value in the set of pixels the element covers. The image used for the erosion operation is a binary (black-and-white) image, so its pixel values are only 0 and 255. Therefore, if a black pixel appears anywhere in the area covered by the structural element, the pixel at the origin is set to 0, that is, it becomes black; otherwise it is set to 255, that is, it becomes white. Since the pixels bordering the edge of an object are usually black (0), erosion is equivalent to a contraction of the edge position. The effect of erosion is thus to remove scattered points along the edge, so that the edge shrinks toward the interior of the object, and to eliminate small, meaningless scattered objects.

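To sum up, dilation (expansion) and erosion (corrosion) act in opposite directions: dilation grows the object and erosion shrinks it. They are dual operations rather than exact inverses, which is why applying one after the other (opening or closing) changes the image instead of restoring it. The general algorithm flow chart is shown in Fig. 1.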
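The two operations described above can be sketched in a few lines of plain Python. This is a minimal illustration rather than the implementation used in the paper: the function names `dilate`, `erode` and `_apply` are hypothetical, the origin of the structural element is assumed to be its centre, and pixels outside the image are simply ignored.

```python
def _apply(image, selem, pick):
    """Slide selem over image and combine the covered pixels with pick."""
    rows, cols = len(image), len(image[0])
    oy, ox = len(selem) // 2, len(selem[0]) // 2  # origin assumed at the centre
    out = [[0] * cols for _ in range(rows)]
    for y in range(rows):
        for x in range(cols):
            # Collect the in-bounds pixels covered by the active cells of selem.
            covered = [
                image[y + j - oy][x + i - ox]
                for j, row in enumerate(selem)
                for i, on in enumerate(row)
                if on and 0 <= y + j - oy < rows and 0 <= x + i - ox < cols
            ]
            out[y][x] = pick(covered, default=image[y][x])
    return out


def dilate(image, selem):
    """Binary dilation: the origin takes the largest covered value (0 or 255)."""
    return _apply(image, selem, max)


def erode(image, selem):
    """Binary erosion: the origin takes the smallest covered value (0 or 255)."""
    return _apply(image, selem, min)


# A single white pixel grows into a 3x3 block under dilation,
# and erosion of that block shrinks it back to the single pixel.
image = [[0] * 5 for _ in range(5)]
image[2][2] = 255
square = [[1] * 3 for _ in range(3)]
opened_back = erode(dilate(image, square), square)
```

With a symmetric structural element such as `square`, the max-based form matches the set definition of dilation; for asymmetric elements the set definition uses the reflected element, which this sketch does not handle.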

2.2 Digital Image Focusing Principle

According to Fourier optics theory, the degree of image clarity or focus is mainly determined by the amount of high-frequency content in the light intensity distribution. If the high-frequency components are weak, the image is blurred; if they are rich, the image is clear. Therefore, the high-frequency content of the image light intensity distribution can be used as the main basis for the image definition evaluation function. Because the image contains edges, a fully focused image is clear and the high-frequency components carrying edge information are at their maximum; when out of focus, the image is blurred and the high-frequency components are reduced. Whether the image is focused can therefore be determined from the amount of high-frequency content in the image edge information.

Any optical imaging system can be treated as equivalent to an ideal Gaussian imaging system. According to Newton's imaging formula, the optical system can make the object plane and the image plane conjugate, that is, form an image, by adjusting one or more of the object distance, image distance and focal length. The better the conjugate relation is satisfied, the clearer the image, and vice versa. Only with correct focusing is the gray contrast strongest everywhere in the image, which is the theoretical basis for focusing judgment. In automatic image measurement, the object plane and imaging plane are generally fixed, so conjugate imaging is achieved by adjusting the position of the imaging lens. The optical path structure is shown in Fig. 2.

The gray variance of the image represents the degree of dispersion of the gray distribution of the image pixels. When the gray values of the pixels in the calculation area vary greatly, the gray variance is large; when all pixels in the calculation area have the same gray value, the gray variance takes its minimum value of zero. When the image is completely blurred, the dispersion of the pixel gray values is small and so is the gray variance; when the image is sharp, the dispersion is large and so is the gray variance. The gray variance function takes the average gray value of all pixels in the image window as the reference, computes the difference between each pixel's gray value and this average, sums the squares of the differences, and then normalizes by the number of pixels. It represents the average degree of gray change in the calculation area. Therefore, the gray variance function can characterize the sharpness of the image to a certain extent.
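The description of the gray variance function translates directly into code. The sketch below assumes the image window is given as a list of rows of gray values; the name `gray_variance` is illustrative and does not come from the paper.

```python
def gray_variance(window):
    """Mean squared deviation of the gray values from the window average."""
    pixels = [p for row in window for p in row]
    mean = sum(pixels) / len(pixels)
    return sum((p - mean) ** 2 for p in pixels) / len(pixels)


# A uniform window has zero variance; a high-contrast window scores higher
# than a low-contrast one, which is what a focus measure needs.
flat = [[128, 128], [128, 128]]
soft = [[100, 150], [150, 100]]
sharp = [[0, 255], [255, 0]]
scores = [gray_variance(w) for w in (flat, soft, sharp)]
```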

3 Data Analysis

3.1 Principle of Automatic Image Focusing for Digital Image Processing

(1) Principle of imaging

Although automatic image focusing technology is advanced, its imaging principle is basically the same as that of convex lens imaging. The formula of convex lens imaging principle is as follows:

$$\frac{1}{u} + \frac{1}{v} = \frac{1}{f}$$ (2)

In formula (2), u is the distance between the convex lens and the object, v is the distance between the convex lens and the imaging plane, and f is the focal length of the convex lens. According to the principle of convex lens imaging, the convex lens imaging model can be obtained as shown in Fig. 3:

In Fig. 3, u, v, f, D, p and R denote the object distance, image distance, focal length, convex lens diameter, object position and imaging radius, respectively. When the digital image processing system is defocused, the image detector lies at a distance s from the convex lens rather than at the image distance v, so the object leaves a blurred image on the image detector. The distance between the focus plane and the image detector is s − v. If the value of s − v continues to increase, the image on the image detector becomes more blurred. According to the similar triangles in Fig. 3, the imaging scaling factor formula can be obtained as follows:

$$q = \frac{2R}{D} = \frac{s - v}{v} = s\left( {\frac{1}{v} - \frac{1}{s}} \right)$$ (3)

In formula (3), q is the imaging scaling factor. The following formula can be obtained from the convex lens imaging formula and the imaging scaling factor formula:

$$R = q\frac{D}{2} = s\frac{D}{2}\left( {\frac{1}{f} - \frac{1}{u} - \frac{1}{s}} \right)$$ (4)

Formula (4) shows that when s > v, q > 0 and R > 0, and the imaging surface is behind the positive focus position; when s < v, q < 0 and R < 0, and the imaging surface is in front of the positive focus position. Therefore, digital image processing can realize auto-focusing according to the principle of formula (4).
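As a worked numerical check of formula (4), the following sketch computes the in-focus image distance from the convex lens formula and the signed imaging radius for a given detector position. It takes the scaling factor as q = (s − v)/v, which is the reading consistent with formula (4). The function names and the sample values (a 50 mm lens, an object at 1000 mm, a 25 mm aperture) are assumptions chosen only for illustration.

```python
def image_distance(u, f):
    """In-focus image distance v from 1/u + 1/v = 1/f."""
    return 1.0 / (1.0 / f - 1.0 / u)


def blur_radius(u, f, s, D):
    """Signed imaging radius R = s * (D / 2) * (1/f - 1/u - 1/s)."""
    q = s * (1.0 / f - 1.0 / u - 1.0 / s)  # scaling factor, equal to (s - v) / v
    return q * D / 2.0


u, f, D = 1000.0, 50.0, 25.0          # hypothetical values, all in millimetres
v = image_distance(u, f)              # detector position for a sharp image
r_focused = blur_radius(u, f, v, D)   # vanishes (up to rounding) when s == v
r_behind = blur_radius(u, f, 55.0, D) # s > v gives R > 0
r_front = blur_radius(u, f, 50.0, D)  # s < v gives R < 0
```

The sign of R tells the controller on which side of the focus plane the detector sits, which is the information a focusing loop needs to choose the direction of lens movement.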

(2) Principle of focusing

The development of automatic focusing in digital image processing is divided into two stages. The first stage mainly used traditional automatic focusing systems, and the second stage mainly uses image-based automatic focusing systems. In the traditional approach, the lens is first adjusted to include the target, and the image captured by the CCD/CMOS camera is passed to a PC or embedded system. The embedded system then decides, according to the image definition, whether the lens should be readjusted through the motor control module. Image-based automatic focusing falls into two cases: focusing depth (depth from focus) and defocusing depth (depth from defocus). In the focusing depth case, a search algorithm is used to focus, and the image processing module determines whether the image is clear. In the defocusing depth case, the defocus depth is calculated from the parameter information of defocused images, or from a degradation model of the defocused image together with the blurred images. In either case, the image definition can finally be adjusted to the best.

3.2 Evaluation Function of Automatic Image Focusing for Digital Image Processing

(1) Information entropy function for images

The formula of image information entropy function is as follows:

$$F = - \sum\limits_{i} p_{i} \log_{b} \left( {p_{i} } \right)$$ (5)

In formula (5), \(p_{i}\) is the probability of the information being characterized, here the probability of the i-th gray value, and b = 2. In digital image processing, the gray levels of the auto-focusing image are treated as independent, and each gray value has its own probability. On this basis, \(p_{i}\) can be calculated from the gray histogram.
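Formula (5) can be evaluated from the normalized gray histogram. The sketch below is a minimal illustration under that reading; the name `entropy_focus` is hypothetical, and gray levels that do not occur in the image are skipped so that the logarithm of zero is never taken.

```python
import math
from collections import Counter


def entropy_focus(image, base=2):
    """Information entropy of the gray histogram, F = -sum(p_i * log_b(p_i))."""
    pixels = [p for row in image for p in row]
    total = len(pixels)
    histogram = Counter(pixels)  # gray value -> number of occurrences
    return -sum(
        (count / total) * math.log(count / total, base)
        for count in histogram.values()
    )


# One gray level carries no information; two equally likely levels carry
# one bit, and four equally likely levels carry two bits.
one_level = entropy_focus([[7, 7], [7, 7]])
two_levels = entropy_focus([[0, 255], [255, 0]])
four_levels = entropy_focus([[0, 85], [170, 255]])
```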

(2) Grayscale gradient function

Both the fluctuation of the gray level and its absolute change depend on the gray value at each point of the image and on the image size in pixels, and the gradient vector modulus function is in turn built from these gray level changes. Therefore, the gray gradient vector modulus function can be obtained from the gray fluctuation and absolute change:

$$F = \sum\nolimits_{x}^{M} \sum\nolimits_{y}^{N} \left\{ {\left[ {g\left( {x + 1,y} \right) - g\left( {x,y} \right)} \right]^{2} + \left[ {g\left( {x,y + 1} \right) - g\left( {x,y} \right)} \right]^{2} } \right\}^{1/2}$$ (6)

In formula (6), M × N is the image size in pixels, (x, y) is a point in the image, and g(x, y) is the gray value of that point.
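Formula (6) sums the modulus of the forward-difference gradient over the image. A minimal sketch follows, assuming the image is a list of rows indexed as `g[x][y]` and that the last row and column are skipped because they have no forward neighbour; the name `gradient_focus` is illustrative.

```python
import math


def gradient_focus(g):
    """Sum of gradient moduli using forward differences, as in formula (6)."""
    total = 0.0
    for x in range(len(g) - 1):
        for y in range(len(g[0]) - 1):
            dx = g[x + 1][y] - g[x][y]  # change along the first index
            dy = g[x][y + 1] - g[x][y]  # change along the second index
            total += math.sqrt(dx * dx + dy * dy)
    return total


# A flat image has no gradient; a 3-4-5 step gives a modulus of exactly 5.
flat_score = gradient_focus([[9, 9], [9, 9]])
step_score = gradient_focus([[0, 3], [4, 0]])
```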

(3) Frequency domain evaluation function

Based on Fourier transform, the frequency domain evaluation function can be obtained as follows:

$$F = \sum\nolimits_{X}^{M} \sum\nolimits_{Y}^{N} \left( {\sum\nolimits_{x}^{M} \sum\nolimits_{y}^{N} g\left( {x,y} \right)W_{MN}^{xyXY} } \right) - \varphi$$ (7)

In formula (7), (x, y) is the spatial coordinate vector of the image, (X, Y) is the coordinate vector of the image in the corresponding spatial frequency domain, and \({W}_{MN}^{xyXY}\) is the two-dimensional Fourier transform matrix applied to g(x, y).
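To make the frequency domain idea concrete, the sketch below computes a two-dimensional discrete Fourier transform directly from its definition and sums the magnitudes of all coefficients except the zero-frequency term. This is one common frequency-domain focus measure and is offered only as an assumption-laden illustration: it is not necessarily the exact form of formula (7), and dropping the zero-frequency term is a choice made here, not a definition of φ. The direct transform is slow and is suitable only for small windows.

```python
import cmath


def dft2(g):
    """Two-dimensional discrete Fourier transform computed from its definition."""
    M, N = len(g), len(g[0])
    return [
        [
            sum(
                g[x][y] * cmath.exp(-2j * cmath.pi * (x * X / M + y * Y / N))
                for x in range(M)
                for y in range(N)
            )
            for Y in range(N)
        ]
        for X in range(M)
    ]


def frequency_focus(g):
    """Sum of spectrum magnitudes, excluding the zero-frequency term."""
    spectrum = dft2(g)
    return sum(
        abs(value)
        for X, row in enumerate(spectrum)
        for Y, value in enumerate(row)
        if (X, Y) != (0, 0)
    )


# A flat window has no energy away from zero frequency, while a
# checkerboard concentrates its energy at the highest frequency.
flat = [[1] * 4 for _ in range(4)]
checker = [[(x + y) % 2 for y in range(4)] for x in range(4)]
flat_score = frequency_focus(flat)
checker_score = frequency_focus(checker)
```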

(4) Function analysis and comparison

After comparing the sensitivity, precision, deviation, complexity, signal-to-noise ratio, time and other parameters of each function, the following conclusions can be drawn: the image information entropy function has a long focus time and a poor focus position; the gray gradient function has a short focus time but high focus dispersion; and the frequency domain evaluation function has strong focus sensitivity. Therefore, the most suitable function for automatic focusing in digital image processing is the frequency domain evaluation function, although a function obtained from the Fourier transform is unfavorable in terms of complexity. The frequency domain evaluation function needs to be further optimized, or other transform-based functions, such as those from wavelet analysis, need to be explored.

4 Example Analysis

4.1 Area Selection and Focus Search Algorithm for Digital Image Processing Window

(1) Effects of depth of field and focal depth

The depth of field and focal depth of the digital image processing window both affect focusing: the deeper they are, the more blurred the image can be while still being accepted as in focus. The larger the depth of field of the camera window, the smaller the aperture, and the distance, focusing parameters and imaging clarity are all affected.

(2) Algorithm selection

a. Blind hill-climbing (mountain climbing) algorithm

The principle of the blind hill-climbing algorithm is to locate the peak of the focus evaluation curve while climbing it; the peak gives the best focus position, where the image definition is highest. The algorithm can optimize the use of the automatic image focusing evaluation function in digital image processing, improve the focusing speed and reduce the deviation of the focused image.
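A simple variant of the hill-climbing search can be sketched as follows. It assumes integer lens positions and a callable `focus_at(position)` that returns the focus evaluation value; both names, and the step-halving strategy, are assumptions for illustration rather than the procedure used in the paper.

```python
def hill_climb(focus_at, start, step, lo, hi):
    """Climb toward the peak of focus_at over integer positions in [lo, hi].

    The lens moves by `step` while the focus value improves; when neither
    direction improves, the step is halved until it drops below one.
    """
    position, best = start, focus_at(start)
    while step >= 1:
        moved = False
        for direction in (+1, -1):
            candidate = position + direction * step
            if lo <= candidate <= hi:
                value = focus_at(candidate)
                if value > best:  # strictly better, so the loop terminates
                    position, best, moved = candidate, value, True
                    break
        if not moved:
            step //= 2  # refine the search around the current position
    return position


# Hypothetical single-peaked focus curve with its maximum at position 37.
best_position = hill_climb(lambda p: -(p - 37) ** 2, start=0, step=16, lo=0, hi=100)
```

On a noisy or multi-peaked focus curve this kind of search can stop at a local peak, which is one reason the single-value property of the evaluation function matters.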

b. Curve fitting algorithm

The principle of the curve fitting algorithm is to approximate the original, complex focus evaluation curve with a simple function, so that the extreme point of the original curve can be obtained from the extreme point of the fitted function near it. The algorithm can improve the accuracy of focusing, but it places certain requirements on the maximum value of the image data.
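One simple instance of the curve fitting idea is to fit a parabola through three samples of the focus curve around its largest value and take the vertex as the estimated focus position. The sketch below assumes this quadratic model, which the paper does not specify; the function name `parabola_peak` is hypothetical.

```python
def parabola_peak(x1, y1, x2, y2, x3, y3):
    """Abscissa of the vertex of the parabola through three sample points."""
    numerator = (x2 - x1) ** 2 * (y2 - y3) - (x2 - x3) ** 2 * (y2 - y1)
    denominator = (x2 - x1) * (y2 - y3) - (x2 - x3) * (y2 - y1)
    if denominator == 0:
        raise ValueError("the three points are collinear; no unique vertex")
    return x2 - 0.5 * numerator / denominator


# Three samples of a hypothetical focus curve that peaks at 3.2.
def curve(x):
    return 5.0 - (x - 3.2) ** 2


estimate = parabola_peak(2, curve(2), 3, curve(3), 4, curve(4))
```

Because the fit interpolates between sampled lens positions, it can locate the peak more finely than the sampling step, provided the samples bracket the maximum.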

c. Fibonacci search algorithm

The Fibonacci search algorithm is an interval search algorithm. It evaluates hypothesized trial points during auto-focusing to identify the most suitable ones, and then determines the best auto-focusing interval by theoretical calculation. Although the algorithm can improve the focusing speed, it is prone to larger focusing deviations when the direction of movement changes.
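The interval reduction behind the Fibonacci search can be sketched as follows for a focus curve with a single peak. The callable `focus_at`, the default of 20 Fibonacci terms and the continuous lens position are assumptions made for this illustration.

```python
def fibonacci_search(focus_at, lo, hi, n=20):
    """Narrow [lo, hi] around the maximum of a single-peaked focus curve."""
    fib = [1, 1]
    while len(fib) <= n:
        fib.append(fib[-1] + fib[-2])
    a, b = float(lo), float(hi)
    # Two interior trial points placed at Fibonacci ratios of the interval.
    x1 = a + fib[n - 2] / fib[n] * (b - a)
    x2 = a + fib[n - 1] / fib[n] * (b - a)
    f1, f2 = focus_at(x1), focus_at(x2)
    for k in range(1, n - 1):
        if f1 < f2:
            # The peak lies in [x1, b]; reuse x2 as the new lower trial point.
            a, x1, f1 = x1, x2, f2
            x2 = a + fib[n - k - 1] / fib[n - k] * (b - a)
            f2 = focus_at(x2)
        else:
            # The peak lies in [a, x2]; reuse x1 as the new upper trial point.
            b, x2, f2 = x2, x1, f1
            x1 = a + fib[n - k - 2] / fib[n - k] * (b - a)
            f1 = focus_at(x1)
    return (a + b) / 2.0


# Hypothetical focus curve with its peak at lens position 37.3.
peak = fibonacci_search(lambda p: -(p - 37.3) ** 2, 0, 100)
```

Each iteration needs only one new focus evaluation because one trial point is reused, which is where the speed advantage of the method comes from. Successive trial points may lie on opposite sides of the current position, so the lens can be asked to reverse direction between evaluations.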

(3) Direction of algorithm improvement

a. Improve accuracy

Accuracy is one of the criteria for judging whether an automatic image focusing evaluation function is suitable for digital image processing. If the image is blurred, the minimum gradient value can be adjusted according to the numerical change of the gradient values. This reduces the influence of very small gradients on the image definition and improves the accuracy of the evaluation function.

b. Improve SNR

The signal-to-noise ratio (SNR) represents the ability of the auto-focusing algorithm to resist noise interference, and increasing the SNR can reduce the probability that the auto-focusing algorithm is misled by noise. Therefore, the image processing can directly extract most of the gradient values and then feed them into the gradient matrix calculation with a simple algorithm, so that the SNR is improved.
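One way to read the two improvements above is as a gradient focus measure with a minimum gradient threshold: gradients below the threshold are treated as noise and discarded, and only the remaining values contribute. The sketch below follows that interpretation, which is an assumption rather than the method stated in the paper; the name `thresholded_gradient_focus` and the threshold value are illustrative.

```python
def thresholded_gradient_focus(g, threshold):
    """Sum of squared gradient moduli, ignoring gradients below threshold."""
    total = 0
    for x in range(len(g) - 1):
        for y in range(len(g[0]) - 1):
            dx = g[x + 1][y] - g[x][y]
            dy = g[x][y + 1] - g[x][y]
            squared = dx * dx + dy * dy
            if squared >= threshold * threshold:  # keep only significant gradients
                total += squared
    return total


# Small fluctuations (noise) are suppressed, while a real edge still counts.
noise_only = thresholded_gradient_focus([[10, 11], [11, 10]], threshold=5)
real_edge = thresholded_gradient_focus([[0, 100], [0, 100]], threshold=5)
```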

4.2 Analysis of Computational Complexity of Focus Function

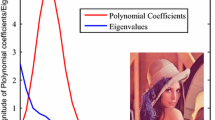

The time required for a given operation differs between computers of different performance, but on the same computer a relatively complex operation takes longer than a simple one. To compare the complexity of various operations in theory, we express the complexity of each operation as the equivalent number of additions. The operations involved in the formulas, in ascending order of operation time, are: addition/subtraction, multiplication/division, square operation, square root operation, logarithm operation, and sorting and comparison of image gray values. On this basis, the operation complexity of the focus evaluation functions above is arranged in ascending order as F1, F2, F3, F4, F5, F6, F7 and F8, which is also confirmed by experiments on specific images. Because the computer receives random external and internal interrupt requests, even the same calculation takes a different time on each run. In this paper, the average time of five runs is taken as the evaluation parameter of algorithm speed. Figure 4 shows the corresponding graphical representation.
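The averaging of five runs described above can be sketched with the standard library timer. The helper name `mean_runtime` and the stand-in focus function are hypothetical; any of the focus evaluation functions could be passed in its place.

```python
import time


def mean_runtime(focus_function, image, repeats=5):
    """Average wall-clock time of focus_function(image) over several runs."""
    durations = []
    for _ in range(repeats):
        start = time.perf_counter()
        focus_function(image)
        durations.append(time.perf_counter() - start)
    return sum(durations) / repeats


# Stand-in focus function: the sum of all gray values in a small test image.
test_image = [[(x * y) % 256 for y in range(64)] for x in range(64)]
average_seconds = mean_runtime(lambda img: sum(map(sum, img)), test_image)
```

Averaging smooths out the run-to-run variation caused by interrupts, so the resulting figures are better suited to ranking the functions than any single measurement.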

5 Conclusion

To sum up, this paper analyzes and compares the image information entropy function, the gray gradient function, the frequency domain evaluation function and other evaluation functions used for automatic image focusing in digital image processing. The image information entropy function has the disadvantages of an inaccurate focus position and a long focus time. The image information entropy function and the gray gradient function are therefore not well suited to automatic image focusing in digital image processing. Although the frequency domain evaluation function has some advantages, it still has shortcomings in automatic focusing time. These automatic image focusing evaluation functions can all be further improved.

References

[1] Choi, S., Park, S.: Quantitative image analysis of carbon nanostructure of particles produced from combustion process. J. Nanosci. Nanotechnol. 26(6), 1269–1278 (2018)

[2] Lei, B., Liu, S.: Efficient polarization direction measurement by utilizing the polarization axis finder and digital image processing. Opt. Lett. 40(4), 1468–1479 (2018)

[3] Choodowicz, E., Lisiecki, P., Lech, P.: Hybrid algorithm for the detection and recognition of railway signs. In: Burduk, R., Kurzynski, M., Wozniak, M. (eds.) Progress in Computer Recognition Systems, pp. 337–347. Springer International Publishing, Cham (2020). https://doi.org/10.1007/978-3-030-19738-4_34

[4] Dey, N., Chaki, J., Moraru, L., Fong, S., Yang, X.S.: Firefly algorithm and its variants in digital image processing: a comprehensive review. In: Dey, N. (ed.) Applications of Firefly Algorithm and its Variants. Springer Tracts in Nature-Inspired Computing. Springer, Singapore, vol. 17 no(2), pp. 346–355 (2019). https://doi.org/10.1007/978-981-15-0306-1_1

[5] Filimonov, P.A., Belov, M.L., Ivanov, S.E., Gorodnichev, V.A., Fedotov, Y.: An algorithm for measuring wind speed based on sampling aerosol inhomogeneities. Comput. Opt. 125, 116–128 (2020)

[6] Kumar, P., Singh, K.: Hardware model for efficient edge detection in images. In: 2020 IEEE International Conference on Computing, Power and Communication Technologies (GUCON), IEEE, pp. 1–6 (2020)

[7] Putra, A., Sihombing, V., Munandar, M.H.: Rancang bangun aplikasi deteksi tepi citra digital menggunakan algoritma prewitt. J. Digit. Inf. 113, 189–199 (2021)

[8] Tan, Y.L., Wang, H.L., Wang, Y.R.: Calculation of effective mode field area of photonic crystal fiber with digital image processing algorithm. Comput. Opt. 112, 163–176 (2018)

[9] Ibrahim, A., Mohamed, M., Ghoneim, M., Shawkly, M.: Digital image processing algorithm for functional analysis of renal perfusion. Int. Digit. Process. 38, 15–26 (2021)

Copyright information

© 2023 The Author(s), under exclusive license to Springer Nature Singapore Pte Ltd.

About this paper

Cite this paper

Xiao, H., Rosales, E. (2023). Analysis and Comparison of Automatic Image Focusing Algorithms in Digital Image Processing. In: Ahmad, I., Ye, J., Liu, W. (eds) The 2021 International Conference on Smart Technologies and Systems for Internet of Things. STSIoT 2021. Lecture Notes on Data Engineering and Communications Technologies, vol 122. Springer, Singapore. https://doi.org/10.1007/978-981-19-3632-6_68

DOI: https://doi.org/10.1007/978-981-19-3632-6_68

Publisher Name: Springer, Singapore

Print ISBN: 978-981-19-3631-9

Online ISBN: 978-981-19-3632-6

eBook Packages: Engineering, Engineering (R0)