1 Introduction

In Chap. 6, we discussed various optimization methods for deep neural network training. Although they take various forms, these algorithms are all essentially gradient-based local update schemes. However, the biggest obstacle recognized by the community is that the loss surfaces of deep neural networks are highly non-convex and not even smooth. This non-convexity and non-smoothness make the optimization difficult to analyze, and the main concern has been whether popular gradient-based approaches might get trapped in local minimizers.

Surprisingly, the success of modern deep learning may be due to the remarkable effectiveness of gradient-based optimization methods despite the highly non-convex nature of the optimization problem. Extensive research has been carried out in recent years to provide a theoretical understanding of this phenomenon. In particular, several recent works [119,120,121] have noted the importance of over-parameterization. In fact, it was shown that when the hidden layers of a deep network have a large number of neurons compared to the number of training samples, gradient descent or stochastic gradient descent converges to a global minimum with zero training error. While these results are intriguing and provide important clues for understanding the geometry of deep learning optimization, it is still unclear why simple local search algorithms can be successful for deep neural network training.

Indeed, deep learning optimization is a rapidly evolving area of intense research, and there are too many different approaches to cover in a single chapter. Rather than treating a variety of techniques in a disorganized way, this chapter explains two different lines of research as food for thought: one is based on the geometric structure of the loss function and the other is based on the results of Lyapunov stability. Although the two approaches are closely related, they have different advantages and disadvantages. By explaining these two approaches, we can cover some of the key research topics such as the optimization landscape [122,123,124], over-parameterization [119, 125,126,127,128,129], and the neural tangent kernel (NTK) [130,131,132], which have been used extensively to analyze the convergence properties of local search methods in deep learning.

2 Problem Formulation

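In Chap. 6, we pointed out that the basic optimization problem in neural network training can be formulated as

$$\displaystyle \begin{aligned} \min_{{\boldsymbol{\theta}}\in {\mathbb R}^n} \ell({\boldsymbol{\theta}}), \end{aligned} \tag{11.1}$$

where θ refers to the network parameters and \(\ell :{\mathbb R}^n\mapsto {\mathbb R}\) is the loss function. In the case of supervised learning with the mean square error (MSE) loss, the loss function is defined by

$$\displaystyle \begin{aligned} \ell({\boldsymbol{\theta}}) := \frac{1}{2}\left\|{\boldsymbol{y}} - {\boldsymbol{f}}_{{\boldsymbol{\theta}}}({\boldsymbol{x}})\right\|^2, \end{aligned} \tag{11.2}$$

where x, y denote the pair of the network input and the label, and fθ(⋅) is a neural network parameterized by trainable parameters θ. For the case of an L-layer feed-forward neural network, the regression function fΘ(x) can be represented by

$$\displaystyle \begin{aligned} {\boldsymbol{f}}_{\boldsymbol{\Theta}}({\boldsymbol{x}}) := \left(\sigma \circ {\boldsymbol{g}}^{(L)} \circ \sigma \circ {\boldsymbol{g}}^{(L-1)} \circ \cdots \circ \sigma \circ {\boldsymbol{g}}^{(1)}\right)({\boldsymbol{x}}), \end{aligned} \tag{11.3}$$

where σ(⋅) denotes the element-wise nonlinearity and

$$\displaystyle \begin{aligned} {\boldsymbol{g}}^{(l)} := {\boldsymbol{W}}^{(l)}{\boldsymbol{o}}^{(l-1)} + {\boldsymbol{b}}^{(l)}, \qquad {\boldsymbol{o}}^{(l)} := \sigma\left({\boldsymbol{g}}^{(l)}\right), \qquad {\boldsymbol{o}}^{(0)} := {\boldsymbol{x}}, \end{aligned} $$

for l = 1, ⋯ , L. Here, the number of the l-th layer hidden neurons, often referred to as the width, is denoted by \(d^{(l)}\), so that \({\boldsymbol {g}}^{(l)},{\boldsymbol {o}}^{(l)}\in {\mathbb R}^{d^{(l)}}\) and \({\boldsymbol {W}}^{(l)}\in {\mathbb R}^{d^{(l)}\times d^{(l-1)}}\).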
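The layer-wise recursion described above can be sketched in a few lines of plain Python. This is only an illustration under stated assumptions: ReLU is used as one possible choice of σ, the nonlinearity is applied after every layer including the last one, and the small 2-3-1 network with its weights is hypothetical and not taken from the text.

```python
def relu(v):
    """Element-wise ReLU, used here as one possible choice of sigma."""
    return [max(0.0, t) for t in v]


def affine(W, b, o):
    """Compute g = W o + b for a weight matrix W (list of rows) and bias b."""
    return [sum(w_ij * o_j for w_ij, o_j in zip(row, o)) + b_i
            for row, b_i in zip(W, b)]


def forward(layers, x, sigma=relu):
    """Apply an affine map followed by sigma, layer by layer, starting from x."""
    o = list(x)
    for W, b in layers:
        # W has d^(l) rows and d^(l-1) columns, so each row must match len(o).
        assert all(len(row) == len(o) for row in W)
        o = sigma(affine(W, b, o))
    return o


# Hypothetical network with widths d^(0) = 2, d^(1) = 3, d^(2) = 1.
layers = [
    ([[1.0, -1.0], [0.5, 0.5], [-1.0, 2.0]], [0.0, -1.0, 0.5]),
    ([[1.0, 2.0, 1.0]], [0.25]),
]
output = forward(layers, [1.0, 2.0])
print(output)
```

The shape check inside `forward` mirrors the dimension statement in the text: the weight matrix of layer l must have as many columns as the previous layer has neurons.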

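The popular local search approaches based on gradient descent use the following update rule:

$$\displaystyle \begin{aligned} {\boldsymbol{\theta}}[k+1] = {\boldsymbol{\theta}}[k] - \eta_k \left.\frac{\partial \ell({\boldsymbol{\theta}})}{\partial {\boldsymbol{\theta}}}\right|{}_{{\boldsymbol{\theta}}={\boldsymbol{\theta}}[k]}, \end{aligned} \tag{11.7}$$

where ηk denotes the k-th iteration step size. In a differential equation form, the update rule can be represented by

$$\displaystyle \begin{aligned} \dot{\boldsymbol{\theta}}[t] = - \frac{\partial \ell({\boldsymbol{\theta}}[t])}{\partial {\boldsymbol{\theta}}}, \end{aligned} \tag{11.8}$$

where \(\dot {\boldsymbol {\theta }}[t] = {\partial {\boldsymbol {\theta }}[t]}/{\partial t}\).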

As previously explained, the optimization problem (11.1) is highly non-convex, and it is known that the gradient-based local search schemes using (11.7) and (11.8) may get stuck in local minima. Interestingly, many deep learning optimization algorithms appear to avoid the local minima and even reach zero training error, indicating that the algorithms are reaching the global minima. In the following, we present two different approaches to explain this fascinating behavior of gradient descent approaches.

3 Polyak–Łojasiewicz-Type Convergence Analysis

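The loss function ℓ is said to be strongly convex (SC) if there exists μ > 0 such that

$$\displaystyle \begin{aligned} \ell({\boldsymbol{\theta}}') \geq \ell({\boldsymbol{\theta}}) + \langle \nabla \ell({\boldsymbol{\theta}}), {\boldsymbol{\theta}}'-{\boldsymbol{\theta}}\rangle + \frac{\mu}{2}\|{\boldsymbol{\theta}}'-{\boldsymbol{\theta}}\|^2, \quad \forall {\boldsymbol{\theta}},{\boldsymbol{\theta}}'. \end{aligned} \tag{11.9}$$

It is known that if ℓ is SC, then gradient descent achieves a global linear convergence rate for this problem [133]. Note that SC in (11.9) is a stronger condition than the convexity in Proposition 1.1, which is given as

$$\displaystyle \begin{aligned} \ell({\boldsymbol{\theta}}') \geq \ell({\boldsymbol{\theta}}) + \langle \nabla \ell({\boldsymbol{\theta}}), {\boldsymbol{\theta}}'-{\boldsymbol{\theta}}\rangle, \quad \forall {\boldsymbol{\theta}},{\boldsymbol{\theta}}'. \end{aligned} \tag{11.10}$$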

Our starting point is the observation that the convex analysis mentioned above is not the right approach to analyzing a deep neural network. The non-convexity is essential for the analysis. This situation has motivated a variety of alternatives to convexity for proving convergence. One of the oldest of these conditions is the error bounds (EB) of Luo and Tseng [134], but other conditions have recently been considered, which include essential strong convexity (ESC) [135], weak strong convexity (WSC) [136], and the restricted secant inequality (RSI) [137]. See the specific forms of these conditions in Table 11.1. On the other hand, there is a much older condition called the Polyak–Łojasiewicz (PL) condition, which was originally introduced by Polyak [138] and found to be a special case of the inequality of Łojasiewicz [139]. Specifically, we will say that a function satisfies the PL inequality if the following holds for some μ > 0:

$$\displaystyle \begin{aligned} \frac{1}{2}\|\nabla \ell({\boldsymbol{\theta}})\|^2 \geq \mu \left(\ell({\boldsymbol{\theta}}) - \ell^*\right), \quad \forall {\boldsymbol{\theta}}, \end{aligned} \tag{11.11}$$

where ℓ∗ denotes the global minimum value of ℓ.

Note that this inequality implies that every stationary point is a global minimum. But unlike SC, it does not imply that there is a unique solution. We will revisit this issue later.
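To make the PL inequality concrete, the following sketch checks it numerically on a grid for the one-dimensional function ℓ(θ) = θ² + 3 sin²(θ), which is used in [122] as an example of a non-convex function satisfying the PL condition. The inequality checked is ½|ℓ′(θ)|² ≥ μ(ℓ(θ) − ℓ∗) with ℓ∗ = 0. The constant μ = 1/32 is the value quoted for this example and is treated here as an assumption; a grid check is evidence, not a proof.

```python
import math


def loss(t):
    return t * t + 3.0 * math.sin(t) ** 2


def grad(t):
    return 2.0 * t + 3.0 * math.sin(2.0 * t)


def second_derivative(t):
    return 2.0 + 6.0 * math.cos(2.0 * t)


MU = 1.0 / 32.0   # assumed PL constant for this function
LOSS_MIN = 0.0    # global minimum value, attained at t = 0


def pl_ratio(t):
    """Return 0.5*|grad|^2 / (loss - loss_min); PL requires this to be >= mu."""
    return 0.5 * grad(t) ** 2 / (loss(t) - LOSS_MIN)


# Grid on [-10, 10] that skips t = 0, where the ratio is 0/0.
grid = [k / 100.0 for k in range(-1000, 1001) if k != 0]
worst_ratio = min(pl_ratio(t) for t in grid)
most_negative_curvature = min(second_derivative(t) for t in grid)

print("smallest PL ratio on grid:", worst_ratio)
print("most negative curvature on grid:", most_negative_curvature)
```

A negative second derivative somewhere on the grid confirms that the function is not convex, while the PL ratio staying above μ shows that the only stationary point is the global minimizer.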

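Similar to other conditions in Table 11.1, PL is a sufficient condition for gradient descent to achieve a linear convergence rate [122]. In fact, PL is the mildest condition among them. Specifically, the following relationship between the conditions holds [122]:

$$\displaystyle \begin{aligned} (\mathrm{SC}) \rightarrow (\mathrm{ESC}) \rightarrow (\mathrm{WSC}) \rightarrow (\mathrm{RSI}) \rightarrow (\mathrm{EB}) \equiv (\mathrm{PL}), \end{aligned} $$

if ℓ has a Lipschitz continuous gradient, i.e. there exists L > 0 such that

$$\displaystyle \begin{aligned} \|\nabla \ell({\boldsymbol{\theta}}) - \nabla \ell({\boldsymbol{\theta}}')\| \leq L \|{\boldsymbol{\theta}}-{\boldsymbol{\theta}}'\|, \quad \forall {\boldsymbol{\theta}},{\boldsymbol{\theta}}'. \end{aligned} \tag{11.12}$$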

In the following, we provide a convergence proof of the gradient descent method using the PL condition, which turns out to be an important tool for non-convex deep learning optimization problems.

Theorem 11.1 (Karimi et al. [122])

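Consider problem (11.1), where ℓ has an L-Lipschitz continuous gradient, a non-empty solution set, and satisfies the PL inequality (11.11). Then the gradient method with a step-size of 1∕L,

$$\displaystyle \begin{aligned} {\boldsymbol{\theta}}[k+1] = {\boldsymbol{\theta}}[k] - \frac{1}{L}\nabla \ell({\boldsymbol{\theta}}[k]), \end{aligned} \tag{11.13}$$

has a global linear convergence rate

$$\displaystyle \begin{aligned} \ell({\boldsymbol{\theta}}[k]) - \ell^* \leq \left(1-\frac{\mu}{L}\right)^k \left(\ell({\boldsymbol{\theta}}[0]) - \ell^*\right). \end{aligned} \tag{11.14}$$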

Proof

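Using Lemma 11.1 (see next section), the L-Lipschitz continuity of the gradient of the loss function ℓ implies that the function

$$\displaystyle \begin{aligned} g({\boldsymbol{\theta}}) = \frac{L}{2}{\boldsymbol{\theta}}^\top{\boldsymbol{\theta}} - \ell({\boldsymbol{\theta}}) \end{aligned} $$

is convex. Thus, the first-order equivalence of convexity in Proposition 1.1 leads to the following:

$$\displaystyle \begin{aligned} \frac{L}{2}{\boldsymbol{\theta}}^{\prime\top}{\boldsymbol{\theta}}' - \ell({\boldsymbol{\theta}}') \geq \frac{L}{2}{\boldsymbol{\theta}}^\top{\boldsymbol{\theta}} - \ell({\boldsymbol{\theta}}) + \langle L{\boldsymbol{\theta}} - \nabla \ell({\boldsymbol{\theta}}), {\boldsymbol{\theta}}'-{\boldsymbol{\theta}}\rangle. \end{aligned} $$

By arranging terms, we have

$$\displaystyle \begin{aligned} \ell({\boldsymbol{\theta}}') \leq \ell({\boldsymbol{\theta}}) + \langle \nabla \ell({\boldsymbol{\theta}}), {\boldsymbol{\theta}}'-{\boldsymbol{\theta}}\rangle + \frac{L}{2}\|{\boldsymbol{\theta}}'-{\boldsymbol{\theta}}\|^2. \end{aligned} $$

By setting θ′ = θ[k + 1] and θ = θ[k] and using the update rule (11.13), we have

$$\displaystyle \begin{aligned} \ell({\boldsymbol{\theta}}[k+1]) \leq \ell({\boldsymbol{\theta}}[k]) - \frac{1}{2L}\|\nabla \ell({\boldsymbol{\theta}}[k])\|^2. \end{aligned} $$

Using the PL inequality (11.11), we get

$$\displaystyle \begin{aligned} \ell({\boldsymbol{\theta}}[k+1]) \leq \ell({\boldsymbol{\theta}}[k]) - \frac{\mu}{L}\left(\ell({\boldsymbol{\theta}}[k]) - \ell^*\right). \end{aligned} $$

Rearranging and subtracting ℓ∗ from both sides gives us

$$\displaystyle \begin{aligned} \ell({\boldsymbol{\theta}}[k+1]) - \ell^* \leq \left(1-\frac{\mu}{L}\right)\left(\ell({\boldsymbol{\theta}}[k]) - \ell^*\right). \end{aligned} $$

Applying this inequality recursively gives the result. □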

The beauty of this proof is that we can replace the long and complicated proofs from other conditions with simpler proofs based on the PL inequality [122].
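The linear rate in Theorem 11.1 can be observed numerically. The sketch below runs gradient descent with step size 1/L on the non-convex function ℓ(θ) = θ² + 3 sin²(θ) and compares the optimality gap ℓ(θ[k]) − ℓ∗ with the bound (1 − μ/L)^k (ℓ(θ[0]) − ℓ∗). The choices L = 8 (since |ℓ″(θ)| = |2 + 6 cos 2θ| ≤ 8), μ = 1/32, the starting point and the number of steps are assumptions made for this illustration.

```python
import math


def loss(t):
    return t * t + 3.0 * math.sin(t) ** 2


def grad(t):
    return 2.0 * t + 3.0 * math.sin(2.0 * t)


L_SMOOTH = 8.0    # Lipschitz constant of the gradient: |2 + 6 cos(2t)| <= 8
MU = 1.0 / 32.0   # assumed PL constant for this loss
LOSS_MIN = 0.0    # global minimum value, attained at t = 0


def gradient_descent(theta0, num_steps):
    """Run theta[k+1] = theta[k] - (1/L) * grad(theta[k]); return the gaps."""
    theta = theta0
    gaps = [loss(theta) - LOSS_MIN]
    for _ in range(num_steps):
        theta -= grad(theta) / L_SMOOTH
        gaps.append(loss(theta) - LOSS_MIN)
    return gaps


gaps = gradient_descent(theta0=3.0, num_steps=50)
rate = 1.0 - MU / L_SMOOTH
bounds = [rate ** k * gaps[0] for k in range(len(gaps))]

for k in (0, 10, 50):
    print(k, gaps[k], bounds[k])
```

In this run the observed gap decays much faster than the bound, which is expected: the theorem gives a worst-case guarantee over all functions with the same constants.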

3.1 Loss Landscape and Over-Parameterization

In Theorem 11.1, we use two conditions for the loss function: (1) ℓ satisfies the PL condition and (2) the gradient of ℓ is Lipschitz continuous. Although these conditions are much weaker than the convexity of the loss function, they still impose geometric constraints on the loss function, which deserve further discussion.

Lemma 11.1

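If the gradient of ℓ(θ) satisfies the L-Lipschitz condition in (11.12), then the transformed function \(g:{\mathbb R}^n \mapsto {\mathbb R}\) defined by

$$\displaystyle \begin{aligned} g({\boldsymbol{\theta}}) := \frac{L}{2}{\boldsymbol{\theta}}^\top{\boldsymbol{\theta}} - \ell({\boldsymbol{\theta}}) \end{aligned} \tag{11.15}$$

is convex.

Proof

Using the Cauchy–Schwarz inequality, (11.12) implies

$$\displaystyle \begin{aligned} \langle \nabla \ell({\boldsymbol{\theta}}') - \nabla \ell({\boldsymbol{\theta}}), {\boldsymbol{\theta}}'-{\boldsymbol{\theta}}\rangle \leq L\|{\boldsymbol{\theta}}'-{\boldsymbol{\theta}}\|^2, \quad \forall {\boldsymbol{\theta}},{\boldsymbol{\theta}}'. \end{aligned} $$

This is equivalent to the following condition:

$$\displaystyle \begin{aligned} \langle \nabla g({\boldsymbol{\theta}}') - \nabla g({\boldsymbol{\theta}}), {\boldsymbol{\theta}}'-{\boldsymbol{\theta}}\rangle \geq 0, \quad \forall {\boldsymbol{\theta}},{\boldsymbol{\theta}}', \end{aligned} $$

where

$$\displaystyle \begin{aligned} \nabla g({\boldsymbol{\theta}}) = L{\boldsymbol{\theta}} - \nabla \ell({\boldsymbol{\theta}}). \end{aligned} $$

Thus, using the monotonicity of gradient equivalence in Proposition 1.1, we can show that g(θ) is convex. □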

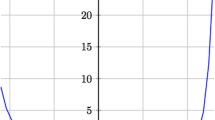

Lemma 11.1 implies that although ℓ is not convex, its transformed function by (11.15) can be convex. Figure 11.1a shows an example of such a case. Another important geometric consideration for the loss landscape comes from the PL condition. More specifically, the PL condition in (11.11) implies that every stationary point is a global minimizer, although the global minimizers may not be unique, as shown in Fig. 11.1b,c. While the PL inequality does not imply convexity of ℓ, it does imply the weaker condition of invexity [122]. A function is invex if it is differentiable and there exists a vector-valued function η such that for any θ and θ′ in \({\mathbb R}^n\), the following inequality holds:

$$\displaystyle \begin{aligned} \ell({\boldsymbol{\theta}}') \geq \ell({\boldsymbol{\theta}}) + \langle \nabla \ell({\boldsymbol{\theta}}), {\boldsymbol{\eta}}({\boldsymbol{\theta}},{\boldsymbol{\theta}}')\rangle. \end{aligned} \tag{11.17}$$

A convex function is a special case of invex functions since (11.17) holds when we set η(θ, θ′) = θ′−θ. It was shown that a smooth ℓ is invex if and only if every stationary point of ℓ is a global minimum [140]. As the PL condition implies that every stationary point is a global minimizer, a function satisfying PL is an invex function. The inclusion relationship between convex, invex, and PL functions is illustrated in Fig. 11.2.

Fig. 11.1 Loss landscape for the function ℓ(x) where (a) the transformed function (11.15) is convex, and (b, c) the PL condition holds

The loss landscape, where every stationary point is a global minimizer, implies that there are no spurious local minimizers. This is often called the benign optimization landscape. Finding the conditions for a benign optimization landscape of neural networks has been an important topic for theorists in machine learning. Following the original observation by Kawaguchi [141], Lu and Kawaguchi [142] and Zhou and Liang [143] proved that the loss surfaces of linear neural networks, whose activation functions are all linear, do not have any spurious local minima under some conditions, so that all local minima are equally good.

Unfortunately, this good property no longer holds when the activations are nonlinear. Zhou and Liang [143] show that ReLU neural networks with one hidden layer have spurious local minima. Yun et al. [144] prove that ReLU neural networks with one hidden layer have infinitely many spurious local minima when the outputs are one-dimensional.

These somewhat negative results were surprising and seemed to contradict the empirical success of optimization in neural networks. Indeed, it was later shown that if the activation functions are continuous, and the loss functions are convex and differentiable, over-parameterized fully-connected deep neural networks do not have any spurious local minima [145].

The reason for the benign optimization landscape of an over-parameterized neural network was analyzed by examining the geometry of the global minima. Nguyen [123] discovered that the global minima are interconnected and concentrated on a unique valley if the neural networks are sufficiently over-parameterized. Similar results were obtained by Liu et al. [124]. In fact, they found that the set of solutions of an over-parameterized system is generically a manifold of positive dimension, with the Hessian matrices of the loss function being positive semidefinite but not positive definite. Such a landscape is incompatible with convexity unless the set of solutions is a linear manifold. However, a linear manifold, for which the curve of global minima has zero curvature, is unlikely to occur due to the essential non-convexity of the underlying optimization problem. Hence, gradient-type algorithms can converge to any of the global minima, although the exact point of convergence depends on the specific optimization algorithm. This implicit bias of an optimization algorithm is another important theoretical topic in deep learning, which will be covered in a later chapter. In contrast, an under-parameterized landscape generally has several isolated local minima with a positive definite Hessian of the loss, the function being locally convex. This is illustrated in Fig. 11.3.

4 Lyapunov-Type Convergence Analysis

Now let us introduce a different type of convergence analysis with a different mathematical flavor. In contrast to the methods discussed above, the analysis of the global loss landscape is not required here. Rather, a local loss geometry along the solution trajectory is the key to this analysis.

In fact, this type of convergence analysis is based on Lyapunov stability analysis [146] for the solution dynamics described by (11.8). Specifically, for a given nonlinear system,

$$\displaystyle \begin{aligned} \dot{\boldsymbol{\theta}}[t] = {\boldsymbol{g}}({\boldsymbol{\theta}}[t]), \end{aligned} \tag{11.18}$$

the Lyapunov stability analysis is concerned with checking whether the solution trajectory θ[t] converges to zero as t →∞. To provide a general solution for this, we first define the Lyapunov function V (z), which satisfies the following properties:

Definition 11.1

A function \(V:{\mathbb R}^n\mapsto {\mathbb R}\) is positive definite (PD) if

- V (z) ≥ 0 for all z.
- V (z) = 0 if and only if z = 0.
- All sublevel sets of V are bounded.

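The Lyapunov function V has an analogy to the potential function of classical dynamics, and \(-\dot V\) can be considered the associated generalized dissipation function. Furthermore, if we set z := θ[t] to analyze the nonlinear dynamic system in (11.18), then \(\dot V:{\boldsymbol {z}}\in {\mathbb R}^n\mapsto {\mathbb R}\) is computed by

$$\displaystyle \begin{aligned} \dot V({\boldsymbol{z}}) = \left(\frac{\partial V}{\partial {\boldsymbol{z}}}\right)^\top \dot{\boldsymbol{z}} = \left(\frac{\partial V}{\partial {\boldsymbol{z}}}\right)^\top {\boldsymbol{g}}({\boldsymbol{z}}). \end{aligned} $$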

The following Lyapunov global asymptotic stability theorem is one of the keys to the stability analysis of dynamic systems:

Theorem 11.2 (Lyapunov Global Asymptotic Stability [146])

Suppose there is a function V such that (1) V is positive definite, and (2) \(\dot V({\boldsymbol {z}})<0\) for all z ≠ 0 and \(\dot V({\boldsymbol {0}})=0\). Then, every trajectory θ[t] of \(\dot {\boldsymbol {\theta }} = {\boldsymbol {g}}({\boldsymbol {\theta }})\) converges to zero as t →∞ (i.e., the system is globally asymptotically stable).

Example: 1-D Differential Equation

Consider the following ordinary differential equation:

$$\displaystyle \begin{aligned} \dot\theta[t] = -\theta[t]. \end{aligned} $$

We can easily show that the system is globally asymptotically stable since the solution is \(\theta [t]= C \exp (-t)\) for some constant C, and θ[t] → 0 as t →∞. Now, we want to prove this using Theorem 11.2 without ever solving the differential equation. First, choose a Lyapunov function

$$\displaystyle \begin{aligned} V(z) = \frac{z^2}{2}, \end{aligned} $$

where z = θ[t]. We can easily show that V (z) is positive definite. Furthermore, we have

$$\displaystyle \begin{aligned} \dot V(z) = z\dot z = -z^2 < 0, \quad \forall z\neq 0. \end{aligned} $$

Therefore, using Theorem 11.2 we can show that θ[t] converges to zero as t →∞.
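The 1-D example can also be checked numerically. The following sketch integrates the system whose solution is θ[t] = C exp(−t), namely θ̇ = −θ, with a forward-Euler scheme and tracks a quadratic Lyapunov function along the trajectory. The choice V(z) = z²/2, the step size and the initial value are assumptions made for this illustration; any positive definite V with negative derivative along the flow would serve.

```python
import math


def simulate(theta0, dt=0.01, num_steps=1000):
    """Forward-Euler integration of the scalar system theta' = -theta."""
    thetas = [theta0]
    for _ in range(num_steps):
        thetas.append(thetas[-1] + dt * (-thetas[-1]))
    return thetas


def lyapunov(z):
    """Candidate Lyapunov function V(z) = z^2 / 2."""
    return 0.5 * z * z


def lyapunov_rate(z):
    """dV/dt along the flow: V'(z) * g(z) = z * (-z)."""
    return -z * z


thetas = simulate(theta0=2.0)
values = [lyapunov(z) for z in thetas]
exact_final = 2.0 * math.exp(-10.0)   # closed-form solution at t = 10

print("final theta (Euler):", thetas[-1])
print("final theta (exact):", exact_final)
print("V decreased at every step:",
      all(b < a for a, b in zip(values, values[1:])))
```

The Lyapunov argument never uses the closed-form solution; the exact value appears here only as a sanity check on the simulation.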

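One of the beauties of Lyapunov stability analysis is that we do not need explicit knowledge of the loss landscape to prove convergence. Instead, we just need to know the local dynamics along the solution path. To understand this claim, here we apply Lyapunov analysis to the convergence analysis of our gradient descent dynamics:

$$\displaystyle \begin{aligned} \dot{\boldsymbol{\theta}}[t] = - \frac{\partial \ell({\boldsymbol{\theta}}[t])}{\partial {\boldsymbol{\theta}}}. \end{aligned} $$

For the MSE loss, this leads to

$$\displaystyle \begin{aligned} \dot{\boldsymbol{\theta}}[t] = \left(\frac{\partial {\boldsymbol{f}}_{{\boldsymbol{\theta}}[t]}({\boldsymbol{x}})}{\partial {\boldsymbol{\theta}}}\right)^\top \left({\boldsymbol{y}} - {\boldsymbol{f}}_{{\boldsymbol{\theta}}[t]}({\boldsymbol{x}})\right). \end{aligned} $$

Now let

$$\displaystyle \begin{aligned} {\boldsymbol{e}}[t] := {\boldsymbol{f}}_{{\boldsymbol{\theta}}[t]}({\boldsymbol{x}}) - {\boldsymbol{y}} \end{aligned} $$

and consider the following positive definite Lyapunov function

$$\displaystyle \begin{aligned} V({\boldsymbol{z}}) = \frac{1}{2}{\boldsymbol{z}}^\top{\boldsymbol{z}}, \end{aligned} $$

where z = e[t]. Then, we have

$$\displaystyle \begin{aligned} \dot V({\boldsymbol{z}}) = {\boldsymbol{z}}^\top\dot{\boldsymbol{z}} = {\boldsymbol{e}}[t]^\top \dot{\boldsymbol{e}}[t]. \end{aligned} \tag{11.21}$$

Using the chain rule, we have

$$\displaystyle \begin{aligned} \dot{\boldsymbol{e}}[t] = \frac{\partial {\boldsymbol{f}}_{{\boldsymbol{\theta}}[t]}({\boldsymbol{x}})}{\partial {\boldsymbol{\theta}}}\,\dot{\boldsymbol{\theta}}[t] = -{\boldsymbol{K}}_t\,{\boldsymbol{e}}[t], \end{aligned} $$

where

$$\displaystyle \begin{aligned} {\boldsymbol{K}}_t := \left.\frac{\partial {\boldsymbol{f}}_{{\boldsymbol{\theta}}}({\boldsymbol{x}})}{\partial {\boldsymbol{\theta}}}\left(\frac{\partial {\boldsymbol{f}}_{{\boldsymbol{\theta}}}({\boldsymbol{x}})}{\partial {\boldsymbol{\theta}}}\right)^\top\right|{}_{{\boldsymbol{\theta}}={\boldsymbol{\theta}}[t]} \end{aligned} $$

is often called the neural tangent kernel (NTK) [130,131,132]. By plugging this into (11.21), we have

$$\displaystyle \begin{aligned} \dot V({\boldsymbol{z}}) = -{\boldsymbol{e}}[t]^\top {\boldsymbol{K}}_t\, {\boldsymbol{e}}[t]. \end{aligned} $$

Accordingly, if the NTK is positive definite for all t, then \(\dot V({\boldsymbol {z}}) <0\) for all z ≠ 0. Therefore, e[t] →0, so that \({\boldsymbol{f}}_{{\boldsymbol{\theta}}[t]}({\boldsymbol{x}}) \rightarrow {\boldsymbol{y}}\) as t →∞. This proves the convergence of the gradient descent approach.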
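The argument above can be traced numerically on a toy model. The sketch below uses a hypothetical scalar model f(x) = a·tanh(w·x + b) with parameters θ = (a, w, b), a single training pair (x, y), and the MSE loss, so the NTK (the Jacobian of f with respect to θ times its transpose) is a 1×1 matrix. It follows the gradient flow with forward-Euler steps and records the Lyapunov value V = e²/2 together with the NTK at each step. The model, the data, the step size and the initialization are all assumptions made for this illustration.

```python
import math


def model(theta, x):
    """Hypothetical scalar model f_theta(x) = a * tanh(w * x + b)."""
    a, w, b = theta
    return a * math.tanh(w * x + b)


def jacobian(theta, x):
    """Row vector df/dtheta = [df/da, df/dw, df/db]."""
    a, w, b = theta
    h = math.tanh(w * x + b)
    dh = 1.0 - h * h
    return [h, a * dh * x, a * dh]


def ntk(theta, x):
    """K = (df/dtheta)(df/dtheta)^T, a 1x1 matrix for a scalar output."""
    return sum(j * j for j in jacobian(theta, x))


def gradient_flow(theta, x, y, dt=0.01, num_steps=1000):
    """Euler steps of theta' = -(df/dtheta)^T e, where e = f - y."""
    history = []
    for _ in range(num_steps):
        e = model(theta, x) - y
        history.append((0.5 * e * e, ntk(theta, x)))
        jac = jacobian(theta, x)
        theta = [t - dt * j * e for t, j in zip(theta, jac)]
    return theta, history


theta_final, history = gradient_flow([1.0, 0.5, 0.0], x=1.0, y=2.0)
lyapunov_values = [v for v, _ in history]
kernel_values = [k for _, k in history]

print("V at start and end:", lyapunov_values[0], lyapunov_values[-1])
print("smallest NTK value along the path:", min(kernel_values))
```

Only the kernel along the visited trajectory is inspected; nothing about the global shape of the loss surface is needed, which is the point of the Lyapunov-type analysis.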

4.1 The Neural Tangent Kernel (NTK)

In the previous discussion we showed that the Lyapunov analysis only requires a positive-definiteness of the NTK along the solution trajectory. While this is a great advantage over PL-type analysis, which requires knowledge of the global loss landscape, the NTK is a function of time, so it is important to obtain the conditions for the positive-definiteness of NTK along the solution trajectory.

To understand this, here we are interested in deriving the explicit form of the NTK to understand the convergence behavior of the gradient descent methods. Using the backpropagation in Chap. 6, we can obtain the weight update as follows:

Similarly, we have

Therefore, the NTK can be computed by

where

Therefore, the positive definiteness of the NTK comes from the properties of M(l)[t]. In particular, if M(l)[t] is positive definite for any l, the resulting NTK is positive definite. Moreover, the positive-definiteness of M(l)[t] can be readily shown if the following sensitivity matrix has full row rank:

4.2 NTK at Infinite Width Limit

Although we derived the explicit form of the NTK using backpropagation, still the component matrix in (11.24) is difficult to analyze due to the stochastic nature of the weights and ReLU activation patterns.

To address this problem, the authors in [130] calculated the NTK at the infinite width limit and showed that it satisfies the positive definiteness. Specifically, they considered the following normalized form of the neural network update:

for l = 1, ⋯ , L, where \(d^{(l)}\) denotes the width of the l-th layer. Furthermore, they considered what is sometimes called LeCun initialization, taking \(W_{ij}^{(l)}\sim \mathcal {N}\left (0,\frac {1}{d^{(l)}}\right )\) and \(b^{(l)}_j \sim \mathcal {N}(0,1)\). Then, the following asymptotic form of the NTK can be obtained.

Theorem 11.3 (Jacot et al. [130])

For a network of depth L at initialization, with a Lipschitz nonlinearity σ, and in the limit as the layer widths \(d^{(1)}, \cdots , d^{(L-1)} \rightarrow \infty \), the neural tangent kernel \({\boldsymbol {K}}^{(L)}\) converges in probability to a deterministic limiting kernel:

Here, the scalar kernel \(\kappa _\infty ^{(L)}: {\mathbb R}^{d^{(0)}}\times {\mathbb R}^{d^{(0)}} \mapsto {\mathbb R}\) is defined recursively by

where

where the expectation is with respect to a centered Gaussian process g of covariance \(\nu ^{(l)}\), and where \(\dot \sigma \) denotes the derivative of σ.

Note that the asymptotic form of the NTK is positive definite since \(\kappa ^{(L)}_\infty >0\). Therefore, gradient descent using the infinite-width NTK converges to the global minima. Again, we can clearly see the benefit of over-parameterization in terms of large network width.
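The concentration of the NTK at large width can be observed in a simplified setting. The sketch below is not the construction of Theorem 11.3: it assumes a bias-free two-layer ReLU network f(x) = (1/√m) Σⱼ aⱼ·ReLU(wⱼ·x) with independent standard Gaussian aⱼ and wⱼ, for which the infinite-width kernel has a well-known closed form in terms of the angle φ between the two inputs. The function names, the inputs and the widths are hypothetical choices for this illustration.

```python
import math
import random


def dot(u, v):
    return sum(a * b for a, b in zip(u, v))


def empirical_ntk(x, xp, width, rng):
    """NTK of f(x) = (1/sqrt(m)) * sum_j a_j * relu(w_j . x) at random init."""
    total = 0.0
    for _ in range(width):
        w = [rng.gauss(0.0, 1.0) for _ in x]
        a = rng.gauss(0.0, 1.0)
        pre, pre_p = dot(w, x), dot(w, xp)
        # Contribution of df/da_j at the two inputs.
        total += max(pre, 0.0) * max(pre_p, 0.0)
        # Contribution of df/dw_j: nonzero only if both ReLU gates are open.
        if pre > 0.0 and pre_p > 0.0:
            total += a * a * dot(x, xp)
    return total / width


def limiting_ntk(x, xp):
    """Closed-form expectation of the kernel above as the width grows."""
    nx, nxp = math.sqrt(dot(x, x)), math.sqrt(dot(xp, xp))
    cos_phi = max(-1.0, min(1.0, dot(x, xp) / (nx * nxp)))
    phi = math.acos(cos_phi)
    relu_part = nx * nxp * (math.sin(phi) + (math.pi - phi) * cos_phi) / (2.0 * math.pi)
    gate_part = dot(x, xp) * (math.pi - phi) / (2.0 * math.pi)
    return relu_part + gate_part


rng = random.Random(0)   # fixed seed so that the run is repeatable
x, xp = [1.0, 0.0], [0.6, 0.8]
k_limit = limiting_ntk(x, xp)
for width in (10, 1000, 20000):
    print(width, empirical_ntk(x, xp, width, rng), k_limit)

# Determinant of the limiting 2x2 Gram matrix on the two inputs; a positive
# value together with a positive diagonal means positive definiteness.
gram_det = limiting_ntk(x, x) * limiting_ntk(xp, xp) - k_limit ** 2
print("determinant of limiting Gram matrix:", gram_det)
```

As the width grows, the random kernel settles around the deterministic value, and the limiting Gram matrix on these two distinct inputs is positive definite, in line with the role that the infinite-width limit plays in the text.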

4.3 NTK for General Loss Function

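Now, we are interested in extending the example above to the general loss function with multiple training samples. For a given training data set \(\{{\boldsymbol {x}}_n\}_{n=1}^N\), the gradient dynamics in (11.7) can be extended to

$$\displaystyle \begin{aligned} \dot{\boldsymbol{\theta}}[t] = -\sum_{n=1}^N \frac{\partial \ell({\boldsymbol{x}}_n)}{\partial {\boldsymbol{\theta}}}, \end{aligned} $$

where ℓ(xn) := ℓ(f(xn)) with a slight abuse of notation. This leads to

$$\displaystyle \begin{aligned} \dot{\boldsymbol{f}}({\boldsymbol{x}}_m) = -\sum_{n=1}^N {\boldsymbol{K}}_t({\boldsymbol{x}}_m,{\boldsymbol{x}}_n)\frac{\partial \ell({\boldsymbol{x}}_n)}{\partial {\boldsymbol{f}}({\boldsymbol{x}}_n)}, \end{aligned} $$

where Kt(xm, xn) denotes the (m, n)-th block NTK defined by

$$\displaystyle \begin{aligned} {\boldsymbol{K}}_t({\boldsymbol{x}}_m,{\boldsymbol{x}}_n) := \left.\frac{\partial {\boldsymbol{f}}({\boldsymbol{x}}_m)}{\partial {\boldsymbol{\theta}}}\left(\frac{\partial {\boldsymbol{f}}({\boldsymbol{x}}_n)}{\partial {\boldsymbol{\theta}}}\right)^\top\right|{}_{{\boldsymbol{\theta}}={\boldsymbol{\theta}}[t]}. \end{aligned} $$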

Now, consider the following Lyapunov function candidate:

where

and f∗(xm) refers to \({\boldsymbol {f}}_{{\boldsymbol {\theta }}^*}({\boldsymbol {x}}_m)\) with θ∗ being the global minimizer. We further assume that the loss function satisfies the property that

so that V (z) is a positive definite function. Under this assumption, we have

where

Therefore, if the NTK \(\boldsymbol {{{\mathcal K}}}[t]\) is positive definite for all t, then Lyapunov stability theory guarantees that the gradient dynamics converge to the global minima.
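A small numerical sketch of the multi-sample case follows. It assumes a hypothetical scalar model f(x) = a·tanh(w·x + b), two training inputs, labels generated by a hypothetical teacher parameter so that zero loss is attainable, and the log-cosh loss ℓ(f) = log cosh(f − y), chosen here because it is non-negative and vanishes only when f = y. With scalar outputs, the block NTK reduces to a 2×2 Gram matrix whose positive definiteness is checked with Sylvester's criterion along the Euler-discretized gradient flow.

```python
import math

X = [-1.0, 1.0]                 # two training inputs
TEACHER = (1.5, 1.0, -0.5)      # hypothetical parameters, used only for labels


def model(theta, x):
    a, w, b = theta
    return a * math.tanh(w * x + b)


def jacobian(theta, x):
    """Row vector df/dtheta = [df/da, df/dw, df/db]."""
    a, w, b = theta
    h = math.tanh(w * x + b)
    dh = 1.0 - h * h
    return [h, a * dh * x, a * dh]


def gram(theta):
    """2x2 NTK Gram matrix with entries K[m][n] = J(x_m) . J(x_n)."""
    rows = [jacobian(theta, x) for x in X]
    return [[sum(p * q for p, q in zip(r, s)) for s in rows] for r in rows]


def is_positive_definite_2x2(k):
    """Sylvester's criterion for a symmetric 2x2 matrix."""
    return k[0][0] > 0.0 and k[0][0] * k[1][1] - k[0][1] * k[1][0] > 0.0


def total_loss(theta, y):
    """V = sum_n log cosh(f(x_n) - y_n); zero only if every output matches."""
    return sum(math.log(math.cosh(model(theta, x) - t)) for x, t in zip(X, y))


def gradient_flow(theta, y, dt=0.01, num_steps=1000):
    trace = []
    for _ in range(num_steps):
        trace.append((total_loss(theta, y), is_positive_definite_2x2(gram(theta))))
        # For the log-cosh loss, dl/df = tanh(f - y).
        dl_df = [math.tanh(model(theta, x) - t) for x, t in zip(X, y)]
        rows = [jacobian(theta, x) for x in X]
        grad = [sum(g * r[i] for g, r in zip(dl_df, rows)) for i in range(3)]
        theta = [p - dt * g for p, g in zip(theta, grad)]
    return theta, trace


Y = [model(TEACHER, x) for x in X]
theta_final, trace = gradient_flow([1.0, 0.5, 0.0], Y)
losses = [v for v, _ in trace]
print("loss at start and end:", losses[0], losses[-1])
print("Gram matrix positive definite at every step:", all(pd for _, pd in trace))
```

The flow need not recover the teacher parameters: with three parameters and two samples, it can stop at any point where both outputs match their labels.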

5 Exercises

1. Show that a smooth ℓ(θ) is invex if and only if every stationary point of ℓ(θ) is a global minimum.

2. Show that a convex function is invex.

3. Let a > 0. Show that \(V(x,y)=x^2+2y^2\) is a Lyapunov function for the system

$$\displaystyle \begin{aligned} \begin{array}{rcl} \dot x = ay^2 -x & ,&\displaystyle \dot y = - y - ax^2. \end{array} \end{aligned} $$

4. Show that \(V(x,y)=\ln (1+x^2)+y^2\) is a Lyapunov function for the system

$$\displaystyle \begin{aligned} \begin{array}{rcl} \dot x = x(y-1) & ,&\displaystyle \dot y = - \frac{x^2}{1+x^2}. \end{array} \end{aligned} $$

5. Consider a two-layer fully connected network \(f_\Theta :\mathbb {R}^2\rightarrow \mathbb {R}^2\) with ReLU nonlinearity, as shown in Fig. 10.10.

(a) Suppose the weight matrices and biases are given by

$$\displaystyle \begin{aligned} {\boldsymbol{W}}^{(0)} = \begin{bmatrix} 2 & -1\\ 1 &1 \end{bmatrix} ,\quad {\boldsymbol{b}}^{(0)} = \begin{bmatrix} 1\\ -1 \end{bmatrix}\\ {\boldsymbol{W}}^{(1)} = \begin{bmatrix} 1 & 2\\ -1 &1 \end{bmatrix} ,\quad {\boldsymbol{b}}^{(1)} = \begin{bmatrix} -9\\ -2 \end{bmatrix}. \end{aligned} $$

Given the corresponding input space partition in Fig. 10.11, compute the neural tangent kernel for each partition. Are they positive definite?

(b) In problem (a), suppose that the second layer weight and bias are changed to

$$\displaystyle \begin{aligned} {\boldsymbol{W}}^{(1)} = \begin{bmatrix} 1 & 2\\ 0 & 1 \end{bmatrix} ,\quad {\boldsymbol{b}}^{(1)} = \begin{bmatrix} 0\\ 1 \end{bmatrix}. \end{aligned} $$

Given the corresponding input space partition, compute the neural tangent kernel for each partition. Are they positive definite?

References

119. Z. Allen-Zhu, Y. Li, and Z. Song, “A convergence theory for deep learning via over-parameterization,” in International Conference on Machine Learning. PMLR, 2019, pp. 242–252.

120. S. Du, J. Lee, H. Li, L. Wang, and X. Zhai, “Gradient descent finds global minima of deep neural networks,” in International Conference on Machine Learning. PMLR, 2019, pp. 1675–1685.

121. D. Zou, Y. Cao, D. Zhou, and Q. Gu, “Stochastic gradient descent optimizes over-parameterized deep ReLU networks,” arXiv preprint arXiv:1811.08888, 2018.

122. H. Karimi, J. Nutini, and M. Schmidt, “Linear convergence of gradient and proximal-gradient methods under the Polyak-Łojasiewicz condition,” in Joint European Conference on Machine Learning and Knowledge Discovery in Databases. Springer, 2016, pp. 795–811.

123. Q. Nguyen, “On connected sublevel sets in deep learning,” in International Conference on Machine Learning. PMLR, 2019, pp. 4790–4799.

124. C. Liu, L. Zhu, and M. Belkin, “Toward a theory of optimization for over-parameterized systems of non-linear equations: the lessons of deep learning,” arXiv preprint arXiv:2003.00307, 2020.

125. Z. Allen-Zhu, Y. Li, and Y. Liang, “Learning and generalization in overparameterized neural networks, going beyond two layers,” arXiv preprint arXiv:1811.04918, 2018.

126. M. Soltanolkotabi, A. Javanmard, and J. D. Lee, “Theoretical insights into the optimization landscape of over-parameterized shallow neural networks,” IEEE Transactions on Information Theory, vol. 65, no. 2, pp. 742–769, 2018.

127. S. Oymak and M. Soltanolkotabi, “Overparameterized nonlinear learning: Gradient descent takes the shortest path?” in International Conference on Machine Learning. PMLR, 2019, pp. 4951–4960.

128. S. S. Du, X. Zhai, B. Poczos, and A. Singh, “Gradient descent provably optimizes over-parameterized neural networks,” arXiv preprint arXiv:1810.02054, 2018.

129. I. Safran, G. Yehudai, and O. Shamir, “The effects of mild over-parameterization on the optimization landscape of shallow ReLU neural networks,” arXiv preprint arXiv:2006.01005, 2020.

130. A. Jacot, F. Gabriel, and C. Hongler, “Neural tangent kernel: convergence and generalization in neural networks,” in Proceedings of the 32nd International Conference on Neural Information Processing Systems, 2018, pp. 8580–8589.

131. S. Arora, S. S. Du, W. Hu, Z. Li, R. Salakhutdinov, and R. Wang, “On exact computation with an infinitely wide neural net,” arXiv preprint arXiv:1904.11955, 2019.

132. Y. Li, T. Luo, and N. K. Yip, “Towards an understanding of residual networks using neural tangent hierarchy (NTH),” arXiv preprint arXiv:2007.03714, 2020.

133. Y. Nesterov, Introductory lectures on convex optimization: A basic course. Springer Science & Business Media, 2003, vol. 87.

134. Z.-Q. Luo and P. Tseng, “Error bounds and convergence analysis of feasible descent methods: a general approach,” Annals of Operations Research, vol. 46, no. 1, pp. 157–178, 1993.

135. J. Liu, S. Wright, C. Ré, V. Bittorf, and S. Sridhar, “An asynchronous parallel stochastic coordinate descent algorithm,” in International Conference on Machine Learning. PMLR, 2014, pp. 469–477.

136. I. Necoara, Y. Nesterov, and F. Glineur, “Linear convergence of first order methods for non-strongly convex optimization,” Mathematical Programming, vol. 175, no. 1, pp. 69–107, 2019.

137. H. Zhang and W. Yin, “Gradient methods for convex minimization: better rates under weaker conditions,” arXiv preprint arXiv:1303.4645, 2013.

138. B. T. Polyak, “Gradient methods for minimizing functionals,” Zhurnal Vychislitel’noi Matematiki i Matematicheskoi Fiziki, vol. 3, no. 4, pp. 643–653, 1963.

139. S. Lojasiewicz, “A topological property of real analytic subsets,” Coll. du CNRS, Les équations aux dérivées partielles, vol. 117, pp. 87–89, 1963.

140. B. D. Craven and B. M. Glover, “Invex functions and duality,” Journal of the Australian Mathematical Society, vol. 39, no. 1, pp. 1–20, 1985.

141. K. Kawaguchi, “Deep learning without poor local minima,” arXiv preprint arXiv:1605.07110, 2016.

142. H. Lu and K. Kawaguchi, “Depth creates no bad local minima,” arXiv preprint arXiv:1702.08580, 2017.

143. Y. Zhou and Y. Liang, “Critical points of neural networks: Analytical forms and landscape properties,” arXiv preprint arXiv:1710.11205, 2017.

144. C. Yun, S. Sra, and A. Jadbabaie, “Small nonlinearities in activation functions create bad local minima in neural networks,” arXiv preprint arXiv:1802.03487, 2018.

145. D. Li, T. Ding, and R. Sun, “Over-parameterized deep neural networks have no strict local minima for any continuous activations,” arXiv preprint arXiv:1812.11039, 2018.

146. N. P. Bhatia and G. P. Szegö, Stability Theory of Dynamical Systems. Springer Science & Business Media, 2002.

Copyright information

© 2022 The Author(s), under exclusive license to Springer Nature Singapore Pte Ltd.

About this chapter

Cite this chapter

Ye, J.C. (2022). Deep Learning Optimization. In: Geometry of Deep Learning. Mathematics in Industry, vol 37. Springer, Singapore. https://doi.org/10.1007/978-981-16-6046-7_11

DOI: https://doi.org/10.1007/978-981-16-6046-7_11

Publisher Name: Springer, Singapore

Print ISBN: 978-981-16-6045-0

Online ISBN: 978-981-16-6046-7

eBook Packages: Mathematics and Statistics (R0)