Abstract

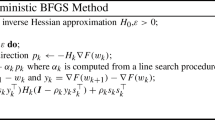

Deep learning algorithms often require solving a highly nonlinear, non-convex unconstrained optimization problem. Methods for solving such problems in large-scale machine learning, including deep learning and deep reinforcement learning (RL), are generally restricted to first-order algorithms such as stochastic gradient descent (SGD). Although SGD iterates are inexpensive to compute, they have slow theoretical convergence rates, and they require extensive trial and error to tune many learning parameters. Using second-order curvature information to find search directions can make convergence more robust for non-convex optimization problems. However, computing Hessian matrices for large-scale problems is not computationally practical. Quasi-Newton methods instead construct an approximation of the Hessian matrix to build a quadratic model of the objective function. Like SGD, quasi-Newton methods require only first-order gradient information, but they can achieve superlinear convergence, which makes them attractive alternatives to SGD. The limited-memory Broyden–Fletcher–Goldfarb–Shanno (L-BFGS) approach is one of the most popular quasi-Newton methods and constructs positive definite Hessian approximations. In this chapter, we propose efficient optimization methods based on L-BFGS quasi-Newton methods using line search and trust-region strategies. Our methods bridge the gap between first- and second-order methods by using gradient information to compute low-rank updates to Hessian approximations. We provide formal convergence analysis of these methods as well as empirical results on deep learning applications, such as image classification tasks and deep reinforcement learning on a set of Atari 2600 video games. Our results show robust convergence with favorable generalization characteristics as well as fast training time.
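To make the idea of building a positive definite Hessian approximation from gradient information concrete, the following is a minimal sketch of the textbook L-BFGS two-loop recursion combined with a simple backtracking (Armijo) line search. It is an illustration under stated assumptions, not the chapter's algorithm: the function names `two_loop_direction` and `lbfgs_minimize`, the memory size, the tolerances, and the deterministic quadratic test problem are all hypothetical choices made for this example, and the trust-region variant is not shown.

```python
import numpy as np


def two_loop_direction(grad, s_list, y_list):
    """Return the search direction -H @ grad, where H is the L-BFGS inverse
    Hessian approximation built from the stored (s, y) pairs (oldest first)."""
    q = grad.copy()
    history = []
    # First loop: walk from the newest pair to the oldest.
    for s, y in zip(reversed(s_list), reversed(y_list)):
        rho = 1.0 / y.dot(s)
        alpha = rho * s.dot(q)
        history.append((alpha, rho))
        q -= alpha * y
    # Scale by gamma = s'y / y'y, a common choice of initial matrix (assumption).
    if s_list:
        s, y = s_list[-1], y_list[-1]
        q *= s.dot(y) / y.dot(y)
    # Second loop: walk from the oldest pair to the newest.
    for (alpha, rho), s, y in zip(reversed(history), s_list, y_list):
        beta = rho * y.dot(q)
        q += (alpha - beta) * s
    return -q


def lbfgs_minimize(f, grad_f, x0, memory=5, max_iter=200, tol=1e-6):
    """Minimize f with L-BFGS directions and Armijo backtracking (illustrative)."""
    x = np.asarray(x0, dtype=float).copy()
    g = grad_f(x)
    s_list, y_list = [], []
    for _ in range(max_iter):
        if np.linalg.norm(g) < tol:
            break
        p = two_loop_direction(g, s_list, y_list)
        # Backtracking line search on the sufficient-decrease condition.
        t, fx, slope = 1.0, f(x), g.dot(p)
        while f(x + t * p) > fx + 1e-4 * t * slope and t > 1e-12:
            t *= 0.5
        x_new = x + t * p
        g_new = grad_f(x_new)
        s, y = x_new - x, g_new - g
        # Store the pair only if the curvature condition s'y > 0 holds,
        # which keeps the implicit Hessian approximation positive definite.
        if y.dot(s) > 1e-10:
            s_list.append(s)
            y_list.append(y)
            if len(s_list) > memory:
                s_list.pop(0)
                y_list.pop(0)
        x, g = x_new, g_new
    return x


# Usage on a small convex quadratic 0.5 x'Ax - b'x (hypothetical test problem).
A = np.diag(np.arange(1.0, 11.0))
b = np.ones(10)
x_star = lbfgs_minimize(lambda x: 0.5 * x @ A @ x - b @ x,
                        lambda x: A @ x - b,
                        np.zeros(10))
print(np.linalg.norm(A @ x_star - b))
```

Only the most recent `memory` pairs of iterate and gradient differences are kept, so storage and per-iteration cost grow linearly with the number of variables; this is the property that makes the limited-memory variant practical at scale. In a stochastic deep learning setting the gradients would come from mini-batches, which this deterministic sketch does not model.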

Copyright information

© 2020 Springer Nature Singapore Pte Ltd.

About this chapter

Cite this chapter

Rafati, J., Marcia, R.F. (2020). Quasi-Newton Optimization Methods for Deep Learning Applications. In: Wani, M., Kantardzic, M., Sayed-Mouchaweh, M. (eds) Deep Learning Applications. Advances in Intelligent Systems and Computing, vol 1098. Springer, Singapore. https://doi.org/10.1007/978-981-15-1816-4_2

DOI: https://doi.org/10.1007/978-981-15-1816-4_2

Published:

Publisher Name: Springer, Singapore

Print ISBN: 978-981-15-1815-7

Online ISBN: 978-981-15-1816-4

eBook Packages: Intelligent Technologies and Robotics (R0)