Abstract

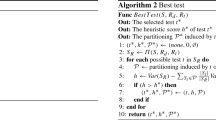

Multi-target regression is concerned with the simultaneous prediction of multiple continuous target variables based on the same set of input variables. It arises in several interesting industrial and environmental application domains, such as ecological modelling and energy forecasting. This paper presents an ensemble method for multi-target regression that constructs new target variables via random linear combinations of existing targets. We discuss the connection of our approach with multi-label classification algorithms, in particular RAkEL, which originally inspired this work, and a family of recent multi-label classification algorithms that involve output coding. Experimental results on 12 multi-target datasets show that our approach performs significantly better than a strong baseline that learns a single model for each target using gradient boosting, and that it compares favourably to the multi-objective random forest approach, a state-of-the-art method. The experiments further show that the improvement of our approach is larger when stronger unconditional dependencies exist among the targets.
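The abstract does not spell out how the random linear combinations are built or how predictions are mapped back to the original targets, so the following is only a minimal sketch under stated assumptions. It assumes each new target mixes `k` randomly chosen original targets with coefficients drawn uniformly from [0, 1], and that predictions for the original targets are recovered by solving the resulting linear system in the least-squares sense. Ordinary least squares stands in as the base learner here purely to keep the example self-contained; the paper's experiments use gradient boosting. The names `fit_rlc`, `predict_rlc`, `n_combinations` and `k` are hypothetical.

```python
import numpy as np


def fit_rlc(X, Y, n_combinations=20, k=2, seed=0):
    """Fit one linear model per random linear combination of the targets.

    Assumptions (not taken from the abstract): each combination uses k
    distinct targets with coefficients drawn uniformly from [0, 1].
    """
    rng = np.random.default_rng(seed)
    q = Y.shape[1]

    # Coefficient matrix C: one row per new target, k non-zeros per row.
    C = np.zeros((n_combinations, q))
    for i in range(n_combinations):
        idx = rng.choice(q, size=k, replace=False)
        C[i, idx] = rng.uniform(0.0, 1.0, size=k)

    # New targets: each column of Z is a linear combination of columns of Y.
    Z = Y @ C.T

    # Stand-in base learner: ordinary least squares with an intercept column.
    Xb = np.hstack([X, np.ones((X.shape[0], 1))])
    W, *_ = np.linalg.lstsq(Xb, Z, rcond=None)
    return C, W


def predict_rlc(X, C, W):
    """Predict the combined targets, then solve C y = z for the originals."""
    Xb = np.hstack([X, np.ones((X.shape[0], 1))])
    Z_hat = Xb @ W

    # Least-squares solution of the overdetermined system, one per instance.
    Y_hat_T, *_ = np.linalg.lstsq(C, Z_hat.T, rcond=None)
    return Y_hat_T.T
```

With more combinations than targets the system is overdetermined, so errors made on individual combined targets can partly average out when the original targets are recovered. Every target needs to appear in at least one combination for the recovery step to be well posed.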

Copyright information

© 2014 Springer-Verlag Berlin Heidelberg

About this paper

Cite this paper

Tsoumakas, G., Spyromitros-Xioufis, E., Vrekou, A., Vlahavas, I. (2014). Multi-target Regression via Random Linear Target Combinations. In: Calders, T., Esposito, F., Hüllermeier, E., Meo, R. (eds) Machine Learning and Knowledge Discovery in Databases. ECML PKDD 2014. Lecture Notes in Computer Science, vol 8726. Springer, Berlin, Heidelberg. https://doi.org/10.1007/978-3-662-44845-8_15

DOI: https://doi.org/10.1007/978-3-662-44845-8_15

Publisher Name: Springer, Berlin, Heidelberg

Print ISBN: 978-3-662-44844-1

Online ISBN: 978-3-662-44845-8

eBook Packages: Computer Science, Computer Science (R0)