Abstract

Applications of reinforcement learning to real robots in dynamic multi-agent environments are limited by the huge exploration space and the enormously long learning time. RoboCup competitions are a typical example, since other agents and their behavior easily cause the state and action spaces to explode.

This paper presents a method that uses the state value functions of macro actions to explore appropriate behavior efficiently in a multi-agent environment, so that the learning agent can acquire cooperative behavior with its teammates and competitive behavior against its opponents.

The key ideas are as follows. First, the agent learns a few macro actions and their state value functions beforehand by reinforcement learning. Second, an appropriate initial controller for learning cooperative behavior is generated from these state value functions. The initial controller uses the state values of the macro actions so that the learner tends to select good macro actions and avoid useless ones. By combining these ideas with a two-layer hierarchical system, the proposed method performs better during learning than conventional methods.
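The abstract does not specify how the initial controller turns state values into a selection rule. As a minimal sketch, assuming a softmax mapping (an illustrative choice, not necessarily the authors' method), the state values of the macro actions could bias the initial selection as follows. The function names, the `temperature` parameter, and the example macro actions and values are all hypothetical.

```python
import math


def initial_macro_action_preferences(state_values, temperature=0.1):
    """Map macro-action state values to selection probabilities.

    Assumption: a softmax over state values. The source only says the
    initial controller favors good macro actions over useless ones.
    """
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    # Subtract the maximum value for numerical stability.
    max_value = max(state_values.values())
    weights = {
        name: math.exp((value - max_value) / temperature)
        for name, value in state_values.items()
    }
    total = sum(weights.values())
    return {name: weight / total for name, weight in weights.items()}


def greedy_macro_action(state_values):
    """Return the macro action with the highest state value."""
    return max(state_values, key=state_values.get)


# Hypothetical state values of three pre-learned macro actions
# in the current state (made-up numbers for illustration only).
example_values = {"pass": 0.8, "shoot": 0.3, "dribble": 0.1}
preferences = initial_macro_action_preferences(example_values)
best = greedy_macro_action(example_values)
```

With such a starting point, the upper layer of a hierarchical learner would begin from preferences that already favor promising macro actions instead of exploring uniformly, which is the intuition the abstract gives for the shorter learning time.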

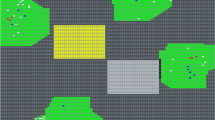

This paper presents a case study of a 4 (defense team) on 5 (offense team) game task, in which the learning agent (a passer on the offense team) successfully acquired teamwork plays (pass and shoot) within a shorter learning time.

Copyright information

© 2010 Springer-Verlag Berlin Heidelberg

About this paper

Cite this paper

Shimada, K., Takahashi, Y., Asada, M. (2010). Efficient Behavior Learning by Utilizing Estimated State Value of Self and Teammates. In: Baltes, J., Lagoudakis, M.G., Naruse, T., Ghidary, S.S. (eds) RoboCup 2009: Robot Soccer World Cup XIII. RoboCup 2009. Lecture Notes in Computer Science, vol 5949. Springer, Berlin, Heidelberg. https://doi.org/10.1007/978-3-642-11876-0_31

DOI: https://doi.org/10.1007/978-3-642-11876-0_31

Publisher Name: Springer, Berlin, Heidelberg

Print ISBN: 978-3-642-11875-3

Online ISBN: 978-3-642-11876-0

eBook Packages: Computer Science, Computer Science (R0)