Abstract

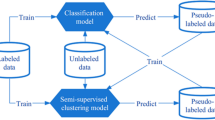

Semi-supervised learning is a paradigm that exploits unlabeled data in addition to labeled data to improve the generalization error of a supervised learning algorithm. Although regression is as important as classification in real-world applications, most research in semi-supervised learning concentrates on classification. In particular, although Co-Training is a popular semi-supervised learning algorithm, little work has been done on developing new Co-Training style algorithms for semi-supervised regression. In this paper, a semi-supervised regression framework denoted CoBCReg is proposed. It uses an ensemble of diverse regressors for semi-supervised learning and requires neither redundant independent views nor different base learning algorithms. Experimental results show that CoBCReg can effectively exploit unlabeled data to improve the regression estimates.
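The abstract describes CoBCReg only at a high level. The Python sketch below is a hedged illustration of the general "co-training by committee" idea for regression. It is not the authors' algorithm, whose details are given in the full chapter. Several choices here are assumptions made for brevity. It uses a single base learner, a k-nearest-neighbour regressor on scalar inputs. Diversity comes from bagging (bootstrap replicates), so no redundant views and no different learning algorithms are needed. Confidence is scored as low prediction variance among the "companion" members (every member except the one being taught). All function names and parameters (`co_train_by_committee`, `pool_size`, `per_round`, and so on) are hypothetical.

```python
import random
from statistics import mean, pvariance


def knn_predict(train, x, k):
    """Predict y at scalar x as the mean target of the k nearest training pairs."""
    nearest = sorted(train, key=lambda pair: abs(pair[0] - x))[:k]
    return mean(y for _, y in nearest)


def bootstrap(data, rng):
    """Draw a bootstrap replicate (sampling with replacement), as in bagging."""
    return [rng.choice(data) for _ in data]


def co_train_by_committee(labeled, unlabeled, n_members=3, k=3,
                          iterations=5, pool_size=10, per_round=1, seed=0):
    """Hypothetical co-training-by-committee loop for 1-D regression.

    labeled: list of (x, y) pairs; unlabeled: list of x values.
    Returns one training set per committee member (labeled + pseudo-labeled).
    """
    if n_members < 2:
        raise ValueError("need at least two members so companions exist")
    rng = random.Random(seed)
    # Diversity from bagging alone: every member uses the same base learner
    # (kNN) but trains on its own bootstrap replicate of the labeled data.
    members = [bootstrap(labeled, rng) for _ in range(n_members)]
    remaining = list(unlabeled)  # copy so the caller's list is not mutated
    for _ in range(iterations):
        for j in range(n_members):
            if not remaining:
                return members
            # Companion committee of member j: all other members.
            companions = [m for i, m in enumerate(members) if i != j]
            pool = rng.sample(remaining, min(pool_size, len(remaining)))
            scored = []
            for x in pool:
                preds = [knn_predict(m, x, k) for m in companions]
                # Assumed confidence score: low variance means high agreement.
                scored.append((pvariance(preds), x, mean(preds)))
            scored.sort()
            # Member j receives the most confident pseudo-labeled examples.
            for _, x, y_hat in scored[:per_round]:
                members[j].append((x, y_hat))
                remaining.remove(x)
    return members


def committee_predict(members, x, k=3):
    """Final estimate: the average of all members' kNN predictions."""
    return mean(knn_predict(m, x, k) for m in members)


if __name__ == "__main__":
    labeled = [(float(x), 2.0 * x) for x in range(0, 10, 2)]
    unlabeled = [1.0, 3.0, 5.0, 7.0, 9.0]
    members = co_train_by_committee(labeled, unlabeled, k=2, iterations=2,
                                    pool_size=5)
    print(committee_predict(members, 5.0, k=2))
```

Each member is refined only with examples labeled by the *other* members. Updates made while one member is being taught then influence later rounds, when that member serves as a companion for the others. This exchange of labels is the co-training element. Variance-based confidence is only a simple stand-in; the chapter itself should be consulted for the confidence measure and base regressors CoBCReg actually uses.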

Copyright information

© 2009 Springer-Verlag Berlin Heidelberg

About this paper

Cite this paper

Abdel Hady, M.F., Schwenker, F., Palm, G. (2009). Semi-supervised Learning for Regression with Co-training by Committee. In: Alippi, C., Polycarpou, M., Panayiotou, C., Ellinas, G. (eds) Artificial Neural Networks – ICANN 2009. ICANN 2009. Lecture Notes in Computer Science, vol 5768. Springer, Berlin, Heidelberg. https://doi.org/10.1007/978-3-642-04274-4_13

DOI: https://doi.org/10.1007/978-3-642-04274-4_13

Publisher Name: Springer, Berlin, Heidelberg

Print ISBN: 978-3-642-04273-7

Online ISBN: 978-3-642-04274-4

eBook Packages: Computer Science, Computer Science (R0)