Abstract

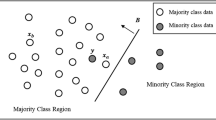

In this paper, a novel inverse random undersampling (IRUS) method is proposed for the class imbalance problem. The main idea is to severely undersample the negative (majority) class, thus creating a large number of distinct negative training sets. For each training set, we then find a linear discriminant that separates the positive class from the negative class. By combining the multiple designs through voting, we construct a composite boundary between the positive class and the negative class. The proposed methodology is applied to 11 UCI data sets, and experimental results indicate a significant increase in Area Under the Curve (AUC) when compared with many existing class-imbalance learning methods.
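
The abstract describes IRUS only at a high level. The following is a minimal Python/NumPy sketch under stated assumptions, not the authors' implementation: Fisher's linear discriminant stands in for "a linear discriminant", each expert's threshold is placed midway between the projected class means, the parameters `subset_size` and `n_subsets` are hypothetical, and the composite score is taken to be the fraction of experts voting positive. That vote fraction can be ranked to compute AUC, here via the Mann-Whitney formulation. The toy data are synthetic and unrelated to the paper's UCI experiments.

```python
import numpy as np


def fit_fisher_lda(X_pos, X_neg, reg=1e-3):
    """Two-class Fisher linear discriminant (an illustrative choice).

    Returns (w, b) such that x @ w + b > 0 is read as a vote for the
    positive class. The bias b puts the boundary midway between the
    projected class means (an assumption, not taken from the paper).
    """
    m_pos, m_neg = X_pos.mean(axis=0), X_neg.mean(axis=0)
    # Pooled within-class scatter; the ridge term keeps it invertible even
    # when the negative subset holds only a handful of samples.
    S_w = (X_pos - m_pos).T @ (X_pos - m_pos) + (X_neg - m_neg).T @ (X_neg - m_neg)
    S_w += reg * np.eye(X_pos.shape[1])
    w = np.linalg.solve(S_w, m_pos - m_neg)
    b = -0.5 * (m_pos + m_neg) @ w
    return w, b


def irus_fit(X_pos, X_neg, subset_size, n_subsets, seed=0):
    """Train one discriminant per small random subset of the negative class.

    subset_size and n_subsets are hypothetical parameters; this sketch
    assumes subset_size is chosen much smaller than len(X_pos), i.e. the
    negative class is severely undersampled in every training set.
    """
    rng = np.random.default_rng(seed)
    experts = []
    for _ in range(n_subsets):
        # Sample negatives without replacement within each subset.
        idx = rng.choice(len(X_neg), size=subset_size, replace=False)
        experts.append(fit_fisher_lda(X_pos, X_neg[idx]))
    return experts


def irus_score(experts, X):
    """Fraction of experts voting 'positive' for each row of X."""
    votes = np.stack([(X @ w + b) > 0 for w, b in experts])
    return votes.mean(axis=0)


def auc(scores_pos, scores_neg):
    """AUC as P(score_pos > score_neg), with ties counted as 1/2."""
    diff = scores_pos[:, None] - scores_neg[None, :]
    return (np.sum(diff > 0) + 0.5 * np.sum(diff == 0)) / diff.size


# Synthetic toy data (not the paper's UCI sets): 20 positives vs 500 negatives.
rng = np.random.default_rng(42)
X_pos = rng.normal(loc=1.5, size=(20, 2))
X_neg = rng.normal(loc=0.0, size=(500, 2))

experts = irus_fit(X_pos, X_neg, subset_size=5, n_subsets=100)
s_pos, s_neg = irus_score(experts, X_pos), irus_score(experts, X_neg)
print(f"training-set AUC: {auc(s_pos, s_neg):.3f}")
```

Because each expert sees a different tiny negative subset, both its direction and its threshold vary slightly from expert to expert; the vote fraction therefore behaves like a smoothed ranking score rather than a single hard decision, which is what makes AUC a natural way to evaluate the composite.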

Copyright information

© 2009 Springer-Verlag Berlin Heidelberg

About this paper

Cite this paper

Tahir, M.A., Kittler, J., Mikolajczyk, K., Yan, F. (2009). A Multiple Expert Approach to the Class Imbalance Problem Using Inverse Random under Sampling. In: Benediktsson, J.A., Kittler, J., Roli, F. (eds) Multiple Classifier Systems. MCS 2009. Lecture Notes in Computer Science, vol 5519. Springer, Berlin, Heidelberg. https://doi.org/10.1007/978-3-642-02326-2_9

DOI: https://doi.org/10.1007/978-3-642-02326-2_9

Publisher Name: Springer, Berlin, Heidelberg

Print ISBN: 978-3-642-02325-5

Online ISBN: 978-3-642-02326-2

eBook Packages: Computer Science, Computer Science (R0)