Abstract

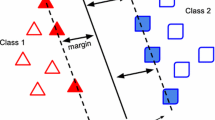

When training a Support Vector Machine (SVM), selecting the training data set is an important issue, because overfitting can occur when a large amount of training data is used. A user must decide how much training data to use and then select those data from a given data set. We treated SVM training data selection as a multi-objective optimization problem and applied our proposed multi-objective genetic algorithm (MOGA) search strategy to it. Obtaining a broad set of Pareto solutions is essential for understanding the characteristics of the problem, and we considered the proposed search strategy well suited to this purpose. The experimental results indicated that selecting the training data set by MOGA is effective for SVM training.

Keywords

- Support Vector Machine

- Pareto Front

- Multiobjective Optimization

- Support Vector Machine Model

- Training Error

These keywords were added by machine and not by the authors. This process is experimental and the keywords may be updated as the learning algorithm improves.

Copyright information

© 2009 Springer-Verlag Berlin Heidelberg

About this paper

Cite this paper

Hiroyasu, T., Nishioka, M., Miki, M., Yokouchi, H. (2009). Application of MOGA Search Strategy to SVM Training Data Selection. In: Ehrgott, M., Fonseca, C.M., Gandibleux, X., Hao, JK., Sevaux, M. (eds) Evolutionary Multi-Criterion Optimization. EMO 2009. Lecture Notes in Computer Science, vol 5467. Springer, Berlin, Heidelberg. https://doi.org/10.1007/978-3-642-01020-0_14

DOI: https://doi.org/10.1007/978-3-642-01020-0_14

Publisher Name: Springer, Berlin, Heidelberg

Print ISBN: 978-3-642-01019-4

Online ISBN: 978-3-642-01020-0

eBook Packages: Computer Science, Computer Science (R0)