Abstract

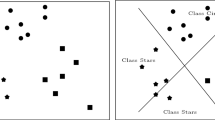

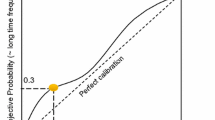

In this paper, we introduce a new approach to selecting the best hyperplane classifier (BHC) from the optimal pairwise linear classifier. We first propose a procedure for selecting the BHC, and then analyze the conditions under which the BHC is selected. For one of these cases, we formally show that the BHC and Fisher’s classifier (FC) coincide. We also present an empirical and graphical analysis on synthetic data and real-life datasets from the UCI machine learning repository, which involves the optimal quadratic classifier, the BHC, the optimal pairwise linear classifier, and FC.

Keywords

- Random Vector

- Linear Discriminant Analysis

- Covariance Matrix

- Machine Intelligence

- Linear Dimensionality Reduction

These keywords were added by machine and not by the authors. This process is experimental and the keywords may be updated as the learning algorithm improves.

Copyright information

© 2003 Springer-Verlag Berlin Heidelberg

About this paper

Cite this paper

Rueda, L. (2003). A New Approach That Selects a Single Hyperplane from the Optimal Pairwise Linear Classifier. In: Sanfeliu, A., Ruiz-Shulcloper, J. (eds) Progress in Pattern Recognition, Speech and Image Analysis. CIARP 2003. Lecture Notes in Computer Science, vol 2905. Springer, Berlin, Heidelberg. https://doi.org/10.1007/978-3-540-24586-5_64

DOI: https://doi.org/10.1007/978-3-540-24586-5_64

Publisher Name: Springer, Berlin, Heidelberg

Print ISBN: 978-3-540-20590-6

Online ISBN: 978-3-540-24586-5

eBook Packages: Springer Book Archive