Abstract

In 2011, the Great East Japan Earthquake caused serious damage in Japan, especially through the Tsunami inundation it triggered. Since this disaster, concern about future large earthquakes and Tsunami disasters in Japan has grown, and so has the demand for their prevention and mitigation. To respond to this demand, we are designing and developing a real-time Tsunami inundation analysis system on SX-ACE, a brand-new vector supercomputer installed at Tohoku University, as a case study of urgent computing for earthquake and Tsunami disasters. This article presents an overview of the system and its performance in the Nankai trough earthquake case.

1 Introduction

On March 11, 2011, the Great East Japan Earthquake, with a magnitude of 9.0, occurred off the Pacific coast of Tohoku, the northern part of the main island of Japan. A huge Tsunami with a height of more than 40 m, triggered by the earthquake, arrived at coastal cities of the Tohoku area 30 min after the earthquake, penetrated up to 10 km inland, and destroyed the cities. More than 18,000 victims (dead or missing) are attributed to this Tsunami.

Since the Great East Japan Earthquake, concern about future large earthquakes and Tsunami disasters in Japan has grown, and the demand for their prevention and mitigation is increasing, because several large earthquakes are expected with high probability in the seas around Japan in the near future. For example, according to a report published by the Cabinet Office, Government of Japan [1], the probability of a Nankai Trough earthquake in the next 30 years is 70 %, and in the worst case the death toll of this earthquake is estimated to reach 320,000, with an economic loss of 220 trillion yen.

To respond to this demand, we started to consider how our HPC resources and research achievements could contribute to the prevention and mitigation of natural disasters. Here we focus on the following three points:

- Prompt responses to disasters to reduce damage, such as issuing evacuation warnings for dangerous zones and rescuing survivors as soon as possible,

- Detailed and highly accurate analysis and forecasting of Tsunami inundation soon after a big earthquake that may trigger a huge Tsunami, and

- Enhancement of social resilience against natural disasters by precise simulation using HPC.

Based on these considerations, we are designing and developing a real-time Tsunami inundation analysis system. This article presents an overview of the system and discusses the Tsunami simulation on SX-ACE, the brand-new vector supercomputer developed by NEC and installed at Tohoku University in 2015 [2].

2 A Real-Time Tsunami Inundation Analysis System

Figure 1 shows the configuration of the Tsunami inundation analysis system. The system is designed to provide information about the inundation of coastal cities at a high resolution of 10-m grids within 20 min of an earthquake occurrence. To meet such an aggressive target, a highly accurate, 10-m mesh-level Tsunami inundation simulation within a short time period, we have constructed a coupled system of a real-time GPS-based earthquake observation system and an on-line Tsunami simulation on our SX-ACE system. The GPS observation system monitors the land motion at more than 1,300 points in Japan around the clock. From the land-motion data measured in real time after an earthquake, the fault model of the earthquake is estimated, and the parameters needed for the Tsunami simulation are generated automatically. These parameters are immediately transferred over a network to the Tsunami simulator on SX-ACE, and the simulation is triggered as soon as the parameters arrive. The job management system, NQS II, has been specially enhanced for the real-time Tsunami inundation analysis system to support urgent job prioritization: its urgent job management function executes the Tsunami inundation simulation job on the SX-ACE system at the highest priority, immediately suspending the other active jobs on the system. The suspended jobs resume automatically as soon as the Tsunami inundation simulation completes.
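The suspend-and-resume behavior of urgent job prioritization can be illustrated with a small toy model. The sketch below is not the NQS II interface; the class and method names (`Job`, `ToyScheduler`, `submit`, `complete`) are hypothetical, and the model assumes that regular jobs always find free resources and start at once.

```python
from dataclasses import dataclass, field


@dataclass
class Job:
    name: str
    urgent: bool = False
    state: str = "queued"  # queued -> running -> suspended / done


@dataclass
class ToyScheduler:
    """Toy model of urgent-job preemption (hypothetical, not NQS II)."""
    jobs: list = field(default_factory=list)

    def submit(self, job):
        self.jobs.append(job)
        if job.urgent:
            # Suspend every running job before the urgent one starts.
            for other in self.jobs:
                if other is not job and other.state == "running":
                    other.state = "suspended"
        # Toy assumption: resources are always available, so the job starts now.
        job.state = "running"

    def complete(self, job):
        job.state = "done"
        if job.urgent:
            # Resume the jobs that were suspended for the urgent run.
            for other in self.jobs:
                if other.state == "suspended":
                    other.state = "running"


sched = ToyScheduler()
regular = Job("regular_simulation")
tsunami = Job("tsunami_inundation", urgent=True)

sched.submit(regular)
sched.submit(tsunami)
states_during = (regular.state, tsunami.state)  # regular job is suspended

sched.complete(tsunami)
states_after = (regular.state, tsunami.state)   # regular job runs again
```

In this model the regular job never loses its place: it is only suspended while the urgent job runs, which mirrors the automatic resumption described above.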

After the Tsunami simulation completes on the SX-ACE system, the results are visualized on a city map and delivered to officers of designated local governments. The visualized information includes the Tsunami arrival time, the maximum inundation depth, Tsunami level changes, estimates of the affected population, houses, and buildings, the inundation start time, and the maximum water level. All the visualized data are available through a web interface.

To realize a highly accurate Tsunami inundation simulation within a reasonable time, we divide the computation domain in a hierarchical fashion. Figure 2 shows an example of the hierarchical decomposition of the computation domain for Kochi city and its coastal area. Table 1 shows the actual grid sizes, from the coarsest grid with an 810-m mesh down to the finest grid with a 10-m mesh. With these multi-level grids, we implement the TUNAMI (Tohoku University’s Numerical Analysis Model for Investigating tsunami) code [3] for SX-ACE. The TUNAMI code was originally developed by Tohoku University and is authorized by UNESCO and the Japanese Government as the official code for Tsunami inundation analysis.
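As a rough illustration of such a grid hierarchy, the sketch below derives the intermediate mesh sizes between the 810-m and 10-m levels. The refinement ratio of 3 between adjacent levels is an assumption made here (it is the ratio that connects 810 m to 10 m in four steps); the actual levels are those listed in Table 1, and the function name `nested_grid_sizes` is hypothetical.

```python
def nested_grid_sizes(coarsest_m, finest_m, ratio=3):
    """Return mesh sizes from coarsest to finest, dividing by `ratio` per level.

    The ratio of 3 is an assumption of this sketch, not a value from the text.
    """
    sizes = [coarsest_m]
    while sizes[-1] > finest_m:
        if sizes[-1] % ratio != 0:
            raise ValueError("mesh size is not divisible by the refinement ratio")
        sizes.append(sizes[-1] // ratio)
    if sizes[-1] != finest_m:
        raise ValueError("finest mesh is not reachable with this ratio")
    return sizes


levels = nested_grid_sizes(810, 10)
print(levels)  # [810, 270, 90, 30, 10]
```

Under this assumption the hierarchy has five levels, so only the small coastal area of interest is computed at the expensive 10-m resolution.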

The TUNAMI code solves the non-linear shallow water equations and uses the staggered leap-frog 2D finite difference method as its numerical scheme [3]. Figure 3 shows the structure of the TUNAMI code. There are two high-cost kernels in the TUNAMI code: one calculates the mass conservation, and the other calculates the equation of motion. Therefore, we intensively apply several optimization techniques to these two kernels to exploit the potential of the SX-ACE supercomputer. As both kernels have the same structure of doubly nested loops, we vectorize the inner loop for efficient execution on the vector processor of each node, and parallelize the outer loop across MPI processes for efficient multi-node parallel processing. In addition to vectorization and parallelization, we apply several tuning techniques, such as inlining subroutines, optimizing I/O routines, and ADB tuning of stencil operations. Among these techniques, ADB tuning is particularly effective for obtaining a high sustained performance, because the ADB (Assignable Data Buffer), an on-chip memory of the SX-ACE vector processor, supplies data with high locality to the vector pipes on the chip without high-cost off-chip memory accesses [4].
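To make the two-kernel structure concrete, the following minimal sketch applies a staggered leap-frog update to the linearized shallow water equations in one dimension with a constant depth and closed walls. It is a simplification for illustration only, not the TUNAMI code: the real scheme is 2D and non-linear, and the function name `step` and all parameter values are assumptions of this sketch.

```python
import math

G = 9.81  # gravitational acceleration [m/s^2]


def step(eta, flux, depth, dx, dt):
    """Advance one leap-frog step on a staggered 1D grid.

    eta  : water level at the n cell centres
    flux : discharge at the n + 1 cell faces (flux[0] and flux[n] are walls)
    """
    n = len(eta)
    # Kernel 1: mass conservation, updates water levels from flux differences.
    for i in range(n):
        eta[i] -= dt / dx * (flux[i + 1] - flux[i])
    # Kernel 2: equation of motion, updates interior fluxes from the new levels.
    for i in range(1, n):
        flux[i] -= G * depth * dt / dx * (eta[i] - eta[i - 1])


n, dx, depth = 200, 10.0, 50.0
dt = 0.5 * dx / math.sqrt(G * depth)  # half of the CFL limit dx / sqrt(g h)
eta = [math.exp(-((i - n / 2) * dx / 100.0) ** 2) for i in range(n)]  # initial hump
flux = [0.0] * (n + 1)

mass_before = sum(eta) * dx
for _ in range(100):
    step(eta, flux, depth, dx, dt)
mass_after = sum(eta) * dx
```

Because the wall fluxes stay zero, the flux differences cancel in the sum and the total water volume is conserved up to rounding. In the 2D code, each kernel becomes a doubly nested loop, which is where the inner-loop vectorization and outer-loop MPI decomposition described above apply.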

3 Performance Evaluation

In this section, we examine the performance of our implementation of the TUNAMI code on the SX-ACE system in comparison with the SX-9 vector system, the Xeon-based scalar-parallel system LX 406, and the K computer. Table 2 summarizes the specifications of these systems. SX-9 and SX-ACE are vector systems; their advantage over the scalar systems, LX 406 and the K computer, is a higher memory bandwidth at the same peak performance, which results in a higher system B/F, the ratio of memory bandwidth to peak performance. As many applications are memory-intensive, a higher B/F is a key factor in improving their sustained performance. The Nankai trough case with a magnitude of 9.0 is used for the experiments.
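The B/F ratio can be computed directly from a system's memory bandwidth and peak performance. The per-node figures in the sketch below (256 GB/s and 256 Gflop/s for SX-ACE, 64 GB/s and 128 Gflop/s for the K computer) are commonly quoted values assumed here for illustration; they are not taken from Table 2, and the function name `bytes_per_flop` is hypothetical.

```python
def bytes_per_flop(mem_bandwidth_gb_s, peak_gflop_s):
    """System B/F: memory bandwidth divided by peak floating-point rate."""
    return mem_bandwidth_gb_s / peak_gflop_s


# Assumed per-node figures (commonly quoted, not from Table 2 of this article).
sx_ace_bf = bytes_per_flop(256.0, 256.0)
k_computer_bf = bytes_per_flop(64.0, 128.0)

print(sx_ace_bf, k_computer_bf)  # 1.0 0.5
```

With these assumed figures, each floating-point operation on SX-ACE can be fed with twice as many bytes from memory as on the K computer, which matters for a memory-intensive stencil code.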

The TUNAMI code was originally developed for Xeon-based scalar systems. Nevertheless, our optimization techniques for its migration to the SX-ACE system lead to a significant performance improvement: in single-core execution, SX-ACE is faster than the LX 406 system by a factor of 5.5, as shown in Fig. 4. As the ratio of the peak performances of the two systems’ cores is about three, the computing efficiency of the SX-ACE processor core, that is, the ratio of its sustained performance to its peak performance, is about twice that of the Xeon Ivy Bridge core of the LX 406.

The high memory bandwidth, rather than the peak performance, plays the important role in obtaining a high efficiency in the execution of the TUNAMI code, because the TUNAMI code is a memory-intensive application. When comparing SX-ACE with SX-9, the execution times are almost the same, even though the single-core peak performance of SX-ACE is only 62.5 % of that of SX-9. The higher computing efficiency of SX-ACE results from the enhancement of its memory subsystem over that of SX-9, such as a shorter memory latency, an enlarged ADB with MSHR (Miss Status Handling Registers), a short-cut mechanism in chaining vector pipes, and out-of-order vector load operations [5].
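The efficiency comparisons above follow from simple arithmetic: since computing efficiency is sustained performance divided by peak performance, the ratio of two efficiencies equals the speedup divided by the ratio of peak performances. The sketch below works this out for both comparisons, assuming that sustained performance is inversely proportional to execution time on the same workload and treating "almost the same" execution time as a speedup of 1.0. The function name `efficiency_ratio` is hypothetical.

```python
def efficiency_ratio(speedup, peak_ratio):
    """Ratio of computing efficiencies of system A over system B.

    efficiency = sustained / peak, so
    eff_A / eff_B = (sustained_A / sustained_B) / (peak_A / peak_B).
    """
    return speedup / peak_ratio


# SX-ACE core vs. LX 406 core: 5.5x faster with about 3x the peak performance.
ace_vs_xeon = efficiency_ratio(5.5, 3.0)

# SX-ACE core vs. SX-9 core: about equal run time with 62.5 % of the peak.
ace_vs_sx9 = efficiency_ratio(1.0, 0.625)

print(round(ace_vs_xeon, 2), round(ace_vs_sx9, 2))  # 1.83 1.6
```

The first value is the "about twice" efficiency gain over the Xeon core; the second indicates that an SX-ACE core is roughly 1.6 times as efficient as an SX-9 core under these assumptions.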

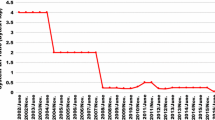

Figure 5 shows the execution time of the simulation in the multi-node environment on SX-ACE, LX 406, and the K computer. In the figure, the numbers next to the marks give the execution time of each system as the number of threads (cores) changes. Figure 5 indicates that the performance of the SX-ACE system with 512 cores is equivalent to that of the K computer with 13 K cores. This is because SX-ACE achieves a high computing efficiency, mainly thanks to its high memory bandwidth in cooperation with the on-chip ADB. The high sustained performance of a single core also reduces the number of MPI processes needed to reach the performance level required for the real-time Tsunami inundation simulation.

Figure 6 shows the timing chart of the entire process from the earthquake detection to the visualization of the simulation results. The process of the Tsunami inundation analysis system consists of two phases: the coseismic fault estimation phase and the Tsunami inundation simulation phase. For the Nankai trough earthquake case in Japan with a 6-h simulation of the Tsunami’s behavior, these two phases complete in less than 8 min and 5 min, respectively. In addition, the simulation results can be visualized and sent to local governments within 4 min after the simulation. Thus, the system completes the Tsunami inundation analysis at a 10-m grid resolution within 20 min.
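The time budget can be checked by summing the upper bounds quoted above. The sketch assumes that the three steps run strictly one after another with no idle time between them, which the text does not state explicitly; the variable names are hypothetical.

```python
# Upper bounds in minutes, as quoted in the text for the Nankai trough case.
PHASE_LIMITS_MIN = {
    "coseismic fault estimation": 8,
    "tsunami inundation simulation": 5,
    "visualization and delivery": 4,
}
TARGET_MIN = 20  # design target after the earthquake occurrence

total = sum(PHASE_LIMITS_MIN.values())
margin = TARGET_MIN - total
print(f"worst case {total} min, margin {margin} min")  # worst case 17 min, margin 3 min
```

Under this assumption the worst case stays 3 min inside the 20-min target.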

4 Summary

This article briefly described the design and implementation of a real-time Tsunami inundation analysis system on the vector supercomputer SX-ACE. This is the world's first achievement of a real-time Tsunami inundation analysis of a 6-h inundation behavior at the level of a 10-m mesh. We implemented the system very efficiently by exploiting the potential of SX-ACE, and as a result, even with 512 cores, we can complete a real-time simulation in 8 min after the occurrence of a big earthquake, which is equivalent to the performance of the K computer with 13 K cores. We have thus demonstrated that our SX-ACE system, with its capability of real-time Tsunami inundation simulation, has potential as a social infrastructure for homeland safety, like a weather forecasting system, in addition to its role as a research infrastructure for computational science and engineering applications.

References

1. Cabinet Office, Government of Japan. http://www.bousai.go.jp/jishin/nankai/nankaitrough_info.html

2. Kobayashi, H.: A new SX-ACE-based supercomputer system of Tohoku University. In: Sustained Simulation Performance 2015, pp. 3–151. Springer (2015)

3. Koshimura, S., et al.: Developing fragility functions for tsunami damage estimation using numerical model and post-tsunami data from Banda Aceh, Indonesia. Coastal Eng. J. 243–273 (2009)

4. Momose, S.: SX-ACE, Brand-new vector supercomputer for higher sustained performance I. In: Sustained Simulation Performance 2014, pp. 199–214. Springer (2014)

5. Momose, S.: Next generation vector supercomputer for providing higher sustained performance. In: COOL Chips 2013 (2013)

Acknowledgements

Many colleagues at Tohoku University, NEC, and its related companies made great contributions to this project. In particular, great thanks go to Professors Shunichi Koshimura, Ryota Hino, and Yusaku Ohta, Visiting Professors Akihiro Musa and Yoichi Murashima, and Visiting Researchers Hiroshi Matsuoka and Osamu Watanabe, all from Tohoku University.

© 2016 Springer International Publishing AG

Cite this paper

Kobayashi, H. (2016). A Case Study of Urgent Computing on SX-ACE: Design and Development of a Real-Time Tsunami Inundation Analysis System for Disaster Prevention and Mitigation. In: Resch, M., Bez, W., Focht, E., Patel, N., Kobayashi, H. (eds) Sustained Simulation Performance 2016. Springer, Cham. https://doi.org/10.1007/978-3-319-46735-1_11

DOI: https://doi.org/10.1007/978-3-319-46735-1_11

Publisher Name: Springer, Cham

Print ISBN: 978-3-319-46734-4

Online ISBN: 978-3-319-46735-1

eBook Packages: Mathematics and Statistics (R0)