Abstract

During experimental tests, optical displacement measurements can provide reliable data on the behavior of structural elements without altering key parameters such as damping, stiffness or mass, at low cost and with a high spatial density of measurements. Motion capture systems are used in applications ranging from medicine to cinematography and involve different image processing techniques, but their use to measure the response of civil structures is costly and of limited value in real implementations. Range/depth cameras, on the other hand, can provide a 3-D imaging solution to capture motion and displacements at an affordable cost. These cameras are widely available and used in the video game industry. This paper presents the first steps toward the implementation of a large-displacement measurement methodology and its application.

2.1 Introduction

The Kinect for Xbox360™ [1] is essentially a set of sensors comprising a triaxial accelerometer, an RGB camera and an infrared camera. It was initially developed to detect human features in three dimensions, with its primary application in video games. An infrared laser projects a pattern that turns the device into a range/depth camera for three-dimensional scene detection in which, unlike in other machine vision systems, lighting no longer plays an important role. This makes it a sensor with good performance [2–4] and remarkably low cost (<150 US $) [5, 6].
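The depth principle behind a projected-pattern sensor can be illustrated with the standard triangulation relation for a rectified projector-camera pair, Z = f·b/d, where f is the focal length in pixels, b the projector-camera baseline and d the measured disparity. Differentiating gives ΔZ ≈ Z²/(f·b)·Δd, so the depth quantization step grows with the square of the distance, which is one reason small displacements are hard to resolve. The sketch below assumes approximate values (f ≈ 580 px, b ≈ 7.5 cm, a disparity step of 1/8 px) for illustration only; they are not calibration results from this work.

```python
def depth_from_disparity(disparity_px, focal_px=580.0, baseline_m=0.075):
    """Depth (m) from disparity (px) for a rectified projector-camera pair: Z = f*b/d.

    focal_px and baseline_m are assumed, approximate values for illustration.
    """
    if disparity_px <= 0:
        raise ValueError("disparity must be positive")
    return focal_px * baseline_m / disparity_px


def depth_step(depth_m, disparity_step_px=0.125, focal_px=580.0, baseline_m=0.075):
    """Approximate depth change for one disparity step: dZ = Z**2 / (f*b) * dd.

    disparity_step_px = 1/8 px is an assumed sub-pixel quantization.
    """
    return depth_m ** 2 / (focal_px * baseline_m) * disparity_step_px


# Depth resolution degrades quadratically with distance from the sensor.
for z in (1.0, 2.0, 4.0):
    print(f"Z = {z:.1f} m -> depth step ~ {1000 * depth_step(z):.1f} mm")
```

Under these assumptions, doubling the sensor-to-object distance roughly quadruples the smallest resolvable change in depth.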

The proposed methodology improves on the raw data of the Kinect for Xbox360™ by means of 3D interpolation and a 3D correspondence technique [7] to measure the actual displacement field at a given time. Tests were made using two acquisition methodologies: one based on continuous 3D reconstruction with Kinect Fusion, which is included in the Microsoft Kinect framework, and the other extracting raw Kinect depth data in Matlab through the Image Acquisition Toolbox, which supports Kinect for Xbox360™ devices. Once an acquisition is made, a 3D interpolation is performed to normalize the scattered data, which are then processed with the 3D correspondence technique to improve the results.
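The normalization step can be sketched as resampling the scattered samples onto a regular grid. This is a minimal sketch that assumes the surface seen in one acquisition can be treated as a height field z(x, y) relative to the sensor, and uses SciPy's `griddata` with linear (Delaunay-based) interpolation; the paper does not state which interpolation scheme or grid spacing was used, and `regrid_depth` is a hypothetical helper.

```python
import numpy as np
from scipy.interpolate import griddata


def regrid_depth(points, spacing):
    """Resample scattered (x, y, z) samples onto a regular x-y grid.

    points: (N, 3) array; z is the depth or out-of-plane coordinate.
    spacing: grid step, in the same units as x and y.
    Returns (gx, gy, gz); gz is NaN outside the convex hull of the samples.
    """
    xy, z = points[:, :2], points[:, 2]
    xs = np.arange(xy[:, 0].min(), xy[:, 0].max() + spacing / 2, spacing)
    ys = np.arange(xy[:, 1].min(), xy[:, 1].max() + spacing / 2, spacing)
    gx, gy = np.meshgrid(xs, ys)
    # Linear interpolation over a Delaunay triangulation of the scattered samples.
    gz = griddata(xy, z, (gx, gy), method="linear")
    return gx, gy, gz


# Synthetic example: a tilted plane about 2 m from the sensor, sampled irregularly.
rng = np.random.default_rng(0)
pts = rng.uniform(0.0, 1.0, size=(500, 2))
cloud = np.column_stack([pts, 2.0 + 0.1 * pts[:, 0] - 0.05 * pts[:, 1]])
gx, gy, gz = regrid_depth(cloud, spacing=0.05)
```

Putting every acquisition on the same grid makes frames directly comparable node by node; a full 3D scheme would be needed for surveys that wrap around an object, such as the Kinect Fusion point clouds.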

Owing to its measuring characteristics and low cost, the Kinect has become a multipurpose sensor in areas ranging from surveys of complex three-dimensional scenes [8] and applications aimed at improving and reducing the cost of augmented reality systems [5] to the characterization of turbulent flows using multiple Kinects [9]. It has been used for 3D phenotyping of entire plants [10], for the evaluation of human body postures and for online medical evaluation using Kinect-generated point clouds in a clinical environment [3, 6, 11]. There are applications for tracking objects in 3D space [16] and machine vision applications in robotics for automated three-dimensional surveys [12]. There are also multiple calibration approaches [13–15], improvements to the sensor characteristics [16] and comparisons with instruments commonly used for three-dimensional surveys, such as laser scanners [13], all of which demonstrate the versatility of this sensor.

2.2 Static Displacement Approach

As an initial setup, a foam piece with three straight, mutually perpendicular segments, each aligned with a different coordinate direction, is deformed into a complex arbitrary shape. Reflective markers for the OPTITRACK motion capture system, which is composed of six near-infrared cameras, are placed on the surface of the piece to obtain a precise 3D location of each marker on the foam (Fig. 2.1).

The location of the OPTITRACK camera array is calibrated following the developer's instructions, and the position of each marker is captured. The geometry of the foam is then measured with the Kinect Fusion software by moving the Kinect around the piece until a full survey is completed, and the resulting three-dimensional point cloud is saved. The process is repeated for a second arbitrary deformed shape, which serves as the final setup of the piece (Fig. 2.2).

In CAD software, the marker positions were used to compare the Euclidean displacements between the initial and final positions of the piece. A total of 21 points on four faces of the piece were evaluated. The displacements obtained with the OPTITRACK motion capture system and with the Kinect are found to be in good agreement (Table 2.1).
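The comparison carried out in CAD can also be reproduced numerically. The sketch below assumes the marker coordinates from both systems are already expressed in a common reference frame and matched marker by marker; `euclidean_displacements` and `compare_systems` are hypothetical helpers, and the coordinates in the test are made up, not the paper's data.

```python
import numpy as np


def euclidean_displacements(initial, final):
    """Per-marker displacement magnitude ||p_final - p_initial|| for (N, 3) arrays."""
    initial = np.asarray(initial, dtype=float)
    final = np.asarray(final, dtype=float)
    return np.linalg.norm(final - initial, axis=1)


def compare_systems(ref_initial, ref_final, test_initial, test_final):
    """Compare displacement magnitudes from a reference system (e.g. motion capture)
    with those of a test system (e.g. Kinect) at the same markers.

    Assumes both systems share a reference frame and the same marker ordering.
    """
    d_ref = euclidean_displacements(ref_initial, ref_final)
    d_test = euclidean_displacements(test_initial, test_final)
    diff = d_test - d_ref
    return {
        "d_ref": d_ref,
        "d_test": d_test,
        "abs_error": np.abs(diff),
        "rmse": float(np.sqrt(np.mean(diff ** 2))),
    }
```

Because only displacement magnitudes are compared, a rigid offset between the two systems' frames cancels out, which is convenient when the frames are aligned only approximately.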

When the Coherent Point Drift (CPD) algorithm [7] is used to establish the correspondence between the point clouds, a good visual correspondence is obtained (Fig. 2.3). The CPD algorithm does not accurately assess the displacement at the six positions shown in Fig. 2.3, but it provides a good estimate of the Euclidean distances (Table 2.2).
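A compact version of the correspondence step is sketched below. It assumes the non-rigid variant of CPD described in [7] (the paper does not state which variant or parameter values were used); `beta`, `lam` and `w` are illustrative defaults, not the values from these tests. The moving cloud Y is represented as Gaussian mixture centroids that drift coherently toward the fixed cloud X; the E-step computes soft correspondences P, and the M-step solves for the displacement field.

```python
import numpy as np


def cpd_nonrigid(X, Y, beta=2.0, lam=2.0, w=0.1, max_iter=100, tol=1e-6):
    """Non-rigid Coherent Point Drift (after Myronenko and Song, 2010).

    X: (N, D) fixed point cloud, e.g. the final shape of the piece.
    Y: (M, D) moving point cloud, e.g. the initial shape.
    beta: Gaussian kernel width (smoothness of the motion).
    lam: regularization weight (larger gives a smoother displacement field).
    w: assumed outlier fraction, 0 <= w < 1.
    Returns the deformed cloud T (M, D) and soft correspondences P (M, N).
    """
    X = np.asarray(X, dtype=float)
    Y = np.asarray(Y, dtype=float)
    N, D = X.shape
    M = Y.shape[0]
    eps = np.finfo(float).eps
    # Gaussian affinity between moving points: G[i, j] = exp(-|y_i - y_j|^2 / (2 beta^2)).
    G = np.exp(-np.sum((Y[:, None, :] - Y[None, :, :]) ** 2, axis=2) / (2.0 * beta ** 2))
    T = Y.copy()
    sigma2 = np.sum((X[None, :, :] - Y[:, None, :]) ** 2) / (D * M * N)
    P = np.zeros((M, N))
    for _ in range(max_iter):
        # E-step: posterior that centroid m (moving point) generated data point n.
        dist2 = np.sum((X[None, :, :] - T[:, None, :]) ** 2, axis=2)  # (M, N)
        num = np.exp(-dist2 / (2.0 * sigma2))
        c = (2.0 * np.pi * sigma2) ** (D / 2.0) * w / (1.0 - w) * M / N
        den = num.sum(axis=0, keepdims=True) + c
        P = num / np.maximum(den, eps)
        P1 = P.sum(axis=1)            # (M,)
        Pt1 = P.sum(axis=0)           # (N,)
        Np = max(P1.sum(), eps)
        PX = P @ X                    # (M, D)
        # M-step: (d(P1) G + lam sigma2 I) W = P X - d(P1) Y, then T = Y + G W.
        A = P1[:, None] * G + lam * sigma2 * np.eye(M)
        W = np.linalg.solve(A, PX - P1[:, None] * Y)
        T = Y + G @ W
        # Closed-form update of the isotropic variance.
        sigma2_old = sigma2
        sigma2 = (np.sum(Pt1 * np.sum(X ** 2, axis=1))
                  - 2.0 * np.sum(PX * T)
                  + np.sum(P1 * np.sum(T ** 2, axis=1))) / (Np * D)
        sigma2 = max(sigma2, 1e-12)
        if abs(sigma2_old - sigma2) < tol:
            break
    return T, P
```

The estimated displacement of each point of the initial cloud is then T - Y. Memory and time grow as M·N, so dense Kinect Fusion clouds would normally be downsampled before registration.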

2.3 Dynamic Displacement Approach

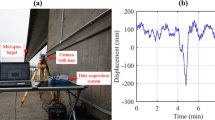

An APS 400 ELECTRO-SEIS® dynamic shaker is instrumented with a UNIMEASURE LX-PA linear position transducer and a Kinect for Xbox360™ (Fig. 2.4) to measure a frequency sweep between 0.1 and 2.5 Hz with an average amplitude of 5 cm. The Kinect raw depth data are acquired with the Image Acquisition Toolbox for Matlab, and the transducer displacement data are acquired with an NI DAQPad-6015 data acquisition system.
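Because the transducer and the Kinect are sampled by different systems at different rates, the reference signal has to be brought onto the Kinect time base before the two can be compared. The sketch below generates a synthetic 0.1 to 2.5 Hz sweep with 5 cm amplitude and resamples it at the nominal 30 frames/s of the Kinect depth stream. The linear sweep shape, the 1 kHz transducer rate and the 120 s duration are assumptions for illustration, and the two clocks are assumed to be already synchronized.

```python
import numpy as np
from scipy.signal import chirp

FS_DAQ = 1000.0    # assumed transducer sampling rate (Hz), hypothetical
FS_KINECT = 30.0   # nominal Kinect depth frame rate (Hz)
DURATION = 120.0   # assumed sweep duration (s), hypothetical
AMPLITUDE = 0.05   # 5 cm, as in the shaker test


def sweep(t, f0=0.1, f1=2.5, duration=DURATION, amplitude=AMPLITUDE):
    """Displacement (m) of a linear frequency sweep from f0 to f1 Hz (shape assumed)."""
    return amplitude * chirp(t, f0=f0, t1=duration, f1=f1, method="linear")


t_daq = np.arange(0.0, DURATION, 1.0 / FS_DAQ)
x_daq = sweep(t_daq)

# Resample the reference signal at the Kinect frame times by linear interpolation.
t_kinect = np.arange(0.0, DURATION, 1.0 / FS_KINECT)
x_ref_on_kinect = np.interp(t_kinect, t_daq, x_daq)
```

At the top of the sweep the Kinect still records 30 / 2.5 = 12 frames per cycle, well within the 15 Hz Nyquist limit of a 30 Hz stream, so the sweep range is compatible with the sensor's frame rate.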

Pinhole camera calibration parameters are applied to the Kinect raw depth data, and the shaker positions are extracted from the same point over time in the depth data. Comparing the positions extracted from the Kinect with those measured by the linear position transducer gives a correlation of 79.2 % (Fig. 2.5). A spectrogram of the displacement signal retrieved from the Kinect is shown in Fig. 2.6.
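The extraction step can be sketched with the pinhole model: a pixel (u, v) with depth Z maps to camera coordinates X = (u - cx)·Z/fx and Y = (v - cy)·Z/fy. The sketch assumes the shaker moves roughly along the optical axis, so a fixed pixel keeps seeing the same surface point; that raw depth is stored in millimetres; and that the reported correlation is a Pearson coefficient. The intrinsics fx, fy, cx, cy would come from a calibration, and `track_pixel` is a hypothetical helper.

```python
import numpy as np


def backproject(u, v, z, fx, fy, cx, cy):
    """Pinhole back-projection of pixel (u, v) with depth z to camera coordinates."""
    x = (u - cx) * z / fx
    y = (v - cy) * z / fy
    return np.array([x, y, z])


def track_pixel(depth_frames, u, v, fx, fy, cx, cy, depth_scale=0.001):
    """3D position (m) of one fixed pixel over a stack of depth frames (T, H, W).

    depth_scale converts raw depth units to metres (1 mm per unit is assumed).
    """
    z = depth_frames[:, v, u].astype(float) * depth_scale
    return np.stack([backproject(u, v, zi, fx, fy, cx, cy) for zi in z])


def pearson(a, b):
    """Pearson correlation coefficient between two equally sampled signals."""
    return float(np.corrcoef(a, b)[0, 1])
```

In practice the Kinect series would be correlated against the transducer signal resampled at the Kinect frame times; averaging a small pixel neighbourhood instead of a single pixel is a common way to reduce depth noise.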

Using the same methodology, the response of a three-degree-of-freedom (3-DOF) benchmark structure with a first natural frequency of 2.67 Hz (Fig. 2.7) is measured during free vibration, and the displacements are retrieved from the Kinect for Xbox360™ (Fig. 2.8). The power spectral density of the retrieved displacements (Fig. 2.9) shows a predominant frequency of 2.69 Hz.
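The identification of the predominant frequency can be sketched with Welch's PSD estimate from SciPy, taking the largest peak after removing the mean. The segment length is an assumed parameter, and it matters here: the frequency resolution is fs/nperseg, i.e. the inverse of the segment duration, so separating 2.67 Hz from 2.69 Hz would need segments of roughly 50 s at any sampling rate. `dominant_frequency` is a hypothetical helper, and the test uses a synthetic free decay rather than measured data.

```python
import numpy as np
from scipy.signal import welch


def dominant_frequency(x, fs, nperseg=None):
    """Frequency (Hz) of the largest peak of the Welch PSD of x, skipping the DC bin."""
    x = np.asarray(x, dtype=float)
    f, pxx = welch(x - x.mean(), fs=fs, nperseg=nperseg)
    k = int(np.argmax(pxx[1:])) + 1
    return float(f[k])
```

For free-vibration records, which decay quickly, longer segments improve resolution at the cost of fewer averages, so the choice of nperseg is a trade-off between peak sharpness and PSD variance.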

2.4 Conclusions and Perspectives

The Kinect Fusion acquisition methodology showed poor performance for small displacements compared with a motion capture system, but it has the advantage of providing a three-dimensional survey and a good estimate of the displacements of elements with complex geometry. Kinect raw depth data calibrated with pinhole model parameters showed high accuracy in assessing the displacements of a dynamic shaker, and the dynamic measurements show good agreement up to 2.5 Hz when large displacements are present. For a benchmark structure, good agreement was found in the identified first natural frequency. The displacement measurements are expected to improve by applying the proposed methodology with a Kinect for Windows v2 sensor, which offers better performance and uses a time-of-flight technique to obtain depth data.

References

[1] Microsoft®: Kinect™ for Xbox360™. Available from: http://www.xbox.com/en-us/kinect/ (2011)

[2] Chéné, Y., et al.: On the use of depth camera for 3D phenotyping of entire plants. Comput. Electron. Agric. 82, 122–127 (2012)

[3] Jing, T., et al.: Scanning 3D full human bodies using Kinects. IEEE Trans. Vis. Comput. Graph. 18(4), 643–650 (2012)

[4] Nakamura, T.: Real-time 3-D object tracking using Kinect sensor. In: IEEE International Conference on Robotics and Biomimetics (ROBIO), pp. 784–788 (2011)

[5] Placitelli, A.P., Gallo, L.: Low-cost augmented reality systems via 3D point cloud sensors. In: Seventh International Conference on Signal-Image Technology and Internet-Based Systems (SITIS) (2011)

[6] Clark, R.A., et al.: Validity of the Microsoft Kinect for assessment of postural control. Gait Posture 36, 372–377 (2012)

[7] Myronenko, A., Song, X.: Point set registration: coherent point drift. IEEE Trans. Pattern Anal. Mach. Intell. 32(12), 2262–2275 (2010)

[8] Varadarajan, K.M., Vincze, M.: Surface reconstruction for RGB-D data using real-time depth propagation. In: IEEE International Conference on Computer Vision Workshops (ICCV Workshops) (2011)

[9] Berger, K., et al.: The capturing of turbulent gas flows using multiple Kinects. In: IEEE International Conference on Computer Vision Workshops (ICCV Workshops) (2011)

[10] Chéné, Y., et al.: On the use of depth camera for 3D phenotyping of entire plants. Comput. Electron. Agric. 82, 122–127 (2012)

[11] Placitelli, A.P., Gallo, L.: 3D point cloud sensors for low-cost medical in-situ visualization. In: IEEE International Conference on Bioinformatics and Biomedicine Workshops (BIBMW) (2011)

[12] Nakamura, T.: Real-time 3-D object tracking using Kinect sensor. In: IEEE International Conference on Robotics and Biomimetics (ROBIO), pp. 784–788 (2011)

[13] Chan-Soo, P., et al.: Comparison of plane extraction performance using laser scanner and Kinect. In: 8th International Conference on Ubiquitous Robots and Ambient Intelligence (URAI) (2011)

[14] Dutta, T.: Evaluation of the Kinect™ sensor for 3-D kinematic measurement in the workplace. Appl. Ergon. 43(4), 645–649 (2012)

[15] Khoshelham, K.: Accuracy analysis of Kinect depth data. GeoInf. Sci. 38(5), 6 (2010)

[16] Suttasupa, Y., Sudsang, A., Niparnan, N.: Plane detection for Kinect image sequences. In: IEEE International Conference on Robotics and Biomimetics (ROBIO) (2011)

Copyright information

© 2016 The Society for Experimental Mechanics, Inc.

About this paper

Cite this paper

Franco, J.M., Marulanda, J., Thomson, P. (2016). Use of a Depth Camera as a Contactless Displacement Field Sensor. In: Brandt, A., Singhal, R. (eds) Shock & Vibration, Aircraft/Aerospace, Energy Harvesting, Acoustics & Optics, Volume 9. Conference Proceedings of the Society for Experimental Mechanics Series. Springer, Cham. https://doi.org/10.1007/978-3-319-30087-0_2

DOI: https://doi.org/10.1007/978-3-319-30087-0_2

Publisher Name: Springer, Cham

Print ISBN: 978-3-319-30086-3

Online ISBN: 978-3-319-30087-0