Abstract

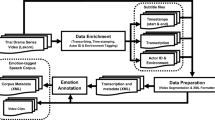

This paper describes a speech database built from 17 Slovenian radio dramas. The dramas were obtained from the national radio and television station (RTV Slovenia) and made available to the university under an academic license that permits processing and annotating the audio material. The utterances of one male and one female speaker were transcribed, segmented, and then annotated with the speakers' emotional states. The emotional states were annotated in two stages using our own web-based crowdsourcing application. The final emotional speech database consists of 1,385 recordings (975 of the male speaker and 410 of the female speaker) and contains labeled emotional speech with a total duration of about 1 hour and 15 minutes. The paper presents the two-stage annotation process used to label the data and demonstrates the usefulness of the employed annotation methodology. Baseline emotion recognition experiments are also presented, with results reported as unweighted and weighted average recall and precision for 2-class and 7-class recognition tasks.
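The abstract reports both unweighted and weighted averages of recall and precision. As a hedged illustration only (this is not the authors' evaluation code), the sketch below computes the four averages from reference and predicted labels with the Python standard library. It assumes the common convention that the unweighted average is the plain mean over classes, while the weighted average weights each class by its number of reference samples, so weighted average recall equals overall accuracy. The function name `average_scores` and the toy labels `neutral` and `anger` are hypothetical.

```python
from collections import Counter


def average_scores(y_true, y_pred):
    """Unweighted and weighted average recall/precision over the reference classes.

    Hypothetical helper: weights are the class counts in y_true (assumed convention).
    """
    if len(y_true) != len(y_pred) or not y_true:
        raise ValueError("y_true and y_pred must be non-empty and equally long")

    support = Counter(y_true)    # reference samples per class
    predicted = Counter(y_pred)  # predictions per class
    correct = Counter(t for t, p in zip(y_true, y_pred) if t == p)  # true positives

    classes = sorted(support)  # only classes present in the reference labels
    recall = {c: correct[c] / support[c] for c in classes}
    # A class that is never predicted gets precision 0 (assumed convention).
    precision = {c: correct[c] / predicted[c] if predicted[c] else 0.0 for c in classes}

    n = len(y_true)
    return {
        # Unweighted: every class counts equally, regardless of its size.
        "UAR": sum(recall.values()) / len(classes),
        "UAP": sum(precision.values()) / len(classes),
        # Weighted: each class contributes in proportion to its support,
        # so WAR equals plain accuracy.
        "WAR": sum(support[c] * recall[c] for c in classes) / n,
        "WAP": sum(support[c] * precision[c] for c in classes) / n,
    }


if __name__ == "__main__":
    # Toy, illustrative labels (not taken from the EmoLUKS database).
    y_true = ["neutral"] * 6 + ["anger"] * 2
    y_pred = ["neutral"] * 7 + ["anger"]
    scores = average_scores(y_true, y_pred)
    print({k: round(v, 3) for k, v in scores.items()})
    # {'UAR': 0.75, 'UAP': 0.929, 'WAR': 0.875, 'WAP': 0.893}
```

Unweighted averages are the more informative figure on class-imbalanced emotional data: a classifier that always predicts the majority class obtains a high weighted recall but an unweighted recall of only 1/K over K classes.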

Copyright information

© 2015 Springer International Publishing Switzerland

About this paper

Cite this paper

Justin, T., Štruc, V., Žibert, J., Mihelič, F. (2015). Development and Evaluation of the Emotional Slovenian Speech Database - EmoLUKS. In: Král, P., Matoušek, V. (eds) Text, Speech, and Dialogue. TSD 2015. Lecture Notes in Computer Science, vol 9302. Springer, Cham. https://doi.org/10.1007/978-3-319-24033-6_40

DOI: https://doi.org/10.1007/978-3-319-24033-6_40

Publisher Name: Springer, Cham

Print ISBN: 978-3-319-24032-9

Online ISBN: 978-3-319-24033-6

eBook Packages: Computer Science, Computer Science (R0)