Abstract

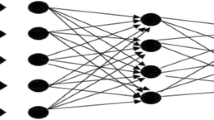

While Hidden Markov Modeling (HMM) has been the dominant technology in speech recognition for many decades, deep neural networks (DNNs) now seem to have taken over. Current DNN technology requires frame-aligned labels, which are usually created by first training an HMM system. It would clearly be desirable to train DNN-based recognizers without first having to build an HMM for the same task. Here, we evaluate one such method, Connectionist Temporal Classification (CTC). Although it was originally proposed for training recurrent neural networks, we show that it can also be used to train more conventional feed-forward networks. In the experimental part, we evaluate the method on standard phone recognition tasks. For all three databases we tested, the CTC method gave slightly better results than those obtained with forced-aligned training labels produced by an HMM system.
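To make concrete how CTC avoids frame-aligned labels, the following is a minimal sketch of the standard CTC forward recursion in plain Python. It is not the authors' implementation. The blank index `BLANK = 0`, the function names, and the toy posteriors are assumptions made for illustration. The network outputs a posterior per frame, and the loss sums the probability of every frame-level path that collapses to the target phone sequence, so no alignment is needed.

```python
import math
from itertools import product

BLANK = 0  # assumed index of the CTC blank symbol (illustrative choice)


def collapse(path):
    """Map a frame-level path to a label sequence: merge repeats, then drop blanks."""
    out, prev = [], None
    for s in path:
        if s != prev and s != BLANK:
            out.append(s)
        prev = s
    return out


def ctc_neg_log_likelihood(probs, labels):
    """Negative log-likelihood of `labels` under per-frame posteriors `probs`.

    probs[t][k] is the posterior of symbol k at frame t (rows sum to 1);
    labels is the target sequence without blanks.
    """
    # Extended target with blanks interleaved: [blank, l1, blank, l2, ..., blank]
    ext = [BLANK]
    for label in labels:
        ext += [label, BLANK]
    S = len(ext)

    # alpha[s]: total probability of all path prefixes ending in ext[s]
    alpha = [0.0] * S
    alpha[0] = probs[0][ext[0]]
    if S > 1:
        alpha[1] = probs[0][ext[1]]

    for t in range(1, len(probs)):
        new = [0.0] * S
        for s in range(S):
            total = alpha[s]                      # stay on the same symbol
            if s >= 1:
                total += alpha[s - 1]             # advance by one position
            if s >= 2 and ext[s] != BLANK and ext[s] != ext[s - 2]:
                total += alpha[s - 2]             # skip the blank between distinct labels
            new[s] = total * probs[t][ext[s]]
        alpha = new

    # Valid paths end on the last label or on the trailing blank
    p = alpha[-1] + (alpha[-2] if S > 1 else 0.0)
    return math.inf if p == 0.0 else -math.log(p)


def brute_force_likelihood(probs, labels):
    """Reference check: enumerate every path and sum those that collapse to `labels`."""
    n_symbols = len(probs[0])
    total = 0.0
    for path in product(range(n_symbols), repeat=len(probs)):
        if collapse(path) == list(labels):
            total += math.prod(probs[t][k] for t, k in enumerate(path))
    return total


# Toy example: 3 frames, symbols {0: blank, 1, 2}; the values are made up.
toy_probs = [[0.6, 0.3, 0.1],
             [0.2, 0.5, 0.3],
             [0.7, 0.1, 0.2]]
toy_loss = ctc_neg_log_likelihood(toy_probs, [1, 2])
```

The brute-force function is exponential in the number of frames and is included only to check the recursion on tiny inputs. In actual training, the gradient of this loss with respect to the network outputs would also be needed, which this sketch omits.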




Copyright information

© 2014 Springer International Publishing Switzerland

About this paper

Cite this paper

Grósz, T., Gosztolya, G., Tóth, L. (2014). A Sequence Training Method for Deep Rectifier Neural Networks in Speech Recognition. In: Ronzhin, A., Potapova, R., Delic, V. (eds) Speech and Computer. SPECOM 2014. Lecture Notes in Computer Science, vol 8773. Springer, Cham. https://doi.org/10.1007/978-3-319-11581-8_10


DOI: https://doi.org/10.1007/978-3-319-11581-8_10

Publisher Name: Springer, Cham

Print ISBN: 978-3-319-11580-1

Online ISBN: 978-3-319-11581-8

eBook Packages: Computer Science (R0)