Abstract

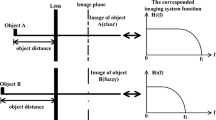

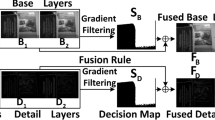

Digital image acquisition devices have a narrow depth of field (DoF) because of their optical lenses. As a result, only part of a scene is in focus in any single image, and essential details are lost in the defocused regions. Multi-focus image fusion aims to synthesize an all-in-focus image for better scene perception and processing. This paper proposes a region-based focus fusion approach built on a novel focus measure derived from multi-scale half gradients extracted from the morphological toggle-contrast operator. The energy of this focus measure is combined with spatial frequency to form a composite focusing criterion (CFC) that roughly separates focused from defocused regions. The resulting high-frequency information is then enhanced with a guided filter that takes focus guidance from the source images. The best-focused region is selected with a pixel-wise maximum rule, and the result is converted into a refined binary decision map by small-region removal. The guided filter is then applied again to verify spatial correlation with the initial fused image, yielding the final decision map used for the final fusion. Experimental results on registered and unregistered multi-focus datasets demonstrate the algorithm's efficacy over current fusion approaches in both visual perception and quantitative metrics.
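The pipeline summarized above can be illustrated with a minimal NumPy sketch. It is not the authors' implementation: the abstract gives no formulas, so the function names, window sizes, scales, the way the half gradients are summed, and the product used to combine energy with spatial frequency are all assumptions made for illustration. Small-region removal and the second guided-filter pass are omitted.

```python
import numpy as np
from numpy.lib.stride_tricks import sliding_window_view


def _windows(img, k):
    """All k x k neighbourhoods of an edge-padded image, shape (H, W, k, k)."""
    r = k // 2
    return sliding_window_view(np.pad(img, r, mode="edge"), (k, k))


def dilate(img, k):
    return _windows(img, k).max(axis=(-2, -1))


def erode(img, k):
    return _windows(img, k).min(axis=(-2, -1))


def box_mean(img, k):
    return _windows(img, k).mean(axis=(-2, -1))


def toggle_contrast(img, k):
    """Toggle contrast: each pixel moves to the closer of its dilation or erosion."""
    d, e = dilate(img, k), erode(img, k)
    up, down = d - img, img - e
    return np.where(up < down, d, np.where(down < up, e, img))


def toggle_gradient(img, scales=(3, 5, 7)):
    """Assumed focus measure: external plus internal half gradients of the
    toggle-contrast output, summed over several scales."""
    fm = np.zeros_like(img, dtype=float)
    for k in scales:
        t = toggle_contrast(img, k)
        fm += (dilate(t, k) - t) + (t - erode(t, k))
    return fm


def spatial_frequency(img, k=7):
    """Local spatial frequency from squared row and column differences."""
    rf = np.zeros_like(img)
    cf = np.zeros_like(img)
    rf[:, 1:] = np.diff(img, axis=1) ** 2
    cf[1:, :] = np.diff(img, axis=0) ** 2
    return np.sqrt(box_mean(rf, k) + box_mean(cf, k))


def composite_focus(img, k=7):
    """Assumed CFC: local energy of the focus measure times spatial frequency."""
    return box_mean(toggle_gradient(img) ** 2, k) * spatial_frequency(img, k)


def guided_filter(guide, src, k=7, eps=1e-3):
    """Basic guided filter (He et al.) built from box means."""
    mean_i, mean_p = box_mean(guide, k), box_mean(src, k)
    var_i = box_mean(guide * guide, k) - mean_i ** 2
    cov_ip = box_mean(guide * src, k) - mean_i * mean_p
    a = cov_ip / (var_i + eps)
    b = mean_p - a * mean_i
    return box_mean(a, k) * guide + box_mean(b, k)


def fuse(img_a, img_b):
    """Pixel-wise maximum rule on guided-filtered focus scores (initial fusion only)."""
    a, b = img_a.astype(float), img_b.astype(float)
    score_a = guided_filter(a, composite_focus(a))
    score_b = guided_filter(b, composite_focus(b))
    decision = score_a >= score_b  # True where image A is judged sharper
    return np.where(decision, a, b), decision
```

Calling `fuse(img_a, img_b)` on two registered grayscale arrays of equal shape returns the initial fused image and a boolean decision map. A fuller version would clean that map with connected-component filtering before the final fusion.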

Copyright information

© 2022 The Author(s), under exclusive license to Springer Nature Switzerland AG

About this paper

Cite this paper

Roy, M., Mukhopadhyay, S. (2022). Multi-focus Image Fusion Using Morphological Toggle-Gradient and Guided Filter. In: Chen, J.IZ., Tavares, J.M.R.S., Shi, F. (eds) Third International Conference on Image Processing and Capsule Networks. ICIPCN 2022. Lecture Notes in Networks and Systems, vol 514. Springer, Cham. https://doi.org/10.1007/978-3-031-12413-6_9

DOI: https://doi.org/10.1007/978-3-031-12413-6_9

Published:

Publisher Name: Springer, Cham

Print ISBN: 978-3-031-12412-9

Online ISBN: 978-3-031-12413-6

eBook Packages: Intelligent Technologies and Robotics (R0)