Abstract

Every year, huge investments in Healthcare Information Systems (HIS) and Health Information Technology (HIT) are made around the world. These systems impose large costs on hospitals, yet the overall impact of HISs on hospital performance has not been deeply assessed. Some recent studies have attempted to establish the link between the implementation of Healthcare Information Systems and organizational performance. However, some questions remain unclear. Do HISs influence different hospitals in the same way? How can we understand and explain the mechanism by which HIS/HIT improves hospital performance? To address these questions, we identify the bottlenecks in current healthcare systems that affect operational efficiency, and we conduct a field study to expose issues in current healthcare systems, to assess the value of HIS/HIT, and to identify the factors that affect the performance of different hospitals.

Keywords

- Big data analytics

- Healthcare Information Systems

- Health Information Technology

- Electronic Medical Record

- Electronic Health Record

2.1 Introduction: IT in US General Hospitals

In the USA, a hospital is often associated with a medical group and is staffed by a team of practitioners, including doctors, nurses, and laboratory technicians. At the same time, it is widely recognized that the Information Technology (IT) market has grown dramatically in recent years. Given this, the key role that information plays in health care cannot be ignored, and IT costs in healthcare have become a foremost concern of the US government. Health Information Technology (HIT), or Health Information Systems (HIS), is defined as the computer applications for the practice of medicine [81]. HIS/HIT covers a wide range of applications, such as the Electronic Medical Record (EMR), the Electronic Health Record (EHR), the Continuity of Care Document (CCD), Computerized Physician Order Entry (CPOE), decision support systems that assist clinical decision making, and computerized entry systems that collect and store patient data. According to a report of the U.S. Congressional Budget Office, the Bush Administration established the position of National Coordinator for HIT in the Department of Health and Human Services in 2004 and set the goal of making EHRs available to most Americans by 2014. The timeline for achieving this goal has since been revised [15]: in 2008, fewer than 10% of US hospitals had adopted a basic EHR system, but this figure increased to 76% in 2014. Almost all hospitals (97%) had adopted certified EHR technology by 2015, an increase of 35% compared with 2011. Current data suggest that HIS/HIT has gained increasing recognition in the USA and plays an increasingly important role in US hospitals [35].

Beyond the US government, many leading companies have also recognized the potential of HIS/HIT. Google Health, introduced by Google in 2008 and cancelled in 2011, was a personal health information centralization service that allowed patients to import personal medical records, schedule appointments, and refill prescriptions [96]. HealthVault, developed by Microsoft as Google Health's closest competitor, is a web-based platform on which users can view, use, and add health information and connect with personal devices and platforms such as Windows, Windows Phone, and iPhone [69]. Microsoft HealthVault allows individuals to manage personal health data via health apps and personal health devices. Intel is working on multiple fronts to promote HIS/HIT development, including personalized medicine, mobility, devices and imaging, privacy and security, and secure cloud [38]. IBM’s Healthcare solution aims to enable advanced business models that reduce costs, create new forms of cooperation, and promote engagement among businesses and individuals to improve healthcare outcomes [37]. As a result, HIS/HIT has made visible progress and is still evolving.

Government and industry efforts have brought huge investments into healthcare information systems research in the USA and around the world. Despite the enormous cost to hospitals, the overall benefits and costs of HIS have not been deeply assessed [27]. In recent years, many studies have investigated the link between the implementation of information systems and the performance of organizations. Because hospitals are at the frontier of technology adoption, IT investment has become one of their main expenditures [84]. Many previous studies have indicated a positive relationship between IT use and hospital performance [3, 21, 61], but the mechanisms by which IT affects hospital performance remain unclear: Do HIS/HIT systems influence different hospitals in the same way? How can we understand and explain the mechanism by which HIS/HIT improves hospital performance?

2.2 Overview of Current Healthcare Information Systems

A healthcare system, sometimes referred to as a “health care system” or “health system,” is the integration of people, institutions, and resources that provide health care services. According to the World Health Organization (WHO) definition [80]:

A health system consists of all organizations, people and actions whose primary intent is to promote, restore or maintain health. This includes efforts to influence determinants of health as well as more direct health-improving activities. A health system is therefore more than the pyramid of publicly owned facilities that deliver personal health services. It includes, for example, a mother caring for a sick child at home; private providers; behavior change programs; vector-control campaigns; health insurance organizations; occupational health and safety legislation. It includes inter-sectoral action by health staff, for example, encouraging the ministry of education to promote female education, a well-known determinant of better health.

The WHO’s definition highlights that a healthcare system involves not only technological factors but also human and organizational factors, all of which jointly determine the outcome of the system. In our research, we narrow the broad scope of “system” and define healthcare information systems as computerized systems that facilitate information sharing and processing within healthcare facilities. Healthcare information systems are fundamentally different from industrial and consumer products, which are concerned with protecting market share [65]. They need to be implementable across platforms, which creates a requirement for standardization. In general, they have special needs in terms of security, database design, and standards.

Evaluating, designing, and implementing HIS/HIT systems covers a wide scope. The key is to integrate technology factors (e.g., information integration and knowledge management) with social factors (e.g., management, psychology, and policy). This multidisciplinary research has drawn interest from many fields, including information systems, computer science, business management, and medical science. For example, Wilton and McCoy [108] introduced a distributed database that established data links between different applications running on a local network; both patient information and reference materials were included in their database. Lamoreaux [59] described a database architecture at a medical center in Virginia that integrated the patient treatment file, the outpatient clinic file, and the fee basis file. Johnson et al. [43] discussed a generic database design for patient management information and indicated that the design needed to allow efficient access to clinical management events by patient, event, location, and provider. Tsumoto [101] developed a rule induction system to automatically discover knowledge from an outpatient healthcare system, similar to the knowledge extraction and discovery system of Khoo et al. [52], which used graphical patterns in a medical database. Chandrashekar et al. [14] discussed considerations in designing a reusable medical database, including the contract between clinical applications and the storage component, multimodality support, centralization of external dependencies, communication models, and performance. Xu et al. [111] introduced an integrated medical supply information system that brought together demand, services provided, health care service provider information, inventory storage data, and support tools. A recent study by Honglin et al. [110] proposed a multiple factor integration (MFI) method to calculate a similarity map for sentence alignment in medical databases.

With the emergence of these advanced HIS/HIT systems, some well-developed ones have gained wide adoption. The Electronic Medical Record (EMR), the Electronic Health Record (EHR), and the Electronic Patient Record (EPR) are three of the main types adopted. All three represent patient data electronically, and the terms are often used interchangeably. However, there are fundamental differences among them. An EMR is the electronic medical information file generated during the diagnostic process. It is normally designed around the diagnostic workflow of a medical facility and rarely extends beyond a single hospital, clinic, or medical center. An EHR, on the other hand, is a systematic collection of electronic health information about patients that can go beyond the scope of a single medical facility. Thus, an EHR integrates information across different facilities and systems, and EMRs can serve as one of its data sources [33, 53]. The scope and purpose of the EHR are given in [1]: “a repository of information regarding the health status of a subject of care in computer processable form, stored and transmitted securely, and accessible by multiple authorized users. It has a standardized or commonly agreed logical information model which is independent of EHR systems. Its primary purpose is the support of continuing, efficient and quality integrated health care and it contains information which is retrospective, concurrent and prospective.” Finally, the EPR is defined by the National Health Service (NHS) as “An electronic record of periodic health care of a single individual, provided mainly by one institution” [24]. The definition of the EPR is patient-centric: it is the health record of a person over his or her life. The NHS classifies the EPR into six levels, and HIS/HIT research may focus on any of them. Our field study looked at Levels 1 and 2, which provide evidence to support the integrated systems at the upper levels.

- Level 1: Patient Administration System and Departmental Systems

- Level 2: Integrated Patient Administration and Departmental Systems

- Level 3: Clinical activity support and noting

- Level 4: Clinical knowledge, decision support, and integrated care pathways

- Level 5: Advanced clinical documentation and integration

- Level 6: Full multimedia EPR online
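To make the EMR/EHR distinction concrete, the Python sketch below models an EMR as a record owned by a single facility and an EHR as a patient-level collection that aggregates EMRs from several facilities. The class and field names (`EMR`, `EHR`, `facility`, `entries`) are hypothetical and are not taken from any EHR standard; real EHRs rely on a standardized or commonly agreed information model, as the definition quoted above notes.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class EMR:
    """Hypothetical EMR: entries generated within a single facility."""
    facility: str
    patient_id: str
    entries: list[str] = field(default_factory=list)


@dataclass
class EHR:
    """Hypothetical EHR: one patient's record aggregated across facilities."""
    patient_id: str
    sources: list[EMR] = field(default_factory=list)

    def add(self, emr: EMR) -> None:
        # An EHR only accepts EMRs that belong to the same patient.
        if emr.patient_id != self.patient_id:
            raise ValueError("EMR belongs to a different patient")
        self.sources.append(emr)

    def facilities(self) -> set[str]:
        # Facilities that have contributed data to this EHR.
        return {emr.facility for emr in self.sources}

    def all_entries(self) -> list[tuple[str, str]]:
        # Flattened view of every entry, tagged with its source facility.
        return [(emr.facility, entry) for emr in self.sources for entry in emr.entries]


ehr = EHR("p001")
ehr.add(EMR("Clinic A", "p001", ["annual physical"]))
ehr.add(EMR("Hospital B", "p001", ["lab panel", "follow-up visit"]))
print(sorted(ehr.facilities()))
print(len(ehr.all_entries()))
```

The design choice mirrors the text: an EMR never knows about other facilities, while the EHR is the only place where cross-facility information is combined.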

From the perspective of information location, content, source, maintainer, and user, we compare EMR, EHR, and EPR in Table 2.1:

Although these well-developed systems have gained wide acceptance and have been implemented by most healthcare facilities today, many studies have discussed issues in implementing EMR/EHR/EPR as well as problems of system design. For example, some studies discussed the accuracy of quantitative EMR data [17, 30, 98, 104]. In particular, Wagner and Hogan found that the main cause of errors was failure to capture patients’ mistakes in reporting their medications, and the second most important cause was failure to capture medication changes made by outside clinicians. Moody et al. [72] found that only a small number of nurses reported that EHRs had decreased their workload, while the majority of nurses preferred bedside documentation. Bygholm [12] examined implementation issues of EPR systems in a case study and argued that different types of end-user support need to be distinguished when different types of activity are involved.

2.3 The Measurement of the Healthcare System

Performance measurement is defined as “the process of quantifying the efficiency and effectiveness of action,” or “a metric used to quantify the efficiency and/or effectiveness of an action,” or “the set of metrics used to quantify both the efficiency and effectiveness of actions” [76], [78]. Here three main issues are covered: “quantification,” “efficiency and effectiveness,” and “metrics.” Quantification means that the results of performance measurement need to be countable and comparable. Efficiency and effectiveness are the measuring objects. Metrics emphasize that performance measurement is multidimensional.

In most cases, measuring performance requires statistical tools to determine results. Many performance measurement systems are now well established. For example, the Balanced Scorecard, first proposed in 1992, provides a comprehensive framework for translating a company’s strategic objectives into a related set of performance measures [49, 50] across four perspectives: financial, customer, internal business, and innovation and learning. Neely’s “Performance Prism” looks at five interrelated facets: stakeholder satisfaction, stakeholder contribution, strategies, processes, and capabilities [2, 77, 79], with more detailed measures defined under each facet. The Performance Pyramid developed by Lynch and Cross contains a hierarchy of financial and nonfinancial performance measures; its four levels link strategies and operations by translating strategic objectives from the top down and rolling measures up from the bottom [18]. Dixon [22] developed the Performance Measurement Questionnaire (PMQ) to determine the degree to which existing performance measures supported improvement and to identify what the organization needed in order to improve. For team-based structures, Jones and Schilling [44] proposed the Total Productive Maintenance (TPM) process, which provides a practical guide for developing a team’s vital measurement system. Following TPM, the 7-step TPM process [62] and the Total Measurement Development Method (TMDM) [31] were developed. By studying processes and strategies within organizations, these systems function as part of the management process, giving insight into what should be achieved and whether outputs meet intended goals.

Since performance measurement is multidimensional, a Performance Measurement System (PMS) can differ as the situation and context change. Despite the variety of PMSs, some universal steps and requirements should be followed when designing a meaningful measurement system. Designing a performance measurement system involves three general steps: defining strategic objectives, deciding what to measure, and building the performance measurement system into management thinking [51]. Wisner and Fawcett later added operational details to this procedure, expanding the three steps into a nine-step flow diagram [109].

For the measurement of healthcare-related systems in particular, Purbey et al. adopted Beamon’s evaluation criteria for supply chain performance [8] to derive a set of measurement characteristics for healthcare processes: inclusiveness, universality, measurability, consistency, and applicability [87]. Because healthcare systems are complex, many aspects affect system performance. The review by Van Peursem et al. identifies three measurement groups for health management performance: (1) economy, efficiency, and effectiveness; (2) quality of care; and (3) process [102]. These measurement aspects focus on the quality of management, not the quality of medical practice. The first aspect (economy, efficiency, and effectiveness) is normally referred to as the “three e’s” and was devised for public sector organizations [11, 66, 70]. A PMS for HIS/HIT can also be classified as financial or nonfinancial [68, 90, 102]. Table 2.2 summarizes studies on healthcare system performance and their measurements according to financial and nonfinancial categories:

In short, existing healthcare systems have achieved long-term success, but many issues regarding their implementation and use remain unsolved. More research is needed to improve the usability and data quality of healthcare systems, and there is high demand for further investigation of current systems’ weaknesses and for the development of integrated healthcare systems. We therefore conducted this field study to collect evidence from a general hospital. The information we gathered includes both qualitative and quantitative data. Before looking at the details of the field study, we compare the two general research categories: quantitative research and qualitative research.

2.4 Quantitative Research

Quantitative research methods are rooted in the natural sciences [74]. Their objective is to measure a particular phenomenon using quantified data from a sample chosen from the population of interest. In general, quantitative methods require a large sample in order to adequately represent the population of interest. Quantitative research can be followed by qualitative research to investigate some findings in more detail, or it can follow qualitative research to test the validity of proposed assumptions. Quantitative research methods are widely accepted in the social sciences, and several of them have been applied in HIS/HIT studies.

Mathematical modeling [9, 56, 114] constructs and describes a system using mathematical concepts and equations.

Experimental method in information system studies is a controlled procedure in which independent variables are manipulated by the researchers, and the dependent variable is measured to test the hypotheses [26, 28, 54].

The survey method [7, 89, 93, 105] studies a sample drawn from a population using collected survey data. A survey can be cross-sectional (collecting data from people at a single point in time) or longitudinal (collecting information from the same people over time). The cross-sectional method simply measures the research subjects without manipulating the external environment; if multiple groups are selected, it can compare different population groups at a single point in time. In contrast, the longitudinal survey method collects information across multiple time frames, which gives it a significant advantage over cross-sectional methods in identifying cause-and-effect relationships. However, longitudinal surveys also face the challenges of following a study group over a long time period.

Quantitative methods are most suitable when a researcher wants to know “how much”: the size, extent, or duration of certain phenomena [94]. Especially when testing the cost, quality, or performance of HIS/HIT systems, quantitative methods are a main choice of evaluation; for instance, they are well suited to evaluating the financial performance of HIS/HIT systems. One of the main strengths of quantitative approaches is their reliability and objectivity. With a well-constructed analytical model, they can reduce a complex problem to a limited number of variables. This requires establishing the testing model before data collection, and the collected data must be precise and reflect the target population. Once data collection is complete, data analysis becomes relatively less time-consuming, especially with statistical software (e.g., SPSS, Matlab, Minitab, SAS, Excel). Note also that the results are relatively independent of the researchers; for example, researchers cannot guarantee that the outputs will be statistically significant or that the model will fit. Quantitative methods also have weaknesses. Because the tested models are constructed before data collection, researchers might miss important factors of the phenomena, since the focus is “hypothesis testing” rather than “hypothesis generation” [42]. Therefore, the tested model needs to be reasonable and grounded in valid theory.

2.5 Qualitative Research

In contrast to quantitative methods, qualitative research methods were originally developed in the social sciences [74], which are concerned with “developing explanations of social phenomena [34].” The purpose of qualitative methods is to gain an in-depth understanding of underlying factors and to uncover hidden trends. More importantly, they can provide insights and ideas for future quantitative research: to determine not only what is happening, or what might be important to measure, but also why to measure it and how people think or feel [48]. Unlike quantitative methods, which generally require large datasets, qualitative methods usually concentrate on a small number of cases. Examples of qualitative approaches in the field of information systems given by Myers are action research, case study research, and ethnography [74].

Action research “seeks to bring together action and reflection, theory and practice, in participation with others, in the pursuit of practical solutions to issues of pressing concern to people, and more generally the flourishing of individual persons and their communities.” [88]. By this definition, action research on HIS/HIT focuses on human and organizational factors. Reason and Bradbury concluded that action research could be an ideal postpositivist social scientific research method in the information systems discipline [88].

Case study research methods provide an up-close, detailed examination of the subject of a case. They are analyses of persons, projects, periods, policies, decisions, events, institutions, or other systems that are studied by one or more methods [99]. By its nature, the case study approach can be applied to almost all aspects of HIS/HIT research, and many cases have been reported worldwide, for example from the United States [47], Australia [23], the Netherlands [103], Taiwan [107], the Philippines [40], and Africa [46].

The word ethnography originates from Greek, where ethnos means “folk, people, nation” and grapho means “I write” [95]. The goal of ethnographic research is to improve understanding of human thought and activity by investigating human actions in context [73]. Therefore, ethnographic approaches in HIS/HIT research also focus on the social aspects of the field, for instance, organizational culture [5], power and managerial issues [75], and contributions to the design process, such as drawing on examples to build an explanation system [25].

Unlike quantitative approaches, which examine comparatively large samples, qualitative approaches examine specific cases. They are useful for investigating complex situations involving a limited number of cases, and they provide rich detail about phenomena in specific contexts. Quantitative approaches require data standardization in order to process and compare statistical results, while qualitative approaches allow researchers to explore responses as they are and to observe behaviors, opinions, needs, and patterns before fully understanding whether the data are meaningful [64]. As a result, they can help HIS/HIT researchers capture important hidden factors that quantitative approaches might miss. However, because the collected data are less standardized, data processing and analysis take more time. Moreover, the interpretation and quality of the results are easily influenced by researchers’ personal knowledge and biases. Therefore, many HIS/HIT studies have integrated qualitative and quantitative methods so that each compensates for the other’s weaknesses.

2.6 Challenges in Understanding Existing Healthcare Information Systems

Identifying the challenges means exploring influences from the physical, socioeconomic, and work environments [92]. One of the most widely studied questions regarding the performance of current systems is: what matters? The relevant factors can relate to multiple perspectives, such as human, organizational, and technological. We found many influential factors reported in different contexts, for example:

- Staff and clinic size, doctor waiting time, and the use of an appointment scheduler (new or follow-up patients) [16]

- Time interval until the next appointment, number of doctors, keeping records of follow-up patients, improving communications, not booking routine patients for the first 45 minutes of each clinic, scheduling field-of-vision appointments before the first appointment, and redesigning the appointment card to give patients more information about their next clinic visit [9]

- Number of operators, registration windows, physicians, nurses, medical assistants, check-in rooms, and specialty rooms [97]

- Appointment scheduling for no-shows; solution: overbooking [56]

- Appointment scheduling, appointment supply and consumption processes, no-shows, and overbooking [55]

- Different appointment types, no-shows, and overbooking [32]

- Length of time patients had attended the clinic, and patients’ mode of transport to the clinic [100]
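Several of the studies listed above handle no-shows through overbooking [32, 55, 56]. As an illustration only, and not the method of any cited study, the sketch below assumes that each booked patient independently fails to show with a fixed probability, so the number of arrivals is binomial. It then finds the largest number of bookings whose probability of exceeding session capacity stays within a chosen risk level. The capacity, no-show rate, and risk threshold are hypothetical values.

```python
from math import comb


def prob_overflow(bookings: int, capacity: int, no_show: float) -> float:
    """P(arrivals > capacity) when each booking shows up independently with prob 1 - no_show."""
    p_show = 1.0 - no_show
    return sum(
        comb(bookings, k) * p_show**k * no_show ** (bookings - k)
        for k in range(capacity + 1, bookings + 1)
    )


def max_bookings(capacity: int, no_show: float, risk: float) -> int:
    """Largest number of bookings whose overflow probability stays <= risk."""
    if not (0.0 <= no_show < 1.0 and 0.0 <= risk < 1.0):
        raise ValueError("no_show and risk must lie in [0, 1)")
    n = capacity  # booking exactly to capacity can never overflow
    while prob_overflow(n + 1, capacity, no_show) <= risk:
        n += 1
    return n


# Hypothetical session: 12 slots, 20% no-show rate, at most 10% overflow risk.
n = max_bookings(capacity=12, no_show=0.2, risk=0.10)
print(n, round(prob_overflow(n, 12, 0.2), 3))
```

Real no-show behavior varies by patient type and appointment lead time, so a fixed, independent no-show rate is a deliberate simplification.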

The challenge now is not only whether factors matter or which factors matter, but also at which level and why they matter. Lau’s review of HIS research mapped the factors examined in HIS studies onto the Information System Success Model [19, 60]. Clearly, understanding HIS/HIT systems is multidisciplinary. As discussed earlier, the research scope of HIS/HIT covers technological, organizational, social, and human aspects. To evaluate the quality or performance of an existing health information system, we need to include elements from multiple perspectives: technical factors (such as information quality, ease of use, system reliability, and response time), social factors (such as policy enforcement), and financial factors (such as different types of costs). We begin with a field study to explore the environment of healthcare providers and to collect information about doctors and patients.

2.7 The Field Study of EVMS

The Eastern Virginia Medical School (EVMS) clinic is located on South Hampton Avenue, Norfolk, Virginia, USA. It belongs to Denver Community Health Services (DCHS), a network of 8 community health centers, 12 school-based health centers, and 2 urgent care centers. EVMS research provides coordination for research committees as well as the research advisory group, and it obtains outside funding through grants and contracts awarded for work in Hampton Roads communities. EVMS has a long-term collaboration with our research team and provided substantial support for this field study.

EVMS physicians specialize in family and internal medicine, obstetrics, and medical and surgical specialties, as well as radiation oncology, laboratory, and pathology services, with the mission “to provide patient-centered quality healthcare to the patients that we serve.” To reach this goal, the medical group has worked hard to deliver care that is safe, efficient, and cost-effective. At the time of our investigation, EVMS had been using a patient portal to keep records. To explore the current situation of EVMS Ghent Family Medicine, we conducted a data analysis to identify the discrepancy between patient demand and provider supply, to see whether capacity management in this outpatient family medicine practice had produced good outcomes, and to assess whether patient data in the HIS/HIT system were used effectively.

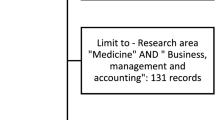

The EVMS datasets were mainly drawn from the scheduling record spreadsheet provided by the hospital. Some data, such as the general workloads of doctors and residents, came from our interviews with the doctors. The dataset consists of the doctors’ schedules and patient records from July 2012 to December 2012, covering 131 days with both morning and afternoon schedules. In our analysis, we take the average numbers of doctors and patients over the morning and afternoon sessions as the data points. Our results are as follows.

2.8 Statistical Findings

Figure 2.1 shows a linear relationship between the number of doctors and the number of patients. According to Fig. 2.1, each doctor sees 6 to 7 patients in 4 hours (half a day) on average.
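The patients-per-doctor rate read off Fig. 2.1 corresponds to the slope of a straight line fitted to (doctors, patients) data points. A minimal ordinary least-squares sketch in plain Python follows; the sample numbers are invented for illustration and are not the EVMS data.

```python
from __future__ import annotations


def fit_line(xs: list[float], ys: list[float]) -> tuple[float, float]:
    """Ordinary least-squares fit of y = slope * x + intercept."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two equal-length sequences with at least 2 points")
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    if sxx == 0:
        raise ValueError("x values must not all be equal")
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


# Invented half-day sessions: doctors on duty and patients seen.
doctors = [2, 3, 3, 4, 5]
patients = [13, 20, 19, 26, 33]
slope, intercept = fit_line(doctors, patients)
print(f"about {slope:.1f} patients per doctor per session (intercept {intercept:.1f})")
```

With NumPy available, `numpy.polyfit(doctors, patients, 1)` returns the same slope and intercept (highest-degree coefficient first).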

According to our collected data, the actual workload of each doctor is 6 to 7 patients per half-day session. Our interviews, however, indicate that each doctor spends about 20 minutes on a single patient on average, so a 4-hour session could accommodate about 12 patients. Therefore, the actual workload is less than what the doctors are able to handle. We suspect that poor design of the scheduling system reduces efficiency, which leaves room for improvement.
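The capacity comparison above can be written out as a short calculation. It assumes, as Fig. 2.1 indicates, that the observed 6 to 7 patients refer to one 4-hour session, and that the whole session is available for consultations (breaks and administrative time are ignored):

```latex
\text{capacity} = \frac{4\,\text{h} \times 60\,\text{min/h}}{20\,\text{min/patient}} = 12\ \text{patients per session},
\qquad
\text{utilization} \approx \frac{6.5}{12} \approx 0.54
```

Under these assumptions, each doctor uses only about half of the available consultation time.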

From Table 2.3, we can see that Tuesdays and Wednesdays are light days, while Mondays, Thursdays, and Fridays are busy days, especially Mondays. Moreover, the standard deviation of the number of patients is much higher than that of the number of doctors on every day of the week, especially on Mondays. This raises the question: does the current schedule respond to the high demand on Mondays?
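A summary like Table 2.3 (mean and standard deviation of doctors and patients per weekday) can be produced by grouping daily records by weekday. The sketch below uses Python's standard `statistics` module; the record layout `(date, doctors, patients)` and the sample records are hypothetical, not the EVMS spreadsheet.

```python
from collections import defaultdict
from datetime import date
from statistics import mean, stdev

WEEKDAYS = ("Monday", "Tuesday", "Wednesday", "Thursday", "Friday", "Saturday", "Sunday")


def weekday_summary(records):
    """records: iterable of (date, doctors, patients), one session-averaged row per day.

    Returns {weekday: {"doctors_mean", "doctors_sd", "patients_mean", "patients_sd"}}
    for weekdays with at least two observations (sample stdev needs two points).
    """
    groups = defaultdict(lambda: ([], []))
    for day, n_doctors, n_patients in records:
        doctors, patients = groups[WEEKDAYS[day.weekday()]]
        doctors.append(n_doctors)
        patients.append(n_patients)
    return {
        wd: {
            "doctors_mean": mean(d), "doctors_sd": stdev(d),
            "patients_mean": mean(p), "patients_sd": stdev(p),
        }
        for wd, (d, p) in groups.items()
        if len(d) >= 2
    }


# Invented records (2 July 2012 was a Monday).
records = [
    (date(2012, 7, 2), 3, 24), (date(2012, 7, 9), 3, 14),
    (date(2012, 7, 3), 2, 13), (date(2012, 7, 10), 2, 12),
]
for weekday, stats in weekday_summary(records).items():
    print(weekday, stats)
```

Using `day.month` instead of `WEEKDAYS[day.weekday()]` as the grouping key gives a monthly view comparable to Table 2.4.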

A similar pattern appears in the monthly demand analysis (Table 2.4): November has the highest standard deviation for both patients and doctors. We hypothesize that this is due to seasonal factors: November includes Thanksgiving and is close to the Christmas break, when people tend to travel, attend parties and reunions, and engage in riskier behavior with respect to their health. Thus, November has the highest variation in demand.

2.9 Gap Between Patient Demand and Doctor Schedule

Figure 2.2 shows the changes in patient and doctor numbers over the six-month period. The service time provided by physicians is level and stable, while patient demand for service is sporadic and lumpy. Figure 2.2 suggests that at some times there were too many service hours, and at other times service resources appeared insufficient, which might lead to long waiting times and unhappy patients. Delays in obtaining service lead to patient dissatisfaction, higher costs, and adverse consequences. Similarly, Fig. 2.3 shows that the expected number of patients is level and stable, whereas the actual number of patients seen by the doctors each day is sporadic and lumpy. This indicates that the current patient schedule does not fit the intended workload capacity of the doctors.
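One simple way to quantify this gap is to compare each day's patient count with a nominal supply equal to the number of doctors times a planned per-doctor load, and to flag days that deviate by more than a tolerance. In the sketch below, the planned load (6.5 patients per doctor per session, the midpoint of the rate read from Fig. 2.1), the tolerance, and the daily figures are all assumptions chosen for illustration.

```python
def classify_days(days, patients_per_doctor, tolerance):
    """days: iterable of (label, doctors, patients).

    Returns a list of (label, gap, status), where gap = patients - nominal supply and
    status is "shortage" (demand exceeds supply by more than tolerance),
    "surplus" (supply exceeds demand by more than tolerance), or "matched".
    """
    results = []
    for label, doctors, patients in days:
        supply = doctors * patients_per_doctor
        gap = patients - supply
        if gap > tolerance:
            status = "shortage"
        elif gap < -tolerance:
            status = "surplus"
        else:
            status = "matched"
        results.append((label, gap, status))
    return results


days = [("Mon", 3, 28), ("Tue", 3, 15), ("Wed", 2, 13)]  # invented data
for label, gap, status in classify_days(days, patients_per_doctor=6.5, tolerance=2.0):
    print(f"{label}: gap {gap:+.1f} -> {status}")
```

Counting how often each status occurs over the study period gives a rough measure of how frequently supply and demand are out of step.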

Finally, we ask: can we identify a consistent demand pattern that matches the level supply of providers? We find that the patterns of patient demand and provider service are not consistent. As shown in Fig. 2.4, the shape of the demand and service curve can be triangular, negatively sloped, or concave, and some curves take other shapes, as shown in Fig. 2.4(d). In other words, the variability of patient demand and service appears to be significant.

This variability may come from both patients and service providers. From the patients’ perspective, variability comes from (1) different patient types, such as new, follow-up, and return patients; (2) different schedule types, such as appointments, late shows, no-shows, overbooking, walk-in patients, urgent patients, emergencies, and patients who want the same doctor; and (3) different service times, such as annual physicals, visits by new patients, follow-ups, and visits by patients who want all their health issues addressed in one visit. From the service providers’ perspective, variability may come from (1) differences in how provider schedules are made, for example, the doctor schedule is made quarterly, 3 to 4 months in advance, while the medical aide schedule is made the day before service; and (2) variability in service time: the standard (20 minutes per patient) does not apply to all doctors, and there is at least a 5% chance that a doctor will run an appointment late. Our findings highlight the mismatch between patient demand and the service providers’ schedule.
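The 5% figure for late-running appointments compounds over a session. Assuming, for illustration only, that each appointment runs late independently with probability p, the chance that at least one of n appointments runs late is 1 - (1 - p)^n. The sketch below computes this closed form and checks it with a small seeded simulation; n = 12 corresponds to a 4-hour session at the 20-minute standard. Because the text gives 5% as a lower bound, the result is also a lower bound under this independence assumption.

```python
import random


def p_any_late(p_late: float, n_appointments: int) -> float:
    """Closed form: P(at least one late appointment), assuming independence."""
    return 1.0 - (1.0 - p_late) ** n_appointments


def simulate_any_late(p_late: float, n_appointments: int, trials: int = 50_000, seed: int = 1) -> float:
    """Monte Carlo estimate of the same probability using a seeded generator."""
    rng = random.Random(seed)
    hits = 0
    for _ in range(trials):
        if any(rng.random() < p_late for _ in range(n_appointments)):
            hits += 1
    return hits / trials


exact = p_any_late(0.05, 12)  # roughly 0.46
estimate = simulate_any_late(0.05, 12)
print(f"closed form {exact:.3f}, simulation {estimate:.3f}")
```

Under these assumptions, nearly half of all sessions would contain at least one late appointment, which is one way small per-visit delays turn into visible waiting times.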

Our goal is to relieve service bottlenecks, reduce patient waiting times, and improve patient satisfaction with the services. Lean service operations can help achieve this goal, for example through better scheduling, understanding patients' needs and their tolerance for waiting, and matching patient demand with provider supply. For instance, parents with young children can be scheduled early in the morning or late in the afternoon, so that they do not need to take time off during the day, while retired senior citizens (who do not mind waiting a little longer than the scheduled time) can be scheduled in the middle of the day. Physician, nurse, and patient schedules need to be integrated, and patient information also needs to be integrated with the staff schedule. Such categorization can be performed by decision support systems. This is the subject of one of our follow-up studies, which falls into Levels 3 and 4 of the NHS framework.
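
The categorization idea can be sketched as a rule-based slot assignment that encodes the two examples in the text: parents of young children go early or late, and retired seniors go midday. This is a minimal, greedy sketch under loudly stated assumptions. The category names, the one-hour slots, and the exact time windows in `PREFERRED_WINDOWS` are hypothetical, and the chapter does not specify how its decision support system assigns slots.

```python
from dataclasses import dataclass

# Hypothetical preference windows as (start_hour, end_hour) on a 24-hour clock,
# encoding the two examples in the text. Other categories have no preference rule.
PREFERRED_WINDOWS = {
    "parent_with_young_children": [(8, 10), (15, 17)],  # early morning or late afternoon
    "retired_senior": [(11, 14)],                       # middle of the day
}


@dataclass
class Request:
    patient_id: str
    category: str


def assign_slots(requests, slot_hours):
    """Greedily give each request the earliest free slot inside its preferred windows.

    If a category has no rule, or its windows are already full, the request falls back
    to the earliest remaining slot. Returns {patient_id: slot start hour, or None}.
    """
    free = sorted(slot_hours)
    assignment = {}
    for req in requests:
        windows = PREFERRED_WINDOWS.get(req.category, [])
        preferred = [h for h in free if any(lo <= h < hi for lo, hi in windows)]
        choice = preferred[0] if preferred else (free[0] if free else None)
        if choice is not None:
            free.remove(choice)
        assignment[req.patient_id] = choice
    return assignment


slots = [8, 9, 10, 11, 12, 13, 14, 15, 16]
requests = [
    Request("A", "parent_with_young_children"),
    Request("B", "retired_senior"),
    Request("C", "parent_with_young_children"),
    Request("D", "new_patient"),
    Request("E", "parent_with_young_children"),
]
print(assign_slots(requests, slots))
```

The greedy pass is simple and transparent, but the result depends on the order in which requests arrive. Integrating physician, nurse, and patient schedules as the text proposes would more likely call for an optimization formulation, which this sketch does not attempt.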

2.10 Discussion and Future Work

The field study at EVMS highlights the mismatch between patient demand and service provider schedules. It was the first step in our series of studies. A subsequent study adopted institutional theory to explain the process of implementing HIS/HIT and its possible outcomes. Using HIMSS data, we ran a structural equation model to test six hypotheses about the relationships between size and service volume, size and performance, and size and IT implementation. To address the problems of existing systems, further research will adopt decision support methods to capture the classification patterns used by doctors. Such a system should be able to provide valuable recommendations to health providers, helping them obtain more transparent information from patients and make better scheduling decisions that minimize gaps between patient demand and the services provided.
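
The chapter does not say which decision support method will capture doctors' classification patterns. One deliberately simple illustration is OneR, a rule learner that picks the single attribute whose "value to majority label" rule makes the fewest errors on past decisions. It is swapped in here only as an example of learning a readable pattern from labelled data. The attribute names, labels, and visit records are hypothetical.

```python
from collections import Counter, defaultdict


def one_r(records, label_key):
    """OneR rule learner: choose the single attribute whose value -> majority-label
    rule misclassifies the fewest training records.

    Returns (attribute, rule, training_errors), where rule maps each attribute value
    to the label most often assigned to it.
    """
    attributes = [k for k in records[0] if k != label_key]
    best = None
    for attr in attributes:
        counts = defaultdict(Counter)  # attribute value -> Counter of labels
        for r in records:
            counts[r[attr]][r[label_key]] += 1
        rule = {value: c.most_common(1)[0][0] for value, c in counts.items()}
        errors = sum(sum(c.values()) - c[rule[value]] for value, c in counts.items())
        if best is None or errors < best[2]:
            best = (attr, rule, errors)
    return best


# Hypothetical visits labelled by a doctor with the appointment length they needed.
visits = [
    {"patient_type": "new", "age_group": "adult", "slot": "long"},
    {"patient_type": "new", "age_group": "senior", "slot": "long"},
    {"patient_type": "follow_up", "age_group": "adult", "slot": "short"},
    {"patient_type": "follow_up", "age_group": "senior", "slot": "short"},
    {"patient_type": "return", "age_group": "senior", "slot": "long"},
    {"patient_type": "return", "age_group": "adult", "slot": "short"},
]
attr, rule, errors = one_r(visits, label_key="slot")
print(attr, rule, errors)
```

On this toy data, OneR selects `patient_type` with one training error. A scheduling staff member could read and check a rule like this directly, which is the kind of transparency the text asks of a decision support system.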

This field study evaluated Levels 1 and 2 of the NHS model. It revealed that physicians at the EVMS hospital were not satisfied with the scheduling situation at the time the survey was conducted. Studies using SERVQUAL, a measurement framework for service quality [83], also report similar findings. With SERVQUAL, the quality of information-system-based clinic services will be measured and compared along five dimensions: tangibles, reliability, responsiveness, assurance, and empathy:

- Tangibles: physical facilities, equipment, and appearance of personnel

- Reliability: ability to perform the promised service reliably and accurately

- Responsiveness: willingness to help customers and provide prompt service

- Assurance: knowledge and courtesy of employees and their ability to inspire trust and confidence

- Empathy: caring, individualized attention provided to customers

Many studies have successfully applied the SERVQUAL model to evaluate performance in health care research. Babakus and Mangold [6] found that the SERVQUAL scales could be used to assess the gap between patient perceptions and expectations, and that SERVQUAL was applicable as a standardized measurement scale for comparing results across industries. Lam [57] assessed hospital service quality in Hong Kong, and the results indicated that SERVQUAL was a consistent and reliable measurement tool. Youssef et al. [113] examined the service quality of NHS hospitals. Pakdil and Harwood [82] evaluated patient satisfaction in a preoperative assessment clinic using SERVQUAL. A more recent study, from 2010, compared the service quality of public and private hospitals using SERVQUAL [112]. Based on these findings, we can also adopt SERVQUAL as a reliable measurement framework for the healthcare systems proposed in our follow-up studies.
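
The perception-expectation gap that Babakus and Mangold [6] measured can be computed directly. In the standard SERVQUAL formulation [83], each item's gap is the perception score minus the expectation score, and a dimension's score is the mean of its item gaps. Negative values mean the service falls short of expectations. The sketch below computes dimension-level gaps for one hypothetical respondent. The item counts and Likert scores are invented for illustration, and the helper name `gap_scores` is hypothetical.

```python
from statistics import mean

DIMENSIONS = ("tangibles", "reliability", "responsiveness", "assurance", "empathy")


def gap_scores(expectations, perceptions):
    """SERVQUAL gap per dimension: mean of (perception - expectation) over its items.

    Both arguments map a dimension name to a list of item scores (e.g., 1-7 Likert),
    with items in the same order. Negative gaps mean service falls short of expectations.
    """
    gaps = {}
    for dim in DIMENSIONS:
        e, p = expectations[dim], perceptions[dim]
        if len(e) != len(p):
            raise ValueError(f"{dim}: {len(e)} expectation items but {len(p)} perception items")
        gaps[dim] = mean(pi - ei for ei, pi in zip(e, p))
    return gaps


# Hypothetical item scores for one respondent (7-point scale).
expectations = {
    "tangibles": [6, 6, 5, 6],
    "reliability": [7, 7, 6, 7, 7],
    "responsiveness": [7, 6, 7, 6],
    "assurance": [6, 7, 6, 6],
    "empathy": [6, 6, 5, 6, 6],
}
perceptions = {
    "tangibles": [6, 5, 5, 6],
    "reliability": [5, 6, 5, 6, 5],
    "responsiveness": [4, 5, 4, 5],
    "assurance": [6, 6, 6, 6],
    "empathy": [5, 6, 5, 5, 6],
}

gaps = gap_scores(expectations, perceptions)
for dim, g in gaps.items():
    print(f"{dim:>14}: {g:+.2f}")
print("largest shortfall:", min(gaps, key=gaps.get))
```

In practice, gaps would be averaged over all respondents before dimensions are compared. In this made-up example, responsiveness shows the largest shortfall. That is the kind of result the scheduling mismatch described earlier might produce.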

References

ISO/TR 20514, Health Informatics: Electronic Health Record: Definition, Scope, and Context (ISO, Geneva, 2005)

C. Adams, A. Neely, The performance prism to boost M&A success. Meas. Bus. Excell. 4(3), 19–23 (2000)

H. Ahmadi, M. Nilashi, L. Shahmoradi, O. Ibrahim, F. Sadoughi, M. Alizadeh, A. Alizadeh, The moderating effect of hospital size on inter and intra-organizational factors of hospital information system adoption. Technol. Forecast. Soc. Chang. 134, 124–149 (2018)

H. Akashi, T. Yamada, E. Huot, K. Kanal, T. Sugimoto, User fees at a public hospital in Cambodia: Effects on hospital performance and provider attitudes. Soc. Sci. Med. 58(3), 553–564 (2004)

D.E. Avison, M.D. Myers, Information systems and anthropology: An anthropological perspective on IT and organizational culture. Inf. Technol. People 8(3), 43–56 (1995)

E. Babakus, W.G. Mangold, Adapting the SERVQUAL scale to hospital services: An empirical investigation. Health Serv. Res. 26(6), 767 (1992)

L. Baker, T.H. Wagner, S. Singer, M.K. Bundorf, Use of the internet and e-mail for health care information: Results from a national survey. JAMA 289(18), 2400–2406 (2003)

B.M. Beamon, Measuring supply chain performance. Int. J. Oper. Prod. Manag. 19(3), 275–292 (1999)

J.C. Bennett, D. Worthington, An example of a good but partially successful OR engagement: Improving outpatient clinic operations. Interfaces 28(5), 56–69 (1998)

W. Boulding, S.W. Glickman, M.P. Manary, K.A. Schulman, R. Staelin, Relationship between patient satisfaction with inpatient care and hospital readmission within 30 days. Am. J. Manag. Care 17(1), 41–48 (2011)

S. Brignall, S. Modell, An institutional perspective on performance measurement and management in the ‘new public sector’. Manag. Account. Res. 11(3), 281–306 (2000)

A. Bygholm, End-user support: A necessary issue in the implementation and use of EPR systems. Stud. Health Technol. Inform. 84(Pt 1), 604–608 (2000)

R.A. Carr-Hill, The measurement of patient satisfaction. J. Public Health 14(3), 236–249 (1992)

N. Chandrashekar, S. Gautam, K. Srinivas, J. Vijayananda, Design considerations for a reusable medical database, in 19th IEEE International Symposium on Computer-Based Medical Systems (CBMS 2006) (2006)

D. Charles, M. Gabriel, T. Searcy, Adoption of electronic health record systems among US non-federal acute care hospitals: 2008–2014 (Office of the National Coordinator for Health Information Technology, 2015)

J.E. Clague, P.G. Reed, J. Barlow, R. Rada, M. Clarke, R.H. Edwards, Improving outpatient clinic efficiency using computer simulation. Int. J. Health Care Qual. Assur. 10(5), 197–201 (1997)

K. Corson, M.S. Gerrity, S.K. Dobscha, J.B. Perlin, R.M. Kolodner, R.H. Roswell, Assessing the accuracy of computerized medication histories. Am. J. Manag. Care 10(part 2), 872–877 (2004)

K.F. Cross, R.L. Lynch, The “SMART” way to define and sustain success. National Productiv. Rev. 8(1), 23–33 (1988)

W.H. DeLone, E.R. McLean, Measuring e-commerce success: Applying the DeLone & McLean information systems success model. Int. J. Electron. Commer. 9(1), 31–47 (2004)

S.I. DesHarnais, L.F. McMahon Jr., R.T. Wroblewski, A.J. Hogan, Measuring hospital performance: The development and validation of risk-adjusted indexes of mortality, readmissions, and complications. Med. Care 28, 1127–1141 (1990)

S. Devaraj, R. Kohli, Performance impacts of information technology: Is actual usage the missing link? Manag. Sci. 49(3), 273–289 (2003)

J.R. Dixon, The New Performance Challenge: Measuring Operations for World-Class Competition (Irwin Professional Publishing, 1990)

M. Evered, S. Bögeholz, A case study in access control requirements for a health information system, in Proceedings of the Second Workshop on Australasian Information Security, Data Mining and Web Intelligence, and Software Internationalisation, vol. 32 (2004)

NHS Executive, Information for Health: An Information Strategy for the Modern NHS 1998–2005: A National Strategy for Local Implementation (NHS Executive, 1998)

D.E. Forsythe, Using ethnography in the design of an explanation system. Expert Syst. Appl. 8(4), 403–417 (1995)

C.R. Franz, D. Robey, R.R. Koeblitz, User response to an online information system: A field experiment. MIS Q., 29–42 (1986)

C.P. Friedman, J.C. Wyatt, J. Faughnan, Evaluation methods in medical informatics. BMJ 315(7109), 689 (1997)

L. Fu, K. Maly, E. Rasnick, H. Wu, M. Zubair, User experiments of a social, faceted multimedia classification system

G.O. Ginn, R.P. Lee, Community orientation, strategic flexibility, and financial performance in hospitals. J. Healthcare Manage. Am. Coll. Healthcare Execut. 51(2), 111–121 (2005); discussion 121–122

S.I. Goldberg, M. Shubina, A. Niemierko, A. Turchin, A weighty problem: Identification, characteristics and risk factors for errors in EMR data, in AMIA Annual Symposium Proceedings (2010)

C.F. Gomes, M.M. Yasin, J.V. Lisboa, Performance measurement practices in manufacturing firms: An empirical investigation. J. Manuf. Technol. Manag. 17(2), 144–167 (2006)

M. Guo, M. Wagner, C. West, Outpatient clinic scheduling: A simulation approach, in Proceedings of the 36th Conference on Winter Simulation (2004)

J.L. Habib, EHRs, meaningful use, and a model EMR. Drug Benefit. Trends 22(4), 99–101 (2010)

B. Hancock, E. Ockleford, K. Windridge, An Introduction to Qualitative Research (Trent Focus Group, Nottingham, 1998)

J. Henry, Y. Pylypchuk, T. Searcy, V. Patel, Adoption of electronic health record systems among US non-federal acute care hospitals: 2008–2015. ONC Data Brief 35, 1–9 (2016)

J.H. Hibbard, J. Stockard, M. Tusler, Hospital performance reports: Impact on quality, market share, and reputation. Health Aff. 24(4), 1150–1160 (2005)

IBM, Healthcare (2015), http://www-935.ibm.com/industries/healthcare/

Intel, Intel Health and Life Sciences (2015), http://www.intel.com/content/www/us/en/healthcare-it/healthcare-overview.html

B. Jarman, D. Pieter, A. van der Veen, R. Kool, P. Aylin, A. Bottle, et al., The hospital standardised mortality ratio: A powerful tool for Dutch hospitals to assess their quality of care? Qual. Safety Health Care 19(1), 9–13 (2010)

R. Jayasuriya, Managing information systems for health services in a developing country: A case study using a contextualist framework. Int. J. Inf. Manag. 19(5), 335–349 (1999)

M. Je'McCracken, T.F. McIlwain, M.D. Fottler, Measuring organizational performance in the hospital industry: An exploratory comparison of objective and subjective methods. Health Serv. Manag. Res. 14(4), 211–219 (2001)

R.B. Johnson, A.J. Onwuegbuzie, Mixed methods research: A research paradigm whose time has come. Educ. Res. 33(7), 14–26 (2004)

S.B. Johnson, T. Paul, A. Khenina, Generic database design for patient management information, in Proceedings of the AMIA Annual Fall Symposium (1997)

S.D. Jones, D.J. Schilling, Measuring Team Performance: A Step-by-Step, Customizable Approach for Managers, Facilitators, and Team Leaders (2000)

J.M. Kahn, A.A. Kramer, G.D. Rubenfeld, Transferring critically ill patients out of hospital improves the standardized mortality ratio: A simulation study. Chest J. 131(1), 68–75 (2007)

R.M. Kamadjeu, E.M. Tapang, R.N. Moluh, Designing and implementing an electronic health record system in primary care practice in sub-Saharan Africa: A case study from Cameroon. Inform. Prim. Care 13(3), 179–186 (2005)

B. Kaplan, D. Duchon, Combining qualitative and quantitative methods in information systems research: A case study. MIS Q. 12, 571–586 (1988)

B. Kaplan, J.A. Maxwell, Qualitative research methods for evaluating computer information system, in Evaluating the organizational impact of healthcare information systems, (Springer, New York, 2005), pp. 30–55

R.S. Kaplan, D.P. Norton, Putting the balanced scorecard to work, in Performance Measurement, Management, and Appraisal Sourcebook, 66 (1995)

R.S. Kaplan, D.P. Norton, The balanced scorecard: Measures that drive performance. Harv. Bus. Rev. 83(7), 172–180 (2005)

D.P. Keegan, R.G. Eiler, C.R. Jones, Are your performance measures obsolete. Manag. Account. 70(12), 45–50 (1989)

C.S. Khoo, S. Chan, Y. Niu, Extracting causal knowledge from a medical database using graphical patterns, in Proceedings of the 38th Annual Meeting of the Association for Computational Linguistics (2000)

P. Kierkegaard, Electronic health record: Wiring Europe’s healthcare. Comp. Law Secur. Rev. 27(5), 503–515 (2011)

M. Korpela, H.A. Soriyan, K. Olufokunbi, A. Onayade, A. Davies-Adetugbo, D. Adesanmi, Community participation in health informatics in Africa: An experiment in tripartite partnership in Ile-Ife, Nigeria. Comp. Support. Cooperat. Work (CSCW) 7(3–4), 339–358 (1998)

L.R. LaGanga, Lean service operations: Reflections and new directions for capacity expansion in outpatient clinics. J. Oper. Manag. 29(5), 422–433 (2011)

L.R. LaGanga, S.R. Lawrence, Clinic overbooking to improve patient access and increase provider productivity. Decis. Sci. 38(2), 251–276 (2007)

S.S. Lam, SERVQUAL: A tool for measuring patients' opinions of hospital service quality in Hong Kong. Total Qual. Manag. 8(4), 145–152 (1997)

B.T. Lamont, D. Marlin, J.J. Hoffman, Porter's generic strategies, discontinuous environments, and performance: A longitudinal study of changing strategies in the hospital industry. Health Serv. Res. 28(5), 623 (1993)

J. Lamoreaux, The organizational structure for medical information management in the Department of Veterans Affairs: An overview of major health care databases. Med. Care 34(3), 31–44 (1996)

F. Lau, C. Kuziemsky, M. Price, J. Gardner, A review on systematic reviews of health information system studies. J. Am. Med. Inform. Assoc. 17(6), 637–645 (2010)

K. Lee, T.T. Wan, Effects of hospitals' structural clinical integration on efficiency and patient outcome. Health Serv. Manag. Res. 15(4), 234–244 (2002)

J. Leflar, Practical TPM: Successful Equipment Management at Agilent Technologies (Productivity Press, 2001)

L. Li, W. Benton, G.K. Leong, The impact of strategic operations management decisions on community hospital performance. J. Oper. Manag. 20(4), 389–408 (2002)

D. Madrigal, B. McClain, Strengths and weaknesses of quantitative and qualitative research. UX Matters (2012)

K.D. Mandl, I.S. Kohane, Escaping the EHR trap—The future of health IT. N. Engl. J. Med. 366(24), 2240–2242 (2012)

D.J. Mayston, Non-profit performance indicators in the public sector. Financial Account. Manage. 1(1), 51–74 (1985)

N. Menachemi, J. Burkhardt, R. Shewchuk, D. Burke, R.G. Brooks, Hospital information technology and positive financial performance: A different approach to finding an ROI. J. Healthcare Manage. Am. Coll. Healthcare Execut. 51(1), 40–58 (2005); discussion 58–59

P. Micheli, M. Kennerley, Performance measurement frameworks in public and non-profit sectors. Product. Plan. Control 16(2), 125–134 (2005)

Microsoft, HealthVault (2015), https://www.healthvault.com/us/en

A. Midwinter, Developing performance indicators for local government: The Scottish experience. Public Money Manage. 14(2), 37–43 (1994)

A.J. Molyneux, R.S. Kerr, J. Birks, N. Ramzi, J. Yarnold, M. Sneade, et al., Risk of recurrent subarachnoid haemorrhage, death, or dependence and standardised mortality ratios after clipping or coiling of an intracranial aneurysm in the International Subarachnoid Aneurysm Trial (ISAT): long-term follow-up. Lancet Neurol. 8(5), 427–433 (2009)

L.E. Moody, E. Slocumb, B. Berg, D. Jackson, Electronic health records documentation in nursing: nurses' perceptions, attitudes, and preferences. Comp. Inform. Nursing 22(6), 337–344 (2004)

M.D. Myers, Critical ethnography in information systems, in Information systems and qualitative research, (Springer, Boston, 1997a), pp. 276–300

M.D. Myers, Qualitative research in information systems. Manag. Inf. Syst. Q. 21, 241–242 (1997b)

M.D. Myers, L.W. Young, Hidden agendas, power and managerial assumptions in information systems development: An ethnographic study. Inf. Technol. People 10(3), 224–240 (1997)

A. Neely, Performance measurement system design: Third phase, in Performance Measurement System Design Workbook, 1 (1994)

A. Neely, C. Adams, P. Crowe, The performance prism in practice. Meas. Bus. Excell. 5(2), 6–13 (2001)

A. Neely, M. Gregory, K. Platts, Performance measurement system design: A literature review and research agenda. Int. J. Oper. Prod. Manag. 15(4), 80–116 (1995)

A.D. Neely, C. Adams, M. Kennerley, The Performance Prism: The Scorecard for Measuring and Managing Business Success (Prentice Hall Financial Times, London, 2002)

World Health Organization, Everybody's Business: Strengthening Health Systems to Improve Health Outcomes: WHO's Framework for Action (World Health Organization, 2007)

P.R. Orszag, Evidence on the costs and benefits of health information technology, Testimony before Congress (2008)

F. Pakdil, T.N. Harwood, Patient satisfaction in a preoperative assessment clinic: An analysis using SERVQUAL dimensions. Total Qual. Manag. Bus. Excell. 16(1), 15–30 (2005)

A. Parasuraman, V.A. Zeithaml, L.L. Berry, SERVQUAL: A multiple-item scale for measuring consumer perceptions of service quality. J. Retail. 64(1), 12–37 (1988)

S.T. Parente, R.L. Van Horn, Valuing hospital investment in information technology: Does governance make a difference? Health Care Financ. Rev. 28(2), 31–43 (2005)

G.C. Pascoe, Patient satisfaction in primary health care: A literature review and analysis. Eval. Program Plann. 6(3), 185–210 (1983)

I. Press, R.F. Ganey, M.P. Malone, Satisfied patients can spell financial well-being. Healthc. Financ. Manage. 45(2), 34–36, 38, 40–42 (1991)

S. Purbey, K. Mukherjee, C. Bhar, Performance measurement system for healthcare processes. Int. J. Product. Perform. Manag. 56(3), 241–251 (2007)

P. Reason, H. Bradbury, Handbook of Action Research: Participative Inquiry and Practice (Sage, 2001)

C. Schoen, R. Osborn, D. Squires, M. Doty, P. Rasmussen, R. Pierson, S. Applebaum, A survey of primary care doctors in ten countries shows progress in use of health information technology, less in other areas. Health Aff. 31(12), 2805–2816 (2012)

C.L. Schur, L.A. Albers, M.L. Berk, Health care use by Hispanic adults: Financial vs. non-financial determinants. Health Care Financ. Rev. 17(2), 71–88 (1994)

S.M. Shortell, J.P. LoGerfo, Hospital medical staff organization and quality of care: Results for myocardial infarction and appendectomy. Med. Care 19, 1041–1055 (1981)

D.M. Steinwachs, R.G. Hughes, Health services research: Scope and significance, in Patient Safety and Quality: An Evidence-Based Handbook for Nurses (AHRQ Publication No. 08-0043, 2008)

E.R. Stinson, D.A. Mueller, Survey of health professionals' information habits and needs: Conducted through personal interviews. JAMA 243(2), 140–143 (1980)

A. Stoop, M. Berg, Integrating quantitative and qualitative methods in patient care information system evaluation: Guidance for the organizational decision maker. Methods Inf. Med. 42(4), 458–462 (2003)

E.G. Sukoharsono, Where are you, Papi? Asking Mami in doubt with Papi: An imaginary dialogue on critical ethno-accounting research

A. Sunyaev, A. Kaletsch, H. Krcmar, Comparative evaluation of Google Health API vs. Microsoft HealthVault API, in Proceedings of the Third International Conference on Health Informatics (HealthInf 2010) (2010)

J.R. Swisher, S.H. Jacobson, J.B. Jun, O. Balci, Modeling and analyzing a physician clinic environment using discrete-event (visual) simulation. Comput. Oper. Res. 28(2), 105–125 (2001)

H.C. Szeto, R.K. Coleman, P. Gholami, B.B. Hoffman, M.K. Goldstein, Accuracy of computerized outpatient diagnoses in a veterans affairs general medicine clinic. Am. J. Manag. Care 8(1), 37–43 (2002)

G. Thomas, A typology for the case study in social science following a review of definition, discourse, and structure. Qual. Inq. 17(6), 511–521 (2011)

S. Thomas, R. Glynne-Jones, I. Chait, Is it worth the wait? A survey of patients' satisfaction with an oncology outpatient clinic. Eur. J. Cancer Care 6(1), 50–58 (1997)

S. Tsumoto, Knowledge discovery in clinical databases and evaluation of discovered knowledge in outpatient clinic. Inf. Sci. 124(1), 125–137 (2000)

K. Van Peursem, M. Prat, S. Lawrence, Health management performance: A review of measures and indicators. Account. Audit. Account. J. 8(5), 34–70 (1995)

J.A. Vennix, J.W. Gubbels, Knowledge elicitation in conceptual model building: A case study in modeling a regional Dutch health care system. Eur. J. Oper. Res. 59(1), 85–101 (1992)

M.M. Wagner, W.R. Hogan, The accuracy of medication data in an outpatient electronic medical record. J. Am. Med. Inform. Assoc. 3(3), 234–244 (1996)

B.B. Wang, T.T. Wan, D.E. Burke, G.J. Bazzoli, B.Y. Lin, Factors influencing health information system adoption in American hospitals. Health Care Manag. Rev. 30(1), 44–51 (2005)

B.B. Wang, T.T. Wan, J. Clement, J. Begun, Managed care, vertical integration strategies and hospital performance. Health Care Manag. Sci. 4(3), 181–191 (2001)

S.-W. Wang, W.-H. Chen, C.-S. Ong, L. Liu, Y.-W. Chuang, RFID application in hospitals: A case study on a demonstration RFID project in a Taiwan hospital, in Proceedings of the 39th Annual Hawaii International Conference on System Sciences (HICSS'06) (2006)

R. Wilton, J.M. McCoy, An outpatient clinic information system based on distributed database technology, in Proceedings of the Annual Symposium on Computer Applications in Medical Care (1989)

J.D. Wisner, S.E. Fawcett, Linking firm strategy to operating decisions through performance measurement. Prod. Invent. Manag. J. 32(3), 5–11 (1991)

H.L. Wu, Y.Y. Liu, C.J. Dong, K. Wang, Multiple factors integration based text alignment for medical database. Adv. Mater. Res. 756, 1648–1651 (2013)

E. Xu, M. Wermus, D.B. Bauman, Development of an integrated medical supply information system. Enterprise Inform. Syst. 5(3), 385–399 (2011)

F. Yeşilada, E. Direktör, Health care service quality: A comparison of public and private hospitals. Afr. J. Bus. Manag. 4(6), 962–971 (2010)

F. Youssef, D. Nel, T. Bovaird, Service quality in NHS hospitals. J. Manag. Med. 9(1), 66–74 (1995)

B. Zeng, A. Turkcan, J. Lin, M. Lawley, Clinic scheduling models with overbooking for patients with heterogeneous no-show probabilities. Ann. Oper. Res. 178(1), 121–144 (2010)

Copyright information

© 2022 Springer Nature Switzerland AG

About this chapter

Cite this chapter

Fu, L., Zhang, W., Li, L. (2022). Big Data Analytics for Healthcare Information System: Field Study in an US Hospital. In: Paul, S., Paiva, S., Fu, B. (eds) Frontiers of Data and Knowledge Management for Convergence of ICT, Healthcare, and Telecommunication Services. EAI/Springer Innovations in Communication and Computing. Springer, Cham. https://doi.org/10.1007/978-3-030-77558-2_2

DOI: https://doi.org/10.1007/978-3-030-77558-2_2

Publisher Name: Springer, Cham

Print ISBN: 978-3-030-77557-5

Online ISBN: 978-3-030-77558-2

eBook Packages: Engineering, Engineering (R0)