Abstract

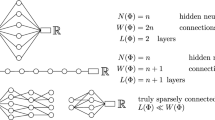

We begin by introducing some standard terminology, namely covering numbers and neural architectures.

Copyright information

© 1999 Springer-Verlag London Limited

About this chapter

Cite this chapter

Vidyasagar, M. (1999). Covering numbers for input-output maps realizable by neural networks. In: Blondel, V., Sontag, E.D., Vidyasagar, M., Willems, J.C. (eds) Open Problems in Mathematical Systems and Control Theory. Communications and Control Engineering. Springer, London. https://doi.org/10.1007/978-1-4471-0807-8_48

DOI: https://doi.org/10.1007/978-1-4471-0807-8_48

Publisher Name: Springer, London

Print ISBN: 978-1-4471-1207-5

Online ISBN: 978-1-4471-0807-8

eBook Packages: Springer Book Archive