Abstract

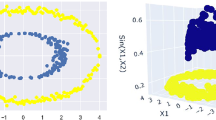

In this paper, we present a new Boosting-based method that enhances classification performance by introducing nonlinear discriminant analysis as a feature selection step. To reduce the dependency between hypotheses, each hypothesis is constructed in a different feature space formed by Kernel Discriminant Analysis (KDA), and the hypotheses are then integrated using AdaBoost. To make it practical to conduct KDA in every Boosting iteration, we also propose a new kernel selection method. Several experiments on the blood cell and thyroid data sets are carried out to evaluate the proposed method. The results show that its performance is almost the same as the best performance of a Support Vector Machine (SVM), without any time-consuming parameter search.
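The abstract does not give the algorithm in detail, so the following is only a minimal sketch of the general idea, not the authors' implementation. It assumes binary labels in {-1, +1} (the paper's blood cell data is multiclass), RBF kernels, a regularized two-class kernel Fisher discriminant weighted by the current AdaBoost distribution standing in for KDA, and discrete AdaBoost for integrating the hypotheses. The paper's own kernel selection method is not described in the abstract; the candidate list `gammas` and the "keep the kernel with the lowest weighted training error" loop are a hypothetical placeholder. All function names and the regularizer `mu` are illustrative.

```python
import numpy as np


def rbf_kernel(A, B, gamma):
    """Gaussian (RBF) kernel matrix: k(a, b) = exp(-gamma * ||a - b||^2)."""
    sq = (A ** 2).sum(1)[:, None] + (B ** 2).sum(1)[None, :] - 2.0 * A @ B.T
    return np.exp(-gamma * np.maximum(sq, 0.0))


def fit_weighted_kfd(K, y, w, mu=1e-3):
    """Two-class kernel Fisher discriminant on a train kernel K, with the
    class means and within-class scatter weighted by the AdaBoost weights w.
    Returns expansion coefficients a and a decision threshold t."""
    n = len(y)
    N = mu * np.eye(n)                  # hypothetical regularizer keeps N invertible
    means = {}
    for c in (-1, 1):
        idx = y == c
        Kc = K[:, idx]                  # n x n_c kernel columns of class c
        v = w[idx] / w[idx].sum()       # weights normalized within the class
        Mc = Kc @ v                     # weighted class mean (coefficient space)
        N += (Kc * v) @ Kc.T - np.outer(Mc, Mc)  # weighted within-class scatter
        means[c] = Mc
    a = np.linalg.solve(N, means[1] - means[-1])
    t = 0.5 * (a @ means[1] + a @ means[-1])  # midpoint of projected class means
    return a, t


def boost_kda(X, y, gammas=(0.1, 1.0, 10.0), rounds=10, mu=1e-3):
    """Discrete AdaBoost whose weak learners are weighted KFD projections."""
    n = len(y)
    w = np.full(n, 1.0 / n)             # AdaBoost distribution over samples
    ensemble = []                       # list of (alpha, gamma, a, t)
    for _ in range(rounds):
        best = None
        # Hypothetical kernel selection (NOT the paper's method): try each
        # candidate gamma and keep the lowest weighted training error.
        for g in gammas:
            K = rbf_kernel(X, X, g)
            a, t = fit_weighted_kfd(K, y, w, mu)
            pred = np.where(K @ a > t, 1, -1)
            err = w[pred != y].sum()
            if best is None or err < best[0]:
                best = (err, g, a, t, pred)
        err, g, a, t, pred = best
        if err >= 0.5:
            break                       # weak learner no better than chance
        alpha = 0.5 * np.log((1.0 - err) / max(err, 1e-10))
        ensemble.append((alpha, g, a, t))
        if err == 0.0:
            break                       # perfect weighted fit: nothing left to boost
        w = w * np.exp(-alpha * y * pred)  # up-weight misclassified samples
        w /= w.sum()
    return ensemble


def predict(ensemble, X_train, X):
    """Weighted vote of the boosted KFD hypotheses."""
    score = np.zeros(len(X))
    for alpha, g, a, t in ensemble:
        score += alpha * np.where(rbf_kernel(X, X_train, g) @ a > t, 1, -1)
    return np.where(score >= 0, 1, -1)


# Usage on synthetic concentric rings (illustrative data, not from the paper).
rng = np.random.default_rng(0)
theta = rng.uniform(0, 2 * np.pi, 200)
r = np.r_[np.full(100, 1.0), np.full(100, 3.0)] + rng.normal(0, 0.1, 200)
X = np.c_[r * np.cos(theta), r * np.sin(theta)]
y = np.r_[-np.ones(100), np.ones(100)].astype(int)
model = boost_kda(X, y)
acc = (predict(model, X, X) == y).mean()
print(f"rounds kept: {len(model)}, training accuracy: {acc:.2f}")
```

Because the AdaBoost distribution enters both the class means and the within-class scatter, each round can yield a different discriminant direction, which is one way to realize the abstract's idea of building each hypothesis in its own KDA feature space. Each candidate kernel requires solving a dense n×n system per round, roughly O(n³), which illustrates why the paper introduces a dedicated kernel selection method to keep per-iteration KDA affordable.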

Copyright information

© 2005 Springer-Verlag/Wien

About this paper

Cite this paper

Kita, S., Maekawa, S., Ozawa, S., Abe, S. (2005). Boosting Kernel Discriminant Analysis with Adaptive Kernel Selection. In: Ribeiro, B., Albrecht, R.F., Dobnikar, A., Pearson, D.W., Steele, N.C. (eds) Adaptive and Natural Computing Algorithms. Springer, Vienna. https://doi.org/10.1007/3-211-27389-1_103

DOI: https://doi.org/10.1007/3-211-27389-1_103

Publisher Name: Springer, Vienna

Print ISBN: 978-3-211-24934-5

Online ISBN: 978-3-211-27389-0

eBook Packages: Computer Science, Computer Science (R0)