Abstract

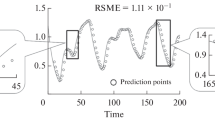

Training data are usually not evenly distributed in the input space. As a result, non-local methods such as neural networks are not very accurate in such cases. Local methods, in turn, face the problem of deciding which training examples are best suited to each test pattern. In this work, we present a trade-off between local and non-local methods: a Radial Basis Neural Network (RBNN) is used as the learning algorithm, while a subset of the training patterns is selected for each query. Moreover, the RBNN initialization algorithm has been made deterministic to eliminate any influence of the initial conditions. Finally, the new method has been validated in two time series domains, one artificial and one real-world.
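The general idea of training a radial basis network lazily, once per query, can be illustrated with a minimal sketch. The abstract does not state the selection criterion or the deterministic initialization, so everything specific here is an assumption made for illustration: the k nearest patterns (Euclidean distance) are selected, one Gaussian centre is placed on each selected pattern, a single shared width is set to the mean pairwise distance between centres, and the output weights are obtained by least squares. The name `lazy_rbf_predict` and its parameters are hypothetical and do not come from the paper.

```python
import numpy as np


def lazy_rbf_predict(X_train, y_train, x_query, k=10, width=None):
    """Fit a small Gaussian RBF network on the k patterns nearest to the
    query and return its prediction for that query (illustrative sketch)."""
    X_train = np.asarray(X_train, dtype=float)
    y_train = np.asarray(y_train, dtype=float)
    x_query = np.asarray(x_query, dtype=float)

    # Lazy selection (assumption): k nearest training patterns to the query.
    dists = np.linalg.norm(X_train - x_query, axis=1)
    idx = np.argsort(dists, kind="stable")[:k]
    X_sel, y_sel = X_train[idx], y_train[idx]
    m = X_sel.shape[0]

    # Deterministic initialization (assumption): one centre per selected
    # pattern, so no random seeding is involved.
    centres = X_sel
    if width is None:
        pair = np.linalg.norm(centres[:, None, :] - centres[None, :, :], axis=2)
        width = max(pair.sum() / max(m * (m - 1), 1), 1e-12)

    def design(X):
        # Gaussian activations plus a constant bias column.
        d2 = ((X[:, None, :] - centres[None, :, :]) ** 2).sum(axis=2)
        phi = np.exp(-d2 / (2.0 * width ** 2))
        return np.hstack([phi, np.ones((X.shape[0], 1))])

    # Output weights by (minimum-norm) least squares on the selected patterns.
    w, *_ = np.linalg.lstsq(design(X_sel), y_sel, rcond=None)
    return float((design(x_query[None, :]) @ w)[0])


# Usage on a toy one-dimensional problem: approximate sin(x) near x = 1.
X = np.linspace(0.0, 2.0 * np.pi, 200)[:, None]
y = np.sin(X[:, 0])
prediction = lazy_rbf_predict(X, y, np.array([1.0]), k=10)
```

Because a new network is fitted for every query, the cost of training moves to prediction time. In this sketch, a larger `k` makes the model behave more like a global one and a smaller `k` makes it more local.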

© 2006 Springer-Verlag Berlin Heidelberg

About this paper

Cite this paper

Valls, J.M., Galván, I.M., Isasi, P. (2006). Lazy Training of Radial Basis Neural Networks. In: Kollias, S.D., Stafylopatis, A., Duch, W., Oja, E. (eds) Artificial Neural Networks – ICANN 2006. ICANN 2006. Lecture Notes in Computer Science, vol 4131. Springer, Berlin, Heidelberg. https://doi.org/10.1007/11840817_21

DOI: https://doi.org/10.1007/11840817_21

Publisher Name: Springer, Berlin, Heidelberg

Print ISBN: 978-3-540-38625-4

Online ISBN: 978-3-540-38627-8

eBook Packages: Computer Science, Computer Science (R0)