Abstract

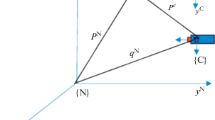

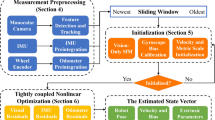

This paper describes an approach for fusing monocular vision measurements with camera motion measurements from an odometer and inertial rate sensors. The motion of the camera between successive images generates a baseline for range computation by triangulation. The recursive estimation algorithm is based on extended Kalman filtering. The depth estimation accuracy is strongly affected by the relative geometry of the observer and the feature point, and by the measurement accuracy of the observer motion parameters and of the line of sight to the feature point. A simulation study investigates how the estimation accuracy is affected by the following parameters: linear and angular velocity measurement errors, camera noise, and observer path. The results impose requirements on the instrumentation and the observation scenarios. Under favorable conditions, the error in distance estimation does not exceed 2% of the distance to a feature point.
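The triangulation idea can be illustrated with a minimal two-view sketch. This is not the paper's extended Kalman filter: it assumes a planar scene, a camera that translates along the x-axis by a known baseline (as an odometer might report), and bearings already compensated for camera rotation. The function names `triangulate_range`, `bearings_to`, and `range_error` are hypothetical and chosen only for this illustration.

```python
import math


def triangulate_range(baseline, bearing1, bearing2):
    """Range from the second camera position to a static feature point.

    Assumptions (not from the paper): planar geometry, camera moving
    `baseline` metres along the x-axis between the two images, bearings in
    radians measured from the x-axis to the line of sight.
    """
    parallax = bearing2 - bearing1
    if abs(math.sin(parallax)) < 1e-12:
        # The feature lies along the direction of motion or is too distant.
        raise ValueError("no usable parallax between the two views")
    # Law of sines in the triangle (first position, second position, feature).
    return baseline * math.sin(bearing1) / math.sin(parallax)


def bearings_to(feature, baseline):
    """Ideal bearings to `feature` = (x, y) from (0, 0) and (baseline, 0)."""
    x, y = feature
    return math.atan2(y, x), math.atan2(y, x - baseline)


def range_error(feature, baseline, bearing_noise):
    """Absolute range error when the second bearing is off by `bearing_noise`."""
    b1, b2 = bearings_to(feature, baseline)
    true_range = math.hypot(feature[0] - baseline, feature[1])
    estimate = triangulate_range(baseline, b1, b2 + bearing_noise)
    return abs(estimate - true_range)


if __name__ == "__main__":
    feature = (0.0, 10.0)  # feature 10 m to the side of the starting point
    for baseline in (0.5, 2.0):
        err = range_error(feature, baseline, bearing_noise=1e-3)
        print(f"baseline {baseline} m -> range error {err:.3f} m")
```

For a fixed bearing error, the shorter baseline gives the larger range error in this sketch, which is consistent with the abstract's statement that accuracy depends on the observer and feature point geometry and on the line-of-sight measurement accuracy.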

Additional information

Published in Russian in Giroskopiya i Navigatsiya, 2016, No. 4, pp. 98–111.

Cite this article

Davidson, P., Raunio, JP. & Piché, R. Monocular vision-based range estimation supported by proprioceptive motion. Gyroscopy Navig. 8, 150–158 (2017). https://doi.org/10.1134/S2075108717020043

DOI: https://doi.org/10.1134/S2075108717020043