Abstract

This paper presents a novel grasp generative residual attention network (RANET) that generates antipodal robotic grasps from multi-modal images in a pixel-wise manner. To strengthen generalization to unknown objects, we propose a new structure that differs from the previous grasp generative network in that it additionally integrates a coordinate attention mechanism and a symmetrical skip connection. The coordinate attention module emphasizes meaningful information in the feature map, and the symmetrical skip connection retains more fine-grained feature details. Moreover, a multi-atrous convolution module is included in the structure to capture more high-level information, while a hypercolumn feature fusion method is incorporated to exploit the complementary features of different layers. Evaluation on public datasets shows that RANET achieves 98.9% accuracy on the Cornell dataset, which is state-of-the-art performance at real-time speed (∼17 ms), and 93.9% accuracy on the Jacquard dataset.
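To make the pixel-wise formulation concrete, the following is a minimal sketch of how a grasp might be read out of per-pixel output maps. It is not taken from the paper: the function name `decode_grasp`, the four map names, and the (cos 2θ, sin 2θ) angle encoding are assumptions borrowed from the general style of pixel-wise grasp generators, and RANET's actual output heads may differ.

```python
import math

def decode_grasp(quality, cos2, sin2, width):
    """Return the grasp at the highest-quality pixel.

    All four arguments are hypothetical H x W maps (lists of lists):
    quality = grasp score, cos2/sin2 = cos(2*theta)/sin(2*theta),
    width = gripper opening in pixels.
    """
    best_q, best_rc = -math.inf, (0, 0)
    for r, row in enumerate(quality):
        for c, q in enumerate(row):
            if q > best_q:
                best_q, best_rc = q, (r, c)
    r, c = best_rc
    # An antipodal grasp is unchanged by a 180-degree rotation, so the
    # angle is assumed to be encoded as (cos 2θ, sin 2θ) and is recovered
    # with atan2 followed by halving.
    angle = 0.5 * math.atan2(sin2[r][c], cos2[r][c])
    return {"row": r, "col": c, "angle": angle,
            "width": width[r][c], "quality": best_q}

# Toy 2 x 2 maps; the values are illustrative only.
grasp = decode_grasp(
    quality=[[0.1, 0.2], [0.9, 0.3]],
    cos2=[[1.0, 1.0], [0.0, 1.0]],
    sin2=[[0.0, 0.0], [1.0, 0.0]],
    width=[[10.0, 12.0], [30.0, 8.0]],
)
```

Selecting a single arg-max pixel is the simplest readout; a practical system would typically smooth the maps or select several local maxima before executing a grasp.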

References

R. H. Taylor, A. Menciassi, G. Fichtinger, P. Fiorini, and P. Dario, “Medical robotics and computer-integrated surgery,” Springer Handbook of Robotics, pp. 1657–1684, 2016.

D. Choi, S. H. Kim, W. Lee, S. Kang, and K. Kim, “Development and preclinical trials of a surgical robot system for endoscopic endonasal transsphenoidal surgery,” International Journal of Control, Automation, and Systems, vol. 19, no. 3, pp. 1352–1362, 2021.

Y. Li, Y. Yue, D. Xu, E. Grinspun, and P. K. Allen, “Folding deformable objects using predictive simulation and trajectory optimization,” Proc. of IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), pp. 6000–6006, IEEE, 2015.

M. Saadat and P. Nan, “Industrial applications of automatic manipulation of flexible materials,” Industrial Robot: An International Journal, vol. 29, no. 5, pp. 434–442, 2002.

J.-K. Oh, S. Lee, and C.-H. Lee, “Stereo vision based automation for a bin-picking solution,” International Journal of Control, Automation, and Systems, vol. 10, no. 2, pp. 362–373, 2012.

H. Bay, T. Tuytelaars, and L. Van Gool, “SURF: Speeded up robust features,” Proc. of European Conference on Computer Vision, pp. 404–417, Springer, 2006.

E. Rublee, V. Rabaud, K. Konolige, and G. Bradski, “ORB: An efficient alternative to SIFT or SURF,” Proc of International Conference on Computer Vision, pp. 2564–2571, IEEE, 2011.

S. Salti, F. Tombari, and L. Di Stefano, “SHOT: Unique signatures of histograms for surface and texture description,” Computer Vision and Image Understanding, vol. 125, pp. 251–264, 2014.

L. Chen, P. Huang, Y. Li, and Z. Meng, “Detecting graspable rectangles of objects in robotic grasping,” International Journal of Control, Automation, and Systems, vol. 18, no. 5, pp. 1343–1352, 2020.

I. Lenz, H. Lee, and A. Saxena, “Deep learning for detecting robotic grasps,” The International Journal of Robotics Research, vol. 34, no. 4–5, pp. 705–724, 2015.

L. Pinto and A. Gupta, “Supersizing self-supervision: Learning to grasp from 50K tries and 700 robot hours,” Proc. of IEEE International Conference on Robotics and Automation (ICRA), pp. 3406–3413, IEEE, 2016.

D. Park and S. Y. Chun, “Classification based grasp detection using spatial transformer network,” arXiv preprint arXiv:1803.01356, 2018.

F.-J. Chu, R. Xu, and P. A. Vela, “Real-world multiobject, multigrasp detection,” IEEE Robotics and Automation Letters, vol. 3, no. 4, pp. 3355–3362, 2018.

Z. Wang, Z. Li, B. Wang, and H. Liu, “Robot grasp detection using multimodal deep convolutional neural networks,” Advances in Mechanical Engineering, vol. 8, p. 9, 1687814016668077.

J. J. van Vuuren, L. Tang, I. Al-Bahadly, and K. M. Arif, “A 3-stage machine learning-based novel object grasping methodology,” IEEE Access, vol. 8, pp. 74216–74236, 2020.

X. Wang, X. Jiang, J. Zhao, S. Wang, and Y.-H. Liu, “Grasping objects mixed with towels,” IEEE Access, vol. 8, pp. 129338–129346, 2020.

D.-W. Kim, H. Jo, and J.-B. Song, “Irregular depth tiles: Automatically generated data used for network-based robotic grasping in 2D dense clutter,” International Journal of Control, Automation, and Systems, vol. 19, no. 10, pp. 3428–3434, 2021.

K.-H. Ahn and J.-B. Song, “Image preprocessing-based generalization and transfer of learning for grasping in cluttered environments,” International Journal of Control, Automation, and Systems, vol. 18, pp. 2306–2314, 2020.

J. Redmon and A. Angelova, “Real-time grasp detection using convolutional neural networks,” Proc. of IEEE International Conference on Robotics and Automation (ICRA), pp. 1316–1322, IEEE, 2015.

D. Guo, F. Sun, H. Liu, T. Kong, B. Fang, and N. Xi, “A hybrid deep architecture for robotic grasp detection,” Proc. of IEEE International Conference on Robotics and Automation (ICRA), pp. 1609–1614, IEEE, 2017.

S. Kumra and C. Kanan, “Robotic grasp detection using deep convolutional neural networks,” Proc. of IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), pp. 769–776, IEEE, 2017.

Q. Zhang, D. Qu, F. Xu, and F. Zou, “Robust robot grasp detection in multimodal fusion,” Proc. of MATEC Web of Conferences, vol. 139, p. 00060, EDP Sciences, 2017.

D. Morrison, J. Leitner, and P. Corke, “Closing the loop for robotic grasping: A real-time, generative grasp synthesis approach,” Proceedings of Robotics: Science and Systems, pp. 1–10, 2018.

D. Morrison, P. Corke, and J. Leitner, “Learning robust, real-time, reactive robotic grasping,” The International Journal of Robotics Research, vol. 39, no. 2–3, pp. 183–201, 2020.

A. Depierre, E. Dellandréa, and L. Chen, “Jacquard: A large scale dataset for robotic grasp detection,” Proc. of IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), pp. 3511–3516, IEEE, 2018.

J. Bohg, A. Morales, T. Asfour, and D. Kragic, “Data-driven grasp synthesis—A survey,” IEEE Transactions on Robotics, vol. 30, no. 2, pp. 289–309, 2013.

C.-P. Tung and A. C. Kak, “Fast construction of force-closure grasps,” IEEE Transactions on Robotics and Automation, vol. 12, no. 4, pp. 615–626, 1996.

C. Rosales, R. Suárez, M. Gabiccini, and A. Bicchi, “On the synthesis of feasible and prehensile robotic grasps,” Proc. of IEEE International Conference on Robotics and Automation, pp. 550–556, IEEE, 2012.

D. Prattichizzo, M. Malvezzi, M. Gabiccini, and A. Bicchi, “On the manipulability ellipsoids of underactuated robotic hands with compliance,” Robotics and Autonomous Systems, vol. 60, no. 3, pp. 337–346, 2012.

J. Watson, J. Hughes, and F. Iida, “Real-world, realtime robotic grasping with convolutional neural networks,” Proc. of Annual Conference towards Autonomous Robotic Systems, pp. 617–626, Springer, 2017.

G. Chalvatzaki, N. Gkanatsios, P. Maragos, and J. Peters, “Orientation attentive robotic grasp synthesis with augmented grasp map representation,” arXiv preprint, arXiv:2006.05123, 2020.

Y. Xu, L. Wang, A. Yang, and L. Chen, “GraspCNN: Realtime grasp detection using a new oriented diameter circle representation,” IEEE Access, vol. 7, pp. 159322–159331, 2019.

S. Kumra, S. Joshi, and F. Sahin, “Antipodal robotic grasping using generative residual convolutional neural network,” arXiv preprint arXiv:1909.04810, 2019.

S. Wang, X. Jiang, J. Zhao, X. Wang, W. Zhou, and Y. Liu, “Efficient fully convolution neural network for generating pixel wise robotic grasps with high resolution images,” Proc. of IEEE International Conference on Robotics and Biomimetics (ROBIO), pp. 474–480, IEEE, 2019.

P. Dolezel, D. Stursa, D. Kopecky, and J. Jecha, “Memory efficient grasping point detection of nontrivial objects,” IEEE Access, vol. 9, pp. 82130–82145, 2021.

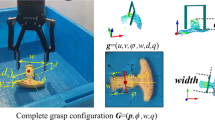

Y. Jiang, S. Moseson, and A. Saxena, “Efficient grasping from RGBD images: Learning using a new rectangle representation,” Proc. of IEEE International Conference on Robotics and Automation, pp. 3304–3311, IEEE, 2011.

Q. Hou, D. Zhou, and J. Feng, “Coordinate attention for efficient mobile network design,” arXiv preprint arXiv:2103.02907, 2021.

H. Zhang, X. Lan, S. Bai, X. Zhou, Z. Tian, and N. Zheng, “ROI-based robotic grasp detection for object overlapping scenes,” Proc. of IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), pp. 4768–4775, IEEE, 2019.

Author information

Qian-Qian Hong received her B.S. degree in computer science and technology from University of Electronic Science and Technology Zhongshan College, China, in 2018. She is currently pursuing an M.S. degree in computer engineering at the Guangdong University of Technology. Her current research interests include computer vision and robotic grasping.

Liang Yang received his B.S. degree in electronics engineering from Nanchang University, Nanchang, China, in 2002, and his M.S. and Ph.D. degrees from the School of Automation, Guangdong University of Technology, in 2005 and 2016, respectively. From 2005 to 2009, he worked at Huawei Co. as a senior engineer, where the products he helped implement serve millions of people. He is currently a Professor at the School of Computer Engineering, University of Electronic Science and Technology of China, Zhongshan Institute. He is also a postdoctoral researcher with the University of Electronic Science and Technology. His research interests include robot systems and technology, and robotics and computational intelligence.

Bi Zeng received her M.S. and Ph.D. degrees from the Guangdong University of Technology, where she is currently a Professor with the School of Computers. Her current research interests include computational intelligence, data mining, intelligent robots, and wireless sensor networks. She is a Senior Member of the CCF and of the Multi-Valued Logic and Fuzzy Logic Committee, China.

Publisher’s Note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

This work is supported by the Science and Technology Foundation of Guangdong Province under Grants 2019B090910001 and 2021A0101180005, in part by the National Natural Science Foundation of China under Grants 61941301, 61803090, 11771102, and 61573108, in part by the China Postdoctoral Science Foundation under Grant 2018M633353, in part by the Special Program for Key Field of Guangdong Colleges under Grant 2019KZDZX1037, in part by the Natural Science Foundation of Guangdong Province under Grants 2019A1515012109, 2021A030310668, and 2022A1515010178, and in part by the Scientific and Technical Supporting Programs of Sichuan Province under Grants 2017GZ0391, 2019YFG0352, and 2017GZ0392. The authors express their deep appreciation to the Editor-in-Chief, Associate Editor, and anonymous reviewers for their constructive and professional comments, which improved this paper.

Cite this article

Hong, QQ., Yang, L. & Zeng, B. RANET: A Grasp Generative Residual Attention Network for Robotic Grasping Detection. Int. J. Control Autom. Syst. 20, 3996–4004 (2022). https://doi.org/10.1007/s12555-021-0929-8

DOI: https://doi.org/10.1007/s12555-021-0929-8