Abstract

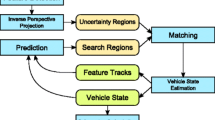

We present a sliding-window monocular visual-inertial odometry method that is accurate and robust to outliers, built on a new observation model grounded in pentafocal geometric constraints. Previous approaches depend on the unknown 3D coordinates of features to estimate ego-motion, so inaccurate 3D feature positions can degrade motion estimation. To overcome this limitation, we use the pentafocal geometric relationship among five images as the camera observation model, which makes it unnecessary to estimate the 3D positions of the features. Furthermore, we apply the pentafocal constraints within a 1-point random sample consensus (RANSAC) algorithm to detect incorrect feature correspondences. We demonstrate the effectiveness of the proposed algorithm, both qualitatively and quantitatively, on two datasets: the KITTI driving dataset and the EuRoC micro aerial vehicle (MAV) flight dataset. The proposed method achieves more accurate state estimation than a well-known stereo visual odometry algorithm and current state-of-the-art visual-inertial odometry methods.
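The idea of checking feature correspondences without estimating 3D feature positions can be illustrated with a much simpler two-view stand-in. The sketch below is not the pentafocal model of the paper: it swaps in the standard epipolar constraint between two images, and assumes that a relative pose (R, t) is already available, for example from an inertial prediction. The function names, the pose convention X2 = R X1 + t, and the threshold value are illustrative assumptions.

```python
import numpy as np

def skew(t):
    """Cross-product matrix [t]_x, so that skew(t) @ v equals np.cross(t, v)."""
    tx, ty, tz = t
    return np.array([[0.0, -tz, ty],
                     [tz, 0.0, -tx],
                     [-ty, tx, 0.0]])

def epipolar_residuals(R, t, x1, x2):
    """Algebraic epipolar residual |x2^T E x1| for each correspondence.

    x1, x2: (N, 3) normalized homogeneous image coordinates in views 1 and 2.
    Assumed pose convention: a point X1 in the first camera frame maps to
    X2 = R @ X1 + t in the second. No 3D point is estimated here.
    """
    E = skew(t) @ R  # essential matrix for the assumed convention
    return np.abs(np.einsum("ni,ij,nj->n", x2, E, x1))

def flag_outliers(R, t, x1, x2, thresh=1e-3):
    """Boolean mask, True where the residual exceeds a hypothetical threshold."""
    return epipolar_residuals(R, t, x1, x2) > thresh
```

In a RANSAC-style scheme, a residual test of this kind would be evaluated for each pose hypothesis, and the hypothesis supported by the most correspondences would be kept. The threshold here is arbitrary and would need tuning for real image noise.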

Author information

Additional information

Recommended by Associate Editor Huaping Liu under the direction of Editor Duk-Sun Shim. This work was supported by the Seoul National University Research Grant in 2015, the National Research Foundation of Korea (NRF) grant funded by the Ministry of Science, ICT & Future Planning (2014M1A3A3A02034854), and the Technology Innovation Program (10067206) funded by the Ministry of Trade, Industry & Energy (MI, Korea).

Pyojin Kim received the B.S. degree in Mechanical Engineering from Yonsei University in 2013. He is currently pursuing the M.S. and Ph.D. degrees in the Department of Mechanical and Aerospace Engineering at Seoul National University. His research interests include 3D computer vision, visual odometry, and visual SLAM.

Hyon Lim received the B.S. and M.S. degrees in Electronic and Electrical Engineering from Inha University in 2008 and 2010, and the Ph.D. degree in the Department of Mechanical and Aerospace Engineering from Seoul National University in 2015. His research interests include computer vision, real-time visual SLAM, and applications of unmanned aerial vehicles.

H. Jin Kim received the B.S. degree from Korea Advanced Institute of Science and Technology (KAIST) in 1995, and the M.S. and Ph.D. degrees in Mechanical Engineering from University of California, Berkeley (UC Berkeley), in 1999 and 2001, respectively. From 2002 to 2004, she was a Postdoctoral Researcher in Electrical Engineering and Computer Science (EECS), UC Berkeley. In September 2004, she joined the Department of Mechanical and Aerospace Engineering at Seoul National University, Seoul, Korea, as an Assistant Professor, where she is currently a Professor. Her research interests include intelligent control of robotic systems and motion planning.

About this article

Cite this article

Kim, P., Lim, H. & Kim, H.J. Visual Inertial Odometry with Pentafocal Geometric Constraints. Int. J. Control Autom. Syst. 16, 1962–1970 (2018). https://doi.org/10.1007/s12555-017-0200-5

DOI: https://doi.org/10.1007/s12555-017-0200-5