Abstract

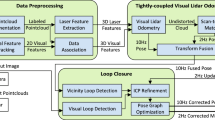

To achieve precise localization, autonomous vehicles usually rely on a multi-sensor perception system mounted around the mobile platform. Calibrating such a system is time-consuming, and mechanical deformation introduces extrinsic calibration errors. We therefore propose a lidar-visual-inertial odometry system that combines an adapted sliding window mechanism with online nonlinear optimization and extrinsic calibration. In the adapted sliding window mechanism, spatial-temporal alignment is performed to manage measurements that arrive at different frequencies. In the nonlinear optimization with online calibration, visual features, point cloud features, and inertial measurement unit (IMU) measurements are used to estimate the ego-motion and to calibrate the sensor extrinsics. Extensive experiments were carried out on public datasets and in real-world scenarios. The results indicate that the proposed system outperforms state-of-the-art open-source methods under challenging sensor-degenerating conditions.
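The temporal half of the alignment step can be illustrated with a minimal sketch. It is not the authors' implementation: it assumes keyframes (camera or lidar) arrive at a low rate and IMU samples at a high rate, and it uses plain linear interpolation to produce IMU values exactly at the keyframe timestamps. The function names `interpolate`, `imu_between`, and `align_window` are hypothetical.

```python
from bisect import bisect_left, bisect_right


def interpolate(t, t0, v0, t1, v1):
    """Linearly interpolate a measurement vector at time t between two samples."""
    if t1 == t0:
        return list(v0)
    a = (t - t0) / (t1 - t0)
    return [(1.0 - a) * x0 + a * x1 for x0, x1 in zip(v0, v1)]


def imu_between(imu_times, imu_values, t_start, t_end):
    """Return (time, value) IMU samples covering [t_start, t_end].

    The first and last entries are interpolated so that the segment starts
    and ends exactly at the keyframe timestamps (assumed, simplified scheme).
    """
    if not (imu_times[0] <= t_start < t_end <= imu_times[-1]):
        raise ValueError("IMU stream does not cover the requested interval")

    def sample_at(t):
        # Index of the first IMU sample strictly after t, clamped so that
        # a valid neighbouring pair (i - 1, i) always exists.
        i = min(max(bisect_right(imu_times, t), 1), len(imu_times) - 1)
        return interpolate(t, imu_times[i - 1], imu_values[i - 1],
                           imu_times[i], imu_values[i])

    lo = bisect_right(imu_times, t_start)  # first raw sample after t_start
    hi = bisect_left(imu_times, t_end)     # first raw sample at or after t_end
    segment = [(t_start, sample_at(t_start))]
    segment += [(imu_times[i], list(imu_values[i])) for i in range(lo, hi)]
    segment.append((t_end, sample_at(t_end)))
    return segment


def align_window(keyframe_times, imu_times, imu_values):
    """Pair each consecutive keyframe interval in the window with its IMU segment."""
    return [
        imu_between(imu_times, imu_values, t0, t1)
        for t0, t1 in zip(keyframe_times, keyframe_times[1:])
    ]
```

Calling `align_window` with the keyframe timestamps currently held in the window yields one IMU segment per pair of consecutive keyframes. In a full estimator, each segment would typically feed an IMU pre-integration term between the two keyframe states. The spatial part of the alignment, which transforms measurements into a common frame using the current extrinsic estimates, is outside the scope of this sketch.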

Funding

Foundation item: the National Key R&D Program of China (No. 2020YFC2007500), and the National Natural Science Foundation of China (No. U2013203)

About this article

Cite this article

Mao, T., Zhao, W., Wang, J. et al. Lidar-Visual-Inertial Odometry with Online Extrinsic Calibration. J. Shanghai Jiaotong Univ. (Sci.) 28, 70–76 (2023). https://doi.org/10.1007/s12204-023-2570-6

DOI: https://doi.org/10.1007/s12204-023-2570-6