Abstract

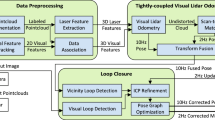

Pose estimation is a fundamental capability that autonomous vehicles must have before they can perform other tasks such as collision avoidance and motion control. Because outdoor environments are complex, this problem can only be solved effectively by fusing multiple modalities such as LiDAR and camera. In this paper, we propose a novel method to tightly couple inertial measurements from an IMU into the emerging direct visual-laser odometry framework. Specifically, we employ a two-step optimization-based approach. First, inertial measurements are used to introduce additional constraints in direct image alignment. The estimated pose is then improved in an IMU-assisted windowed refinement stage. To validate the proposed method, we carry out extensive experiments on two recent and challenging datasets: UrbanLoco and USVInland. Experimental results show that our framework achieves more robust and accurate pose estimation in challenging scenarios than existing popular methods.
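As a rough illustration of the first step (direct image alignment with an additional inertial constraint), the toy sketch below adds the IMU-predicted motion as a quadratic prior term to a photometric least-squares cost and solves it with Gauss-Newton. This is not the authors' formulation. It assumes a synthetic 1D "image" with an analytic intensity profile, a single translation parameter in place of a full 6-DoF pose, and a hand-picked scalar prior weight `w_imu`. All names (`align_with_imu_prior`, `ref_image`, `cur_image`, `T_TRUE`, `SAMPLES`) are hypothetical.

```python
import math

# Hypothetical toy setup (not from the paper): a 1D "image" with an analytic
# intensity profile so that gradients can be computed exactly.
T_TRUE = 0.3  # ground-truth shift between the reference and current frame
SAMPLES = [2.0 * math.pi * k / 50 for k in range(50)]  # pixel coordinates


def ref_image(u: float) -> float:
    """Intensity of the reference frame at coordinate u."""
    return math.sin(u) + 0.5 * math.sin(3.0 * u)


def ref_gradient(u: float) -> float:
    """Analytic derivative of ref_image with respect to u."""
    return math.cos(u) + 1.5 * math.cos(3.0 * u)


def cur_image(u: float) -> float:
    """Current frame: the reference profile shifted by T_TRUE."""
    return ref_image(u - T_TRUE)


def cur_gradient(u: float) -> float:
    """Analytic derivative of cur_image with respect to u."""
    return ref_gradient(u - T_TRUE)


def align_with_imu_prior(t_imu: float, w_imu: float, iters: int = 20) -> float:
    """Gauss-Newton estimate of the shift t minimising
        sum_i (cur_image(u_i + t) - ref_image(u_i))**2 + w_imu * (t - t_imu)**2,
    i.e. a photometric cost plus a quadratic prior centred on the
    IMU-predicted shift t_imu. The IMU prediction is also the initial guess."""
    t = t_imu
    for _ in range(iters):
        # Normal-equation terms (common factor of 2 dropped): the prior adds
        # w_imu to the Hessian and w_imu * (t - t_imu) to the gradient.
        hessian = w_imu
        gradient = w_imu * (t - t_imu)
        for u in SAMPLES:
            r = cur_image(u + t) - ref_image(u)  # photometric residual
            j = cur_gradient(u + t)              # d r / d t
            hessian += j * j
            gradient += j * r
        step = -gradient / hessian
        t += step
        if abs(step) < 1e-12:
            break
    return t


t_photo = align_with_imu_prior(t_imu=0.25, w_imu=0.0)   # photometric only
t_fused = align_with_imu_prior(t_imu=0.25, w_imu=50.0)  # with IMU prior
print(f"photometric only: {t_photo:.6f}, with IMU prior: {t_fused:.6f}")
```

With `w_imu = 0` the estimate is driven by image intensities alone and recovers the true shift in this noise-free toy. Increasing `w_imu` pulls the result toward the IMU prediction, which is the behaviour an inertial prior is meant to provide when image gradients are weak or ambiguous.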



Acknowledgement

We acknowledge Ho Chi Minh City University of Technology (HCMUT), VNU-HCM, for providing the time and facilities that supported this study.


Copyright information

© 2023 The Author(s), under exclusive license to Springer Nature Switzerland AG

About this paper

Cite this paper

Pham, QH., Tran, NH., Nguyen, TD. (2023). IMU-Assisted Direct Visual-Laser Odometry in Challenging Outdoor Environments. In: Huang, YP., Wang, WJ., Quoc, H.A., Le, HG., Quach, HN. (eds) Computational Intelligence Methods for Green Technology and Sustainable Development. GTSD 2022. Lecture Notes in Networks and Systems, vol 567. Springer, Cham. https://doi.org/10.1007/978-3-031-19694-2_44


DOI: https://doi.org/10.1007/978-3-031-19694-2_44


Publisher Name: Springer, Cham

Print ISBN: 978-3-031-19693-5

Online ISBN: 978-3-031-19694-2

eBook Packages: Intelligent Technologies and Robotics, Intelligent Technologies and Robotics (R0)