Abstract

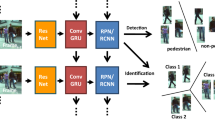

With the deepening of neural network research, object detection has developed rapidly in recent years, and video object detection methods have gradually attracted scholarly attention, especially frameworks that combine multiple object tracking and detection. Most current works build the joint tracking and detection paradigm through multi-task learning. Unlike these works, this paper proposes a multi-level temporal feature fusion structure that improves the performance of the framework by exploiting the constraint of video temporal consistency. To train the temporal network end-to-end, a feature exchange training strategy is put forward so that the temporal feature fusion structure can be trained efficiently. The proposed method is tested on several widely recognized benchmarks and obtains encouraging results compared with the well-known joint detection and tracking framework. The ablation experiment addresses the question of where temporal feature fusion is best placed.

Ethics declarations

Conflicts of interest

The authors declare no conflict of interest.

Additional information

This work has been supported by the Zhejiang Provincial Natural Science Foundation of China (No. LQ24F020016), the General Scientific Research Fund of Zhejiang Provincial Education Department (No. Y202248776), the Wenzhou Science and Technology Plan Project (No. G20220035), and the Wenzhou Key Laboratory Construction Project (No. 2022HZSY0048).

LIN Mengying is a lecturer at the College of Intelligent Manufacturing, Wenzhou Polytechnic. She received her master's degree in professional engineering (2019) from the University of Sydney. Her research interests are mainly in smart materials, defect detection, and artificial intelligence. E-mail: 2019291034@wzpt.edu.cn

About this article

Cite this article

Ge, Y., Ye, W., Zhang, G. et al. Multi-level temporal feature fusion with feature exchange strategy for multiple object tracking. Optoelectron. Lett. 20, 505–512 (2024). https://doi.org/10.1007/s11801-024-4139-5

DOI: https://doi.org/10.1007/s11801-024-4139-5