Abstract

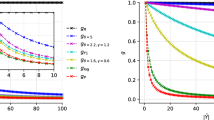

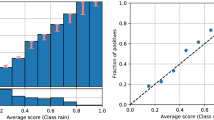

Classifiers that refrain from classification in certain cases can significantly reduce the misclassification cost. However, the parameters for such abstaining classifiers are often set in a rather ad hoc manner. We propose a method to optimally build a specific type of abstaining binary classifier using ROC analysis. These classifiers are built based on optimization criteria in the following three models: cost-based, bounded-abstention and bounded-improvement. We show that selecting the optimal classifier in the first model is similar to the known iso-performance line method and uses only the slopes of ROC curves, whereas selecting the optimal classifier in the remaining two models is not straightforward. We investigate the properties of the convex-down ROCCH (ROC Convex Hull) and present a simple and efficient algorithm for finding the optimal classifier in the bounded-abstention and bounded-improvement models. We demonstrate the application of these models to effectively reduce misclassification cost in real-life classification systems. The method has been validated with an ROC-building algorithm and cross-validation on 15 UCI KDD datasets.
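As a rough illustration of the cost-based model, the sketch below picks two operating points on an ROC convex hull using only slopes, in the spirit of iso-performance lines. It is not taken from the paper: the function names, the cost parameter names, and the construction (predict positive when the conservative classifier does, predict negative when the liberal one does, abstain otherwise) are assumptions made for this example, and the two target slopes are obtained by setting the derivative of the expected cost under those assumptions to zero.

```python
def best_point(hull, slope):
    """Pick the hull vertex touched first by an iso-performance line of
    the given slope, i.e. the vertex maximizing tp - slope * fp."""
    return max(hull, key=lambda p: p[1] - slope * p[0])


def cost_based_abstaining(hull, n_neg, n_pos, c_fn, c_fp, c_abs_pos, c_abs_neg):
    """Return (conservative, liberal) ROC points as (fp_rate, tp_rate).

    Hypothetical parameters: c_fn and c_fp are misclassification costs,
    c_abs_pos and c_abs_neg are the costs of abstaining on an actual
    positive or negative. All costs are assumed strictly positive.
    """
    if min(c_fn, c_fp, c_abs_pos, c_abs_neg) <= 0:
        raise ValueError("all costs are assumed to be strictly positive")
    ratio = n_neg / n_pos
    # Abstaining only pays off when it is cheap enough relative to errors.
    if c_fp * c_fn < c_fp * c_abs_pos + c_abs_neg * c_fn:
        single = best_point(hull, c_fp / c_fn * ratio)
        return single, single
    slope_conservative = (c_fp - c_abs_neg) / c_abs_pos * ratio
    slope_liberal = c_abs_neg / (c_fn - c_abs_pos) * ratio
    return best_point(hull, slope_conservative), best_point(hull, slope_liberal)


def expected_cost(a, b, n_neg, n_pos, c_fn, c_fp, c_abs_pos, c_abs_neg):
    """Total cost of the abstaining classifier built from points a and b."""
    (fp_a, tp_a), (fp_b, tp_b) = a, b
    return (n_neg * fp_a * c_fp                    # false positives
            + n_pos * (1 - tp_b) * c_fn            # false negatives
            + n_pos * (tp_b - tp_a) * c_abs_pos    # abstained positives
            + n_neg * (fp_b - fp_a) * c_abs_neg)   # abstained negatives


# Made-up hull vertices, for illustration only.
hull = [(0.0, 0.0), (0.1, 0.6), (0.3, 0.85), (0.6, 0.97), (1.0, 1.0)]
a, b = cost_based_abstaining(hull, 100, 100, 1.0, 1.0, 0.2, 0.2)
print(a, b, expected_cost(a, b, 100, 100, 1.0, 1.0, 0.2, 0.2))
```

When abstaining is too expensive, the sketch falls back to a single binary operating point chosen with the usual iso-performance slope, so both returned points coincide and nothing is abstained on.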

Additional information

An early version of this paper was published at ICML 2005.

Action Editor: Johannes Fürnkranz.

Cite this article

Pietraszek, T. On the use of ROC analysis for the optimization of abstaining classifiers. Mach Learn 68, 137–169 (2007). https://doi.org/10.1007/s10994-007-5013-y

DOI: https://doi.org/10.1007/s10994-007-5013-y