Abstract

In online STEM courses, self-regulated learning (SRL) plays a critical role in academic success because students are required to monitor and regulate their own learning processes. Yet relatively little research has investigated which SRL strategies contribute to students’ online learning experiences, and to what extent. In this paper, through the lens of the Community of Inquiry (CoI) framework (Garrison et al., 2001), we investigated which SRL strategies predict three elements of perceived CoI: teaching, social, and cognitive presence. Our sample included 287 undergraduate STEM students enrolled in a self-paced online introductory calculus course. A Multiple Indicator-Multiple Cause (MIMIC) analysis was employed to investigate the SRL predictors of the three CoI elements. Prior to the MIMIC analysis, we confirmed the dimensionality of SRL and of perceived CoI through a series of confirmatory factor analyses (CFAs). The MIMIC analysis revealed that environmental structuring and help-seeking predicted teaching presence. Social presence was predicted by goal setting and self-evaluation through peers, whereas environmental structuring, time management, and self-evaluation through peers predicted cognitive presence. The findings provide new empirical evidence on the different roles of SRL in promoting the three elements of perceived CoI. Academic and practical implications of the findings are discussed.

1 Introduction

Research has consistently demonstrated the relationship between self-regulated learning (SRL) and academic achievement in online learning (Broadbent & Poon, 2015). Cho and Shen (2013) further emphasized that SRL is not only a contributing factor, but also a key predictor of students' success in online environments. This fact highlights the importance of SRL in shaping the educational outcomes of students in digital platforms.

Despite a growing body of research exploring the nuances of SRL in online learning, there remains a notable gap in understanding its implications specifically within the context of science, technology, engineering, and mathematics (STEM) education (Li et al., 2022; Saez et al., 2020; Zheng et al., 2020). In addition, while there is evident interest in online learning studies, as indicated by works such as those by Barnard-Brak et al. (2010), a comprehensive exploration of SRL within this context appears to be less prevalent. Existing empirical studies have often centered on general aspects of SRL (Cleary et al., 2020), with less attention given to the intricate phases of students' self-regulation processes.

The significance of this oversight becomes even more pronounced when considering the unique challenges (e.g., students’ inappropriate use of learning strategies and difficulty adapting to online learning) posed by STEM courses in higher education (Dumford & Miller, 2018; García-Pérez et al., 2021). These courses require students to understand complex ideas deeply and to develop skills for solving varied problems (Wang et al., 2021). The online environment, especially in self-paced modules, often lacks the immediate feedback and in-person interactions characteristic of traditional classrooms (Hawkins et al., 2013; Kim et al., 2021). This absence makes individuals' ability to regulate their learning not just beneficial, but critical to their academic achievement in STEM courses.

Given these considerations, it becomes evident that there is an urgent need to explore the dynamics of SRL in online STEM education. As more institutions offer STEM courses online, it becomes especially important to understand SRL dynamics to ensure students can effectively manage their learning. Anchored in the community of inquiry (CoI) framework, this study delves deeper into this area of exploration. Our primary objective is to identify and understand how SRL variables manifest and interact in a STEM online course, with the hope of providing insights that can enhance the quality and effectiveness of digital STEM education.

2 Literature review

2.1 Self-regulated learning in online learning environments

As students’ learning has moved beyond classroom boundaries and onto online platforms, their autonomy and responsibility have increased with it. This shift underscores the importance of self-regulated learning (SRL). In the vast expanse of online learning, SRL is a complex combination of cognitive, metacognitive, and behavioral strands (Pintrich & De Groot, 1990; Pintrich et al., 1993; Zimmerman, 1989, 1990). It encapsulates the dynamic learning processes and strategies that guide a student’s learning journey. Because online learning often lacks the immediate feedback loop of face-to-face interactions, the capability to set goals, monitor progress, and adapt learning strategies becomes a precursor of academic success. Zimmerman’s (1989, 1990) cyclic conception of SRL, which encompasses forethought, performance, and self-reflection phases, has laid the groundwork for later theoretical frameworks. Pintrich and De Groot (1990) and Pintrich et al. (1993) elaborate on this conception, emphasizing that SRL is not limited to cognitive processes but also involves controlling one’s attention and motivation. In digital learning environments, this translates to managing distractions, staying motivated, and creating a conducive learning environment.

SRL is particularly accentuated in online learning scenarios. In self-paced online courses, students bear greater responsibility for their own learning (Dabbagh & Bannan-Ritland, 2005), navigating vast repositories of digital information with limited immediate feedback. Previous studies have demonstrated that SRL is a crucial factor that positively affects online students’ learning outcomes, including academic performance and satisfaction during online courses. For instance, a review by Broadbent and Poon (2015) found that time management, metacognition, effort regulation, and critical thinking were significantly correlated with students’ grades in online higher education settings. In addition, SRL strategies such as self-efficacy and task value have been identified as significant predictors of satisfaction with online courses (e.g., Amoozegar et al., 2017; Artino, 2007; Joo et al., 2013).

Yet, while these insights are invaluable, STEM education, with its complex blend of theory, practical skills, and iterative problem-solving, presents unique methodological challenges for SRL research. Conventional SRL frameworks, developed for more generic contexts, may not fully capture the multifaceted nature of online STEM education. In addition, many studies in online STEM education have relied on linear models or single-indicator analyses. While informative, these approaches could overlook complex interplays among variables and might oversimplify the intricacy of SRL in STEM learning. There is a pressing need for a methodological approach that offers a dynamic and holistic perspective on SRL and is tailored to the intricacies of online STEM courses.

2.2 Self-regulated learning in STEM education

SRL in STEM has received considerable emphasis in recent years. SRL skills are essential for STEM students’ performance on interdisciplinary (Zheng et al., 2020) and open-ended tasks (Barak, 2012). Barak (2012) argues that fostering students’ SRL enhances their learning engagement in open-ended design tasks. Several empirical studies have further explored the potential of SRL variables in STEM learning contexts. Zheng et al. (2020) analyzed the engineering design behaviors of 108 ninth graders and identified four patterns of SRL behavior: competent, cognitive-oriented, reflective, and minimally self-regulated learners. The study found that each SRL group exhibited different belief states and behavior patterns. Furthermore, Li et al. (2020) explored 111 ninth-grade students’ engineering design exercises in Energy3D, a computer-aided learning environment that supports 3D modeling and simulation. The study found significant differences in SRL evaluation behaviors among the unsuccessful, success-oriented, and mastery-oriented performance groups.

Overall, these studies yielded a consistent result that SRL is a significant factor that influences students’ STEM learning performance. However, despite these findings, previous research has been limited to K-12 settings, and few studies in higher education have identified the relationships between SRL and online learning presence in STEM fields. Given that students in higher education may have different capabilities to regulate and control their own learning compared to those in K-12, it is worth exploring how SRL behaviors of students with STEM majors in higher education appear in online learning.

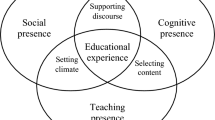

2.3 Community of inquiry

The Community of Inquiry (CoI) is a dynamic model that represents essential elements for the development of community and the pursuit of inquiry (Garrison et al., 2001). The CoI framework is grounded in Vygotsky's social constructivism (1978) and Dewey’s (1938) practical inquiry. The core of the CoI framework is “deep and meaningful learning” (Akyol & Garrison, 2011, p. 235). The CoI framework has had a significant influence on research and practice to enhance the effectiveness of online education (Kozan & Caskurlu, 2018). The CoI framework consists of three main elements: cognitive presence, social presence, and teaching presence. Previous research has found that these three presences have a positive impact on learning outcomes in online learning environments (Martin et al., 2022). In addition, instructional strategies and activities have been designed to promote these three presences in online courses (Fiock, 2020). The next section describes each presence and its effectiveness on learning outcomes in online learning settings.

2.3.1 Cognitive presence

Cognitive presence is defined as “the extent to which the participants in any particular configuration of a CoI are able to construct meaning through sustained communication” (Garrison et al., 1999, p. 89). Cognitive presence has been found to be a significant factor that positively predicts students’ satisfaction in online courses (e.g., Giannousi & Kioumourtzoglou, 2016; Joo et al., 2011). For example, Giannousi and Kioumourtzoglou (2016) investigated how cognitive, social, and teaching presence predicted students’ satisfaction in hybrid learning courses. With a sample of 214 undergraduates, they collected students’ responses to a 5-point Likert self-report survey developed by Arbaugh et al. (2008). They found that cognitive presence was the strongest predictor of students’ satisfaction. Joo et al. (2011) corroborated that cognitive presence significantly predicts students’ satisfaction in online courses.

2.3.2 Social presence

Social presence refers to “the ability of participants to identify with the community (e.g., course of study), communicate purposefully in a trusting environment, and develop interpersonal relationships by projecting their individual personalities” (Garrison, 2009, p. 352). Social presence is generally divided into three categories: emotional expression, open communication, and group cohesion (Garrison et al., 1999). Previous research has shown that social presence is a significant predictor of learning outcomes and satisfaction with online courses (e.g., Hostetter & Busch, 2012; Richardson et al., 2017). For instance, Hostetter and Busch (2012) explored the relationship between social presence and learning outcomes using classroom assessment technique (CAT) ratings. Their regression results suggest that students with higher social presence in the online discussion had higher CAT ratings. Richardson et al. (2017) conducted a meta-analysis and found a strong positive relationship between social presence and perceived learning. This research emphasizes that enhancing social presence through social interactions is essential and serves as the backbone of critical thinking and higher-level learning.

2.3.3 Teaching presence

Teaching presence is defined as "the design, facilitation, and direction of cognitive and social presence for the realization of personally meaningful and educationally worthwhile learning outcomes" (Anderson et al., 2001, p. 5). Teaching presence occurs in the form of course organization and instructional discourse and serves as a proxy for instructional guidance quality. Teaching presence is also correlated with social and cognitive presence (Joo et al., 2011). Specifically, existing research has reported statistically significant relationships between teaching presence-related sub-factors (e.g., instructional design, discourse facilitation, and direct instruction) and learner satisfaction. Zhang et al. (2016) examined how teaching presence is associated with online learners' four types of engagement behaviors (i.e., positive, active, constructive, and interactive engagement). Using data from 218 middle school English teachers, they conducted regression analyses and found multiple statistically significant relationships between teaching presence and the engagement behavior types. These findings demonstrate that teaching presence is largely indicative of the instructional design quality of online courses and students' learning engagement.

2.4 Self-regulated learning and community of inquiry

The original CoI framework includes three presences: cognitive presence, social presence, and teaching presence. In addition to these three, researchers have proposed a fourth, learning presence, in a revised CoI framework (Kozan & Caskurlu, 2018). Learning presence is closely related to SRL. Shea and Bidjerano (2010) proposed learning presence as the fourth element of the CoI and explained that a constellation of SRL traits, such as motivation and behavior, could constitute it. To support this, Shea and Bidjerano (2012) administered surveys to 2,010 college students that included CoI items (Arbaugh et al., 2008; Shea & Bidjerano, 2008; Swan et al., 2008), self-efficacy and effort regulation items from the Motivated Strategies for Learning Questionnaire (MSLQ), and OSLQ items (Barnard et al., 2009). They found that teaching presence, cognitive presence, and social presence were positively correlated with the total SRL scores measured by the OSLQ. Additionally, SRL was identified as a vital moderator of the effects of teaching and cognitive presences. It also moderated the effects of social presence on cognitive presence. Cho et al. (2017) examined the effect of SRL on college students' perceptions of CoI in online settings. Their results reveal that SRL levels significantly affected online students' perceived CoI, and that students with better SRL skills reported a stronger perceived CoI than those with weaker SRL skills. This result describes the relationships between online SRL and the three CoI presences. However, the findings of these two studies are limited to overall SRL scores.

Wertz (2022) recently developed a survey named "WebTALK" that includes broader constructs of SRL that were named "learning presence indicators." Wertz confirmed that learning presence was identified as the strongest predictor of cognitive presence compared to social and teaching presences. Although Wertz's (2022) study provided empirical evidence on the relationships between each SRL component and each CoI presence, further investigations are still needed. In Wertz's (2022) study, three-dimensional constructs were identified in learning presence: developmental, motivational, and behavioral indicators. Wertz (2022) explained a developmental indicator as a new construct of learning presence, but it cannot be theoretically supported and explained. The developmental components are not included in any existing SRL theories and models. The finding of Wertz's (2022) study might be attributed to the use of the Learning Environment Preferences (LEP) Scale, which was developed to measure students' intellectual development (Moore, 1990). SRL generally consists of motivational, behavioral, and cognitive/metacognitive regulation components (Lehmann et al., 2014). Therefore, there is still a need to investigate specific components of motivational, behavioral, and cognitive/metacognitive regulation in relation to CoI.

Regarding the sub-components of SRL in online settings, previous validation studies have produced inconsistent results. Validation studies of the OSLQ (e.g., Barnard et al., 2009; Fung et al., 2018; Kilis & Yildirim, 2018) identified that online SRL consists of six components: environmental structuring, goal setting, time management, help-seeking, task strategies, and self-evaluation. On the other hand, Vanslambrouck et al. (2019) supported a seven-factor model, based on confirmatory factor analyses with 213 students in blended-learning settings, by splitting self-evaluation into two sub-constructs: self-evaluation through strategies and self-evaluation through peers. Regarding self-evaluation through peers, it should be noted that, in contrast to traditional peer assessment, which can be defined as a structured process in which “students evaluate, or are evaluated by, their peers” (van Zundert et al., 2010, p. 270), self-evaluation through peers can be operationalized as a type of self-assessment whereby students assess their own learning progress by referencing their peers’ learning processes (Vanslambrouck et al., 2019). That is, students are both the assessors and the assessed, but the main criteria for self-assessment are set by their peers’ online learning activities. In this regard, the dimensionality of SRL in online settings requires further investigation.

Existing research offers foundational insights into the interplay between SRL variables and the CoI framework. However, a noticeable gap persists in online STEM learning contexts. The CoI framework provides a robust structure for understanding student learning experiences in online settings. Within this context, SRL becomes crucial, acting as an internal guide that steers students through digital information, asynchronous interactions, and self-paced modules. STEM education, which requires deep conceptual understanding, practical experimentation, and problem-solving, amplifies the significance of SRL. As Wang et al. (2021) highlight, STEM courses demand that learners grapple with multifaceted and intricate knowledge structures. In online environments, where face-to-face feedback is limited and learners often work in isolation, the importance of SRL is heightened. However, despite the evident interconnection between the CoI framework, SRL, and online STEM education, there remains a paucity of empirical research exploring these dynamics. The question of how SRL variables interact with the CoI elements in online STEM contexts remains unanswered. This study seeks to bridge this gap. Our focal research question is: To what extent does online self-regulated learning influence STEM undergraduates' perceived community of inquiry? Fig. 1 represents our hypothetical research model, offering a lens through which we aim to examine the dynamics of SRL within the CoI framework in online STEM education.

To address the above research question, the current study adopts a multiple indicator, multiple cause (MIMIC; Jöreskog & Goldberger, 1975) model as its methodological framework. The MIMIC model is a statistical approach that estimates a measurement model and a structural model simultaneously within a single model. It allows researchers to regress factors measured by multiple indicators on any type of covariate while reducing measurement error and the required sample size. These characteristics allow us to accomplish our research goals by estimating a reflective model of the CoI and, at the same time, investigating the predictive associations between the sub-factors of online SRL and the latent constructs of the CoI.

3 Method

3.1 Participants, contexts, and procedures

A total of 287 undergraduates in South Korea participated in this study. Students were enrolled in a self-paced online course teaching introductory calculus during the 2020 spring semester. At the beginning of the semester, study recruitment emails were sent to 2,754 undergraduate students who registered for Introductory mathematics, and 1,024 (37.2%) students consented to participate in this study. Based on the academic background survey results, 339 students majoring in STEM were initially selected for this study. However, course enrollment changed because of withdrawals, and these cases (n = 52) were excluded from the sample (Enders, 2022). Accordingly, the final sample (n = 287) was limited to those who enrolled in the course, majored in a STEM field, did not withdraw in the middle of the course, and completed all three surveys. There were no missing data in the students' survey responses. Among the 287 undergraduate students, 33.8% were female (n = 97) and 66.2% were male (n = 190). Regarding participants’ majors, the majority of students majored in public health science (42.9%) or engineering (27.5%), 12.9% of students majored in information technology, 6.6% of students majored in nursing and science, and 3.5% of students majored in veterinary medicine.
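The sample flow reported above can be checked with a few lines of arithmetic. The sketch below only restates the counts given in the paragraph; the variable and function names are illustrative and not part of the original study.

```python
# Counts as reported in the participants paragraph.
invited = 2754        # students who received the recruitment email
consented = 1024      # students who consented to participate
stem_selected = 339   # STEM majors initially selected
withdrawn = 52        # cases excluded because of withdrawals
female, male = 97, 190

# Final analytic sample after removing withdrawals.
final_n = stem_selected - withdrawn


def pct(part, whole):
    """Percentage of `whole` represented by `part`, rounded to one decimal."""
    return round(100 * part / whole, 1)


print(final_n)                                    # final sample size
print(pct(consented, invited))                    # consent rate (%)
print(pct(female, final_n), pct(male, final_n))   # gender split (%)
```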

This course is one of the prerequisites for advanced courses offered in STEM majors. The course, an introduction to university mathematics, reviews high school level mathematics and covers basic calculus, including the following concepts: functions, limits and continuity, definite and indefinite integration, and the fundamental theorem of calculus. A key feature of this course is that it focuses not on mastery of mathematical knowledge of calculus, but on applying the knowledge acquired in the course to authentic real-world tasks. As a self-paced online course, it was delivered entirely through a learning management system (LMS), in which students could access the syllabus, lecture notes, exercise tasks formatted as worked-out examples, and video lectures recorded by the instructor. In addition to these course resources, the course offered regular office hours and additional teaching assistant (TA) sessions in which TAs helped students who struggled with exercise problems and exam preparation (in weeks 5, 6 and 13, 14 in Fig. 2).

Fig. 2 Data collection processes and online course timeline. Note. Each box in the figure refers to a week of the online course. Black boxes represent the administration of surveys, and gray boxes represent the weeks of the midterm and final exams. TA session refers to online-streaming sessions in which teaching assistants explained what students were struggling with during the course, answered exercise questions, and helped students prepare for the midterm and final exams.

This course was taught by the same instructor with multiple TAs, and each semester consisted of 15 weeks. In this course, students were expected to acquire the basic concepts of calculus, to apply mathematical knowledge flexibly to authentic tasks, and ultimately to develop mathematical literacy, which is a foundation for future STEM disciplines. Participants signed consent forms agreeing to participate in the study and completed academic background surveys via online survey forms during the first week. The surveys on online SRL (Barnard et al., 2009) and perceived CoI (Arbaugh et al., 2008) were administered in week 14. The midterm and final exams took place in weeks 7 and 15, respectively. In addition to students’ survey data, we collected details of the course and assignment structures. These data were intended to corroborate the quantitative results by explaining how SRL-related settings were present in the course. The overall data collection phases and online course timeline are presented in Fig. 2.

3.2 Measures

3.2.1 Online self-regulated learning

In this study, students' SRL was measured using the Online Self-regulated Learning Questionnaire (OSLQ; Barnard et al., 2009; see Appendix 1). This scale uses a 5-point Likert format and assesses how students use SRL strategies in online settings, with a focus on six sub-constructs: goal setting, environment structuring, time management, task strategies, help seeking, and self-evaluation. Barnard et al. (2009) validated the OSLQ with 204 undergraduate students in online courses and found a high level of average internal reliability (α = 0.92). When we checked the dimensionality of SRL through a confirmatory factor analysis (CFA), a seven-factor model fitted our data (see the measurement models section for details). The reliability of the online SRL subscales ranged from 0.77 to 0.92, all of which were at an acceptable level. Table 1 presents an overview of the measures used in this study and their reliability information.
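For readers unfamiliar with the internal reliability coefficient reported here, the following is a minimal sketch of Cronbach's α using only the Python standard library. The responses are made-up toy data, not the study's data, and the authors' own computation (done in R) may differ in details such as handling of missing values.

```python
from statistics import variance


def cronbach_alpha(items):
    """Cronbach's alpha for a scale.

    `items` is a list of item columns; each column holds one response
    per respondent, and all columns have the same length.
    """
    k = len(items)
    # Total scale score for each respondent.
    totals = [sum(responses) for responses in zip(*items)]
    item_variance_sum = sum(variance(column) for column in items)
    return k / (k - 1) * (1 - item_variance_sum / variance(totals))


# Hypothetical two-item subscale answered by four respondents.
toy_items = [
    [1, 2, 3, 4],
    [2, 1, 4, 3],
]
print(round(cronbach_alpha(toy_items), 2))
```

Alpha approaches 1 when items rise and fall together across respondents, which is why identical item columns give a value of exactly 1.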

3.2.2 Perception of community of inquiry

Perceptions of the community of inquiry were measured using an instrument developed by Arbaugh et al. (2008; see Appendix 2). This instrument consists of 34 items on a 5-point Likert scale and assesses three sub-constructs: teaching presence, social presence, and cognitive presence. Yu and Richardson (2015) validated it with 995 Korean undergraduate students enrolled in a cyber university and found that reliability ranged from 0.91 to 0.96. In this study, the internal reliability of the CoI subscales was high, ranging from 0.95 to 0.97.

3.2.3 Academic background variables

Students responded to a survey that asked about their majors, gender, prior learning experience with online courses, and the amount of time they spent on online learning. The purpose of entering these variables into the research model is to control potential confounding effects caused by undergraduate students’ academic background, thereby elucidating the relationships between SRL and perceived CoI. Gender was coded as a dichotomous variable, with females being assigned a value of 0 and males a value of 1. Participants were asked to report the number of online courses they had previously taken in the context of higher education as a measure of their prior experience with online learning. Lastly, the survey assessed how many hours per week students invested in preparing for and engaging with online courses by asking about their time spent on online learning.

4 Data analysis

Data were analyzed under the framework of structural equation modeling (SEM). SEM largely consists of two foundational models: a measurement model, which investigates the relationship between latent variables and manifest indicators (i.e., items), and a structural model, which simultaneously estimates regression equations with latent variables. As a special case of SEM, a MIMIC model combines a measurement model that defines latent structures (i.e., CFA) with a structural model that regresses latent variables onto multiple predictors (i.e., path analysis). Given that the goal of this study is to investigate the predictive relationships between online SRL strategies (i.e., multiple causes) and the three presences of CoI (i.e., latent factors), MIMIC models allow us to evaluate more formally which online SRL and academic background variables affect the three latent presences of CoI.
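In general notation, the two parts of a MIMIC model described above can be sketched as a pair of linked equations. The symbols follow common SEM conventions and are assumptions for illustration rather than notation taken from the original analysis: $\mathbf{y}$ collects the CoI items, $\boldsymbol{\eta}$ the three latent presences, and $\mathbf{x}$ the predictors (online SRL strategies and academic background variables, assumed here to enter as observed scores).

```latex
\begin{aligned}
\mathbf{y} &= \boldsymbol{\Lambda}\,\boldsymbol{\eta} + \boldsymbol{\varepsilon}
  && \text{(measurement model: items load on latent presences)} \\
\boldsymbol{\eta} &= \boldsymbol{\Gamma}\,\mathbf{x} + \boldsymbol{\zeta}
  && \text{(structural model: presences regressed on predictors)}
\end{aligned}
```

Here $\boldsymbol{\Lambda}$ holds the factor loadings, $\boldsymbol{\Gamma}$ the regression coefficients of interest, and $\boldsymbol{\varepsilon}$ and $\boldsymbol{\zeta}$ the item-level and factor-level residuals.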

Adopting a two-step approach (Schumacker & Lomax, 2010), we first established measurement models of the key variables, perceptions of CoI and online SRL, through a series of CFAs. The main purpose of this step is to check the viability of building MIMIC models and the dimensionality of the latent structures. Establishing measurement models enables us to check whether the collected data fit the hypothesized factor structures. Next, we implemented a MIMIC (Jöreskog & Goldberger, 1975) modeling approach with maximum likelihood (ML) estimation by entering online SRL strategies and academic background variables as predictors into the confirmed measurement model of perceived CoI. Whereas item response theory (IRT) identifies item features (e.g., item difficulty, discrimination, or guessing parameters), the main purpose of the MIMIC model is to identify latent factors measured by indicators and to uncover the multiple predictors that cause these latent factors (Jöreskog & Goldberger, 1975). In terms of methodological benefits, compared to traditional latent variable modeling approaches, which build a measurement model and then a structural model sequentially, the MIMIC model estimates the two simultaneously. Further, it enables us to model measurement errors, thereby increasing the accuracy of estimated item reliability and factor loadings. In addition, this simultaneous estimation approach may require relatively smaller sample sizes than other SEM models.

To test the appropriateness of the measurement models and MIMIC models, we employed several goodness-of-fit indices: chi-square estimates, the comparative fit index (CFI), the Tucker-Lewis Index (TLI), Bollen’s Incremental Fit Index (IFI), the root mean square error of approximation (RMSEA), and the standardized root mean square residual (SRMR). As suggested by Hu and Bentler (1999), thresholds of 0.90 or above for CFI, TLI, and IFI, an RMSEA value of 0.08 or lower, and an SRMR value of 0.06 or lower indicate acceptable model fit. However, since these guidelines can vary depending on model complexity and sample size (McNeish & Wolf, 2023; West et al., 2023), we considered both quantitative criteria, including model fit statistics and factor loadings, and the qualitative characteristics of each item description.
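The cutoffs stated above can be applied mechanically, as in the sketch below. The thresholds are those given in the text; the function name and dictionary layout are illustrative assumptions, and the example values are the seven-factor OSLQ indices reported in the results section. Such a check is only a first pass, since the text notes that cutoffs depend on model complexity and sample size.

```python
# Cutoffs as stated in the text.
MIN_CUTOFFS = {"cfi": 0.90, "tli": 0.90, "ifi": 0.90}   # higher is better
MAX_CUTOFFS = {"rmsea": 0.08, "srmr": 0.06}             # lower is better


def check_fit(indices):
    """Return, for each index, whether it meets its stated cutoff."""
    result = {}
    for name, cutoff in MIN_CUTOFFS.items():
        result[name] = indices[name] >= cutoff
    for name, cutoff in MAX_CUTOFFS.items():
        result[name] = indices[name] <= cutoff
    return result


# Seven-factor OSLQ model, as reported in the results.
seven_factor_oslq = {
    "cfi": 0.927, "tli": 0.913, "ifi": 0.928,
    "rmsea": 0.071, "srmr": 0.052,
}
print(check_fit(seven_factor_oslq))
```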

To determine an appropriate sample size for the analysis, we employed two different approaches. First, following Hu and Bentler (1999), a minimum sample size of 250 is required when using the ML estimator with normally distributed variables. Second, based on MacCallum et al.’s (1996) power analysis-based sample size determination, we estimated the minimum required sample size with an alpha level of 0.05, a desired power of 0.80, and a null RMSEA of 0.08. The calculation showed that the required sample size for the test of close fit was 216. Together, both approaches indicate that the sample size in the current study can be considered acceptable.

Lastly, to assess potential common method bias (CMB) arising from a common response method, we conducted Harman’s single factor test (Korsgaard & Roberson, 1995; Podsakoff et al., 2003). If the total variance extracted by a single factor is above 50%, covariance structures may be inflated or deflated by CMB (Fuller et al., 2016). All analyses were conducted using R 4.3.2 with the psych (Revelle, 2022), lavaan (Rosseel, 2012), and performance packages (Lüdecke et al., 2021).
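The 50% rule in Harman's test can be illustrated with a small sketch. It approximates the variance extracted by a single factor as the largest eigenvalue of the item correlation matrix divided by the number of items, which is a common principal-component shortcut and an assumption here; the authors' R procedure may extract the factor differently. The correlation matrix is toy data, and all names are hypothetical.

```python
def largest_eigenvalue(matrix, iterations=500):
    """Dominant eigenvalue of a symmetric matrix via power iteration.

    Starts from a vector of ones, which suits correlation matrices whose
    items are mostly positively correlated; it is not a general solver.
    """
    n = len(matrix)
    vec = [1.0] * n
    for _ in range(iterations):
        nxt = [sum(matrix[i][j] * vec[j] for j in range(n)) for i in range(n)]
        norm = sum(v * v for v in nxt) ** 0.5
        vec = [v / norm for v in nxt]
    # Rayleigh quotient of the converged unit vector.
    mv = [sum(matrix[i][j] * vec[j] for j in range(n)) for i in range(n)]
    return sum(a * b for a, b in zip(vec, mv))


def first_factor_share(corr):
    """Share of total variance captured by the first component."""
    return largest_eigenvalue(corr) / len(corr)


# Toy correlation matrix: three items, all pairwise correlations 0.5.
toy_corr = [
    [1.0, 0.5, 0.5],
    [0.5, 1.0, 0.5],
    [0.5, 0.5, 1.0],
]
share = first_factor_share(toy_corr)
print(round(share, 3), share > 0.50)  # share and whether it exceeds 50%
```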

5 Results

5.1 Descriptive statistics

Descriptive statistics and correlations of the variables are presented in Table 2. For online SRL, the sub-construct of environment structuring had the highest mean, whereas task strategies and self-evaluation through peers showed relatively lower means. For the perceived sense of CoI, social presence showed a lower mean than teaching and cognitive presences. The correlations between online SRL and perceived CoI were moderate to relatively high (0.413 < r < 0.798), which implies that these two key variables and their sub-constructs were closely intertwined. The skewness and kurtosis of the variables were less than 3 and 10, respectively, suggesting that the variables did not severely violate normality assumptions.
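The normality screen mentioned above can be sketched as follows. The code uses moment-based skewness and excess kurtosis, which is one common definition and an assumption here; statistical packages differ in the exact estimator and in whether kurtosis is reported as raw or excess. The data are toy values.

```python
def skew_kurtosis(values):
    """Moment-based skewness and excess kurtosis of a sample."""
    n = len(values)
    mean = sum(values) / n
    m2 = sum((v - mean) ** 2 for v in values) / n
    m3 = sum((v - mean) ** 3 for v in values) / n
    m4 = sum((v - mean) ** 4 for v in values) / n
    return m3 / m2 ** 1.5, m4 / m2 ** 2 - 3


def within_bounds(skew, kurt, max_skew=3, max_kurt=10):
    """Apply the |skewness| < 3 and |kurtosis| < 10 screen from the text."""
    return abs(skew) < max_skew and abs(kurt) < max_kurt


# Toy 5-point Likert responses.
toy_scores = [1, 2, 3, 4, 5]
skew, kurt = skew_kurtosis(toy_scores)
print(round(skew, 3), round(kurt, 3), within_bounds(skew, kurt))
```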

5.2 Measurement models

As a first step for the MIMIC model and to assess the construct validity of online SRL and CoI, we conducted a series of CFAs. The results of the CFA using the original model of CoI were unsatisfactory, χ2 = 1819.792, df = 524, p < 0.001, CFI = 0.889, TLI = 0.882, IFI = 0.890, RMSEA = 0.093, and SRMR = 0.051. First, we checked the item descriptions and noticed that five items were not aligned with course design considerations, such as “issue-based discussion (teaching presence item 11)”, “individualized feedback from instructors (teaching presence item 12)”, “improvement of sense of belonging (teaching presence item 10 and social presence item 1)”, and “knowledge transfer to non-class related activities (cognitive presence item 12)”. Further, guided by Hancock and Mueller (2013), we investigated residual correlations across the 34 items to identify misfitting items. Residual correlations indicate the degree of unexplained variance between items; thus, if some items hold excessive residual correlations, this unexplained variance lowers the quality of the measurement model. Indeed, these five items showed substantially higher residual correlations than the other CoI items. After deleting the five items, the revised model fitted the data well, χ2 = 1199.861, df = 374, p < 0.001, CFI = 0.915, TLI = 0.907, IFI = 0.915, RMSEA = 0.088, and SRMR = 0.049.
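To make the reported fit indices easier to interpret, the following Python sketch shows how an RMSEA point estimate is commonly derived from the model chi-square, its degrees of freedom, and the sample size. It uses the textbook formula, sqrt(max(χ2 − df, 0) / (df × (N − 1))), and takes N = 278 from the sample description. The function name and the `use_n_minus_1` switch are illustrative assumptions. Because software may use N rather than N − 1, or apply estimator-specific corrections, the sketch need not reproduce the reported values exactly.

```python
import math


def rmsea(chi_square, df, n, use_n_minus_1=True):
    """Textbook RMSEA point estimate from chi-square, degrees of freedom, and sample size.

    Assumption: single-group model; some programs divide by N instead of N - 1.
    """
    if df <= 0 or n <= 1:
        raise ValueError("df must be positive and n must exceed 1")
    scale = (n - 1) if use_n_minus_1 else n
    return math.sqrt(max(chi_square - df, 0.0) / (df * scale))


# Chi-square values and degrees of freedom as reported for the CoI measurement models
original_model = rmsea(1819.792, 524, 278)
revised_model = rmsea(1199.861, 374, 278)
print(f"original: {original_model:.3f}, revised: {revised_model:.3f}")
```

The formula shows why dropping poorly fitting items can lower RMSEA: the excess of chi-square over its degrees of freedom shrinks relative to model size.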

To check the dimensionality of SRL, we fitted a CFA model using the original OSLQ scales (i.e., a six-factor model), which yielded an acceptable model fit: χ2 = 716.428, df = 237, p < 0.001, CFI = 0.895, TLI = 0.877, IFI = 0.895, RMSEA = 0.084, and SRMR = 0.061. However, after reviewing each item statement and the factor loadings, we detected a significant difference in factor loadings between the first two items (i.e., items 21 and 22) and the last two items (i.e., items 23 and 24) of the self-evaluation subscale. We therefore decided to split self-evaluation into two sub-constructs and named them self-evaluation through strategies and self-evaluation through peers. Self-evaluation through strategies can be operationalized as a process whereby students make judgements about their own online learning processes or the quality of their learning by means of external instructional materials, artifacts, or strategies. On the other hand, self-evaluation through peers can be described as a process during which students reflect on or evaluate their learning by referring to their peers' learning progress. This result supported the seven-factor model of the OSLQ suggested by Vanslambrouck et al. (2019). In our study, the seven-factor model fitted to the current data yielded more acceptable model fit indices, χ2 = 562.628, df = 231, p < 0.001, CFI = 0.927, TLI = 0.913, IFI = 0.928, RMSEA = 0.071, and SRMR = 0.052.

Lastly, given that both online SRL and CoI were collected from the same data source (i.e., a self-reported survey), it was necessary to test whether systematic error variance caused by CMB potentially distorted the relationships between variables. The total variance extracted by a single factor was 48.7%, which is lower than the cutoff of 50.0% (Fuller et al., 2016). This suggests that CMB is unlikely to have substantially distorted the relationships in the current dataset.

5.3 Main analysis

To build the MIMIC model, academic background variables (gender, prior learning experiences, and time spent in the course) and the seven sub-constructs of SRL were added to the measurement model of CoI as predictors of each latent variable. For SRL, we entered the mean scores of the sub-constructs. The overall model fit remained acceptable, χ2 = 1585.629, df = 634, p < 0.001, CFI = 0.905, TLI = 0.896, IFI = 0.906, RMSEA = 0.072, and SRMR = 0.042. None of the academic background variables significantly predicted any of the three sub-constructs of CoI, suggesting that these variables did not affect the levels of CoI. On the other hand, as Fig. 3 illustrates, some sub-constructs of online SRL were positively associated with sub-factors of CoI. Specifically, goal setting was significantly related to social presence (b = 0.310, p < 0.001). Environment structuring was significantly related to teaching presence (b = 0.262, p < 0.001) and cognitive presence (b = 0.247, p = 0.001). Help-seeking was significantly associated with teaching presence (b = 0.204, p = 0.001). Time management was associated with cognitive presence (b = 0.152, p = 0.016). Lastly, self-evaluation through peers was significantly associated with social presence (b = 0.245, p < 0.001) and cognitive presence (b = 0.140, p = 0.012). We provide detailed results of the regression and measurement sections of the MIMIC model in Appendix 3.

6 Discussion

6.1 Self-regulated learning and community of inquiry

We aimed to investigate the extent to which SRL influences STEM-major undergraduates' perceived CoI in online learning environments. Using a MIMIC model, we examined the learning dynamics between SRL variables and the three types of presence in the CoI framework. The results demonstrate that SRL variables significantly predicted CoI presences in general, which confirms the relationships between SRL and CoI presences identified in previous studies (Cho et al., 2017; Wertz, 2022). More specifically, we found that different aspects of online SRL significantly predicted the three outcome variables (cognitive, teaching, and social presence).

First, in terms of cognitive presence, three SRL variables (environment structuring, time management, and self-evaluation through peers) were found to be significant predictors. Cognitive presence refers to “the extent to which students are able to construct and confirm meaning through sustained reflection” (Garrison et al., 2001, p. 5). It has four phases: triggering events, exploration, integration, and resolution. It is speculated that environmental structuring and time management may be effective in helping students complete these phases. Environmental structuring involves choosing a place and time with minimal distractions for studying, which also applies to online learning contexts. Online learners must also manage their time effectively in autonomous online learning settings. Additionally, the study found a significant relationship between self-evaluation through peers and cognitive presence, which is supported by the work of Williams-Dobosz et al. (2021), who found that students build cognitive presence by responding to others' questions and providing explanations and resources to their peers in online courses.

Second, with regard to teaching presence, both environment structuring and help-seeking were significant predictors. Help-seeking typically entails locating people who are knowledgeable about course content, such as peers, instructors, or teaching assistants. In the online course examined in this study, students and teaching assistants (TAs) met online once a week using a web-conferencing tool, and students occasionally asked TAs for assistance during these sessions. In the TA sessions, TAs answered questions and gave guidance on assignments, which likely increased the students’ perception of the TAs’ presence.

In fact, web-conferencing tools have been shown to be effective in increasing teaching presence. For instance, Stover and Miura (2015) found that students in an online course that utilized a web-conferencing tool had significantly higher levels of perceived teaching presence than those in an online course that did not use such a tool. The use of web-conferencing tools is closely related to environmental structuring in the present study, because students had to find a quiet place or tidy their room in order to meet their TAs through a web-conferencing tool.

Third, this study found that goal setting and self-evaluation through peers significantly predicted social presence. One interpretation is that both goal setting and self-evaluation through peers indicate high engagement with, and reflective thinking about, one's own behaviors. The course included an assignment that required students to apply calculus concepts to primary learning tasks in STEM education. We speculate that a challenging assignment that demands students' problem-solving skills might help them set specific goals and monitor their learning process better. Additionally, the fact that a substantial portion of students (59.9%) completed group assignments suggests that they were likely to gain a better understanding of how their behaviors affect others.

6.2 Implications

6.2.1 Academic implications

The current findings contribute to a better understanding of learning presence constructs, particularly in STEM contexts. Within Pintrich et al.’s (1993) framework of SRL, the constructs identified in the present study can be classified as behavioral (environment structuring, time management, help-seeking, and self-evaluation through peers) and cognitive/metacognitive (goal setting). While a majority of previous studies found motivational and behavioral constructs of learning presence (e.g., Shea & Bidjerano, 2010; Wertz, 2022), the present study identified a cognitive/metacognitive construct as a new component of learning presence. Therefore, the findings of the current study not only corroborate existing constructs of learning presence but also provide new insight into cognitive/metacognitive constructs of learning presence in STEM fields.

A methodological implication is the use of the MIMIC model as a way to elucidate the effects of SRL sub-constructs on the perceived CoI. Compared to traditional approaches, which are applied to observed variables (e.g., regression and ANOVA) or investigate the relationships between selected SRL sub-constructs and the perceived CoI, the MIMIC model allows for the simultaneous evaluation of the three latent variables of CoI presence (cognitive, social, and teaching presences) in relation to the seven SRL sub-constructs. It enables us to assess how each SRL sub-construct uniquely contributes to explaining the variance of the three presences, controlling for all other covariates in the model. Further, our findings from the MIMIC model also provide a more precise understanding of how the three presences under the CoI framework are regressed on SRL sub-constructs, given that the measurement errors of each indicator are considered in the process of model specification (Jöreskog & Goldberger, 1975).

6.2.2 Practical implications

The present study found goal setting, environment structuring, time management, help-seeking, and self-evaluation through peers as constructs of learning presence. This implies that instructors or practitioners should assist students in employing these skills when designing and teaching online courses. First, instructors or instructional designers should integrate online discussion forums into their courses to promote students’ help-seeking behaviors. Several studies have shown that online discussion forums effectively assisted students in seeking help for academic problem solving in online settings (e.g., Balaji & Chakrabarti, 2010; Bull et al., 2001). In addition, instructors could provide instructional prompts such as ‘You are not expected to solve this task all alone!’ in online discussion forums (Schworm & Gruber, 2012) to improve students’ help-seeking. Second, instructors or instructional designers could design activities where online learners can write down the distractions they encounter while taking online courses and discuss how to remove them by sharing (García et al., 2015). Third, in order to support online students’ time management, instructors could share effective time management strategies found in empirical studies (e.g., Miertschin et al., 2015) with their students. Fourth, in terms of goal setting, instructors could encourage students to set their own learning goals and monitor their progress to achieve the goals in online discussion forums. Finally, instructors could design and provide group work where students can regulate each other’s self-evaluation process.

6.3 Limitations and future research

Although this study provides empirical evidence on how to connect SRL to the CoI framework, several limitations should be addressed. The first limitation is that the cross-sectional research design used in the current study may fail to capture situated features of SRL (Greene, 2018). The use of SRL strategies can change dynamically depending on the tasks students face within the same course (Severiens et al., 2001). In this regard, future studies should consider adopting longitudinal designs that allow for tracing how students adopt SRL strategies over time.

Second, students’ SRL was measured only by a self-reported questionnaire, which potentially causes CMB issues. Although self-report questionnaires have been a prevalent practice in SRL research, their resultant measures could be biased because they rely heavily on students' retrospective memories (Rovers et al., 2019). As such, there is a need for research that collects multiple data sources, such as trace logs, recorded video clips, and verbal transcripts, to evaluate the quality and quantity of students’ SRL strategies in an objective way (Azevedo & Gašević, 2019). Such multimodal data would enable us to advance our understanding of how undergraduate students regulate their learning in online STEM courses (Molenaar et al., 2023), and to minimize systematic error variance derived from a single data collection method when modeling dynamic learning progress (Garger et al., 2019).

Third, given that the sample of the current study included similar majors, specifically STEM fields, the composition of our sample is relatively homogeneous. Although these sample features can advance our understanding of how STEM-major students engage with online STEM courses through the lenses of SRL and the CoI framework, the extent to which our findings can be generalized to students in other majors remains limited. Regarding the perceived CoI according to students’ majors, Lim and Richardson (2021) reported that undergraduate students’ academic domains do not produce significant differences in the degrees of teaching, social, and cognitive presences. To improve the generalizability of our findings, future research should consider potentially important academic background variables and incorporate heterogeneous samples in the research design phase.

Additionally, future studies could examine the perceived quality of online learning in relation to the use of SRL and perceived CoI. When students engage in online learning settings, they assess various aspects of quality including educational quality, information quality, and technical system quality (Yang et al., 2023). Understanding these interconnections could provide new insights into optimizing online learning experiences for students.

Lastly, the present study did not investigate the relationships among SRL, perceived CoI, and academic achievement. Previous research syntheses have broadly concluded that online course achievement is considerably associated with SRL (Lee et al., 2019; Xu et al., 2023) and the three presences of the CoI framework (Martin et al., 2022). Considering the intertwined relationships among these three variables, future research is needed to collect academic achievement data (e.g., course grades) and further investigate the dynamic interactions between the use of SRL strategies, perceived CoI, and academic achievement.

7 Conclusion

This study contributes to laying the foundation for understanding how SRL plays a pivotal role in an online STEM course, through the lens of the CoI framework. In recent years, SRL has gained attention among online learning researchers and has been gradually incorporated into the CoI framework as a learning presence (Shea & Bidjerano, 2012). Nevertheless, it remains unclear how undergraduate students utilize SRL strategies in self-paced online courses and how these strategies ultimately contribute to increased teaching, social, and cognitive presence. The current study addresses this gap and adds empirical evidence to the literature on the CoI framework and SRL. The results of the MIMIC analyses offer evidence that undergraduate students’ SRL strategy usage patterns did not uniformly predict the three types of presence in the CoI framework. In particular, environmental structuring and help-seeking affected teaching presence. Goal setting and self-evaluation through peers predicted social presence, whereas environmental structuring, time management, and self-evaluation through peers predicted cognitive presence. Such varying predictive patterns indicate that the relationships between SRL strategies and the three elements of the CoI framework are complex. These findings suggest that researchers should move away from the current simplistic perspectives in which SRL itself or selected SRL variables are viewed as a learning presence, and toward a more nuanced and comprehensive view of SRL. We believe that a sophisticated understanding of how to situate SRL within the CoI framework enables researchers and practitioners to adjust and refine the design of online learning experiences, thereby improving learning for students.

Data availability

The datasets generated and/or analyzed during the current study are not publicly available due to participant privacy but are available from the corresponding author on reasonable request.

References

Akyol, Z., & Garrison, D. R. (2011). Understanding cognitive presence in an online and blended community of inquiry: Assessing outcomes and processes for deep approaches to learning. British Journal of Educational Technology, 42(2), 233–250. https://doi.org/10.1111/j.1467-8535.2009.01029.x

Amoozegar, A., Daud, S. M., Mahmud, R., & Jalil, H. A. (2017). Exploring learner to institutional factors and learner characteristics as a success factor in distance learning. International Journal of Innovation and Research in Educational Sciences, 4(6), 647–656. Retrieved from https://www.ijires.org/index.php/issues?view=publication&task=show&id=331

Anderson, T., Rourke, L., Garrison, D. R., & Archer, W. (2001). Assessing teaching presence in a computer conferencing context. Journal of Asynchronous Learning Networks, 5(2), 1–17. http://hdl.handle.net/2149/725

Arbaugh, J. B., Cleveland-Innes, M., Diaz, S. R., Garrison, D. R., Ice, P., Richardson, J. C., & Swan, K. P. (2008). Developing a community of inquiry instrument: Testing a measure of the community of inquiry framework using a multi-institutional sample. The Internet and Higher Education, 11(3–4), 133–136. https://doi.org/10.1016/j.iheduc.2008.06.003

Artino, A. R. (2007). Online military training: Using a social cognitive view of motivation and self-regulation to understand students’ satisfaction, perceived learning, and choice. Quarterly Review of Distance Education, 8(3), 191–202. Retrieved from http://www.infoagepub.com/index.php?id=89&i=5

Azevedo, R., & Gašević, D. (2019). Analyzing multimodal multichannel data about self-regulated learning with advanced learning technologies: Issues and challenges. Computers in Human Behavior, 96, 207–210. https://doi.org/10.1016/j.chb.2019.03.025

Balaji, M. S., & Chakrabarti, D. (2010). Student interactions in online discussion forum: Empirical research from “Media Richness Theory” perspective. Journal of Interactive Online Learning, 9(1), 1–22. Retrieved from http://www.ncolr.org/jiol/issues/pdf/9.1.1.pdf

Barak, M. (2012). From ‘doing’ to ‘doing with learning’: Reflection on an effort to promote self-regulated learning in technological projects in high school. European Journal of Engineering Education, 37(1), 105–116. https://doi.org/10.1080/03043797.2012.658759

Barnard, L., Lan, W., To, Y., Paton, V., & Lai, S. (2009). Measuring self-regulation in online and blended learning environments. Internet and Higher Education, 12(1), 1–6. https://doi.org/10.1016/j.iheduc.2008.10.005

Barnard-Brak, L., Paton, V. O., & Lan, W. Y. (2010). Profiles in self-regulated learning in the online learning environment. International Review of Research in Open and Distributed Learning, 11(1), 61–80. https://doi.org/10.19173/irrodl.v11i1.769

Broadbent, J., & Poon, W. L. (2015). Self-regulated learning strategies & academic achievement in online higher education learning environments: A systematic review. The Internet and Higher Education, 27, 1–13. https://doi.org/10.1016/j.iheduc.2015.04.007

Bull, S., Greer, J., McCalla, G., & Kettel, L. (2001, May). Help-seeking in an asynchronous help forum. In Proceedings of workshop on help provision and help-seeking in interactive learning environments, international conference on artificial intelligence in education. San Antonio, TX, USA.

Cho, M. H., & Shen, D. (2013). Self-regulation in online learning. Distance Education, 34(3), 290–301. https://doi.org/10.1080/01587919.2013.835770

Cho, M. H., Kim, Y., & Choi, D. (2017). The effect of self-regulated learning on college students’ perceptions of community of inquiry and affective outcomes in online learning. The Internet and Higher Education, 34, 10–17. https://doi.org/10.1016/j.iheduc.2017.04.001

Cleary, T. J., Callan, G. L., & Zimmerman, B. J. (2012). Assessing self-regulation as a cyclical, context-specific phenomenon: Overview and analysis of SRL microanalytic protocols. Educational Research International, 2012, 1–19. https://doi.org/10.1155/2012/428639

Dabbagh, N., & Bannan-Ritland, B. (2005). Online learning: Concepts, strategies and application. Pearson Prentice Hall.

Dewey, J. (1938). Experience and education. Collier Books.

Dumford, A. D., & Miller, A. L. (2018). Online learning in higher education: Exploring advantages and disadvantages for engagement. Journal of Computing in Higher Education, 30, 452–465. https://doi.org/10.1007/s12528-018-9179-z

Enders, C. K. (2022). Applied missing data analysis. Guilford Publications.

Fiock, H. (2020). Designing a community of inquiry in online courses. The International Review of Research in Open and Distributed Learning, 21(1), 135–153. https://doi.org/10.19173/irrodl.v20i5.3985

Fuller, C. M., Simmering, M. J., Atinc, G., Atinc, Y., & Babin, B. J. (2016). Common methods variance detection in business research. Journal of Business Research, 69(8), 3192–3198. https://doi.org/10.1016/j.jbusres.2015.12.008

Fung, J. J., Yuen, M., & Yuen, A. H. (2018). Validity evidence for a Chinese version of the online self-regulated learning questionnaire with average students and mathematically talented students. Measurement and Evaluation in Counseling and Development, 51(2), 111–124. https://doi.org/10.1080/07481756.2017.1358056

García, B. J., Tenorio, G. C., & Ramírez, M. S. (2015). Self-motivation challenges for student involvement in the Open Educational Movement with MOOC. RUSC. Universities and Knowledge Society Journal, 12(1), 91–103. https://doi.org/10.7238/rusc.v12i1.2185

García-Pérez, D., Fraile, J., & Panadero, E. (2021). Learning strategies and self-regulation in context: How higher education students approach different courses, assessments, and challenges. European Journal of Psychology of Education, 36(2), 533–550. https://doi.org/10.1007/s10212-020-00488-z

Garger, J., Jacques, P. H., Gastle, B. W., & Connolly, C. M. (2019). Threats of common method variance in student assessment of instruction instruments. Higher Education Evaluation and Development, 13(1), 2–17. https://doi.org/10.1108/HEED-05-2018-0012

Garrison, D. R., Anderson, T., & Archer, W. (1999). Critical inquiry in a text-based environment: Computer conferencing in higher education. The Internet and Higher Education, 2(2), 87–105. https://doi.org/10.1016/S1096-7516(00)00016-6

Garrison, D. R., Anderson, T., & Archer, W. (2001). Critical thinking, cognitive presence, and computer conferencing in distance education. American Journal of Distance Education, 15(1), 7–23. https://doi.org/10.1080/08923640109527071

Garrison, D. R. (2009). Communities of inquiry in online learning. In P. L. Rogers, G. A. Berg, J. V. Boettcher, C. Howard, L. Justice, & K. D. Schenk (Eds.), Encyclopedia of distance learning (2nd ed., pp. 352–355). IGI Global. https://doi.org/10.4018/978-1-60566-198-8.ch052

Giannousi, M., & Kioumourtzoglou, E. (2016). Cognitive, social, and teaching presence as predictors of students’ satisfaction in distance learning. Mediterranean Journal of Social Sciences, 7(2 SI), 439–439. https://doi.org/10.5901/mjss.2016.v7n2s1p439

Greene, J. A. (2018). Self-regulation in education. Routledge.

Hancock, G. R., & Mueller, R. O. (2013). Structural equation modeling: A second course. Information Age Publishing, Inc.

Hawkins, A., Graham, C. R., Sudweeks, R. R., & Barbour, M. K. (2013). Academic performance, course completion rates, and student perception of the quality and frequency of interaction in a virtual high school. Distance Education, 34(1), 64–83. https://doi.org/10.1080/01587919.2013.770430

Hostetter, C., & Busch, M. (2012). Measuring up online: The relationship between social presence and student learning satisfaction. Journal of the Scholarship of Teaching and Learning, 6(2), 1–12. Retrieved from https://scholarworks.iu.edu/journals/index.php/josotl/article/view/1670

Hu, L. T., & Bentler, P. M. (1999). Cutoff criteria for fit indexes in covariance structure analysis: Conventional criteria versus new alternatives. Structural Equation Modeling: A Multidisciplinary Journal, 6(1), 1–55. https://doi.org/10.1080/10705519909540118

Joo, Y. J., Lim, K. Y., & Kim, E. K. (2011). Online university students’ satisfaction and persistence: Examining perceived level of presence, usefulness and ease of use as predictors in a structural model. Computers & Education, 57(2), 1654–1664. https://doi.org/10.1016/j.compedu.2011.02.008

Joo, Y. J., Lim, K. Y., & Kim, J. (2013). Locus of control, self-efficacy, and task value as predictors of learning outcome in an online university context. Computers & Education, 62, 149–158. https://doi.org/10.1016/j.compedu.2012.10.027

Jöreskog, K. G., & Goldberger, A. S. (1975). Estimation of a model with multiple indicators and multiple causes of a single latent variable. Journal of the American Statistical Association, 70(351a), 631–639. https://doi.org/10.1080/01621459.1975.10482485

Kilis, S., & Yildirim, Z. (2018). Online self-regulation questionnaire: Validity and reliability study of Turkish translation. Cukurova University Faculty of Education Journal, 47(1), 233–245. https://doi.org/10.14812/cuefd.298791

Kim, D., Jung, E., Yoon, M., Chang, Y., Park, S., Kim, D., & Demir, F. (2021). Exploring the structural relationships between course design factors, learner commitment, self-directed learning, and intentions for further learning in a self-paced MOOC. Computers & Education, 166, 104171. https://doi.org/10.1016/j.compedu.2021.104171

Korsgaard, M. A., & Roberson, L. (1995). Procedural justice in performance evaluation: The role of instrumental and non-instrumental voice in performance appraisal discussions. Journal of Management, 21(4), 657–669. https://doi.org/10.1177/014920639502100404

Kozan, K., & Caskurlu, S. (2018). On the Nth presence for the Community of Inquiry framework. Computers & Education, 122, 104–118. https://doi.org/10.1016/j.compedu.2018.03.010

Lee, D., Lee, S. L., & Watson, W. R. (2019). Systematic literature review on self-regulated learning in massive open online courses. Australasian Journal of Educational Technology, 35(1), 28–41. https://doi.org/10.14742/ajet.3749

Lehmann, T., Hähnlein, I., & Ifenthaler, D. (2014). Cognitive, metacognitive and motivational perspectives on preflection in self-regulated online learning. Computers in Human Behavior, 32, 313–323. https://doi.org/10.1016/j.chb.2013.07.051

Li, S., Du, H., Xing, W., Zheng, J., Chen, G., & Xie, C. (2020). Examining temporal dynamics of self-regulated learning behaviors in STEM learning: A network approach. Computers & Education, 158, 103987. https://doi.org/10.1016/j.compedu.2020.103987

Li, S., Zheng, J., Huang, X., & Xie, C. (2022). Self-regulated learning as a complex dynamical system: Examining students’ STEM learning in a simulation environment. Learning and Individual Differences, 95, 102144. https://doi.org/10.1016/j.lindif.2022.102144

Lim, J., & Richardson, J. C. (2021). Predictive effects of undergraduate students’ perceptions of social, cognitive, and teaching presence on affective learning outcomes according to disciplines. Computers & Education, 161, 104063. https://doi.org/10.1016/j.compedu.2020.104063

Lüdecke, D., Ben-Shachar, M. S., Patil, I., Waggoner, P., & Makowski, D. (2021). performance: An R package for assessment, comparison and testing of statistical models. Journal of Open Source Software, 6(60). https://doi.org/10.21105/joss.03139

MacCallum, R. C., Browne, M. W., & Sugawara, H. M. (1996). Power analysis and determination of sample size for covariance structure modeling. Psychological Methods, 1(2), 130–149. https://doi.org/10.1037/1082-989X.1.2.130

Martin, F., Wu, T., Wan, L., & Xie, K. (2022). A meta-analysis on the community of inquiry presences and learning outcomes in online and blended learning environments. Online Learning, 26(1), 325–359. https://files.eric.ed.gov/fulltext/EJ1340511.pdf

McNeish, D., & Wolf, M. G. (2023). Dynamic fit index cutoffs for confirmatory factor analysis models. Psychological Methods, 28(1), 61–88. https://doi.org/10.1037/met0000425

Miertschin, S. L., Goodson, C. E., & Stewart, B. L. (2015, June). Time management skills and student performance in online courses. In 2015 ASEE Annual Conference & Exposition (pp. 1585.1–1585.16). Retrieved from https://peer.asee.org/24921

Molenaar, I., de Mooij, S., Azevedo, R., Bannert, M., Järvelä, S., & Gašević, D. (2023). Measuring self-regulated learning and the role of AI: Five years of research using multimodal multichannel data. Computers in Human Behavior, 139, 107540. https://doi.org/10.1016/j.chb.2022.107540

Moore, W. S. (1990). The learning environment preferences: An instrument manual. Center for the Study of Intellectual Development.

Pintrich, P. R., & De Groot, E. V. (1990). Motivational and self-regulated learning components of classroom academic performance. Journal of Educational Psychology, 82(1), 33–40. https://doi.org/10.1037/0022-0663.82.1.33

Pintrich, P. R., Smith, D. A., Garcia, T., & McKeachie, W. J. (1993). A manual for the use of the motivated strategies for learning questionnaire. Ann Arbor University of Michigan. National Center for Research to improve postsecondary teaching and learning.

Podsakoff, P. M., MacKenzie, S. B., Lee, J. Y., & Podsakoff, N. P. (2003). Common method biases in behavioral research: A critical review of the literature and recommended remedies. Journal of Applied Psychology, 88(5), 879–903. https://doi.org/10.1037/0021-9010.88.5.879

Revelle, W. (2022). psych: Procedures for Psychological, Psychometric, and Personality Research. Northwestern University, Evanston, Illinois. R package version 2.2.9, https://CRAN.R-project.org/package=psych

Richardson, J. C., Maeda, Y., Lv, J., & Caskurlu, S. (2017). Social presence in relation to students’ satisfaction and learning in the online environment: A meta-analysis. Computers in Human Behavior, 71, 402–417. https://doi.org/10.1016/j.chb.2017.02.001

Rosseel, Y. (2012). lavaan: An R Package for structural equation modeling. Journal of Statistical Software, 48(2), 1–36. https://doi.org/10.18637/jss.v048.i02

Rovers, S. F., Clarebout, G., Savelberg, H. H., de Bruin, A. B., & van Merriënboer, J. J. (2019). Granularity matters: Comparing different ways of measuring self-regulated learning. Metacognition and Learning, 14, 1–19. https://doi.org/10.1007/s11409-019-09188-6

Saez, F., Mella, J., Loyer, S., Zambrano, C., & Zanartu, N. (2020). Self-regulated learning in engineering students: A systematic review. Revista Espacios, 41(2), 1–7. Retrieved from http://w.revistaespacios.com/a20v41n02/20410207.html

Schumacker, R. E., & Lomax, R. G. (2010). A beginner’s guide to structural equation modeling (3rd ed.). Routledge.

Schworm, S., & Gruber, H. (2012). e-Learning in universities: Supporting help-seeking processes by instructional prompts. British Journal of Educational Technology, 43(2), 272–281.

Severiens, S., Ten Dam, G., & Van Hout Wolters, B. (2001). Stability of processing and regulation strategies: Two longitudinal studies on student learning. Higher Education, 42(4), 437–453. Retrieved from https://www.jstor.org/stable/3448099

Shea, P., & Bidjerano, T. (2008). Measures of quality in online education: An investigation of the community of inquiry model and the net generation. Journal of Educational Computing Research, 39(4), 339–361. https://doi.org/10.2190/EC.39.4.b

Shea, P., & Bidjerano, T. (2010). Learning presence: Towards a theory of self-efficacy, self-regulation, and the development of communities of inquiry in online and blended learning environments. Computers & Education, 55(4), 1721–1731. https://doi.org/10.1016/j.compedu.2010.07.017

Shea, P., & Bidjerano, T. (2012). Learning presence as a moderator in the community of inquiry model. Computers & Education, 59(2), 316–326. https://doi.org/10.1016/j.compedu.2012.01.011

Stover, S., & Miura, Y. (2015). The effects of web conferencing on the community of inquiry in online classes. Journal on Excellence in College Teaching, 26(3), 121–143. http://celt.muohio.edu/ject/issue.php?v=26&n=3

Swan, K., Shea, P., Richardson, J., Ice, P., Garrison, D. R., Cleveland-Innes, M., et al. (2008). Validating a measurement tool of presence in online communities of inquiry. E-Mentor, 2(24), 1–12. https://www.e-mentor.edu.pl/_xml/wydania/24/543.pdf

van Zundert, M., Sluijsmans, D., & Van Merriënboer, J. (2010). Effective peer assessment processes: Research findings and future directions. Learning and Instruction, 20(4), 270–279. https://doi.org/10.1016/j.learninstruc.2009.08.004

Vanslambrouck, S., Zhu, C., Pynoo, B., Lombaerts, K., Tondeur, J., & Scherer, R. (2019). A latent profile analysis of adult students’ online self-regulation in blended learning environments. Computers in Human Behavior, 99, 126–136. https://doi.org/10.1016/j.chb.2019.05.021

Vygotsky, L. (1978). Mind in society. Harvard University Press.

Wang, Q., Peng, Y., & Wang, H. (2021). A curation activity-based self-regulated learning promotion approach as scaffolding to improving learners’ performance in STEM courses. Journal of Educational Computing Research, 07356331211056532. https://doi.org/10.1177/07356331211056532

Wertz, R. E. (2022). Learning presence within the Community of Inquiry framework: An alternative measurement survey for a four-factor model. The Internet and Higher Education, 52, 100832. https://doi.org/10.1016/j.iheduc.2021.100832

West, S. G., Wu, W., McNeish, D., & Savord, A. (2023). Model fit in structural equation modeling. In R. H. Hoyle (Ed.), Handbook of structural equation modeling (2nd ed., pp. 185–205). Guilford.

Williams-Dobosz, D., Jeng, A., Azevedo, R. F., Bosch, N., Ray, C., & Perry, M. (2021). Ask for help: Online help-seeking and help-giving as indicators of cognitive and social presence for students underrepresented in chemistry. Journal of Chemical Education, 98(12), 3693–3703. https://doi.org/10.1021/acs.jchemed.1c00839

Xu, Z., Zhao, Y., Liew, J., Zhou, X., & Kogut, A. (2023). Synthesizing research evidence on self-regulated learning and academic achievement in online and blended learning environments: A scoping review. Educational Research Review, 100510. https://doi.org/10.1016/j.edurev.2023.100510

Yang, R., Wibowo, S., Mubarak, S., & Rahamathulla, M. (2023). Managing students' attitude, learning engagement, and stickiness towards e-learning post-COVID-19 in Australian universities: A perceived qualities perspective. Journal of Marketing for Higher Education, 1–32. https://doi.org/10.1080/08841241.2023.2204466

Yu, T., & Richardson, J. C. (2015). Examining reliability and validity of a Korean version of the Community of Inquiry instrument using exploratory and confirmatory factor analysis. The Internet and Higher Education, 25, 45–52. https://doi.org/10.1016/j.iheduc.2014.12.004

Zhang, H., Lin, L., Zhan, Y., & Ren, Y. (2016). The impact of teaching presence on online engagement behaviors. Journal of Educational Computing Research, 54(7), 887–900. https://doi.org/10.1177/0735633116648171

Zheng, J., Xing, W., Zhu, G., Chen, G., Zhao, H., & Xie, C. (2020). Profiling self-regulation behaviors in STEM learning of engineering design. Computers & Education, 143, 103669. https://doi.org/10.1016/j.compedu.2019.103669

Zimmerman, B. J. (1989). A social cognitive view of self-regulated academic learning. Journal of Educational Psychology, 81(3), 329–339. https://doi.org/10.1037/0022-0663.81.3.329

Zimmerman, B. J. (1990). Self-regulated learning and academic achievement: An overview. Educational Psychologist, 25(1), 3–17. https://doi.org/10.1207/s15326985ep2501_2

Funding

This research received no specific grant from any funding agency in the public, commercial, or not-for-profit sectors.

Ethics declarations

Ethical approval

The questionnaire and methodology for this study were approved by the Human Research Ethics Committee of Utah State University.

Conflict of interest

None.

Appendices

Appendix 1 Online self-regulated learning questionnaire (OSLQ) (Barnard et al., 2009)

Goal setting

Item 1: I set standards for my assignments in online courses.

Item 2: I set short-term (daily or weekly) goals as well as long-term goals (monthly or for the semester).

Item 3: I keep a high standard for my learning in my online courses.

Item 4: I set goals to help me manage study time for my online courses.

Item 5: I don't compromise the quality of my work because it is online.

Environment structuring

Item 1: I choose the location where I study to avoid too much distraction.

Item 2: I find a comfortable place to study.

Item 3: I know where I can study most efficiently for online courses.

Item 4: I choose a time with few distractions for studying for my online courses.

Task strategies

Item 1: I try to take more thorough notes for my online courses because notes are even more important for learning online than in a regular classroom.

Item 2: I read aloud instructional materials posted online to fight against distractions.

Item 3: I prepare my questions before joining in discussion forum.

Item 4: I work extra problems in my online courses in addition to the assigned ones to master the course content.

Time management

Item 1: I allocate extra studying time for my online courses because I know it is time demanding.

Item 2: I try to schedule the same time every day or every week to study for my online courses, and I observe the schedule.

Item 3: Although we don't have to attend daily classes, I still try to distribute my studying time evenly across days.

Help-seeking

Item 1: I find someone who is knowledgeable in course content so that I can consult with him or her when I need help.

Item 2: I share my problems with my classmates online, so we know what we are struggling with and how to solve our problems.

Item 3: If needed, I try to meet my classmates face-to-face.

Item 4: I am persistent in getting help from the instructor through e-mail.

Self-evaluation through strategy

Item 1: I summarize my learning in online courses to examine my understanding of what I have learned.

Item 2: I ask myself a lot of questions about the course material when studying for an online course.

Self-evaluation through peers

Item 1: I communicate with my classmates to find out how I am doing in my online classes.

Item 2: I communicate with my classmates to find out what I am learning that is different from what they are learning.
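The subscale structure listed above (seven subscales, 24 items) can be written down as a small data structure. The following is a minimal sketch, not the scoring procedure used in this study: the study modeled the subscales as latent factors, whereas the sketch simply averages item ratings within each subscale. The function name `subscale_means`, the dictionary keys, the 1 to 5 rating scale, and the example respondent are all assumptions made for illustration.

```python
# Number of items per OSLQ subscale, as listed in Appendix 1.
OSLQ_SUBSCALES = {
    "goal_setting": 5,
    "environment_structuring": 4,
    "task_strategies": 4,
    "time_management": 3,
    "help_seeking": 4,
    "self_evaluation_strategy": 2,
    "self_evaluation_peers": 2,
}


def subscale_means(responses):
    """Average one respondent's item ratings within each subscale.

    `responses` maps a subscale name to a list of ratings in item order.
    Mean scoring is an illustrative assumption; the study itself used
    latent factors estimated through CFA.
    """
    means = {}
    for name, n_items in OSLQ_SUBSCALES.items():
        ratings = responses[name]
        if len(ratings) != n_items:
            raise ValueError(
                f"{name}: expected {n_items} ratings, got {len(ratings)}"
            )
        means[name] = sum(ratings) / n_items
    return means


# Hypothetical respondent on an assumed 1-5 agreement scale.
example_respondent = {
    "goal_setting": [4, 3, 5, 4, 4],
    "environment_structuring": [5, 5, 4, 4],
    "task_strategies": [3, 2, 3, 4],
    "time_management": [3, 3, 3],
    "help_seeking": [2, 3, 1, 2],
    "self_evaluation_strategy": [4, 3],
    "self_evaluation_peers": [2, 2],
}

example_means = subscale_means(example_respondent)
for subscale, mean in example_means.items():
    print(f"{subscale}: {mean:.2f}")
```

The length check guards against responses that were entered under the wrong subscale, which is an easy mistake when the two self-evaluation subscales are kept separate.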

Appendix 2 Community of inquiry instrument (Arbaugh et al., 2008)

Items marked with an asterisk (*) were removed from the main analysis after the measurement models were checked.

Teaching Presence (TP)

Item TP1: The instructor clearly communicated important course topics.

Item TP2: The instructor clearly communicated important course goals.

Item TP3: The instructor provided clear instructions on how to participate in course learning activities.

Item TP4: The instructor clearly communicated important due dates/time frames for learning activities.

Item TP5: The instructor was helpful in identifying areas of agreement and disagreement on course topics that helped me to learn.

Item TP6: The instructor was helpful in guiding the class towards understanding course topics in a way that helped me clarify my thinking.

Item TP7: The instructor helped to keep course participants engaged and participating in productive dialogue.

Item TP8: The instructor helped keep the course participants on task in a way that helped me to learn.

Item TP9: The instructor encouraged course participants to explore new concepts in this course.

*Item TP10: Instructor actions reinforced the development of a sense of community among course participants.

*Item TP11: The instructor helped to focus discussion on relevant issues in a way that helped me to learn.

*Item TP12: The instructor provided feedback that helped me understand my strengths and weaknesses relative to the course's goals and objectives.

Item TP13: The instructor provided feedback in a timely fashion.

Social Presence (SP)

*Item SP1: Getting to know other course participants gave me a sense of belonging in the course.

Item SP2: I was able to form distinct impressions of some course participants.

Item SP3: Online or web-based communication is an excellent medium for social interaction.

Item SP4: I felt comfortable conversing through the online medium.

Item SP5: I felt comfortable participating in the course discussions.

Item SP6: I felt comfortable interacting with other course participants.

Item SP7: I felt comfortable disagreeing with other course participants while still maintaining a sense of trust.

Item SP8: I felt that my point of view was acknowledged by other course participants.

Item SP9: Online discussions help me to develop a sense of collaboration.

Cognitive Presence (CP)

Item CP1: Problems posed increased my interest in course issues.

Item CP2: Course activities piqued my curiosity.

Item CP3: I felt motivated to explore content related questions.

Item CP4: I utilized a variety of information sources to explore problems posed in this course.

Item CP5: Brainstorming and finding relevant information helped me resolve content related questions.

Item CP6: Online discussions were valuable in helping me appreciate different perspectives.

Item CP7: Combining new information helped me answer questions raised in course activities.

Item CP8: Learning activities helped me construct explanations/solutions.

Item CP9: Reflection on course content and discussions helped me understand fundamental concepts in this class.

Item CP10: I can describe ways to test and apply the knowledge created in this course.

Item CP11: I have developed solutions to course problems that can be applied in practice.

*Item CP12: I can apply the knowledge created in this course to my work or other non-class related activities.
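The item removal marked by asterisks above can be made explicit with a short sketch that lists which items remain for each presence. It only restates the appendix; the names `COI_ITEMS`, `REMOVED`, and `retained_items` are hypothetical and do not come from the study's analysis code.

```python
# Item numbers listed in Appendix 2 for each presence.
COI_ITEMS = {
    "TP": range(1, 14),  # TP1-TP13
    "SP": range(1, 10),  # SP1-SP9
    "CP": range(1, 13),  # CP1-CP12
}

# Items marked with an asterisk (*) in Appendix 2.
REMOVED = {
    "TP": {10, 11, 12},
    "SP": {1},
    "CP": {12},
}


def retained_items(items=COI_ITEMS, removed=REMOVED):
    """Return the item labels kept for each presence after removal."""
    return {
        presence: [
            f"{presence}{number}"
            for number in numbers
            if number not in removed.get(presence, set())
        ]
        for presence, numbers in items.items()
    }


retained = retained_items()
for presence, labels in retained.items():
    print(presence, len(labels), labels)
```

Under this reading of the appendix, 29 of the 34 listed items remain: 10 for teaching presence, 8 for social presence, and 11 for cognitive presence.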

Appendix 3 Structural and measurement model results of the MIMIC model

Rights and permissions

Springer Nature or its licensor (e.g. a society or other partner) holds exclusive rights to this article under a publishing agreement with the author(s) or other rightsholder(s); author self-archiving of the accepted manuscript version of this article is solely governed by the terms of such publishing agreement and applicable law.

About this article

Cite this article

Na, C., Lee, D., Moon, J. et al. Modeling undergraduate students’ learning dynamics between self-regulated learning patterns and community of inquiry. Educ Inf Technol (2024). https://doi.org/10.1007/s10639-024-12527-z

DOI: https://doi.org/10.1007/s10639-024-12527-z