Abstract

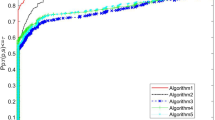

A cautious projection BFGS method is proposed for solving nonconvex unconstrained optimization problems. The global convergence of this method as well as a stronger general convergence result can be proven without a gradient Lipschitz continuity assumption, which is more in line with the actual problems than the existing modified BFGS methods and the traditional BFGS method. Under some additional conditions, the method presented has a superlinear convergence rate, which can be regarded as an extension and supplement of BFGS-type methods with the projection technique. Finally, the effectiveness and application prospects of the proposed method are verified by numerical experiments.

Article PDF

Similar content being viewed by others

Avoid common mistakes on your manuscript.

References

Fu Z J, Ren K, Shu J G, et al. Enabling personalized search over encrypted outsourced data with efficiency improvement. J IEEE Trans Parall Distr Syst, 2016, 27(9): 2546–2559

Fu Z J, Wu X L, Guan C W, et al. Toward efficient multi-keyword fuzzy search over encrypted outsourced data with accuracy improvement. J IEEE Trans Inf Foren Sec, 2017, 11(12): 2706–2716

Gu B, Sun X M, Sheng V S. Structural minimax probability machine. J IEEE Trans Neur Net Lear, 2017, 28(7): 1646–1656

Pan Z Q, Lei J J, Zhang Y, et al. Fast motion estimation based on content property for low-complexity H.265/HEVC encoder. J IEEE Trans Broadcast, 2016, 62(3): 675–684

Pan Z Q, Zhang Y, Kwong S. Efficient motion and disparity estimation optimization for low complexity multiview video coding. J IEEE Trans Broadcast, 2015, 61(2): 166–176

Broyden C G. The convergence of a class of double-rank minimization algorithms 1. general considerations. SIMA J Appl Math, 1970, 6(1): 76–90

Fletcher R. A new approach to variable metric algorithms. Comput J, 1970, 13(3): 317–322

Goldfarb D. A family of variable-metric methods derived by variational means. Math Comp, 1970, 24(109): 23–26

Shanno D F. Conditioning of quasi-Newton methods for function minimization. Math Comp, 1970, 24(111): 647–656

Powell M J D. Some global convergence properties of a variable metric algorithm for minimization without exact line searches. Nonlinear Programming, SIAM-AMS Proceedings. 1976, 9(1): 53–72

Byrd R H, Nocedal J, Yuan Y X. Global convergence of a cass of quasi-Newton methods on convex problems. SIAM J Numer Anal, 1987, 24(5): 1171–1190

Byrd R H, Nocedal J. A tool for the analysis of quasi-Newton methods with application to unconstrained minimization. SIAM J Numer Anal, 1989, 26(3): 727–739

Dixon L C W. Variable metric algorithms: Necessary and sufficient conditions for identical behavior of nonquadratic functions. J Optim Theory Appl, 1972, 10(1): 34–40

Griewank A. The global convergence of partitioned BFGS on problems with convex decompositions and Lipschitzian gradients. Math Program, 1991, 50(1): 141–175

Powell M J D. On the convergence of the variable metric algorithm. IMA J Appl Math, 1971, 7(1): 21–36

Powell M J D. Updating conjugate directions by the BFGS formula. Math Program, 1987, 38(1): 29–46

Biggs M C. Minimization algorithms making use of non-quadratic properties of the objective function. IMA J Numer Anal, 1971, 8(3): 123–123

Nocedal J, Yuan Y X. Analysis of a self-scaling quasi-Newton method. Math Program, 1993, 61: 19–37

Oren S S, Luenberger D G. Self-scaling variable metric (ssvm) algorithms: Part i: Criteria and sufficient conditions for scaling a class of algorithms. Manag Sci, 1974, 20(5): 845–862

Yuan Y X. A modified bfgs algorithm for unconstrained optimization. IMA J Numer Anal, 1991, 3: 325–332

Cheng W Y, Li D H. Spectral scaling BFGS method. J Optim Theory Appl, 2010, 146(2): 305–319

Sheng Z, Yuan G L, Cui Z R. A new adaptive trust region algorithm for optimization problems. Acta Math Sci, 2018, 38B(2): 479–496

Yuan G L, Wei Z X. The superlinear convergence analysis of a nonmonotone BFGS algorithm on convex objective functions. Acta Math Sci, 2008, 24B: 35–42

Dai Y H. Convergence properties of the BFGS algoritm. SIAM J Optim, 2002, 13(3): 693–701

Yuan G L, Sheng Z, Wang B P, et al. The global convergence of a modified BFGS method for nonconvex functions. J Comput Appl Math, 2017, 327: 274–294

Li D H, Fukushima M. A modified BFGS method and its global convergence in nonconvex minimization. J Comput Appl Math, 2001, 129(1/2): 15–35

Li D H, Fukushima M. On the global convergence of BFGS method for nonconvex unconstrained optimization problems. SIAM J Optim, 2001, 11(4): 1054–1064

Yuan G L, Wang X L, Sheng Z. The projection technique for two open problems of unconstrained optimization problems. J Optim Theory Appl, 2020, 186(2): 590–619

Yuan G L, Wei Z X, Lu X W. Global convergence of BFGS and PRP methods under a modified weak Wolfe-Powell line search. Appl Math Model, 2017, 47: 811–825

Bongartz I, Conn A R, Gould N, et al. CUTE: Constrained and unconstrained testing environment. ACM Trans Math Software, 1995, 21(1): 123–160

More J J, Garbow B S, Hillstrom K E. Testing unconstrained optimization software. ACM Trans Math Software, 1981, 7(1): 17–41

Andrei N. An unconstrained optimization test functions collection. Adv Model Optim, 2008, 10(1): 147–161

Dolan E D, More, Jorge J. Benchmarking optimization software with performance profiles. Math Program, 2002, 91(2): 201–213

Zhou W J, Zhang L. A nonlinear conjugate gradient method based on the MBFGS secant condition. Optim Methods Softw, 2006, 21(5): 707–714

Yang Y G. A robust BFGS algorithm for unconstrained nonlinear optimization problems. Optim, 2022, 73(3): 851–873

Yuan G L, Zhao X, Liu K Jun, et al. An adaptive projection BFGS method for nonconvex unconstrained optimization problems. Numer Algorithms, 2024, 95(4): 1747–1767

Yuan G L, Li P Y, Lu J Y. The global convergence of the BFGS method with a modified WWP line search for nonconvex functions. Numer Algorithms, 2022, 91(1): 353–365

Yuan G L, Zhang M X, Zhou Y J. Adaptive scaling damped BFGS method without gradient Lipschitz continuity. Appl Math Lett, 2022, 124: Art 107634

Tits A L, Yang Y. Globally convergent algorithms for robust pole assignment by state feedback. IEEE Trans Automat Contr, 1996, 41(10): 1432–1452

Author information

Authors and Affiliations

Corresponding author

Ethics declarations

Conflict of Interest The authors declare no conflict of interest.

Additional information

Yuan’s research was supported by the Guangxi Science and Technology base and Talent Project (AD22080047), the National Natural Science Foundation of Guangxi Province (2023GXNFSBA 026063), the Innovation Funds of Chinese University (2021BCF03001), and the special foundation for Guangxi Ba Gui Scholars.

Rights and permissions

About this article

Cite this article

Yuan, G., Zhao, X. & Yu, J. Global convergence of a cautious projection BFGS algorithm for nonconvex problems without gradient Lipschitz continuity. Acta Math Sci 44, 1735–1746 (2024). https://doi.org/10.1007/s10473-024-0506-3

Received:

Revised:

Published:

Issue Date:

DOI: https://doi.org/10.1007/s10473-024-0506-3