Abstract

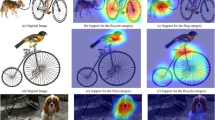

This paper reviews recent studies on understanding neural-network representations and on learning neural networks with interpretable/disentangled middle-layer representations. Although deep neural networks have exhibited superior performance in various tasks, interpretability has always been their Achilles’ heel. At present, deep neural networks obtain high discrimination power at the cost of low interpretability of their black-box representations. We believe that high model interpretability may help people break several bottlenecks of deep learning, e.g., learning from a few annotations, learning via human–computer communication at the semantic level, and semantically debugging network representations. We focus on convolutional neural networks (CNNs) and revisit the visualization of CNN representations, methods of diagnosing representations of pre-trained CNNs, approaches for disentangling pre-trained CNN representations, learning of CNNs with disentangled representations, and middle-to-end learning based on model interpretability. Finally, we discuss prospective trends in explainable artificial intelligence.

Additional information

Project supported by the ONR MURI project (No. N00014-16-1-2007), the DARPA XAI Award (No. N66001-17-2-4029), and NSF IIS (No. 1423305).

Cite this article

Zhang, Qs., Zhu, Sc. Visual interpretability for deep learning: a survey. Frontiers Inf Technol Electronic Eng 19, 27–39 (2018). https://doi.org/10.1631/FITEE.1700808

DOI: https://doi.org/10.1631/FITEE.1700808