Abstract

A major role of vision is to guide navigation, and navigation is strongly driven by vision1,2,3,4. Indeed, the brain’s visual and navigational systems are known to interact5,6, and signals related to position in the environment have been suggested to appear as early as in the visual cortex6,7. Here, to establish the nature of these signals, we recorded in the primary visual cortex (V1) and hippocampal area CA1 while mice traversed a corridor in virtual reality. The corridor contained identical visual landmarks in two positions, so that a purely visual neuron would respond similarly at those positions. Most V1 neurons, however, responded solely or more strongly to the landmarks in one position rather than the other. This modulation of visual responses by spatial location was not explained by factors such as running speed. To assess whether the modulation is related to navigational signals and to the animal’s subjective estimate of position, we trained the mice to lick for a water reward upon reaching a reward zone in the corridor. Neuronal populations in both CA1 and V1 encoded the animal’s position along the corridor, and the errors in their representations were correlated. Moreover, both representations reflected the animal’s subjective estimate of position, inferred from the animal’s licks, better than its actual position. When animals licked in a given location—whether correctly or incorrectly—neural populations in both V1 and CA1 placed the animal in the reward zone. We conclude that visual responses in V1 are controlled by navigational signals, which are coherent with those encoded in hippocampus and reflect the animal’s subjective position. The presence of such navigational signals as early as a primary sensory area suggests that they permeate sensory processing in the cortex.

Main

To characterize the influence of spatial position on the responses of area V1, we took mice expressing the calcium indicator GCaMP6 in excitatory cells and placed them in a corridor in virtual reality (Fig. 1a). The corridor had a pair of landmarks (a grating and a plaid) that repeated twice, thus creating two visually matching segments 40 cm apart (Fig. 1a, b; Extended Data Fig. 1). We identified V1 using the retinotopic map measured using wide-field imaging (Fig. 1c). We then used a two-photon microscope to view medial V1, focusing our analysis on neurons with receptive field centres more lateral than 40° azimuth (Fig. 1c), which were driven as the mouse passed the landmarks. As expected, given the repetition of visual scenes in the two segments of the corridor, some V1 neurons had a response profile with two equal peaks 40 cm apart (Fig. 1d). Other V1 neurons, however, responded differently to the same visual stimuli in the two segments (Fig. 1d). These results indicate that visual activity in V1 can be strongly modulated by an animal’s position in an environment.

a, Mice ran on a cylindrical treadmill to navigate a virtual corridor. The corridor had two landmarks that repeated after 40 cm, creating visually matching segments (red and blue bars). b, Screenshots showing the right half of the corridor at pairs of positions 40 cm apart. c, Example retinotopic map of the cortical surface. Grey curve shows the border of V1. Squares denote the field of view in two-photon imaging sessions targeted to medial V1 (inset shows the field with green frame). We analysed responses from neurons with receptive field centres greater than 40° azimuth (curve). d, Normalized response as a function of position in the corridor for six example V1 neurons. Dotted lines show predictions, assuming identical responses in matching segments of the corridor. e, Normalized response as a function of position, obtained from odd trials, for 4,958 V1 neurons. Neurons are ordered by the position of their maximum response. f, As in e for even trials. Curves indicate preferred position (yellow) and preferred position ± 40 cm (blue and red). g, Cumulative distribution of the spatial modulation ratio in even trials: response at non-preferred position (40 cm from peak response) divided by response at preferred position for cells with responses within the visually matching segments (median ± m.a.d., 0.61 ± 0.31; significantly less than 1, P < 10^−104, n = 2,422, Wilcoxon two-sided signed rank test). h, As in g, stratifying the data by running speed and considering a model without spatial selectivity, the non-spatial model. The curves corresponding to low (cyan) and high (purple) speeds overlap and appear as a single dashed curve (P = 0.21, Wilcoxon two-sided signed rank test). Grey curve, spatial modulation ratios from a non-spatial model considering visual and behavioural factors (Extended Data Fig. 7).

This modulation of visual responses by spatial position occurred in the majority of V1 neurons (Fig. 1e–g). We imaged 8,610 V1 neurons across 18 sessions in 4 mice and selected 4,958 neurons with receptive field centres beyond 40° azimuth and reliable firing along the corridor (see Methods). We divided the trials in half, and used the odd-numbered trials to find the position at which each neuron fired maximally. The resulting representation reveals a striking preference of V1 neurons for spatial position (Fig. 1e), with most neurons giving stronger responses in one position (preferred position) than in the visually matching position 40 cm away (non-preferred position). To avoid circularity, we quantified this preference on the other half of the data (the even-numbered trials) and found that the preference for position was robust (Fig. 1f). Indeed, among the neurons that responded when the mouse traversed the visually matching segments (n = 2,422), the responses at the non-preferred position were markedly smaller than at the preferred position (Fig. 1g; Extended Data Fig. 2). We defined a spatial modulation ratio for each cell as the ratio of responses at the two visually matching positions (non-preferred/preferred, in the even trials). The median spatial modulation ratio was 0.61 ± 0.31 (± median absolute deviation, m.a.d.), significantly less than 1 (P < 10^−104, Wilcoxon two-sided signed rank test). Neurons preferred the first or second sections in similar proportions (49% versus 51%), making it unlikely that a global factor such as visual adaptation could explain their preference.
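The cross-validated ratio described above can be illustrated with a minimal Python sketch. This is not the analysis code used in the study: the function names are hypothetical, the segment bounds (10–50 cm and 50–90 cm) are an assumption borrowed from the Methods description of the matching sections, and the response profiles are assumed to be already averaged across odd and even trials.

```python
from statistics import median

OFFSET_CM = 40.0                   # spacing of visually matching positions (from the text)
FIRST_SEGMENT_CM = (10.0, 50.0)    # assumed bounds of the first matching segment
SECOND_SEGMENT_CM = (50.0, 90.0)   # assumed bounds of the second matching segment


def spatial_modulation_ratio(odd_profile, even_profile, positions_cm):
    """Non-preferred / preferred response, evaluated on even trials.

    The preferred position is picked from odd trials only, so the ratio is
    measured on data that played no part in the selection.
    Returns None for cells whose peak lies outside the matching segments.
    """
    pref_idx = max(range(len(odd_profile)), key=lambda i: odd_profile[i])
    pref_pos = positions_cm[pref_idx]

    # The visually matching position is 40 cm ahead of a peak in the first
    # segment and 40 cm behind a peak in the second segment.
    if FIRST_SEGMENT_CM[0] <= pref_pos < FIRST_SEGMENT_CM[1]:
        target = pref_pos + OFFSET_CM
    elif SECOND_SEGMENT_CM[0] <= pref_pos <= SECOND_SEGMENT_CM[1]:
        target = pref_pos - OFFSET_CM
    else:
        return None

    nonpref_idx = min(range(len(positions_cm)),
                      key=lambda i: abs(positions_cm[i] - target))
    preferred = even_profile[pref_idx]
    if preferred <= 0:
        return None  # ratio is undefined without a positive preferred response
    return even_profile[nonpref_idx] / preferred


def median_and_mad(values):
    """Median and median absolute deviation of a list of ratios."""
    med = median(values)
    return med, median(abs(v - med) for v in values)
```

A purely visual cell would give a ratio near 1 under this definition, whereas a cell that responds in only one of the two segments would give a ratio near 0.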

The modulation of V1 responses by spatial position could not be explained by visual factors. To confirm that the receptive fields of most neurons saw similar stimuli in the two visually matching locations, we ran a model of receptive field responses (a simulation of V1 complex cells) on the sequences of images. As expected, this model generated spatial modulation ratios close to 1 (0.97 ± 0.17, Extended Data Fig. 3). We next asked whether the different responses seen in the two locations could be due to differences in images far outside the receptive field, particularly the end (grey) wall of the corridor. To test this, we placed two additional mice in a modified virtual reality environment, in which the two sections of the corridor were pixel-to-pixel identical (Extended Data Fig. 4). The spatial modulation ratio was again overwhelmingly less than 1 (0.62 ± 0.26; P < 10^−81; n = 1,044 neurons), confirming that spatial modulation of V1 responses could not be explained by distant visual cues.

Spatial modulation of V1 responses could also not be explained by running speed, deviations in pupil position and diameter, or reward. Given that V1 neurons are influenced by running speed and visual speed8,9, their different responses in visually matching segments of the corridor could reflect speed differences. To control for this, we stratified the data according to three running speed ranges (low, medium, or high; Extended Data Fig. 5). Even within a group (medium speed), the spatial modulation ratio was substantially below 1 (0.47 ± 0.22; P < 10^−33). Moreover, the spatial ratio of responses was identical at low and high speeds (Fig. 1h). We could also exclude a role of reward or deviations in pupil position and size, as the spatial modulation ratio was markedly below 1 even in sessions during which the animals ran without a reward (0.57 ± 0.37; P < 10^−14), or when there were no changes in pupil size (0.63 ± 0.33, P < 10^−45) or pupil position (0.63 ± 0.33, P < 10^−27; Extended Data Fig. 6). To assess the joint contribution of visual, task-related, and position variables, we developed three prediction models (Extended Data Fig. 7). The first depended only on the visual scenes, which repeat twice, and on trial onset and offset, which introduce transients (visual model). The second additionally depended on running speed, reward times, pupil size, and eye position (non-spatial model). The third, in addition, allowed responses to differ in amplitude in the matching segments (spatial model). Only the last model could fit the activity of cells with unequal peaks, thus matching the spatial modulation ratios seen in the data (Extended Data Fig. 7c, d). By contrast, the first two models predicted spatial modulation ratios closer to 1 (Fig. 1h; Extended Data Fig. 7c, d).

Having established that V1 responses are modulated by spatial position, we next investigated whether the underlying modulatory signals reflect the spatial position encoded in the brain’s navigational systems (Fig. 2). We recorded simultaneously from V1 and hippocampal area CA1 using two 32-channel electrodes (Fig. 2a). To gauge a mouse’s estimate of position, we trained the mice to lick a spout for water reward upon reaching a specific region of the corridor (Fig. 2b; Supplementary Video 1; Extended Data Fig. 8). All four mice (wild type) learned to perform this task with more than 80% accuracy and relied strongly on vision: performance persisted when we changed the gain relating wheel rotation to progression in the corridor3,10 and performance decreased when we lowered visual contrast (Extended Data Fig. 8).

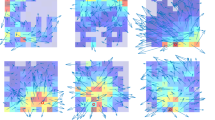

a, Example of reconstructed electrode tracks (red: DiI); green shows cells labelled with DAPI. Panel shows tracks from one array (four shanks) in CA1, and a second electrode (one shank) in V1. b, In the task, water was delivered when mice licked in a reward zone (green area). c, Normalized activity as a function of position in the corridor, for 226 V1 neurons (8 sessions). Neurons are ordered by the position of their maximum response. Curves indicate preferred position (yellow) and preferred position ± 40 cm (blue and red). d, Similar plot for CA1 place cells (334 neurons; 8 sessions). e, Density map showing the distribution of position decoded from the activity of simultaneously recorded V1 neurons (y-axis) as a function of the animal’s position (x-axis), averaged across recording sessions (n = 8), and considering only correct trials. The red diagonal stripe indicates accurate estimation of position. f, Similar plot for CA1 neurons. g, Density map showing the joint distribution of position decoding errors from V1 and CA1 in one example session at one position (74 cm; left), together with a similar analysis on data shuffled while preserving the correlation due to running speed and position (right). h, Pearson’s correlation coefficient of decoding errors in V1 and CA1 for each recording session (n = 3,800; 21,000 time points), against similar analysis of shuffled data. Correlations are above shuffling control (P = 0.0115, two-sided t-test, n = 8 sessions). i, Difference between joint distribution of V1 and CA1 decoded position and shuffled control, for the example in g. j, Difference between joint density map of V1 and CA1 decoded position, and shuffled control, averaged across positions (n = 50) and sessions (n = 8).

Many neurons in both visual cortex and hippocampus had place-specific response profiles, thus encoding the mouse’s spatial position (Fig. 2c–f). Consistent with our observations from two-photon imaging, V1 neurons responded more strongly in one of the two visually matching segments of the corridor (Fig. 2c, Extended Data Fig. 9c). In turn, hippocampal CA1 neurons exhibited place fields3,10,11, responding in a single corridor location (Fig. 2d, Extended Data Fig. 9a–c). Therefore, responses in both V1 and CA1 encoded the position of the mouse in the environment, with no ambiguity between the two visually matching segments. Indeed, an independent Bayes decoder was able to read out the mouse’s position from the activity of neurons recorded from V1 (33 ± 17 neurons per session, n = 8 sessions; Fig. 2e) or from CA1 (42 ± 20 neurons per session, n = 8 sessions; Fig. 2f).
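To make the idea of an independent Bayes decoder concrete, the sketch below computes a posterior over position bins from one time bin of spike counts. It is an illustration under stated assumptions, not the decoder used here (whose details are in the Supplementary Methods): it assumes Poisson spike counts, conditional independence between neurons, and a flat prior over position. The function name, the `bin_s` parameter and the rate floor `floor_hz` are hypothetical.

```python
import math


def decode_position(spike_counts, tuning_curves, bin_s, floor_hz=1e-3):
    """Posterior probability of each position bin given one vector of counts.

    spike_counts[i]     -- spikes fired by neuron i in a time bin of bin_s seconds
    tuning_curves[i][x] -- mean firing rate (Hz) of neuron i at position bin x
    Assumes independent Poisson neurons and a flat prior over position.
    """
    n_positions = len(tuning_curves[0])
    log_posterior = []
    for x in range(n_positions):
        total = 0.0
        for count, curve in zip(spike_counts, tuning_curves):
            # Floor the rate so that log() stays finite where a cell is silent.
            expected = max(curve[x], floor_hz) * bin_s
            # Poisson log-likelihood, dropping the log(count!) term,
            # which does not depend on position.
            total += count * math.log(expected) - expected
        log_posterior.append(total)

    # Subtract the maximum before exponentiating, for numerical stability.
    peak = max(log_posterior)
    unnormalized = [math.exp(v - peak) for v in log_posterior]
    norm = sum(unnormalized)
    return [u / norm for u in unnormalized]
```

In such a scheme the tuning curves would be estimated from a training set of trials and the decoded position taken as the bin with the highest posterior on held-out data.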

Furthermore, when the visual cortex and hippocampus made errors in estimating the mouse’s position, these errors were correlated with each other (Fig. 2g, h). The distributions of errors in position decoded from V1 and CA1 peaked at zero (Fig. 2g) but were significantly correlated (Fig. 2h; ρ = 0.125, P = 0.0129, two-sided t-test, n = 8). In principle, this correlation could arise from a common modulation of both regions by behavioural factors such as running speed, which affects responses of both visual cortex8,9 and hippocampus12,13,14. To isolate the effect of speed, we shuffled the data between time points while preserving the relationship between speed and position (see Supplementary Methods). After shuffling, the correlation between decoding errors in V1 and CA1 decreased substantially from 0.125 to 0.022 (P = 0.0115; Fig. 2g, h). Moreover, when we subtracted the shuffled distribution from the original joint distributions, the residual decoding errors were distributed along the diagonal (Fig. 2i, j), indicating that representations in V1 and CA1 are more correlated than expected from common speed modulation. This correlation could also not be explained by common encoding of behavioural factors such as licking (Extended Data Fig. 9d–f). Indeed, a prediction of V1-encoded position from all external variables (true position, running speed, licks and rewards) could still be improved by the position decoded from CA1 activity (Extended Data Fig. 10).
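The logic of the shuffling control can be sketched as follows. This is only one way to shuffle time points while keeping each value attached to the same position and speed range; the procedure actually used is described in the Supplementary Methods. The bin widths, function names and the fixed random seed are hypothetical choices.

```python
import random
from collections import defaultdict


def pearson(xs, ys):
    """Pearson correlation coefficient (undefined if either input is constant)."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    vx = sum((x - mx) ** 2 for x in xs)
    vy = sum((y - my) ** 2 for y in ys)
    return cov / (vx * vy) ** 0.5


def shuffle_within_bins(values, positions_cm, speeds_cm_s,
                        pos_bin_cm=2.0, speed_bin_cm_s=5.0, rng=None):
    """Permute values only among time points sharing a position and speed bin.

    Correlations that arise because both areas depend on position and speed
    survive this shuffle; moment-to-moment co-fluctuations do not.
    """
    rng = rng or random.Random(0)
    groups = defaultdict(list)
    for i, (pos, speed) in enumerate(zip(positions_cm, speeds_cm_s)):
        key = (int(pos // pos_bin_cm), int(speed // speed_bin_cm_s))
        groups[key].append(i)

    shuffled = list(values)
    for indices in groups.values():
        permuted = indices[:]
        rng.shuffle(permuted)
        for source, destination in zip(permuted, indices):
            shuffled[destination] = values[source]
    return shuffled
```

With decoding errors `v1_err` and `ca1_err` (decoded minus actual position), the observed value `pearson(v1_err, ca1_err)` would be compared with `pearson(v1_err, shuffle_within_bins(ca1_err, positions, speeds))`.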

We next tested whether the spatial position encoded by V1 and CA1 relates to the mouse’s subjective estimate of position (Fig. 3a–f). CA1 activity is influenced by the performance of navigation tasks15,16,17,18, and may reflect the animal’s subjective position more than its actual position15,17,19. We assessed a mouse’s subjective estimate of position from the location of its licks. We divided trials into three groups: early trials, in which too many licks (usually 4–6) occurred before the reward zone, causing the trial to be aborted; correct trials, during which one or more licks occurred in the reward zone; and late trials, in which the mouse missed the reward zone and licked afterwards. To understand how spatial representations in V1 and CA1 related to this behaviour, we trained the Bayesian decoder on the activity measured in correct trials, and analyzed the likelihood of decoding different positions in the three types of trial. Decoding performance in early and late trials showed systematic deviations: in early trials, V1 and CA1 overestimated the animal’s progress along the corridor (deviation above the diagonal, Fig. 3a, d), whereas in late trials they underestimated it (deviation below the diagonal, Fig. 3b, e). Accordingly, the probability of being in the reward zone, predicted from both CA1 and V1, peaked before the reward zone in early trials and after it in late trials (Fig. 3c, f). These consistent deviations suggest that the representations of position in V1 and CA1 correlate with the animal’s decisions to lick and thus reflect its subjective estimate of position.
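The grouping of trials by lick location can be written as a short rule. The sketch below is illustrative rather than the code used in the study: the reward zone (centred at 70 cm, 8 cm wide) is taken from the Methods, the limit of four early licks is an assumed value within the 4–6 range quoted above, and the function name and the `unclassified` label are hypothetical.

```python
REWARD_CENTRE_CM = 70.0   # from the Methods
REWARD_WIDTH_CM = 8.0     # from the Methods
MAX_EARLY_LICKS = 4       # assumed; the text reports a typical limit of 4-6


def classify_trial(lick_positions_cm, max_early_licks=MAX_EARLY_LICKS):
    """Label a trial 'early', 'correct', 'late' or 'unclassified'."""
    zone_start = REWARD_CENTRE_CM - REWARD_WIDTH_CM / 2
    zone_end = REWARD_CENTRE_CM + REWARD_WIDTH_CM / 2

    licks_before = sum(1 for p in lick_positions_cm if p < zone_start)
    licks_inside = sum(1 for p in lick_positions_cm if zone_start <= p <= zone_end)
    licks_after = sum(1 for p in lick_positions_cm if p > zone_end)

    # Too many licks before the zone abort the trial.
    if licks_before > max_early_licks:
        return "early"
    # One or more licks inside the zone count as correct.
    if licks_inside >= 1:
        return "correct"
    # The zone was missed and the animal licked afterwards; such trials
    # may still contain a few licks before the zone.
    if licks_after >= 1:
        return "late"
    return "unclassified"
```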

a, Distribution of positions decoded from the V1 population, as a function of the animal’s actual position, on trials in which mice licked early. The decoder was trained on separate trials during which mice licked in the correct position. b, Same plot for trials during which mice licked late. c, The average decoded probability that the mouse is in the reward zone, as a function of distance from the reward. The curve for early trials (red) peaks before the reward zone, whereas the curve for late trials (blue) peaks after it, consistent with V1 activity reflecting subjective position rather than actual position. Probabilities were normalized relative to the probability of being in the reward zone in the correct trials (green). Red dots, positions at which the decoded probability of being in the reward zone differed significantly between early and correct trials (P < 0.05, two-sample two-sided t-test). Blue dots: same, for correct versus late trials. Shaded regions indicate mean ± s.e.m., n = 68 early trials (red), 334 correct trials (green), and 30 late trials (blue). d–f, Same as a–c, for decoding using the population of CA1 neurons. g, Position decoded from V1 activity as a function of mouse position, in an example session. Crosses show positions when the animal licked during early (red) or late trials (blue). Late trials can include some early licks. These distributions (mean ± s.d.) are summarized as shaded ovals for early trials (red, n = 20 licks) and late trials (blue, n = 12 licks). Green regions mark the reward zone. h, Summary distributions for all sessions (n = 8). i, Fraction of licks as a function of distance from reward location in positions decoded from V1 activity. j–l, Same as in g–i, for CA1 neurons.

The licks provide an opportunity to gauge when the mouse’s subjective estimate of position lies in the reward zone. If activity in V1 and CA1 reflects subjective position, it should place the animal in the reward zone whether the animal correctly licked in that zone or incorrectly licked earlier or later. To test this prediction, we decoded activity in V1 and CA1 at the time of licks. By definition, the distributions of licks in early, correct, and late trials were spatially distinct (Fig. 3g, h, j, k). However, when plotted as a function of decoded position, these distributions came into register over the reward zone, whether the decoding was done from V1 (Fig. 3g–i) or from CA1 (Fig. 3j–l). Thus, regardless of the animal’s position, when a mouse licked for a reward, the activity of both V1 and CA1 indicated a position in the reward zone.

Together, these results indicate that visual responses in V1 are modulated by the same spatial signals as those represented in the hippocampus, and that these signals reflect the animal’s subjective estimate of position. This modulation may become stronger as environments become familiar6,7, perhaps contributing to the changes observed in V1 as animals learn behavioural tasks20,21,22. The correlation between representations in V1 and CA1 may be due to feed-forward signals from vision or feedback signals from navigational systems. Although V1 and CA1 are not directly connected, they could share spatial signals through indirect connections23,24; these could involve the retrosplenial, parietal, entorhinal, or prefrontal cortices, which are known to carry spatial information25,26. Further insights into the nature of these signals could be obtained by modulating the relationship between actual position and distance run3,10 or time27, and by investigating more natural 2D environments28,29,30. In such environments, however, it would be difficult to control and repeat visual stimulation, which proved essential in our study. Our results show that signals related to an animal’s own estimate of position appear as early as in primary sensory cortex. This observation suggests that the mouse cortex does not keep a firm distinction between navigational and sensory systems; rather, spatial signals may permeate cortical processing.

Methods

All experiments were conducted according to the UK Animals (Scientific Procedures) Act, 1986 under personal and project licenses issued by the Home Office following ethical review.

For simultaneous recordings in V1 and CA1, we used four C57BL/6 mice (all male, implanted at 4–8 weeks of age). For calcium imaging experiments, we used double or triple transgenic mice expressing GCaMP6 in excitatory neurons (5 females, 1 male, implanted at 4–10 weeks of age). The triple transgenic mice expressed GCaMP6 fast31 (Emx1-Cre;Camk2a-tTA;Ai93, 3 mice). The double transgenic mice expressed GCaMP6 slow32 (Camk2a-tTA;tetO-G6s, 3 mice). Because Ai93 mice may exhibit aberrant cortical activity33, we used the GCaMP6 slow mice to validate the results obtained from the GCaMP6 fast mice. Additional tests33 confirmed that none of these mice displayed the aberrant activity that is sometimes seen in Ai93 mice. No randomization or blinding was performed in this study. No statistical methods were used to predetermine sample size.

Virtual environment and task

The virtual reality environment was a corridor adorned with a white noise background and four landmarks: two grating stimuli oriented orthogonal to the corridor and two plaid stimuli (Fig. 1a). The corridor dimensions were 100 × 8 × 8 cm, and the landmarks (8 cm wide) were centred 20, 40, 60 and 80 cm from the start of the corridor. The mice navigated the environment by walking on a custom-made polystyrene wheel (15 cm wide, 18 cm diameter). Movements of the wheel were captured by a rotary encoder (2,400 pulses per rotation, Kübler, Germany), and used to control the virtual reality environment presented on three monitors surrounding the animal, as previously described9. When the mouse reached the end of the corridor, it was placed back at the start of the corridor after a 3–5-s presentation of a grey screen. Trials longer than 120 s were timed out and were excluded from further analysis.
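As a worked example of how wheel rotation maps onto corridor position, the sketch below converts encoder pulses into distance using the wheel diameter (18 cm), encoder resolution (2,400 pulses per rotation) and corridor length (100 cm) given above. The function names and the `gain` parameter (a multiplier relating wheel rotation to progression, 1.0 by default) are hypothetical, and the code is not taken from the virtual reality software.

```python
import math

WHEEL_DIAMETER_CM = 18.0      # from the text
PULSES_PER_ROTATION = 2400    # from the text
CORRIDOR_LENGTH_CM = 100.0    # from the text


def pulses_to_distance_cm(pulses, gain=1.0):
    """Distance travelled in the virtual corridor for a given pulse count."""
    circumference_cm = math.pi * WHEEL_DIAMETER_CM   # about 56.5 cm per rotation
    return gain * pulses * circumference_cm / PULSES_PER_ROTATION


def corridor_position_cm(pulses, gain=1.0):
    """Position along the corridor, capped at the end of the corridor."""
    return min(pulses_to_distance_cm(pulses, gain), CORRIDOR_LENGTH_CM)
```

With these numbers each pulse corresponds to roughly 0.024 cm, so a full traversal of the 100 cm corridor at unit gain takes a little under two wheel rotations.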

Mice used for simultaneous V1 and CA1 recordings (n = 4 animals, 8 sessions) were trained to lick in a specific region of the corridor, the reward zone. This zone was centred at 70 cm and was 8 cm wide. Trials in which the animals were not engaged in the task, that is, when they ran through the environment without licking, were excluded from further analysis. The animal was rewarded for correct licks with ~2 μl water using a solenoid valve (161T010; Neptune Research, USA), and licks were monitored using a custom device that detected breaks in an infrared beam.

Mice used for calcium imaging (n = 6 animals, 25 recording sessions) ran the two versions of the virtual corridor, with no specific task.

In the standard version of the corridor, two of the mice (10 sessions) were motivated to run with water rewards: one mouse received rewards at random positions along the corridor and the other at the end of the corridor. To control for the effect of the reward on V1 responses, no reward was delivered to two other mice (8 sessions).

To ensure that the spatial modulation of V1 responses could not be explained by the end wall of the corridor being more visible in the second half than in the first half, two additional mice used for calcium imaging were trained in a modified version of the corridor, where visual scenes were strictly identical 40 cm apart (7 sessions). In this environment, mice ran the same distance as before (100 cm) and were also placed back at the start of the corridor after a 3–5-s presentation of a grey screen. The same four landmarks were also centred in the same positions as before. However, the corridor was extended to 200 cm length, repeating the same sequence of landmarks (Extended Data Fig. 4). The virtual reality software was modified to render only up to 70 cm ahead of the animal, ensuring the visual scenes were strictly identical in the sections between 10 and 50 cm and 50 and 90 cm; the white noise background also repeated with the same 40 cm periodicity. Prior to recording in the 200 cm corridor, mice were first exposed to 5 sessions in the 100 cm corridor, then placed in the 200 cm corridor and allowed to habituate to the new environment for another two or three sessions before the start of recordings.

Surgery and training

The surgical methods are similar to those described previously9,34. In brief, a custom head-plate with a circular chamber (3–4 mm diameter for electrophysiology; 8 mm for imaging) was implanted on 4–10-week-old mice under isoflurane anaesthesia. For imaging, we performed a 4-mm craniotomy over the left visual cortex by repeatedly rotating a biopsy punch. The craniotomy was shielded with a double coverslip (4 mm inner diameter; 5 mm outer diameter). After 4 days of recovery, some mice were water restricted (>40 ml/kg/day) and were trained for 30–60 min, 5–7 days/week.

Mice used for simultaneous V1 and CA1 recordings were trained to lick selectively in the reward zone using a progressive training procedure. Initially, the animals were rewarded for running past the reward location on all trials. After this, we introduced trials in which the mouse was rewarded only when it licked in the rewarded region of the corridor. The width of the reward region was progressively narrowed from 30 cm to 8 cm across successive days of training. To prevent the animals from licking all across the corridor, trials were terminated early if the animal licked more than a certain number of times before the rewarded region. We reduced this number as the animals performed more accurately, typically reaching a level of 4–6 licks by the time recordings were made. Once a sufficient level of performance was reached, we verified on some (random) trials that the animal performed the task visually, by measuring performance when we decreased visual contrast or changed the distance to the reward zone (Extended Data Fig. 8). Training was carried out for 3–5 weeks. Animals were kept under light-shifted conditions (9 a.m. light off, 9 p.m. light on) and experiments were performed during the day.

Widefield calcium imaging

For widefield imaging we used a standard epi-illumination imaging system35,36 together with an sCMOS camera (pco.edge, PCO AG). A Leica 1.6× Plan APO objective was placed above the imaging window and a custom black cone surrounding the objective was fixed on top of the headplate to prevent contamination from the monitors’ light. The excitation light beam emitted by a high-power LED (465 nm LEX2-B, Brain Vision) was directed onto the imaging window by a dichroic mirror designed to reflect blue light. Emitted fluorescence passed through the same dichroic mirror and was then selectively transmitted by an emission filter (FF01-543/50-25, Semrock) before being focused by another objective (Leica 1.0× Plan APO) and finally detected by the camera. Images of 200 × 180 pixels, corresponding to an area of 6.0 × 5.4 mm, were acquired at 50 Hz.

To measure retinotopy we presented a 14° wide vertical window containing a vertical grating (spatial frequency 0.15 cycles per degree), and swept37,38 the horizontal position of the window over 135° of azimuth angle, at a frequency of 2 Hz. Stimuli lasted 4 s and were repeated 20 times (10 in each direction). We obtained maps for preferred azimuth by combining responses to the two stimuli moving in opposite directions, as previously described37.

Two-photon imaging

Two-photon imaging was performed with a standard multiphoton imaging system (Bergamo II; Thorlabs) controlled by ScanImage 4 (ref. 39). A 970 nm laser beam, emitted by a Ti:sapphire laser (Chameleon Vision, Coherent), was targeted onto L2/3 neurons through a 16× water-immersion objective (0.8 NA, Nikon). The fluorescence signal was transmitted by a dichroic beamsplitter and amplified by photomultiplier tubes (GaAsP, Hamamatsu). The emission light path between the focal plane and the objective was shielded with a custom-made plastic cone, to prevent contamination from the monitors’ light. In each experiment, we imaged four planes set apart by 40 μm. Multiple-plane imaging was enabled by a piezo focusing device (P-725.4CA PIFOC, Physik Instrumente), and an electro-optical modulator (M350-80LA, Conoptics Inc.), which allowed us to adjust the laser power with depth. Images of 512 × 512 pixels, corresponding to a field of view of 500 × 500 μm, were acquired at a frame rate of 30 Hz (7.5 Hz per plane).

Pre-processing of raw imaging movies was done using the Suite2p pipeline40 and involved: 1) image registration to correct for brain movement; 2) ROI extraction (that is, cell detection); and 3) correction for neuropil contamination. For neuropil correction, we used an established method41,42. We used Suite2p to determine a mask surrounding each cell’s soma, the ‘neuropil mask’. The inner diameter of the mask was 3 µm and the outer diameter was <45 µm. For each cell we obtained a correction factor, α, by regressing the binned neuropil signal (20 bins in total) from the fifth percentile of the raw binned cell signal. For a given session, we obtained the average correction factor across cells. This average factor was used to obtain the corrected individual cell traces, from the raw cell traces and the neuropil signal, assuming a linear relationship. All correction factors fell between 0.7 and 0.9.
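The neuropil correction can be illustrated with a minimal sketch, assuming the linear model F_corrected = F_raw − α·F_neuropil. This is one reading of the procedure above and not the published implementation: it assumes that time points are grouped into 20 equal-count bins of neuropil fluorescence, that the fifth percentile of the raw cell signal is taken within each bin, and that α is the slope of a least-squares line through those points. All function names are hypothetical.

```python
def low_percentile(values, pct):
    """Simple percentile: the sorted value at index floor(pct/100 * (n - 1))."""
    ordered = sorted(values)
    return ordered[int(pct / 100.0 * (len(ordered) - 1))]


def estimate_alpha(cell, neuropil, n_bins=20, pct=5.0):
    """Slope relating the lower envelope of the cell trace to the neuropil."""
    if len(cell) != len(neuropil) or len(cell) < n_bins:
        raise ValueError("traces must have equal length and at least n_bins samples")

    # Sort time points by neuropil level and cut them into equal-count bins
    # (an assumed binning scheme).
    order = sorted(range(len(neuropil)), key=lambda i: neuropil[i])
    size = len(order) // n_bins
    xs, ys = [], []
    for b in range(n_bins):
        stop = (b + 1) * size if b < n_bins - 1 else len(order)
        idx = order[b * size:stop]
        xs.append(sum(neuropil[i] for i in idx) / len(idx))
        # A low percentile tracks the baseline and ignores calcium transients.
        ys.append(low_percentile([cell[i] for i in idx], pct))

    # Ordinary least-squares slope of ys against xs.
    mx, my = sum(xs) / n_bins, sum(ys) / n_bins
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    var = sum((x - mx) ** 2 for x in xs)
    return cov / var


def correct_trace(cell, neuropil, alpha):
    """Subtract the scaled neuropil signal from the raw cell trace."""
    return [c - alpha * n for c, n in zip(cell, neuropil)]
```

Following the text, the per-cell estimates would be averaged within a session and that single average passed to `correct_trace` for every cell.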

To manually curate the output of Suite2p, we used two criteria: one anatomical and one activity-dependent. One of the anatomical criteria in Suite2p is ‘area’, that is, mean distance of pixels from ROI centre, normalized to the same measure for a perfect disk. We used this criterion (area <1.04) to exclude ROIs that were likely to correspond to dendrites rather than somata. The activity-related criterion is the standard deviation of the cell trace, normalized to the standard deviation of the neuropil trace. We used this criterion to exclude ROIs whose activity was too small relative to the corresponding neuropil signal (typically with std(neuropil corrected trace)/std(neuropil signal) < 2). We finally excluded cells that fired extremely seldom (once or twice within a 20 min session).

Pupil tracking

We tracked the eye of the animal using an infrared camera (DMK 21BU04.H, Imaging Source) and a zoom lens (MVL7000, Navitar) at 25 Hz. Pupil position and size were calculated by fitting an ellipsoid to the pupil for each frame using custom software. X and Y positions of the pupil were derived from the centre of mass of the fitted ellipsoid.

Electrophysiological recordings

On the day before the first recording session, we made two 1-mm craniotomies, one over CA1 (1.0 mm lateral, 2.0 mm anterior from lambda), and a second one over V1 (2.5 mm lateral, 0.5 mm anterior from lambda). We covered the chamber using KwikCast (World Precision Instruments) and the mice were allowed to recover overnight. The CA1 probe was lowered until all shanks were in the pyramidal layer, which was identified by the increase in theta power (5–8 Hz) of the local field potential and an increase in the number of detected units. The V1 probe was lowered to a depth of ~800 µm. We waited ~30 min for the tissue to settle before starting the recordings. In two mice, we dipped the probes in red-fluorescent DiI (Fig. 2a). In these mice, we had only one recording session. The other two mice underwent two and four recording sessions, respectively.

Offline spike sorting was carried out using the KlustaSuite43 package, with automated spike sorting using KlustaKwik44, followed by manual refinement using KlustaViewa43. Hippocampal interneurons were identified by their spike time autocorrelation and excluded from further analysis. Only time points with running speeds greater than 5 cm/s were included in further analyses.

Data analysis and modelling methods

See Supplementary Methods for details of analysis and models.

Reporting summary

Further information on experimental design is available in the Nature Research Reporting Summary linked to this paper.

Code availability

The custom code from this study is available from the corresponding author upon reasonable request.

Data availability

The data from this study are available from the corresponding author upon reasonable request.

References

Muller, R. U. & Kubie, J. L. The effects of changes in the environment on the spatial firing of hippocampal complex-spike cells. J. Neurosci. 7, 1951–1968 (1987).

Wiener, S. I., Korshunov, V. A., Garcia, R. & Berthoz, A. Inertial, substratal and landmark cue control of hippocampal CA1 place cell activity. Eur. J. Neurosci. 7, 2206–2219 (1995).

Chen, G., King, J. A., Burgess, N. & O’Keefe, J. How vision and movement combine in the hippocampal place code. Proc. Natl Acad. Sci. USA 110, 378–383 (2013).

Geiller, T., Fattahi, M., Choi, J.-S. & Royer, S. Place cells are more strongly tied to landmarks in deep than in superficial CA1. Nat. Commun. 8, 14531 (2017).

Ji, D. & Wilson, M. A. Coordinated memory replay in the visual cortex and hippocampus during sleep. Nat. Neurosci. 10, 100–107 (2007).

Haggerty, D. C. & Ji, D. Activities of visual cortical and hippocampal neurons co-fluctuate in freely moving rats during spatial behavior. eLife 4, e08902 (2015).

Fiser, A. et al. Experience-dependent spatial expectations in mouse visual cortex. Nat. Neurosci. 19, 1658–1664 (2016).

Niell, C. M. & Stryker, M. P. Modulation of visual responses by behavioral state in mouse visual cortex. Neuron 65, 472–479 (2010).

Saleem, A. B., Ayaz, A., Jeffery, K. J., Harris, K. D. & Carandini, M. Integration of visual motion and locomotion in mouse visual cortex. Nat. Neurosci. 16, 1864–1869 (2013).

Ravassard, P. et al. Multisensory control of hippocampal spatiotemporal selectivity. Science 340, 1342–1346 (2013).

Harvey, C. D., Collman, F., Dombeck, D. A. & Tank, D. W. Intracellular dynamics of hippocampal place cells during virtual navigation. Nature 461, 941–946 (2009).

McNaughton, B. L., Barnes, C. A. & O’Keefe, J. The contributions of position, direction, and velocity to single unit activity in the hippocampus of freely-moving rats. Exp. Brain Res. 52, 41–49 (1983).

Wiener, S. I., Paul, C. A. & Eichenbaum, H. Spatial and behavioral correlates of hippocampal neuronal activity. J. Neurosci. 9, 2737–2763 (1989).

Czurkó, A., Hirase, H., Csicsvari, J. & Buzsáki, G. Sustained activation of hippocampal pyramidal cells by ‘space clamping’ in a running wheel. Eur. J. Neurosci. 11, 344–352 (1999).

O’Keefe, J. & Speakman, A. Single unit activity in the rat hippocampus during a spatial memory task. Exp. Brain Res. 68, 1–27 (1987).

Lenck-Santini, P. P., Save, E. & Poucet, B. Evidence for a relationship between place-cell spatial firing and spatial memory performance. Hippocampus 11, 377–390 (2001).

Lenck-Santini, P.-P., Muller, R. U., Save, E. & Poucet, B. Relationships between place cell firing fields and navigational decisions by rats. J. Neurosci. 22, 9035–9047 (2002).

Hok, V. et al. Goal-related activity in hippocampal place cells. J. Neurosci. 27, 472–482 (2007).

Rosenzweig, E. S., Redish, A. D., McNaughton, B. L. & Barnes, C. A. Hippocampal map realignment and spatial learning. Nat. Neurosci. 6, 609–615 (2003).

Makino, H. & Komiyama, T. Learning enhances the relative impact of top-down processing in the visual cortex. Nat. Neurosci. 18, 1116–1122 (2015).

Poort, J. et al. Learning enhances sensory and multiple non-sensory representations in primary visual cortex. Neuron 86, 1478–1490 (2015).

Jurjut, O., Georgieva, P., Busse, L. & Katzner, S. Learning enhances sensory processing in mouse V1 before improving behavior. J. Neurosci. 37, 6460–6474 (2017).

Witter, M. P. et al. Cortico-hippocampal communication by way of parallel parahippocampal-subicular pathways. Hippocampus 10, 398–410 (2000).

Wang, Q., Gao, E. & Burkhalter, A. Gateways of ventral and dorsal streams in mouse visual cortex. J. Neurosci. 31, 1905–1918 (2011).

Moser, E. I., Kropff, E. & Moser, M.-B. Place cells, grid cells, and the brain’s spatial representation system. Annu. Rev. Neurosci. 31, 69–89 (2008).

Grieves, R. M. & Jeffery, K. J. The representation of space in the brain. Behav. Processes 135, 113–131 (2017).

Eichenbaum, H. Time cells in the hippocampus: a new dimension for mapping memories. Nat. Rev. Neurosci. 15, 732–744 (2014).

Cushman, J. D. et al. Multisensory control of multimodal behavior: do the legs know what the tongue is doing? PLoS One 8, e80465 (2013).

Aronov, D. & Tank, D. W. Engagement of neural circuits underlying 2D spatial navigation in a rodent virtual reality system. Neuron 84, 442–456 (2014).

Chen, G., King, J. A., Lu, Y., Cacucci, F. & Burgess, N. Spatial cell firing during virtual navigation of open arenas by head-restrained mice. eLife 7, e34789 (2018).

Madisen, L. et al. Transgenic mice for intersectional targeting of neural sensors and effectors with high specificity and performance. Neuron 85, 942–958 (2015).

Wekselblatt, J. B., Flister, E. D., Piscopo, D. M. & Niell, C. M. Large-scale imaging of cortical dynamics during sensory perception and behavior. J. Neurophysiol. 115, 2852–2866 (2016).

Steinmetz, N. A. et al. Aberrant cortical activity in multiple GCaMP6-expressing transgenic mouse lines. eNeuro https://doi.org/10.1523/ENEURO.0207-17.2017 (2017).

Ayaz, A., Saleem, A. B., Schölvinck, M. L. & Carandini, M. Locomotion controls spatial integration in mouse visual cortex. Curr. Biol. 23, 890–894 (2013).

Ratzlaff, E. H. & Grinvald, A. A tandem-lens epifluorescence macroscope: hundred-fold brightness advantage for wide-field imaging. J. Neurosci. Methods 36, 127–137 (1991).

Carandini, M. et al. Imaging the awake visual cortex with a genetically encoded voltage indicator. J. Neurosci. 35, 53–63 (2015).

Kalatsky, V. A. & Stryker, M. P. New paradigm for optical imaging: temporally encoded maps of intrinsic signal. Neuron 38, 529–545 (2003).

Yang, Z., Heeger, D. J. & Seidemann, E. Rapid and precise retinotopic mapping of the visual cortex obtained by voltage-sensitive dye imaging in the behaving monkey. J. Neurophysiol. 98, 1002–1014 (2007).

Pologruto, T. A., Sabatini, B. L. & Svoboda, K. ScanImage: flexible software for operating laser scanning microscopes. Biomed. Eng. Online 2, 13 (2003).

Pachitariu, M. et al. Suite2p: beyond 10,000 neurons with standard two-photon microscopy. Preprint at https://www.biorxiv.org/content/early/2017/07/20/061507 (2016).

Peron, S. P., Freeman, J., Iyer, V., Guo, C. & Svoboda, K. A cellular resolution map of barrel cortex activity during tactile behavior. Neuron 86, 783–799 (2015).

Dipoppa, M. et al. Vision and locomotion shape the interactions between neuron types in mouse visual cortex. Neuron 98, 602–615.e8 (2018).

Rossant, C. et al. Spike sorting for large, dense electrode arrays. Nat. Neurosci. 19, 634–641 (2016).

Kadir, S. N., Goodman, D. F. M. & Harris, K. D. High-dimensional cluster analysis with the masked EM algorithm. Neural Comput. 26, 2379–2394 (2014).

Acknowledgements

We thank N. Burgess and B. Haider for helpful discussions, and C. Reddy, S. Schroeder, and M. Krumin for help with experiments. This work was funded by a Sir Henry Dale Fellowship, awarded by the Wellcome Trust/Royal Society (grant 200501) to A.B.S., EPSRC PhD award F500351/1351 to E.M.D., Human Frontier Science Program and EC Horizon 2020 grants to J.F. (grant 709030), the Wellcome Trust (grants 205093 and 108726) to M.C. and K.D.H., and the Simons Collaboration on the Global Brain (grant 325512) to M.C. and K.D.H. M.C. holds the GlaxoSmithKline/Fight for Sight Chair in Visual Neuroscience.

Reviewer information

Nature thanks M. Mehta and the other anonymous reviewer(s) for their contribution to the peer review of this work.

Author information

Contributions

All authors contributed to the design of the study. A.B.S. carried out the electrophysiology experiments and E.M.D. the imaging experiments; A.B.S., E.M.D. and J.F. analysed the data. All authors wrote the paper.

Ethics declarations

Competing interests

The authors declare no competing interests.

Additional information

Publisher’s note: Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Extended data figures and tables

Extended Data Fig. 1 Design of virtual environment with two visually matching segments.

a, The virtual corridor had four prominent landmarks. Two visual patterns (grating and plaid) were repeated at two positions, 40 cm apart, to create two visually matching segments in the room, from 10 cm to 50 cm and from 50 cm to 90 cm (red and blue bars in the left panel), as illustrated in the right panel. b, Example screenshots of the right visual field displayed in the environment when the animal is at different positions. Each row displays screen images at positions approximately 40 cm apart.

Extended Data Fig. 2 Spatial averaging of visual cortical activity confirms the difference in response between visually matching locations.

a, Mean response of V1 neurons as a function of the distance from the peak response location (2,422 cells with peak response between 15 and 85 cm along the corridor). To ensure that the average captured reliable, spatially specific responses, the peak response location for each cell was estimated only from odd trials, whereas the mean response was computed only from even trials. b, Population average of responses shown in a. Lower values of the side peaks compared to central peak indicate strong preference of V1 neurons for one segment of the corridor over the other visually matching segment (40 cm from peak response).
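The odd/even cross-validation described in this legend can be sketched as follows. This is a minimal illustration under stated assumptions: responses are stored as a trials-by-position-bins array for one cell, and the function name and the NaN padding outside the corridor are hypothetical choices, not taken from the Supplementary Methods.

```python
import numpy as np

def cross_validated_peak_aligned(responses, max_shift):
    """Align a cell's even-trial mean response to its odd-trial peak location.

    responses: array (n_trials, n_position_bins) for one cell.
    max_shift: number of bins kept on each side of the peak.
    Returns an array of length 2 * max_shift + 1, NaN where the window
    falls outside the corridor.
    """
    responses = np.asarray(responses, dtype=float)
    odd_mean = responses[0::2].mean(axis=0)   # trials 1, 3, 5, ... (1-based)
    even_mean = responses[1::2].mean(axis=0)  # trials 2, 4, 6, ...
    peak_bin = int(np.argmax(odd_mean))       # peak estimated from odd trials only
    n_bins = responses.shape[1]
    aligned = np.full(2 * max_shift + 1, np.nan)
    for i, shift in enumerate(range(-max_shift, max_shift + 1)):
        b = peak_bin + shift
        if 0 <= b < n_bins:
            aligned[i] = even_mean[b]         # response read out from even trials only
    return aligned
```

Because the peak location and the response come from disjoint sets of trials, a central peak in the aligned average cannot arise from selecting noise maxima.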

Extended Data Fig. 3 Simulation of purely visual responses to position in VR.

a–f, Responses of six simulated neurons with purely visual responses, produced by a complex cell model with varying spatial frequency, orientation, or receptive field location. The images on the left of each panel show the quadrature pair of complex cell filters; traces on the right show the cell’s simulated response as a function of position in the virtual environment. Simulation parameters matched those that are commonly observed in mouse V1 (spatial frequency: 0.04, 0.05, 0.06 or 0.07 cycles per degree; orientation: uniform between 0° and 179° but with twice as many cells for cardinal orientations; receptive field positions: 40°, 50°, 70° and 80°, similar to those of the V1 neurons we considered for analysis). In rare cases (as in f) when the receptive fields do not match the features of the environment, there is little selectivity along the corridor. These cases lead to lower spatial modulation ratios. g, The spatial modulation ratios calculated for the complex cell simulations are close to 1 (0.97 ± 0.17), and different from the ratios calculated for V1 neurons. Black curve is the same as in Fig. 1g.
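A complex cell built from a quadrature pair of filters is commonly implemented as an energy model. The sketch below shows that standard construction and is not necessarily the exact simulation used here: the isotropic Gaussian envelope, the filter size, the units (cycles per pixel rather than cycles per degree) and all function names are assumptions made for illustration.

```python
import numpy as np

def gabor_quadrature_pair(size_px, sf_cycles_per_px, orientation_deg, sigma_px):
    """Return (even, odd) Gabor filters that differ by 90 degrees in phase."""
    half = size_px // 2
    y, x = np.mgrid[-half:half + 1, -half:half + 1].astype(float)
    theta = np.deg2rad(orientation_deg)
    # Coordinate along the direction of luminance modulation.
    u = x * np.cos(theta) + y * np.sin(theta)
    envelope = np.exp(-(x ** 2 + y ** 2) / (2.0 * sigma_px ** 2))
    phase = 2.0 * np.pi * sf_cycles_per_px * u
    return envelope * np.cos(phase), envelope * np.sin(phase)

def complex_cell_response(image_patch, even, odd):
    """Energy-model response: sum of squared outputs of the quadrature pair."""
    return np.sum(image_patch * even) ** 2 + np.sum(image_patch * odd) ** 2
```

Applying `complex_cell_response` to the screen image seen at each corridor position would give a response profile that depends only on the visual scene, and therefore repeats in the two visually matching segments.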

Extended Data Fig. 4 The spatial modulation of V1 responses is not due to end-of-corridor visual cues.

a, Diagram of the 200-cm virtual corridor, containing the same grating and plaid as the regular corridor, repeated four times instead of twice. b, Visual scenes from locations within the first 100 cm of the extended corridor, separated by 40 cm, are visually (pixel-to-pixel) identical. c, Cumulative distribution of the spatial modulation ratio across the two mice that were placed in the long corridor (7 sessions, 2 mice; median ± m.a.d.: 0.62 ± 0.26; 1,044 neurons, black line). Grey line shows the spatial modulation ratio predicted by the non-spatial model (which predicts activity from the visual scene, trial onset and offset, speed, reward, pupil size and displacement from the central position of the eye; see Extended Data Fig. 7, non-spatial model). The two distributions are significantly different (two-sided Wilcoxon rank sum test; P < 10⁻¹⁴).

Extended Data Fig. 5 The spatial modulation of V1 responses cannot be explained by speed.

a, Speed–position plots for all single-trial trajectories in three example recording sessions. b, Response profile of example V1 cells in each session as a function of position in the corridor, stratified for three speed ranges corresponding to the shading bands in a. c, Two-dimensional response profiles of the same example neurons showing activity as a function of position and running speed for speeds higher than 1 cm s⁻¹.

Extended Data Fig. 6 The spatial modulation of V1 responses cannot be explained by reward, pupil position or diameter.

a, Normalized response as a function of position in the virtual corridor, for sessions without reward (1,173 neurons). Data come from two out of four mice that ran the environment without reward (8 sessions, 2 mice). Responses in even trials (right) are ordered according to the position of maximum activity measured in odd trials (left). b, Distribution of spatial modulation ratio for unrewarded sessions (8 sessions; median ± m.a.d. = 0.57 ± 0.37; cyan) and for modelled ratios obtained from the non-spatial model on the same sessions (black, see Extended Data Fig. 7). The two distributions are significantly different (two-sided Wilcoxon rank sum test; P < 10⁻⁸). c, Pupil position as a function of location in the virtual corridor, for an example session with steady eye position. Sessions with steady eye positions were defined as those with no significant difference in eye positions between visually matching positions 40 cm apart (unpaired t-test, significance threshold P < 0.01). Thin red curves, position trajectories on individual trials; thick curves, average. Top and bottom panels: x and y coordinates of the pupil, respectively. d, Distribution of spatial modulation ratio for sessions with steady eye position (10 sessions; median ± m.a.d. = 0.63 ± 0.33; 1,154 neurons, red) and for modelled ratios obtained from the non-spatial model on the same sessions (black). The two distributions are significantly different (two-sided Wilcoxon rank sum test; P < 10⁻¹⁴). e, Pupil size as a function of position for an example session with steady pupil size. f, Distribution of spatial modulation ratio for sessions with steady pupil size (5 sessions; median ± m.a.d. = 0.63 ± 0.33; 1,069 neurons, red) and for modelled ratios obtained from the non-spatial model on the same sessions (black). The two distributions are significantly different (two-sided Wilcoxon rank sum test; P < 10⁻¹³).

Extended Data Fig. 7 Observed values of spatial modulation ratio can be modelled only using spatial position.

a, b, We constructed three models to predict the activity of individual V1 neurons from successively larger sets of predictor variables. In the simplest, the visual model, activity is required to depend only on the visual scene visible from the mouse’s current location, and is thus constrained to be a function of space that repeats in the visually matching section of the corridor. The second, the non-spatial model, also includes modulation by behavioural factors that can differ within and across trials: speed, reward times, pupil size, and eye position. Because these variables can differ between the first and second halves of the track, modelled responses need no longer be exactly symmetrical; however, this model does not explicitly use space as a predictor. The final, the spatial model, extends the previous model by also allowing responses to the two matching segments to vary in amplitude, thereby explicitly including space as a predictor. Example single-trial predictions are shown as a function of time in a, together with measured fluorescence. Spatial profiles derived from these predictions are shown in b. c, Cumulative distributions of spatial modulation ratio for the three models (purple). For comparison, the black curve shows the ratio of peaks derived from the data (even trials) (median ± m.a.d.: visual model, 0.99 ± 0.03; P < 10⁻⁴⁰, two-sided Wilcoxon rank sum test; non-spatial model, 0.83 ± 0.18; P < 10⁻⁴⁰; spatial model, 0.60 ± 0.27; P = 0.09, n = 2,422 neurons). d, Measured spatial modulation ratio versus predictions of the three models. Each point represents a cell; red ellipse represents best fit Gaussian, dotted line indicates its slope. The purely visual model (top) does poorly, and is improved only slightly by adding predictions from speed, reward, pupil size, and eye position (middle). Adding an explicit prediction from space provides a much better match to the data (bottom). r, Pearson’s correlation coefficient, n = 2,422 neurons; θ, orientation of the major axis of the fitted ellipse.

Extended Data Fig. 8 Behavioural performance in the task.

a, Illustration of the virtual reality environment with four prominent landmarks, a reward zone, and the zones that define trial types: early, correct and late. b, Percentage of trials during which the animal makes behavioural errors, by licking either too early or too late at three different contrast levels: 18% (low), 60% (medium) or 72% (high). c, Illustration of performance on all trials of one example recording session. Each row represents a trial, black dots represent positions where the animal licked, and cyan dots indicate the delivery of a water reward. Coloured bars indicate the outcome of the trial (red, early; green, correct; blue, late). d–f, Successful performance relies on vision. d, The mouse did not lick when the room was presented at zero contrast. e, On some trials, we changed the gain between the animals’ physical movement and movement in the virtual environment, thus changing the distance to the reward zone (high gain resulting in shorter distance), while visual cues remained in the same place. When plotted as a function of the distance run, the licks of the animal shifted, indicating that the animal was not relying simply on the distance travelled from the beginning of the corridor. f, If the position of the visual cues was shifted forward or back (high or low room length (RL)), the lick position shifted accordingly, indicating that the animals relied on vision to perform the task.

Extended Data Fig. 9 Comparison of response properties between V1 and CA1 neurons and correlation of V1 and CA1 errors in the two halves of the environment.

a, Cumulative distribution of the stability of V1 and CA1 response profiles. Tuning stability (the stability of responses) was computed as the correlation between the spatial responses measured from the first half and the second half of the trials. V1 and CA1 responses were highly stable within each recording session: the tuning stability was >0.7 for more than 60% of neurons in both V1 and CA1. b, Cumulative distribution of the Skaggs information (bits per spike) carried by V1 and CA1 neurons. Note that while V1 and CA1 neurons had comparable amounts of spatial information, this does not suggest that V1 represents space as strongly as CA1, because the Skaggs information metric mixes the influences of vision and spatial modulation. c, Normalized firing rate averaged across V1 or CA1 neurons as a function of distance from the peak response (similar to Extended Data Fig. 2b). Unlike CA1, the mean response averaged from V1 neurons shows a second peak at ±40 cm, consistent with the repetition of the visual scene. d, e, Pearson’s correlation between position errors estimated from V1 and CA1 populations in the first half of the corridor (shown in d). Each point represents a behavioural session (n = 8 sessions); x-axis values represent measured correlations; y-axis values represent correlations calculated after having shuffled the data within the times where the speed was similar (similar to Fig. 2h). The occurrence of error correlations in the unshuffled data indicates that these correlations are not due to rewards (which did not occur in this half of the maze) or licks (which were rare, and the 100-ms periods surrounding the few that occurred were removed from analysis). The significance of the difference between the measured and shuffled correlations was calculated using a two-sided two-sample t-test. f, Similar to e for the second half of the corridor.
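The two single-neuron measures in panels a and b can be sketched as follows. This is an illustrative example: tuning stability follows the definition given in the legend, and the information measure uses the standard Skaggs formula in bits per spike. The binning, the array layout (trials by position bins) and the function names are assumptions.

```python
import numpy as np

def tuning_stability(responses):
    """Pearson correlation between mean spatial profiles of the first and second half of trials.

    responses: array (n_trials, n_position_bins) for one neuron.
    """
    responses = np.asarray(responses, dtype=float)
    half = responses.shape[0] // 2
    first = responses[:half].mean(axis=0)
    second = responses[half:].mean(axis=0)
    return float(np.corrcoef(first, second)[0, 1])

def skaggs_information(rate_map, occupancy):
    """Spatial information in bits per spike (Skaggs formula)."""
    rate_map = np.asarray(rate_map, dtype=float)
    p = np.asarray(occupancy, dtype=float)
    p = p / p.sum()                      # occupancy probability per position bin
    mean_rate = np.sum(p * rate_map)
    if mean_rate <= 0:
        return 0.0
    ratio = rate_map / mean_rate
    valid = ratio > 0                    # zero-rate bins contribute 0 (limit of x log x)
    return float(np.sum(p[valid] * ratio[valid] * np.log2(ratio[valid])))
```

For example, a neuron that fires in only one of four equally occupied bins carries log2(4) = 2 bits per spike, whereas a neuron with a flat rate map carries none.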

Extended Data Fig. 10 Position decoded from CA1 activity helps to predict position decoded from V1 activity (and vice versa).

a, To test whether the positions encoded in V1 and CA1 populations are correlated with each other beyond what would be expected from a common influence of other spatial and non-spatial factors, we used a random forests decoder (TreeBagger implementation in MATLAB) to predict V1 or CA1 decoded positions from different predictors. We then tested whether the model prediction was further improved when we added the position decoded from the other area as an additional predictor (that is, using the positions decoded from CA1 to predict V1 decoded positions and vice versa). b, Adding CA1 decoded position as an additional predictor improved the prediction of V1 decoded positions in every recording session (that is, reduced the prediction errors). V1 and CA1 decoded positions are thus correlated with each other beyond what can be expected from a common contribution of position, speed, licks and reward to V1 and CA1 responses. c, Same as b for predicting CA1 decoded position.
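The logic of this comparison (held-out prediction error with and without the other area's decoded position) can be sketched as below. Note the substitution: to keep the example self-contained, ordinary linear least squares stands in for the random forest used in the study, so this illustrates the nested comparison only, not the decoder itself. The fold scheme, the error measure and the function names are assumptions.

```python
import numpy as np

def cv_prediction_error(X, y, n_folds=5):
    """Mean absolute held-out error of a linear least-squares fit (stand-in for the random forest)."""
    X = np.column_stack([np.ones(len(y)), np.asarray(X, dtype=float)])  # add intercept
    y = np.asarray(y, dtype=float)
    folds = np.arange(len(y)) % n_folds       # interleaved fold assignment
    errors = np.empty(len(y))
    for k in range(n_folds):
        test = folds == k
        coef, *_ = np.linalg.lstsq(X[~test], y[~test], rcond=None)
        errors[test] = np.abs(X[test] @ coef - y[test])
    return errors.mean()

def error_reduction_from_other_area(base_predictors, other_area_decoded, target_decoded):
    """Held-out error without and with the other area's decoded position as a predictor."""
    base = cv_prediction_error(base_predictors, target_decoded)
    full = cv_prediction_error(
        np.column_stack([base_predictors, other_area_decoded]), target_decoded)
    return base, full
```

If the second returned value is reliably smaller than the first, the other area's decoded position carries information about the target beyond that in the shared predictors.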

Supplementary information

Supplementary Information

Details of data analysis methods and description of models.

Supplementary Video 1

Video of animal performing the task in virtual reality. Top panel: Video of mouse while performing the navigation task in the virtual reality corridor, on one of the training sessions when no recording was made. Bottom panel: Illustration of the position of the animal along the length of the corridor. The ends of the reward zone are highlighted by cyan lines. On each trial, the positions where the animal licked are highlighted by a red mark, and the position where it was rewarded by a cyan mark.

Cite this article

Saleem, A.B., Diamanti, E.M., Fournier, J. et al. Coherent encoding of subjective spatial position in visual cortex and hippocampus. Nature 562, 124–127 (2018). https://doi.org/10.1038/s41586-018-0516-1

DOI: https://doi.org/10.1038/s41586-018-0516-1
