Abstract

The Job Search Self-Efficacy (JSSE) scale is a widely used measure in the job search literature. To date, the psychometric properties of the JSSE have been based only on classical test theory. This study used an item response theory approach to evaluate the JSSE’s psychometric properties and to clarify its structure. Participants were 429 recent university graduates. Rasch analysis supported the unidimensional structure of each of the two dimensions of the JSSE. Internal consistency reliabilities were good. The response categories functioned properly and the fit of the data to the Rasch model was good. Further, there was no noticeable differential item functioning across gender. The results provided evidence that the JSSE is a reliable measure for assessing job search self-efficacy beliefs among graduate job seekers.

1 Introduction

Self-efficacy theory (Bandura, 1997) has provided a crucial framework for understanding job search beliefs and effort for 30-odd years (see Kim, Kim, & Lee, 2019; Saks, Zikic, & Koen, 2015). Job search self-efficacy is defined as the confidence in one’s ability to successfully search for jobs and to gain employment, and has long been found to be the most proximal determinant of employment among job seekers (Eden & Aviram, 1993; Kanfer & Hulin, 1985; Kanfer, Wanberg, & Kantrowitz, 2001; Saks & Ashforth, 1999). Despite this, the measurement of job search self-efficacy has been somewhat diverse with various researchers developing and using their own measure to assess the construct (see Caplan, Vinokur, Price, & van Ryn, 1989; Ellis & Taylor, 1983; Wanberg, Zhang, & Diehn, 2010; van Hoye, Van Hooft, Stremersch, & Lievens, 2019). To address the proliferation of job search self-efficacy measures in the job search literature in order to offer opportunities for the comparison of research results across studies, Saks et al. (2015) organised the various measures and integrated them into a single measure, the Job-Search Self-Efficacy (JSSE) scale. The JSSE scale is a 20-item measure, composed of two dimensions that tap important aspects of the job search beliefs and outcomes. Saks et al. (2015) named the two dimensions (a) Job-search self-efficacy behaviour (JSSE-B) and (b) Job-search self-efficacy outcomes (JSSE-O). The initial validity information reported by the authors for the JSSE is promising.

Yet, to date, the psychometric properties of the JSSE have been based only on classical test theory (CTT) approaches. The CTT approach has inherent weaknesses, which limit its capability to provide full diagnostic information on the functioning of the JSSE. Most notably, the CTT approach cannot evaluate the 5-point Likert rating scale of the JSSE (see Kean, Bisson, Brodke, Biber, & Gross, 2017; Petrillo, Cano, McLeod, & Coon, 2015). Consequently, it remains unknown whether respondents use all of the response categories of the JSSE or not. Given job search self-efficacy’s importance in the job search literature, there is a clear need for further psychometric evaluation of the JSSE with robust psychometric techniques from modern test theory.

Moreover, there is little information about the JSSE’s ability to achieve measurement equivalence across gender because Saks et al. (2015) did not examine it. Thus, we do not know whether male and female respondents would interpret the scale items and the latent factor in the same way. This important information is needed because some previous work has shown that there are gender differences in job search activities, in general, and job search self-efficacy, in particular (Cifre, Vera, Sánchez-Cardona, & de Cuyper, 2018; Llinares-Insa, González-Navarro, Córdoba-Iñesta, & Zacarés-González, 2018; Papyrina, Strebel, & Robertson, 2020). For example, Eriksson and Lagerström (2012) found that, compared with men, women job seekers searched for jobs in areas closer to where they lived and that they received fewer firm contacts. They concluded that because the labour market is not gender-neutral, gender differences should be considered central in job search research. One advantage to gain from establishing the measurement equivalence of the JSSE across gender is that it will make it possible for future studies to meaningfully compare groups or individuals over time (see Davidov, Meuleman, Cieciuch, Schmidt, & Billiet, 2014; Jones, 2019; Putnick & Bornstein, 2016).

The aim of the current study was to provide further validity evidence for the JSSE using Rasch measurement (Rasch, 1960), which is one of a family of item response models. Rasch analysis has the capability to transform ordinal-level Likert scale data into interval-level data using the raw score-to-logit transformation (Andrich & Marais, 2019; Bond & Fox, 2015; Boone, Staver, & Yale, 2014; see Note 1). The linear Rasch measures allow for the differences between the response categories to be interpretable (Andrich, 1978, 2011; Boone & Noltemeyer, 2017; Harwell & Gatti, 2001; Medvedev et al., 2019). Another aim was to examine whether the JSSE latent construct and items have the same meaningful structure for men and women. To achieve the research aims, this study used data from recent university graduates. This is because recent university graduates generally search for jobs to enable them to use their newly acquired knowledge and skills (i.e. school-to-work transition). Young graduates seeking jobs, following the completion of compulsory schooling, may find the process challenging and would require personal agency in the form of self-efficacy beliefs to persevere, devote more effort, and perceive control over the job search process.

2 Method

2.1 Participants

Data for the current analysis are from the School-to-Work-Transition (SWoT) study. The SWoT study aims at validating selected job search and career interest measures. A total of 480 recent university graduates in the Greater Accra Region of Ghana who were making the school-to-work transition were invited to participate in the SWoT study. Some individuals did not return their completed questionnaire whereas others abstained for various reasons. The final sample (N = 429) comprised 50.1% men and 49.9% women with a mean age of 24.11 years (SD = 2.80). Participants completed a questionnaire battery on job search and career interest together with consent forms. Inclusion criteria were being an unemployed recent university graduate looking for a job or serving under the one-year mandatory national service programme, and a willingness to participate. The study was approved by the Institutional Review Board of the University of Ghana (Ref#: ECH116/19-20).

2.2 Measure

Job search self-efficacy was assessed with the 20-item JSSE scale developed by Saks et al. (2015). The JSSE is a self-report measure (see Table 1), composed of two dimensions consisting of 10 items each. The two dimensions are Job-search self-efficacy behaviour (JSSE-B; 10 items) and Job-search self-efficacy outcomes (JSSE-O; 10 items). The JSSE asks respondents about their confidence/beliefs to successfully engage in job search behaviours and to receive favourable job search outcomes. All of the 20 items are rated on a 5-point Likert scale from 1 (not at all confident) to 5 (totally confident). Saks et al. (2015) reported Cronbach’s alpha reliability of 0.89 and 0.96 for the JSSE-B and JSSE-O, respectively, in a sample of 487 job seekers. Pilot work led to a slight modification of two phrases “cold calls” and “sales pitch” in two of the items used in the original study. In the current study, “cold calls” was modified to read “unsolicited calls” (item#4) and “sales pitch” was re-worded to read “persuasive talk” (item#6). In addition to the JSSE, study participants provided biographical data on gender and age (years).

2.3 Statistical Analysis

The statistical analysis was undertaken in WINSTEPS® (v4.4.2; Linacre, 2019), an item response theory based Rasch analysis software. To evaluate how well the JSSE data fit the Rasch model, the Rasch-Andrich rating scale model (RSM) was used (Andrich & Marais, 2019). The RSM was used because the JSSE consists of polytomous items that are rated on the same Likert response scale. RSM analysis was conducted separately for each of the two dimensions of the JSSE (i.e. JSSE-B and JSSE-O). Item difficulty, person and item reliabilities, person and item separation indices, point-measure correlation, response category functioning and threshold ordering, construct unidimensionality, and differential item functioning were all calculated in WINSTEPS®. Data-Rasch model fit was evaluated using the information-weighted fit (infit) and outlier-sensitive fit (outfit) mean-square (MNSQ) statistics. Infit and outfit MNSQ values greater than 0.5 but less than 1.5 were taken to indicate good data-model fit, as recommended by Aryadoust, Ng, and Sayama (2021), Boone et al. (2014), and Linacre (2020).
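To make the fit criteria concrete, the following is a minimal sketch of how category probabilities under the rating scale model, and the infit and outfit mean squares derived from them, could be computed for a single item. It is not the WINSTEPS implementation. The function names, the 0 to 4 category coding (the JSSE itself is scored 1 to 5), and all numeric inputs are assumptions made for illustration, and person and item measures are taken as already estimated.

```python
import math

def rsm_probs(theta, delta, taus):
    """Category probabilities under the Andrich rating scale model.

    theta: person measure (logits); delta: item difficulty (logits);
    taus: Andrich thresholds shared by all items (m values for m + 1 categories).
    Returns probabilities for categories 0..m.
    """
    logits = [0.0]  # category 0 has an empty sum
    for tau in taus:
        logits.append(logits[-1] + (theta - delta - tau))
    peak = max(logits)  # subtract the maximum for numerical stability
    weights = [math.exp(v - peak) for v in logits]
    total = sum(weights)
    return [w / total for w in weights]

def expected_and_variance(probs):
    """Model-expected score and its variance for one person-item encounter."""
    mean = sum(k * p for k, p in enumerate(probs))
    var = sum((k - mean) ** 2 * p for k, p in enumerate(probs))
    return mean, var

def item_fit(responses, thetas, delta, taus):
    """Infit and outfit mean squares for one item.

    Infit is the information-weighted mean square (squared residuals summed,
    divided by summed model variances); outfit is the unweighted mean of the
    squared standardised residuals.
    """
    sq_resid_sum = 0.0
    var_sum = 0.0
    z2_sum = 0.0
    for x, theta in zip(responses, thetas):
        mean, var = expected_and_variance(rsm_probs(theta, delta, taus))
        sq = (x - mean) ** 2
        sq_resid_sum += sq
        var_sum += var
        z2_sum += sq / var
    return sq_resid_sum / var_sum, z2_sum / len(responses)

def within_cutoffs(mnsq, low=0.5, high=1.5):
    """Apply the 0.5 < MNSQ < 1.5 rule used in this study."""
    return low < mnsq < high

# Hypothetical thresholds, person measures, and responses for one item.
taus = [-1.5, -0.5, 0.5, 1.5]
thetas = [-2.0, -1.0, 0.0, 1.0, 2.0]
responses = [0, 1, 2, 3, 4]
infit, outfit = item_fit(responses, thetas, delta=0.0, taus=taus)
```

Values close to 1.0 indicate that the observed responses vary about as much as the model predicts, which is why the acceptance band is centred on 1.0.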

Rasch models assume that items in a measure are unidimensional and locally independent (Edwards et al., 2018; Fan & Bond, 2019; Linacre, 2009). To test the assumptions of unidimensionality and local independence, the principal components analysis of residuals (PCA-R) test in WINSTEPS was used. The PCA-R looks for patterns in the data (i.e. the correlation matrix of the residuals) to find the component/dimension that accounts for the biggest variance in the residuals (Chou & Wang, 2010; Smith & Miao, 1994; Wright, 1994, 2000). The raw variance explained by the measures in the data is referred to as the “Rasch dimension/component” (Boone & Staver, 2020). Any remaining raw unexplained variance in the data is referred to as the “secondary dimension/component” or “contrast”. By default, WINSTEPS produces up to five secondary dimensions/contrasts in the data (see Boone & Staver, 2020). To satisfy the unidimensionality assumption in Rasch measurement, the unexplained variance in the first contrast/first secondary dimension should have an eigenvalue less than 2.00 (see Linacre, 1998a, 1998b; Raîche, 2005; Smith, 2002).

Overall, the PCA-R computation processes are similar to the traditional dimension reduction PCA test used in common factor analysis, except that the PCA-R results are interpreted differently because they indicate contrasts between opposing components rather than loadings on a factor. Note also that WINSTEPS computes a PCA using the residuals instead of the original observations. For more information on the WINSTEPS data analysis procedures, see Linacre (2020). Local independence is an additional indicator of construct unidimensionality. Locally independent items suggest that the answer to one item on the measure is independent of the answer to another item (De Bruin & Henn, 2013; De Bruin, Hill, Henn, & Muller, 2013; Hattie, 2015; Wilson, 1988). In other words, a wrong or correct response to an item should not bring about a wrong or correct response to another item on the measure.
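The idea behind the first-contrast eigenvalue can be sketched as follows. Given standardised residuals for each item (one list per item, one value per person), the code builds the inter-item residual correlation matrix and extracts its largest eigenvalue by power iteration. This is an illustrative approximation under stated assumptions rather than the WINSTEPS routine: the function names and the toy residuals are hypothetical, and each residual column is assumed to have non-zero variance.

```python
import math

def correlation_matrix(columns):
    """Pearson correlations between columns (one list of residuals per item)."""
    n = len(columns[0])
    scaled = []
    for col in columns:
        mean = sum(col) / n
        dev = [v - mean for v in col]
        norm = math.sqrt(sum(d * d for d in dev))  # assumes non-zero variance
        scaled.append([d / norm for d in dev])
    return [[sum(a * b for a, b in zip(ci, cj)) for cj in scaled] for ci in scaled]

def largest_eigenvalue(matrix, iterations=500):
    """Dominant eigenvalue of a symmetric positive semi-definite matrix."""
    size = len(matrix)
    vec = [1.0 + 0.01 * i for i in range(size)]  # slightly uneven starting vector
    norm = math.sqrt(sum(v * v for v in vec))
    vec = [v / norm for v in vec]
    for _ in range(iterations):
        nxt = [sum(row[j] * vec[j] for j in range(size)) for row in matrix]
        norm = math.sqrt(sum(v * v for v in nxt))
        vec = [v / norm for v in nxt]
    # Rayleigh quotient of the converged unit vector
    product = [sum(row[j] * vec[j] for j in range(size)) for row in matrix]
    return sum(p * v for p, v in zip(product, vec))

# Hypothetical standardised residuals for three items and six persons.
residuals = [
    [0.4, -1.1, 0.7, 0.2, -0.5, 0.3],
    [-0.6, 0.9, -0.2, 0.5, 0.1, -0.7],
    [0.3, 0.2, -0.9, -0.4, 0.8, 0.0],
]
first_contrast = largest_eigenvalue(correlation_matrix(residuals))
```

Because a correlation matrix has ones on its diagonal, its eigenvalues sum to the number of items, which is why the first contrast is read in "item units" and a value below 2.00 is interpreted as less than the strength of two items.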

Construct unidimensionality was evaluated through the principal components analysis of the standardised residuals (PCA-R). Each subscale was considered unidimensional if the Rasch dimension explained more than 40% of the total variance (Cordier et al., 2017; Linacre, 2020) and the eigenvalue of the first contrast/secondary dimension was less than 2.00 (see Linacre, 1998a, 1998b; Raîche, 2005; Smith, 2002). Additionally, residual correlation values between item pairs were inspected to determine local independence (Edwards et al., 2018; Testa et al., 2019). Items were considered locally independent of each other if the residual correlation between the items in every pair was below 0.30 (Lambert et al., 2013; Røe et al., 2014; Zhong et al., 2014). Average measures and thresholds were calculated to assess the response category functioning. Response category functioning was considered acceptable if the Andrich thresholds were correctly ordered (i.e. progressed monotonically; Hansen & Kjaersgaard, 2020), supplemented by well-ordered category probability curves and fit statistics less than 2.00. Interpreted much like Cronbach’s alpha, person and item reliabilities typically range from 0 to 1, with estimates >0.8 considered evidence of good internal consistency reliability (Bond & Fox, 2015; Boone & Noltemeyer, 2017).
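The decision rules in this paragraph can be collected into a small checklist, sketched below. The function and key names are hypothetical. The variance explained (45.8%), first-contrast eigenvalue (1.83), and largest residual correlation (.16) are the JSSE-B values reported in Section 3.3, whereas the Andrich thresholds are invented placeholders because the estimated thresholds are not given in the text.

```python
def thresholds_ordered(thresholds):
    """True if the Andrich thresholds increase strictly from step to step."""
    return all(a < b for a, b in zip(thresholds, thresholds[1:]))

def subscale_checks(variance_explained_pct, first_contrast_eigenvalue,
                    max_residual_correlation, thresholds):
    """Apply the cut-offs described above and report each outcome separately."""
    return {
        "rasch_dimension_over_40pct": variance_explained_pct > 40.0,
        "first_contrast_under_2": first_contrast_eigenvalue < 2.0,
        "locally_independent": max_residual_correlation < 0.30,
        "thresholds_ordered": thresholds_ordered(thresholds),
    }

# JSSE-B summary values from Section 3.3; the four thresholds are hypothetical.
jsse_b = subscale_checks(45.8, 1.83, 0.16, [-2.1, -0.6, 0.5, 2.2])
```

Keeping the four outcomes separate, rather than collapsing them into a single pass/fail flag, makes it easier to see which criterion a subscale fails when one of them is not met.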

Separation indices provide important, additional information about a test’s functioning relative to internal construct validity. Separation indices typically range from 0 to infinity, and higher indices are indicative of better test functioning (Boone, 2016; Boone et al., 2014). The person separation index shows how well the scale items distinguish participants into different ability levels on the trait. A person separation index >2.00 is deemed desirable. Relatedly, item separation demonstrates how well the study participants can separate the items along the trait construct (Bond & Fox, 2015). An item separation index >2.00 reflects the ability of the test to distinguish, at least, two person-ability levels (e.g. low versus high) along the construct (Chang, Wang, Tang, Cheng, & Lin, 2014). Uniform differential item functioning (DIF; Henn & Morgan, 2019) analysis was performed across gender to investigate whether the JSSE items were interpreted and used in the same way by men and women. DIF was considered noticeable only when the DIF contrast exceeded 0.50 logits and the Mantel χ2 statistic was significant (p < .05); a DIF contrast <.50 together with a non-significant Mantel χ2 statistic (p > .05) indicated a lack of noticeable DIF across groups.
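Separation and reliability are two expressions of the same information, linked by the standard Rasch relationship G = sqrt(R / (1 − R)), where R is the reliability and G is the separation index. The short sketch below, with hypothetical function names, shows the conversion in both directions along with the commonly used strata formula (4G + 1) / 3. Because published reliabilities are rounded to two decimals, separation values recovered this way will match software output only approximately.

```python
import math

def separation_from_reliability(reliability):
    """Separation index G implied by a reliability R (0 <= R < 1)."""
    return math.sqrt(reliability / (1.0 - reliability))

def reliability_from_separation(separation):
    """Reliability R implied by a separation index G."""
    return separation ** 2 / (1.0 + separation ** 2)

def strata(separation):
    """Number of statistically distinct levels, (4G + 1) / 3."""
    return (4.0 * separation + 1.0) / 3.0

# A reliability of 0.80 corresponds to the separation cut-off of 2.00.
cutoff_separation = separation_from_reliability(0.80)
```

This correspondence explains why the reliability criterion (>0.8) and the separation criterion (>2.00) used in this study tend to be met or missed together.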

3 Results

3.1 Response Category Functioning

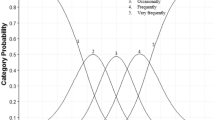

The JSSE uses a 5-point Likert rating scale from 1 (not at all confident) to 5 (totally confident) to assess job search self-efficacy beliefs. In this study, the average measures progressed monotonically across the five response categories (see Table 1). Fit statistics of the response categories were excellent, with the infit MNSQ statistic ranging from 0.91 to 1.27 and the outfit MNSQ statistic ranging from 0.90 to 1.21. The fit statistics provided further evidence that the response categories of the JSSE were correctly ordered. Inspection of the category probability curves for each dimension revealed that each response category exhibited a clear-cut peak on the underlying construct with four distinct Andrich thresholds. The probability curves indicated that each response category was, in turn, the most probable response over some range of the trait continuum. Figures 1 and 2 report the test information function/curve, which plots information in the data relative to each measure or score on the scale.

Fig. 1 Test information function for the JSSE-B. Note. The test information function/curve displays information for the JSSE-B along the latent trait continuum. The curve shows that the JSSE-B yields most information between approximately −2.0 and +1.5 logits (see rotated rectangle). Approximately 82% of the person measures fell within the rotated rectangle area, which indicates that the JSSE-B yields sensitive measures for most of the participants. JSSE-B = job-search self-efficacy behaviour

Fig. 2 Test information function for the JSSE-O. Note. The test information function/curve displays information for the JSSE-O along the latent trait continuum. The curve shows that the JSSE-O yields most information between approximately −2.0 and +2.0 logits (see rotated rectangle). Approximately 85% of the person measures fell within the rotated rectangle area, which indicates that the JSSE-O yields sensitive measures for most of the participants. JSSE-O = job-search self-efficacy outcomes

3.2 Data-Model Fit

The fit of the JSSE data to the Rasch model was evaluated using the infit and outfit MNSQ goodness-of-fit statistics. Results showed that the JSSE data fitted the Rasch model very well (see Table 2). As can be seen in Table 2, the infit MNSQ statistic for the 10-item JSSE-B ranged from 0.90 to 1.40 whereas the outfit MNSQ statistic ranged from 0.90 to 1.41. Both the infit and outfit MNSQ values were well within the acceptable cut-off criteria of >0.5 and <1.5 recommended by Linacre (2020) and by Aryadoust et al. (2021). In addition, the point-measure correlation (PTMEA) estimates were strong and in the positive direction from (r = .62) to (r = .72). Similarly, for the 10-item JSSE-O, the infit MNSQ values were from 0.87 to 1.17 and the outfit MNSQ values were from 0.87 to 1.14. The PTMEA estimates ranged from (r = .67) to (r = .75).

3.3 Unidimensionality and Local Independence

Principal components analysis of the residuals showed that the Rasch dimension explained 45.8% of the total raw variance in the JSSE-B data. The eigenvalue of the first component (i.e. first contrast) of the raw unexplained variance was 1.83 and satisfied the recommended cut-off criterion of eigenvalues below 2.00. Thus, the JSSE-B data demonstrated unidimensionality with very little possibility of a secondary dimension emerging from the data. Inspection of the item pair residual correlations revealed that the JSSE-B’s items were locally independent of each other because the largest correlation value was (r = .16; between item#1 and item#2). For the JSSE-O data, the Rasch dimension explained 48.3% of the total raw variance and the eigenvalue of the first component of the raw unexplained variance was 1.84. This eigenvalue confirmed the unidimensionality of the JSSE-O and revealed that the unexplained raw variance was substantively too random to constitute a secondary dimension. Similarly, inspection of the item pair residual correlations showed that the JSSE-O items were locally independent of each other. The largest item pair correlation value for the JSSE-O items was (r = .20; between item#11 and item#13).

3.4 Reliability and Separation Indices

Rasch reliability analysis showed that both the JSSE-B and JSSE-O had good internal consistency reliability estimates and separation indices. The person reliability estimate of the JSSE-B was 0.87 (person separation index = 2.58) whereas the item reliability was 0.83 (item separation index = 2.18). For the JSSE-O, the person reliability was 0.88 (person separation index = 2.70) and the item reliability was 0.82 (item separation index = 2.16).

3.5 Differential Item Functioning

Uniform DIF analysis across gender (male vs. female), using the recommended criteria of (a) a DIF contrast >0.5 logits and (b) a Mantel χ2 statistic with p < 0.05, indicated that no noticeable DIF was present in the data across the two groups for either the JSSE-B or the JSSE-O (see Table 3). Note that for DIF to be noticeable, both Mantel χ2 statistical significance (p < 0.05) and DIF magnitude (>0.5 logits) should be established simultaneously. The current results demonstrated that men and women did not differ in their interpretation and use of JSSE items.
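The joint decision rule can be written out explicitly, as in the sketch below. The DIF contrast is taken as the difference between an item's difficulty estimated separately in each group, and the Mantel χ2 p-value is treated as an input produced by the analysis software rather than computed here. The function names and example numbers are hypothetical and are not values from Table 3.

```python
def dif_contrast(difficulty_group_a, difficulty_group_b):
    """Difference in an item's difficulty (logits) between two groups."""
    return difficulty_group_a - difficulty_group_b

def noticeable_dif(contrast, mantel_p, size_cutoff=0.5, alpha=0.05):
    """DIF is flagged only when it is both large enough and significant."""
    return abs(contrast) > size_cutoff and mantel_p < alpha

# Hypothetical item: difficulty 0.21 logits for men and 0.05 logits for women.
contrast = dif_contrast(0.21, 0.05)
flagged = noticeable_dif(contrast, mantel_p=0.31)
```

Requiring both conditions guards against flagging trivially small differences that reach significance in large samples, as well as large but statistically unreliable differences.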

4 Discussion

In this study, we evaluated the psychometric properties of the JSSE scale (see Saks et al., 2015), a widely used instrument in job search research (see Kim et al., 2019), in a sample of recent university graduates. Rasch analysis, an item response theory approach, was used to perform the psychometric evaluation. The use of Rasch analysis enabled a detailed diagnostic assessment of the functioning of the JSSE. Various psychometric properties were examined, including response category functioning, construct unidimensionality, person and item reliability, data-model fit, and measurement invariance across gender. Overall, Rasch analysis provided sound evidence that the JSSE is a reliable measure for assessing job search self-efficacy beliefs and outcomes in job seeker populations. In the paragraphs that follow, we offer a detailed discussion of the results.

The 5-point Likert rating scale of the JSSE displayed a stable structure and satisfied the monotonicity assumptions inherent in Rasch measurement. The expectation of Rasch measurement is that, for each scale item, the response categories should be properly ordered relative to the item’s difficulty (Hagquist & Andrich, 2017; Khine, 2020). In other words, Rasch measurement expects that respondents should find it less challenging to endorse the response category “not at all confident” than the response category “only a little confident” and that successive response categories should be endorsed following this response pattern (Boone et al., 2014; Linacre, 2020). In this study, the response categories functioned in this expected order as indicated by the average measures and Andrich thresholds (see Table 1), suggesting that there was no disordering of the category thresholds and that there was no need for rescoring the responses. That is, respondents in the current study made good use of all five response options in endorsing the items.

Moreover, the JSSE-B and JSSE-O demonstrated unidimensionality as expected by the Rasch measurement theory. For both dimensions, the unexplained variance of the first contrast or first secondary dimension had an eigenvalue less than 2.00, suggesting that no secondary dimension was probable in the data. In other words, for each dimension of the JSSE, the unexplained variance of the first contrast/first secondary dimension displayed only one-item strength (i.e. had only one item), which falls short of the requirement that, at least, the strength of three items (Boone & Staver, 2020; Bravini, Giordano, Sartorio, Ferriero, & Vercelli, 2017; Linacre, 2020) is needed for a secondary dimension to be considered meaningful and acceptable. In addition, all of the items on the JSSE fitted the Rasch model well, suggesting that all items tapped their respective latent constructs. These results reflected good targeting between item difficulty and person ability. Besides, the item difficulty calibrations ranged from −0.17 to 0.33 logits for the JSSE-B and from −0.34 to 0.26 logits for the JSSE-O. Person and item reliabilities for the two dimensions were found to be good (JSSE-B: 0.87, 0.83; JSSE-O: 0.88, 0.82). That is, the results showed that the JSSE was able to locate items and persons along the trait continuum with sufficient precision. The reliability estimates obtained in this study are consistent with the requirement of the Rasch measurement (Boone & Noltemeyer, 2017).

Relatedly, the separation indices of the JSSE were good. For example, the person separation index of the JSSE-B was 2.58 while item separation index was 2.18. For the JSSE-O, person separation index was 2.70 and the item separation index was 2.16. These results indicated that (a) the sample size used in this study was large enough to establish the item difficulty hierarchy of the JSSE, and (b) the JSSE was sensitive enough to classify respondents into, at least, two ability levels (i.e. high job search self-efficacy versus low job search self-efficacy). These psychometric properties provide useful information about the utility of a measure such as the JSSE (see Bragstad et al., 2020).

Furthermore, there was no noticeable DIF in the interpretation of the items by male and female graduates in this study. The absence of noticeable DIF (>0.5 logits) in the JSSE indicates that the items demonstrated consistency in meaning across the two groups. This finding suggests that the JSSE is sufficiently robust to be used to detect differences in job search self-efficacy mean scores across groups or to meaningfully compare the job search self-efficacy mean score of individuals over time. Measurement equivalence is considered an important property of good measures (Davidov et al., 2014; Jones, 2019) because it provides opportunities not only to meaningfully compare groups but also to assess a measure’s responsiveness.

5 Limitations

This study has some limitations. First, job search self-efficacy was assessed using a self-report measure. Self-report data can be compromised by threats such as common method bias and social desirability. Second, although the sample size used in this study was found to be adequate for the Rasch model, a larger sample size may have enhanced the precision and fit of the data to the Rasch model. Third, participants in this study were recruited using convenience sampling techniques. Thus, generalization of the results ought to be done with caution. Fourth, DIF was not examined across age, ethnicity, and academic disciplinary background (e.g. social science versus humanities or physical sciences) in the present study. This limitation suggests an important direction for future research on the JSSE. Nonetheless, this study provided evidence that, in its current form, the 20-item JSSE scale fulfilled the requirements of Rasch measurement.

6 Conclusion

The Job Search Self-Efficacy (JSSE) scale is a popular measure in the job search literature. However, previous psychometric work on the measure relied only on classical test theory. This study used Rasch analysis, an item response theory approach, to provide useful information on the reliability and internal validity of the measure. Overall, the results showed that the JSSE has sound psychometric properties and can be used for job search research among recent university graduates making the school-to-work transition.

Data Availability

The data on which the article reports are available from the author on reasonable written request.

Notes

Whereas proponents of Rasch measurement theory advocate that Rasch models have the capability to convert ordinal scale data to linear measures, some scholars hold contrary views (see Adroher, Prodinger, Fellinghauer, & Tennant, 2018).

References

Adroher, N. D., Prodinger, B., Fellinghauer, C. S., & Tennant, A. (2018). All metrics are equal, but some metrics are more equal than others: A systematic search and review on the use of the term 'metric'. PLoS One, 13(3), e0193861. https://doi.org/10.1371/journal.pone.0193861.

Andrich, D. (1978). Scaling attitude items constructed and scored in the Likert tradition. Educational and Psychological Measurement, 38(3), 665–680. https://doi.org/10.1177/001316447803800308.

Andrich, D. (2011). Rating scales and Rasch measurement. Expert Review of Pharmacoeconomics Outcomes Research, 11(5), 571–585. https://doi.org/10.1586/ERP.11.59.

Andrich, D., & Marais, I. (2019). A course in Rasch measurement theory measuring in the educational, social and health sciences. Singapore: Springer Nature.

Aryadoust, V., Ng, L. Y., & Sayama, H. (2021). A comprehensive review of Rasch measurement in language assessment: Recommendations and guidelines for research. Language Testing, 38(1), 6–40. https://doi.org/10.1177/0265532220927487.

Bandura, A. (1997). Self-efficacy: The exercise of control. New York: W. H. Freeman and Company.

Bond, T. G., & Fox, C. M. (2015). Applying the Rasch model: Fundamental measurement in the human sciences (3rd ed.). New York: Routledge.

Boone, W. J. (2016). Rasch analysis for instrument development: Why, when, and how? Life Sciences Education, 15(4), 1–7. https://doi.org/10.1187/cbe.16-04-0148.

Boone, W. J., & Noltemeyer, A. (2017). Rasch analysis: A primer for school psychology researchers and practitioners. Cogent Education, 4(1), 1416898. https://doi.org/10.1080/2331186X.2017.1416898.

Boone, W. J., & Staver, J. R. (2020). Principal component analysis of residuals (PCAR). In W. J. Boone & J. R. Staver (Eds.), Advances in Rasch analyses in the human sciences (Vol. 2, pp. 13–24). Cham: Springer. https://doi.org/10.1007/978-3-030-43420-5_2.

Boone, W. J., Staver, J. R., & Yale, M. S. (2014). Rasch analysis in the human sciences. The Netherlands: Springer Nature.

Bragstad, L. K., Lerdal, A., Gay, C. L., Kirkevold, M., Lee, K. A., Lindberg, M. F., Skogestad, I. J., Hjelle, E. G., Sveen, U., & Kottorp, A. (2020). Psychometric properties of a short version of Lee fatigue scale used as a generic PROM in persons with stroke or osteoarthritis: Assessment using a Rasch analysis approach. Health and Quality of Life Outcomes, 18, 168. https://doi.org/10.1186/s12955-020-01419-8.

Bravini, E., Giordano, A., Sartorio, F., Ferriero, G., & Vercelli, S. (2017). Rasch analysis of the Italian lower extremity functional scale: Insights on dimensionality and suggestions for an improved 15-item version. Clinical Rehabilitation, 31(4), 532–543. https://doi.org/10.1177/0269.

Caplan, R. D., Vinokur, A. D., Price, R. H., & van Ryn, M. (1989). Job seeking, reemployment, and mental health: A randomized field experiment in coping with job loss. Journal of Applied Psychology, 74(5), 759–769. https://doi.org/10.1037/0021-9010.74.5.759.

Chang, K.-C., Wang, J.-D., Tang, H.-P., Cheng, C.-M., & Lin, C.-Y. (2014). Psychometric evaluation, using Rasch analysis, of the WHOQOL-BREF in heroin-dependent people undergoing methadone maintenance treatment: Further item validation. Health and Quality of Life Outcomes, 12, 148. http://www.hqlo.com/content/12/1/148.

Chou, Y.-T., & Wang, W.-C. (2010). Checking dimensionality in item response models with principal component analysis on standardized residuals. Educational and Psychological Measurement, 70(5), 717–731. https://doi.org/10.1177/0013164410379322.

Cifre, E., Vera, M., Sánchez-Cardona, I., & de Cuyper, N. (2018). Sex, gender identity, and perceived employability among Spanish employed and unemployed youngsters. Frontiers in Psychology, 9, 2467. https://doi.org/10.3389/fpsyg.2018.02467.

Cordier, R., Joosten, A., Clavé, P., Schindler, A., Bulow, M., Demir, N., Arslan, S. S., & Speyer, R. (2017). Evaluating the psychometric properties of the eating assessment tool (EAT-10) using Rasch analysis. Dysphagia, 32, 250–260. https://doi.org/10.1007/s00455-016-9754-2.

Davidov, E., Meuleman, B., Cieciuch, J., Schmidt, P., & Billiet, J. (2014). Measurement equivalence in cross-national research. Annual Review of Sociology, 40, 55–75. https://doi.org/10.1146/annurev-soc-071913-043137.

De Bruin, G. P., & Henn, C. M. (2013). Dimensionality of the 9-item Utrecht work engagement scale (UWES-9). Psychological Reports, 112, 1–12. https://doi.org/10.2466/01.03.PR0.112.3.788-799.

De Bruin, G. P., Hill, C., Henn, C. M., & Muller, K.-P. (2013). Dimensionality of the UWES-17: An item response modelling analysis. SA Journal of Industrial Psychology, 39(2), Art. #1148. https://doi.org/10.4102/sajip.v39i2.1148.

Eden, D., & Aviram, A. (1993). Self-efficacy training to speed reemployment: Helping people to help themselves. Journal of Applied Psychology, 78(3), 352–360. https://doi.org/10.1037/0021-9010.78.3.352.

Edwards, M. C., Houts, C. R., & Cai, L. (2018). A diagnostic procedure to detect departures from local independence in item response theory models. Psychological Methods, 23(1), 138–149. https://doi.org/10.1037/met0000121.

Ellis, R. A., & Taylor, M. S. (1983). Role of self-esteem within the job search process. Journal of Applied Psychology, 68(4), 632–640. https://doi.org/10.1037/0021-9010.68.4.632.

Eriksson, S., & Lagerström, J. (2012). The labor market consequences of gender differences in job search. Journal of Labor Research, 33, 303–327. https://doi.org/10.1007/s12122-012-9132-2.

Fan, J., & Bond, T. (2019). Applying Rasch measurement in language assessment: Unidimensionality and local independence. In V. Aryadoust & M. Raquel (Eds.), Quantitative data analysis for language assessment (vol. 1): Fundamental techniques (pp. 83–102). London: Routledge.

Hagquist, C., & Andrich, D. (2017). Recent advances in analysis of differential item functioning in health research using the Rasch model. Health and Quality of Life Outcomes, 15, 181. https://doi.org/10.1186/s12955-017-0755-0.

Hansen, T., & Kjaersgaard, A. (2020). Item analysis of the eating assessment tool (EAT-10) by the Rasch model: A secondary analysis of cross-sectional survey data obtained among community-dwelling elders. Health and Quality of Life Outcomes, 18, 139. https://doi.org/10.1186/s12955-020-01384-2.

Harwell, M. R., & Gatti, G. G. (2001). Rescaling ordinal data to interval data in educational research. Review of Educational Research, 71(1), 105–131. https://doi.org/10.3102/00346543071001105.

Hattie, J. (2015). Dimensionality of tests: Methodology. In International Encyclopedia of the Social & Behavioral Sciences (2nd ed., Vol. 6, pp. 437–439). Amsterdam: Elsevier Ltd. https://doi.org/10.1016/b978-0-08-097086-8.44016-x.

Henn, C., & Morgan, B. (2019). Differential item functioning of the CESD-R and GAD-7 in African and white working adults. SA Journal of Industrial Psychology, 45(0), a1663. https://doi.org/10.4102/sajip.v45i0.1663.

Jones, R. N. (2019). Differential item functioning and its relevance to epidemiology. Current Epidemiology Reports, 6, 174–183. https://doi.org/10.1007/s40471-019-00194-5.

Kanfer, R., & Hulin, C. L. (1985). Individual differences in successful job searches following lay-off. Personnel Psychology, 38(4), 835–847. https://doi.org/10.1111/j.1744-6570.1985.tb00569.x.

Kanfer, R., Wanberg, C. R., & Kantrowitz, T. M. (2001). Job search and employment: A personality–motivational analysis and meta-analytic review. Journal of Applied Psychology, 86(5), 837–855. https://doi.org/10.1037/0021-9010.86.5.837.

Kean, J., Bisson, E. F., Brodke, D. S., Biber, J., & Gross, P. H. (2017). An introduction to item response theory and Rasch analysis: Application using the eating assessment tool (EAT-10). Brain Impairment, 19(1), 91–102. https://doi.org/10.1017/BrImp.2017.31.

Khine, M. S. (Ed.). (2020). Rasch measurement applications in quantitative educational research. Singapore: Springer Nature.

Kim, J. G., Kim, H. J., & Lee, K.-H. (2019). Understanding behavioral job search self-efficacy through the social cognitive lens: A meta-analytic review. Journal of Vocational Behavior, 112, 17–34. https://doi.org/10.1016/j.jvb.2019.01.004.

Lambert, S. D., Pallant, J. F., Boyes, A. W., King, M. T., Britton, B., & Girgis, A. (2013). A Rasch analysis of the hospital anxiety and depression scale (HADS) among cancer survivors. Psychological Assessment, 25, 379–390. https://doi.org/10.1037/a0031154.

Linacre, J. M. (1998a). Detecting multidimensionality: Which residual data-type works best? Journal of Outcome Measurement, 2(3), 266–283.

Linacre, J. M. (1998b). Structure in Rasch residuals: Why principal components analysis? Rasch Measurement Transactions, 12(2), 636.

Linacre, J. M. (2009). Local independence and residual covariance: A study of Olympic figure skating ratings. Journal of Applied Measurement, 10(2), 157–169.

Linacre, J. M. (2019). Winsteps® (version 4.4.2) [computer software]. Beaverton, Oregon: Winsteps.com. Retrieved May 8, 2020. Available from https://www.winsteps.com/

Linacre, J. M. (2020). Winsteps® (version 4.5.2) [computer software]. Beaverton, Oregon: Winsteps.com. Retrieved April 28, 2020. Available from https://www.winsteps.com/

Llinares-Insa, L. I., González-Navarro, P., Córdoba-Iñesta, A. I., & Zacarés-González, J. J. (2018). Women’s job search competence: A question of motivation, behavior, or gender. Frontiers in Psychology, 9, 137. https://doi.org/10.3389/fpsyg.2018.00137.

Medvedev, O. N., Krägeloh, C. U., Hill, E. M., Billington, R., Siegert, R. J., Webster, C. S., Booth, R. J., & Henning, M. A. (2019). Rasch analysis of the perceived stress scale: Transformation from an ordinal to a linear measure. Journal of Health Psychology, 24(8), 1070–1081. https://doi.org/10.1177/1359105316689603.

Papyrina, V., Strebel, J., & Robertson, B. (2020). The student confidence gap: Gender differences in job skill self-efficacy. Journal of Education for Business. https://doi.org/10.1080/08832323.2020.1757593.

Petrillo, J., Cano, S. J., McLeod, L. D., & Coon, C. D. (2015). Using classical test theory, item response theory, and Rasch measurement theory to evaluate patient-reported outcome measures: A comparison of worked examples. Value in Health, 18, 25–34. https://doi.org/10.1016/j.jval.2014.10.005.

Putnick, D. L., & Bornstein, M. H. (2016). Measurement invariance conventions and reporting: The state of the art and future directions for psychological research. Developmental Review, 41, 71–90. https://doi.org/10.1016/j.dr.2016.06.004.

Raîche, G. (2005). Critical eigenvalue sizes in standardized residual principal components analysis. Rasch Measurement Transactions, 19, 1012. https://doi.org/10.1016/B978-012471352-9/50004-3.

Rasch, G. (1960). Probabilistic models for some intelligence and attainment tests. Copenhagen: Danmarks Paedagogiske Institut.

Røe, C., Damsgård, E., Fors, T., & Anke, A. (2014). Psychometric properties of the pain stages of change questionnaire as evaluated by Rasch analysis in patients with chronic musculoskeletal pain. BMC Musculoskeletal Disorders, 15, 95. http://www.biomedcentral.com/1471-2474/15/95.

Saks, A. M., & Ashforth, B. E. (1999). Effects of individual differences and job search behaviors on the employment status of recent university graduates. Journal of Vocational Behavior, 54(2), 335–349. https://doi.org/10.1006/jvbe.1998.1665.

Saks, A. M., Zikic, J., & Koen, J. (2015). Job search self-efficacy: Reconceptualizing the construct and its measurement. Journal of Vocational Behavior, 86, 104–114. https://doi.org/10.1016/j.jvb.2014.11.007.

Smith, E. V., Jr. (2002). Understanding Rasch measurement: Detecting and evaluating the impact of multidimensionality using item fit statistics and principal component analysis of residuals. Journal of Applied Measurement, 3(2), 205–231.

Smith, R. M., & Miao, C. Y. (1994). Assessing unidimensionality for Rasch measurement. In M. Wilson (Ed.), Objective measurement: Theory into practice (Vol. 2, pp. 316–327). Norwood, NJ: Ablex.

Testa, S., Doucerain, M. M., Miglietta, A., Jurcik, T., Ryder, A. G., & Gattino, S. (2019). The Vancouver index of acculturation (VIA): New evidence on dimensionality and measurement invariance across two cultural settings. International Journal of Intercultural Relations, 71, 60–71. https://doi.org/10.1016/j.ijintrel.2019.04.001.

Van Hoye, G., Van Hooft, E. A. J., Stremersch, J., & Lievens, F. (2019). Specific job search self-efficacy beliefs and behaviors of unemployed ethnic minority women. International Journal of Selection and Assessment, 27(1), 9–20. https://doi.org/10.1111/ijsa.12231.

Wanberg, C. R., Zhang, Z., & Diehn, E. W. (2010). Development of the “getting ready for your next job” inventory for unemployed individuals. Personnel Psychology, 63(2), 439–478. https://doi.org/10.1111/j.1744-6570.2010.01177.x.

Wilson, M. (1988). Detecting and interpreting local item dependence using a family of Rasch models. Applied Psychological Measurement, 12(4), 356–364. https://doi.org/10.1177/014662168801200403.

Wright, B. D. (1994). Comparing factor analysis and Rasch measurement. Rasch Measurement Transactions, 8(1), 350.

Wright, B. D. (2000). Conventional factor analysis vs. Rasch residual factor analysis. Rasch Measurement Transactions, 14(2), 753.

Zhong, Q., Gelaye, B., Fann, J. R., Sanchez, S. E., & Williams, M. A. (2014). Cross-cultural validity of the Spanish version of PHQ-9 among pregnant Peruvian women: A Rasch item response theory analysis. Journal of Affective Disorders, 158, 148–153. https://doi.org/10.1016/j.jad.2014.02.012.

Acknowledgements

This study was generously supported by a postdoctoral fellowship award from the Department of Industrial Psychology, Stellenbosch University, to the author.

Ethics declarations

Conflict of Interest

The author declares that they have no conflicts of interest to report.

Ethics Approval

The study protocol was approved by the Institutional Review Board of the University of Ghana (Ref#: ECH116/19-20).

Consent to Participate

Informed consent was obtained from all the participants of the study.

Consent for Publication

Consent for publication was obtained from all of the participants of the study.

About this article

Cite this article

Teye-Kwadjo, E. The Job-Search Self-Efficacy (JSSE) Scale: an Item Response Theory Investigation. Int J Appl Posit Psychol 6, 301–314 (2021). https://doi.org/10.1007/s41042-021-00050-2

DOI: https://doi.org/10.1007/s41042-021-00050-2