Abstract

In this article we consider the post-retirement phase optimization problem for a specific pension product in Germany that comes without guarantees. The continuous-time optimization problem is defined consisting of two specialties: first, we have a product-specific pension adjustment mechanism based on a certain capital coverage ratio which stipulates compulsory pension adjustments if the pension fund is underfunded or significantly overfunded. Second, due to the retiree’s fear of and aversion against pension reductions, we introduce a total wealth distribution to an investment portfolio and a buffer portfolio to lower the probability of future potential pension shortenings. The target functional in the optimization, that is to be maximized, is the client’s expected accumulated utility from the stochastic future pension cash flows. The optimization outcome is the optimal investment strategy in the proposed model. Due to the inherent complexity of the continuous-time framework, the discrete-time version of the optimization problem is considered and solved via the Bellman principle. In addition, for computational reasons, a policy function iteration algorithm is introduced to find a stationary solution to the problem in a computationally efficient and elegant fashion. A numerical case study on optimization and simulation completes the work with highlighting the benefits of the proposed model.

Similar content being viewed by others

Avoid common mistakes on your manuscript.

1 Introduction

In this article, we study a pension insurance related optimization problem which targets to maximize the expected utility of the future stochastic pension cash flows of a client within a specific pension adjustment and investment model. This model covers a certain pension adjustment mechanism, where pension guarantees are disregarded, but the pension needs to be adjusted (either reduced or increased) if the pension fund becomes underfunded or significantly overfunded, and a buffer rule to smooth the pension development over time and to reduce the probability of pension shortenings. The solution to the problem is given in form of the optimal investment strategy in the proposed system.

The above is motivated by the need of a suitable pension product that allows for higher expected returns on the investments particularly when interest rates are low or even negative. In the recent low interest-rate environment, traditional pension funds, which allocate a high proportion of their wealth to defensive assets such as government bonds due to the promised guarantees, can only offer a relatively small expected return on the investments. By this, the pension fund wealth of a client grows at a rather small rate and consequently the future pension payments will be quite low. Generally, clients seek for and desire a stable evolution of their reported wealth (and their pension) at a high expected return and with a limited downside. Therefore, alternative strategies without guarantees but with a certain downside protection can provide a significant contribution.

For this reason, we consider a certain pension productFootnote 1 that comes with a buffer and a pension adjustment mechanism to enhance expected returns. The product allows company pension schemes to only make contribution-related promises but forbids performance-related guarantees. To allow for a performance- or return-seeking characteristic, the product comes with no pension cash flow guarantee at all. The product generally consists of two phases: the pre-retirement or accumulation phase and the post-retirement or decumulation phase. We focus on the wealth decumulation phase in what follows. This phase can be regarded as a modification of a defined benefit (DB) plan, where the pensions stay constant as long as the wealth remains inside a pre-defined corridor. As this new pension product is currently in a development stage in Germany and is being built up, we study the impact of the associated model. As every investor has an individual risk appetite or risk attitude, professional decision making under uncertainty needs to consider an adequate modeling of a certain risk-reward tradeoff. A more risk-averse investor generally prefers a portfolio with a lower risk in terms of some risk measure, coming at the cost of smaller returns on average. The general question arises how the pension fund’s wealth is to be invested such that the benefits for the clients are maximized. This particular question about a scientifically founded investment strategy for a Nahles–Rente pension product is addressed in this work. Thus, we contribute with the following: First, a mathematical model (for a single client and an age-grouped cohort) is built up that incorporates a certain buffer rule and a pension adjustment mechanism. Moreover, the optimal investment strategy is derived by maximizing the expected utility of the stochastic pension cash flows. Afterwards, a numerical optimization and simulation case study is carried out to illustrate the optimal control and analyze its characteristics. Based on the case study, under the assumption of a positive interest rate, we find that the introduction of the proposed buffer system significantly reduces the probabilities for pension reductions and leads to a certain tradeoff between the initial pension level at retirement time and performance: A more pronounced buffer system is connected to a smaller initial pension level, but at the same time leads to a superior performance of the pension evolution. We conclude that our proposed model leads to a sophisticated optimal dynamic asset allocation policy that provides remarkable benefits to clients and represents a meaningful alternative to risk-averse clients. For a proposal and some discussion of an alternative model formulation that designs a pension product without guarantees we refer to [6], where the modeling is related to but differs from our approach.

The remainder is organized as follows: First of all, Sect. 2 introduces the considered financial market model (classical Black–Scholes model with constant parameters) that consists of a riskless and multiple risky assets. Sect. 3 models the continuous-time mathematical framework for the decumulation phase under a constant force of mortality. The resulting portfolio selection problem (single-client and cohort version) is stated in Sect. 4 and is solved in discrete-time. Although the problem is finally solved in a discrete-time framework, we first introduce the continuous-time setup as we would like to embed the problem into the standard portfolio selection problems that deal with continuous-time capital market and decision models. Due to implementation reasons, Sect. 5 provides an approximate solution to the original problem in form of a stationary solution. To be able to solve the complex problem, we particularly impose the following assumptions and simplifications: The applied constant force of mortality implies an exponentially distributed remaining uncertain lifetime. For algorithmic reasons, the planning or investment horizon of the system is set to infinity to obtain an optimal stationary solution. This solution can be used as an approximation for a finite planning horizon when the survival probability of exceeding this horizon is sufficiently small. Additionally, separability of time and pension in the pension utility function is assumed jointly with an exponential time-dependence. Moreover, certain (mostly equidistant) discretization grids are utilized when the problem is addressed in discrete-time. An extensive numerical case study visualizes the optimal asset allocation strategy and highlights its benefits in Sect. 6. Finally, Sect. 7 concludes.

2 The financial market model

Let \(T < \infty \) denote the initial time of the post-retirement phase, the retirement entry time in most cases. Further let \({\tilde{T}}\) denote the end of the investment period and let \((\Omega , {\mathcal {F}}, {\mathbb {F}} = \left( {\mathcal {F}}_{t}\right) _{t \in [T,{\tilde{T}}]}, {\mathbb {P}})\) be a filtered complete probability space that satisfies the usual conditions and let \({W = \left( W(t)\right) _{t \in [T,{\tilde{T}}]}}\), \(W(t) = (W_{1}(t), \ldots , W_{N}(t))'\), \(N \in {\mathbb {N}} \), denote a standard N-dimensional Brownian motion. \(\Omega \) is the sample space, \({\mathbb {P}}\) denotes the real-world probability measure and \({\mathcal {F}}_{t}\) the natural filtration generated by W(s), \({T \le s \le t}\), that is augmented by all the null sets. By this we introduce uncertainty into the considered continuous-time financial market model that is frictionless and consists of \(N+1\) continuously traded assets: one risk-less asset \(P_{0}\) and N risky assets \(P_{i}\), \(i = 1, \ldots , N\). The price of the risk-less asset is subject to the equation

where \(r \ge 0\) is the constant risk-less interest rate. The remaining N assets, usually referred to as asset classes, are subject to the stochastic differential equations

where \({\mu = \left( \mu _{1},\ldots ,\mu _{N}\right) ' \in {\mathbb {R}}_{+}^{N}}\) with \({\mu - r {\mathbf {1}} > {\mathbf {0}}}\) is the constant drift and \(\sigma _{i} = (\sigma _{i1}, \ldots , \sigma _{iN}) \in {\mathbb {R}}_{+}^{1 \times N}\) denotes the constant volatility vector of assets \(i = 1, \ldots , N\). Here, \(x'\) stands for the transpose of some vector x, \({\mathbf {1}} := (1, \ldots , 1)'\) and \({\mathbf {0}} := (0, \ldots , 0)'\). The volatility matrix is defined as \({\sigma = \left( \sigma _{ij}\right) _{i,j = 1,\ldots ,N}}\) with corresponding covariance matrix \({\Sigma = \sigma \sigma '}\) of the log-returns which is assumed to be strongly positive definite, i.e. there exists \(K > 0\) such that \({\mathbb {P}}\)-a.s. it is \({x' \Sigma x \ge K x' x}\), \({\forall x \in {\mathbb {R}}^{N}}\). Moreover, within this framework \({\gamma = \sigma ^{-1} (\mu - r {\mathbf {1}})}\) denotes the market price of risk. In accordance with [13] there exists a unique risk-neutral probability measure \({\mathbb {Q}}\) within the above market dynamics. Additionally, the financial market is complete which enables us to determine the present value of stochastic cash flows as expected discounted payments under the measure \({\mathbb {Q}}\). The associated pricing kernel or state price deflator, which we denote by \({\tilde{Z}}(t)\), is defined as

and can be used for the valuation of cash flow streams under the real-world probability measure \({\mathbb {P}}\). The dynamics of the pricing kernel is

Further, let \(\varphi = (\varphi _{0}, {\hat{\varphi }})'\), \({{\hat{\varphi }} = (\varphi _{1}, \ldots , \varphi _{N})'}\) denote a trading strategy that is assumed to be \({\mathcal {F}}_{t}\)-progressively measurable, self-financing with \({\mathbb {P}}\left( \int _{0}^{T} |\varphi _{0}(t)| + \Vert {\hat{\varphi }}(t)\Vert ^{2} dt < \infty \right) = 1\). \(\varphi _{i}(t)\) represents the number of individual shares of asset i held by the investor at time t. Analogically, we denote the \({\mathcal {F}}_{t}\)-progressively measurable and self-financing relative portfolio process with \({\pi = (\pi _{0}, {\hat{\pi }}')'}\), \({{\hat{\pi }} = (\pi _{1}, \ldots , \pi _{N})'}\), where \({\hat{\pi }}\) represents the risky relative investment and \({\pi _{0}(t) = 1-{\hat{\pi }}(t)'{\mathbf {1}}}\) the risk-less relative investment. In general, \(\pi _{i}(t)\) denotes the proportion of wealth allocated to asset i at time t and is related to \(\varphi _{i}(t)\) through

where V(t) denotes the corresponding wealth at time t. The wealth V(t), and in particular its characterizing dynamics dV(t), are to be defined in the upcoming section.

3 The decumulation phase mathematical model

In the following we present and explain the mathematical modeling of the pension plan dynamics associated with the decumulation phase. At first we consider a single client, but later relax the framework to a cohort model where customers are grouped by their age. Remember that time T denotes the initial time where the post-retirement pension fund is started. The total individual wealth of client i in cohort j at time \(t \ge T\) is denoted by \(V_{ij}^{\text {(total)}}(t)\), the individual continuously-withdrawn pension payment rate by \(P_{ij}(t) \ge 0\) and the time-t present value of all outstanding future pension payments to this specific client under a constant pension development assumption by \(E_{ij}(t)\). The latter can be expressed as

where \(\tau _{ij}^x(T)\) denotes the uncertain total lifetime of client i in cohort j who is aged x at time T. Throughout the paper we consider a constant mortality rate \(\lambda _{x} = \lambda _{x(ij)} > 0\). Therefore, the survival probability of a client aged x at time T to survive from time T until time \(t > T\) is given by \({\mathbb {P}}(\tau _{ij}^x(T) \ge t | \tau _{ij}^x(T) \ge T) = e^{- \lambda _{x} (t-T)}\), \(\lambda _{x} > 0\). Moreover, we assume \(\tau _{ij}^x(T)\) (uncertain total lifetime) to be independent of the filtration \({\mathbb {F}}\). Within this model, we have for \(s \ge t \ge T\):

Together with a given and thus known \(P_{ij}(t)\) at time t, applying Fubini and using that \(\tau _{ij}^x(T)\) is independent of \({\mathbb {F}}\), \(E_{ij}(t)\) becomes

\(E_{ij}(t)\) can be regarded as perpetual annuity. Moreover, the pension rate \(P_{ij}(t)\) is adjusted such that a certain capital coverage ratio

is met. Particularly, regulations of BaFin force

Generally for all \(t \ge T\), the total wealth \(V_{ij}^{\text {(total)}}(t)\) that belongs to client i in cohort j is internally divided into an investment portfolio \(V_{ij}^{\text {(inv)}}(t)\) (portfolio mix of riskless and risky assets) and a buffer portfolio \(V_{ij}^{\text {(buffer)}}(t)\) (deposit account with zero interest rateFootnote 2) such that

Let us define

This immediately implies the relationship

We propose the following structure:

for some \(\alpha \in [0,1]\). The remainder builds the investment portfolio

Thus, we define the buffer balance to be the proportion \(\alpha \) of the cushion or surplus \(V_{ij}^{\text {(total)}}(t) - E_{ij}(t)\), the remaining fund flows into the investment portfolio. Further we would like to control the capital coverage ratio for the investment portfolio such that all pension payments can be made by the investment portfolio under normal circumstances, where the buffer account can help out in bad scenarios. For this sake, let us denoteFootnote 3 by \({\bar{p}} \in [100\%, 125\%]\) the value for \(CCR_{ij}^{\text {(inv)}}(t_{n})\) after readjustment at some re-set time \(t_{n}\) where the pre-readjustment value at time \(t_{n}\) falls outside the corridor \([100\%, 125\%]\). For instance, one could set \({\bar{p}} = 112.5 \%\) to the center of the corridor. Note that Eq. (3.9) leads to

for all \(t \ge T\), which can be reformulated to

We would like to stress out that the parameters \(\alpha \) and \({\bar{p}}\) are exogenously given and time- as well as client-independent. In the following we propose and describe a certain adjustment mechanism for the pension rate and the buffer system and demonstrate that it actually satisfies Eq. (3.9) for all adjustment times (called \(t_{n}\)) as well as all non-adjustment times (\(t \ne t_{n}\)), i.e. for all \(t \ge T\).

3.1 System at re-adjustment times

As already mentioned, whenever the corridor is exceeded at some time \(t_{n} \ge T\), the pension rate \(P_{ij}(t_{n})\) needs to be adjusted (either reduced or increased) such that \(CCR_{ij}^{\text {(total)}}(t_{n}) \in [100 \%, 125 \%]\). The fund keeps the pension rates constant between the re-adjustment times \(t_{n}\), \(n \in {\mathbb {N}}\), which are defined by

For the sake of convenience we define \(t_{0} := T\) as first (re-)adjustment time. At time \(t_{n}\) the system gets re-adjusted such that \(CCR_{ij}^{\text {(inv)}}(t_{n})\) becomes \({\bar{p}}\):

In view of Eq. (3.12) this is equivalent to

Moreover, from Eq. (3.15) it follows

This means that when \({\bar{p}}\) and \(V_{ij}^{\text {(total)}}(t_{n})\) are known at time \(t_{n}\), then the selection of \(\alpha \) determines the adjusted pension rates \(P_{ij}(t_{n})\) at re-set time \(t_{n}\). Moreover, a higher value of \({\bar{p}}\), everything else staying constant, implies a smaller adjusted pension rate \(P_{ij}(t_{n})\). Hence, at every re-adjustment time \(t_{n}\) (especially at time \(t_{0} = T\)), the adjusted pension rate \(P_{ij}(t_{n})\) is selected according to Eq. (3.16) such that \(CCR_{ij}^{\text {(total)}}(t_{n}) = \frac{{\bar{p}} - \alpha }{1 - \alpha }\) (and \(CCR_{ij}^{\text {(inv)}}(t_{n}) = {\bar{p}}\)), and it further holdsFootnote 4\(P_{ij}(t) \equiv P_{ij}(t_{n})\) \(\forall t \in [t_{n}, t_{n+1})\). Finally, we receive

Notice that as we define \({\bar{p}} := CCR_{ij}^{\text {(inv)}}(t_{n})\) in Eq. (3.14) to coincide for any customer (\({\bar{p}}\) independent of ij) and to be time-independent, so do \(CCR_{ij}^{\text {(total)}}(t_{n})\) and \(CCR_{ij}^{\text {(buffer)}}(t_{n})\) which we learn from Eqs. (3.15) and (3.18). As \(CCR_{ij}^{\text {(total)}}(t_{n}) \in [100 \%, 125 \%]\) is required, i.e. it has to stay inside the boundaries, we must have \({\frac{{\bar{p}} - \alpha }{1 - \alpha } \in [100 \%, 125 \%]}\). For economic reasons, suppose \({{\bar{p}} \in [100 \%, 125 \%]}\) and \(\alpha \in [0,1]\). Therefore we have the regulatory condition

on the variable \(\alpha \). In particular, when \({\bar{p}} = 112.5 \%\), then \(\alpha \) can be selected out of the interval \({\left[ 0 \%, 50 \%\right] }\).

3.2 Dynamics between re-adjustment times

So far we described the framework and mechanism at the re-adjustment times \(t_{n}\). For all \(t \ge T\), \(t \ne t_{n}\), we propose the following buffer rate mechanism (rate of change of the buffer balance) that drives \(V_{ij}^{\text {(buffer)}}(t)\), where we implicitly assume that the buffer account is a simple account that pays no interest (see Footnote 2):

This means that between any two re-adjustment times, the buffer portfolio \(V_{ij}^{\text {(buffer)}}(t)\) evolves according to the changes in the surplus \(V_{ij}^{\text {(total)}}(t) - E_{ij}(t)\). Especially in a situation where the change in the total wealth \(V_{ij}^{\text {(total)}}(t)\) and the change in the liabilities \(E_{ij}(t)\) coincide, the buffer portfolio remains constant. Eq. (3.20) leads to

for all \(t \ge T\), i.e. Eq. (3.9) could be verified for all \(t = t_{n}\) as well as \(t \ne t_{n}\). Hence it turns out that the proportional distribution of the total wealth \(V_{ij}^{\text {(total)}}(t)\) to the investment and buffer portfolio is identical for all times \(t \ge T\). The formula for the pension rate at \(t \ne t_{n}\) was already shown in Eq. (3.17). A very beneficial feature of this proposed buffer account and process is the following relation for times \(t \ne t_{n}\):

Therefore, a downwards adjustment of the pension rate (\(CCR_{ij}^{\text {(total)}}(t)\) falls short \(100 \%\)) comes at the same time as a zero value in the buffer account.

Additionally, for times \(t \ne t_{n}\), the dynamics of the investment portfolio, which serves the pension outflows and the buffer rate (positive or negative), follows the stochastic differential equation of a classically portfolio that is invested into the capital market:

The first component of the formula coincides with a pure classical investment part, where \({\hat{\pi }}^{\text {(inv)}}(t)\) denotes the risky relative investment that corresponds to the investment portfolio with wealth \(V_{ij}^{\text {(inv)}}(t)\), plus two additional components in the form of a pension rate outflow \(- P_{ij}(t) dt\) and a buffer rate inflow or outflow \(- c_{ij}^{\text {(buffer)}}(t) dt\). Furthermore, bringing the dynamics of \(V_{ij}^{\text {(inv)}}(t)\) and \(V_{ij}^{\text {(buffer)}}(t)\) together, gives

As the wealth of the buffer portfolio is not invested in the capital market, the total time-t risky exposure is given by \({\hat{\pi }}^{\text {(inv)}}(t) V_{ij}^{\text {(inv)}}(t)\) which determines the following relative risky investment \({\hat{\pi }}^{\text {(total)}}(t)\) of the total wealth \(V_{ij}^{\text {(total)}}(t)\) depending on the capital coverage ratio:

Since \(CCR_{ij}^{\text {(total)}}(t) \in [100 \%, 125 \%]\) by regulation, we obtain

It follows immediately that \({\hat{\pi }}^{\text {(total)}}(t) \le {\hat{\pi }}^{\text {(inv)}}(t)\), hence the buffer indeed dampens the relative risky investment for the total portfolio. To prevent from leverage for a given \(\alpha \), one has to restrict \(({\hat{\pi }}^{\text {(inv)}}(t))' {\mathbf {1}} \le 1\) resp. \(({\hat{\pi }}^{\text {(total)}}(t))' {\mathbf {1}} \le 1\). To exclude short-selling, one needs to enforce \({\hat{\pi }}^{\text {(total)}}(t) \ge {\mathbf {0}}\) resp. \({\hat{\pi }}^{\text {(inv)}}(t) \ge {\mathbf {0}}\).

Finally, the discretized version of the stochastic differential equation (3.24) of the total wealth is given by

where \(Z \sim {\mathcal {N}}(0,1)\) is an N-dimensional vector of independent standard normal random variables. Moreover, based on Eqs. (3.16) and (3.17), the discrete-time version of the pension rate development is

Equation (3.28) tells that if the past performance of the total wealth investment was very high, then the pension rate for the next period gets larger. In opposite, if the performance of the total wealth in the preceding period was very low, the pension rate for the upcoming period gets reduced. Finally, the pension rate remains unchanged if the total wealth stays within some lower and upper boundary.

4 The decumulation phase portfolio selection problem

4.1 Continuous-time optimization problem

The fund’s target is to maximize the client’s expected accumulated utility coming from the stochastic future pension cash flows. The buffer portfolio is established to reduce the probability of undesired pension shortenings and thus to keep the pension more stable. The risk-return tradeoff in the optimization depends on the type of applied utility function U. Since no bequest payments are considered, the continuous-time portfolio selection problem for an initial wealth \(V_{ij}^{\text {(total)}}(T) = v_{0}\) and planning horizon \({\tilde{T}} \in (T, \infty ]\) is given by

The dynamics of \(P_{ij}(t)\) is covered by Eqs. (3.16)–(3.17). The set \(\Lambda \) covers all admissible strategies \(\pi ^{\text {(inv)}}\). A personal discount rate can be hidden in U. Later we select U to be an increasing concave utility function, which means that the client prefers a larger pension rate \(P_{ij}(t)\), but an increase in the pension rate would lead to less additional satisfaction the larger the pension rate already is. The objective function that is to be maximized in Problem (4.1) arises from

Similarly, the budget constraint in Problem (4.1) arises from

Throughout, let the intertemporal utility function U(t, p) admit the following form:

where \({\tilde{U}}\) is a strictly increasing and concave utility function and \(\beta \ge 0\) denotes the subjective discount rate with utility discount factor \(e^{- \beta (t-T)}\).

4.2 Discrete-time dynamic optimization

In what follows we target to solve Problem (4.1). Due to the nature and complexity of the scheme (especially the pension rate adjustment mechanism) coming from the regulatory requirements, we consider the discrete-time version of Problem (4.1) from now on and apply discrete-time dynamic optimization methods. We first translate the Problem (4.1) into the corresponding discrete-time problem. For this sake, we divide the investment period \([T, {\tilde{T}}]\) into an equidistant grid with a distance of \(\Delta > 0\) between every grid point

with \(N_{\Delta } := \frac{{\tilde{T}} - T}{\Delta }\), such that \(t^{(0)} = T\) and \(t^{(N_{\Delta })} = {\tilde{T}}\) with \(t^{(k+1)} - t^{(k)} \equiv \Delta \). We assume \(N_{\Delta } = \frac{{\tilde{T}} - T}{\Delta } {\mathop {\in }\limits ^{!}} {\mathbb {N}}\) which for instance holds true if \({\tilde{T}} - T \in {\mathbb {N}}\) is in full years and \(\Delta \in \{1, \frac{1}{2}, \frac{1}{4}, \frac{1}{12}, \frac{1}{52}, \frac{1}{250}, \ldots \}\), i.e. pension rate adjustments and rebalancing of the portfolio take place annually, semi-annually, quarterly, monthly, weekly, daily, etc.. The decision variable \(\pi ^{\text {(inv)}}(t^{(k)})\) is applied on the entire interval \([t^{(k)}, t^{(k+1)}) = [t^{(k)}, t^{(k)} + \Delta )\) and is updated again at time \(t^{(k+1)} = t^{(k)} + \Delta \); the same holds for \(P_{ij}(t)\). Within the discrete framework, the objective function \({\mathcal {J}}(\pi ^{\text {(inv)}};v_{0}, c_{ij}^{\text {(buffer)}})\) that is to be maximized translates to

For simplifying notations, let us define for \({k \in \{0, \ldots , N_{\Delta }\}}\):

\(S_{(k)}\) denotes the two-dimensional state space with \(S_{(k)} \subseteq {\mathbb {R}}_{+}^{2}\). \(a_{k}\) is the action (or control variable) for period \([t^{(k)}, t^{(k+1)})\). It is the risky relative investment strategy of the investment portfolio, with \(a_{k} \in {\mathbb {A}}\), where \({\mathbb {A}} := \left\{ a \in [0,1]^{N}:\ a' {\mathbf {1}} \le 1\right\} \) denotes the set that includes all possible portfolio weights at a given time point. The definition of \({\mathbb {A}}\) ensures the following: For a vector \(a \in {\mathbb {A}}\), \(a \ge {\mathbf {0}}\) prevents from short-selling of a risky asset, \(a' {\mathbf {1}} \le 1\) rules out leverage. In the case of a single asset class (\(N = 1\)), the set reduces to \({\mathbb {A}} = [0,1]\). Moreover, \({\mathcal {F}}_{k}\) contains all the information accumulated from time \(t = 0\) to time \(t = t^{(k)}\), which particularly includes the information \(\left( V_{ij}^{\text {(total)}}(t^{(k)}), P_{ij}(t^{(k)})\right) = \left( V_{(k)}, P_{(k)}\right) = S_{(k)}\). The optimization problem in discrete time then reads

We now address the stochastic control problem in (4.8). We assume a Markov model, i.e. the objects at time \(t^{(k+1)}\) depend only on the respective objects at time \(t^{(k)}\) but not on all preceding times \(t^{(0)}, \ldots , t^{(k-1)}\). Hence, the information \(S_{(k)}\) at time \(t^{(k)}\) is sufficient, \({\mathcal {F}}_{k}\) contains additional but unnecessary information. In view of the dynamic programming principle (or Bellman’s principle) (cf. [2, 4, 5, 14], or [9] for an application), we consider the following time-t problem (for convenience let \(t = t^{(k)}\) for some \(k \in \{0, \ldots , N_{\Delta } - 1\}\)):

and

As we have a Markov model, we search for the optimal asset allocation decision rule \(a_{k}^{\star } = {\hat{\pi }}^{\star \text {(inv)}}(t^{(k)}) = {\hat{\pi }}^{\star \text {(inv)}}(S_{(k)})\) at every time \(t^{(k)}\). Note

where \(S_{(0)} = (V_{(0)}, P_{(0)}) {\mathop {=}\limits ^{(3.16)}} (v_{0}, \frac{1 - \alpha }{{\bar{p}} - \alpha } (r + \lambda _{x}) v_{0} )\). In order to write the Bellman equation associated with Problem (4.9), the definition of the state transition function comes next. Let \(Z \sim {\mathcal {N}}(0,1)\) be a multi-dimensional vector of independent standard normal random variables of dimension N (= number of risky assets). Z represents the stochastic part of the fund return in period \([t^{(k)}, t^{(k+1)})\) (independent in every period), i.e. Z is the risk driver or risk factor that drives the fund’s performance besides the deterministic drift part. According to Eqs. (3.27) and (3.28), the transition function \(T_{B}\) for \(S_{(k)} \mapsto S_{(k+1)}\) is

where

and

with

for \(j \ge i\). We further have

4.3 Bellman equation

The definition of the transition function enables us to introduce the associated Bellman equation

with \(S_{(k+1)} = T_{B}(S_{(k)}, a_{k}, Z)\). The formula for \(k \in \{N_{\Delta } - 1, \ldots , 0\}\) follows from inserting the one-period or one-stage reward function \(r_{k}(S_{(k)}, a_{k}) \equiv r_{k}(S_{(k)})\) into the original equation

The one-period reward function describes the contribution or reward to the client’s satisfaction in the period \([t^{(k)}, t^{(k+1)})\) linked to the pension \(P_{(k)}\) that is paid out in \([t^{(k)}, t^{(k+1)})\) independently of the action or applied relative risky investment strategy \(a_{k} = {\hat{\pi }}^{\text {(inv)}}(t^{(k)})\). Since the value for \(r_{k}(S_{(k)})\) is already known at time \(t^{(k)}\), i.e. it is deterministic and independent of the decision \(a_{k}\). We moreover obtain

The original discrete-time dynamic optimization problem (4.8) can then be solved by backwards induction of the Bellman equation (4.17). The optimal decision rule or policy \(a_{k}^{\star } = {\hat{\pi }}^{\star \text {(inv)}}(S_{(k)})\) needs to be determined in any step and for every possible state \(S_{(k)}\) backwards in time. By this, we further receive the optimal total risky relative portfolio process \({\hat{\pi }}^{\star \text {(total)}} = {\hat{\pi }}^{\text {(total)}}(a_{k}^{\star })\) through Eq. (4.16).

4.4 Extension to a single-cohort model

In this section we briefly describe one possible method of how the so far explained single-client model can easily be extended and aggregated into a single-cohort model where one cohort covers all clients of (roughly) the same age. Let us consider a cohort of clients grouped by age (\(x = x(j)\) years old at time T) that has \(m_{j}\) members. We manage the total cohort portfolio and the pension collectively and thus define

to be the sum of all pension payments \(P_{ij}(t)\) connected to all members i in cohort j at time t. Since we consider one cohort, there are no intertemporal inflows into the model. We assume that there is no bequest paid out in the case of a cohort member’s death. Further we re-interpret the mortality model: The survival probability

for a single client is now regarded as the average relative survival frequency of the cohort, i.e. we assume \(e^{- \lambda _{x(j)} (s - t)}\) to be the average proportion of clients in cohort j that survive from time t to time s. This comes from the following observation: Let \(\tau _{ij}^{x(j)}(T)\) denote the uncertain remaining lifetime of client i in cohort j which is identically distributed among all clients in one cohort. Then the uncertain proportion of survivors from time t to s in the cohort is described by the random variable \(\frac{\sum _{i = 1}^{m_j} {\mathbbm {1}}_{\{\tau _{ij}^{x(j)}(T) \ge s | \tau _{ij}^{x(j)}(T) \ge t\}}}{m_j}\). Its expectation is

In other words, the average cohort proportion of surviving clients equals the survival probability of a single client in this cohort. Moreover, since the number of customers in cohort j reduces continuously in time due to deaths of cohort members, the average pension cash flows \(P_{j}(t)\) needs to be adjusted to

assuming that all single-client pensions remain constant, and only those connected to a client’s death are removed. We define \(P_{(k)} := P_{j}(t^{(k)})\) in the state \(S_{(k)} = \left( V_{(k)}, P_{(k)}\right) \), where \(V_{(k)}\) denotes the total collective wealth of cohort j. For this reason, we have to modify the transition function \(T_{B}^{(P)}\) for the pension \(P_{(k)}\) as follows:

with

for \(l \ge i\). Following earlier definitions we further introduce the collective cohort-specific functionals

Note that the properties \(CCR_{ij}^{\text {(inv)}}(t_{n}) \equiv {\bar{p}}\) and \(CCR_{ij}^{\text {(total)}}(t_{n}) \equiv \frac{{\bar{p}} - \alpha }{1 - \alpha }\) at the re-adjustment times \(t_{n}\) are passed to the collective objects

Hence, the regulatory constraint \(CCR_{ij}^{\text {(total)}}(t) \in [100 \%, 125 \%]\) for any single customer is satisfied iff it is satisfied for the cohort-specific constraint \(CCR_{j}^{\text {(total)}}(t) \in [100 \%, 125 \%]\) on the collective fund. In summary, under the proposed framework, both collective ratios \(CCR_{j}^{\text {(total)}}(t)\) as well as \(CCR_{j}^{\text {(inv)}}(t)\) coincide with the individual ratios \(CCR_{ij}^{\text {(total)}}(t)\) and \(CCR_{ij}^{\text {(total)}}(t)\).

According to the definition of the transition function in Eq. (4.24), if \(CCR_{j}^{\text {(total)}}(t^{(k+1)})\) stays inside its pre-defined corridor, the collective cohort pension \(P_{(k+1)}\) at time \(t^{(k+1)}\) decreases with rate \(\lambda _{x(j)}\) (on average) due to the deaths of cohort members. At the same time, the individual pensions of clients that survived until time \(t^{(k+1)}\) remain untouched, i.e. stable. Thus, \(P_{(k+1)} = e^{- \lambda _{x(j)} (t^{(k+1)} - t^{(k)})} P_{(k)}\) indicates a stable, constant individual pension \(P_{ij}(t^{(k+1)}) = P_{ij}(t^{(k)})\) for those clients in the cohort that are still alive at time \(t^{(k+1)}\). Using this notation, the Bellman equation in (4.17) and the one-period reward function in (4.19) remain the same.Footnote 5 Finally, due to this definition, \(E_{j}\) decreases in time (death of cohort members). As there are no bequest payments, this implies that the \(CCR_{j}^{\text {(total)}}\) is more likely to cross the \(125 \%\)-border and less likely to fall short the \(100 \%\)-border compared to the single-client model if the same investment strategy is applied.

Remark 1

It is remarkable that the probabilities for future reductions of individual customer pensions in the cohort model are smaller than the ones in the single-client model, whereas the probability of future pension enhancements in the cohort model are larger than in the single-client model, if the same investment strategy is applied. The economic reason is that the wealth of a client in the cohort that died in the previous period remains in the collective portfolio and is not paid out to heirs, while the cohort-related collective pension declines. Therefore, the survivors in the cohort benefit from the death of a cohort member.

5 A stationary solution

It was already shown that the discrete-time Problem (4.8) could be solved by backwards induction of the Bellman equation (4.17). However, this procedure shows noteworthy shortfalls: First, it has to be performed for every single client (or cohort) with different initial state \(S_{(0)} = (V_{(0)}, P_{(0)})\). Furthermore, the computational effort dramatically increases if a long time horizon \({\tilde{T}}\) is considered. All the mentioned arguments considerably increase the computation time. To find a computationally efficient solution for an arbitrary planning horizon, an arbitrary number of decision periods and an arbitrary initial state (customer), we present an elegant approximate stationary solution next, where the solution to the finite-horizon problem is approximated with the solution to the infinite-horizon problem. The stationary solution will depend on the specific state only, but not on the time point, which makes this approach very practicable and simplifies application and implementation for a wide range of customers with different states. In general, we are now looking for a faster and more efficient algorithm to find the optimal investment decision.

5.1 The infinite-time horizon problem

Let \({\tilde{T}} = \infty \) hold. The idea is that \(a_{k}^{\star }(S) \equiv a^{\star }(S) = {\hat{\pi }}^{\star \text {(inv)}}(S)\) for all states S, i.e. the optimal asset allocation decision depends on the current state and is independent of time; we seek for a stationary solution. This leads to the infinite-horizon discrete-time optimization problem

with state \(S = (V,P)\) and stochastic pension \(P = P(t^{(i)})\) at time \(t^{(i)}\). Due to \({\tilde{T}} = \infty \), the corresponding Bellman equation to this problem is as follows:

where the last equality holds due to \(r(S, a(S)) \equiv r(S)\) for the one-period reward. It is obvious that the problem is independent of time and falls into the class of stationary, infinite-horizon Markovian decision problems, also known as Markovian dynamic programming (MDP) problems. The transition function \(T_{B}\) for \(S \mapsto T_{B}(S, a, Z)\) is given by

where \(S = (V,P)\) and

andFootnote 6

with

The gap in the expected utility between the finite- and the infinite-horizon model, i.e. \({\tilde{T}} < \infty \) vs. \({\tilde{T}} = \infty \), is usually very small, but it simplifies calculations a lot. If particularly the survival probability (from time T to time \({\tilde{T}} < \infty \), for instance \({\tilde{T}} = 120\)) is close to zero, the error stays rather small which implies that the approximation becomes more reliable and the approach can be justified from a mathematical perspective. In more detail, the crucial object is the absolute error in the infinite-horizon problem (5.1) compared to the finite-horizon problem (4.8):

The relative error is then defined as

The approximation is more reliable if the relative error is small. Thus, if one desires to use the solution to the infinite-horizon problem as an approximation for the solution to the finite-horizon problem, one needs to ensure that \(error_{{\tilde{T}}, \infty }^{rel}\) is sufficiently small to justify the approach.Footnote 7

From now on we consider utility functions with hyperbolic absolute risk aversion (HARA) as intertemporal pension utility function:

with coefficient of risk aversion \(b < 1\), \(b \ne 0\) and \({\hat{a}} > 0\), \(p > F\) with \(F \ge 0\). This utility function is increasing and strictly concave in the argument p and provides a floor F. For the one-period reward r(S) in the Bellman equation (5.2) the choice of a HARA utility function leads to

For ease of exposition, we place the following assumption on the utility function that is to hold from now on.

Assumption 1

Let us consider HARA utility \({\tilde{U}}(P)\) (parameterization in Eq. (5.11)) for \(P_{min} \le P \le P_{max}\) withFootnote 8\(F< P_{min}< P_{max} < \infty \).

Assumption 1 introduces lower and upper bounds for the pension payment that is to be paid out. We then have to adjust the transition function \(T_{B}^{(P)}\) for the pension to become

in the single-client model.Footnote 9 Based on Assumption 1, it can immediately be concluded that \({\tilde{U}}(p)\) is bounded. Furthermore, since the later proposed algorithm will be supposed to perform the optimization on a finite set for the action a(S) (discretization grid for \({\mathbb {A}}\) with \(a(S) \in {\mathbb {A}}\)), i.e. on a set with a finite number of elements, the following assumption is said to hold true from now on.

Assumption 2

\({\mathbb {A}}\) has a finite number of elements.

For instance, one could assume \(1 \%\)-point steps in \({\mathbb {A}} = \left\{ a \in {\mathbb {A}}_{1}^{N}:\ a' {\mathbf {1}} \le 1\right\} \), \({\mathbb {A}}_{1} := \{0 \%, 1 \%, \ldots , 99 \%, 100 \%\}\), with \({\mathbb {A}} \equiv {\mathbb {A}}_{1}\) in the situation of one risky asset class (\(N = 1\)). Further note that every function \(g : {\mathbb {A}} \rightarrow {\mathbb {R}}\) attains its maximum on the finite set \({\mathbb {A}}\). Hence, from Assumption 2 it immediately follows that the supremum over \(a(S) \in {\mathbb {A}}\) turns into its maximum:

Thus, the maximum is attained. In what follows we present an approach to solve the infinite-horizon problem (5.1) under Assumptions 1 and 2 via the Bellman equation (5.2).

5.2 Definition of the grid

Since the algorithm for solving the Bellman equation for every possible state S will work on grids of the relevant objects, we introduce the respective grid definitions:

-

1.

Discretization of the state space \(S = (V,P)\): We build up the state space grid following the three steps below:

-

(a)

One-dimensional total wealth grid V: \(V_{min}\) (minimal value for V), \(V_{max}\) (maximal value for V), \(n_{V}\) (number of values for V with equidistant distances):

$$\begin{aligned} V^{(i)} \in \text {Grid}(V) := \{V_{min}, \ldots , V_{max}\},\ i = 1, \ldots , n_{V}, \end{aligned}$$(5.16)with cardinality \(n_{V}\).

-

(b)

One-dimensional capital coverage ratio grid CCR: Let \(\text {Grid}(CCR)\) be the grid of the capital coverage ratio that ranges from \(CCR_{min} = 100 \%\) to \(CCR_{max} = 125 \%\) with \(n_{CCR}\) number of values in the equidistant grid, for instance \(\text {Grid}(CCR) = \{1, 1.01, 1.02, \ldots , 1.23, 1.24, 1.25\}\).

-

(c)

Two-dimensional state space S: For every \(V^{(i)} \in \text {Grid}(V)\) and every \(CCR^{(j)} \in \text {Grid}(CCR)\), the pair \((V^{(i)}, P^{(ij)}) \in \text {Grid}(S)\) lies in \(\text {Grid}(S)\) for the state space S, where

$$\begin{aligned} P^{(ij)} := \frac{V^{(i)}}{CCR^{(j)} \frac{1}{r + \lambda _{x}}} \Leftrightarrow CCR^{(j)} = \frac{V^{(i)}}{P^{(ij)} \frac{1}{r + \lambda _{x}}}. \end{aligned}$$(5.17)Thus, the size of \(\text {Grid}(S)\) is \(n_{S} = n_{V} \cdot n_{CCR}\). Notice that in view of Assumption 1 it must hold \(F < \min _{i,j} P^{(ij)}\) with \(\min _{i,j} P^{(ij)} = \frac{\min _{i} V^{(i)}}{\max _{j} CCR^{(j)} \frac{1}{r + \lambda _{x}}} \ge \frac{V_{min}}{1.25 \frac{1}{r + \lambda _{x}}}\) as well as \(\max _{i,j} P^{(ij)} < \infty \) with \(\max _{i,j} P^{(ij)} = \frac{\max _{i} V^{(i)}}{\min _{j} CCR^{(j)} \frac{1}{r + \lambda _{x}}} \le \frac{V_{max}}{\frac{1}{r + \lambda _{x}}}\).

-

(a)

-

2.

Discretization of the risk driver Z: We assume \(N = 1\) from now on whenever it comes to implementation, i.e. the financial market consists of a single risky asset class that can be interpreted as a mutual fund. The stochastic return or shock is discretized by the following equidistant partition of the probability space [0, 1] for the risk factor \(Z \in {\mathbb {R}}= (- \infty , \infty )\): Let \(q \in (0,1)\); for instance \(q = 5 \%\). The corresponding cumulative probabilities are

$$\begin{aligned} q^{(i)} := q^{(0)} + \Delta ^{(q)} \cdot i,\ i = 0, \ldots , N_{q} \end{aligned}$$(5.18)with \(\Delta ^{(q)} := q\), \(N_{q} := \frac{1 - q}{q} {\mathop {\in }\limits ^{!}} {\mathbb {N}}\) because then \(\sum _{i = 0}^{N_{q}} q = (1 + N_{q}) q = 1\). For instance, one could set \(q^{(0)} = 5 \%\), \(\Delta ^{(q)} = 5 \%\) (\(N_{q} = 19\)), then \(q^{(i)} = 5 \%, 10 \%, \ldots , 95 \%, 100 \%\). The corresponding values or representatives for Z with probability \({\mathbb {P}}(Z = z(q^{(i)})) = q\) and quantile probabilities \(q^{(i)}\) are obtained by

$$\begin{aligned} \begin{aligned} z(q^{(0)})& := N^{-1}\left( \frac{0 + q^{(0)}}{2}\right) , \\ z(q^{(i)}) &:= N^{-1}\left( \frac{q^{(i)} + q^{(i+1)}}{2}\right) ,\ i = 1, \ldots , N_{q}-1, \\ z(q^{(N_{q})}) &:= N^{-1}\left( \frac{q^{(N_{q})} + 1}{2}\right). \end{aligned} \end{aligned}$$(5.19)With this definition, the z values are stronger centered around zero, with a larger step size for large positive and negative values. Then

$$\begin{aligned} Z \in \text {Grid}(Z) := \{z(q^{(0)}), \ldots , z(q^{(N_{q})})\} = \{Z_{1}, \ldots , Z_{n_{Z}}\} \end{aligned}$$(5.20)with cardinality \(n_{Z} := N_{q} + 1\) and probabilities q, i.e. \(Z_{j} = z(q^{(j-1)})\), \(j = 1, \ldots , n_{Z}\).

-

3.

Discretization of the investment decision \(a \in {\mathbb {A}}\): Lastly, we discretize the decision set for the control variable a. Since \(a \in {\mathbb {A}} \) with \({\mathbb {A}} = [0,1]\), we split the interval \({\mathbb {A}} = [0,1]\) into a grid with equidistant distances and representatives

$$\begin{aligned} a_{(i)} := a_{(0)} + \Delta ^{(a)} \cdot i,\ i = 0, \ldots , N_{a}, \end{aligned}$$(5.21)with \(N_{a} := \frac{a_{(N_{a})} - a_{(0)}}{\Delta ^{(a)}} {\mathop {\in }\limits ^{!}} {\mathbb {N}}\). It is natural to select \(a_{(0)} = 0\) and \(a_{(N_{a})} = 1\), or apply lower and upper bound constraints on the relative risky investment if present. Thus, if for instance \(\Delta ^{(a)} = 1 \%\), a can take any integer percentage value, i.e. \(a \in \left\{ a_{(0)}, \ldots , a_{(N_{\lambda })}\right\} = \left\{ 0 \%, 1\%, 2 \%, \ldots , 98 \%, 99 \%, 100 \%\right\} \). Therefore,

$$\begin{aligned} a \in \text {Grid}(a) := \{a_{(0)}, \ldots , a_{(N_{a})}\} \end{aligned}$$(5.22)with cardinality \(n_{a} := N_{a} + 1\). It is clear that the discretization \(\text {Grid}(a)\) for \({\mathbb {A}}\) fulfills Assumption 2 on \({\mathbb {A}}\).

We would like to mention that the construction of \(\text {Grid}(S)\) is very efficient since it consists of admissible (V, P)-pairs only and rules out non-admissible (V, P)-pairs; admissible pairs fulfill \({V}/{\frac{P}{r + \lambda _{x}}} \in [100 \%, 125 \%]\). Furthermore, by construction we ensure that the CCR values are uniformly spread over the entire corridor \([100 \%, 125 \%]\). If now \(T_{B}(S, a, Z) \not \in \text {Grid}(S)\), we select the grid node in the state space grid that provides the smallest sum of squared relative distances to \(T_{B}(S, a, Z) \not \in \text {Grid}(S)\) as method for interpolation between grid points.

5.3 Stationary grid solution

The definition of the grids allows us to rewrite the expectation in the Bellman equation for any state \(S^{(l)} \in \text {Grid}(S)\):

The optimal policy \(a^{\star }(S^{(l)})\) for state \(S^{(l)}\) is the maximizer

In the following we aim to solve the above Bellman equation for every \(S^{(l)} \in \text {Grid}(S)\) and by this determine the optimal decisions or policies \(a^{\star }(S^{(l)})\) for all states in the grid. We now treat every \(a = a(S^{(l)}) \in \text {Grid}(a)\) as if it was the maximizer of the Bellman equation, and select the optimal \(a^{\star }(S^{(l)})\) at the very end by choosing the one that maximizes \({\mathcal {V}}(S^{(l)})\). Hence, consider

for all \(S^{(l)} \in \text {Grid}(S)\) and all \(a(S^{(l)}) \in \text {Grid}(a)\) which is a linear system of equations since \(T_{B}(S^{(l)}, a(S^{(l)}), Z_{j}) \in \text {Grid}(S)\) according to the applied interpolation rule if not already in the grid.

Given a specific \(a(S^{(l)}) = a_{(i(l))} \in \text {Grid}(a)\) for some \(i(l) \in \{0, \ldots , N_{a}\}\), we solve this linear system in \({\mathcal {V}}(S^{(l)})\) for all \(l = 1, \ldots , n_{S}\) by rewriting the right-hand sum using matrix notation with \(S = (S^{(1)}, \ldots , S^{(n_{S})})'\) the vector that consists of all state grid points in \(\text {Grid}(S)\). Define the matrix \(Q \in {\mathbb {N}}^{n_{S} \times n_{S}}\) by

with block matrix \(Q_{1} \in \{0,1\}^{n_{S} \times (n_{S} \cdot n_{Z})}\) such that

where \(I_{n_{S}} \in \{0,1\}^{n_{S} \times n_{S}}\) is the identity matrix with dimension \(n_{S}\). Furthermore, \(Q_{2} \in \{0,1\}^{(n_{S} \cdot n_{Z}) \times n_{S}}\) is defined as a block matrix such that

with \(A_{j} \in \{0,1\}^{n_{S} \times n_{S}}\) defined by

Then it follows

with \(d_{l} \in {\mathbb {N}}^{1 \times n_{S}}\) such that \((d_{l})_{m}\) is the number of \(Z_{j}\), \(j = 1, \ldots , n_{Z}\), which leads to a transition from \(S^{(l)}\) to \(S^{(m)}\). Consequently, it follows for \(Q {\mathcal {V}}(S) \in {\mathbb {R}}^{n_{S}}\), where \({\mathcal {V}}(S) = ({\mathcal {V}}(S^{(1)}), \ldots , {\mathcal {V}}(S^{(n_{S})}))' \in {\mathbb {R}}^{n_{S}}\):

Note \(Q = Q(a)\) with \(a = (a_{(i(1))}, \ldots , a_{(i(n_{S}))})'\), and therefore,

which allows us to rewrite the linear system in the value function in matrix-vector form:

where \(r(S) = (r(S^{(1)}), \ldots , r(S^{(n_{S})}))' \in {\mathbb {R}}^{n_{S}}\). This is a linear equation system in \({\mathcal {V}}(S)\) and can easily be solved; theoretically the solution reads

Notice that existence of the inverse of \(I_{n_{S}} - e^{- (\lambda _{x} + \beta ) \Delta } q Q(a)\) is proven in Appendix 1.

Equation (5.33) shows that the problem of solving the Bellman equation can be reduced to finding the fixed point of the Bellman operator \(\Gamma \):

Now we would like to draw attention to the fact that \(Q = Q(a)\) with \(a = (a_{(i(1))}, \ldots , a_{(i(n_{S}))})'\): For this reason, we need to repeat the above for all possible combinations \(i(l) \in \{0, \ldots , N_{a}\}\), \(l = 1, \ldots , n_{S}\), and finally select the combination \(a^{\star }(S) = (a^{\star }(S^{(1)}), \ldots , a^{\star }(S^{(n_{S})}))'\) that maximizes \({\mathcal {V}}(S)\) across all \(a_{(i)}\) combinations in \(\text {Grid}(a)\). The total number of combinations equals \(n_{a}^{n_{S}}\). Thus, we have to calculate \(n_{a}^{n_{S}}\) times an \(n_{S} \times n_{S}\) transition matrix Q(a) (plus additionally the inverse of \(I_{n_{S}} - e^{- (\lambda _{x} + \beta ) \Delta } q Q(a)\)). If for instance we consider \(n_{V} = 1,000\) grid points for V and \(n_{CCR} = 26\) for CCR,Footnote 10 then \(n_{S} = 26,000\). If additionally the allocation grid is divided into steps of \(5 \%\), i.e. \(n_{a} = 21\), we would have to calculate \(21^{26,000} \approx 10^{34,378}\) times an \(26,000 \times 26,000\) matrix, which is a vast number. To overcome this computational problem, we present an alternative by using a policy function iteration algorithm in the following.

5.4 Policy function iteration: the algorithm

The algorithm is a tailored version of Howard’s improvement algorithm, and iterates the policy a(S) until it converges towards its optimal value. For further readings on the policy iteration we refer to [3, 4, 12, 16,17,18] and [20]. Notice that the one-period total discount factor to this problem is \(0< e^{- (\lambda _{x} + \beta ) \Delta } < 1\) and is a composite of the one-period utility discount factor \(e^{- \beta \Delta }\) and the mortality discount factor \(e^{- \lambda _{x} \Delta }\). For n periods, the total discount factor is \(e^{- (\lambda _{x} + \beta ) n \Delta }\) and converges to zero as \(n \rightarrow \infty \). In what follows we describe the policy function iterating mechanism:

Let \(a^{(i)} = a^{(i)}(S)\) denote the decision value at iteration step i. Let \(n_{iter}\) denote the number of iterations until the algorithm stops. The terminal \(a^{(n_{iter})} = a^{(n_{iter})}(S)\) is regarded as the optimal final decision variable \(a^{\star } = a^{\star }(S)\). Inside the algorithm we repeat the policy improvement and policy evaluation until a sufficient, prescribed level of convergence or solution tolerance is achieved.

-

1.

\(i = 0\):

-

(a)

Select initially \(a^{(0)}(S) \in \text {Grid}(a)\) for all states in the grid, for instance \(a^{(0)}(S^{(l)}) := 0 \in \text {Grid}(a)\) \(\forall l \in \{1, \ldots , n_{S}\}\).

-

(b)

Define initially \({\mathcal {V}}(S) = \left( I_{n_{S}} - e^{- (\lambda _{x} + \beta ) \Delta } q Q(a^{(0)}(S))\right) ^{-1} r(S)\) for all states in the grid according to Eq. (5.34).

-

(c)

Select a convergence criterion \(\epsilon > 0\).

-

(a)

-

2.

Iteration \(i = 1, 2, \ldots \):

-

1.

Policy improvement: For all \(S^{(l)} \in \text {Grid}(S)\), \(l = 1, \ldots , n_{S}\), find a new policy rule \(a^{(i)}(S^{(l)}) \in \text {Grid}(a)\), such thatFootnote 11

$$\begin{aligned} \begin{aligned} a^{(i)}(S^{(l)}) := {{\,{\text{arg max}}\,}}_{a \in \text {Grid}(a)} \left\{ \sum _{j = 1}^{n_{Z}} {\mathcal {V}}^{(i-1)}(T_{B}(S^{(l)}, a, Z_{j}))\right\} \end{aligned} \end{aligned}$$(5.36)with \({\mathcal {V}}^{(i-1)}(T_{B}(S^{(l)}, a, Z_{j})) = {\mathcal {V}}^{(i-1)}(S^{(m)})\) given from the previous iteration step and according to applied interpolation rule if \(S^{(m)}\) not already in the grid.

-

2.

Policy evaluation: Having determined \(a^{(i)}(S^{(l)})\) for each \(S^{(l)} \in \text {Grid}(S)\), \(a^{(i)}(S) = (a^{(i)}(S^{(1)}), \ldots , a^{(i)}(S^{(n_{S})}))'\), we update the value function according to Eq. (5.34):

$$\begin{aligned} {\mathcal {V}}^{(i)}(S) = \left( I_{n_{S}} - e^{- (\lambda _{x} + \beta ) \Delta } q Q(a^{(i)}(S))\right) ^{-1} r(S). \end{aligned}$$(5.37)

-

1.

-

3.

Check the convergence criterion: If \(\max _{S \in \text {Grid}(S)}\left\{ \left| a^{(i)}(S) - a^{(i-1)}(S)\right| \right\} \le \epsilon \), then stop and set \(n_{iter} := i\) and \(a^{\star }(S) := a^{(n_{iter})}(S)\).Footnote 12 Otherwise, repeat Step 2. for iteration \(i+1\).

When the algorithm stops after \(n_{iter}\) iterations, i.e. when the convergence criterion after iteration step \(n_{iter}\) is met, the stationary solution to the problem is defined as \(a^{\star } = a^{\star }(S) = a^{(n_{iter})}(S)\) for all grid states.

Before we derive the optimal allocations in a case study next, we briefly summarize the benefits that are associated with this policy function iteration procedure:

-

One can determine and thereafter use the optimal strategy for all states independently of time; thus the iterative approach as a very elegant method enhances the speed and efficiency of the numerical optimization.

-

The optimal control is independent of the initial state. Therefore, the derived optimals can be used for different initial states. One only has to make sure that the considered initial state lies approximately in the center of the grid, such that a sufficient number of grid nodes still are above and below the starting state. Otherwise it could happen that one remains at the edge of the grid (due to the applied interpolation rule) which would lead to a suboptimal strategy.

In addition, we provide a comment on the speed of convergence of the algorithm. As already mentioned before, the total discount factor equals \(e^{- (\lambda _{x} + \beta ) n \Delta }\) for n periods. In the case study in Sect. 6 we will use \(\beta = 3 \%\) and \(\lambda _{x} = 1.18 \%\). Then the convergence factor has an approximate size of \(e^{- (\lambda _{x} + \beta ) \Delta } = 0.9591\) after one iteration (step size \(\Delta = 1\) year), \(e^{- (\lambda _{x} + \beta ) 10 \Delta } = 0.6584\) after ten iterations and \(e^{- (\lambda _{x} + \beta ) 100 \Delta } = 0.0153\) after hundred iterations. This shows that a rather low number of iterations is necessary. In particular, [20] further argue that policy iteration commonly converges to its stationary solution after a small number of iterations.

Every iteration requires the calculation of \(Q(a^{(i)}(S))\) plus the calculation of the inverse of \(I_{n_{S}} - e^{- (\lambda _{x} + \beta ) \Delta } q Q(a^{(i)}(S))\) which are both matrices of dimension \(n_{S} \times n_{S}\); in total the algorithm requires the calculation of \(n_{iter}\) times an \(n_{S} \times n_{S}\) (plus an inverse). This is usually dramatically faster than calculating \(n_{a}^{n_{S}}\) times an \(n_{S} \times n_{S}\) matrix (plus an inverse) in the previous section on the stationary grid solution. Furthermore, one can use and exploit the property of \(Q, Q_{1}, Q_{2}\) to be very sparse matrices; in Matlab the functions sparse(m,n) and speye(n) generate the required sparse matrices which saves memory. Additionally the command \(x = A\backslash b\) is recommended for solving systems of linear equations of the form \(A x = b\) efficiently.

5.5 Policy function iteration: theoretical foundation

The infinite-horizon problem (5.1) is a stationary, infinite-horizon Markovian dynammic programming (MDP) problem in line with the definition in [20]. We now theoretically justify our policy iteration approach for solving Problem (5.1) under Assumptions 1 and 2, where we used that the value function is a fixed point. It is necessary to prove the existence and optimality of a unique fixed point for our policy function iteration algorithm and monotone convergence to such a solution. First, we prove existence of a unique fixed point and optimality of the stationary solution. In what follows we denote S the state space, \(s \in S\) a certain state. Further, X is the set of functions that map from S to \({\overline{\mathbb {R}}} := {\mathbb {R}}\cup \{\pm \infty \}\), i.e. \(X = \{f : S \rightarrow {\overline{\mathbb {R}}}\}\), or a suitable subset of this set. In accordance with [21] and [22] the notion of a contraction mapping and a fixed point can be found in Appendix 1 with additional background details. [20] further comment that MDP problems are mathematically equivalent to computing the fixed point to the Bellman equation

with Bellman operator of interest \(\Gamma \) (defined in line with Eqs. (5.2) and (5.35))

for some function f, where \({\overline{\beta }} := e^{- (\lambda _{x} + \beta ) \Delta } \in (0,1)\). We now follow the line of [19] and prove the existence of a unique fixed point of the Bellman operator \(\Gamma \) and optimality of the stationary policy for the infinite-horizon problem, where a stationary policy is formally defined as a sequence \(a^{\infty } := \{a,a,a, \ldots \}\) for some decision rule \(a = \left( a(s)\right) _{s \in S} \in {\mathbb {A}}\) ( [10, 23]). Let

We show that the Bellman operator \(\Gamma \) is a contraction mapping on X equipped with the sup norm \(d := \left\| \cdot \right\| _{\infty }\), i.e. on the metric space (X, d).

Theorem 3

Let Assumptions 1and2be fulfilled. Then, \(\Gamma \) is a contraction mapping on X with modulus \({\overline{\beta }} \in (0,1)\).

Proof

The proof can be found in Appendix 1. \(\square \)

We now come to the main result in [19] about existence of a unique fixed point of \(\Gamma \) and optimality of the stationary policy. The respective theorem is Theorem 8 in Appendix 1, where we copied the relevant statements from [19] and omitted the unnecessary conditions. Using this result, we infer the following outcome:

Theorem 4

Let Assumptions 1and 2hold true. Further, let X be defined according to Eq. (5.40) and d be the uniform metric. Then the value function \({\mathcal {V}}\) is the unique fixed point of \(\Gamma \) in X and the stationary policy \((a_{{\mathcal {V}}})^{\infty }\) is the optimal solution to the infinite-horizon discrete-time optimization problem.

Proof

The proof can be found in Appendix 1. \(\square \)

From Theorem 4 we infer that, under Assumptions 1 and 2, there exists a fixed point of \(\Gamma \) in X and if one can find a fixed point of \(\Gamma \) in X, this fixed point is unique and coincides with the value function \({\mathcal {V}}\). Moreover, the stationary policy \((a_{{\mathcal {V}}})^{\infty }\) is the optimal solution to the infinite-horizon discrete-time optimization problem.

In view of the previously presented policy function iteration algorithm, it remains to show that this algorithm indeed converges to a fixed point \({\mathcal {V}}^{(i)} \rightarrow {\mathcal {V}}\) with corresponding optimal stationary policy \(a^{(i)} \rightarrow (a_{{\mathcal {V}}})^{\infty }\). [20] explain that the policy function iteration algorithm can be shown to generate a sequence with \({\mathcal {V}}^{(i+1)} \ge {\mathcal {V}}^{(i)}\) under fairly general conditions. In our setup where the state space S and the values for the risk driver Z come from finite sets or grids, we have the following general monotonicity result for the iterated value function:

Theorem 5

(Monotonicity of \({\mathcal {V}}^{(i)}\)) The iteration in the policy function algorithm leads to a monotone increasing sequence \(\left( {\mathcal {V}}^{(i)}\right) _{i = 0,1,2,\ldots }\):

Proof

The proof can be found in Appendix 1. \(\square \)

In view of Theorem 5,, that tells that the iterated value function does not cycle, we conclude the following:

Theorem 6

(Convergence of the policy function iteration algorithm) Let us consider a finite state space \(\text {Grid}(S)\) and a finite action space \(\text {Grid}(a)\). Then the policy function iteration algorithm converges to the true fixed point for the contraction \(\Gamma \), which is the optimal value function \({\mathcal {V}}\) of the problem, within a finite number of iteration steps.

The argument is clear due to monotonicity in Theorem 5 and the finite cardinality of the state and action space, see also [20]. Further readings on convergence results can also be found in [17, 18]. In summary, we have proven that, under Assumptions 1 and 2, our problem admits a unique fixed point solution and our presented policy function iteration algorithm converges to this solution.

6 Case study: policy function iteration for a cohort of clients

We focus on the cohort perspective and consider a cohort of clients with an initial age of \(T = 65\) years (retirement entry time). This section aims for solving the discrete-time infinite-horizon optimization problem with the policy function iteration algorithm as well as analyzing the performance of the optimal asset allocation strategy via simulation. First, we introduce the general setting and the parameter choices: For the market we suppose \(r = 1 \%\), \(\mu = 2.97 \%\), \(\sigma = 11.75 \%\) (market parameters: risk-free interest rate; drift and volatility of one risky asset which is interpreted as a buy and hold portfolio that consists of the three asset classes government bonds, corporate bonds and equity with initial weights \(\frac{1}{N} = \frac{1}{3}\) each, where we used the numbers from the parameter estimation in [9]). Moreover, the HARA utility function parameters are assumed to be \(\beta = 3 \%\) (cf. [24]), \(b = -1\), \(a = 1\) andFootnote 13\(F = 25.8\) which gives

Further let \(\lambda _{x} = \lambda _{x(j)} = 1.18 \%\) (mortality rate of the cohort), determined such that the survival probability of a 65-year old client to survive one more year coincides with the average survival probability of \(99.202956 \%\) (female) and \(98.457889 \%\) (male) in Germany, cf. [7], and \({\bar{p}} = 112.5 \%\) (CCR of the investment portfolio at initial time and at every re-set).

For the discretization grids we suppose the following: The time grid is divided into points with distance or step size \(\Delta = 1\) (annual rebalancing and adjustments of the pension payments) which implies \(t^{(i)} = T + i\), \(i = 0, \ldots , \infty \), and thus \(t^{(i)} \in \left\{ T, T+1, T+2, \ldots \right\} \). The grid for the risk driver Z follows from Eq. (5.19) with probability intervals of length \(q = 2.5 \%\), i.e. \(q^{(i)} = q^{(0)} + \Delta ^{(q)} \cdot i,\ i = 0, \ldots , N_{q}\), with \(\Delta ^{(q)} = q = 2.5 \%\), \(N_{q} = \frac{1 - q}{q} = 39\). The corresponding representatives for Z start with \(z(q^{(0)}) = - 2.2414\) and end with \(z(q^{(39)}) = 2.2414\). Finally, for the sake of simplicity let us consider steps of five percentage points for the decision interval \({\mathbb {A}}\), i.e. \(a_{(0)} = 0 \%\), \(a_{(N_{a})} = a_{(20)} = 100 \%\), \(N_{a} = 20\) (equivalent to \(\Delta ^{(a)} = 5 \%\)) which translates to \(a \in \left\{ 0 \%, 5 \%, 10 \%, \ldots , 90 \%, 95 \%, 100 \%\right\} \). Finally, let \(V_{0} = 10,000\) (initial post-retirement wealth at time \(t^{(0)} = T\)) and let us define the state space grid by \(V_{min} = 20 \% \times V_{0}\), \(V_{max} = 500 \% \times V_{0}\), \(n_{V} = 1,000\) for \(\text {Grid}(V)\). We further select a step size of \(1 \%\) for \(\text {Grid}(CCR)\), i.e. \(CCR \in \text {Grid}(CCR) = \{100 \%, 101 \%, \ldots , 124 \%, 125 \%\}\), and thus \(n_{CCR} = 26\). This leads to a total grid size of \(n_{S} = 26,000\) states. In particular, Assumptions 1 and 2 are fulfilled.

Let us consider three different values for the buffer parameter, namely \(\alpha = (0 \% | 20 \% | 40 \%)\) (no | moderate | pronounced buffer). In what follows we demonstrate the presented policy function iteration algorithm, where we determine the optimal investment decision variables for every state under the infinite-horizon problem (stationary solution). Afterwards, a simulation analysis in the finite-horizon model, where the approximate optimal stationary solution to the infinite-horizon model is applied, provides the most relevant numbers and probabilities and compares the considered strategies for different \(\alpha \) values.

6.1 Optimization

We seek for a fixed-point solution to the value function according to the policy function iteration algorithm in Sect. 5. We would like to comment that it only takes seven iterations maximal (\(n_{iter} \le 7\)) to find the fixed point for each \(\alpha = (0 \% | 20 \% | 40 \%)\) and with that the stationary solution to the infinite-horizon optimization problem. Thus, the algorithm converges very quickly.

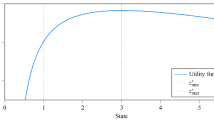

Figure 1 visualizes the average optimal risky relative asset allocations \({\hat{\pi }}^{\star \text {(inv)}} = a^{\star }\) (investment portfolio) and \({\hat{\pi }}^{\star \text {(total)}}\) (total cohort portfolio) for all \(CCR_{j}^{\text {(total)}}\) values in the grid. Hence, for every \(CCR \in \text {Grid}(CCR)\), we build the average over the \(n_{V}\) values for \(a^{\star }\) and \({\hat{\pi }}^{\star \text {(total)}}\) that have an equal CCR value. The pattern comes close to an S-shaped form: It can be seen that a higher buffer parameter \(\alpha \), in particular for \(\alpha = 40 \%\), leads to a lower relative risky investment for small \(CCR_{j}^{\text {(total)}}\) values, but catches up for large \(CCR_{j}^{\text {(total)}}\) values. This is a desired behavior, since it implies a lower risk of a pension shortening for small \(CCR_{j}^{\text {(total)}}\) values within the range \([100 \%, 110 \%]\), without losing the upside potential of a pension enhancement for \(CCR_{j}^{\text {(total)}}\) values close to \(125 \%\). Furthermore, except for the region \(CCR_{j}^{\text {(total)}} \in [100 \%, 105 \%]\), the average optimal risky relative investment increases with the \(CCR_{j}^{\text {(total)}}\) value. This is meaningful since with a higher \(CCR_{j}^{\text {(total)}}\) value, one is less exposed to the risk of falling outside the lower boundary of the \(CCR_{j}^{\text {(total)}}\) corridor (pension reduction risk). The higher risky investment close to \(100 \%\) is also reasonable. Imagine the \(CCR_{j}^{\text {(total)}}\) is close to \(100 \%\); if now the risky allocation is very small, even some positive return of the underlying asset class cannot compensate for the outflows (cohort-related pensions), which pushes the \(CCR_{j}^{\text {(total)}}\) below \(100 \%\) with a high probability.

6.2 Simulation study

We next carry out a simulation study with a finite time horizon of \({\tilde{T}} = T + 10 \Delta = T + 10\) years. We start with the initial states \(S_{0} = (V_{0}, P_{0}) = (10,000, (277 | 270 | 258))\) for \(\alpha = (0 \% | 20 \% | 40 \%)\), where \(P_{0}\) comes from Eq. (3.16) (see also Eq. (4.24)). Intuitively, the higher the buffer parameter \(\alpha \), the lower is the initial pension \(P_{0}\). Moreover, the initial distribution to the investment and the buffer portfolio is \(\frac{V_{j}^{\text {(buffer)}}(T)}{V_{0}} = (0 \% | 2.7 \% | 6.9 \%)\), \(\frac{V_{j}^{\text {(inv)}}(T)}{V_{0}} = (100 \% | 97.3 \% | 93.1 \%)\) due to Eq. (4.16). The initial capital coverage ratio is by definition \(CCR_{j}^{\text {(total)}}(T) {\mathop {=}\limits ^{(4.27)}} \frac{{\bar{p}} - \alpha }{1 - \alpha } = (112.5 \% | 115.6 \% | 120.8 \%)\) (at every re-set time \(t_{n}\) as well). We simulate 10, 000 paths of the relevant processes where we use the optimal stationary solution as asset allocation that corresponds to the closest grid point.

We assume that the average mortality (explained in Sect. 4) for the cohort is realized. We look at the optimal relative pension evolution \(\frac{P^{\star }(t)}{e^{- \lambda _{x(j)} (t-T)} P_{0}}\), where \(P^{\star }(t)\) denotes the cohort pension at time t under the optimal stationary asset allocation strategy \(a^{\star } = a^{\star }(S)\). We already explained earlier that \(\frac{P^{\star }(t)}{e^{- \lambda _{x(j)} (t-T)} P_{0}} = \frac{P^{\star }(t + \Delta )}{e^{- \lambda _{x(j)} (t+\Delta -T)} P_{0}}\) indicates a stable individual pension for the customers in the cohort from time t to \(t + \Delta \), i.e. if \(\frac{P^{\star }(t)}{e^{- \lambda _{x(j)} (t-T)} P_{0}}\) is stable then the individual pensions keep stable. Consequently, due to the cohort view, \(\frac{P^{\star }(t + \Delta )}{P^{\star }(t) e^{- \lambda _{x(j)} \Delta }} < 100 \%\) indicates an individual pension reduction, \(\frac{P^{\star }(t + \Delta )}{P^{\star }(t) e^{- \lambda _{x(j)} \Delta }} = 100 \%\) a stable individual client’s pension development and \(\frac{P^{\star }(t + \Delta )}{P^{\star }(t) e^{- \lambda _{x(j)} \Delta }} > 100 \%\) an enhancement of the individual pension of the client members from time t to \(t + \Delta \).

In what follows we always look at the individual pension perspective in the cohort. Moreover, let \(V^{\star }(t)\) denote the total cohort wealth at time t under \(a^{\star } = a^{\star }(S)\). Analogously to the relative pension, we look at the optimal relative total wealth evolution \(\frac{V^{\star }(t)}{V_{0}}\). Note that \(\frac{P^{\star }(t)}{e^{- \lambda _{x(j)} (t-T)} P_{0}} = 1\) and \(\frac{V^{\star }(t)}{V_{0}} = 1\) at initial time \(t = T\).

Table 1 illustrates relevant probabilities of pension shortenings and enhancements. Table 2 provides risk and reward numbers for the relative pension and the total wealth. In general, we observe that a higher buffer parameter \(\alpha \) significantly improves the probabilities in Table 1 from a client’s perspective. In particular, the probability that the average individual pension that is to be paid out over the entire period is larger than the initial pension level \(P_{0}\) and the probability that there are more pension enhancements than reductions are quite high, especially for \(\alpha = 40 \%\). However, both the (relative) risk in terms of volatility and Value-at-Risk and the (relative) reward in terms of expected value do not suffer, which is remarkable. Actually the opposite is the case: A higher buffer parameter \(\alpha \) leads to a higher average of the relative pension level and a lower standard deviation (lower standard deviation of relative pension means a more stable pension development). Moreover, the worst case relative pensions in the tail (Value-at-Risk) also exceed the ones for smaller \(\alpha \). The single exception is the volatility of the pension, where \(\alpha = 20 \%\) shows a slightly smaller number than \(\alpha = 40 \%\). Those benefits of the \(\alpha > 0 \%\) portfolios comes at the cost of an initially lower pension level \(P_{0} = P_{0}(\alpha )\), which represents a tradeoff between the initial pension level and future pension properties. The selection of the case-specific optimal \(\alpha \) value, named \(\alpha ^{\star }\), depends on the respective target or criterion. If for instance the probability of at least one pension shortening shall coincide with a pre-defined probability \(p_{\text {red}}\), \(\alpha ^{\star }\) can be selected such that the corresponding probability comes closest to \(p_{\text {red}}\). Alternatively, \(\alpha ^{\star }\) could be selected such that the expectation of the sum of pension cash flows gets maximized.

In summary in terms of the relative individual cohort pension, one can see that \(\alpha = 40 \%\) outperforms the \(\alpha = (0 \% | 20 \%)\) strategies, and the \(\alpha = 20 \%\) outperforms the \(\alpha = 0 \%\) strategy. The higher the buffer parameter \(\alpha \), the more the downside risk is limited, and even the upside potential is enhanced.

We draw the conclusion that our proposed model, where we divide our total wealth into an investment and a buffer portfolio, leads to a sophisticated optimal dynamic asset allocation policy that is performance seeking while reducing downside risks and improving probabilities; hence provides remarkable and meaningful benefits to clients.

Finally, we simulate the optimal strategy \(a^{\star }\), the pension \(P^{\star }\) and the wealth \(V^{\star }\) evolution under three different scenarios: a bullish, a bearish and a non-directional market. In each simulation we need to generate the risk driver Z for every period. Figure 2 provides the corresponding underlying risky asset class price processes, denoted by \(V_{Z}(t)\), that correspond to the development of Z. Next, Fig. 3 illustrates the evolution of the relative pension, Fig. 4 visualizes the very same but for the total wealth. From Fig. 3 we infer that

-

1.

the individual pensions increase more often for higher \(\alpha \) and even end up with a higher terminal pension (relative to \(P_{0}\)) in a bullish market,

-

2.

the individual pensions decrease only once for \(\alpha = 40 \%\) but twice for the remaining (\(\alpha = (0 \% | 20 \%)\)) in a bearish market,

-

3.

and the individual pensions do not decline for \(\alpha = 40 \%\) but do decrease and behave very unstable and volatile for the remaining (\(\alpha = (0 \% | 20 \%)\)) in a non-directional market.

In total, the number of pension reductions for \(\alpha > 0 \%\) (with buffer) never exceeds the respective number for \(\alpha = 0 \%\) (no buffer) in the considered representative scenarios.

Figure 5 complements the former figures on the pension and wealth evolution with a visualization of the \(CCR_{j}^{\text {(total)}}(t)\) development. While the \(CCR_{j}^{\text {(total)}}(t)\) values for \(\alpha > 0 \%\) (with buffer) generally do not fall short the respective values for \(\alpha = 0 \%\) (no buffer), the \(\alpha > 0 \%\) portfolios need less pension shortenings to keep the \(CCR_{j}^{\text {(total)}}(t)\) inside its target corridor. Therefore, with selecting a higher \(\alpha \%\) value, one can improve the management of the wealth such that the \(CCR_{j}^{\text {(total)}}(t)\) remains more stable in its corridor without reducing the pension.

In addition, Figs. 6 and 7 show the optimal asset allocation policies \(a^{\star }(t)\) for the investment wealth and \({\hat{\pi }}^{\star \text {(total)}}(t)\) for the total wealth. One can observe that the optimal strategy for \(\alpha = 40 \%\) frequently behaves opposed to the optimal strategy for \(\alpha = 0 \%\). Moreover, Fig. 8 illustrates the kernel density estimates for the path-wise average pensions and wealths. Note that for one path, a higher path-wise average pension automatically implies a higher total sum of pension cash flows received by the customer. The figure points out that although the distributions of the wealths are rather close among all considered \(\alpha \) values (see also expected values and volatilities in Table 2), the distributions of the relative pensions differ. The pension distribution for \(\alpha = 40 \%\) has lower probability on the left end and is more shifted to the right; this is also reflected in Table 2. Thus, a pension fund client that follows the \(\alpha = 40 \%\) strategy benefits in terms of the pension distribution since lower pensions compared to the initial pension level \(P_{0}\) are on average less likely. However, as already explained, these benefits come at the cost of an initially lower pension level \(P_{0}\). We would like to comment that the averages over all simulated \(a^{\star }(t)\) and \({\hat{\pi }}^{\star \text {(total)}}(t)\) values are very close to each other among the three considered buffer parameters \(\alpha \). However, as analyzed above, the relative performance and characteristics of the optimal portfolios with a buffer (\(\alpha > 0 \%\)) are superior over the optimal portfolio without a buffer (\(\alpha = 0 \%\)). This shows that the dynamics and the structure of the asset allocation plays a crucial role.

Closing this numerical case study, we provide a brief discussion about the fund behavior in good and bad times of the financial market: In good times, as the buffer represents a certain percentage of the difference between the assets and the liabilities, the buffer increases if the fund develops nicely. In this way, the development of the Geometric Brownian Motion is damped compared to the system without a buffer and the potential for pension’s increase is reduced. However, we observe in Fig. 4a that a pension increase happens more often for \(\alpha > 0\%\) than for \(\alpha = 0\%\), even though the growth in the pension rates is smaller. This behavior is reasonable as a higher buffer (higher \(\alpha \)) goes hand in hand with a higher funding at the beginning and at every reset time (which comes at the cost of a smaller initial pension rate), compared to the system without a buffer (\(\alpha = 0\%\)). Moreover, when \(\alpha > 0\%\), then the fund wealth increase is dampened but the buffer portfolio gets increased compared to the \(\alpha = 0\%\) case, which can be regarded as a profit lock-in feature. If \({\bar{p}}\) would increase, then a pension increase becomes more likely for the \(\alpha = 0\%\) case as well, but as the pension increases happen more frequently and the funding after a reset of the system becomes higher, the difference between the pension rates before and after adjustments will be rather small. Generally, Eq. (3.19) visualizes that if \({\bar{p}}\) approaches the maximum value of \(125\%\), the possible \(\alpha \) values approach \(0\%\). From a risk management perspective, when \({\bar{p}}\) is already very high (and thus the probability of pension reductions rather small), then the additional buffering through \(\alpha \) might not be that beneficial anymore.

Let us assume the fund is having bad times, but \(CCR_{j}^{\text {(total)}}(t)\) is close to but still above \(100 \%\). In this case, the buffer account is almost empty. And therefore, in such a scenario where the fund recently went down, one keeps almost everything of the wealth in the fund. But still the fund remains well-funded and there is no need for a pension reduction. Therefore, the implication on the fund in such bad times is actually not that bad. If the fund decreases further and \(CCR_{j}^{\text {(total)}}(t)\) falls below \(100\%\), then the system gets adjusted and the buffer is refilled. A nice feature of the buffer approaching zero if \(CCR_{j}^{\text {(total)}}(t)\) approaches \(100\%\) is the interpretation of a market re-entry component. As traditional strategic asset allocations realize big losses in V-markets due to falling short in timing market re-entries after market declines, the fund in the paper still holds risky assets in bad times and hence stays invested and can participate if the market recovers. The fund’s wealth is not shifted to the buffer account as long as the fund stays well-funded (\(CCR_{j}^{\text {(total)}}(t) \ge 100\%\)). Hence, the definition of the buffer portfolio allows to stay invested during preceding bad times.

7 Conclusion

In this article a possible post-retirement phase implementation of an innovative pension plan without guarantees, currently under discussion in Germany, was studied. We transferred the product rules into a mathematical model and solved the resulting portfolio selection problem via the discrete-time Bellman equation, to the best of our knowledge for the first time. We draw the following conclusions: