Abstract

Information is valuable to decision makers in both the public and private sectors. The New Public Management (NPM) reform in the public sector has stressed the importance of performance information to politicians, public managers, and citizens. Information economics has acknowledged the significance of information as a market determinant. However, as a discipline, information economics has not developed a cost concept that describes the negative value of an incorrect decision caused by the non-use or misuse of information. It also lacks a systematic theoretical approach describing the factors that cause such non-use or misuse. This article aims to fill these two research gaps. It defines infonomic costs (ICs) as the negative value of information non-use or misuse, denoting the benefits lost by the decision maker. Through an exploratory literature review, a second new concept, the information expectation gap (IEG), is developed to explain why the non-use or misuse leading to ICs occurs. The IEG also explains how information and knowledge asymmetries come into existence. The conceptual work presented here offers a novel understanding and terminology to both academics and practitioners. Practitioners can use the IEG concept in information system management because it identifies several dysfunctions that they may face in their information systems. For academics, this research opens up new theoretical conversations about the different types of information system dysfunctions that cause market errors and adverse policy decisions.


Introduction

Information is important for decision-making (Coleman 1988: 104). Nevertheless, information is sometimes misused or not used in both the public (e.g., Jansen 2008; Van Dooren and Van de Walle 2008) and private sectors (e.g., Boulding et al. 1997). Misuse and non-use have consequences for decision-making and thus for utility. Deciding between mutually exclusive actions lies at the heart of decision-making, and information often provides facts about the costs related to different action options. The concept of opportunity costs has been used to describe all real costs associated with different choices of action, including the loss of money, time, energy, and derived pleasure/utility. In this study, it is argued that the decision maker sometimes assesses opportunity costs incorrectly because information is misused or is not searched for properly. Previous research, however, has not developed a cost concept that describes the negative value of an incorrect decision caused by the non-use or misuse of information. Thus, the first aim of this research is to develop such a concept. It is also argued here that information system dysfunctions relating to information non-use and misuse have been underexamined in the field of information economics. Therefore, the second objective is to develop a concept that describes these types of information system dysfunctions. The third purpose is to clarify the distinction between information and knowledge asymmetries, a topic that has not been properly addressed in previous literature. The research questions are as follows:

1. How should the costs arising from information non-use and misuse be defined?
2. What factors contribute to information non-use and misuse, and how do they do so?
3. How does information asymmetry differ from knowledge asymmetry?

The research method is based on constructivist epistemology (cf. Guba and Lincoln 1998) and the logic of abductive reasoning (cf. Peirce 1998). This means that the new concepts constructed in this research are derived from previous scientific arguments describing either dysfunctional information uses and their meanings to decision makers or the opportunity costs relating to actions. This study draws on some of the most relevant and important arguments from previous research traditions to construct new theoretical concepts and the mental models that justify them. For this reason, an exploratory literature review is conducted, in which comprehensiveness is less important than focus on the research questions. In this type of review, the researcher examines the literature to find novel ideas and insights. A model of information systems described by Joos (2000: 7) is used to identify the components of information systems. These components (or themes), shown in Fig. 1, guide the literature search by pointing out the possible sources of dysfunctions, and the search concentrates on dysfunctions relating to these components. To understand the information expectation gap (IEG), knowledge asymmetries, and infonomic costs (ICs), previous theoretical and empirical results from psychology, economics, computer science, philosophy, and information science are used. This type of synthesis can help future research understand non-use and misuse better because it brings together dispersed findings on their causes and points to new directions for empirical studies that examine these causes together.

This research contributes to the current literature by developing three new theoretical concepts in economics: knowledge asymmetry, ICs, and the IEG. The IEG helps in understanding the use, non-use, and misuse of information. It also clarifies how imperfect information can be and which factors in information systems cause problems for knowledge sharing (cf. Obeso and Sarabia 2016), transfer (cf. Powell and Snellman 2004; Janicot et al. 2016), and management (cf. Ahmad et al. 2015). Additionally, the IEG explains why information asymmetries, knowledge asymmetries, and ICs can arise and why these market dysfunctions persist even though signaling, screening, and other methods are used to balance information asymmetries. For academics, this study offers new theoretical concepts and research topics to be addressed in future research. Practitioners can use the theoretical framework underlying the concepts to improve the operation of information systems and to troubleshoot them in general.

This article is divided into four sections. The first section briefly explains the main concepts used in this study. The second section depicts the components of an IEG and the mechanism that creates it. The third section deepens the understanding of how the IEG comes into existence. The last section presents the conclusions.

Knowledge Asymmetry, Infonomic Costs, and Information Expectation Gap

While information asymmetry refers to discrepancies in the information available to different market agents (Akerlof 1995), knowledge asymmetry describes differences in the knowledge gained from the same information delivered to the market agents. Knowledge asymmetry prevails when agent A gains more knowledge than agent B from exactly the same information. For example, both parties involved in a market transaction might have access to the same information channels and information. Although both parties have the same information, their interpretations of it, and the ways they use it in a transaction, can differ significantly. Moreover, how the information is delivered to the agents can make a difference. For instance, knowledge asymmetry can result when agent A suffers from information overload, but agent B obtains the information needed for decision-making in a more optimal way, meaning that the amount of information is sufficient and that it enters agent B's awareness at an appropriate pace. At first, agent A obtains the same information as agent B plus some additional information that agent B lacks. Initially, information asymmetry favors agent A, but in the end, agent B gains more knowledge than agent A because agent B has absorbed the information more effectively. Thus, knowledge asymmetry has come into existence. If agent B does not share this knowledge with agent A, a new form of information asymmetry emerges.

The above case does not mean that knowledge asymmetry always precedes information asymmetry. Knowledge asymmetry in the present can create new information asymmetries in the future, but previous information asymmetries can also cause the current knowledge asymmetry, because previous information asymmetries affect how new information is perceived. For this reason, cause and effect can be hard to distinguish in the relationship between knowledge and information asymmetries. Nonetheless, it is useful to separate these two phenomena because knowledge asymmetry points to insights, whereas information asymmetry refers to availability.

Here, the definition of ICs is presented in relation to opportunity costs. ICs occur when an agent’s best possible utility, in the present or in the future, is lost. If opportunity costs refer to benefits that are lost when a particular course of action is pursued instead of a mutually exclusive alternative, ICs are the costs of incorrect decisions that are based on incomplete knowledge about the utility benefits of different opportunities. If the decision and actions are wrong because of the incorrect knowledge about the true opportunity costs, ICs denote the difference between the real value of the disregarded option and the real value of the chosen option. The incomplete knowledge about the utility benefits occurs because the agent ignores important information or fails to transform information into correct knowledge about the utility benefits of different opportunities in the decision-making situation. Ignoring information includes blocking the relevant facts and preventing the search for them. In this research, five reasons why people ignore information or fail to transform it into correct knowledge are acknowledged as follows:

1. The information provider is untrustworthy.
2. Invalid and unreliable information is being produced and used, and the user does not know this.
3. The information channel is not working properly.
4. The context of use inhibits the information use.
5. The information user's attributes hinder information searching or the correct use of the information.
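The definition of ICs given above can be expressed as a small calculation. The sketch below is an illustration under simplifying assumptions of our own, not a method taken from the literature reviewed here: each option is assumed to have a single real utility value, the agent is assumed to choose the option with the highest perceived value, and, when there are more than two options, the IC is measured against the best disregarded option. Under these assumptions, the IC equals the maximum real value V(j) over the disregarded options j minus V(c), the real value of the chosen option c, and it is zero when the choice happens to be correct. The function name `infonomic_cost` is hypothetical.

```python
from __future__ import annotations


def infonomic_cost(real_values: dict[str, float],
                   perceived_values: dict[str, float]) -> float:
    """Return the infonomic cost (IC) of choosing on perceived values.

    Hypothetical illustration: the agent picks the option it *perceives* as
    best; the IC is the real value of the best disregarded option minus the
    real value of the chosen option, and zero if the choice was in fact optimal.
    Both dicts are assumed to share the same option names as keys.
    """
    # The decision is driven by (possibly misused or incomplete) perceptions.
    chosen = max(perceived_values, key=perceived_values.__getitem__)
    # Real values of every option the agent passed over.
    disregarded = [value for option, value in real_values.items() if option != chosen]
    if not disregarded:
        return 0.0  # With a single option there is nothing to forgo.
    return max(0.0, max(disregarded) - real_values[chosen])


# Misread information makes B look better than A, although A is really better.
print(infonomic_cost({"A": 100.0, "B": 80.0}, {"A": 70.0, "B": 90.0}))
```

For example, if option A is really worth 100 and option B 80, but misused information leads the agent to perceive them as 70 and 90, the agent chooses B and the IC is 100 − 80 = 20. With accurate perceptions, the agent chooses A and the IC is 0.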

To summarize, ICs are caused by information non-use and misuse. The IEG as a phenomenon explains this non-use and misuse. The agent might or might not be aware of the IEG. The awareness depends on the agent’s ability to recognize the factors that cause the IEG.

The non-use or misuse occurs because the components of the information system are incongruent. The IEG describes this incongruence. The components are the information provider, the information, the information channel, the information user, and the context where the information user operates (see Fig. 1). The incongruity refers to the agent’s unmet expectations about the information, the information provider, information channel, or the context of use. However, this incongruity is a complex phenomenon because the information and its provider, the information channel, and the context of use influence the agent’s expectations. Overall, the incongruence between two or more components explains why knowledge asymmetries and information asymmetries emerge. Thus, the IEG broadens our current knowledge of information asymmetries by describing how social contexts, information providers, information channels, the information’s features, and the user’s attributes can create these asymmetries.

What Causes Information Expectation Gaps?

Five distinctive factors can cause IEGs: the features of the information, the qualities of the information provider, the information channel, the attributes of the information user, and the context of information use. The features of the information are its form, quantity and quality, and essence. The information channel is the medium that conveys the information, and the information provider is the operator producing the information. The attributes of the information user consist of the ability to use and understand the information. This ability originates from many factors, such as the information need; the user's opinions regarding the information provider, the usability and usefulness of the information, and the information channel; intellectual capabilities, personal history, traits, moods, habits, age, and genetics; and the operational environment, which refers to the context of information use. The context as a concept encompasses the social pressures surrounding the user. Our motives, beliefs, and epistemological and ontological views form the basis of our information needs. Motives are derived from needs that serve hedonism and/or self-actualization. The ontological view refers to how an individual understands the nature of reality. The epistemological view relates to conceptions of what can be known and how a person can acquire knowledge.

The user has expectations about the information provider, the information channel, the information, and the context of information use. The ability to use and understand information determines these expectations, and the information provider, the information channel, the information, and the context of information use have to live up to these expectations, at least to some extent, even if the expectations are not realistic or logical. If the information provider, the information channel, the information, or the context of information use does not match the expectations, there is an IEG, and the information might be abandoned or incorrectly used.

How Can the Information’s Features Cause an Information Expectation Gap?

The agent's expectations about the information can differ from the features of the information the agent encounters. If the information's features exceed expectations, there are two possible outcomes. In the first, the agent accepts and uses the information since it exceeds his or her expectations in a positive way. In the second, the agent disregards the information because it seems excessive, implausible, uninteresting, irrational, or incomprehensible, or because it seems impossible according to the agent's worldview. In other words, the agent lacks the motivation to go through all the information or the ability to understand it. The agent's will to understand the information may also be absent. Disregarding the information might mean its complete rejection or a tunnel vision that focuses only on a certain part or parts of the information. A situation where the information exceeds expectations is called a positive IEG, which is a rather underexamined phenomenon in information economics.

If the information’s features fall below the agent’s expectations, he or she starts searching for more information, disregards the information, or uses it anyway because better information is unavailable. Different screening processes (cf. Stiglitz 1975) describe how the agent tries to solve the situation where he or she faces a negative IEG. On the other hand, signaling (cf. Spence 1973) demonstrates the agent’s willingness to solve others’ negative IEGs. A situation where information asymmetry prevails does not guarantee that a negative or a positive IEG exists. Information asymmetries can prevail without the existence of an IEG. Agents’ expectations about the imperfect information determine whether or not an IEG exists.
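The distinction between positive, negative, and absent IEGs can be sketched as a comparison between an expected and an encountered level of some feature of the information. This is a deliberately simplified illustration: it assumes, hypothetically, that both levels can be scored on one common scale and that the agent tolerates small deviations (the `tolerance` parameter). The names `IEG` and `classify_ieg` are our own. The sketch only classifies the gap; it does not model whether the agent then accepts the information, rejects it, or searches further.

```python
from enum import Enum


class IEG(Enum):
    """Possible states of an information expectation gap (IEG)."""
    POSITIVE = "positive"  # encountered information exceeds expectations
    NEGATIVE = "negative"  # encountered information falls short of expectations
    NONE = "none"          # encountered information matches expectations


def classify_ieg(expected: float, encountered: float, tolerance: float = 0.0) -> IEG:
    """Classify the gap between an expected and an encountered feature level.

    Hypothetical scoring: both levels sit on one common scale (e.g., a rating of
    quantity or quality), and deviations within +/- tolerance count as a match.
    """
    gap = encountered - expected
    if gap > tolerance:
        return IEG.POSITIVE
    if gap < -tolerance:
        return IEG.NEGATIVE
    return IEG.NONE


# Same information (level 7.0), different expectations, different IEGs.
for agent, expectation in {"agent_a": 5.0, "agent_b": 8.0, "agent_c": 7.0}.items():
    print(agent, classify_ieg(expectation, 7.0, tolerance=0.5).value)
```

Because the classification depends on each agent's expectations rather than on the information alone, agents facing identical information can end up with different IEGs, and an agent who holds less information than another can still have no IEG if what he or she receives matches expectations.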

The agent might or might not be aware of a negative or positive IEG since misperceptions exist. In general, when expectations about the information are considered, IEGs arise for the following reasons:

1. The form of the information differs from the expected form.
2. The essence of the information deviates from the essence of the phenomenon that the information is supposed to describe, according to the user (e.g., complex objects are presented in a simple fashion).
3. Too little or too much information is available to the user.
4. The information's quality is either below or above the level expected and required in order to make a decision.

Knowledge can be either propositional (expressed by voices, characters, signs, pictures, etc.) or tacit (know-how knowledge); hence, information is either propositional or tacit when it carries knowledge.¹ According to Polanyi (2009), tacit knowledge is unformulated knowledge that actively and continuously affects a person and can mainly be observed in that person's actions. Koivunen (1997) describes tacit and explicit knowledge as elements that support each other. Koivunen adds that our tacit knowledge automatically receives and processes most of the sensory data that we encounter in the course of our lives, and we are mostly unaware of this process. Niiniluoto (1996) states that propositional knowledge has a truth value. On the one hand, if a propositional sentence makes a claim that accurately describes the state of the world, the truth value of that sentence is true. On the other hand, if the propositional sentence makes a claim that does not describe the state of the world, the truth value is false.

Propositional knowledge can be divided into eight forms, as follows: singular, general, statistical, modal, conditional, explanatory, operational, and evaluation knowledge (Niiniluoto 1996). Singular knowledge describes or interprets individual things, facts, or events. Exceptional historical events or our own unique observations of mundane life fall under this category. General knowledge is related to common cause-and-effect relationships, facts, and regularities (see also Baumard 1999). General knowledge can be found in the laws and the theories of the natural sciences. Statistical knowledge is conceptually located between singular and general knowledge, and it presents knowledge about the qualities of a certain population (Niiniluoto 1996). Modal knowledge describes the possibilities, necessities, and other modalities that exist (Girle 2009). Conditional knowledge is expressed with counterfactual statements. Explanatory knowledge elucidates why certain events happen the way they do or why the state of the world is as it is. For example, explanatory knowledge can describe how nature works or how and why humans do certain activities. Operational knowledge informs us what ways and means we can use to achieve our goals. Operational knowledge is know-how knowledge, but it differs from tacit knowledge since it can be described with words. Evaluation knowledge declares that something is valuable according to the criteria used to assess it. For instance, the value of a piece of gold is estimated based on its market price (Niiniluoto 1996).

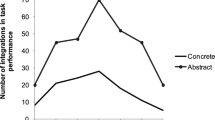

The form of information has been shown to matter to the user. Borgida and Nisbett (1977: 258) find that statistical information has little effect on course choice, whereas the face-to-face method producing singular knowledge significantly influences students and their course choices. Colarelli et al. (2002) state that natural selection has shaped the human mind to prefer information about other people gained through face-to-face interactions and narratives rather than through statistical analyses, a contrast that Moore (1996) describes as an “identified” versus a “statistical” life. As a result, people's beliefs, decisions, and actions are based on face-to-face and narrative information that depicts other people (Moore 1996).

Terhune and Kennedy (1963) report that people who think in a more complex fashion rely more on conceptual information about relationships (general knowledge) than more simplistically thinking people, who prefer concrete data from isolated facts (singular knowledge). Dermer's (1973) research shows that individual differences affect perceptions about the importance of information, although the study does not measure how significant such differences are. Preferring one form over another is a common feature of information seeking. A professional gambler tossing a coin wants modal knowledge about the chances of winning, and singular knowledge from a previous coin toss will not help him or her. A scientist may seek explanatory knowledge rather than evaluation knowledge. The question that triggers the information seeking defines the kind of propositional knowledge that people will accept in different situations. The phenomenon is so ordinary that it is easily overlooked, yet this bias toward a certain kind of information has the potential to create problems in decision-making. Moreover, signaling the wrong form of information to customers, for example, may be unfruitful.

The essence of the information that carries knowledge and the essence of phenomena affect the way that the information is considered by the user.² If a phenomenon is complex but the information describing it is simple, an IEG can arise. Hayek (1967) notes that relations determined by the functions of a few variables are typical of simple phenomena. According to Axelrod and Cohen (2008), something is complex when its behavior cannot be reduced to the behavior of its constituent parts, and complex objects are always capable of surprising the observer. Stacey (1996: 10) states:

The science of complexity studies the fundamental properties of nonlinear feedback networks and particularly of complex adaptive networks. Complex adaptive systems consist of a number of components, or agents, that interact with each other according to sets of rules that require them to examine and respond to each other’s behavior in order to improve their behavior and thus the behavior of the system they comprise. In other words, such systems operate in a manner that constitutes learning. Because those learning systems operate in environments that consist mainly of other learning systems, it follows that together they form a coevolving suprasystem that, in a sense, creates and learns its way into the future.

Tsoukas (2004: 4) argues that complex systems require complex knowing. Complex knowing involves forms of understanding that consider beliefs, desires, time, changes, events, power, circularity, and feedback loops. Complex forms of understanding view the world as full of possibilities, which are enacted by purposeful agents embedded in powerful social practices. If complex and simple phenomena are defined as explained above, a person may find it quite irrational to describe a simple phenomenon in a complex way and vice versa. Therefore, understanding the essence of information may be crucial to successful signaling. Similarly, screening for simple information about complex phenomena can cause problems.

Prior research has demonstrated how complex information is rejected when simpler information is needed. On the one hand, Lindblom (1959: 87) argues that scientists offer overly complex theories to practitioners in administration; thus, government officials reject these theories since incremental changes in governmental systems do not require such a comprehensive and complex approach. Thus, simpler information, theory, and approach seem more useful to officials. On the other hand, Mishkin (2004) states that the “keep it simple, stupid” (KISS) principle requires the articulation of a monetary policy to be as simple as possible because communication becomes straightforward in this way. A central bank should only name the target inflation regime as its goal since discussing both output and inflation goals could confuse the general public and make it more likely that people would perceive the central bank’s mission as the elimination of short-run output fluctuations, thus worsening the time-inconsistency problem (Mishkin 2004).

As it is possible to require simple instead of complex information, it is also possible to expect complex instead of simple information. For instance, Terano (2008) notes that to cope with real phenomena, we must go beyond the KISS principle in the models of agent-based social simulation. Adelman (1999) points out that economics as a discipline has enshrined the KISS principle as an overarching tenet (taught in graduate schools) that can only be violated at the violator’s own peril. The KISS principle demands simple explanations and universally valid propositions, leading to major fallacies, with significant, harmful consequences for both theory and policy (Adelman 1999). Lindblom and Cohen (1979) also argue that research in social science can only be an increment to other knowledge; thus, research knowledge by itself is too simple to solve complex social problems that policymakers attempt to solve. The statements by Adelman, Terano, and Lindblom and Cohen clearly demonstrate unfulfilled expectations about information.

The main problem with the essence of information is that it is hard to determine whether the KISS principle is the key to success in a given situation. For example, mastering when to apply and when not to apply the KISS principle has been shown to be relevant to the high performance of salespeople (cf. Macintosh and Gentry 1999). However, screening for simple information about a complex phenomenon may cause problems. It can be concluded that the essence of information is an important factor that causes IEGs.

The amount of information also plays a role in the process that leads to an IEG. Studies have shown that too much information can lead to information overload, which can be defined as a condition in which the amount of stimuli coming through the senses exceeds a person's processing capacity. Information overload occurs when too many stimuli enter our awareness at the same time or when consecutive inputs enter our processing system too quickly (Speier et al. 1999: 338–339). Research in a number of disciplines (e.g., accounting, finance, consumer behavior) has shown that information overload decreases decision quality (Chewning and Harrell 1990), increases the time needed to make decisions, and heightens the confusion related to the decision (Malhotra et al. 1982).

Information overload has mostly been understood as information load (Speier et al. 1999: 339), whose content has varied to some degree across studies. Casey (1980) and O'Reilly (1980) use the number of cues, Shield (1980) applies the number of alternative outcomes, and Iselin (1988) uses the overall diversity of the information to describe information load. Hart (1986) points out that adding demands to a task (i.e., task complexity) has a direct impact on mental workload and can even cause information overload. Schick et al. (1990) suggest that information overload occurs when the time needed to meet a decision maker's processing requirements exceeds the time available for such processing, which degrades decision quality (Hahn et al. 1992). It can be inferred that signaling may fail if too much information is conveyed with too little time to process it. Additionally, actors in the public and private sectors who want to exploit information overload can deliberately cause it while signaling (e.g., Hood 2010). Information asymmetries can be created this way (cf. Stiglitz 2002).
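The time-based view of overload attributed to Schick et al. (1990) reduces to a simple comparison. The sketch assumes, hypothetically, that processing time grows linearly with the number of cues (one of the load measures mentioned above); the function names and the per-cue time are illustrative and are not taken from the cited studies. A second check mirrors the pace-based condition described by Speier et al. (1999), in which consecutive inputs arrive faster than they can be processed.

```python
def is_overloaded(n_cues: int, seconds_per_cue: float, seconds_available: float) -> bool:
    """Time-based overload check (after the idea attributed to Schick et al. 1990).

    Assumption for illustration: processing time grows linearly with the number
    of cues. Overload is flagged when the time needed exceeds the time available.
    """
    time_needed = n_cues * seconds_per_cue
    return time_needed > seconds_available


def pace_exceeds_capacity(cues_per_second: float, cues_processed_per_second: float) -> bool:
    """Pace-based check: consecutive inputs arrive faster than they are processed."""
    return cues_per_second > cues_processed_per_second


print(is_overloaded(40, 3.0, 60.0))  # 120 s needed vs. 60 s available
print(is_overloaded(15, 3.0, 60.0))  # 45 s needed vs. 60 s available
print(pace_exceeds_capacity(5.0, 2.0))
```

For example, 40 cues at an assumed 3 seconds each need 120 seconds; with 60 seconds available, the check flags overload, whereas 15 cues (45 seconds) would not. In these terms, a sender who exploits overload while signaling would raise the number of cues or shorten the time the receiver has to process them.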

Lack of information can be as severe a problem as information overload. Research has shown how harmful it can be to market operations when a producer or a customer has too little information. For example, Greenwald and Stiglitz (1986: 259) state that imperfect information causes distortions in the economy. Jaffe et al. (2005) argue that incomplete information plays a role in market failures, which in turn negatively influence innovation. One indication of incomplete information is information asymmetry, which can lead to moral hazard, adverse selection, opportunism, screening, and signaling, all of which have been studied by various researchers.

The quality of information creates IEGs and can cause incomplete information. For instance, Wang and Strong (1996: 5) find that poor information quality can have economic impacts. They also demonstrate that different aspects of quality are evaluated and appreciated in various ways by the information user. Jung et al. (2005) show that information quality significantly affects decision-making. According to Miller (1996) and the International Accounting Standards Board (IASB) (2008, 2010), the quality of information is determined by many factors. These factors are linked in different ways and form a complex entity that depicts diverse aspects of quality. Applying Miller’s (1996), Stigler’s (1961), and Nelson’s (1970) studies to the conceptual framework of the IASB (2008, 2010) indicates usefulness as the main attribute of quality, comprising the following aspects:

1. Relevance, which means that information has:
   (a) Predictive
   (b) Confirmatory
   (c) Operational/practical values
2. Reliability/faithful representation, which is formed from:
   (a) Neutrality, including:
       (i) Prudence (does not overestimate income or underestimate costs)
   (b) Completeness, which is constructed from:
       (i) Substance over form
       (ii) A true and fair view
       (iii) Accessibility
       (iv) Security
   (c) Validity, which is characterized by:
       (i) A correct level of accuracy (macro or micro)
       (ii) Being error-free
       (iii) Timeliness
3. Understandability, which is built by:
   (a) Correct format³
   (b) Proper semantics
   (c) Accurate and comprehensive content
4. Compatibility, which is derived from:
   (a) Coherence
5. Transparency
6. Credibility (the process that produces information has credibility)
7. Cost efficiency⁴
8. Legitimacy (a common perception that information is proper, decent, and appropriate according to social values, norms, beliefs, and concepts)

The list of aspects relating to quality is extensive, and its complexity can exceed the processing power of the human brain,⁵ although it is questionable whether all the terms listed above are really necessary since some of them overlap in previous literature. For example, according to the IASB (2010), transparency and a true and fair view are different words describing information that has the qualitative characteristics of relevance and representational faithfulness, enhanced by comparability, verifiability, timeliness, and understandability. However, transparency can also be understood as the ability to offer access to all the relevant data when needed. Hence, at a minimum, transparency includes relevance, accessibility, timeliness, the correct level of accuracy, and completeness (cf. Naciri 2009). This minimum combination of the factors required for transparency shows that the concepts on the list can interact in multiple ways, which breaks the conceptual boundaries and hierarchies depicted on the list. Transparency is needed to confirm completeness, timeliness, relevance, accessibility, and the right level of accuracy, but these features are also required to confirm transparency. This circularity shows how intertwined the concepts are and how ambiguous and open to interpretation the concepts on the list and their hierarchy are. For example, if an agent lacks transparency, he or she cannot determine whether the information is relevant and reliable. Conversely, if the information is irrelevant, the agent starts to wonder whether transparency is really present.

Furthermore, a true and fair view can be regarded as a product formed from usefulness, validity, neutrality, and completeness (e.g., Kirk 2006). The existence of a true and fair view demonstrates that any combination of concepts could form a new concept, and not all of the possible combinations of concepts have been named and presented on the list. Naming all the combinations would make the list very long since the combinations would multiply rapidly; only imagination and mental abilities would limit the concepts and the combinations on the list. In reality, the reasons for either abandoning or using the information can be numerous. Additionally, if someone evaluates all the connections among different aspects of quality, one can observe that an agent needs, for example, validity to confirm every other aspect of quality, and confirming validity requires confirming every other aspect on the list if the agent is to confirm the true quality of the information. Thus, quality is constructed from different aspects/concepts, which form an interactive network. Since each concept on the list influences every other concept, 300 different interactions exist among the concepts. If there are problems with one aspect of quality, these will be reflected in the other concepts, making quality hard to control, manage, and understand. Overall, the complexity of quality is a mechanism that creates imperfections in information and mismatches between the agent and the information. This complexity therefore creates positive and negative IEGs and triggers the need for signaling and screening. However, signaling and screening can hardly achieve full certainty about all aspects of quality. Thus, contracts, warranties, trademarks, and other mechanisms are used to secure market transactions (cf. Stiglitz 2002).
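The figure of 300 interactions is consistent with counting unordered pairs of concepts, n(n − 1)/2, for n = 25; the exact n depends on which entries of the list are treated as distinct concepts, so this reading is our assumption rather than a derivation given in the text. The sketch below also counts the combinations of two or more concepts, 2^n − n − 1, to illustrate how quickly the number of possible composite concepts (such as a true and fair view) grows. The function names are hypothetical.

```python
from math import comb


def pairwise_interactions(n_concepts: int) -> int:
    """Number of unordered pairs of concepts: n(n - 1) / 2."""
    return comb(n_concepts, 2)


def composite_combinations(n_concepts: int) -> int:
    """Number of combinations of two or more concepts: 2**n - n - 1.

    All 2**n subsets, minus the n single concepts and the empty set.
    """
    return 2 ** n_concepts - n_concepts - 1


for n in (5, 10, 25):
    print(n, pairwise_interactions(n), composite_combinations(n))
```

For 25 concepts, this gives 300 pairs but 33,554,406 combinations of two or more concepts, which illustrates why naming every combination on the list would be impractical.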

Usually, more than one piece of information is evaluated at the same time. All the information available to users affects how they perceive and value different types of information (e.g., Herr et al. 1991). Thus, the evaluation of one type of information is influenced by that of another type, making the process complex and problematic. It also means that IEGs can arise in many different ways, and a user can have multiple IEGs at any given moment. For instance, there could be a positive IEG between the user and information A, a negative IEG between the user and information B, and no IEG between the user and information C. Mapping the IEGs and solving the problems that cause them become harder as more information becomes available to the decision maker. For this reason, the information society can create complex networks that produce IEGs. The real challenge for future human-centered computer systems is therefore to provide the right information, at the right time, in the right place, in the right way, and to the right person (cf. Fischer 2012).
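One way to see why mapping IEGs gets harder as information accumulates is to count the joint states a user could be in. The sketch below assumes only the three states named in the text (positive, negative, or no IEG per piece of information) and, unlike the text, treats the items independently, ignoring how evaluating one item shifts the evaluation of another; the item labels are hypothetical.

```python
from itertools import product

# Possible IEG between the user and a single piece of information, as in the text.
STATES = ("positive", "negative", "none")


def ieg_configurations(items):
    """Yield every joint IEG state a user could hold towards the given items.

    Each item is treated independently, so interactions between evaluations
    (e.g., Herr et al. 1991) are deliberately ignored here.
    """
    for states in product(STATES, repeat=len(items)):
        yield dict(zip(items, states))


configs = list(ieg_configurations(["A", "B", "C"]))
print(len(configs))  # 27, i.e. 3 ** 3

# The example from the text is one of these configurations.
example = {"A": "positive", "B": "negative", "C": "none"}
print(example in configs)  # True

# The number of configurations to map grows as 3 ** n.
for n in (1, 5, 10):
    print(n, 3 ** n)
```

Even under this simplification the state space grows exponentially (59,049 configurations for ten items); interactions between evaluations would only add to the difficulty.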

Information User’s Attributes as Causes of IEGs

Genetics,Footnote 6 environments,Footnote 7 and habitsFootnote 8 influence cognitive capabilities, which are central to understanding market information. Taylor and Dunnette’s (1974: 442) research shows that the decision maker’s cognitive attributes significantly affect the evaluative aspects of the decision-making process, that is, judging information diagnosticity and integrating the information into a high-quality solution, particularly in pre-decision and decision-point behaviors. Taylor and Dunnette (1974: 441–442) also point out that the decision maker’s motivational and personality attributes influence the idiosyncratic or stylistic behaviors that lead to a choice (e.g., the amount of information sought and the processing rate required) and are especially influential on post-decisional behaviors (e.g., decision confidence and decision flexibility). Taber and Lodge (2006) suggest that motivated reasoning, and thus prior beliefs, affect information processing. Nyhan and Reifler (2010) note that correcting misbeliefs can be difficult. Festinger (1957) argues that agents are sometimes motivated to use defense mechanisms against information, and these mechanisms distort their information processing. For example, people can block information or pick up only those parts that fit their belief systems. Festinger adds that people can change and manipulate the information content so that it fits their existing cognitive structures more easily.

According to Dweck (1999), people explain and react differently to the same event (or information in this case) because they have different implicit views, indicating that the same market information will cause different reactions in different agents. Neisser et al. (1996) state that individuals differ in their abilities to understand complex concepts, adapt efficiently to the surrounding environment, learn from past experiences, utilize various forms of reasoning, and overcome obstacles by figuring out the correct solutions. These individual differences can be substantial, but they are never completely consistent (Neisser et al. 1996). Thus, a given person’s level of intellectual performance varies on different occasions and in different domains since occasions and domains are judged with diverse criteria. To conclude, cognitive capabilities can explain expectations about information, as well as knowledge asymmetries, information asymmetries, and misperceptions that, for example, hide the IEGs from the information user.

Epistemological and ontological views can also lead to IEGs. Ontological perceptions are cognitive structures that influence information processing; moreover, according to Morgan and Smircich (1980), ontological views affect epistemological preferences. In epistemology, there has been a long-standing debate about whether empiricism, rationalism, idealism, or constructivism can produce correct knowledge in different situations.Footnote 9 Consider, for example, the statement that knowledge can be either a priori (based on rationalism) or a posteriori (based on empiricism).Footnote 10 If the information seeker is searching for information about a phenomenon that is a posteriori by nature and based on experience, a priori knowledge will not necessarily satisfy the seeker. Likewise, a posteriori knowledge might not satisfy an information seeker who is looking for a priori knowledge. Overall, information based on a knowledge-acquisition method other than the one the agent expects, given his or her epistemological views, might lead to an IEG. The history of science, for instance, is full of disputes concerning proper research methodology. Furthermore, how an agent justifies knowledge can shape how he or she perceives information concerning knowledge justification. For instance, if the agent happens to believe in the regress problemFootnote 11 or infinitism,Footnote 12 searching for information that would justify knowledge in the market context can seem futile. Additionally, Pyrrhonian skepticism and academic skepticism both have the potential to cause information blocking and to reduce the need for information involving knowledge justification.

Context of Information Use, Channel, and Provider Causing IEGs

The information itself might be viewed as unreliable if the user considers the provider untrustworthy, and an IEG might arise as a result. Thus, an agent values not only the information but also its source. A lack of trust in, or biased views of, the source can distort the information-interpretation process and prevent information use, which may lead to ICs. For instance, the seller can seem less sincere if the buyer uses persuasion knowledge in a transaction situation (Campbell and Kirmani 2000: 69–70). In such cases, trust issues exist between the buyer and the information provider. When people are mistrustful, they spontaneously activate associations that are incongruent with the given message (Schul et al. 2004). Conversely, what Peirce (1877) describes as the method of authority relies on positive perceptions of the information channel or provider.

The contexts in which agents operate influence them and their evaluations of the information, the provider, and the channel. For example, Lindblom and Cohen (1979) argue that social research provides inadequate information for policymakers who aim to solve social problems, even though the research information provides all the elements required in scientific environments. Thus, information that is useful in one context may not be useful in another; even if the information relates meaningfully to both contexts, its usefulness can disappear when the context changes. Adelman (1999) makes arguments similar to those of Lindblom and Cohen (1979), but her assertions concern economic studies and their harmful implications for policy solutions. Additionally, the information asymmetry literature has demonstrated how the context shapes the messages used in signals (cf. Stiglitz 1975; Milgrom and Roberts 1986).

In computer science, the concept of user experience (UX) refers to the context in which a person encounters a system, an encounter with a beginning and an end.Footnote 13 In this research, the agent encounters information channels in private and public sector contexts, and the UX designates how people experience these encounters overall. The information channel can be as simple as two people talking to each other or as complex as the Internet. The UX can involve either an individual or a group of people encountering the information channel together, and the UX can cause IEGs. For example, whether information will flow from a website to the information user depends largely on the UX over the long term, since a positive UX determines whether the customer will visit the website again (Garrett 2011). The quality of the UX also determines whether an online service will be accepted by users (Wu and Wang 2015). The UX is present in simple and complex, technical and non-technical information channels alike. For instance, people seek the most easily accessed information (such as by asking co-workers) rather than searching for high-quality information that is more difficult to find (O’Reilly 1982). O’Reilly’s finding can be explained with the UX concept: a UX exists between an employee and his or her co-workers, between people and computers, between people and magazines, and so on, and efficiency, which is closely related to accessibility, is one aspect of the UX. The widespread existence of the UX makes it an important concept, yet it remains largely unexplored in information economics; it therefore opens up many new research possibilities.

Multiple factors can affect a person’s UX. A UX white paper (Roto et al. 2011: 10) classifies them into three main categories:

1. Context of the user and the system. Whenever the context changes, the UX can change even if the information channel remains constant. In the UX domain, context refers to a mix of social (e.g., a crowded space full of sellers and buyers vs. online shopping), physical (e.g., using the information in a store vs. at home), task (someone may multitask when encountering the information channel, or the task may require symbolic or spatial information), and technical contexts (e.g., the information can arrive via different technical devices or through non-technical means). Thus, information can be blocked because the context inhibits the use of, or the search for, information from the channel. Information use can also be suboptimal because of a mismatch between the task context and the information format (cf. Vessey 1991).

2. User’s state. The UX is dynamic by nature, so a person’s interaction with an information channel is a dynamic process. The user’s state includes, for example, the agent’s motivation to use the information channel, his or her mood,Footnote 14 expectations, and current mental and physical resources. The process involving the information, the agent, the channel, and the context is constantly evolving; as a result, IEGs might arise and later disappear. For instance, the available information may at first fulfill the user’s expectations, but the context, combined with the channel, displeases the user, who chooses to ignore the information. After a while, the user searches for and finds the usable information (which he or she initially ignored) because its context and channel have changed.

3. System properties. A user’s perception of the information channel’s properties influences the UX. Important for the UX are the properties designed into the information channel (e.g., functionality, interactive behavior, responsiveness, and even esthetics) and those that the user has added or changed, or that result from its use.

The functionality aspect mentioned in the system dimension of the UX has been studied extensively in computer science, and the usability literature has listed several factors that cause functionality problems. In this article, the usability of an information channel is assessed through five factors derived from computer science. These factors describe how people interact with systems, whether the system is a technical device, such as a computer, or a simple information channel, such as pen and paper or two people talking to each other. Nielsen (1993: 24–26) lists the following five usability factors in his well-known work:

1. Learnability. Learning to use the information channel should not require much time and effort; otherwise, the agent will be unable to get work done quickly with the system.

2. Efficiency. Once the agent has learned the system, a high level of productivity and efficiency should be possible.

3. Memorability. The agent should easily remember how to use the system properly, without relearning everything each time he or she returns to it after a period of disuse.

4. Errors. The information channel should not cause errors, and recovering from errors should be easy.

5. Satisfaction. The information channel needs to be pleasant to use, and the agent should feel satisfied when doing so.
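The five factors can be treated as a checklist when auditing an information channel. The sketch below is a hypothetical illustration, not Nielsen's method: the 1 to 5 rating scale, the threshold of 3, and the two user groups are assumptions made for the example. It shows how the same channel can pass for one group and fail for another, in line with Goodwin's (1987) observation below.

```python
from enum import Enum


class Usability(Enum):
    """Nielsen's (1993) five usability factors, paraphrased from the list above."""
    LEARNABILITY = "easy to learn"
    EFFICIENCY = "efficient once learned"
    MEMORABILITY = "easy to remember after a period of disuse"
    ERRORS = "few errors and easy recovery"
    SATISFACTION = "pleasant to use"


def weak_factors(ratings, threshold=3):
    """Return the factors rated below `threshold` on a hypothetical 1-5 scale.

    Every factor must be rated, so that no dimension is silently skipped.
    """
    missing = set(Usability) - set(ratings)
    if missing:
        raise ValueError(f"unrated factors: {sorted(f.name for f in missing)}")
    return [factor for factor in Usability if ratings[factor] < threshold]


# Hypothetical ratings of the same channel by two user groups.
experts = {factor: 4 for factor in Usability}
novices = {**experts, Usability.LEARNABILITY: 2, Usability.MEMORABILITY: 2}

print(weak_factors(experts))                     # []
print([f.name for f in weak_factors(novices)])   # ['LEARNABILITY', 'MEMORABILITY']
```

Requiring a rating for every factor is a design choice: a factor left unexamined is exactly where an unnoticed usability problem, and hence an IEG, could hide.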

Poor usability of the information channel can cause IEGs. The problematic nature of usability has been demonstrated in previous studies. For instance, Goodwin (1987) states that the characteristics that make a system usable for one set of users can render it unusable for another. If the information channel is unusable for some users, the information is not transmitted to them via that system. Nonetheless, the usability concept is important because it offers ways to improve information channels in the market context. For instance, “including usability testing as a part of evaluation improves the quality and effectiveness of computer-mediated instruction” (Crowther et al. 2004: 289).

The UX also relates to organizational variables and social pressures, which both affect how information is perceived, used, or not used by an agent.Footnote 15 For example, Asch (1956) reports that social pressure can influence information use; therefore, it can create IEGs. According to Van Maanen and Schein (1979: 1–2):

any organizational culture consists broadly of long standing rules of thumb, a somewhat special language and ideology that help edit a member’s everyday experience, shared standards of relevance as to the critical aspects of the work that is being accomplished, matter-of-fact prejudices, models for social etiquette and demeanor, certain customs and rituals suggestive of how members are to relate to colleagues, subordinates, superiors, and outsiders, and a sort of residual category of some rather plain ‘horse sense’ regarding what is appropriate and smart behavior within the organization and what is not. All of these cultural modes of thinking, feeling, and doing are, of course, fragmented to some degree giving rise within large organizations to various subcultures or organizational segments.

Mortimer and Lorence (1979) demonstrate some of the effects discussed by Van Maanen and Schein (1979), finding that occupational socialization affects individual values. Values, in turn, influence information processing and decision-making (cf. Maker and Hu 2003; Chang and Gotcher 2010). Chatman (1991: 459–464) also finds evidence of socialization in public accounting firms. Such socialization likewise affects decision-making behavior and information processing in decision-making situations.

The UX factors highlight the interplay among the context, the information, the provider, the channel, and the user. The mechanism that creates IEGs can be assessed when the context, the UX, and the usability factors are evaluated side by side with the features of the information and its provider and the rest of the user attributes. This article has demonstrated the complexity of the mechanism underlying IEGs.

How IEG Arises

User attributes, contexts, information providers, information channels, and information features influence the three gaps presented in this section, which together explain the existence of an IEG. First, there might be a gap between what an agent knows (x) and what he or she would like to know (y).Footnote 16 For example, when y > x, the agent would like to know more than he or she already knows. The relation between x and y largely determines whether the agent is willing or unwilling to search for new information. If x > y, there are no strong incentives to look up the available information, and an IEG is present.

Second, a gap could exist between what an agent knows (x) and what he or she could know (z) in the future from all the information to which he or she has access but has not yet accessed. A positive IEG might form when x < z, and a negative IEG might occur when x > z. Inaccurate information and misunderstanding can produce the situation where x > z.

Third, a gap could occur between what an agent would like to know (y) and what he or she could know (z) in the future from all the information to which he or she has access. An IEG can also form from the relation between y and z: when z > y, a positive IEG exists, whereas when y > z, a negative IEG prevails. Notably, the interplay among all three gaps affects the formation of an IEG, so a researcher should not examine the interaction between only two variables. Table 1 summarizes some of the ways in which an IEG can arise from the interplay among these gaps.Footnote 17
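The three gaps can be written down as simple comparisons. The following is a minimal sketch assuming the 0 to 100 knowledge scale from the note on x and y, extended here to z; the class and function names are hypothetical. It encodes only the pairwise rules stated above and does not reproduce the combined categories of Table 1, which involve interpretive choices.

```python
from dataclasses import dataclass
from enum import Enum


class Sign(Enum):
    POSITIVE = "positive"
    NEGATIVE = "negative"
    NONE = "none"


@dataclass(frozen=True)
class KnowledgeEstimates:
    """The agent's own estimates on a hypothetical 0-100 knowledge scale."""
    x: float  # what the agent knows
    y: float  # what the agent would like to know
    z: float  # what the agent could know from accessible information

    def __post_init__(self):
        for name in ("x", "y", "z"):
            value = getattr(self, name)
            if not 0 <= value <= 100:
                raise ValueError(f"{name} must lie in [0, 100], got {value}")


def sign_of(available, reference):
    """Positive if z exceeds the reference level, negative if it falls short."""
    if available > reference:
        return Sign.POSITIVE
    if available < reference:
        return Sign.NEGATIVE
    return Sign.NONE


def analyse(e):
    return {
        # Gap 1 (x vs y): y > x gives the agent an incentive to search.
        "search_incentive": e.y > e.x,
        # Gap 1: the text states that an IEG is present when x > y (sign unspecified).
        "ieg_x_y": e.x > e.y,
        # Gap 2 (x vs z): positive if x < z, negative if x > z.
        "gap_x_z": sign_of(e.z, e.x),
        # Gap 3 (y vs z): positive if z > y, negative if y > z.
        "gap_y_z": sign_of(e.z, e.y),
    }


result = analyse(KnowledgeEstimates(x=40, y=70, z=60))
print(result["search_incentive"], result["gap_x_z"].value, result["gap_y_z"].value)
# True positive negative
```

In this example the agent wants to learn more and could learn something (positive x–z gap), yet the accessible information cannot meet what he or she would like to know (negative y–z gap), which illustrates why the gaps should be read together rather than one pair at a time.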

The x, y, and z values are all estimates made by the agent, who evaluates the information channels, the providers, the contexts, his or her own attributes and needs, and the information available. Subjectivity and irrationality in attribute evaluation and decision-making behavior are possible, introducing an element of randomness into how an IEG emerges. Thus, the formation of an IEG does not necessarily follow reasoned decision-makingFootnote 18 and rationality. In fact, indeterminacy,Footnote 19 non-reasoned decision-making (NRDM),Footnote 20 the paradox of choice,Footnote 21 the Dunning–Kruger effect,Footnote 22 and irrational behavior can occur and cause an IEG. The randomness associated with an IEG can make it difficult to predict.
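Because x, y, and z are the agent's own estimates, the same underlying situation can yield different IEGs. The simulation below is a hypothetical illustration of this randomness, not a model proposed in the text: it assumes that the agent's estimates of y and z are perturbed by independent Gaussian noise and records how often the y–z gap is judged positive, negative, or absent.

```python
import random


def gap_sign(available, reference):
    """Same convention as in the text: positive if z > y, negative if y > z."""
    if available > reference:
        return "positive"
    if available < reference:
        return "negative"
    return "none"


def sign_frequencies(y_true, z_true, noise_sd, trials=10_000, seed=0):
    """Share of trials in which the y-z gap is judged positive, negative, or absent.

    Each trial perturbs the agent's estimates of y and z with independent
    Gaussian noise (a hypothetical model of subjective misjudgement).
    """
    rng = random.Random(seed)
    counts = {"positive": 0, "negative": 0, "none": 0}
    for _ in range(trials):
        y_est = y_true + rng.gauss(0, noise_sd)
        z_est = z_true + rng.gauss(0, noise_sd)
        counts[gap_sign(z_est, y_est)] += 1
    return {state: n / trials for state, n in counts.items()}


print(sign_frequencies(60, 65, noise_sd=0))   # noiseless: always positive
print(sign_frequencies(60, 65, noise_sd=10))  # noisy: the sign sometimes flips
```

With no noise the gap is always positive; once the estimates are noisy, a sizeable share of trials produces a negative IEG even though the underlying values favor a positive one, which is one way to read the claim that IEGs are hard to predict.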

Conclusions

Information misuse and non-use and their costs have been underexamined, not only in information economics but also in knowledge management and the knowledge economy literature. This research has studied which factors contribute to information non-use and misuse and how they do so. Additionally, it has introduced and examined a cost concept related to misuse and non-use, as well as the differences between knowledge asymmetry and information asymmetry. The first result is the IC concept, which describes the costs arising from information misuse and non-use. The second result is the IEG concept, which identifies several reasons for information misuse and non-use. The IEG describes in detail how complex, fragile, vulnerable, and prone to dysfunction information systems are and why these dysfunctions can lead to non-use and misuse. As a concept, the IEG combines previous theories and empirical results from different branches of science to create a new holistic view of information system dysfunctions, many of which are well-established findings. The information system dysfunctions presented in this article have implications for market and government failures, such as ICs and information asymmetries. The study’s third major result is the distinction between knowledge and information asymmetries. This distinction is important because knowledge asymmetry refers to the insights gained from the available information, whereas information asymmetry refers to information availability. Knowing the difference between them helps agents understand how information asymmetries persist.

The IEG is a timeless problem. If agents know how to mind the IEG, a certain awareness is present: they acknowledge and recognize what they know and do not know, and why. This awareness offers a gateway to better information. Practitioners and academics can benefit from the IEG by using it to understand and dismantle the knowledge-sharing barriers in their current information systems. Overcoming these obstacles can enhance information systems and enable more informed decision-making, leading to greater well-being. For example, the IEG can help detect whether the information’s features, the user’s attributes, the channel, the provider, or the operating environment in which the user functions is causing knowledge-sharing barriers or unwanted knowledge transformation, in which correct knowledge turns into incorrect knowledge.

The exploratory literature review conducted in this study has been selective and might have left out some important aspects. Further theoretical research is therefore recommended to correct and/or broaden the ideas presented in this article. Academics can also conduct empirical research on IEGs and ICs. For example, the magnitude of ICs in private companies, public organizations, and households is a completely unexplored empirical question to date. Moreover, how often decision makers encounter IEGs in different decision-making situations is another question that calls for further study. Since information is important to decision-making, pursuing these empirical questions can be fruitful.

Notes

Information is either physical or linguistic (cf. Niiniluoto 1996).

Since information conveys knowledge, the arguments in this paper based on the essence of knowledge are also valid for the essence of information.

Cost efficient means that the marginal cost of the acquired information should equal the marginal return. For instance, search and experience are forms of information seeking, and they maximize the expected utility when an agent searches or experiences until the marginal expected cost of searching or experiencing becomes greater than its marginal expected return (Nelson 1970: 313). However, utility can be understood and measured in many ways (see Fumagalli 2013).

According to Malhotra (1982: 419), the respondents experience information overload when they receive information on 15, 20, or 25 attributes. There are 25 intertwined attributes of quality, according to the above list.

Sternberg (2012) describes in more detail how the environment influences intelligence.

For example, Hillman et al. (2008) find that physical exercise affects both the brain and cognition.

See Markie (2004) regarding what this debate is about.

Nordin et al. (1999) state that a priori means knowledge before experience, and a posteriori means knowledge after experience.

According to Bonjour (2010), the regress problem means that every propositional statement leads to an infinite chain of justificatory arguments that cannot be completed; thus, a person cannot justify any knowledge.

Klein (1998: 919) states that infinitism means an infinite chain of justificatory arguments that is non-circular in nature.

The UX concept in this paper is derived from a UX white paper (Roto et al. 2011).

Diener (2000: 38) suggests that moods and emotions influence reactions to the events we encounter. Happy and unhappy people react differently to the same stimuli/information.

March (1991) demonstrates how a firm’s variables influence learning.

In this example, x and y denote the level of knowledge that can be indicated by any number. For example, a perfectly rational homo economicus could operate with a knowledge level that equals 100. If an agent wants to be omniscient and perfectly rational, then he or she would like to have a knowledge level of 100. If the agent is not omniscient and perfectly rational at the moment, then he or she has a knowledge level value of less than 100.

Table 1 is presented here for demonstration purposes only, and its content includes interpretive choices. Readers can identify the same formulas under different IEG categories.

According to Stone (2014: 197), reasoned decision-making uses reasons in the decision-making process to identify a set of possible options and to reduce those options to a single choice.

This paper uses Elster’s (1987) definition of indeterminacy.

Stone (2014: 199) states, “A decision made using NRDM is not a decision in which no reasoning of any kind took place. It is a decision in which reasoning was not used at a particular point in the decision-making process. One can engage in NRDM in order to resolve an indeterminacy even if that indeterminacy was the result of a lengthy and strenuous reasoning process, a process that (whatever the time and energy involved) was simply not enough to generate a unique decision.” In this paper’s context, an agent decides whether or not to use some information in the decision-making, and his or her ultimate decision can be based on gut feeling, not rationality. Moreover, the agent’s own skills can be assessed with the help of gut feeling.

Schwartz (2004) implies that providing more relevant and personally important options to the decision maker will lead to a poorer choice, which degrades the decision maker’s satisfaction. The modern information society is full of complex information that poses challenges to everyday decision-making.

According to Kruger and Dunning (1999), people can fail to recognize their incompetence, which contributes to the creation of IEGs.
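The search rule in the note on Nelson (1970) can be sketched as a simple stopping procedure. This is an illustrative sketch under stated assumptions: the marginal expected returns are hypothetical numbers, they are assumed to diminish with each further search, and the marginal cost is held constant. Under those assumptions the agent keeps searching while the next step's expected return is at least its cost and stops as soon as the cost becomes greater.

```python
def search_steps(marginal_returns, marginal_cost):
    """Count search steps under the stopping rule in the note on Nelson (1970).

    The agent keeps searching while the marginal expected return of the next
    step is at least its marginal expected cost, and stops as soon as the
    cost becomes greater. Returns are assumed to be diminishing.
    """
    steps = 0
    for expected_return in marginal_returns:
        if marginal_cost > expected_return:
            break
        steps += 1
    return steps


# Hypothetical diminishing marginal expected returns of successive searches.
returns = [10, 6, 4, 2.5, 1]
print(search_steps(returns, marginal_cost=3))    # 3
print(search_steps(returns, marginal_cost=0.5))  # 5
```

If returns were not diminishing, stopping at the first unprofitable step could forgo later profitable ones, which is why the sketch makes that assumption explicit.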

References

Adaval, R., & Wyer, R. S. (1998). The role of narratives in consumer information processing. Journal of Consumer Psychology, 7, 207–245.

Adelman, I. (1999). Fallacies in development theory and their implications for policy. In G. Meier & J. Stiglitz (Eds.), Frontiers of development economics. Washington: World Bank and Oxford University Press.

Ahmad, N., Lodhi, M. S., Zaman, K., & Naseem, I. (2015). Knowledge management: a gateway for organizational performance. Journal of the Knowledge Economy, 7, 1–18.

Akerlof, G. (1995). The market for “lemons”: quality uncertainty and the market mechanism. In S. Estrin & A. Marin (Eds.), Essential readings in economics (pp. 175–188). New York: St. Martin’s Press.

Asch, S. E. (1956). Studies of independence and conformity: I. A minority of one against a unanimous majority. Psychological Monographs: General and Applied, 70, 1–70.

Axelrod, R., & Cohen, M. (2008). Harnessing complexity: organizational implications of a scientific frontier. New York: Basic Books.

Baumard, P. (1999). Tacit knowledge in organizations. London: SAGE Publications Ltd.

Bonjour, L. (2010). Epistemology: classic problems and contemporary responses. Lanham: Rowman & Littlefield.

Borgida, E., & Nisbett, R. E. (1977). The differential impact of abstract vs. concrete information on decisions. Journal of Applied Social Psychology, 7, 258–271.

Boulding, W., Morgan, R., & Staelin, R. (1997). Pulling the plug to stop the new product drain. Journal of Marketing Research, 34, 164–176.

Campbell, M. C., & Kirmani, A. (2000). Consumers’ use of persuasion knowledge: the effects of accessibility and cognitive capacity on perceptions of an influence agent. Journal of Consumer Research, 27, 69–83.

Casey, C. J., Jr. (1980). Variation in accounting information load: the effect on loan officers’ predictions of bankruptcy. Accounting Review, 55, 36–49.

Chang, K. H., & Gotcher, D. F. (2010). Conflict-coordination learning in marketing channel relationships: the distributor view. Industrial Marketing Management, 39, 287–297.

Chatman, J. A. (1991). Matching people and organizations: selection and socialization in public accounting firms. Administrative Science Quarterly, 36, 459–484.

Chewning, E. G., & Harrell, A. M. (1990). The effect of information load on decision makers’ cue utilization levels and decision quality in a financial distress decision task. Accounting, Organizations and Society, 15, 527–542.

Colarelli, S. M., Hechanova-Alampay, R., & Canali, K. G. (2002). Letters of recommendation: an evolutionary psychological perspective. Human Relations, 55, 315–344.

Coleman, J. S. (1988). Social capital in the creation of human capital. American Journal of Sociology, 94, 95–120.

Crowther, M. S., Keller, C. C., & Waddoups, G. L. (2004). Improving the quality and effectiveness of computer‐mediated instruction through usability evaluations. British Journal of Educational Technology, 35, 289–303.

Deary, I. J., Johnson, W., & Houlihan, L. M. (2009). Genetic foundations of human intelligence. Human Genetics, 126(1), 215–232.

Dermer, J. D. (1973). Cognitive characteristics and the perceived importance of information. Accounting Review, 48, 511–519.

Diener, E. (2000). Subjective well-being. Social Indicators Research Series, 37, 11–58.

Dweck, C. S. (1999). Self-theories: their role in motivation, personality, and development. Philadelphia: Psychology Press.

Elster, J. (1987). Solomonic judgments: against the best interest of the child. The University of Chicago Law Review, 54, 1–45.

Festinger, L. (1957). A theory of cognitive dissonance. Evanston: Row, Peterson.

Fischer, G. (2012). Context-aware systems: the ‘right’ information, at the ‘right’ time, in the ‘right’ place, in the ‘right’ way, to the ‘right’ person. In Proceedings of the International Working Conference on Advanced Visual Interfaces in Capri Island (Naples), Italy (pp. 287–294).

Fumagalli, R. (2013). The futile search for true utility. Economics and Philosophy, 29, 325–347.

Garrett, J. J. (2011). The elements of user experience: user-centered design for the web and beyond. Berkeley: New Riders.

Girle, R. (2009). Modal logics and philosophy. Durham: Acumen.

Goodwin, N. C. (1987). Functionality and usability. Communications of the ACM, 30, 229–233.

Greenwald, B. C., & Stiglitz, J. E. (1986). Externalities in economies with imperfect information and incomplete markets. The Quarterly Journal of Economics, 101, 229–264.

Guba, E. G., & Lincoln, Y. S. (1998). Competing paradigms in qualitative research. In N. K. Denzin & Y. S. Lincoln (Eds.), The Sage handbook of qualitative research (3rd ed., pp. 191–215). Thousand Oaks: Sage Publications.

Hahn, M., Lawson, R., & Lee, Y. G. (1992). The effects of time pressure and information load on decision quality. Psychology & Marketing, 9, 365–378.

Hart, S. G. (1986). Theory and measurement of human workload. In J. Zeidner (Ed.), Human productivity enhancement: training and human factors in system design (pp. 396–456). New York: Praeger.

Hayek, F. A. V. (1967). Studies in philosophy, politics and economics. London: Routledge & K. Paul.

Herr, P. M., Kardes, F. R., & Kim, J. (1991). Effects of word-of-mouth and product-attribute information on persuasion: an accessibility-diagnosticity perspective. Journal of Consumer Research, 17, 454–462.

Hillman, C. H., Erickson, K. I., & Kramer, A. F. (2008). Be smart, exercise your heart: exercise effects on brain and cognition. Nature Reviews Neuroscience, 9, 58–65.

Hood, C. (2010). The blame game: spin, bureaucracy, and self-preservation in government. Princeton: Princeton University Press.

International Accounting Standards Board (IASB). (2008). An improved conceptual framework for financial reporting. London: International Accounting Standards Committee Foundation (IASCF).

IASB. (2010). The conceptual framework for financial reporting 2010. London: IASCF.

Iselin, E. R. (1988). The effects of information load and information diversity on decision quality in a structured decision task. Accounting, Organizations and Society, 13, 147–164.

Jaffe, A. B., Newell, R. G., & Stavins, R. N. (2005). A tale of two market failures: technology and environmental policy. Ecological Economics, 54, 164–174.

Janicot, C., Mignon, S., & Walliser, E. (2016). Information process and value creation: an experimental study. Journal of the Knowledge Economy, 7(1), 276–291.

Jansen, E. P. (2008). New public management: perspectives on performance and the use of performance information. Financial Accountability & Management, 24(2), 169–191.

Joos, I. M. (2000). Computers in small bytes: a workbook for healthcare professionals. Boston: Jones and Bartlett.

Jung, W., Olfman, L., Ryan, T., & Park, Y. (2005). An experimental study of the effects of representational data quality on decision performance. In Proceedings of the American Conference on Information Systems (AMCIS) 2005 Conference in Omaha.

Kirk, N. (2006). Perceptions of the true and fair view concept: an empirical investigation. Abacus, 42(2), 205–235.

Klein, P. (1998). Foundationalism and the infinite regress of reasons. Philosophy and Phenomenological Research, 58, 919–925.

Koivunen, H. (1997). Hiljainen tieto [Tacit knowledge]. Helsinki: Otava.

Kruger, J., & Dunning, D. (1999). Unskilled and unaware of it: how difficulties in recognizing one’s own incompetence lead to inflated self-assessments. Journal of Personality and Social Psychology, 77(6), 1121–1134.

Levin, I. P., & Gaeth, G. J. (1988). How consumers are affected by the framing of attribute information before and after consuming the product. Journal of Consumer Research, 15, 374–378.

Lindblom, C. E. (1959). The science of “muddling through”. Public Administration Review, 19, 79–88.

Lindblom, C. E., & Cohen, D. K. (1979). Usable knowledge: social science and social problem solving. New Haven: Yale University Press.

Macintosh, G., & Gentry, J. W. (1999). Decision making in personal selling: testing the “KISS principle”. Psychology & Marketing, 16(5), 393–408.

Maker, J. K., & Hu, M. (2003). The priming of material values on consumer information processing of print advertisements. Journal of Current Issues & Research in Advertising, 25, 21–30.

Malhotra, N. K. (1982). Information load and consumer decision making. Journal of Consumer Research, 8, 419–430.

Malhotra, N. K., Jain, A. K., & Lagakos, S. W. (1982). The information overload controversy: an alternative viewpoint. The Journal of Marketing, 46, 27–37.

March, J. G. (1991). Exploration and exploitation in organizational learning. Organization Science, 2, 71–87.

Markie, P. (2004). Rationalism vs. empiricism. In E. N. Zalta (Ed.), The Stanford encyclopedia of philosophy (Summer 2015 ed.). http://plato.stanford.edu/archives/sum2015/entries/rationalism-empiricism/. Accessed 20 December 2016.

Milgrom, P., & Roberts, J. (1986). Price and advertising signals of product quality. The Journal of Political Economy, 94(4), 796–821.

Miller, H. (1996). The multiple dimensions of information quality. Information Systems Management, 13, 79–82.

Mishkin, F. S. (2004). Can central bank transparency go too far? (No. w10829). In C. Kent & S. Guttmann (Eds.), The future of inflation targeting. Reserve Bank of Australia. Sydney: J.S. McMillan Printing Group.

Moore, R. F. (1996). Caring for identified versus statistical lives: an evolutionary view of medical distributive justice. Ethology and Sociobiology, 17, 379–401.

Morgan, G., & Smircich, L. (1980). The case for qualitative research. Academy of Management Review, 5, 491–500.

Mortimer, J. T., & Lorence, J. (1979). Work experience and occupational value socialization: a longitudinal study. American Journal of Sociology, 84, 1361–1385.

Naciri, A. (2009). Internal and external aspects of corporate governance. New York: Routledge.

Neisser, U., Boodoo, G., Bouchard, T. J., Jr., Boykin, A. W., Brody, N., Ceci, S. J., & Sternberg, R. J. (1996). Intelligence: knowns and unknowns. American Psychologist, 51, 77–101.

Nelson, P. (1970). Information and consumer behavior. Journal of Political Economy, 78, 311–329.

Nielsen, J. (1993). Usability engineering. Boston: Academic.

Niiniluoto, I. (1996). Informaatio, tieto ja yhteiskunta: Filosofinen käsiteanalyysi [Information, knowledge and society: A philosophical conceptual analysis]. Helsinki: Edita.

Nordin, S., Heiskanen, J., & Niiniluoto, I. (1999). Filosofian historia: Länsimaisen järjen seikkailut Thaleesta postmodernismiin [History of philosophy: The adventures of Western reason from Thales to postmodernism]. Oulu: Pohjoinen.

Nyhan, B., & Reifler, J. (2010). When corrections fail: the persistence of political misperceptions. Political Behavior, 32, 303–330.

Obeso, M., & Sarabia, M. (2016). Knowledge and enterprises in developing countries: evidences from Chile. Journal of the Knowledge Economy. doi:10.1007/s13132-016-0374-8.

O’Reilly, C. A. (1980). Individuals and information overload in organizations: is more necessarily better? Academy of Management Journal, 23, 684–696.

O’Reilly, C. A. (1982). Variations in decision makers’ use of information sources: the impact of quality and accessibility of information. Academy of Management Journal, 25, 756–771.

Peirce, C. S. (1877). The fixation of belief. Popular Science Monthly, 12, 1–15.

Peirce, C. S. (1998). The essential Peirce: selected philosophical writings. Bloomington: Indiana University Press.

Plomin, R., & Spinath, F. M. (2004). Intelligence: genetics, genes, and genomics. Journal of Personality and Social Psychology, 86, 112–129.

Polanyi, M. (2009). The tacit dimension. Chicago: University of Chicago Press.

Powell, W. W., & Snellman, K. (2004). The knowledge economy. Annual Review of Sociology, 30, 199–220.

Roto, V., Law, E., Vermeeren, A., & Hoonhout, J. (2011). User experience white paper. Bringing clarity to the concept of user experience. Leibniz: Schloss Dagstuhl.

Schick, A. G., Gordon, L. A., & Haka, S. (1990). Information overload: a temporal approach. Accounting, Organizations and Society, 15, 199–220.

Schul, Y., Mayo, R., & Burnstein, E. (2004). Encoding under trust and distrust: the spontaneous activation of incongruent cognitions. Journal of Personality and Social Psychology, 86, 668–679.

Schwartz, B. (2004). The paradox of choice: why more is less. New York: Ecco.

Shields, M. D. (1980). Some effects of information load on search patterns used to analyze performance reports. Accounting, Organizations and Society, 5(4), 429–442.

Speier, C., Valacich, J. S., & Vessey, I. (1999). The influence of task interruption on individual decision making: an information overload perspective. Decision Sciences, 30, 337–360.

Spence, M. (1973). Job market signaling. The Quarterly Journal of Economics, 87, 355–374.

Stacey, R. D. (1996). Complexity and creativity in organizations. San Francisco: Berrett-Koehler Publishers.

Sternberg, R. J. (2012). Intelligence. Wiley Interdisciplinary Reviews: Cognitive Science, 3, 501–511.

Stigler, G. J. (1961). The economics of information. The Journal of Political Economy, 69, 213–225.

Stiglitz, J. E. (1975). The theory of “screening”, education, and the distribution of income. The American Economic Review, 65(3), 283–300.

Stiglitz, J. E. (2002). Information and the change in the paradigm in economics. The American Economic Review, 92(3), 460–501.

Stone, P. (2014). Non-reasoned decision-making. Economics and Philosophy, 30, 195–214.

Taber, C. S., & Lodge, M. (2006). Motivated skepticism in the evaluation of political beliefs. American Journal of Political Science, 50(3), 755–769.

Taylor, R. N., & Dunnette, M. D. (1974). Influence of dogmatism, risk-taking propensity, and intelligence on decision-making strategies for a sample of industrial managers. Journal of Applied Psychology, 59, 420–423.

Terano, T. (2008). Beyond the KISS principle for agent-based social simulation. Journal of Socio-informatics, 1(1), 175–187.

Terhune, K. W., & Kennedy, J. L. (1963). Exploratory analysis of a research and development game. Princeton: Princeton University Press.

Tsoukas, H. (2004). Complex knowledge: studies in organizational epistemology. Oxford: Oxford University Press.

Van Dooren, W., & Van de Walle, S. (Eds.). (2008). Performance information in the public sector: how it is used. Basingstoke: Palgrave Macmillan.

Van Maanen, J., & Schein, E. H. (1979). Toward a theory of organizational socialization. Cambridge: MIT Press.

Vessey, I. (1991). Cognitive fit: a theory-based analysis of the graphs versus tables literature. Decision Sciences, 22, 219–240.

Wang, R. Y., & Strong, D. M. (1996). Beyond accuracy: what data quality means to data consumers. Journal of Management Information Systems, 12, 5–33.

Wu, X. Y., & Wang, P. (2015). Measuring network user psychological experience quality. In K. Chan (Ed.), Proceedings of the 2015 International Conference on Testing and Measurement: Techniques and Applications (TMTA2015), 16–17 January 2015, Phuket Island, Thailand.

Cite this article

Rajala, T. Mind the Information Expectation Gap. J Knowl Econ 10, 104–125 (2019). https://doi.org/10.1007/s13132-016-0445-x

DOI: https://doi.org/10.1007/s13132-016-0445-x