Abstract

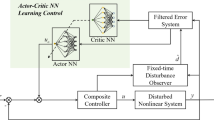

This paper proposes a robust near-optimal control algorithm for uncertain nonlinear systems with state constraints and input saturation. By incorporating a barrier function and a non-quadratic term, the robust stabilization problem with constraints and uncertainties is converted into an unconstrained optimal control problem of the nominal system, which requires the solution of the Hamilton-Jacobi-Bellman (HJB) equation. The proposed integral reinforcement learning (IRL)-based method can obtain an approximate solution of the HJB equation without requiring any knowledge of the system drift dynamics. An online gain-adjustable update law of the actor-critic architecture is developed to relax the persistence of excitation (PE) condition and ensure closed-loop stability throughout learning. The uniform ultimate boundedness of the closed-loop system is verified using Lyapunov’s direct method. Simulation results demonstrate the effectiveness and feasibility of the proposed method.
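The abstract does not state the barrier function or the non-quadratic term explicitly. As a minimal sketch, assuming the logarithmic barrier and the inverse-hyperbolic-tangent input penalty that are common in the constrained adaptive dynamic programming literature (the paper's exact definitions may differ), the two ingredients could look as follows for a scalar state and a scalar input. All function names and parameters (`barrier`, `barrier_inverse`, `input_cost`, `saturated_control`, `a`, `A`, `lam`, `r`) are hypothetical and chosen only for illustration.

```python
import math


def barrier(z, a, A):
    """Assumed log barrier: maps a constrained state z in (a, A), a < 0 < A,
    onto the whole real line, with barrier(0) = 0."""
    if not (a < 0.0 < A):
        raise ValueError("bounds must satisfy a < 0 < A")
    if not (a < z < A):
        raise ValueError("state lies outside its constraint set")
    return math.log((A / a) * (a - z) / (A - z))


def barrier_inverse(s, a, A):
    """Inverse of barrier(): maps any real s back into the interval (a, A)."""
    e = math.exp(s)
    return a * A * (1.0 - e) / (A - a * e)


def input_cost(u, lam, r=1.0):
    """Assumed non-quadratic penalty
    U(u) = 2 * integral_0^u lam * r * atanh(v / lam) dv,
    written in closed form; finite only for |u| < lam."""
    if abs(u) >= lam:
        raise ValueError("input magnitude must stay below the bound lam")
    return (2.0 * lam * r * u * math.atanh(u / lam)
            + lam ** 2 * r * math.log(1.0 - (u / lam) ** 2))


def saturated_control(grad_term, lam, r=1.0):
    """Minimizer of input_cost(u) + grad_term * u, where grad_term stands
    for g(x)^T dV/dx; the tanh keeps |u| < lam by construction."""
    return -lam * math.tanh(grad_term / (2.0 * lam * r))
```

Under these assumptions, a controller designed in the transformed coordinate `s = barrier(z, a, A)` cannot drive `z` out of `(a, A)` as long as `s` stays bounded, and the `tanh` in `saturated_control` enforces the input bound without an explicit saturation block. This is how a constrained problem can be restated as an unconstrained one.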

Author information

Yu-Qing Qiu received his B.S. degree from Nanjing University of Aeronautics and Astronautics, Nanjing, China, in 2017, and his M.S. degree from Northwestern Polytechnical University, Xi’an, China, in 2020, where he is currently pursuing a Ph.D. degree in navigation, guidance, and control. His research interests include robust control, optimal control, and their applications to aerial vehicles.

Yan Li received his B.S. and M.S. degrees in automatic control from Northwestern Polytechnical University, Xi’an, China, in 1995 and 1998, respectively, and his Ph.D. degree from Nanyang Technological University, Singapore, in 2001. He is currently a Professor with the Department of Navigation, Guidance and Control, Northwestern Polytechnical University. His current research interests include robust control, optimal control, fault-tolerant control, and flight control theory.

Zhong Wang received his B.S., M.S., and Ph.D. degrees in automatic control from Northwestern Polytechnical University, Xi’an, China, in 2013, 2016, and 2021, respectively. His current research interests include flight control, nonlinear control, and Bayesian inference.

Cite this article

Qiu, YQ., Li, Y. & Wang, Z. Robust Near-optimal Control for Constrained Nonlinear System via Integral Reinforcement Learning. Int. J. Control Autom. Syst. 21, 1319–1330 (2023). https://doi.org/10.1007/s12555-021-0674-z
