Abstract

The paper introduces two modifications for the well-known PSO method to solve global optimization problems. The first modification deals with the termination of the method and the second with the bounding of the so-called velocity in order to prevent the method from creating particles outside the domain range of the objective function. The modified method was tested on a series of global optimization problems from the relevant literature and the results are reported.

Similar content being viewed by others

Explore related subjects

Discover the latest articles, news and stories from top researchers in related subjects.Avoid common mistakes on your manuscript.

1 Introduction

The common problem of estimating the global minimum of a multi-dimensional function is given as

where \(S\subset R^{n}\) is defined by:

This problem appears in many scientific areas such as Yapo et al. (1998), Duan et al. (1992), chemistry Wales and Scheraga (1999), Pardalos et al. (1994), economics Gaing (2003) etc. During the past years many methods have been proposed in the relevant literature to tackle the global optimization problem. These methods usually are divided into two main categories: deterministic and stochastic. The first category includes techniques such as interval methods Lin and Stadtherr (2004), the TRUST method Barhen et al. (1997), fuzzy optimization methods Angelov (1994) etc. Typically, the deterministic methods are more hard to implement than stochastic methods and in some cases they require a—priory knowledge of the objective function. On the other hand, the stochastic methods are more easy to implement and hence there are a great variety of stochastic optimization methods such as: Simulated Annealing Kirkpatrick et al. (1983), Controlled Random Search Price (1977), Genetic Algorithms Goldberg (1989), Michaelewizc (1996) and Particle Swarm Optimization Kennedy and Eberhart (1999). The Particle Swarm Optimization method (PSO) is a , population-based method. The method creates a population of candidate solutions (swarm of particles) that are evolved simulating the movement of actual particles. For that purpose, each particle maintains its current position \(\overrightarrow{x}\) and a corresponding velocity \(\overrightarrow{u}\). The PSO method has been applied with success in a wide area of problems such as problems from physics de Moura Meneses et al. (2009), Shaw and Srivastava (2007), medicine Wachowiak et al. (2004), Marinakis (2008), economics Park et al. (2010), electronics Hosseini et al. (2019) etc. During the recent years, many modifications of the original PSO method have been suggested such as hybrid techniques Liu et al. (2005), Shi et al. (2005), methods to improve the calculation of the parameters of the method (such as inertia, velocity) Tang and Fang (2015), Yasuda and Iwasaki (2004), Shahzad et al. (2009), methods that adapt the control parameters of the PSO in order to learn only feasible solutions Isiet and Gadala (2019), chaotic quantum-behaved particle swarm optimization techniques Mariani et al. (2012), Araujo and Coelho (2008) etc.

Hybrid methods tends to locate more accurately the global minimum but usually the required an additional amount of function calls. On the other hand, methods that used modified versions of the velocity tends to avoid the explosion problem of the PSO technique but in some cases they are trapped to a local minimum instead of locating the global one.

This article proposes a new stopping rule specifically designed for PSO methods and a technique to bound the velocity avoiding calculation of the objective function outside of the domain definition S (Eq. 2). Various experiments were conducted on a series of well-known test functions from the relevant literature in order to demonstrated the efficiency of the proposed modifications and the results are listed.

The rest of this article is organized as follows: in Sect. 2 the general description of the PSO method is provided as well as the proposed modifications, in Sect. 3 the test functions are described with the conducted experiments and finally in Sect. 4 some conclusions are derived.

2 Method description

The general scheme of the PSO method is listed in Algorithm 1. The algorithm has m particles and each particle has n elements where n is the dimension of the objective function. Each of the m particles is associated with two vectors: the position \(x_{i}\) of the particle and the velocity \(u_{i}\) of the particle. At every iteration a new position \(x_{i}\) is calculated as a combination of the old position \(x_{i}\), the associated velocity \(u_{i}\), the previous best location of the particle \(p_{i}\) and the best location of all particle \(p_{\text{ best }}\). The new point \(x_{i}\) is updated using

In most cases the following scheme is used to update the j element of the velocity \(u_{i}\)

where

-

1.

The variables \(r_{1},\ r_{2}\) are random numbers in the range [0,1].

-

2.

The parameters \(c_{1},\ c_{2}\) are constant numbers usually in the range [1,2].

-

3.

The variable \(\omega\) is called inertia and typically is in the range [0,1]. This article uses an update mechanism for the inertia proposed in Shi and Eberhart (1998) and it is given below:

$$\begin{aligned} \omega =\omega _{\text{ max }}-\frac{k}{k_{\text{ max }}}\left( \omega _{\text{ max }}-\omega _{\text{ min }}\right) \end{aligned}$$(5)where k is the current number of iterations and \(k_{\text{ max }}\) the maximum number of allowed iterations. Also, \(\omega _{min},\ \omega _{max}\) are user defined and for this article \(\omega _{\text{ min }}=0.4,\ \omega _{\text{ max }}=0.9.\)

Nevertheless, it is clear that Eq. 3 can produce elements that are not belong to set S. This article proposes in Sect. 2.3 a technique that can prevent such cases.

The PSO algorithm terminates when the termination criteria are hold. In these article two stopping criteria are used: a termination check based on the variance of the best discovered minimum that was also proposed in the previous work Tsoulos (2008) and a new termination check based on the changes of the best located positions. The first procedure is defined in Sect. 2.1 and the second in Sect. 2.2.

2.1 Termination rule based on variance

At every iteration k the variance of \(f\left( p_{\text{ best }}\right)\) is measured. Denote this variance with \(\sigma ^{(k)}\). If there is no any new minimum found for a number of generations, then it is highly possible that the algorithm has found the global minimum and hence it should terminate. The algorithm terminates when

where \(\text{ klast }\) is the last iteration where a new minimum was found. As an example consider the function Rastrigin defined as

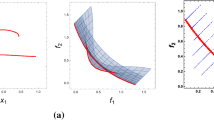

The global minimum in the range \([-\,1,1]^{2}\) is the value − 2.0 Now consider a PSO with 100 particles and let the algorithm terminates when the current number of iterations \(k\ge 200\). The progress of minimization for the Rastrigin function is outlined in Fig. 1. The algorithm located the global minimum at the early stages of the run and before iteration 20. Now consider the plot of the variance of best located value at Fig. 2. The variance decreases very smoothly and hence it can be used as a criterion to terminate the algorithm and preventing unnecessary function calls.

2.2 Termination rule based on similarity

Let us denote with \(p^{(k)}\) the table with the best locations of each particle at iteration k. Also set \(E=0\) at the beginning of the algorithm. At every iteration \(k>1\) we measure the quantity

If \(g<e\), where e a small positive number, then \(E=E+1\). In other words the similarity between the best location between two successive iterations is measured. The algorithm terminates when \(E\ge E_{\text{ max }}\), where \(E_{\text{ max }}\) a small positive integer number. To outline the efficiency of this stopping rule consider again the Rastrigin problem and the same test run with 100 particles and \(K_{max}=200\) as in Sect. 2.1 In Fig. 3 the graph of the quantity g is plotted against the number of iterations. As it can be noticed the value of g very soon tends to zero and hence this quantity can be used as a stopping rule.

2.3 Velocity bound technique

A common problem of the PSO method is the production of points that are outside of the domain range of the objective function. This problem produced when the changes in the velocity performed without control and it is usually called explosion. The velocity bound technique suggested here is used to prevent this effect with some changes in the basic PSO algorithm.

The first change is performed when the velocities are initialized. The new procedure for velocity initialization is shown in Algorithm 2.3. This procedure ensures that at least for the first iteration of the PSO method every point \(x_{i}\in S\). The second change is to alter the Eq. 4 in order to ensure that every \(x_{i}\in S\). The proposed change for the update of the velocities is listed in algorithm 2.3. This algorithm does not perform any changes in the velocity that will responsible for producing points \(x_{i}\) outside of the domain range.

3 Experiments

In order to measure the efficiency of the proposed modifications a series of test functions provided in Ali et al. (2005) were used. These problems are listed in Table 1.

The proposed modifications have been evaluated in terms of number of function calls and successful discover of global minimum using four different experiments:

-

1.

With the variance stopping rule only. In this test only the stopping rule that is based on variance was used. This test is denoted as VARIANCE in the experiments.

-

2.

With the similarity stopping rule only, where only the stopping rule based on similarity was used. This test is denoted as SIMILARITY in the experiments.

-

3.

A test that combines the variance stopping rule and the new bounding technique of velocities. This test is named as VBOUND in the experiments.

-

4.

A test that combines both the similarity stopping rule and the new bounding technique. This test is named SBOUND in the experiments.

The parameters of the PSO method used in the relevant experiments are listed in Table 2. The results for the previous four experiments are listed in Table 3. The column FUNCTION represents the objective function. The numbers in cells stand for the average number of function calls from 30 independent runs using different seeds for the random generator each time. The numbers in parentheses denote the fraction of runs where the global minimum was located. If this number is missing then the global minimum was discovered in every independent run (100% success). The last row of the table contains the total number of function calls for all experiments as well as the average number of success rate. At the end of every run the local search optimization method BFGS of Powell Powell (1989) was applied.

Also, the original pso method using the similarity stopping rule and the new bounding technique has been compared with Quantum behaved PSO method Sun et al. (2006) for the above test functions. The results from this comparison are listed in Table 4. The proposed stopping rule of similarity has been applied successfully in Quantum Pso method and as a consequence the method terminated successfully in the majority of cases. The Quantum PSO method requires less number of function calls for a series of optimization problems in two dimensions but for problems of higher dimension the proposed technique performs better.

From the conducted experiments it is clear that the SIMILARITY rule outperforms VARIANCE stopping rule in almost any test function in terms of function calls and success rate. Also, the new bounding technique for the velocity reduces dramatically (in most cases) the number of required function calls for both stopping rules.

4 Conclusions

Two modifications for the general PSO method have been proposed in this article: a stopping rule and a bounding technique for the velocity of the particles. Both modifications are general enough and they can be incorporated in any PSO variant. These modifications does not require any additional information from the objective function and they do not affect the speed of the algorithm since they have small overhead in the computation time. The experimental results indicates that the new stopping rule outperforms another statistical based stopping rule used in the past years and also the conjunction of the new stopping rule with the bounding technique reduces the number of function evaluations needed to discover the global minimum.

References

Ali MM, Khompatraporn C, Zabinsky ZB (2005) A numerical evaluation of several stochastic algorithms on selected continuous global optimization test problems. J Glob Optimiz 31:635–672

Angelov P (1994) A generalized approach to fuzzy optimization. Int J Intell Syst 1994:261–268

Araujo E, Coelho LS (2008) Particle swarm approaches using Lozi map chaotic sequences to fuzzy modelling of an experimental thermal-vacuum system, Appl Soft Comput 8:1354–1364

Barhen J, Protopopescu V, Reister D (1997) TRUST: a deterministic algorithm for global optimization. Science 16:1094–1097

de Moura Meneses AA, Machado MD, Schirru R (2009) Particle swarm optimization applied to the nuclear reload problem of a pressurized water reactor. Progr Nuclear Energy 51:319–326

Duan Q, Sorooshian S, Gupta V (1992) Effective and efficient global optimization for conceptual rainfall-runoff models. Water Resourc Res 28:1015–1031

Gaing Z-L (2003) Particle swarm optimization to solving the economic dispatch considering the generator constraints. IEEE Trans Power Syst 18:1187–1195

Garg H (2016) A hybrid PSO-GA algorithm for constrained optimization problems. Appl Math Comput 274:292–305

Gaviano M, Ksasov DE, Lera D, Sergeyev YD (2003) Software for generation of classes of test functions with known local and global minima for global optimization. ACM Trans Math Softw 29:469–480

Goldberg D (1989) Genetic algorithms in search. Optimization and machine learning. Addison-Wesley Publishing Company, Reading

Hosseini SA, Hajipour A, Tavakoli H (2019) Design and optimization of a CMOS power amplifier using innovative fractional-order particle swarm optimization. Appl Soft Comput 85:105831

Isiet M, Gadala M (2019) Self-adapting control parameters in particle swarm optimization. Appl Soft Comput 83:105653

Kennedy J, Eberhart RC (1999) The particle swarm: social adaptation in information processing systems. In: Corne D, Dorigo M, Glover F (eds) New ideas in optimization. McGraw-Hill, Cambridge, pp 11–32

Kirkpatrick S, Gelatt CD, Vecchi MP (1983) Optimization by simulated annealing. Science 220:671–680

Lennard-Jones JE (1924) On the determination of molecular fields. Proc R Soc Lond A 106:463–477

Lin Y, Stadtherr MA (2004) Advances in interval methods for deterministic global optimization in chemical engineering. J Glob Optimiz 29:281–296

Liu B, Wang L, Jin YH, Tang F, Huang DX (2005) Improved particle swarm optimization combined with chaos. Chaos Solitons Fractals 25:1261–1271

Mariani VC, Duck ARK, Guerra FA (2012) Leandro dos Santos Coelho, R.V. Rao, Heat exchanger design, Shell and tube heat exchanger (STHE), Economic optimization, Particle swarm optimization, Quantum particle swarm optimization, Chaos theory. Appl Therm Eng 42:119–128

Marinakis Y (2008) Magdalene Marinaki, Georgios Dounias, particle swarm optimization for pap-smear diagnosis. Expert Syst Appl 35:1645–1656

Michaelewizc Z (1996) Genetic algorithms + data structures = evolution programs. Springer, Berlin

Pardalos PM, Shalloway D, Xue G (1994) Optimization methods for computing global minima of nonconvex potential energy functions. J Glob Optimiz 4:117–133

Park J-B, Jeong Y-W, Shin J-R, Lee KY (2010) An improved particle swarm optimization for nonconvex economic dispatch problems. IEEE Trans Power Syst 25:156–166

Powell MJD (1989) A tolerant algorithm for linearly constrained optimization calculations. Math Program 45:547–566

Price WL (1977) Global optimization by controlled random search. Comput J 20:367–370

Shahzad F, Baig AR, Masood S, Kamran M, Naveed N (2009) Opposition-based particle swarm optimization with velocity clamping (OVCPSO). In: Yu W, Sanchez EN (eds) Advances in computational intelligence. Advances in intelligent and soft computing, vol 116. Springer, Berlin, Heidelberg

Shaw R, Srivastava S (2007) Particle swarm optimization: a new tool to invert geophysical data. Geophysics 2007:72

Shi Y, Eberhart RC (1998) Parameter Selection in particle swarm optimization. In: Evolutionary Programming VII. Lecture Notes in Computer Science, vol 1447. Springer, Berlin, pp 591-600

Shi XH, Liang YC, Lee HP, Lu C, Wang LM (2005) An improved GA and a novel PSO-GA based hybrid algorithm. Inf Process Lett 93:255–261

Sun J, Xu W, Fang W, Algorithm Quantum-Behaved Particle Swarm Optimization, with Controlled Diversity. In: Alexandrov VN, van Albada GD, Sloot PMA, Dongarra J (eds) Computational science–ICCS 2006. ICCS, (2006) Lecture Notes in Computer Science, vol 3993. Springer, Berlin, Heidelberg, p 2006

Tang R-L, Fang Y-J (2015) Modification of particle swarm optimization with human simulated property. Neurocomputing 153:319–331

Tsoulos IG (2008) Modifications of real code genetic algorithm for global optimization. Appl Math Comput 203:598–607

Wachowiak MP, Smolikova R, Yufeng Z, Zurada JM, Elmaghraby AS (2004) An approach to multimodal biomedical image registration utilizing particle swarm optimization. IEEE Trans Evol Comput 8:289–301

Wales DJ, Scheraga HA (1999) Global optimization of clusters, crystals, and biomolecules. Science 27:1368–1372

Yapo PO, Gupta HV, Sorooshian S (1998) Multi-objective global optimization for hydrologic models. J Hydrol 204:83–97

Yasuda K, Iwasaki N (2004) Adaptive particle swarm optimization using velocity information of swarm. In: 2004 IEEE international conference on systems, man and cybernetics (IEEE Cat. No.04CH37583), The Hague, pp 3475-3481, vol 4

Funding

This work is partly funded by the project entitled HuMORIST-Hospital MOnitoRIng SysTem, co-financed by the European Union and Greek national funds through the Operational Program for Research and Innovation Smart Specialization Strategy (RIS3) of Ipeiros (Project Code: ΗΠ1ΑΒ-00260).

Author information

Authors and Affiliations

Corresponding author

Additional information

Publisher's Note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

About this article

Cite this article

Tsoulos, I.G., Tzallas, A. & Karvounis, E. Improving the PSO method for global optimization problems. Evolving Systems 12, 875–883 (2021). https://doi.org/10.1007/s12530-020-09330-9

Received:

Accepted:

Published:

Issue Date:

DOI: https://doi.org/10.1007/s12530-020-09330-9