Abstract

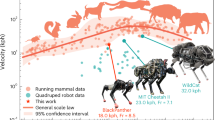

Bionic gait learning for quadruped robots based on reinforcement learning has become a hot research topic. The proximal policy optimization (PPO) algorithm has a low probability of learning a successful gait from scratch because of problems such as reward sparsity. To solve this problem, we propose an experience evolution proximal policy optimization (EEPPO) algorithm, which integrates PPO with prior knowledge highlighted by an evolutionary strategy. We use successfully trained samples as prior knowledge to guide the learning direction and thereby increase the probability that learning succeeds. To verify the effectiveness of the proposed EEPPO algorithm, we conducted simulation experiments on a quadruped robot gait learning task in PyBullet. In these experiments, the central pattern generator based radial basis function (CPG-RBF) network and the policy network are updated simultaneously, using key information such as the robot's speed, posture, and joint states, to learn the quadruped robot's bionic diagonal trot gait. Comparison with the traditional soft actor-critic (SAC) algorithm validates the superiority of the proposed EEPPO algorithm, which learns a more stable diagonal trot gait on flat terrain.
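For readers unfamiliar with the PPO component, the following is a minimal sketch of the clipped surrogate objective from the original PPO formulation (Schulman et al.), written in plain Python. It does not reproduce EEPPO's evolutionary guidance; the function name `ppo_clip_objective` and the default `clip_eps=0.2` are illustrative assumptions, not values taken from this article.

```python
import math


def ppo_clip_objective(logp_new, logp_old, advantages, clip_eps=0.2):
    """Mean clipped surrogate objective (to be maximized).

    logp_new / logp_old: log-probabilities of the sampled actions under the
    updated policy and the data-collecting policy. clip_eps is a hypothetical
    default chosen for this example.
    """
    total = 0.0
    for lp_new, lp_old, adv in zip(logp_new, logp_old, advantages):
        ratio = math.exp(lp_new - lp_old)  # pi_new(a|s) / pi_old(a|s)
        clipped_ratio = min(max(ratio, 1.0 - clip_eps), 1.0 + clip_eps)
        # Taking the minimum gives a pessimistic bound on the improvement.
        total += min(ratio * adv, clipped_ratio * adv)
    return total / len(advantages)
```

When the new and old policies agree, the ratio is 1 and the objective reduces to the mean advantage; clipping only takes effect once the updated policy moves away from the one that collected the data.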

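Abstract (translated from Chinese)

Using reinforcement learning to realize bionic gait learning for quadruped robots has become a new research direction. Because of problems such as reward sparsity, the proximal policy optimization (PPO) algorithm has a low probability of learning a successful gait from scratch. To address this, this paper proposes EEPPO (experience evolution proximal policy optimization), a gait learning algorithm for quadruped robots that incorporates evolutionary guidance from prior knowledge. EEPPO takes samples from successful evolutionary-strategy training as prior knowledge and uses them to guide the learning direction, which raises the probability that learning succeeds. To verify the effectiveness of the proposed EEPPO algorithm, simulation experiments on a quadruped robot gait learning task were carried out on the PyBullet platform. The experimental results show that, using key information such as the robot's speed, posture, and joints, the CPG-RBF network and the policy network are updated simultaneously to accomplish the quadruped robot's bionic diagonal trot gait learning task. To further verify the superiority of the proposed algorithm, EEPPO is compared with the traditional SAC (soft actor-critic) algorithm; the proposed method learns a more stable diagonal trot gait on flat terrain.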
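As a rough illustration of the CPG-RBF idea and the diagonal trot pattern described in the abstract, the sketch below passes a shared phase signal through Gaussian radial basis functions and shifts the phase per leg so that diagonal legs move together. The leg names, the number of centers, the RBF width, and the one-joint-per-leg simplification are assumptions made for this example; in the article the network weights are learned rather than set by hand.

```python
import math

# Trot: diagonal pairs (FL, RR) and (FR, RL) are half a cycle apart.
# Leg labels are hypothetical: front-left, front-right, rear-left, rear-right.
TROT_OFFSETS = {"FL": 0.0, "FR": math.pi, "RL": math.pi, "RR": 0.0}


def rbf_features(phase, n_centers=8, width=0.5):
    """Gaussian RBF activations for centers spaced evenly around the cycle."""
    x, y = math.cos(phase), math.sin(phase)  # point on the unit-circle limit cycle
    feats = []
    for k in range(n_centers):
        c = 2.0 * math.pi * k / n_centers
        d2 = (x - math.cos(c)) ** 2 + (y - math.sin(c)) ** 2
        feats.append(math.exp(-d2 / (2.0 * width ** 2)))
    return feats


def joint_targets(phase, weights):
    """One joint target per leg: weighted RBF sum at the leg's shifted phase."""
    return {
        leg: sum(w * f for w, f in zip(weights, rbf_features(phase + offset)))
        for leg, offset in TROT_OFFSETS.items()
    }
```

Because the same weights are reused with a half-cycle phase shift, the two diagonal pairs trace the same joint trajectory in alternation, which is the defining property of a trot.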

Ethics declarations

Conflict of Interest: The authors declare no conflict of interest.

Additional information

Foundation item: the National Natural Science Foundation of China (No. 62103009)

Cite this article

Li, C., Zhu, X., Ruan, X. et al. Gait Learning Reproduction for Quadruped Robots Based on Experience Evolution Proximal Policy Optimization. J. Shanghai Jiaotong Univ. (Sci.) (2023). https://doi.org/10.1007/s12204-023-2666-z

DOI: https://doi.org/10.1007/s12204-023-2666-z

Key words

- quadruped robot

- proximal policy optimization (PPO)

- prior knowledge

- evolutionary strategy

- bionic gait learning