Abstract

Let \(G:H\rightarrow H\) and \(K: H\rightarrow H\) be monotone mappings that are either sequentially weakly continuous or continuous, where H is a real Hilbert space. In this work, we introduce two new iterative methods for approximating solutions of the Hammerstein equation \(u+GKu=0\), if they exist. The first iterative method is shown to always converge weakly to an element in the solution set of the Hammerstein equation if this solution set is nonempty. The second iterative method is a modification of the first method to upgrade weak convergence to strong convergence. Convergence results are obtained without requiring the maps to be bounded. Numerical examples are provided to demonstrate the convergence of one of these methods. Comparisons with some existing methods show that the method is cost effective in terms of the number of iterations required to obtain a solution and the computational time.


1 Introduction

The subject of discussion in this paper is iterative methods for solving the Hammerstein equation

$$\begin{aligned} u+GKu=0, \end{aligned}$$ (1)

where K and G are monotone mappings defined on a real Hilbert space H and u is a vector in H. A detailed discussion of nonlinear integral equations of Hammerstein type and of their formulation as (1) can be found in [11]. Results on the existence and uniqueness of solutions of Eq. (1) are also available in the literature; see, for example, Appell and Benavides [2], Brézis and Browder [6, 7], Browder [9,10,11], Browder et al. [13], Browder and Gupta [12], Chepanovich [14], De Figueiredo and Gupta [32], Kazemi [36, 37] and Kazemi and Ezzati [38]. Interest in the study of Hammerstein equations stems from their broad range of applications, which include differential equations [46], automation and network theory, and optimal control systems [34].

It is known that nonlinear integral equations of Hammerstein type in general have no closed-form solution. For this reason, iterative methods play a crucial role in approximating solutions of such equations. To the best of our knowledge, Brézis and Browder [6] were the first to construct an iterative method that converges to a solution of the Hammerstein integral equation, in the case where one of the operators is angle bounded (see also Brézis and Browder [8]). Since then, many researchers have constructed iterative methods that converge to a point of the solution set of Eq. (1) whenever this set is nonempty. These researchers include Bello et al. [3], Chidume and Bello [15], Chidume et al. [28,29,30], Chidume and Djitte [16,17,18], Chidume and Idu [19], Chidume and Ofoedu [20], Chidume and Shehu [22,23,24], Chidume and Zegeye [25,26,27], Daman et al. [31], Djitte and Sene [33], Minjibir and Mohammed [42], Ofoedu and Onyi [44], Shehu [47], Tufa et al. [48], Uba et al. [49] and Zegeye and Malonza [52]. Recent results in this direction have been proved for the case when both K and G are bounded; see Zegeye and Malonza [52] and Bello et al. [3]. In addition, the results in [3] rely on the existence of a certain constant \(\gamma _0\) whose computation is not made clear. Numerical methods for the solution of Hammerstein integral equations can be found in Kürkçü [39], Neamprem et al. [43], Micula and Cattani [41], Allouch et al. [1] and Wang [50].

In this work, we introduce two iterative methods for solving Hammerstein equations involving mappings that are not necessarily bounded. The main objective of introducing these methods is threefold: (a) to remove the constant \(\gamma _0\) used by Bello et al. [3] in their recent work; (b) to remove the boundedness condition imposed on both G and K by Zegeye and Malonza [52] and Bello et al. [3]; and (c) to introduce an over-relaxed parameter, which has been used in the literature to improve the speed of convergence of other algorithms (for example, [5]) but, to the best of our knowledge, has not been used in algorithms that approximate solutions of Hammerstein equations. The requirement imposed on our maps is that they are either monotone and sequentially weakly continuous or monotone and continuous. The first iterative method is shown to always converge weakly to an element of the solution set of the Hammerstein Eq. (1), if this solution set is nonempty, while the second iterative method modifies the first to upgrade weak convergence to strong convergence. Numerical examples are provided to demonstrate the convergence of one of these methods. Comparisons with some existing methods show that the method is cost effective in terms of both the number of iterations required to obtain a solution and the computational time taken for the generated sequence to converge to the solution.

The rest of the paper is organized as follows. In Sect. 2, we give preliminary results that help to establish and prove our main results. Section 3 presents the algorithms introduced in this paper and their convergence results, while Sect. 4 is dedicated to numerical examples for one of the algorithms. Finally, concluding remarks are given in Sect. 5. The acknowledgements, declarations and list of references appear at the end of the paper.

2 Preliminaries

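Throughout this paper, H will denote a real Hilbert space with inner product \(\langle \cdot ,\cdot \rangle \) and induced norm \(\left\| \,\cdot \,\right\| \). A mapping \(A:H\rightarrow H\) is monotone if

$$\begin{aligned} \langle Ax-Ay,x-y\rangle \ge 0\quad \text{ for } \text{ all }\quad x,y\in H. \end{aligned}$$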

For any mapping \(T:H\rightarrow H\), the set \(\{z\in H: Tz=z\}\), called the set of fixed points of T, will be denoted by F(T). Recall that \(T:H\rightarrow H\) is said to be nonexpansive if for any \(x,y\in H\),

$$\begin{aligned} \left\| Tx-Ty\right\| \le \left\| x-y\right\| , \end{aligned}$$

and a mapping \(T:H\rightarrow H\) is called firmly nonexpansive if for any \(x,y\in H\),

$$\begin{aligned} \left\| Tx-Ty\right\| ^2\le \langle Tx-Ty,x-y\rangle . \end{aligned}$$

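Equivalently, T is firmly nonexpansive if for any \(x,y\in H\),

$$\begin{aligned} \left\| Tx-Ty\right\| ^2\le \left\| x-y\right\| ^2-\left\| (I-T)x-(I-T)y\right\| ^2, \end{aligned}$$

where I denotes the identity map on H.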

It is obvious from the definition that a firmly nonexpansive mapping is both monotone and nonexpansive. The following lemma is well known in Hilbert spaces.

Lemma 1

Let \(x,y\in H\) and \(c\in (0,1)\). Then the following hold:

-

(a)

\(\left\| cx+(1-c)y\right\| ^2= c\left\| x\right\| ^2+(1-c)\left\| y\right\| ^2-c(1-c)\left\| x-y\right\| ^2\);

-

(b)

\(\left\| x+y\right\| ^2\le \left\| x\right\| ^2+2\langle y,x+y\rangle \).
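As a quick sanity check, not part of the original argument, the sketch below verifies both parts of Lemma 1 numerically in \(\mathbb{R}^5\), used here only as a finite-dimensional stand-in for H. The helper names `sq` and `lemma1_gaps` are hypothetical. In part (b) the slack is exactly \(\left\| y\right\| ^2\), because \(\left\| x+y\right\| ^2=\left\| x\right\| ^2+2\langle y,x+y\rangle -\left\| y\right\| ^2\).

```python
import numpy as np

def sq(v):
    """Squared Euclidean norm."""
    return float(np.dot(v, v))

def lemma1_gaps(x, y, c):
    """Return (lhs - rhs) of identity (a) and (rhs - lhs) of inequality (b)."""
    gap_a = sq(c * x + (1 - c) * y) - (c * sq(x) + (1 - c) * sq(y) - c * (1 - c) * sq(x - y))
    slack_b = sq(x) + 2 * float(np.dot(y, x + y)) - sq(x + y)
    return gap_a, slack_b

rng = np.random.default_rng(0)
for _ in range(1000):
    x, y = rng.normal(size=(2, 5))
    c = rng.uniform(0.0, 1.0)
    gap_a, slack_b = lemma1_gaps(x, y, c)
    assert abs(gap_a) < 1e-9              # (a) holds with equality
    assert abs(slack_b - sq(y)) < 1e-9    # (b) holds with slack ||y||^2 >= 0
print("Lemma 1 checks passed")
```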

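Let C be a nonempty, closed and convex subset of H. The metric projection (nearest point mapping) \(P_C: H\rightarrow C\) is defined as follows: given \(x\in H\), \(P_C x\) is the unique point in C having the property

$$\begin{aligned} \left\| x-P_Cx\right\| \le \left\| x-y\right\| \quad \text{ for } \text{ all }\quad y\in C. \end{aligned}$$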

The following two lemmas give characterizations of metric projections and of firmly nonexpansive mappings, respectively, that will be key in proving our main results.

Lemma 2

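Assume that C is a nonempty, closed and convex subset of H. Let \(x\in H\) and \(y\in C\) be given. Then \(y=P_C x\) if and only if the inequality

$$\begin{aligned} \langle x-y,y-z\rangle \ge 0\quad \text{ for } \text{ all }\quad z\in C \end{aligned}$$

holds true.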
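To make Lemma 2 concrete, the sketch below takes C to be the box \([-1,1]^3\subset \mathbb{R}^3\) (an illustrative choice, not from the paper), for which \(P_C\) is componentwise clipping. Because \(z\mapsto \langle x-y,y-z\rangle \) is affine, its minimum over the box is attained at a vertex, so checking the eight vertices decides the inequality of Lemma 2 exactly. The helper names `project_box` and `vi_gap` are hypothetical.

```python
import itertools
import numpy as np

def project_box(x, lo=-1.0, hi=1.0):
    # Metric projection onto C = [lo, hi]^d: componentwise clipping.
    return np.clip(x, lo, hi)

def vi_gap(x, y, lo=-1.0, hi=1.0):
    # min over z in C of <x - y, y - z>; this affine function of z attains
    # its minimum over the box at a vertex, so checking vertices suffices.
    return min(float(np.dot(x - y, y - np.array(v)))
               for v in itertools.product([lo, hi], repeat=len(x)))

x = np.array([3.0, -2.0, 0.5])
p = project_box(x)              # [1.0, -1.0, 0.5]
print(vi_gap(x, p))             # zero: the inequality holds, so p = P_C x
print(vi_gap(x, np.zeros(3)))   # -5.5: the origin lies in C but is not P_C x
```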

Lemma 3

(Goebel and Kirk [35]) A map \(S: H \rightarrow H\) is firmly nonexpansive if and only if \(2S - I\) (where I is the identity map) is nonexpansive.
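A numerical illustration of Lemma 3, again with the illustrative choice of S as the projection onto a box (here in \(\mathbb{R}^4\)): the sketch checks firm nonexpansiveness of S and nonexpansiveness of \(2S-I\) on random pairs. The two checks are linked by the identity \(\left\| (2S-I)x-(2S-I)y\right\| ^2=\left\| x-y\right\| ^2-4\big (\langle Sx-Sy,x-y\rangle -\left\| Sx-Sy\right\| ^2\big )\), which is exactly why the lemma holds.

```python
import numpy as np

def S(x):
    # Projection onto the box [-1, 1]^d, a standard firmly nonexpansive map.
    return np.clip(x, -1.0, 1.0)

def R(x):
    # The associated map 2S - I of Lemma 3.
    return 2.0 * S(x) - x

rng = np.random.default_rng(2)
worst_firm = worst_refl = np.inf
for _ in range(5000):
    x, y = rng.normal(scale=2.0, size=(2, 4))
    d = S(x) - S(y)
    # Firm nonexpansiveness: <Sx - Sy, x - y> - ||Sx - Sy||^2 >= 0.
    worst_firm = min(worst_firm, float(np.dot(d, x - y) - np.dot(d, d)))
    # Nonexpansiveness of 2S - I: ||x - y|| - ||Rx - Ry|| >= 0.
    worst_refl = min(worst_refl, float(np.linalg.norm(x - y) - np.linalg.norm(R(x) - R(y))))
print(worst_firm >= -1e-12, worst_refl >= -1e-12)
```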

Lemma 4

(Xu [51]) Let \((a_{n})\) be a sequence of nonnegative real numbers satisfying the following relation:

$$\begin{aligned} a_{n+1}\le (1-\beta _n)a_n+\beta _n\delta _n,\quad n\ge 1, \end{aligned}$$

where \((\beta _n) \subset (0,1)\) and \((\delta _n)\subset \mathbb {R}\) satisfy the following conditions: \(\sum _{n=1}^{\infty } \beta _n=\infty \) and \(\limsup _{n\rightarrow \infty }\delta _n\le 0\). Then \(\lim _{n\rightarrow \infty }a_{n}=0\).
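The following sketch iterates the extremal case \(a_{n+1}=(1-\beta _n)a_n+\beta _n\delta _n\) of Lemma 4 with the illustrative choices \(\beta _n=1/(n+1)\) (so \(\sum \beta _n=\infty \)) and \(\delta _n=n^{-1/2}\) (so \(\delta _n\rightarrow 0\)). For this \(\beta _n\) the recursion telescopes, \((n+1)a_{n+1}=na_n+\delta _n\), so after N steps \(a_{N+1}=(a_1+\sum _{n=1}^{N}\delta _n)/(N+1)\), which tends to 0 as the lemma predicts. The function names are hypothetical.

```python
import math

def beta(n):
    return 1.0 / (n + 1)      # in (0, 1) and not summable

def delta(n):
    return n ** -0.5          # limsup delta_n = 0

def xu_recursion(a1, n_steps):
    """Return a_{N+1} for a_{n+1} = (1 - beta_n) a_n + beta_n delta_n, n = 1..N."""
    a = a1
    for n in range(1, n_steps + 1):
        a = (1 - beta(n)) * a + beta(n) * delta(n)
    return a

for N in (10, 1000, 100000):
    closed_form = (5.0 + math.fsum(delta(n) for n in range(1, N + 1))) / (N + 1)
    print(N, xu_recursion(5.0, N), closed_form)
```

The decay here is slow (roughly like \(2/\sqrt{N}\)), which is typical when \(\beta _n\) is only barely non-summable.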

Lemma 5

(Maingé [40]) Let \((c_{n})\) be a sequence of real numbers such that there exists a subsequence \((n_i)\) of (n) such that \(c_{n_i}<c_{{n_i}+1}\) for all \(i\in \mathbb {N}\). Then there exists a nondecreasing sequence \((m_k)\subset \mathbb {N}\) such that \(m_k\rightarrow \infty \) and the following properties are satisfied by all (sufficiently large) numbers \(k\in \mathbb {N}\):

$$\begin{aligned} c_{m_k}\le c_{m_k+1}\quad \text{ and }\quad c_k\le c_{m_k+1}. \end{aligned}$$

In fact, \(m_k=\max \{j\le k:c_j<c_{j+1}\}\).
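The index sequence \((m_k)\) in Lemma 5 can be computed directly from its defining formula. The sketch below (0-based indices; the helper name `mainge_indices` is hypothetical) builds \(m_k\) for a finite sample sequence and checks the two properties \(c_{m_k}\le c_{m_k+1}\) and \(c_k\le c_{m_k+1}\) wherever \(m_k\) is defined. The second property holds because the sequence has no increase between positions \(m_k+1\) and k.

```python
def mainge_indices(c):
    """m_k = max{j <= k : c_j < c_{j+1}} for k = 0..len(c)-2 (None if no such j)."""
    m, last = [], None
    for k in range(len(c) - 1):
        if c[k] < c[k + 1]:
            last = k          # most recent increase up to position k
        m.append(last)
    return m

c = [3, 1, 2, 0.5, 0.4, 4, 1, 0.9, 0.8]
m = mainge_indices(c)
print(m)   # [None, 1, 1, 1, 4, 4, 4, 4]
for k, mk in enumerate(m):
    if mk is not None:
        assert mk <= k
        assert c[mk] <= c[mk + 1]   # first property
        assert c[k] <= c[mk + 1]    # second property
```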

Recall that a sequence \((x_n)\) in a Hilbert space H converges strongly (respectively, weakly) to \(x\in H\) if \(\left\| x_n-x\right\| \rightarrow 0\) (respectively, \(\langle x_n,y\rangle \rightarrow \langle x,y\rangle \) for all \(y\in H\)). Strong (respectively, weak) convergence of \((x_n)\) to x is denoted by \(x_n\rightarrow x\) (respectively, \(x_n\rightharpoonup x\)).

Given a mapping T from H into itself, \(I-T\) is said to be demiclosed at zero if for any sequence \(\{z_n\}\) in H satisfying the conditions

-

(i)

\(\{z_n\}\) converges weakly to z;

-

(ii)

\({\lim _{n\rightarrow \infty }\left\| z_n-Tz_n\right\| }=0\), we have \(z-Tz=0\).

The following lemma, which was proved in the setting of real Banach spaces, will be used in the proof of Lemma 7 below.

Lemma 6

(Blum and Oettli [4]) Let C be a nonempty, closed and convex subset of a real Hilbert space H and let f be a bifunction from \(C\times C\) to \(\mathbb {R}\) satisfying

-

(A1)

\(f(x,x)=0\) for all \(x\in C\);

-

(A2)

f is monotone, i.e., \(f(x,y)+f(y,x)\le 0\) for all \(x,y\in C\);

-

(A3)

for all \(x,y,z\in C\),

$$\begin{aligned} \limsup _{t\downarrow 0}f(tz+(1-t)x,y)\le f(x,y); \end{aligned}$$

-

(A4)

for all \(x\in C\), \(f(x,\cdot )\) is convex and lower semicontinuous.

Let \(r>0\) and \(x\in H\). Then there exists \(z\in C\) such that

$$\begin{aligned} f(z,y)+\frac{1}{r}\langle y-z, z-x\rangle \ge 0\quad \text{ for } \text{ all }\quad y\in C. \end{aligned}$$

Recall that a mapping \(T:H\rightarrow H\) is called sequentially weakly continuous if for any sequence \(\{z_n\}\) in H converging weakly to z, the sequence \(\{Tz_n\}\) converges weakly to Tz.

Lemma 7

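Let H be a real Hilbert space, \(K:H\rightarrow H\) and \(G:H\rightarrow H\) be monotone mappings that are either sequentially weakly continuous or continuous. Then for any \([x,y]\in X=H\times H\) and \(r>0\), there exists \([w,z]\in X\) such that

$$\begin{aligned} \langle Kw-z,u-w\rangle +\langle Gz+w,v-z\rangle +\frac{1}{r}\langle w-x,u-w\rangle +\frac{1}{r}\langle z-y,v-z\rangle \ge 0 \end{aligned}$$

for all \([u,v]\in X\).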

Proof

We prove the lemma for the case when both K and G are sequentially weakly continuous. The other case(s) can be proved in a similar way.

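Define a mapping \(T:X\times X\rightarrow \mathbb {R}\) by

$$\begin{aligned} T([x,y],[u,v])=\langle Kx-y,u-x\rangle +\langle Gy+x,v-y\rangle , \end{aligned}$$

where \(X=H\times H\) carries the inner product \(\langle [x,y],[u,v]\rangle =\langle x,u\rangle +\langle y,v\rangle \).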

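Then \(T([u,v],[u,v])=0\) for all \([u,v]\in X\), and from the monotonicity of K and G, we have

$$\begin{aligned} T([x,y],[u,v])+T([u,v],[x,y])=-\langle Kx-Ku,x-u\rangle -\langle Gy-Gv,y-v\rangle \le 0\quad \text{ for } \text{ all }\quad [x,y],[u,v]\in X. \end{aligned}$$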

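Moreover, if \(t\in (0,1)\) and \([x,y], [u,v], [w,z]\in X\), then

$$\begin{aligned} T(t[w,z]+(1-t)[x,y],[u,v])&=\langle K(tw+(1-t)x)-(tz+(1-t)y),u-(tw+(1-t)x)\rangle \\&\quad +\langle G(tz+(1-t)y)+tw+(1-t)x,v-(tz+(1-t)y)\rangle . \end{aligned}$$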

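Denote \(b=tw+(1-t)x\) and \(d=tz+(1-t)y\). Then \(b\rightarrow x\) and \(d\rightarrow y\) as \(t\rightarrow 0\). This implies that \(b\rightharpoonup x\) and \(d\rightharpoonup y\) as \(t\rightarrow 0\). Since K and G are sequentially weakly continuous, we have \(K(b)-d\rightharpoonup Kx -y\) and \(G(d)+b\rightharpoonup Gy+x\) as \(t\rightarrow 0\). Therefore, since in each inner product below one factor converges weakly and the other strongly,

$$\begin{aligned} \lim _{t\downarrow 0}T(t[w,z]+(1-t)[x,y],[u,v])&=\lim _{t\downarrow 0}\big (\langle K(b)-d,u-b\rangle +\langle G(d)+b,v-d\rangle \big )\\&=\langle Kx-y,u-x\rangle +\langle Gy+x,v-y\rangle . \end{aligned}$$

This implies that

$$\begin{aligned} \limsup _{t\downarrow 0}T(t[w,z]+(1-t)[x,y],[u,v])\le T([x,y],[u,v]). \end{aligned}$$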

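Furthermore, for all \([u,v]\in X\) and all \([x,y],[w,z]\in X\),

$$\begin{aligned} \left| T([u,v],[x,y])-T([u,v],[w,z])\right|&=\left| \langle Ku-v,x-w\rangle +\langle Gv+u,y-z\rangle \right| \\&\le \left\| Ku-v\right\| \left\| x-w\right\| +\left\| Gv+u\right\| \left\| y-z\right\| . \end{aligned}$$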

This shows that for all \([u,v]\in X\), \(T([u,v], \cdot )\) is continuous, and hence lower semicontinuous.

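Next, we show that for all \([x,y]\in X\), \(T([x,y], \cdot )\) is convex. Indeed, let \([x,y]\in X\) be arbitrary but fixed, and let \([u,v],[w,z]\in X\) and \(c\in [0,1]\). Then

$$\begin{aligned} T([x,y],c[u,v]+(1-c)[w,z])&=\langle Kx-y,c(u-x)+(1-c)(w-x)\rangle +\langle Gy+x,c(v-y)+(1-c)(z-y)\rangle \\&=cT([x,y],[u,v])+(1-c)T([x,y],[w,z]), \end{aligned}$$

so \(T([x,y],\cdot )\) is affine and, in particular, convex.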

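We have shown that the bifunction T from \(X\times X\) into \(\mathbb {R}\) satisfies conditions (A1)–(A4). By Lemma 6, for any \([x,y]\in X\) and \(r>0\), there exists \([w,z]\in X\) such that

$$\begin{aligned} T([w,z],[u,v])+\frac{1}{r}\langle [u,v]-[w,z],[w,z]-[x,y]\rangle \ge 0 \end{aligned}$$

for all \([u,v]\in X\). That is, for any \([x,y]\in X\) and \(r>0\), there exists \([w,z]\in X\) such that

$$\begin{aligned} \langle Kw-z,u-w\rangle +\langle Gz+w,v-z\rangle +\frac{1}{r}\langle w-x,u-w\rangle +\frac{1}{r}\langle z-y,v-z\rangle \ge 0 \end{aligned}$$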

for all \([u,v]\in X\). \(\square \)

Throughout this paper, A will denote a mapping from the set \(X=H\times H\) into itself given by \(A[x,y]=[Kx-y,Gy+x]\) for all \([x,y]\in X\), where K and G are monotone mappings from H into H. This mapping was first introduced and studied by Chidume and Zegeye [27] (see also Chidume and Zegeye [25, 26]), who showed that solving the Hammerstein Eq. (1) is equivalent to computing zeros of the mapping A: indeed, \(A[x,y]=[0,0]\) if and only if \(y=Kx\) and \(x+Gy=0\), that is, x solves (1) and \(y=Kx\). As usual, the set of zeros of A will be denoted by \(A^{-1}[0,0]\).
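For the maps of Example 1 in Sect. 4 (\(Kx=3x\) and \(Gy=y-4\) on \(H=\mathbb{R}\)), the correspondence between zeros of A and solutions of (1) can be checked directly. The function names below are illustrative only.

```python
def K(x):
    return 3.0 * x          # Kx = 3x (Example 1)

def G(y):
    return y - 4.0          # Gy = y - 4 (Example 1)

def A(x, y):
    """A[x, y] = [Kx - y, Gy + x] on X = H x H with H = R."""
    return (K(x) - y, G(y) + x)

u = 1.0
print(u + G(K(u)))     # Hammerstein residual at u = 1: 0.0
print(A(u, K(u)))      # (0.0, 0.0)
print(A(2.0, K(2.0)))  # (0.0, 4.0): u = 2 does not solve (1)
```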

Lemma 8

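Fix \(r>0\), and let H be a real Hilbert space, \(K:H\rightarrow H\) and \(G:H\rightarrow H\) be monotone mappings that are either sequentially weakly continuous or continuous such that \(A^{-1}[0,0]\ne \emptyset \). Define a mapping \(S_r:X\rightarrow X\) by

$$\begin{aligned} S_r[x,y]&=\Big \{[w,z]\in X: \langle Kw-z,u-w\rangle +\langle Gz+w,v-z\rangle +\frac{1}{r}\langle w-x,u-w\rangle \\&\qquad +\frac{1}{r}\langle z-y,v-z\rangle \ge 0\ \text{ for } \text{ all }\ [u,v]\in X\Big \} \end{aligned}$$

for all \([x,y]\in X\) (this set is nonempty by Lemma 7). Then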

-

(a)

\(S_r\) is single valued;

-

(b)

\(S_r\) is firmly nonexpansive on X, i.e., for all \([x,y], [w,z]\in X\),

$$\begin{aligned} \left\| S_r[x,y]-S_r[w,z]\right\| ^2\le \left\langle S_r[x,y]-S_r[w,z],[x,y]-[w,z]\right\rangle ; \end{aligned}$$

-

(c)

\(F(S_r)=A^{-1}[0,0]\);

-

(d)

\(I-S_r\) is demiclosed at zero.

-

(e)

for all \([x,y]\in X\) and \([p,q]\in A^{-1}[0,0]\),

$$\begin{aligned} \left\| S_r[x,y]-[p,q]\right\| ^2+\left\| [x,y]-S_r[x,y]\right\| ^2\le \left\| [x,y]-[p,q]\right\| ^2; \end{aligned}$$

-

(f)

\(F(S_r)\) is closed and convex.
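Because the inequality defining \(S_r\) must hold for every \([u,v]\in X\), it can be read as \(\langle A[w,z]+\frac{1}{r}([w,z]-[x,y]),[u,v]-[w,z]\rangle \ge 0\) for all \([u,v]\), which forces \(A[w,z]+\frac{1}{r}([w,z]-[x,y])=0\); in other words, \(S_r=(I+rA)^{-1}\) is the resolvent of A. The sketch below uses this observation for affine monotone maps \(Kx=M_Kx+b_K\), \(Gy=M_Gy+b_G\) on \(\mathbb{R}^3\) (illustrative random data, not from the paper) and checks parts (b) and (c) of Lemma 8 numerically. A matrix of the form \(PP^T+(Q-Q^T)\) is monotone because its skew-symmetric part contributes nothing to \(\langle Mx,x\rangle \). The helper names are hypothetical.

```python
import numpy as np

def affine_A(Mk, bk, Mg, bg):
    """A[x, y] = [Kx - y, Gy + x] for K = Mk x + bk, G = Mg y + bg, as s -> L s + b."""
    d = Mk.shape[0]
    I = np.eye(d)
    return np.block([[Mk, -I], [I, Mg]]), np.concatenate([bk, bg])

def resolvent(L, b, r):
    """S_r = (I + rA)^{-1}: solve w + r(L w + b) = s, i.e. (I + rL) w = s - r b."""
    M = np.eye(L.shape[0]) + r * L
    return lambda s: np.linalg.solve(M, s - r * b)

rng = np.random.default_rng(3)
d, r = 3, 0.7

def monotone_matrix():
    # PSD part plus skew-symmetric part, so <Mx, x> = ||P^T x||^2 >= 0.
    P, Q = rng.normal(size=(2, d, d))
    return P @ P.T + (Q - Q.T)

Mk, Mg = monotone_matrix(), monotone_matrix()
bk, bg = rng.normal(size=(2, d))
L, b = affine_A(Mk, bk, Mg, bg)
S_r = resolvent(L, b, r)

# Lemma 8(b): <S s - S t, s - t> - ||S s - S t||^2 >= 0 on random pairs.
worst = min(
    float(np.dot(S_r(s) - S_r(t), s - t) - np.sum((S_r(s) - S_r(t)) ** 2))
    for s, t in rng.normal(size=(1000, 2, 2 * d))
)
# Lemma 8(c): the zero of A (unique here, since L is invertible) is fixed by S_r.
s_star = np.linalg.solve(L, -b)
print(worst >= -1e-10, np.allclose(S_r(s_star), s_star))
```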

Proof

We prove the lemma for the case when both K and G are sequentially weakly continuous. The other case(s) can be proved in a similar way.

-

(a)

For any \([x,y]\in X\) and \(r>0\), let \([x_1,y_1], [x_2,y_2]\in S_r[x,y]\). Then from the definition of \(S_r\), we have

$$\begin{aligned} T([x_1,y_1],[x_2,y_2])+\frac{1}{r}\langle [x_2,y_2]-[x_1,y_1], [x_1,y_1]-[x,y] \rangle \ge 0, \end{aligned}$$(where T is the mapping given in Lemma 7) and

$$\begin{aligned} T([x_2,y_2],[x_1,y_1])+\frac{1}{r}\langle [x_1,y_1] - [x_2,y_2],[x_2,y_2] -[x,y]\rangle \ge 0. \end{aligned}$$Adding these two inequalities and using condition (A2), we obtain

$$\begin{aligned}&\langle [x_2,y_2]-[x_1,y_1], [x_1,y_1]-[x,y] \rangle + \langle [x_1,y_1] - [x_2,y_2],[x_2,y_2] -[x,y]\rangle \\&\quad \ge 0, \end{aligned}$$which implies that

$$\begin{aligned} \langle [x_2,y_2]-[x_1,y_1], [x_1,y_1]-[x_2,y_2]\rangle \ge 0. \end{aligned}$$It follows from this last inequality that

$$\begin{aligned} \left\| [x_2,y_2]-[x_1,y_1]\right\| ^2\le 0, \end{aligned}$$which implies that \(x_2=x_1\) and \(y_2=y_1\).

-

(b)

Let \([x,y], [w,z]\in X\). Then

$$\begin{aligned} T(S_r[x,y], S_r[w,z])+\frac{1}{r}\langle S_r[w,z] -S_r[x,y], S_r[x,y]- [x,y]\rangle \ge 0, \end{aligned}$$and

$$\begin{aligned} T(S_r[w,z], S_r[x,y])+\frac{1}{r}\langle S_r[x,y]-S_r[w,z], S_r[w,z]- [w,z]\rangle \ge 0 \end{aligned}$$Adding these two inequalities and making use of (A2), we get

$$\begin{aligned} \langle S_r[w,z]-S_r[x,y], S_r[x,y] - [x,y]+ [w,z]- S_r[w,z]\rangle \ge 0. \end{aligned}$$Rearranging terms, we obtain

$$\begin{aligned} \langle S_r[w,z]-S_r[x,y], [w,z] - [x,y] \rangle \ge \left\| S_r[w,z]-S_r[x,y]\right\| ^2. \end{aligned}$$

-

(c)

We now show that \(F(S_r)=A^{-1}[0,0]\). To this end, using the definition of \(S_r\), we have

$$\begin{aligned}{}[p,q]\in F(S_r)\Longleftrightarrow & {} S_r[p,q]=[p,q] \\\Longleftrightarrow & {} \langle Kp-q,u-p\rangle +\langle Gq+p,v-q\rangle +\frac{1}{r}\langle p-p,u-p\rangle \\&\; + \frac{1}{r}\langle q-q,v-q\rangle \ge 0\quad \forall \, [u,v]\in X \\\Longleftrightarrow & {} \langle Kp-q,u-p\rangle +\langle Gq+p,v-q\rangle \ge 0\quad \forall \, [u,v]\in X \\\Longleftrightarrow & {} \langle [Kp-q,Gq+p],[u-p,v-q]\rangle \ge 0\quad \forall \, [u,v]\in X\\\Longleftrightarrow & {} \langle A[p,q],[u,v]-[p,q]\rangle \ge 0\quad \forall \, [u,v]\in X\\\Longleftrightarrow & {} [p,q]\in A^{-1}[0,0], \end{aligned}$$where the last equivalence follows from Lemma 2.

-

(d)

Let \(\{[x_n,y_n]\}\) be a sequence in X such that \([x_n,y_n] \rightharpoonup [p,q]\) and \([x_n,y_n]-S_r[x_n,y_n]\rightarrow 0\) as \(n\rightarrow \infty \). It then follows that \(x_n\rightharpoonup p\), \(y_n\rightharpoonup q\) and \(S_r[x_n,y_n] \rightharpoonup [p,q]\) as \(n\rightarrow \infty \). Denote \([w_n,z_n]:=S_r[x_n,y_n]\). Then \([x_n,y_n]-[w_n,z_n]\rightarrow 0\) as \(n\rightarrow \infty \), which implies that \(x_n-w_n\rightarrow 0\) and \(y_n-z_n\rightarrow 0\) as \(n\rightarrow \infty \). Also, we have \(w_n\rightharpoonup p\) and \(z_n\rightharpoonup q\) as \(n\rightarrow \infty \). From the definition of \(S_r\), we have

$$\begin{aligned}&\langle Kw_n-z_n,u-w_n\rangle +\langle Gz_n+w_n,v-z_n\rangle \\&\quad +\frac{1}{r}\langle w_n-x_n,u-w_n\rangle +\frac{1}{r}\langle z_n-y_n,v-z_n\rangle \ge 0 \end{aligned}$$for all \([u,v]\in X\). From the monotonicity of K and G, we have

$$\begin{aligned}&\langle Ku-z_n,u-w_n\rangle +\langle Gv+w_n,v-z_n\rangle \\&\quad +\frac{1}{r}\langle w_n-x_n,u-w_n\rangle +\frac{1}{r}\langle z_n-y_n,v-z_n\rangle \ge 0 \end{aligned}$$for all \([u,v]\in X\). This last inequality is the same as

$$\begin{aligned} 0\le & {} \langle Ku-v,u-w_n\rangle +\langle v-z_n,u-w_n\rangle +\langle Gv+u,v-z_n\rangle \\&+\langle w_n-u,v-z_n\rangle + \frac{1}{r}\langle w_n-x_n,u-w_n\rangle +\frac{1}{r}\langle z_n-y_n,v-z_n\rangle , \end{aligned}$$which is equivalent to

$$\begin{aligned}&\langle Ku-v,u-w_n\rangle +\langle Gv+u,v-z_n\rangle +\frac{1}{r}\langle w_n-x_n,u-w_n\rangle \\&\quad +\frac{1}{r}\langle z_n-y_n,v-z_n\rangle \ge 0 \end{aligned}$$for all \([u,v]\in X\). Taking the limit as \(n\rightarrow \infty \), we get

$$\begin{aligned} \langle Ku-v,u-p\rangle +\langle Gv+u,v-q\rangle \ge 0 \quad \forall \; [u,v]\in X. \end{aligned}$$ (3)

Let \(t\in (0,1)\) and set

$$\begin{aligned}{}[\widehat{u}_t, \widehat{v}_t]=t[u,v]+(1-t)[p,q]=[tu+(1-t)p,tv+(1-t)q]\in X. \end{aligned}$$Obviously, \([\widehat{u}_t, \widehat{v}_t] \rightarrow [p,q]\) as \(t\rightarrow 0\), which implies that \(\widehat{u}_t \rightarrow p\) and \(\widehat{v}_t \rightarrow q\) as \(t\rightarrow 0\). This in turn implies that \(\widehat{u}_t \rightharpoonup p\) and \(\widehat{v}_t \rightharpoonup q\) as \(t\rightarrow 0\). But both K and G are sequentially weakly continuous, and so \(K(\widehat{u}_t)- \widehat{v}_t\rightharpoonup Kp-q\) and \(G(\widehat{v}_t)+\widehat{u}_t \rightharpoonup Gq+p\) as \(t\rightarrow 0\), respectively. Moreover, from (3), we have

$$\begin{aligned} \langle K(\widehat{u}_t)- \widehat{v}_t, \widehat{u}_t-p\rangle +\langle G(\widehat{v}_t)+\widehat{u}_t, \widehat{v}_t-q\rangle \ge 0 \end{aligned}$$which implies that

$$\begin{aligned} t\langle K(\widehat{u}_t)- \widehat{v}_t, u-p\rangle + t\langle G(\widehat{v}_t)+\widehat{u}_t, v-q\rangle \ge 0. \end{aligned}$$Since \(t>0\), we have

$$\begin{aligned} \langle K(\widehat{u}_t)- \widehat{v}_t, u-p\rangle +\langle G(\widehat{v}_t)+\widehat{u}_t, v-q\rangle \ge 0. \end{aligned}$$Taking the limit as \(t\rightarrow 0\), we obtain

$$\begin{aligned} \langle Kp-q,u-p\rangle +\langle Gq+p,v-q\rangle \ge 0\quad \forall \,\, [u,v]\in X. \end{aligned}$$Hence \([p,q]\in A^{-1}[0,0]=F(S_r)\), where equality of sets follows from part (c) above.

-

(e)

Note that the inequality in part (b) above is equivalent to

$$\begin{aligned} \left\| S_r[x,y] -S_r[w,z]\right\| ^2\le & {} \left\| [x,y]-[w,z]\right\| ^2\\&-\left\| [x,y]-S_r[x,y]-([w,z]-S_r[w,z])\right\| ^2. \end{aligned}$$In particular, for \([w,z]=[p,q]\in A^{-1}[0,0]=F(S_r)\), we have

$$\begin{aligned} \left\| S_r[x,y] -[p,q]\right\| ^2\le \left\| [x,y]-[p,q]\right\| ^2-\left\| [x,y]-S_r[x,y]\right\| ^2. \end{aligned}$$

-

(f)

We first show that \(F(S_r)\) is closed. Let \(\{[x_n,y_n]\}\) be a sequence in \(F(S_r)\) such that \([x_n,y_n]\rightarrow [p,q]\in X\) as \(n\rightarrow \infty \). Then from part (e) above,

$$\begin{aligned} \left\| [x_n,y_n]-S_r[p,q]\right\| ^2+\left\| [p,q]-S_r[p,q]\right\| ^2\le \left\| [x_n,y_n]-[p,q]\right\| ^2, \end{aligned}$$which implies that

$$\begin{aligned} \left\| [p,q]-S_r[p,q]\right\| \le \left\| [x_n,y_n]-[p,q]\right\| \rightarrow 0\quad \text{ as }\quad n\rightarrow \infty . \end{aligned}$$It means that \([p,q]\in F(S_r)\), showing that \(F(S_r)\) is closed.

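Finally, we show that \(F(S_r)\) is convex. From part (e) above, we have

$$\begin{aligned} \left\| S_r[x,y]-[p,q]\right\| ^2\le \left\| [x,y]-[p,q]\right\| ^2-\left\| [x,y]-S_r[x,y]\right\| ^2\quad \text{ for } \text{ all }\quad [x,y]\in X,\ [p,q]\in F(S_r). \end{aligned}$$ (4)

Let \([p_1,q_1],[p_2,q_2]\in F(S_r)\) and \(t\in [0,1]\). Denote \([x,y]=t[p_1,q_1]+(1-t)[p_2,q_2]\), so that \(\left\| [x,y]-[p_1,q_1]\right\| =(1-t)\left\| [p_1,q_1]-[p_2,q_2]\right\| \) and \(\left\| [x,y]-[p_2,q_2]\right\| =t\left\| [p_1,q_1]-[p_2,q_2]\right\| \). Then from Lemma 1(a),

$$\begin{aligned} \left\| [x,y]-S_r[x,y]\right\| ^2&=t\left\| [p_1,q_1]-S_r[x,y]\right\| ^2+(1-t)\left\| [p_2,q_2]-S_r[x,y]\right\| ^2\\&\quad -t(1-t)\left\| [p_1,q_1]-[p_2,q_2]\right\| ^2. \end{aligned}$$

Now, using (4), we have

$$\begin{aligned} \left\| [x,y]-S_r[x,y]\right\| ^2&\le t\Big (\left\| [x,y]-[p_1,q_1]\right\| ^2-\left\| [x,y]-S_r[x,y]\right\| ^2\Big )\\&\quad +(1-t)\Big (\left\| [x,y]-[p_2,q_2]\right\| ^2-\left\| [x,y]-S_r[x,y]\right\| ^2\Big )-t(1-t)\left\| [p_1,q_1]-[p_2,q_2]\right\| ^2\\&=\big (t(1-t)^2+(1-t)t^2-t(1-t)\big )\left\| [p_1,q_1]-[p_2,q_2]\right\| ^2-\left\| [x,y]-S_r[x,y]\right\| ^2, \end{aligned}$$

that is, \(2\left\| [x,y]-S_r[x,y]\right\| ^2\le \big (t(1-t)^2+(1-t)t^2-t(1-t)\big )\left\| [p_1,q_1]-[p_2,q_2]\right\| ^2\).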

Since \(t(1-t)^2+(1-t)t^2-t(1-t)=0\), we conclude that \(S_r[x,y]=[x,y]\). Therefore, \(F(S_r)\) is convex. \(\square \)

3 Main results

To construct our algorithms, we first assume that the solution set of the Hammerstein Eq. (1) is nonempty. Let \(\lambda _n\in (0,2)\) for all \(n\in \mathbb {N}\) and \(r>0\). Given initial starting points \(x_0\) and \(y_0\), generate the \((n+1)\)th iterate by

$$\begin{aligned}{}[x_{n+1},y_{n+1}]=(1-\lambda _n)[x_n,y_n]+\lambda _nS_r[x_n,y_n],\quad n\ge 0, \end{aligned}$$ (5)

where \(S_r\) is as defined in Lemma 8.
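A minimal sketch of algorithm (5), assuming the data of Example 1 in Sect. 4 (\(Kx=3x\), \(Gy=y-4\), \(H=\mathbb{R}\)) and the over-relaxed choice \(\lambda _n=\frac{n+3}{n+2}\in (1,1.5]\) used there. Since the inequality defining \(S_r\) must hold for all \([u,v]\in X\), here \(S_r[x,y]=[w,z]\) is obtained by solving the linear system \(3w-z+\frac{1}{r}(w-x)=0\), \((z-4)+w+\frac{1}{r}(z-y)=0\). The function names are hypothetical; the iterates should approach the zero \([1,3]\) of A.

```python
import numpy as np

def S_r_example1(x, y, r):
    """[w, z] = S_r[x, y] for Kx = 3x, Gy = y - 4 on H = R.

    Solves 3w - z + (w - x)/r = 0 and (z - 4) + w + (z - y)/r = 0.
    """
    M = np.array([[3.0 + 1.0 / r, -1.0],
                  [1.0, 1.0 + 1.0 / r]])
    w, z = np.linalg.solve(M, np.array([x / r, 4.0 + y / r]))
    return float(w), float(z)

def lam(n):
    return (n + 3) / (n + 2)      # over-relaxed parameter in (1, 1.5]

def algorithm5(x, y, r, n_iter):
    """Iterate [x_{n+1}, y_{n+1}] = (1 - lam_n)[x_n, y_n] + lam_n S_r[x_n, y_n]."""
    for n in range(n_iter):
        w, z = S_r_example1(x, y, r)
        x, y = (1 - lam(n)) * x + lam(n) * w, (1 - lam(n)) * y + lam(n) * z
    return x, y

for r in (0.5, 1.0, 2.0):
    print(r, algorithm5(1.7, 0.0, r, 50))   # each pair should be close to (1, 3)
```

In this small linear example the error contracts by a fixed factor per step, so 50 iterations are ample; in infinite dimensions (5) only guarantees weak convergence, as stated in Theorem 9.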

Theorem 9

Let H be a real Hilbert space. Fix \(r>0\) and the initial starting points \(x_0\in H\) and \(y_0\in H\). Assume that \(\lambda _n\in [a,b]\subset (0,2)\) for all \(n\in \mathbb {N}\). Let \(K:H\rightarrow H\) and \(G:H\rightarrow H\) be monotone mappings that are either sequentially weakly continuous or continuous such that the solution set of the Hammerstein Eq. (1) is nonempty. Assume that \(\{[x_n,y_n]\}\) is a sequence generated by (5). Then \(\{x_n\}\) converges weakly to a solution \(x^*\) of the Hammerstein Eq. (1), and \(\{y_n\}\) converges weakly to \(y^*\), where \(y^*=Kx^*\).

Proof

We prove the theorem for the case when both K and G are sequentially weakly continuous. The other case(s) can be proved in a similar way.

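Let p be a solution of the Hammerstein Eq. (1). Then \(p+Gq=0\), where \(q=Kp\). Therefore, \([p,q]\in A^{-1}[0,0]=F(S_r)\). Denote \(H_r=2S_r-I\), where I is the identity mapping on \(H\times H\). Since \(S_r\) is firmly nonexpansive by Lemma 8, we conclude by Lemma 3 that \(H_r\) is nonexpansive; moreover, \(H_r[p,q]=[p,q]\). Since (5) can be written as \([x_{n+1},y_{n+1}]=(1-\frac{\lambda _n}{2})[x_n,y_n]+\frac{\lambda _n}{2}H_r[x_n,y_n]\) with \(\frac{\lambda _n}{2}\in (0,1)\), it then follows from Lemma 1(a) that

$$\begin{aligned} \left\| [x_{n+1},y_{n+1}]-[p,q]\right\| ^2&=\Big (1-\frac{\lambda _n}{2}\Big )\left\| [x_n,y_n]-[p,q]\right\| ^2+\frac{\lambda _n}{2}\left\| H_r[x_n,y_n]-H_r[p,q]\right\| ^2\\&\quad -\frac{\lambda _n}{2}\Big (1-\frac{\lambda _n}{2}\Big )\left\| [x_n,y_n]-H_r[x_n,y_n]\right\| ^2\\&\le \left\| [x_n,y_n]-[p,q]\right\| ^2-\lambda _n(2-\lambda _n)\left\| [x_n,y_n]-S_r[x_n,y_n]\right\| ^2. \end{aligned}$$ (6)

Using the assumptions on \(\{\lambda _n\}\), which give \(\lambda _n(2-\lambda _n)\ge a(2-b)>0\), we obtain from (6),

$$\begin{aligned} \left\| [x_{n+1},y_{n+1}]-[p,q]\right\| ^2\le \left\| [x_n,y_n]-[p,q]\right\| ^2-a(2-b)\left\| [x_n,y_n]-S_r[x_n,y_n]\right\| ^2, \end{aligned}$$ (7)

which implies that the sequence \(\{\left\| [x_{n},y_{n}]-[p,q]\right\| \}\) is nonincreasing, hence convergent. That is, there exists a nonnegative real number \(\gamma [p,q]\) such that

$$\begin{aligned} \lim _{n\rightarrow \infty }\left\| [x_n,y_n]-[p,q]\right\| =\gamma [p,q]. \end{aligned}$$ (8)

Taking the limit in (7), we deduce that

$$\begin{aligned} \lim _{n\rightarrow \infty }\left\| [x_n,y_n]-S_r[x_n,y_n]\right\| =0. \end{aligned}$$ (9)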

In view of (8), \(\{[x_{n},y_{n}]\}\) is bounded. Let \(\{[x_{n_k},y_{n_k}]\}\) be a subsequence of \(\{[x_{n},y_{n}]\}\) that converges weakly to \([x^*,y^*]\in H\times H\). Then (9) and Lemma 8(d) imply that \([x^*,y^*]\in F(S_r)\). By Lemma 8(c), \([x^*,y^*]\in A^{-1}[0,0]\). Therefore, (8) and Opial’s lemma [45] imply that \(\{[x_{n},y_{n}]\}\) converges weakly to \([x^*,y^*]\in A^{-1}[0,0]\). That is, \(\{x_n\}\) converges weakly to \(x^*\), the solution of the Hammerstein Eq. (1), and \(\{y_n\}\) converges weakly to \(y^*\), where \(y^*=Kx^*\). \(\square \)

In general, strong convergence is desired for effective approximation of solutions of a given equation. To generate sequences that always converge strongly to some solution of the Hammerstein Eq. (1) (assuming that the solution set of (1) is nonempty), we modify algorithm (5) to obtain the following viscosity type algorithm: Fix \(r>0\) and let the initial starting points \(x_0\in H\) and \(y_0\in H\) be given. Then the \((n+1)\)th iterate is given by

where \(a_n\in (0,1)\) and \(\lambda _n\in (0,2)\) for all \(n\in \mathbb {N}\), \(f:H\rightarrow H\) and \(g:H\rightarrow H\) are contractions, and \(S_r\) is as defined in Lemma 8.
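The displayed form of (10) is not reproduced in this text, so the sketch below assumes one common viscosity form that is consistent with the auxiliary point \([u_n,v_n]=(1-\lambda _n)[x_n,y_n]+\lambda _nS_r[x_n,y_n]\) used in the proof of Theorem 10, namely \([x_{n+1},y_{n+1}]=a_n[f(x_n),g(y_n)]+(1-a_n)[u_n,v_n]\). This form is an assumption and not necessarily the authors' exact scheme. The data are those of Example 1 in Sect. 4 (\(Kx=3x\), \(Gy=y-4\), \(f(x)=x/4\), \(g(y)=y/3\), \(r=2\), \(a_n=(n+10000)^{-1}\), \(\lambda _n=\frac{n+3}{n+2}\)); the function names are hypothetical.

```python
import numpy as np

def S_r(x, y, r=2.0):
    # Resolvent for Example 1 (Kx = 3x, Gy = y - 4): solves
    # 3w - z + (w - x)/r = 0 and (z - 4) + w + (z - y)/r = 0 for [w, z].
    M = np.array([[3.0 + 1.0 / r, -1.0], [1.0, 1.0 + 1.0 / r]])
    w, z = np.linalg.solve(M, np.array([x / r, 4.0 + y / r]))
    return float(w), float(z)

def viscosity_iterate(x, y, f, g, a, lam, n_iter):
    """ASSUMED form of (10):
       [u_n, v_n] = (1 - lam_n)[x_n, y_n] + lam_n S_r[x_n, y_n]
       [x_{n+1}, y_{n+1}] = a_n [f(x_n), g(y_n)] + (1 - a_n)[u_n, v_n]
    """
    for n in range(n_iter):
        w, z = S_r(x, y)
        u = (1 - lam(n)) * x + lam(n) * w
        v = (1 - lam(n)) * y + lam(n) * z
        x, y = a(n) * f(x) + (1 - a(n)) * u, a(n) * g(y) + (1 - a(n)) * v
    return x, y

x, y = viscosity_iterate(
    1.7, 0.0,
    f=lambda s: s / 4, g=lambda s: s / 3,   # contractions of Example 1
    a=lambda n: 1.0 / (n + 10000),
    lam=lambda n: (n + 3) / (n + 2),
    n_iter=2000,
)
print(x, y)   # close to (1, 3); the remaining offset should be of the order of a_n
```

Because \(a_n\rightarrow 0\) slowly with this choice, the iterates should sit within roughly \(10^{-3}\) of \([1,3]\) after 2000 steps rather than at machine precision; the viscosity term trades some speed in this toy problem for strong convergence in infinite dimensions.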

Theorem 10

Let H be a real Hilbert space, \(f:H\rightarrow H\) be a \(\tau \)-contraction and \(g:H\rightarrow H\) be an \(\eta \)-contraction such that \(\tau ,\eta <\frac{1}{2}\). Fix \(r>0\) and choose the initial starting points \(x_0\in H\) and \(y_0\in H\) arbitrarily. Let \(K:H\rightarrow H\) and \(G:H\rightarrow H\) be monotone mappings that are either sequentially weakly continuous or continuous such that the solution set of the Hammerstein Eq. (1) is nonempty. Assume that \(\{[x_n,y_n]\}\) is a sequence generated by (10), where \(\lambda _n\in [a,b]\subset (0,2)\) and \(a_n\in (0,1)\) for all \(n\ge 0\) with \(\lim _{n\rightarrow \infty }a_n=0\) and \({\sum _{n=0}^{\infty }a_n=\infty }\). Then \(\{x_n\}\) converges strongly to a solution p of the Hammerstein Eq. (1), and \(\{y_n\}\) converges strongly to q, where \(q=Kp\).

Proof

We prove the theorem for the case when both K and G are sequentially weakly continuous. The other case(s) can be proved in a similar way.

Let \([u_n,v_n] = (1-\lambda _n)[x_n,y_n] +\lambda _nS_r[x_n,y_n]\) and let z be any solution of the Hammerstein Eq. (1). Then \(z+Gw=0\), where \(w=Kz\). Therefore, \([z,w]\in A^{-1}[0,0]\). Define \(B:H\times H\rightarrow H\times H\) by \(B[u,v]=[f(u),g(v)]\); it is easy to check that B is a \(\gamma \)-contraction, where \(\gamma =\max \{\tau ,\eta \}\). By Lemma 8(c) and (f), \(A^{-1}[0,0]=F(S_r)\) is nonempty, closed and convex, so \(P_{A^{-1}[0,0]}\) is well defined and nonexpansive, and hence \(P_{A^{-1}[0,0]}B\) is a \(\gamma \)-contraction. By the Banach contraction principle, it has a unique fixed point [p, q], that is, \([p,q]=P_{A^{-1}[0,0]}[f(p),g(q)]\). Then from (10) and (6), we have

where \(\mu _n=a_n(1-2\gamma )\). Note that \(\mu _n\in (0,1)\) for all \(n\in \mathbb {N}\) with \(\mu _n\rightarrow 0\) as \(n\rightarrow \infty \) and \(\sum _{k=1}^{\infty }\mu _k=\infty \). It then follows from (11) that

This inequality shows that \(\{[x_n,y_n]\}\) is bounded, and so there exists a subsequence \(\{[x_{n_k},y_{n_k}]\}\) of \(\{[x_n,y_n]\}\) such that

Since \(\{[x_n,y_n]\}\) is bounded, \(\{[x_{n_k},y_{n_k}]\}\) has a subsequence, again denoted by \(\{[x_{n_k},y_{n_k}]\}\), that converges weakly to \([\widehat{x},\widehat{y}]\) as \(k\rightarrow \infty \). It then follows that

Next, we observe from Lemma 1 and (6) that

From (11), (12) and the Cauchy–Schwarz inequality, we have

Again from the Cauchy–Schwarz inequality,

Substituting (15) and (16) into (14), we obtain

where

To show that \(\{[x_n,y_n]\}\) converges strongly to [p, q], we consider two possible cases for the sequence \(\{\left\| [x_n,y_n]-[p,q]\right\| \}\).

Case I. Assume that \(\{ \left\| [x_n,y_n]-[p,q]\right\| \}\) is eventually nonincreasing. Then this sequence, being bounded below by zero, is convergent. From (17), and noting that \(\{[x_n,y_n]\}\) is bounded, we have

for some \(\widetilde{M}>0\). Using the condition \(\mu _n\rightarrow 0\) as \(n\rightarrow \infty \), we get

But \([x_{n_k},y_{n_k}] \rightharpoonup [\widehat{x},\widehat{y}]\) as \(k\rightarrow \infty \), and so \(S_r[x_{n_k},y_{n_k}] \rightharpoonup [\widehat{x},\widehat{y}]\) as \(k\rightarrow \infty \). Following similar steps as in the proof of Lemma 8, we obtain \([\widehat{x},\widehat{y}]\in A^{-1}[0,0]\). Therefore, from (13) and Lemma 2, we get

Also, from (10) and (18), we have

Taking the limit on both sides as \(n\rightarrow \infty \), we obtain

Therefore, we conclude that

On the other hand, inequality (17) reduces to

From this last inequality, (21) and Lemma 4, we conclude that \(\{[x_n,y_n]\}\) converges strongly to [p, q]. That is, \(\{x_n\}\) converges strongly to p and \(\{y_n\}\) converges strongly to q, where \(p+Gq=0\) and \(q=Kp\). In particular, \(\{x_n\}\) converges strongly to p, the solution of the Hammerstein Eq. (1).

Case II. Assume that there exists a subsequence \(\{{n_i}\}\) of \(\{{n}\}\) such that

for all \(i\in \mathbb {N}\). Then by Lemma 5, there exists a nondecreasing sequence \((m_{k})\subset \mathbb {N}\) such that \(m_{k}\rightarrow \infty \) as \(k\rightarrow \infty \) and

for all sufficiently large \(k\in \mathbb {N}\). In this case, we have from (17) and (22)

Rearranging, we obtain

Taking the limit as \(k\rightarrow \infty \) and remembering that the sequence \(\{[x_{m_k},y_{m_k}]\}\) is bounded, we obtain

As in the proof of Case I, we easily derive the limit

Now, we can find a subsequence \(\{[x_{m_k(l)},y_{m_k(l)}]\}\) of \(\{[x_{m_k},y_{m_k}]\}\) such that

Following similar arguments as in the proof of Case I, we arrive at

Finally, rearranging (23), we obtain

which, together with (24), implies that

Using (22), we deduce that

showing that \(\{[x_n,y_n]\}\) converges strongly to [p, q]. In particular, \(\{x_n\}\) converges strongly to p, the solution of the Hammerstein Eq. (1). \(\square \)

Remark 1

Our results improve the results of Bello et al. [3] in the sense that the boundedness of both K and G has been dispensed with, and our results do not rely on the existence of the constant \(\gamma _0\) used in [3], whose computation is not clear. In the Hilbert space setting, the assumption that both K and G are bounded, which was used by Zegeye and Malonza [52], has also been dispensed with. Our results also cover the case when K and G are sequentially weakly continuous, which was not discussed in [52]. In addition, owing to the viscosity approximation term and the over-relaxed parameter \(\lambda _n\in (0,2)\), our algorithm generalizes the algorithm of Zegeye and Malonza [52].

4 Numerical example

In this section, we give some examples to illustrate the convergence of one of our algorithms to solutions of the Hammerstein Eq. (1), when they exist. We also compare this algorithm with existing ones and present the comparisons using graphs and tables. All numerical experiments were performed in MATLAB R2022a on a laptop with an Intel(R) Core(TM) i7-7700HQ CPU @ 2.80 GHz (2.81 GHz) and 16 GB of memory.

Example 1

Let \(H=\mathbb {R}\), and define \(K:H\rightarrow H\) by \(Kx=3x\) and \(G:H\rightarrow H\) by \(Gx=x-4\). Then both K and G are monotone and continuous. The solution of the Hammerstein Eq. (1) is \(x^*=1\), with \(y^*=Kx^*=3\). Hence the only zero of A is \(z^*=[x^*,y^*]=[1,3]\), where, as before, \(A:H\times H\rightarrow H\times H\) is given by \(A[x,y]=[Kx-y,Gy+x]\). Moreover, for any \(r>0\), one can check that

$$\begin{aligned} S_r[x,y]=\left[ \frac{(r+1)x+r(y+4r)}{(2r+1)^2},\ \frac{(3r+1)(y+4r)-rx}{(2r+1)^2}\right] . \end{aligned}$$
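For Example 1 the defining inequality of \(S_r\) must hold for every \([u,v]\in \mathbb{R}^2\), so \(S_r[x,y]=[w,z]\) solves the linear system \((3r+1)w-rz=x\), \(rw+(r+1)z=y+4r\), whose determinant is \((2r+1)^2\). The sketch below checks the resulting Cramer's-rule formula in exact rational arithmetic for a few values of r, and confirms that \([1,3]\) is a fixed point. The function names are hypothetical.

```python
from fractions import Fraction as Fr

def S_r_closed(x, y, r):
    # Cramer's rule for (3r+1)w - rz = x, rw + (r+1)z = y + 4r.
    D = (2 * r + 1) ** 2
    return ((r + 1) * x + r * (y + 4 * r)) / D, ((3 * r + 1) * (y + 4 * r) - r * x) / D

def residuals(x, y, r):
    # Kw - z + (w - x)/r and Gz + w + (z - y)/r with Kw = 3w, Gz = z - 4.
    w, z = S_r_closed(x, y, r)
    return 3 * w - z + (w - x) / r, (z - 4) + w + (z - y) / r

for r in (Fr(1, 2), Fr(1), Fr(2), Fr(7, 3)):
    assert residuals(Fr(17, 10), Fr(0), r) == (0, 0)
    assert S_r_closed(Fr(1), Fr(3), r) == (1, 3)   # [x*, y*] = [1, 3] is fixed
print("closed form verified")
```

Exact fractions are used so that the residuals are compared with zero exactly rather than up to rounding.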

Define \(f:H\rightarrow H\) by \(f(x)=\frac{x}{4}\) and \(g:H\rightarrow H\) by \(g(x)=\frac{x}{3}\). Then both f and g are contractions that satisfy the condition of Theorem 10. Equation (10) can now be written as

To implement algorithm (25), we chose \(a_n=(n+10000)^{-1}\) and \(\lambda _n=\frac{n+3}{n+2}\) for all \(n\ge 0\). Choosing \(r=2\), we can see from Fig. 1a and b that the sequence \(\{x_n\}\) converges to \(x^*=1\), the solution of the Hammerstein Eq. (1) and the sequence \(\{y_n\}\) converges to \(y^*=3\), respectively, for different initial starting points \(z_0=[x_0,y_0]\).

If we now fix \(z_0=[1.7,0]\), then it can be seen from Fig. 2a and b that the sequence \(\{x_n\}\) converges to \(x^*=1\), the solution of the Hammerstein Eq. (1), and the sequence \(\{y_n\}\) converges to \(y^*=3\), respectively, for different values of r.

Figures 2a and 2b suggest that the sequences \(\{x_n\}\) and \(\{y_n\}\), respectively, require fewer iterations to converge when r is large than when r is small.

Now if we fix \(z_0=[0.6,2.5]\) and \(r=1\), then it can be seen from Fig. 3a and b that the sequence \(\{x_n\}\) converges to \(x^*=1\), the solution of the Hammerstein Eq. (1) and the sequence \(\{y_n\}\) converges to \(y^*=3\), respectively, for different values of \(\lambda _n\in (0,2)\).

Figures 3a and 3b suggest that the sequences \(\{x_n\}\) and \(\{y_n\}\), respectively, require fewer iterations to converge when \(\lambda _n\) is large than when \(\lambda _n\) is small.

By means of numerical experiments, Bello et al. [3] showed that their algorithm

converges much faster, in terms of the number of iterations, than existing algorithms by Chidume and Shehu [23], Chidume and Shehu [21], Minjibir and Mohammed [42] and Shehu [47]. In Table 1 and Figs. 4 and 5, we compare the convergence of (26) and (25). In this case, we choose the initial starting points \(x_0=-1\) and \(y_0=1\) for algorithm (25); \(x_0= -1.5\), \(x_1=-1\), \(y_0=0.5\) and \(y_1=1\) for algorithm (26); and \(r=1\), \(a_n=(n+10000)^{-1}\), \(\lambda _n=\frac{n+3}{n+2}\), \(\theta _n=\frac{1}{(n+1)^2}\), \(b_n=\frac{1}{(n+1)^{\frac{1}{4}}}\) and \(c_n=\frac{1}{(n+1)^{\frac{1}{5}}}\). Results of this example are reported in Table 1 below.

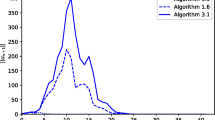

Figures 4a and 4b compare algorithms (25) and (26) in terms of the number of iterations taken to reach the solution of the Hammerstein Eq. (1), and Figs. 5a and 5b compare them in terms of the time taken to reach the solution.

Figure 4a and b show that algorithm (25) takes fewer iterations to converge to the desired solution than algorithm (26). Figure 5a and b show that algorithm (25) takes less time to converge to the desired solution than algorithm (26).

Example 2

Let \( H=L_{2}^{\mathbb {R}}([0, 1]) \) with the norm \( \left\| x\right\| _{L_{2}}=\left( \int _{0}^{1}\left| x(t)\right| ^{2}dt\right) ^{\dfrac{1}{2}} \). Let \(K, G: H\rightarrow H \) be defined by \( K(x(t))=\frac{3}{4}x(t)+1\) and \( G(y(t))=\frac{y(t)}{3} -3\), and let \(f,g:H\rightarrow H\) be defined by \(f(x(t))=\frac{x(t)}{3}\) and \(g(y(t))=\frac{y(t)}{3}\). One can show that K and G are continuous and monotone, and that f and g are contraction mappings. In addition, for \(r=1\) we observe that \(S_r(x_n(t),y_n(t))=\Big (\frac{2x_n(t)}{5} + \frac{3y_n(t)}{10}+\frac{1}{2}, \frac{21 y_n(t)}{40}-\frac{3x_n(t)}{10}+\frac{15}{8}\Big )\), and the solution of the equation \( x(t)+GKx(t)=0 \) is \(x^{*}(t)=\dfrac{32}{15}\). Thus, the algorithm in (10) reduces to the following scheme: \(x_{0}(t),y_0(t)\in H\) are chosen arbitrarily;
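Since K, G, f and g in Example 2 act pointwise and affinely on functions, the stated formula for \(S_r\) and the solution \(x^*(t)=\frac{32}{15}\) can be checked on scalar values. Because the inequality defining \(S_r\) must hold for every \([u,v]\in X\), \(S_r[x,y]=[w,z]\) must satisfy \(Kw-z+\frac{1}{r}(w-x)=0\) and \(Gz+w+\frac{1}{r}(z-y)=0\). The sketch below confirms, in exact rational arithmetic, that the stated formula satisfies these equations with \(r=1\) and that \([x^*,Kx^*]\) is fixed by it. Function names are hypothetical.

```python
from fractions import Fraction as Fr

def K(x):
    return Fr(3, 4) * x + 1          # pointwise action of K in Example 2

def G(y):
    return y / 3 - 3                 # pointwise action of G in Example 2

def S1(x, y):
    # Stated formula for S_r in Example 2 (it corresponds to r = 1).
    return (Fr(2, 5) * x + Fr(3, 10) * y + Fr(1, 2),
            Fr(21, 40) * y - Fr(3, 10) * x + Fr(15, 8))

def resolvent_residual(x, y, r=1):
    # [w, z] = S_r[x, y] must satisfy Kw - z + (w - x)/r = 0 and Gz + w + (z - y)/r = 0.
    w, z = S1(x, y)
    return K(w) - z + (w - x) / r, G(z) + w + (z - y) / r

x_star = Fr(32, 15)
assert x_star + G(K(x_star)) == 0                    # x* solves x + GKx = 0
assert S1(x_star, K(x_star)) == (x_star, K(x_star))  # [x*, Kx*] is fixed by S_1
for x, y in [(Fr(0), Fr(0)), (Fr(1, 3), Fr(-2)), (Fr(5), Fr(7, 2))]:
    assert resolvent_residual(x, y) == (0, 0)
print("Example 2 formulas verified")
```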

Now, if we choose the initial point \((x_0(t),y_0(t))=(t, t)\) and \( \lambda _n=\frac{n+1}{n+2}+0.002\), then the conditions of Theorem 10 are satisfied. Figure 6 shows the error \(E_n=\left\| x_n(t)-x^*(t)\right\| _{L_{2}}\) versus the number of iterations n for different choices of the sequence \(\{a_n\}\).
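The closed-form data of Example 2 can be checked exactly. Because K, G and \(S_r\) act pointwise in t, it suffices to work with real numbers; the sketch below uses Python's `fractions.Fraction` to confirm that \(x^*=\frac{32}{15}\) solves \(x+GKx=0\), that \((x^*,Kx^*)=(\frac{32}{15},\frac{13}{5})\) is a fixed point of \(S_r\), and that \(S_r\) agrees with the resolvent \((I+A)^{-1}\) of \(A(u,v)=(Ku-v,\,Gv+u)\) on \(H\times H\). The operator A and the value \(r=1\) are assumptions made for this check, since the definition of \(S_r\) is not restated in this section.

```python
from fractions import Fraction as F

# Operators of Example 2, acting pointwise on the values x(t), y(t).
def K(x):
    return F(3, 4) * x + 1

def G(y):
    return y / 3 - 3

def S_r(x, y):
    # Map S_r exactly as stated in Example 2.
    return (F(2, 5) * x + F(3, 10) * y + F(1, 2),
            F(21, 40) * y - F(3, 10) * x + F(15, 8))

def A(u, v):
    # Assumed coupling operator on H x H: A(u, v) = (Ku - v, Gv + u).
    return (K(u) - v, G(v) + u)

x_star = F(32, 15)
y_star = K(x_star)                      # K x* = 13/5

# x* solves x + GKx = 0, and (x*, Kx*) is a fixed point of S_r.
assert x_star + G(K(x_star)) == 0
assert S_r(x_star, y_star) == (x_star, y_star)

# S_r(x, y) = (u, v) satisfies (u, v) + A(u, v) = (x, y), i.e. S_r = (I + A)^{-1}.
for x, y in [(F(0), F(0)), (F(1), F(-2)), (F(7, 3), F(5, 11))]:
    u, v = S_r(x, y)
    Au, Av = A(u, v)
    assert (u + Au, v + Av) == (x, y)

print("x* =", x_star, "  K x* =", y_star)
```

Exact rational arithmetic avoids any ambiguity from rounding, so each equality above is a genuine identity rather than a numerical approximation.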

From Fig. 6, we observe that the sequence \(\{x_n(t)\}\) converges strongly to \(x^*(t)=\frac{32}{15}\), which is the solution of the Hammerstein Eq. (1), and that the convergence is faster when the coefficient of n in the denominator of the control parameter \(a_n\) is larger, with the initial point and \(\{\lambda _n\}\) kept fixed.
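The error term \(E_n\) can be approximated by sampling \(x_n(t)\) on a uniform grid of \([0,1]\) and applying the trapezoidal rule. Since the scheme obtained from algorithm (10) is not reproduced in this section, the sketch below substitutes a plain relaxed fixed-point iteration \(z_{n+1}=(1-\lambda_n)z_n+\lambda_n S_r z_n\), which is not the authors' method, started from \((x_0(t),y_0(t))=(t,t)\) with the \(\lambda_n\) given above. The grid size, iteration count and helper names are illustrative assumptions.

```python
import math

# Grid on [0, 1]; a function in L2([0, 1]) is stored by its grid values.
N_GRID = 201
T = [i / (N_GRID - 1) for i in range(N_GRID)]
X_STAR = 32 / 15                     # exact solution x*(t) = 32/15

def S_r(x, y):
    # Pointwise map S_r from Example 2.
    return (0.4 * x + 0.3 * y + 0.5, 0.525 * y - 0.3 * x + 1.875)

def l2_norm(values):
    # Trapezoidal approximation of (int_0^1 |v(t)|^2 dt)^(1/2).
    h = 1.0 / (N_GRID - 1)
    sq = [v * v for v in values]
    return math.sqrt(h * (sum(sq) - 0.5 * (sq[0] + sq[-1])))

def relaxed_iteration(n_iter):
    # Hypothetical stand-in: z_{n+1} = (1 - lam_n) z_n + lam_n S_r(z_n).
    # This is NOT the paper's algorithm (10); lam_n is the one stated in the text.
    xs, ys = list(T), list(T)        # (x_0(t), y_0(t)) = (t, t)
    errors = [l2_norm([x - X_STAR for x in xs])]
    for n in range(n_iter):
        lam = (n + 1) / (n + 2) + 0.002
        new = [S_r(x, y) for x, y in zip(xs, ys)]
        xs = [(1 - lam) * x + lam * sx for x, (sx, _) in zip(xs, new)]
        ys = [(1 - lam) * y + lam * sy for y, (_, sy) in zip(ys, new)]
        errors.append(l2_norm([x - X_STAR for x in xs]))
    return errors

E = relaxed_iteration(60)
for n in (0, 10, 20, 40, 60):
    print(f"n = {n:2d}   E_n = {E[n]:.3e}")
```

Here \(S_r\) is affine, and its linear part has complex eigenvalues of modulus \(\sqrt{0.3}\approx 0.55\), so for \(\lambda_n\) close to 1 the error of this stand-in iteration shrinks roughly by that factor per step.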

In Table 2 and Figs. 7 and 8, we compare the convergence of algorithms (26) and (27). For algorithm (27), we choose \(a_n=\frac{1}{1000(n+1)}\), \(\lambda _n=\frac{n+1}{n+2}+0.002\) and the initial point \(x_1=t\), \(y_1=t^2\). For algorithm (26), the parameters are \(\theta _n=\frac{1}{(n+1)^2}\), \(b_n=\frac{1}{(n+1)^{\frac{1}{4}}}\) and \(c_n=\frac{1}{(n+1)^{\frac{1}{5}}}\), and the initial points are \(x_0=-1.5\), \(y_0=0.5\), \(x_1=t\) and \(y_1=t^2\). The results of this example are reported in Table 2.

Figures 7 and 8 indicate that algorithm (27) converges faster than algorithm (26) in terms of both the number of iterations and the time taken to converge.
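Comparisons such as those in Figs. 7 and 8 rest on two measurements per run: the number of iterations until a stopping rule is met and the elapsed wall-clock time. A minimal, hypothetical harness for such measurements is sketched below. The stopping rule (error below `tol`), the helper names and the stand-in iterations (relaxed fixed-point iterations of the map \(S_r\) from Example 2, applied to constant initial functions) are our assumptions; they do not reproduce algorithms (26) or (27) or the values in Table 2.

```python
import time

def count_and_time(step, z0, error, tol=1e-10, max_iter=10_000):
    """Iterate z_{n+1} = step(n, z_n) until error(z_n) < tol.

    Returns (number of iterations, elapsed seconds). `step` and `error`
    are user-supplied callables; nothing here is specific to (26) or (27).
    """
    start = time.perf_counter()
    z = z0
    for n in range(max_iter):
        if error(z) < tol:
            return n, time.perf_counter() - start
        z = step(n, z)
    return max_iter, time.perf_counter() - start


def S_r(z):
    # Pointwise map S_r of Example 2 acting on a pair (x, y) of reals.
    x, y = z
    return (0.4 * x + 0.3 * y + 0.5, 0.525 * y - 0.3 * x + 1.875)


def relaxed(lam_of_n):
    # Hypothetical stand-in scheme z_{n+1} = (1 - lam_n) z_n + lam_n S_r(z_n).
    def step(n, z):
        lam = lam_of_n(n)
        sx, sy = S_r(z)
        return ((1 - lam) * z[0] + lam * sx, (1 - lam) * z[1] + lam * sy)
    return step


def x_error(z):
    return abs(z[0] - 32 / 15)       # distance to x* = 32/15


for label, lam_of_n in [
    ("lambda_n = (n+1)/(n+2) + 0.002", lambda n: (n + 1) / (n + 2) + 0.002),
    ("lambda_n = 1", lambda n: 1.0),
]:
    its, secs = count_and_time(relaxed(lam_of_n), (-1.5, 0.5), x_error)
    print(f"{label:32s} iterations = {its:4d}   time = {secs:.2e} s")
```

For very fast iterations a single timing is noisy; repeating each run and reporting the median (for example with the standard `timeit` module) gives steadier numbers.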

5 Conclusion

In this paper, we have introduced two iterative methods for approximating solutions of the Hammerstein Eq. (1), if they exist. We have shown that, under suitable assumptions, the sequences generated by the first and second iterative methods always converge weakly and strongly, respectively, to an element of the solution set of the Hammerstein Eq. (1) whenever this solution set is nonempty. A common feature of our algorithms is the over-relaxation parameter \(\lambda _n\), which has been used in the literature to speed up the convergence of iterative methods. Numerical experiments show that, assuming solutions exist, our methods produce sequences that converge to a solution of the Hammerstein Eq. (1) faster than some of the iterative methods studied in the literature. Our results also extend, improve and generalize some existing results in the literature.

Data availability

Not applicable.

Code availability

Not applicable.

References

Allouch, C., Sbibih, D., Tahrichi, M.: Legendre superconvergent Galerkin-collocation type methods for Hammerstein equations. J. Comput. Appl. Math. 353, 253–264 (2019). https://doi.org/10.1016/j.cam.2018.12.040

Appell, J., Benavides, T.D.: Nonlinear Hammerstein equations and functions of bounded Riesz–Medvedev variation. Topol. Methods Nonlinear Anal. 47, 319–332 (2016)

Bello, A.U., Omojola, M.T., Yahaya, J.: An inertial-type algorithm for approximation of solutions of Hammerstein integral inclusions in Hilbert spaces. Fixed Point Theory Algorithms Sci. Eng. 2021(8), 22 (2021)

Blum, E., Oettli, W.: From optimization and variational inequality to equilibrium problems. Math. Stud. 63, 123–145 (1994)

Boikanyo, O.A.: The generalized contraction proximal point algorithm with square-summable errors. Afr. Mat. 28, 321–332 (2017)

Brézis, H., Browder, F.E.: Some new results about Hammerstein equations. Bull. Am. Math. Soc. 80, 568–572 (1974)

Brézis, H., Browder, F.E.: Existence theorems for nonlinear integral equations of Hammerstein type. Bull. Am. Math. Soc. 81, 73–78 (1975)

Brézis, H., Browder, F.E.: Nonlinear integral equations and systems of Hammerstein type. Adv. Math. 18, 115–147 (1975)

Browder, F.E.: The solvability of nonlinear functional equations. Duke Math. J. 30, 557–566 (1963)

Browder, F.E.: Nonlinear mappings of non-expansive and accretive operators in Banach spaces. Bull. Am. Math. Soc. 73, 875–882 (1967)

Browder, F.E.: Nonlinear functional analysis and nonlinear integral equations of Hammerstein and Urysohn type. In: Zarantonello, E. (ed.) Contributions to Nonlinear Functional Analysis, pp. 425–500. Academic Press, New York (1971)

Browder, F.E., Gupta, C.P.: Nonlinear monotone operators and integral equations of Hammerstein type. Bull. Am. Math. Soc. 75, 1347–1353 (1969)

Browder, F.E., De Figueiredo, D.G., Gupta, C.P.: Maximal monotone operators and nonlinear integral equations of Hammerstein type. Bull. Am. Math. Soc. 76, 700–705 (1970)

Chepanovich, R.S.: Nonlinear Hammerstein equations and fixed points. Publ. Inst. Math. (Belgr.) 35, 119–123 (1984)

Chidume, C.E., Bello, A.U.: An iterative algorithm for approximating solutions of Hammerstein equations with monotone maps in Banach spaces. Appl. Math. Comput. 313, 408–417 (2017)

Chidume, C.E., Djitte, N.: Iterative approximation of solutions of nonlinear equations of Hammerstein-type. Nonlinear Anal. 70, 4086–4092 (2009)

Chidume, C.E., Djitte, N.: Approximation of solutions of Hammerstein equations with bounded strongly accretive nonlinear operator. Nonlinear Anal. 70, 4071–4078 (2009)

Chidume, C.E., Djitte, N.: An iterative method for solving nonlinear integral equations of Hammerstein type. Appl. Math. Comput. 219, 5613–5621 (2013)

Chidume, C.E., Idu, K.O.: Approximation of zeros of bounded maximal monotone maps, solutions of Hammerstein integral equations and convex minimization problems. Fixed Point Theory Appl. (2016). https://doi.org/10.1186/s13663-016-0582-8

Chidume, C.E., Ofoedu, E.U.: Solution of nonlinear integral equations of Hammerstein type. Nonlinear Anal. 74, 4293–4299 (2011)

Chidume, C.E., Shehu, Y.: Strong convergence theorem for approximation of solutions of equations of Hammerstein type. Nonlinear Anal. Theory Methods Appl. 75(14), 5664–5671 (2012)

Chidume, C.E., Shehu, Y.: Approximation of solutions of generalized equations of Hammerstein type. Comput. Math. Appl. 63, 966–974 (2012)

Chidume, C.E., Shehu, Y.: Iterative approximation of solutions of equations of Hammerstein type in certain Banach spaces. Appl. Math. Comput. 219, 5657–5667 (2013)

Chidume, C.E., Shehu, Y.: Approximation of solutions of equations of Hammerstein type in Hilbert spaces. Fixed Point Theory 16(1), 91–102 (2015)

Chidume, C.E., Zegeye, H.: Approximation of solutions of nonlinear equations of monotone and Hammerstein-type. Appl. Anal. 82(8), 747–758 (2003)

Chidume, C.E., Zegeye, H.: Iterative approximation of solutions of nonlinear equation of Hammerstein-type. Abstr. Appl. Anal. 6, 353–367 (2003)

Chidume, C.E., Zegeye, H.: Approximation of solutions of nonlinear equations of Hammerstein type in Hilbert space. Proc. Am. Math. Soc. 133, 851–858 (2005)

Chidume, C.E., Adamu, A., Chinwendu, L.O.: Approximation of solutions of Hammerstein equations with monotone mappings in real Banach spaces. Carpath. J. Math. 35(3), 305–316 (2019)

Chidume, C.E., Nnakwe, M.O., Adamu, A.: A strong convergence theorem for generalized-\(\Phi \)-strongly monotone maps, with applications. Fixed Point Theory Appl. (2019). https://doi.org/10.1186/s13663-019-0660-9

Chidume, C.E., Adamu, A., Okereke, L.C.: Iterative algorithms for solutions of Hammerstein equations in real Banach spaces. Fixed Point Theory Appl. 2020, 4 (2020). https://doi.org/10.1186/s13663-020-0670-7

Daman, O., Tufa, A.R., Zegeye, H.: Approximating solutions of Hammerstein type equations in Banach spaces. Quaest. Math. 42(5), 561–577 (2019)

De Figueiredo, D.G., Gupta, C.P.: On the variational methods for the existence of solutions to nonlinear equations of Hammerstein type. Proc. Am. Math. Soc. 40, 470–476 (1973)

Djitte, N., Sene, M.: An iterative algorithm for approximating solutions of Hammerstein integral equations. Numer. Funct. Anal. Optim. 34(12), 1299–1316 (2013)

Dolezal, V.: Monotone operators and applications in control and network theory. In: Studies in Automation and Control, Vol. 2. Elsevier Scientific, New York (1978)

Goebel, K., Kirk, W.A.: Topics in Metric Fixed Point Theory. Cambridge University Press, Cambridge (1990)

Kazemi, M.: Triangular functions for numerical solution of the nonlinear Volterra integral equations. J. Appl. Math. Comput. 1–24 (2021)

Kazemi, M.: On existence of solutions for some functional integral equations in Banach algebra by fixed point theorem. Int. J. Nonlinear Anal. Appl. 13(1), 451–466 (2022)

Kazemi, M., Ezzati, R.: Existence of solution for some nonlinear two-dimensional Volterra integral equations via measures of noncompactness. Appl. Math. Comput. 275, 165–171 (2016)

Kürkçü, O.K.: An evolutionary numerical method for solving nonlinear fractional Fredholm–Volterra–Hammerstein integro-differential-delay equations with a functional bound. Int. J. Comput. Math. https://doi.org/10.1080/00207160.2022.2095510

Maingé, P.E.: Strong convergence of projected subgradient method for nonsmooth and nonstrictly convex minimization. Set-Valued Anal. 16, 899–912 (2008)

Micula, S., Cattani, C.: On a numerical method based on wavelets for Fredholm–Hammerstein integral equations of the second kind. Math. Methods Appl. Sci. 41, 9103–9115 (2018)

Minjibir, M.S., Mohammed, I.: Iterative algorithms for solutions of Hammerstein integral inclusions. Appl. Math. Comput. 320, 389–399 (2018)

Neamprem, K., Klangrak, A., Kaneko, H.: Taylor–Series expansion methods for multivariate Hammerstein integral equations. Int. J. Appl. Math. 47(4), 1–5 (2017)

Ofoedu, E.U., Onyi, C.E.: New implicit and explicit approximation methods for solutions of integral equations of Hammerstein type. Appl. Math. Comput. 246, 628–637 (2014)

Opial, Z.: Weak convergence of the sequence of successive approximations for non-expansive mappings. Bull. Am. Math. Soc. 73, 591–597 (1967)

Pascali, D., Sburlan, S.: Nonlinear mappings of monotone type. Editura Academia, Bucharest (1978)

Shehu, Y.: Strong convergence theorem for integral equations of Hammerstein type in Hilbert spaces. Appl. Math. Comput. 231, 140–147 (2014)

Tufa, A.R., Zegeye, H., Thuto, M.: Iterative solutions of nonlinear integral equations of Hammerstein type. Int. J. Anal. Appl. 9(2), 129–141 (2015)

Uba, M.O., Uzochukwu, M.I., Onyido, M.A.: Algorithm for approximating solutions of Hammerstein integral equations with maximal monotone operators. Indian J. Pure Appl. Math. 48(3), 391–410 (2017)

Wang, J.: Numerical algorithm for two-dimensional nonlinear Volterra–Fredholm integral equations and fractional integro-differential equations (of Hammerstein and mixed types). Eng. Comput. 38(9), 3548–3563 (2021). https://doi.org/10.1108/EC-06-2020-0353

Xu, H.K.: Another control condition in an iterative method for nonexpansive mappings. Bull. Aust. Math. Soc. 65, 109–113 (2002)

Zegeye, H., Malonza, D.M.: Hybrid approximation of solutions of integral equations of the Hammerstein type. Arab. J. Math. 2, 221–232 (2013)

Acknowledgements

The authors are very grateful to the anonymous referees for their comments and suggestions, which have helped to improve this manuscript.

Funding

No funding was used to undertake this work.

Author information

Contributions

All authors contributed equally. All authors read and approved the final manuscript.

Ethics declarations

Conflict of interest

The authors declare that they have no competing interests.

Ethics approval

Not applicable.

Consent to participate

Authors agree to participate in the revision of the manuscript to the format required by the journal.

Consent for publication

Authors give their consent for the manuscript to be published.

Rights and permissions

Springer Nature or its licensor holds exclusive rights to this article under a publishing agreement with the author(s) or other rightsholder(s); author self-archiving of the accepted manuscript version of this article is solely governed by the terms of such publishing agreement and applicable law.

About this article

Cite this article

Boikanyo, O.A., Zegeye, H. New iterative methods for finding solutions of Hammerstein equations. J. Appl. Math. Comput. 69, 1465–1490 (2023). https://doi.org/10.1007/s12190-022-01795-y

DOI: https://doi.org/10.1007/s12190-022-01795-y