Abstract

The essential matrix incorporates relative rotation and translation parameters of two calibrated cameras. The well-known algebraic characterization of essential matrices, i.e. necessary and sufficient conditions under which an arbitrary matrix (of rank two) becomes essential, consists of a single matrix equation of degree three. Based on this equation, a number of efficient algorithmic solutions to different relative pose estimation problems have been proposed in the last two decades. In three views, a possible way to describe the geometry of three calibrated cameras comes from considering compatible triplets of essential matrices. The compatibility is meant the correspondence of a triplet to a certain configuration of calibrated cameras. The main goal of this paper is to give an algebraic characterization of compatible triplets of essential matrices. Specifically, we propose necessary and sufficient polynomial constraints on a triplet of real rank-two essential matrices that ensure its compatibility. The constraints are given in the form of six cubic matrix equations, one quartic and one sextic scalar equations. An important advantage of the proposed constraints is their sufficiency even in the case of cameras with collinear centers. The applications of the constraints may include relative camera pose estimation in three and more views, averaging of essential matrices for incremental structure from motion, multiview camera auto-calibration, etc.

Similar content being viewed by others

Explore related subjects

Discover the latest articles, news and stories from top researchers in related subjects.Avoid common mistakes on your manuscript.

1 Introduction

In multiview geometry, the essential matrix describes the relative orientation of two calibrated cameras. It was first introduced by Longuet-Higgins (1981), where the essential matrix was used for the scene reconstruction from point correspondences in two views. Later, the algebraic properties of essential matrices have been investigated in detail in Faugeras and Maybank (1990), Horn (1990), Huang and Faugeras (1989). The well-known characterization of the algebraic variety of essential matrices, which is the closure of the set of essential matrices in the corresponding projective space, consists of a unique matrix equation of degree three (Demazure 1988; Maybank 1993). Based on this equation, a number of efficient algorithmic solutions to different relative pose estimation problems have been proposed in the last two decades, e.g. Nistér (2004), Stewénius et al. (2006, 2008), Kukelova and Pajdla (2007).

The relative orientation of three calibrated cameras can be naturally described by the so-called calibrated trifocal tensor. It first appeared in Spetsakis and Aloimonos (1990), Weng et al. (1992) as a tool of scene reconstruction from line correspondences. The algebraic properties of calibrated trifocal tensors were recently investigated in Martyushev (2017), where some necessary and sufficient polynomial constraints on a real calibrated trifocal tensor have been derived. A 3-view analog of the variety of essential matrices—the calibrated trifocal variety—has been introduced in Kileel (2017), where it was used to compute algebraic degrees of various 3-view relative pose problems. The complete characterization of the calibrated trifocal variety is an open and challenging problem. For the uncalibrated case such characterization consists of 10 cubic, 81 quintic, and 1980 sextic polynomial equations (Aholt and Oeding 2014).

Another way to describe the geometry of three calibrated cameras comes from considering the compatible triplets of essential matrices. The compatibility means that a triplet must correspond to a certain configuration of three calibrated cameras. In the recent paper (Kasten et al. 2019a), the authors considered compatible n-view multiplets of essential matrices, which are the natural n-view generalization of the triplets, and proposed their algebraic characterization in terms of the spectral (or singular value) decomposition of a symmetric \(3n\times 3n\) matrix constructed from the multiplet.

On the other hand, the polynomial constraints on compatible triplets of essential matrices have not previously been studied. The present paper is a step in this direction. Its main contribution is a set of polynomial constraints such that any triplet of real and rank-two essential matrices that fulfils these constraints is guaranteed to be compatible. An important advantage of the introduced constraints is their sufficiency even in the case of cameras with collinear centers. The results of the paper may be applied to different computer vision problems including relative camera pose estimation from three and more views, averaging of essential matrices for incremental structure from motion, multiview camera auto-calibration, etc.

The rest of the paper is organized as follows. In Sect. 2, we recall some definitions and results from multiview geometry. In Sect. 3, we propose our necessary and sufficient constraints on compatible triplets of essential matrices. The constraints have the form of 82 cubic, one quartic, and one sextic homogeneous polynomial equations in the entries of essential matrices. Section 4 outlines two possible applications of the proposed constraints. In Sect. 5, we discuss the results of the paper and make conclusions. The “Appendix” includes some auxiliary lemmas that we used throughout the proof of our main Theorem 5.

2 Preliminaries

2.1 Notation

We preferably use \(\alpha \), \(\beta , \ldots \) for scalars, a, \(b, \ldots \) for column 3-vectors or polynomials, and A, \(B, \ldots \) both for matrices and column 4-vectors. For a matrix A, the entries are \((A)_{ij}\), the transpose is \(A^\top \), and the adjugate (i.e. the transposed matrix of cofactors) is \(A^*\). The determinant of A is \(\det A\) and the trace is \({{\,\mathrm{tr}\,}}A\). For two 3-vectors a and b, the cross product is \(a\times b\). For a vector a, the entries are \((a)_i\). The notation \([a]_\times \) stands for the skew-symmetric matrix such that \([a]_\times b = a \times b\) for any vector b. We use I for the identity matrix and \(\Vert \cdot \Vert \) for the Frobenius norm.

2.2 Fundamental Matrix

We briefly recall some definitions and results from multiview geometry, see Faugeras (1993), Faugeras and Maybank (1990), Hartley and Zisserman (2003), Maybank (1993) for details.

A matrix \(P = \begin{bmatrix}A&a\end{bmatrix}\), where A is an invertible \(3\times 3\) matrix and a is a 3-vector, is called the finite projective camera. A camera P represents a perspective projection of 3-space onto the image plane, which is the image of P, through the camera center, which is the right null vector of P.

Let \(P_1 = \begin{bmatrix}A_1&a_1\end{bmatrix}\) and \(P_2 = \begin{bmatrix}A_2&a_2\end{bmatrix}\) be finite projective cameras. Let Q be a point in 3-space represented by its homogeneous coordinates and \(q_j\) be its jth perspective image. Then,

where \(\sim \) means an equality up to a non-zero scale. The epipolar constraint for a pair \((q_1, q_2)\) says

where Sengupta et al. (2017), Kasten et al. (2019a)

is called the fundamental matrix. By definition, \(F_{21}\) must be of rank two. The left and right null vectors of \(F_{21}\), that is the vectors

respectively, are called the epipoles. Geometrically, \(e_{ij}\) is the perspective projection of the jth camera center onto the ith image plane.

The following theorem gives an algebraic characterization of the set of fundamental matrices.

Theorem 1

(Hartley and Zisserman 2003) Any real \(3\times 3\) matrix of rank two is a fundamental matrix.

2.3 Essential Matrix

The essential matrix \(E_{21}\) is the fundamental matrix for calibrated cameras \({{\hat{P}}}_1 = \begin{bmatrix} R_1&t_1 \end{bmatrix}\) and \({{\hat{P}}}_2 = \begin{bmatrix} R_2&t_2\end{bmatrix}\), where \(R_1, R_2 \in \mathrm {SO}(3)\) and \(t_1\), \(t_2\) are 3-vectors, that is

The proof of formula (5) can be found in Arie-Nachimson et al. (2012).

In general, the calibrated and uncalibrated camera matrices are related by

where \(K_j\) is an invertible upper-triangular matrix known as the calibration matrix of the jth camera and H is a certain invertible \(4\times 4\) matrix. Then it can be shown that

Hence, the epipolar constraint for the essential matrix becomes

where \({{\hat{q}}}_j = K_j^{-1} q_j\).

The following theorem gives an algebraic characterization of the set of essential matrices.

Theorem 2

(Demazure 1988; Faugeras and Maybank 1990; Maybank 1993) A real \(3\times 3\) matrix E of rank two is an essential matrix if and only if E satisfies

2.4 Compatible Triplets of Fundamental Matrices

A triplet of fundamental matrices \(\{F_{12}, F_{23}, F_{31}\}\) is said to be compatible if there exist matrices \(B_1, B_2, B_3 \in \mathrm {GL}(3)\) and 3-vectors \(b_1\), \(b_2\), \(b_3\) such that

for all distinct \(i, j \in \{1, 2, 3\}\). It follows from (10) that \(F_{ji} = F_{ij}^\top \).

Theorem 3

Let \({\mathcal {F}} = \{F_{12}, F_{23}, F_{31}\}\) be a triplet of real rank-two fundamental matrices with non-collinear epipoles, i.e. \(e_{ki} \not \sim e_{kj}\) for all distinct \(i, j, k \in \{1, 2, 3\}\). Then, \({\mathcal {F}}\) is compatible if and only if it satisfies

Proof

By the definition of epipoles, constraints (11) imply (and are implied by)

for all distinct indices \(i, j, k \in \{1, 2, 3\}\). Geometrically, constraints (12) mean that the epipoles \(e_{ij}\) and \(e_{kj}\) are matched and correspond to the projections of the jth camera center onto the ith and kth image plane respectively. The proof of compatibility of three fundamental matrices with non-collinear epipoles satisfying (12) can be found in Hartley and Zisserman (2003). \(\square \)

The main goal of this paper is to propose a generalized analog of Theorem 3 for a triplet of essential matrices with possibly collinear epipoles.

3 Compatible Triplets of Essential Matrices

A triplet of essential matrices \(\{E_{12}, E_{23}, E_{31}\}\) is said to be compatible if there exist matrices \(R_1, R_2, R_3 \in \mathrm {SO}(3)\) and 3-vectors \(b_1\), \(b_2\), \(b_3\) such that

for all distinct \(i, j \in \{1, 2, 3\}\).

Given a triplet of essential matrices \(\{E_{12}, E_{23}, E_{31}\}\), let us denote

The symmetric \(9\times 9\) matrix E (as well as its analog for fundamental matrices) has been previously introduced in Sengupta et al. (2017), Kasten et al. (2019a, b) where some of its spectral properties were investigated. The matrix E is called compatible if it is constructed from a compatible triplet. In Kasten et al. (2019a), the authors propose necessary and sufficient conditions on the compatibility of matrix E (more precisely, of an n-view generalization of matrix E) in terms of its spectral or singular value decomposition. In particular, these conditions imply that the characteristic polynomial of a compatible matrix E must be of the form

where \(\lambda _1\), \(\lambda _2\), and \(\lambda _3\) are possibly non-zero eigenvalues of E.

Condition (15) induces polynomial constraints on the entries of matrix E. Namely, the coefficients of \(p_E(\lambda )\) in \(\lambda ^6\), \(\lambda ^4\), \(\lambda ^2\), \(\lambda ^1\), and \(\lambda ^0\) must vanish. It is clear that these coefficients are polynomials in the entries of matrices \(E_{12}\), \(E_{23}\), \(E_{31}\) of degree 3, 5, 7, 8, and 9 respectively. For example, the coefficient in \(\lambda ^6\) equals \(-2{{\,\mathrm{tr}\,}}(E_{12}E_{23}E_{31})\). Below we propose a set of cubic polynomial equations on matrix E such that constraint (15) is implied by these equations, see Eqs. (26)–(28).

Apart from condition (15), there exists an additional quadratic constraint on the eigenvalues of matrix E. Let \(|\lambda _1| > |\lambda _2|\) and \(|\lambda _1| > |\lambda _3|\). Then, by a straightforward computation, one verifies that

A symmetrized version of Eq. (16), which is independent of the ordering on the set of eigenvalues, has the form

Since the l.h.s. of Eq. (17) is a symmetric function in values \(\lambda _1^2\), \(\lambda _2^2\), and \(\lambda _3^2\), it can be expanded in terms of the elementary symmetric functions, which are the coefficients in (15). On the other hand, these coefficients can be represented as polynomials in \({{\,\mathrm{tr}\,}}(E^{2k})\) for \(k = 1, 2, 3\). Thus we obtain an additional sixth degree scalar equation on matrix E, see Eq. (30).

Finally, some of our polynomial constraints are easier to formulate using a specific binary operation on the space of \(3\times 3\) matrices. Let \(A^*\) be the adjugate of a matrix A, i.e. the transposed matrix of its cofactors. Then for a pair of \(3\times 3\) matrices A and B we define

An alternative expression for \(A\diamond B\) can be derived using the well-known formula

Thus we get

It is straightforward to show that for any \(3\times 3\) matrices A, B, C, D and any scalars \(\beta \) and \(\gamma \) the following identities hold:

Now we can formulate our polynomial constraints.

Theorem 4

Let \(\{E_{12}, E_{23}, E_{31}\}\) be a compatible triplet of essential matrices, matrix E be defined in (14). Then the following equations hold:

for all distinct \(i, j, k \in \{1, 2, 3\}\). There are in total \(1 + 6\cdot 9 + 3\cdot 9 = 82\) linearly independent cubic equations of type (26)–(28).

Proof

Let

where \(U_i \in \mathrm {SO}(3)\). It is clear that \({\mathcal {E}} = \{E_{12}, E_{23}, E_{31}\}\) is a compatible triplet if and only if so is \(\widetilde{{\mathcal {E}}} = \{{{\widetilde{E}}}_{12}, \widetilde{E}_{23}, {{\widetilde{E}}}_{31}\}\). Also it can be readily seen that \({\mathcal {E}}\) fulfils Eqs. (26)–(30) if and only if so does \(\widetilde{{\mathcal {E}}}\).

Given a compatible triplet \(\{E_{12}, E_{23}, E_{31}\}\), where each \(E_{ij}\) is represented by (13), we set \(U_i = R_i^\top \). Then essential matrices \({{\widetilde{E}}}_{ij} = U_i E_{ij}U_j^\top \) become skew-symmetric and can be written in form

where indices i, j, k are intended to be distinct. Denoting \(b_i - b_j = c\), \(b_j - b_k = d\) yields \(b_k - b_i = -c - d\) and hence we can write

By substituting this into Eqs. (26)–(28), we get

We used that \([a]_\times [b]_\times = ba^\top - (a^\top b)I\) and \(([a]_\times )^* = aa^\top \) for arbitrary 3-vectors a and b. Equations (29)–(30) can be verified in a similar manner, but the computation is more complicated. Theorem 4 is proved. \(\square \)

Remark 1

Constraint (11) for essential matrices is implied by Eq. (27). Namely, multiplying (27) on the right by \(E_{jk}^*\) gives (11).

We also note that Eq. (27) is a generalization of Eq. (9). Setting \(k = i\) in (27) and taking into account that \(E_{ii} = 0_{3\times 3}\) gives (9).

Theorem 5

Let \({\mathcal {E}} = \{E_{12}, E_{23}, E_{31}\}\) be a triplet of real rank-two essential matrices. Then \({\mathcal {E}}\) is compatible if and only if it satisfies Eqs. (26)–(30) from Theorem 4.

Proof

The “only if” part is by Theorem 4. Let us prove the “if” part.

First of all, we simplify the triplet \({\mathcal {E}}\) by using transform (31). Each essential matrix from \(\mathcal E\) can be represented in form \(E_{ij} = [b_{ij}]_\times R_{ij}\), where \(R_{ij} \in \mathrm {SO}(3)\) and \(b_{ij}\) is a 3-vector. We set \(U_2 = U_1 R_{12}\) and \(U_3 = U_2 R_{23} = U_1 R_{12}R_{23}\). Then,

where \(R = R_{12}R_{23}R_{31}\). The matrix \(U_1\) is chosen so thatFootnote 1

where \(\lambda ^2 + \mu ^2 = 1\) and also \((U_1R_{12}R_{23}b_{31})_1 = 0\). As a result, it suffices to prove the “if” part for the following triplet:

where \(\lambda , \mu , \alpha _1, \ldots , \gamma _3\) are some scalars. For the purpose of completeness, we also write down the epipoles corresponding to triplet (39):

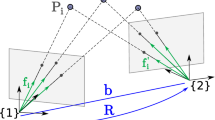

Let us define an ideal \(J \subset {\mathbb {C}}[\lambda , \mu , \alpha _1, \ldots , \gamma _3]\) generated by all polynomials from (26)–(30) for triplet \(\{\widetilde{E}_{12}, {{\widetilde{E}}}_{23}, {{\widetilde{E}}}_{31}\}\) and also by \(\lambda ^2 + \mu ^2 - 1\). The ideal J determines an affine variety \({\mathcal {V}}(J) \subset {\mathbb {C}}^{10}\). The rest of the proof consists in showing that each point of \({\mathcal {V}}(J)\) is either a compatible or degenerate triplet of essential matrices. By degeneration we mean that at least one essential matrix from the triplet is a zero matrix. For the reader’s convenience, the main steps of the further proof are schematically shown in Fig. 1.

To the proof of Theorem 5. Every real point of the variety defined by the ideal J is either a compatible triplet or a degenerate triplet with at least one \({{\widetilde{E}}}_{ij} = 0_{3\times 3}\). The dashed arrow means correspondence to a particular case

We consider the three main cases: (i) \(\mu = 0\), \(\lambda = 1\); (ii) \(\mu = 0\), \(\lambda = -1\); (iii) \(\mu \ne 0\).

Case I: \(\mu = 0\), \(\lambda = 1\).

First we note that a polynomial ideal and its radical define the same affine variety, whereas the algebraic structure of the radical may be much easier. For example, the Gröbner bases of the ideal J and its radical \(\sqrt{J}\) w.r.t. the same monomial ordering consist of 217 and 62 polynomials respectively. Besides, there exist a simple radical membership test allowing to check whether a given polynomial belongs to the radical or not, see Lemma 1 from the “Appendix”. The above arguments should explain the use of radicals throughout the further proof.

Let us define the polynomials

Then, by the radical membership test, we get

for all indices i and j.

First suppose that \(f_i \ne 0\) for a certain i. Then it follows from (42) that \(\alpha _1\beta _3 = \alpha _1\gamma _3 = \alpha _2\beta _3 = \alpha _2\gamma _3 = 0\). If \(\alpha _1 \ne 0\) or \(\alpha _2 \ne 0\), then \(\beta _3 = \gamma _3 = 0\) and we get \({{\widetilde{E}}}_{31} = 0_{3\times 3}\) in contradiction with the rank-two condition. Therefore \(\alpha _1 = \alpha _2 = 0\) and it follows from (42) that

The variables \(\beta _3\) and \(\gamma _3\) cannot be zero simultaneously. Without loss of generality, assume that \(\gamma _3 \ne 0\) and introduce parameter \(\delta = \beta _3/\gamma _3\). Then Eqs. (43) imply \(\beta _i = \delta \gamma _i\) for all i. Using the radical membership test, one verifies that the variables \(\gamma _i\) are constrained by

that is \(\gamma _3 = \epsilon _1\gamma _1 + \epsilon _2\gamma _2\) with \(\epsilon _i = {\pm } 1\). The triplet of essential matrices has the form (67) and is compatible by Lemma 3.Footnote 2

Now consider the case \(f_1 = f_2 = f_3 = 0\), that is

The triplet of essential matrices has the form (69) and is compatible by Lemma 4.

Case II: \(\mu = 0\), \(\lambda = -1\).

Let \(J' = J + \langle \mu , \lambda + 1 \rangle \). The ideal \(\sqrt{J'}\) contains the following polynomials:

where \(i = 1, 2\). Supposing that \(\alpha _1\beta _3 \ne 0\) or \(\alpha _2\beta _3 \ne 0\) yields

The case \(\beta _1 = \beta _2 = 0\) contradicts to the rank-two condition, since leads to \({{\widetilde{E}}}_{31} = 0_{3\times 3}\). If \(\beta _1 = 0\) and \(\beta _2 \ne 0\), then triplet \(\{\widetilde{E}_{12}, {{\widetilde{E}}}_{23}, {{\widetilde{E}}}_{31}\}\) has the form (70) and is compatible by Lemma 4. Similarly, we get compatible triplet (71) in the case \(\beta _2 = 0\) and \(\beta _1 \ne 0\). Finally, if \(\beta _1\beta _2 \ne 0\), then it follows from (47) that \(\beta _1\beta _2 + \alpha _1^2 = 0\) and so \(\beta _2 = -\alpha _1^2/\beta _1\). The triplet \(\{\widetilde{E}_{12}, {{\widetilde{E}}}_{23}, {{\widetilde{E}}}_{31}\}\) has the form (72) and is compatible by Lemma 4.

Now consider the case \(\alpha _1\beta _3 = \alpha _2\beta _3 = 0\). There are two possibilities. If \(\beta _3 = 0\), then ideal \(\sqrt{J' + \langle \beta _3 \rangle }\) contains the following polynomials:

The case \(\gamma _3 = 0\) contradicts to the rank-two condition. Thus, \(\alpha _1 + \alpha _2 = \beta _1 + \beta _2 = 0\). It follows that, if \(\alpha _2 = \beta _2 = 0\), then \(\alpha _1 = \beta _1 = 0\). By the radical membership test, the variables \(\gamma _1\), \(\gamma _2\), and \(\gamma _3\) are constrained by (44) and hence we get a particular case (\(\delta = 0\)) of triplet (67) which is compatible by Lemma 3. On the other hand, if \(\alpha _2 \ne 0\) or \(\beta _2 \ne 0\), then we get

The triplet \(\{{{\widetilde{E}}}_{12}, {{\widetilde{E}}}_{23}, \widetilde{E}_{31}\}\) is a particular case (\(\beta _3 = 0\)) of (69) which is compatible by Lemma 4.

The second possibility is \(\beta _3 \ne 0\) and \(\alpha _1 = \alpha _2 = 0\). Denote \(J'' = J' + \langle \alpha _1, \alpha _2 \rangle \) and define the polynomials

Then, the radical membership test yields

for all i.

Consider the case \(\beta _1\beta _2 = 0\). If \(\beta _1 = 0\), then ideal \(\sqrt{J'' + \langle \beta _1 \rangle }\) contains the polynomials

The case \(\gamma _1 = 0\) leads to \({{\widetilde{E}}}_{12} = 0_{3\times 3}\) and hence contradicts to the rank-two condition. Therefore we get

The triplet \(\{{{\widetilde{E}}}_{12}, {{\widetilde{E}}}_{23}, \widetilde{E}_{31}\}\) has the form (73) and is compatible by Lemma 4. Similarly we get compatible triplet (74) if \(\beta _2 = 0\).

Finally, let \(\beta _1\beta _2 \ne 0\). The case \(\gamma _1 + \gamma _2 + \gamma _3 = 0\) is impossible, since ideal \(\sqrt{J'' + \langle \gamma _1 + \gamma _2 + \gamma _3 \rangle }\) contains \(\beta _1\beta _2\beta _3 \ne 0\). Let us denote \(\delta = -1/(\gamma _1 + \gamma _2 + \gamma _3)\). Then it follows from (51) that

The triplet \(\{{{\widetilde{E}}}_{12}, {{\widetilde{E}}}_{23}, \widetilde{E}_{31}\}\) has the form (75) and is compatible by Lemma 4.

Case III: \(\mu \ne 0\).

The ideal \(\sqrt{J}\) contains the following polynomials:

Since \(\mu \ne 0\), we have \(\alpha _2\gamma _3 = \beta _2\gamma _3 - \beta _3\gamma _2 = 0\).

First, supposing that \(\gamma _i \ne 0\) for a certain i gives \(\alpha _2\beta _3 = 0\). If \(\alpha _2 \ne 0\), then \(\beta _3 = \gamma _3 = 0\) and hence \({{\widetilde{E}}}_{31} = 0_{3\times 3}\) in contradiction with the rank-two condition. If \(\alpha _2 = 0\), then ideal \(\sqrt{J + \langle \alpha _2 \rangle }\) contains \(\alpha _1\beta _2\) and \(\alpha _1\gamma _2\). If \(\alpha _1 \ne 0\), then \({{\widetilde{E}}}_{23} = 0_{3\times 3}\) in contradiction with the rank-two condition. Continuing this way, one concludes that \(\beta _1 = \beta _2 = \beta _3 = 0\). The ideal \(\sqrt{J + \langle \alpha _1, \alpha _2, \beta _1, \beta _2, \beta _3 \rangle }\) contains \(\mu \gamma _1\gamma _2\gamma _3\). The condition \(\mu \ne 0\) implies that at least one \(\gamma _i = 0\) and so at least one essential matrix \({{\widetilde{E}}}_{ij}\) is a zero matrix. This contradicts to the rank-two condition.

Let \(\gamma _i = 0\) for all i. The ideal \(\sqrt{J + \langle \gamma _1, \gamma _2, \gamma _3 \rangle }\) contains \(\beta _3(\alpha _1\lambda + \beta _1\mu + \alpha _2)\). Since \(\beta _3 \ne 0\) (otherwise \({{\widetilde{E}}}_{31} = 0_{3\times 3}\)), we conclude that \(\alpha _1\lambda + \beta _1\mu + \alpha _2 = 0\). Denote \(J' = J + \langle \gamma _1, \gamma _2, \gamma _3, \alpha _1\lambda + \beta _1\mu + \alpha _2\rangle \) and define the polynomials

Then, by the radical membership test, we get

for all indices i, j, and k.

Suppose that \(\alpha _2\beta _3 = 0\). Since \(\beta _3 \ne 0\) (otherwise \({{\widetilde{E}}}_{31} = 0_{3\times 3}\)), we conclude that \(\alpha _2 = 0\). The ideal \(\sqrt{J' + \langle \alpha _2 \rangle }\) contains \(\alpha _1\beta _2\). Since \(\beta _2 \ne 0\) (otherwise \({{\widetilde{E}}}_{23} = 0_{3\times 3}\)), we have \(\alpha _1 = 0\). The ideal \(\sqrt{J' + \langle \alpha _1, \alpha _2 \rangle }\) contains \(\mu \beta _1\). Since \(\mu \ne 0\), we have \(\beta _1 = 0\) and thus \({{\widetilde{E}}}_{12} = 0_{3\times 3}\) in contradiction with the rank-two condition.

Let \(\alpha _2\beta _3 \ne 0\). Then it follows from (57) that

Each of the three cases \(p_1 = q_1 = 0\), \(p_1 = r_1 = 0\), and \(q_1 = r_1 = 0\) is impossible, since leads to \(\mu \alpha _2\beta _3 = 0\) in contradiction with \(\mu \ne 0\) and \(\alpha _2\beta _3 \ne 0\).

Assume that \(p_1 = 0\), \(q_1 \ne 0\), and \(r_1 \ne 0\). Then it follows from (57) that \(p_i = 0\) for all i. The triplet \(\{{{\widetilde{E}}}_{12}, {{\widetilde{E}}}_{23}, \widetilde{E}_{31}\}\) has the form (86) and is compatible by Lemma 5.

Let \(q_1 = 0\), \(p_1 \ne 0\), and \(r_1 \ne 0\). Then we have \(q_j = 0\) for all j. The triplet \(\{{{\widetilde{E}}}_{12}, {{\widetilde{E}}}_{23}, {{\widetilde{E}}}_{31}\}\) has the form (87) and is compatible by Lemma 5.

Finally, if \(r_1 = 0\), \(p_1 \ne 0\), and \(q_1 \ne 0\), then \(r_k = 0\) for all k. Taking into account that \(\mu \ne 0\), after some computation, we conclude that the triplet \(\{{{\widetilde{E}}}_{12}, {{\widetilde{E}}}_{23}, {{\widetilde{E}}}_{31}\}\) has the form (88) and is compatible by Lemma 5. We note that the denominator \(p_3 = \alpha _1\mu - \beta _1(\lambda - 1)\) in (89) does not vanish, since otherwise \(\sqrt{J' + \langle r_1, r_2, r_3, p_3 \rangle }\) contains \(\mu \alpha _2\beta _3 \ne 0\).

To summarize, we have shown that a triplet of real rank-two essential matrices \(\{{{\widetilde{E}}}_{12}, {{\widetilde{E}}}_{23}, {{\widetilde{E}}}_{31}\}\) that has the form (39) and fulfils Eqs. (26)–(30) is compatible. Formula (31) implies that the triplet \(\{E_{12}, E_{23}, E_{31}\}\) is compatible too as required. Theorem 5 is proved. \(\square \)

Remark 2

Although the 82 cubic equations from Theorem 5 are linearly independent, some of them may be algebraically dependent and therefore redundant for some applications. It is clear that if an ideal generated by a certain subset of Eqs. (26)–(30) equals the ideal J defined in the proof, then Theorem 5 remains valid for this subset. The equality of ideals is readily verified by computing their reduced Gröbner bases. In this way we found that Eq. (26) and the 27 equations of type (27) for indices \((i, j, k) \in \{(1, 3, 2), (3, 2, 1), (2, 1, 3)\}\) are redundant and may be omitted. Theorem 5 holds for the remaining 56 equations.

We also note that Eq. (29) only affects the compatibility of triplets with collinear epipoles, that is without Eq. (29) Theorem 5 remains valid for a triplet of real rank-two essential matrices with non-collinear epipoles.

4 Applications

Among the possible applications of Theorem 5 we outline the following two ones.

4.1 Incremental Structure from Motion

In incremental structure from motion a set of essential matrices arises from independently solved two-view relative pose estimation problems. Due to the noise in input data, scale ambiguity, and other factors, the estimated essential matrices are hardly compatible. Their rectification (averaging) is one of the possible approaches to overcome the incompatibility. In Kasten et al. (2019a), the authors proposed a novel solution to the essential matrix averaging problem based on their own algebraic characterization of compatible sets of essential matrices. Although the method from Kasten et al. (2019a) demonstrates good results outperforming the existing state-of-the-art solutions both in accuracy and in speed, it is not applicable to the practically important case of cameras with collinear centers.

Left: residuals \(\Vert {\mathcal {E}} - \hat{{\mathcal {E}}}_\Lambda \Vert \) at varying levels of Gaussian image noise. Here, \(\hat{\mathcal E}_\Lambda \) is the triplet of pairwise estimated essential matrices each of which is scaled with respective \(\lambda _{ij} \in \Lambda \), \({\mathcal {E}}\) is the averaged compatible triplet. Both triplets are normalized to the unit Frobenius norm. For each noise level, the box shows values from lower to upper quartile, the line inside indicates the median, the whiskers show data within \(1.5\times \) interquartile range, and the dots outside the whiskers are outliers. Right: average rotational and translational errors against Gaussian image noise. Each point on the diagram is a median of \(10^3\) trials. The absolute rotations \(R_i\) and translations \(t_i\), with \(R_1 = I\) and \(t_1 = 0_{3\times 1}\), are recovered from the compatible triplet \({\mathcal {E}}\). The rotational and translational errors are averaged over the 2nd and 3rd views

The introduced polynomial constraints (26)–(30) can also be used to solve the rectification problem. In the simplest form it can be stated as follows: given a triplet of essential matrices \(\hat{{\mathcal {E}}} = \{{{\hat{E}}}_{12}, {{\hat{E}}}_{23}, {{\hat{E}}}_{31}\}\), find a compatible triplet \({\mathcal {E}}\) and scale factors \(\Lambda = \{\lambda _{12}, \lambda _{23}, \lambda _{31}\}\) so that \(\Vert \Lambda \Vert ^2 = 1\) and \({\mathcal {E}}\) is the closest to \(\hat{{\mathcal {E}}}_\Lambda = \{\lambda _{12}{{\hat{E}}}_{12}, \lambda _{23}{{\hat{E}}}_{23}, \lambda _{31}{{\hat{E}}}_{31}\}\) w.r.t. the Frobenius norm. Thus, we arrive at the following polynomial optimization problem:

where J is the homogeneous ideal generated by all forms from (9), (26)–(30) and \({\mathcal {V}}(J)\) is the corresponding projective variety. Problem (59) can be further solved by using iterative methods for constrained optimization, e.g. sequential quadratic programming (SQP), augmented Lagrangian method, etc.

To confirm the proposed approach, we modeled a scene consisting of six points viewed by three fully calibrated cameras with collinear centers. The scene and camera configuration is detailed in the following table:

Distance to the scene | 1 |

Scene depth | 0.5 |

Baseline length | 0.2 |

Image dimensions | \(752 \times 480\) |

Field of view | \(60^{\circ }\) |

Here, the baseline length is the distance between the first and third camera centers. The second camera center varies randomly around the baseline middle point with an amplitude of 0.02. To each image we added a zero-mean Gaussian noise of standard deviation varying from 0 to 1 pixels.

First, the standard 5-point algorithm (Stewénius et al. 2006) was applied to each image pair to estimate the triplet of essential matrices \(\hat{{\mathcal {E}}}\). The sixth point was used to resolve the multiplicity. Each essential matrix from \(\hat{{\mathcal {E}}}\) was normalized to the unit Frobenius norm. Then, problem (59) was solved by the SQP resulting in a compatible triplet \({\mathcal {E}}\) and a set of scale factors \(\Lambda = \{\lambda _{ij}\}\). The results for \(10^3\) trials per each noise level are shown in Fig. 2. Note that the estimated essential matrices for the noise-free case are guaranteed to have collinear epipoles as they differ from the ground truth only by scales. As it can be seen, the algorithm handles the collinear case without a modification.

4.2 Three-View Auto-Calibration

Let \(\{F_{12}, F_{23}, F_{31}\}\) be a compatible triplet of fundamental matrices and \(K_i\) be the calibration matrix of the ith camera. Then we can write \(\lambda _i \lambda _j E_{ij} = K_i^\top F_{ij} K_j\) for certain scalars \(\lambda _1\), \(\lambda _2\), and \(\lambda _3\), cf. (7). Substituting this into Eqs. (26)–(30), we get by a straightforward computation the following equations:

where \(C_i = \lambda _i(\det K_i)^{-1}(K_iK_i^\top )\), \(C = {{\,\mathrm{diag}\,}}(C_1, C_2, C_3)\), and F is the symmetric \(9\times 9\) matrix constructed from \(F_{12}\), \(F_{23}\), and \(F_{31}\) similarly as in formula (14). It is clear that \(K_iK_i^\top = C_i/(C_i)_{33}\) and therefore the calibration matrix can be estimated from \(C_i\) by the Cholesky factorization.

Given \(F_{12}\), \(F_{23}\), and \(F_{31}\), only matrices \(C_i\) are constrained by Eqs. (60)–(63) and hence these equations can be used to solve the camera auto-calibration problem in three and more views. We note that Eq. (63) is sextic, Eq. (60) is cubic, Eq. (61) is quadratic, and Eq. (62) is linear in the entries of matrix C. The auto-calibration constraint corresponding to Eq. (29) is of degree 10 in the entries of C. We do not write it here.

5 Discussion

In this paper we propose new necessary and sufficient conditions on the compatibility of three real rank-two essential matrices (Theorems 4 and 5 ). By compatibility we mean the correspondence of the essential matrices to a certain configuration of three calibrated cameras. The conditions have form of 82 cubic, one quartic, and one sextic homogeneous polynomial equations. We would like to emphasize that (i) these equations relate to the calibrated case only and in general do not hold for compatible triplets of fundamental matrices; (ii) the sufficiency of the equations covers the case of cameras with collinear centers.

The possible applications of the constraints may include multiview relative pose estimation, essential matrix averaging for incremental structure from motion, multiview auto-calibration, etc. Regarding the auto-calibration, it is worth mentioning that some of our equations [Eq. (62)] turn out to be linear in the entries of matrix incorporating the calibration parameters. This unexpected result could be useful in developing novel auto-calibration solutions.

Notes

References

Aholt, C., & Oeding, L. (2014). The ideal of the trifocal variety. Mathematics of Computation, 83, 2553–2574.

Arie-Nachimson, M., Kovalsky, S., Kemelmacher-Shlizerman, I., Singer, A., & Basri, R. (2012). Global motion estimation from point matches. In 2012 Second international conference on 3D imaging, modeling, processing, visualization & transmission (pp. 81–88). IEEE.

Cox, D., Little, J., & O’Shea, D. (2007). Ideals, varieties, and algorithms (Vol. 3). Berlin: Springer.

Demazure, M. (1988). Sur deux problèmes de reconstruction. Technical Report No 882, INRIA.

Faugeras, O. (1993). Three-dimensional computer vision: A geometric viewpoint. Cambridge: MIT Press.

Faugeras, O., & Maybank, S. (1990). Motion from point matches: Multiplicity of solutions. International Journal of Computer Vision, 4, 225–246.

Grayson, D. R., & Stillman, M. E. (2002). Macaulay2, a software system for research in algebraic geometry. http://www.math.uiuc.edu/Macaulay2/.

Hartley, R., & Zisserman, A. (2003). Multiple view geometry in computer vision. Cambridge: Cambridge University Press.

Horn, B. (1990). Relative orientation. International Journal of Computer Vision, 4, 59–78.

Huang, T., & Faugeras, O. (1989). Some properties of the \(E\)-matrix in two-view motion estimation. IEEE Transactions on Pattern Analysis and Machine Intelligence, 11, 1310–1312.

Kasten, Y., Geifman, A., Galun, M., & Basri, R. (2019a). Algebraic characterization of essential matrices and their averaging in multiview settings. In Proceedings of the IEEE international conference on computer vision (pp. 5895–5903).

Kasten, Y., Geifman, A., Galun, M., & Basri, R. (2019b). Gpsfm: Global projective sfm using algebraic constraints on multi-view fundamental matrices. In Proceedings of the IEEE conference on computer vision and pattern recognition (pp. 3264–3272).

Kileel, J. (2017). Minimal problems for the calibrated trifocal variety. SIAM Journal on Applied Algebra and Geometry, 1(1), 575–598.

Kukelova, Z., & Pajdla, T. (2007). Two minimal problems for cameras with radial distortion. In 2007 IEEE 11th international conference on computer vision (pp. 1–8). IEEE.

Longuet-Higgins, H. (1981). A computer algorithm for reconstructing a scene from two projections. Nature, 293(5828), 133.

Martyushev, E. (2017). On some properties of calibrated trifocal tensors. Journal of Mathematical Imaging and Vision, 58(2), 321–332.

Maybank, S. (1993). Theory of reconstruction from image motion. Berlin: Springer.

Nistér, D. (2004). An efficient solution to the five-point relative pose problem. IEEE Transactions on Pattern Analysis and Machine Intelligence, 26(6), 756–770.

Sengupta, S., Amir, T., Galun, M., Goldstein, T., Jacobs, D., Singer, A., & Basri, R. (2017). A new rank constraint on multi-view fundamental matrices, and its application to camera location recovery. In Proceedings of the IEEE conference on computer vision and pattern recognition (pp. 4798–4806).

Spetsakis, M., & Aloimonos, J. (1990). Structure from motion using line correspondences. International Journal of Computer Vision, 4, 171–183.

Stewénius, H., Engels, C., & Nistér, D. (2006). Recent developments on direct relative orientation. ISPRS Journal of Photogrammetry and Remote Sensing, 60(4), 284–294.

Stewénius, H., Nistér, D., Kahl, F., & Schaffalitzky, F. (2008). A minimal solution for relative pose with unknown focal length. Image and Vision Computing, 26(7), 871–877.

Weng, J., Huang, T., & Ahuja, N. (1992). Motion and structure from line correspondences: Closed-form solution, uniqueness, and optimization. IEEE Transactions on Pattern Analysis & Machine Intelligence, 14, 318–336.

Author information

Authors and Affiliations

Corresponding author

Additional information

Communicated by M. Hebert.

Publisher's Note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

The work was supported by Act 211 Government of the Russian Federation, Contract No. 02.A03.21.0011.

Appendix

Appendix

We collect here several technical lemmas that we used in the proof of Theorem 5.

Recall that the radical of an ideal J, denoted \(\sqrt{J}\), is given by the set of polynomials which have a power belonging to J: OR to the ideal J:

It is known that \(\sqrt{J}\) is an ideal and the affine varieties of J and \(\sqrt{J}\) coincide. The following lemma gives a convenient tool to check whether a given polynomial is in the radical or not.

Lemma 1

(Cox et al. 2007) Let \(J = \langle p_1, \ldots , p_s \rangle \subset {\mathbb {C}}[\xi _1, \ldots , \xi _n]\) be an ideal. Then a polynomial \(p \in \sqrt{J}\) if and only if \(1 \in {{\widetilde{J}}} = \langle p_1, \ldots , p_s, 1 - \tau p \rangle \subset {\mathbb {C}}[\xi _1, \ldots , \xi _n, \tau ]\).

By Lemma 1, a polynomial \(p \in \sqrt{J}\) if and only if the (reduced) Gröbner basis of \({{\widetilde{J}}}\) is \(\{1\}\). In the proof of Theorem 5, we used the computer algebra system Macaulay2 (Grayson and Stillman 2002) to compute the Gröbner bases. The computation time did not exceed 3 seconds per basis.

Lemma 2

Let essential matrices \(E_{12}\), \(E_{23}\), \(E_{31}\) be represented in form \(E_{ij} = [b_{ij}]_\times R_{ij}\). If the matrices \(R_{ij}\) and vectors \(b_{ij}\) are constrained by

then the triplet \(\{E_{12}, E_{23}, E_{31}\}\) is compatible.

Proof

Let \(R_{ij}\) and \(b_{ij}\) satisfy Eqs. (64)–(65). Then Eq. (13) has the following possible solution for \(R_i\) and \(b_i\):

It follows that the triplet \(\{E_{12}, E_{23}, E_{31}\}\) is compatible. Lemma 2 is proved. \(\square \)

Lemma 3

The following triplet of essential matrices with collinear epipoles is compatible:

where \(\epsilon _i = {\pm } 1\), \(s = \begin{bmatrix} 0&\delta&1 \end{bmatrix}^\top \), and \(\delta \) is an arbitrary parameter.

Proof

We denote \(R_0 = \begin{bmatrix} -1 &{} 0 &{} 0 \\ 0 &{} -\cos \psi _0 &{} \sin \psi _0 \\ 0 &{} \sin \psi _0 &{} \cos \psi _0 \end{bmatrix}\), where \(\cos \psi _0 = \frac{1 - \delta ^2}{1 + \delta ^2}\) and \(\sin \psi _0 = \frac{2\delta }{1 + \delta ^2}\). The essential matrices from triplet (67) can be written in form \(E_{ij} = [b_{ij}]_\times R_{ij}\), where matrices \(R_{ij}\) and vectors \(b_{ij}\) are defined as follows

It is straightforward to verify that constraints (64)–(65) hold. By Lemma 2, triplet (67) is compatible. Lemma 3 is proved. \(\square \)

Lemma 4

The following triplets of essential matrices are compatible:

-

1.

$$\begin{aligned} E_{12}= & {} \begin{bmatrix} 0 &{} -\gamma _1 &{} \beta _1 \\ \gamma _1 &{} 0 &{} -\alpha _1 \\ -\beta _1 &{} \alpha _1 &{} 0 \end{bmatrix},\quad E_{23} = \begin{bmatrix} 0 &{} -\gamma _2 &{} \beta _2 \\ \gamma _2 &{} 0 &{} \alpha _1 \\ -\beta _2 &{} -\alpha _1 &{} 0 \end{bmatrix},\nonumber \\ E_{31}= & {} \begin{bmatrix}0 &{} \gamma _1 + \gamma _2 &{} -\beta _1 - \beta _2 \\ -\gamma _1 - \gamma _2 &{} 0 &{} 0 \\ \beta _1 + \beta _2 &{} 0 &{} 0 \end{bmatrix}; \end{aligned}$$(69)

-

2.

$$\begin{aligned} E_{12}= & {} \begin{bmatrix} 0 &{} 0 &{} 0 \\ 0 &{} 0 &{} -\alpha _1 \\ 0 &{} \alpha _1 &{} 0 \end{bmatrix},\,\nonumber \\ E_{23}= & {} \begin{bmatrix} 0 &{} 0 &{} \beta _2 \\ 0 &{} 0 &{} -\alpha _1 \\ -\beta _2 &{} \alpha _1 &{} 0 \end{bmatrix},\, E_{31} = \begin{bmatrix} 0 &{} 0 &{} \beta _2 \\ 0 &{} 0 &{} 0 \\ \beta _2 &{} 0 &{} 0 \end{bmatrix}; \end{aligned}$$(70)

-

3.

$$\begin{aligned} E_{12}= & {} \begin{bmatrix} 0 &{} 0 &{} \beta _1 \\ 0 &{} 0 &{} -\alpha _1 \\ -\beta _1 &{} \alpha _1 &{} 0 \end{bmatrix},\, E_{23} = \begin{bmatrix} 0 &{} 0 &{} 0 \\ 0 &{} 0 &{} -\alpha _1 \\ 0 &{} \alpha _1 &{} 0 \end{bmatrix},\, \nonumber \\ E_{31}= & {} \begin{bmatrix} 0 &{} 0 &{} -\beta _1 \\ 0 &{} 0 &{} 0 \\ -\beta _1 &{} 0 &{} 0 \end{bmatrix}; \end{aligned}$$(71)

-

4.

$$\begin{aligned} E_{12}= & {} \begin{bmatrix} 0 &{} 0 &{} \beta _1 \\ 0 &{} 0 &{} -\alpha _1 \\ -\beta _1 &{} \alpha _1 &{} 0 \end{bmatrix},\quad E_{23} = \begin{bmatrix} 0 &{} 0 &{} -\frac{\alpha _1^2}{\beta _1} \\ 0 &{} 0 &{} -\alpha _1 \\ \frac{\alpha _1^2}{\beta _1} &{} \alpha _1 &{} 0 \end{bmatrix},\nonumber \\ E_{31}= & {} \begin{bmatrix} 0 &{} 0 &{} -\frac{\alpha _1^2 + \beta _1^2}{\beta _1} \\ 0 &{} 0 &{} 0 \\ -\frac{\alpha _1^2 + \beta _1^2}{\beta _1} &{} 0 &{} 0 \end{bmatrix}; \end{aligned}$$(72)

-

5.

$$\begin{aligned} E_{12}&{=}&\begin{bmatrix} 0 &{} -\gamma _2 - \gamma _3 &{} 0 \\ \gamma _2 + \gamma _3 &{} 0 &{} 0 \\ 0 &{} 0 &{} 0 \end{bmatrix},\quad E_{23}{=} \begin{bmatrix} 0 &{} -\gamma _2 &{} \beta _2 \\ \gamma _2 &{} 0 &{} 0 \\ -\beta _2 &{} 0 &{} 0 \end{bmatrix},\nonumber \\ E_{31}&{=}&\begin{bmatrix} 0 &{} \gamma _3 &{} -\beta _2 \\ -\gamma _3 &{} 0 &{} 0 \\ {-}\beta _2 &{} 0 &{} 0 \end{bmatrix}; \end{aligned}$$(73)

-

6.

$$\begin{aligned} E_{12}= & {} \begin{bmatrix} 0 &{} -\gamma _1 &{} \beta _1 \\ \gamma _1 &{} 0 &{} 0 \\ -\beta _1 &{} 0 &{} 0 \end{bmatrix},\quad E_{23} = \begin{bmatrix} 0 &{} -\gamma _1 - \gamma _3 &{} 0 \\ \gamma _1 + \gamma _3 &{} 0 &{} 0 \\ 0 &{} 0 &{} 0 \end{bmatrix},\nonumber \\ E_{31}= & {} \begin{bmatrix} 0 &{} \gamma _3 &{} \beta _1 \\ -\gamma _3 &{} 0 &{} 0 \\ \beta _1 &{} 0 &{} 0 \end{bmatrix}; \end{aligned}$$(74)

-

7.

$$\begin{aligned} E_{12}= & {} \begin{bmatrix} 0 &{} -\gamma _1 &{} \beta _1 \\ \gamma _1 &{} 0 &{} 0 \\ -\beta _1 &{} 0 &{} 0 \end{bmatrix},\quad E_{23} = \begin{bmatrix} 0 &{} -\gamma _2 &{} \beta _2 \\ \gamma _2 &{} 0 &{} 0 \\ -\beta _2 &{} 0 &{} 0 \end{bmatrix},\nonumber \\ E_{31}= & {} \begin{bmatrix} 0 &{} \gamma _3 &{} \beta _3 \\ -\gamma _3 &{} 0 &{} 0 \\ \beta _3 &{} 0 &{} 0 \end{bmatrix}, \end{aligned}$$(75)

where

$$\begin{aligned} \begin{aligned} \gamma _1&= \delta \beta _1(\beta _1 + \beta _2 - \beta _3),\\ \gamma _2&= \delta \beta _2(\beta _1 + \beta _2 + \beta _3),\\ \gamma _3&= \delta \beta _3(-\beta _1 + \beta _2 + \beta _3), \end{aligned} \end{aligned}$$(76)and parameter \(\delta \) is subject to

$$\begin{aligned} \delta (\gamma _1 + \gamma _2 + \gamma _3) = -1. \end{aligned}$$(77)

Proof

Triplets (69)–(71), (73), and (74) are compatible by definition as the corresponding essential matrices can be represented in form (13). Namely,

-

1.

for triplet (69):

$$\begin{aligned} E_{12}= & {} I [b_1 - 0]_\times I,\quad E_{23} = I [0 - b_3]_\times I,\nonumber \\ E_{31}= & {} I[b_3 - b_1]_\times I, \end{aligned}$$(78)where \(b_1 = \begin{bmatrix} \alpha _1&\beta _1&\gamma _1 \end{bmatrix}^\top \), \(b_3 = \begin{bmatrix} \alpha _1&-\beta _2&-\gamma _2 \end{bmatrix}^\top \);

-

2.

for triplet (70):

$$\begin{aligned} E_{12}= & {} D_1 [0 - b_2]_\times I,\quad E_{23} = I [b_2 - b_3]_\times I,\nonumber \\ E_{31}= & {} I [b_3 - 0]_\times D_1, \end{aligned}$$(79)where \(D_1 = {{\,\mathrm{diag}\,}}(1, -1, -1)\), \(b_2 = \begin{bmatrix} \alpha _1&0&0 \end{bmatrix}^\top \), \(b_3 = \begin{bmatrix} 0&-\beta _2&0 \end{bmatrix}^\top \);

-

3.

for triplet (71):

$$\begin{aligned} E_{12}= & {} I [b_1 - b_2]_\times I,\quad E_{23} = I [b_2 - 0]_\times D_1,\nonumber \\ E_{31}= & {} D_1 [0 - b_1]_\times I, \end{aligned}$$(80)where \(b_1 = \begin{bmatrix} 0&\beta _1&0 \end{bmatrix}^\top \), \(b_2 = \begin{bmatrix} -\alpha _1&0&0 \end{bmatrix}^\top \);

-

4.

for triplet (73):

$$\begin{aligned}&E_{12} = D_3 [b_1 - b_2]_\times I,\quad E_{23} = I [b_2 - 0]_\times I,\quad \nonumber \\&E_{31} = I [0 - b_1]_\times D_3, \end{aligned}$$(81)where \(D_3 = {{\,\mathrm{diag}\,}}(-1, -1, 1)\), \(b_1 = \begin{bmatrix} 0&\beta _2&-\gamma _3 \end{bmatrix}^\top \), \(b_2 = \begin{bmatrix} 0&\beta _2&\gamma _2 \end{bmatrix}^\top \);

-

5.

for triplet (74):

$$\begin{aligned} E_{12}= & {} I [0 - b_2]_\times I,\quad E_{23} = I [b_2 - b_3]_\times D_3,\nonumber \\ E_{31}= & {} D_3 [b_3 - 0]_\times I, \end{aligned}$$(82)where \(b_2 = \begin{bmatrix} 0&-\beta _1&-\gamma _1 \end{bmatrix}^\top \), \(b_3 = \begin{bmatrix} 0&-\beta _1&\gamma _3 \end{bmatrix}^\top \).

Further, let

The essential matrices from triplets (72) and (75) admit the representation \(E_{ij} = [b_{ij}]_\times R_{ij}\), where the matrices \(R_{ij}\) and vectors \(b_{ij}\) are defined below in (84) and (85) respectively:

-

1.

$$\begin{aligned} b_{12}&= \begin{bmatrix} -\alpha _1 \\ -\beta _1 \\ 0 \end{bmatrix},&\quad R_{12}&= \begin{bmatrix} \cos \varphi _1 &{} \sin \varphi _1 &{} 0 \\ \sin \varphi _1 &{} -\cos \varphi _1 &{} 0 \\ 0 &{} 0 &{} -1 \end{bmatrix},\nonumber \\ b_{23}&= \begin{bmatrix} -\alpha _1 \\ \frac{\alpha _1^2}{\beta _1}\nonumber \\ 0 \end{bmatrix},&\quad R_{23}&= \begin{bmatrix} -\cos \varphi _1 &{} -\sin \varphi _1 &{} 0 \\ -\sin \varphi _1 &{} \cos \varphi _1 &{} 0 \\ 0 &{} 0 &{} -1 \end{bmatrix},\\ b_{31}&= \begin{bmatrix} 0 \\ -\frac{\alpha _1^2 + \beta _1^2}{\beta _1} \\ 0 \end{bmatrix},&\quad R_{31}&= \begin{bmatrix} -1 &{} 0 &{} 0 \\ 0 &{} -1 &{} 0 \\ 0 &{} 0 &{} 1 \end{bmatrix}. \end{aligned}$$(84)

-

2.

$$\begin{aligned} b_{12}&= -\begin{bmatrix} 0 \\ \beta _1 \\ \gamma _1 \end{bmatrix},&\quad R_{12}&= \begin{bmatrix} -1 &{} 0 &{} 0 \\ 0 &{} \cos \psi _1 &{} \sin \psi _1 \\ 0 &{} \sin \psi _1 &{} -\cos \psi _1 \end{bmatrix},\nonumber \\ b_{23}&= -\begin{bmatrix} 0 \\ \beta _2 \\ \gamma _2 \end{bmatrix},&\quad R_{23}&= \begin{bmatrix} -1 &{} 0 &{} 0 \\ 0 &{} \cos \psi _2 &{} \sin \psi _2 \\ 0 &{} \sin \psi _2 &{} -\cos \psi _2 \end{bmatrix},\nonumber \\ b_{31}&= -\begin{bmatrix} 0 \\ \beta _3 \\ \gamma _3 \end{bmatrix},&\quad R_{31}&= \begin{bmatrix} 1 &{} 0 &{} 0 \\ 0 &{} -\cos \psi _3 &{} \sin \psi _3 \\ 0 &{} -\sin \psi _3 &{} -\cos \psi _3 \end{bmatrix}. \end{aligned}$$(85)

It is straightforward to verify that constraints (64)–(65) hold for both cases. By Lemma 2, triplets (72) and (75) are compatible. Lemma 4 is proved. \(\square \)

Lemma 5

The following triplets of essential matrices are compatible provided that \(\lambda ^2 + \mu ^2 = 1\):

-

1.

$$\begin{aligned} E_{12}= & {} \beta _1\begin{bmatrix} 0 &{} 0 &{} 1 \\ 0 &{} 0 &{} -\frac{\lambda - 1}{\mu } \\ -1 &{} \frac{\lambda - 1}{\mu } &{} 0 \end{bmatrix},\nonumber \\&E_{23} = \begin{bmatrix} 0 &{} 0 &{} \beta _1 + \beta _3 \\ 0 &{} 0 &{} -\beta _1\frac{\lambda - 1}{\mu } \\ -\beta _1 - \beta _3 &{} \beta _1\frac{\lambda - 1}{\mu } &{} 0 \end{bmatrix},\nonumber \\ E_{31}= & {} \beta _3\begin{bmatrix} 0 &{} 0 &{} 1 \\ 0 &{} 0 &{} 0 \\ -\lambda &{} -\mu &{} 0 \end{bmatrix}; \end{aligned}$$(86)

-

2.

$$\begin{aligned} E_{12}= & {} \begin{bmatrix} 0 &{} 0 &{} \beta _2 + \beta _3\lambda \\ 0 &{} 0 &{} -\alpha _1 \\ -\beta _2 - \beta _3\lambda &{} \alpha _1 &{} 0 \end{bmatrix},\nonumber \\&E_{23} = \beta _2\begin{bmatrix} 0 &{} 0 &{} 1 \\ 0 &{} 0 &{} -\frac{\lambda - 1}{\mu } \\ -1 &{} \frac{\lambda - 1}{\mu } &{} 0 \end{bmatrix},\nonumber \\ E_{31}= & {} \beta _3\begin{bmatrix} 0 &{} 0 &{} 1 \\ 0 &{} 0 &{} 0 \\ -\lambda &{} -\mu &{} 0 \end{bmatrix}, \end{aligned}$$(87)

where \(\alpha _1 = (\beta _2 + \beta _3(\lambda + 1))\frac{\lambda - 1}{\mu }\);

-

3.

$$\begin{aligned} E_{12}= & {} \begin{bmatrix} 0 &{} 0 &{} \beta _1 \\ 0 &{} 0 &{} -\alpha _1 \\ -\beta _1 &{} \alpha _1 &{} 0 \end{bmatrix},\nonumber \\&E_{23} = \begin{bmatrix} 0 &{} 0 &{} \beta _2 \\ 0 &{} 0 &{} \alpha _1\lambda + \beta _1\mu \\ -\beta _2 &{} -\alpha _1\lambda - \beta _1\mu &{} 0 \end{bmatrix},\nonumber \\ E_{31}= & {} \beta _3\begin{bmatrix} 0 &{} 0 &{} 1 \\ 0 &{} 0 &{} 0 \\ -\lambda &{} -\mu &{} 0 \end{bmatrix}, \end{aligned}$$(88)

where

$$\begin{aligned} \begin{aligned} \beta _2&= -\frac{(\alpha _1(\lambda - 1) + \beta _1\mu )(\alpha _1\lambda + \beta _1\mu )}{\alpha _1\mu - \beta _1(\lambda - 1)},\\ \beta _3&= \frac{(\alpha _1^2 + \beta _1^2)(\lambda - 1)}{\alpha _1\mu - \beta _1(\lambda - 1)}. \end{aligned} \end{aligned}$$(89)

Proof

Throughout the proof, \(\cos \varphi _i = \frac{\alpha _i^2 - \beta _i^2}{\alpha _i^2 + \beta _i^2}\) and \(\sin \varphi _i = \frac{2\alpha _i\beta _i}{\alpha _i^2 + \beta _i^2}\). The essential matrices from triplets (86), (87), and (88) can be written in form \(E_{ij} = [b_{ij}]_\times R_{ij}\), where the matrices \(R_{ij}\) and vectors \(b_{ij}\) are defined below in (90), (91), and (92) respectively:

-

1.

$$\begin{aligned} b_{12}&= -\begin{bmatrix} \alpha _1 \\ \beta _1 \\ 0 \end{bmatrix},&\quad R_{12}&= \begin{bmatrix} \cos \varphi _1 &{} \sin \varphi _1 &{} 0 \\ \sin \varphi _1 &{} -\cos \varphi _1 &{} 0 \\ 0 &{} 0 &{} -1 \end{bmatrix},\nonumber \\ b_{23}&= \begin{bmatrix} \alpha _1 \\ \beta _1 + \beta _3 \\ 0 \end{bmatrix},&\quad R_{23}&= I,\nonumber \\ b_{31}&= -\begin{bmatrix} 0 \\ \beta _3 \\ 0 \end{bmatrix},&\quad R_{31}&= R_{12}, \end{aligned}$$(90)

where \(\alpha _1 = \beta _1\frac{\lambda - 1}{\mu }\);

-

2.

$$\begin{aligned} b_{12}&= \begin{bmatrix} \alpha _1 \\ \beta _2 + \beta _3\lambda \\ 0 \end{bmatrix},&\quad R_{12}&= I,\nonumber \\ b_{23}&= -\begin{bmatrix} \alpha _2 \\ \beta _2 \\ 0 \end{bmatrix},&\quad R_{23}&= \begin{bmatrix} \cos \varphi _2 &{} \sin \varphi _2 &{} 0 \\ \sin \varphi _2 &{} -\cos \varphi _2 &{} 0 \\ 0 &{} 0 &{} -1 \end{bmatrix},\nonumber \\ b_{31}&= -\begin{bmatrix} 0 \\ \beta _3 \\ 0 \end{bmatrix},&\quad R_{31}&= R_{23}, \end{aligned}$$(91)

where \(\alpha _1 = (\beta _2 + \beta _3(\lambda + 1))\frac{\lambda - 1}{\mu }\), \(\alpha _2 = \beta _2\frac{\lambda - 1}{\mu }\);

-

3.

$$\begin{aligned} b_{12}&= -\begin{bmatrix} \alpha _1 \\ \beta _1 \\ 0 \end{bmatrix},&\quad R_{12}&= \begin{bmatrix} \cos \varphi _1 &{} \sin \varphi _1 &{} 0 \\ \sin \varphi _1 &{} -\cos \varphi _1 &{} 0 \\ 0 &{} 0 &{} -1 \end{bmatrix},\nonumber \\ b_{23}&= -\begin{bmatrix} \alpha _2 \\ \beta _2 \\ 0 \end{bmatrix},&\quad R_{23}&= \begin{bmatrix} \cos \varphi _2 &{} \sin \varphi _2 &{} 0 \\ \sin \varphi _2 &{} -\cos \varphi _2 &{} 0 \\ 0 &{} 0 &{} -1 \end{bmatrix},\nonumber \\ b_{31}&= \begin{bmatrix} 0 \\ \beta _3 \\ 0 \end{bmatrix},&\quad R_{31}&= (R_{12}R_{23})^\top = \begin{bmatrix} \lambda &{} \mu &{} 0 \\ -\mu &{} \lambda &{} 0 \\ 0 &{} 0 &{} 1 \end{bmatrix}, \end{aligned}$$(92)

where \(\beta _2\) and \(\beta _3\) are defined in (89).

By direct computation, constraints (64)–(65) hold for all three cases. By Lemma 2, triplets (86)–(88) are compatible. Lemma 5 is proved. \(\square \)

Rights and permissions

About this article

Cite this article

Martyushev, E.V. Necessary and Sufficient Polynomial Constraints on Compatible Triplets of Essential Matrices. Int J Comput Vis 128, 2781–2793 (2020). https://doi.org/10.1007/s11263-020-01330-1

Received:

Accepted:

Published:

Issue Date:

DOI: https://doi.org/10.1007/s11263-020-01330-1