Abstract

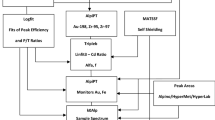

In this work, part of a larger effort to develop software that automates instrumental neutron activation analysis (INAA) calculations, the elemental concentration in a sample was calculated using either a set containing only the gamma-ray peaks recommended in the literature or a set containing all identified peaks. The results for each element were reduced using five tools: the usual unweighted and weighted means, plus the limitation of relative statistical weight, Normalized Residuals and Rajeval techniques. The reduced values were compared to the certified value for each element, allowing a discussion of the performance of each statistical tool and of the choice of peaks.

Introduction

When analyzing the quantitative results of an experiment in which a certain variable has been determined more than once, determining the most reliable value for this variable, with the lowest realistic uncertainty, is often an issue. The most common techniques for obtaining this estimate are the unweighted average, the σ⁻²-weighted average (where σ is the uncertainty of each measurement), or even the choice of the “best” measurement (as judged by the experimentalist); each has its strengths and weaknesses. Above all, the two common averages cannot account for the possibility that one value was influenced by unexpected factors and ended up far from the expected value; such outliers may affect both averages and distort the final value. Several techniques have been proposed for identifying outliers [1]; others have suggested averaging procedures, also called robust averages, that identify and deal with these outliers and should lead to more reliable estimates of the measured quantity [2].

Instrumental neutron activation analysis (INAA) is an analytical technique in which the elemental concentration of a sample is determined by measuring the gamma-ray activity induced after irradiation in a neutron field and comparing it to the activity induced in one (or, usually, more) well-known standards. In such a measurement, one can frequently determine a given element’s concentration using more than one gamma-ray transition and more than one comparator, leading to several estimates for the same quantity, the concentration of that particular element. Determining the single, final estimate for the concentration of that element in the sample, and its uncertainty, is then a typical case of the process described in the previous paragraph and could profit from a more refined data analysis. The choice of transitions itself has been thoroughly studied, and the International Atomic Energy Agency (IAEA) proposed the use of either a well-defined set of transitions or of a single, “best”, transition for each element to be determined [3]. In the case of single-comparator NAA, Blaauw [4] proposed a holistic approach in which the whole expected spectrum of each radionuclide found in the measurement is used so that interfering transitions are properly treated, but this approach requires very good knowledge of the detector's efficiency calibration curve and is difficult to apply to comparative INAA.

Many authors have proposed the use of robust methods in the analysis of NAA results, focusing on the analysis of replicate samples [5], on interlaboratory data and intercomparisons [6], or on the interpretation of the results obtained for a group of non-identical samples [7–9]. The essential question of determining a single best estimate for a single comparative NAA measurement in which several transitions and comparators are present, though, has not been treated; therefore, the advantages of using a robust estimator cannot be properly assessed from the available literature.

The objective of this work is, then, to determine how automated software can deliver the most precise and dependable result for the concentration, so that it can be used safely even by a less experienced experimentalist.

Robust averages

When dealing with a set of discrepant data (roughly speaking, data where a simple average leads to a large χ²), some steps must be taken to ensure that the final result and its uncertainty are a good estimate of the measured value and are not influenced by unexpected outliers. Several techniques have been developed specifically for this task; for instance, the evaluators of the Nuclear Data Sheets recommend the Limitation of relative statistical weight (LRSW) method [10], in which steps are taken to prevent a single datapoint from having more than 50 % of the total weight in a weighted average. Other, more refined techniques are proposed in [2, 11]: the Normalized Residuals technique (NR) and the Rajeval technique (RT), which try to locate possible outliers and prevent them from having a large influence on the outcome of the calculation. All three techniques are described in detail in [2], and the next subsections give only a rough outline of each.
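As a point of reference for the robust techniques, the two conventional averages and the χ² check for discrepant data can be sketched as below. This is a minimal illustration, not the code used in this work; the function names are hypothetical, and the uncertainty returned for the weighted mean is the usual internal one, (Σσ⁻²)^(−1/2).

```python
import math
from statistics import mean, stdev


def unweighted_mean(values):
    """Arithmetic mean and standard deviation of the mean."""
    n = len(values)
    return mean(values), stdev(values) / math.sqrt(n)


def weighted_mean(values, sigmas):
    """sigma^-2-weighted mean, its internal uncertainty and reduced chi-square."""
    weights = [1.0 / s**2 for s in sigmas]
    total = sum(weights)
    xw = sum(w * x for w, x in zip(weights, values)) / total
    chi2 = sum(w * (x - xw) ** 2 for w, x in zip(weights, values))
    # A reduced chi-square well above 1 hints at a discrepant dataset.
    return xw, 1.0 / math.sqrt(total), chi2 / (len(values) - 1)
```

A reduced χ² much larger than 1 is the rough signal that the dataset is discrepant and that a plain average may be misleading.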

Limitation of relative statistical weight

In this technique, a σ⁻²-weighted average is performed, but if a single point has more than 50 % of the total weight, its uncertainty is raised so that its weight equals 50 %. The aim is simply to prevent a single point from having too much influence on the final result.
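The weight-capping step outlined above can be sketched as follows. This is only an illustration of that single step under the stated 50 % limit; the function name and the `max_fraction` parameter are hypothetical, and the complete procedure is the one described in [2].

```python
import math


def lrsw_mean(values, sigmas, max_fraction=0.5):
    """Weighted mean in which no single point carries more than max_fraction of the weight."""
    weights = [1.0 / s**2 for s in sigmas]
    # Index of the point with the largest statistical weight.
    k = max(range(len(weights)), key=weights.__getitem__)
    others = sum(w for i, w in enumerate(weights) if i != k)
    if others > 0 and weights[k] > max_fraction * (weights[k] + others):
        # Lower w_k (i.e. raise sigma_k) so that w_k / (w_k + others) == max_fraction.
        weights[k] = others * max_fraction / (1.0 - max_fraction)
    total = sum(weights)
    xw = sum(w * x for w, x in zip(weights, values)) / total
    adjusted_sigmas = [1.0 / math.sqrt(w) for w in weights]
    return xw, 1.0 / math.sqrt(total), adjusted_sigmas
```

For example, with values 10, 12 and 11 and uncertainties 0.1, 1 and 1, the first point initially holds about 98 % of the weight; after capping, its weight equals the sum of the other two.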

Normalized residuals

In this case, a σ⁻²-weighted average is also performed; the normalized residual (i.e., the residual divided by its propagated uncertainty) is then calculated for each point and, for each point where this residual is larger than a critical value (determined by a 99 % probability interval), the uncertainty of that point is enlarged accordingly and the average is recalculated. This procedure is repeated until no residual is larger than the critical value. The aim is to ensure that values far from the average (i.e., outliers) have little influence on the final outcome of the calculation.
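A minimal sketch of this iteration is given below, under several assumptions that are not stated in the text. The default critical value, R₀ = (1.8 ln N + 2.6)^(1/2), is the expression commonly quoted in the NR literature and should be checked against [2] (it can be overridden through the hypothetical `r0` parameter). Only the point with the largest residual is adjusted in each pass, and its uncertainty is enlarged to the value that brings its residual exactly to R₀.

```python
import math


def normalized_residuals_mean(values, sigmas, r0=None, max_iter=100):
    """Weighted mean with uncertainties enlarged until all normalized residuals are <= r0."""
    n = len(values)
    if n < 3:
        raise ValueError("at least three points are needed")
    sig = list(sigmas)
    if r0 is None:
        # Assumed critical value (about 1 % probability); verify against [2].
        r0 = math.sqrt(1.8 * math.log(n) + 2.6)
    for _ in range(max_iter):
        w = [1.0 / s**2 for s in sig]
        total = sum(w)
        xw = sum(wi * xi for wi, xi in zip(w, values)) / total
        # Residual divided by its propagated uncertainty, sqrt(sigma_i^2 - 1/total).
        resid = [(x - xw) / math.sqrt(s**2 - 1.0 / total) for x, s in zip(values, sig)]
        k = max(range(n), key=lambda i: abs(resid[i]))
        if abs(resid[k]) <= r0 * (1.0 + 1e-9):
            break
        # Weighted mean of the remaining points and its weight.
        w_rest = sum(wi for i, wi in enumerate(w) if i != k)
        m_rest = sum(wi * xi for i, (wi, xi) in enumerate(zip(w, values)) if i != k) / w_rest
        d = values[k] - m_rest
        # Enlarged sigma_k for which the normalized residual of point k equals r0.
        sig[k] = math.sqrt(d * d / r0**2 - 1.0 / w_rest)
    w = [1.0 / s**2 for s in sig]
    total = sum(w)
    xw = sum(wi * xi for wi, xi in zip(w, values)) / total
    return xw, 1.0 / math.sqrt(total), sig
```

The closed-form update follows from writing the residual of point k relative to the weighted mean of the other points, d / (σₖ² + 1/W′)^(1/2), where W′ is the total weight of the other points, and setting it equal to R₀.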

Rajeval technique

This is a more refined variant of the NR. Here the points are first subjected to a population test in order to reject gross outliers; the remaining points are then subjected to an individual consistency test, in which central deviations (CD) are calculated from the probability integral values, and the points with a large deviation have their uncertainties enlarged. This, too, is an iterative procedure.
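The three stages can be sketched as below. The numerical criteria are assumptions recalled from the Rajeval literature rather than taken from this text, and should be checked against [2, 11]: a point is rejected when it lies more than 3 × 1.96 combined standard deviations from the unweighted mean of the other points, the critical central deviation is 0.5^(N/(N−1)), and an inconsistent point has the variance of the weighted mean added to its own variance. The function names are hypothetical.

```python
import math
from statistics import mean, stdev


def _normal_cdf(y):
    """Probability integral of the standard normal distribution."""
    return 0.5 * (1.0 + math.erf(y / math.sqrt(2.0)))


def rajeval_mean(values, sigmas, outlier_limit=3 * 1.96, max_iter=100):
    values, sigmas = list(values), list(sigmas)
    if len(values) < 3:
        raise ValueError("the technique needs at least three points")
    # Stage 1: population test against the unweighted mean of the other points.
    xs, ss = [], []
    for i, (x, s) in enumerate(zip(values, sigmas)):
        rest = values[:i] + values[i + 1:]
        s_rest = stdev(rest) / math.sqrt(len(rest))
        y = (x - mean(rest)) / math.sqrt(s**2 + s_rest**2)
        if abs(y) <= outlier_limit:
            xs.append(x)
            ss.append(s)
    n = len(xs)
    if n < 2:
        raise ValueError("too few points survive the population test")
    cd_crit = 0.5 ** (n / (n - 1))  # assumed critical central deviation

    def weighted(sig):
        w = [1.0 / s**2 for s in sig]
        total = sum(w)
        return sum(wi * xi for wi, xi in zip(w, xs)) / total, 1.0 / total

    # Stages 2 and 3: consistency test and enlargement of uncertainties.
    for _ in range(max_iter):
        xw, var_w = weighted(ss)
        bad = [i for i in range(n)
               if abs(_normal_cdf((xs[i] - xw) / math.sqrt(ss[i]**2 - var_w)) - 0.5) > cd_crit]
        if not bad:
            break
        for i in bad:
            ss[i] = math.sqrt(ss[i]**2 + var_w)
    xw, var_w = weighted(ss)
    return xw, math.sqrt(var_w), n
```

The function returns the adjusted weighted mean, its uncertainty, and the number of points that survived the population test.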

Experimental procedure

In order to test the usefulness and assess the performance of these statistical tools, as well as to analyze the use of a larger set of gamma transitions in the analysis of INAA results, 100 mg aliquots of three certified reference materials (CRMs) were irradiated together in the IEA-R1 reactor for 8 h under a thermal neutron flux of approximately 4 × 10¹² cm⁻² s⁻¹. These samples were then counted twice in a 20 % HPGe system: the first counting took place 7 days after the irradiation, to quantify radionuclides with half-lives ranging from several hours to a few days, and the second one 15 days after irradiation, for longer-lived radionuclides. In all cases the samples were counted for 1 h, with a source-detector distance of 9 cm in the first counting and of only a few mm in the second one. The spectra were processed using the in-house developed software VISPECT, which gives the peaks found in each spectrum and the count rate (cps, counts per second) for each, with its respective uncertainty. One of the CRMs (JB-1 Basalt) was used as an unknown sample, while the other two (GS-N Granite and BE-N Basalt) were used as comparators to determine the elemental composition of the unknown sample.

The usual procedure is to check for the recommended gamma-rays for each radionuclide, as defined in [3]. In order to verify the usefulness and reliability of using a larger set of gamma-rays, the spectra were also checked for other gamma-rays associated with the relevant decays, and two different datasets were analyzed: one with all the transitions found for each radionuclide and a second one using only the recommended transitions. The concentration for a given element was calculated individually for each transition/comparator pair, resulting in N different concentration values (points). The results for each of the two datasets were submitted to the same statistical analyses, and the results for each element were assessed both in terms of the relative uncertainty and of the z’-score obtained in the comparison with the certified values for the JB-1 material. Finally, for each element, the value with the lowest uncertainty observed using only the most recommended transition in [3] was also calculated. It must be stressed, though, that all three robust techniques are meaningful only when at least three values are available for a chemical element (the RT, in fact, cannot be applied at all to sets with fewer than three points); therefore, only the elements for which three or more points were available were included in the analysis.
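The calculation for one transition/comparator pair can be sketched as follows. This is the textbook comparative relation, not necessarily the exact expression used in this work, and it assumes the same counting geometry and counting time for sample and comparator, uncorrelated uncertainties, and negligible uncertainty in masses and times. All names and arguments are hypothetical; `t_sample` and `t_std` are the decay times from the end of irradiation to each counting, in the same unit as `half_life`.

```python
import math


def comparator_concentration(cps_sample, u_cps_sample, mass_sample,
                             cps_std, u_cps_std, mass_std,
                             conc_std, u_conc_std,
                             half_life=None, t_sample=0.0, t_std=0.0):
    """Concentration from one gamma-ray transition and one comparator."""
    decay = 1.0
    if half_life is not None:
        # Bring both count rates to the same reference time.
        lam = math.log(2.0) / half_life
        decay = math.exp(lam * (t_sample - t_std))
    conc = conc_std * (cps_sample / mass_sample) / (cps_std / mass_std) * decay
    # Relative uncertainties added in quadrature.
    rel = math.sqrt((u_cps_sample / cps_sample) ** 2
                    + (u_cps_std / cps_std) ** 2
                    + (u_conc_std / conc_std) ** 2)
    return conc, conc * rel
```

Calling this function once per transition and per comparator yields the N points that are then passed to the averaging tools.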

Results and discussion

The concentrations obtained for the 11 elements with three or more datapoints are presented in Table 1, together with the concentration value from the certificate (C) and the result from the single recommended transition (ST). N is the number of datapoints used in each group, and the column TrS indicates which transition set was used: the one recommended in [3] (R) or the set composed of all transitions identified (A). Where the only transitions identified were the ones recommended in [3], this value is absent. Table 2 shows the z’-scores obtained in the comparison of these results to the certified values, using the combined uncertainties.
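The z’-score used in this comparison can be sketched as below, assuming (the text does not spell this out) that the combined uncertainty is the quadrature sum of the uncertainty of the result and that of the certified value. The function name is hypothetical.

```python
import math


def z_prime(value, u_value, certified, u_certified):
    """Difference to the certified value in units of the combined uncertainty."""
    return (value - certified) / math.hypot(u_value, u_certified)


# A result is taken here as agreeing with the certificate when -3 < z' < 3.
def agrees(value, u_value, certified, u_certified, limit=3.0):
    return abs(z_prime(value, u_value, certified, u_certified)) < limit
```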

The comparison between the statistical tools tested shows that the LRSW and unweighted mean techniques were the only ones for which all values were in agreement with the certified ones within a 99.5 % interval (i.e., −3 < z’-score < 3), but at the cost of uncertainties that are sometimes of the same magnitude as the values themselves. The NR reached the best overall compromise between precision and accuracy, failing only for Tb when the complete dataset was used; its uncertainties were slightly larger than the ones obtained using a regular weighted mean, but its results always led to lower z’-scores. The RT led mostly to the same results as the NR, failing only when using all available transitions for Sm and Tb (in the latter case, the NR also failed); on the other hand, it led to significantly smaller uncertainties for Eu when using all available transitions and for Fe with either transition set. These results are a good indication that robust statistics can play an important role in this task, reducing the final uncertainty while avoiding the effect of interferences on the final result.

Regarding the choice of a transition set, these results show that the use of a single transition leads to significantly larger uncertainties and to results that are somewhat less reliable than those of the other methods (in the present experiment this was the case for Sm). On the other hand, the use of a larger dataset usually led to smaller uncertainties, but also to less reliable results: for Sm and Tb, for example, the results obtained using all available transitions were mostly far from the expected value, with z’-scores typically greater than 3.

Considering these results, it is clear that using the transition set recommended in [3] is the best choice, as increasing the number of transitions led to much less dependable results. As for the choice of a statistical tool, both the NR and the RT, when used with this transition set, delivered excellent, dependable results.

Conclusions

From this work it is clear that, at least for the elements analyzed, the best choice of transitions is the transition set recommended in [3], which leads to more reliable results without increasing the uncertainty too much. On the other hand, the use of a single transition led to larger uncertainties and, in one case, to a result that did not fully agree with the certified value. Regarding the statistical tools employed, the NR led to the best results in all cases; when using only the recommended gamma-ray set, though, the RT also led to reliable results, in some cases with a slightly smaller uncertainty. These results indicate that, in the development of software to automate the post-counting tasks in an INAA experiment, either the Normalized Residuals or the Rajeval averages would be good choices for delivering a single, final result for the concentration of a given element in a sample.

References

1. Oliveira PMS, Munita CS, Hazenfratz R (2010) Comparative study between three methods of outlying detection on experimental results. J Radioanal Nucl Chem 283:433–437

2. Rajput MU, Ali N, Hussain S, Mujahid SA, MacMahon D (2012) Beta decay of the fission product 125Sb and a new complete evaluation of absolute gamma ray transition intensities. Rad Phys Chem 81:370–378

3. Bode P (1990) Practical aspects of operating a neutron activation analysis laboratory. Tech Rep IAEA-TECDOC-564, International Atomic Energy Agency

4. Blaauw M (1994) The holistic analysis of gamma-ray spectra in instrumental neutron activation analysis. Nucl Instrum Methods A 353:269–271

5. Rock NMS (1988) Summary statistics in geochemistry: a study of the performance of robust estimates. Math Geol 20:243–275

6. Lister B (1986) Best estimates from interlaboratory data. Anal Chim Acta 186:325–329

7. Chueinta W, Hopke PK, Paatero P (2000) Investigation of sources of atmospheric aerosol at urban and suburban residential areas in Thailand by positive matrix factorization. Atmos Environ 34:3319–3329

8. Paatero P, Tapper U (1994) Positive matrix factorization: a non-negative factor model with optimal utilization of error estimates of data values. Environmetrics 5:111–126

9. Reimann C, Filzmoser P (2000) Normal and lognormal data distribution in geochemistry: death of a myth. Consequences for the statistical treatment of geochemical and environmental data. Environ Geol 39:1001–1014

10. Browne-Moreno E (2008) Joint ICTP-IAEA workshop on nuclear structure and decay data: theory and evaluation, ENSDF decay (decay data). Technical report, International Atomic Energy Agency

11. Rajput MU, MacMahon TD (1992) Techniques for evaluating discrepant data. Nucl Instrum Methods A 312:289–295

Cite this article

Zahn, G.S., Genezini, F.A., Secco, M. et al. Can robust statistics aid in the analysis of NAA results?. J Radioanal Nucl Chem 306, 607–610 (2015). https://doi.org/10.1007/s10967-015-4163-9

DOI: https://doi.org/10.1007/s10967-015-4163-9