Abstract

Independent Haar-unitary random matrices and independent Haar-orthogonal random matrices are known to be asymptotically liberating ensembles, and they give rise to asymptotic free independence when used for conjugation of constant matrices. G. Anderson and B. Farrell showed that a certain family of discrete random unitary matrices can actually be used to the same end. In this paper, we investigate fluctuation moments and higher-order moments induced on constant matrices by conjugation with asymptotically liberating ensembles. We show for the first time that the fluctuation moments associated with second-order free independence can be obtained from conjugation with an ensemble consisting of signed permutation matrices and the discrete Fourier transform matrix. We also determine fluctuation moments induced by various related ensembles where we do not get known expressions but others related to traffic free independence.


1 Introduction

1.1 Background

Random matrices are matrix-valued random variables that were first investigated in mathematical statistics [29] and then in nuclear physics [28]. Over the years, their study has evolved into a theory with applications to pure and applied sciences such as numerical analysis [6], analytic number theory [10], and wireless communications [24].

One of the main topics in random matrix theory is the study of limiting, or asymptotic, properties of random matrix ensembles. The term random matrix ensemble is used in the literature to refer to a sequence of random matrices \(\{X_{N}\}_{N=1}^{\infty }\), or a sequence of families of random matrices \(\{\{X_{N,i}\}_{i \in I}\}_{N=1}^{\infty }\), where the considered random matrices increase in size with respect to N. Their limiting properties are those arising from letting N go to infinity. Joint eigenvalue distributions, eigenvalue spacing, concentration inequalities, large deviation principles, maximal eigenvalues, and central limit theorems are some examples of limiting properties; for an introduction to these subjects, one can consult [2].

Free probability theory, introduced by D. Voiculescu in [25] in his research on von Neumann algebras, has played a key role in the study of random matrices when multiple ensembles need to be considered. A main notion from free probability is that of asymptotic free independence.

Definition 1

Let I be a non-empty set. Suppose \(\{X_{N,i}\}_{N=1}^{\infty }\) is a random matrix ensemble for each \(i\in I\) where each \(X_{N,i}\) is an N-by-N random matrix. We say that \(\{X_{N,i}\}_{N=1}^{\infty }\) with \(i\in I\) are asymptotically freely independent if the following two conditions hold:

- (AF.1) for each index \(i \in I \) and every integer \(m\ge 1\), the limit

  $$\begin{aligned} \displaystyle \lim _{N\rightarrow \infty } {\mathbb {E}}\left[ \textrm{tr} \left( {X_{N,i}^m} \right) \right] , \end{aligned}$$

  where \(\textrm{tr} \left( {\cdot } \right) \) denotes the normalized trace \(\frac{1}{N}\textrm{Tr} \left( {\cdot } \right) \), exists, and

- (AF.2) for all integers \(m\ge 1\), all indexes \(i_1, i_2, \ldots , i_m \in I\) satisfying \(i_{1} \ne i_{2}, i_{2} \ne i_{3}, \ldots , i_{m-2} \ne i_{m-1}, i_{m-1} \ne i_{m}\), and \(i_{m} \ne i_{1}\), and all polynomials \(\textrm{p}_{1}, \textrm{p}_{2}, \ldots ,\textrm{p}_{m}\) in the algebra \({\mathbb {C}}[\textrm{x}]\), we have

  $$\begin{aligned} \lim _{N\rightarrow \infty } {\mathbb {E}} \left[ {\textrm{tr} \left( {Y_{N,1} Y_{N,2} \cdots Y_{N,m}} \right) }\right] = 0 \end{aligned}$$

  where \(Y_{N,k} = \textrm{p}_{k} \left( X_{N,i_k} \right) - {\mathbb {E}} \left[ {\textrm{tr} \left( {\textrm{p}_{k} \left( X_{N,i_k} \right) } \right) }\right] I_{N}\).

The first connection between free probability and random matrices was established by D. Voiculescu when he showed in [26] that independent Gaussian unitary ensembles converge to free semicircular random variables, a result which generalizes Wigner’s semicircular law and entails the asymptotic free independence of independent Gaussian unitary ensembles. The list of random matrix ensembles exhibiting asymptotic free independence has been extended since then, and it now includes independent Wishart ensembles, independent Gaussian orthogonal ensembles, independent Haar-unitary distributed ensembles, and independent Haar-orthogonal distributed ensembles, among others. The monograph [27] and the book [20] are standard introductions to free probability, and the recent monograph [18] is an excellent source presenting multiple directions in which the relation between free probability and random matrices has been extended.

Another result due to D. Voiculescu in [26], and subsequently generalized by other authors, states that conjugation by independent Haar-unitary distributed random matrices gives rise to asymptotic free independence. More concretely, assume \(D_{N,i}\) is a self-adjoint N-by-N deterministic matrix for each index \(i \in I\) and each integer \(N\ge 1\) and suppose that

for all \(i \in I \) and \(m\ge 1\); the random matrix ensembles \(\{ D^{}_{N,i} \}_{N=1}^{\infty }\) with \(i \in I\) might or might not be asymptotically freely independent; however, if \(\{U_{N,i}\}_{i \in I}\) is a family of independent N-by-N Haar-unitary distributed random matrices for each \(N\ge 1\), then \(\{ U^{}_{N,i} D^{}_{N,i} U^{*}_{N,i} \}_{N=1}^{\infty }\) with \(i \in I\) are asymptotically freely independent. The same conclusion holds if each \(U_{N,i}\) is Haar-orthogonal distributed, see [13].
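The effect of Haar-unitary conjugation described above can be observed numerically. The following is a minimal sketch, not taken from the paper: the helper names `haar_unitary` and `ntr`, the matrix size, the seed, and the two diagonal test matrices are all assumptions made for illustration. The two diagonal matrices commute and are far from free, yet after conjugation the alternating centered moment \(\textrm{tr}(X_{N,1}X_{N,2}X_{N,1}X_{N,2})\) is close to the value 0 required by (AF.2).

```python
import numpy as np

def haar_unitary(n, rng):
    """Sample an n-by-n Haar unitary via QR of a complex Gaussian matrix."""
    z = (rng.standard_normal((n, n)) + 1j * rng.standard_normal((n, n))) / np.sqrt(2)
    q, r = np.linalg.qr(z)
    d = np.diagonal(r)
    # Multiply column j by the phase of r[j, j] so the law is exactly Haar.
    return q * (d / np.abs(d))

def ntr(a):
    """Normalized trace tr = Tr / N."""
    return np.trace(a) / a.shape[0]

rng = np.random.default_rng(0)  # fixed seed, chosen only for reproducibility
n = 400

# Two deterministic self-adjoint matrices of trace zero and norm one (illustrative choice).
d1 = np.diag(np.repeat([1.0, -1.0], n // 2))
d2 = np.diag(np.tile([1.0, -1.0], n // 2))

u1, u2 = haar_unitary(n, rng), haar_unitary(n, rng)
x1 = u1 @ d1 @ u1.conj().T
x2 = u2 @ d2 @ u2.conj().T

# Without conjugation the matrices commute and the moment equals 1.
unconjugated = ntr(d1 @ d2 @ d1 @ d2)
# After conjugation the alternating centered moment should be near 0.
mixed = ntr(x1 @ x2 @ x1 @ x2)
print(unconjugated, abs(mixed))
```

A single sample at a moderate size only suggests the limit; it is the expectation of the normalized trace that is asserted to converge.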

Aiming to capture all unitary random matrix ensembles that give rise to asymptotic free independence when used for conjugation, B. Farrell and G. Anderson introduced in [1] the notion of asymptotically liberating random matrix ensembles.

Definition 2

Suppose \( U_{N,i} \) is an N-by-N unitary random matrix for each index \(i \in I\) and each integer \(N\ge 1\). The unitary random matrix ensemble \(\left\{ \left\{ U_{N,i} \right\} _{i\in I}\right\} _{N=1}^{\infty }\) is asymptotically liberating if for all indexes \(i_{1},i_{2},\ldots ,i_{m} \in I\) with \(i_1 \ne i_2, i_2 \ne i_3, \ldots , i_{m-1}\ne i_m\), and \(i_m \ne i_1\) there exists a constant \(C > 0\) depending only on the indexes \(i_{1},i_{2},\ldots ,i_{m}\) such that

for all integers \(N \ge 1\) and all matrices \(A_{N,1}, A_{N,2}, \ldots , A_{N,m} \in \text {Mat}_N ({\mathbb {C}})\) each of trace zero.

It follows immediately from the above definition that asymptotically liberating ensembles give rise to asymptotic free independence when used for conjugation. Indeed, suppose \(\{ \{ U_{N,i} \}_{i\in I}\}_{N=1}^{\infty }\) is an asymptotically liberating ensemble and assume \(\{ D^{}_{N,i} \}_{N=1}^{\infty }\) with \(i \in I\) satisfy (1.1). Letting \(X_{N,i} = U^{}_{N,i} D^{}_{N,i} U^{*}_{N,i}\), (1.1) implies (AF.1) from Definition 1; moreover, if each \(Y_{N,k}\) is as in (AF.2) from Definition 1, then

where \(A_{N,k}\) denotes the matrix of trace zero \(\textrm{p}_{k} ( D^{}_{N,i_k}) - \textrm{tr} ( \textrm{p}_{k} ( D^{}_{N,i_k} )) I_{N} \), but (1.1) also implies that \(\sup _{N} \left\| {A^{}_{N,k}} \right\| < \infty \), and hence (AF.2) holds. As intended, independent Haar-unitary random matrix ensembles and independent Haar-orthogonal random matrix ensembles are among those unitary random matrix ensembles shown to be asymptotically liberating, see Theorem 2.8 in [1] or Lemma 3.

A key feature of asymptotic free independence is that it provides us with universal rules to compute limiting mixed moments out of individual ones. A limiting mixed moment of the ensembles \(\{X_{N,i}\}_{N=1}^{\infty }\) with \(i\in I\) is a limit of the form

where at least two of the indexes \(i_1, i_2,\ldots ,i_m \in I\) are distinct and none of them depend on N. For instance, if \(\{X_{N,i}\}_{N=1}^{\infty }\) with \(i\in I\) are asymptotically freely independent and \(i_{1}, i_{2} \in I\) are distinct, one can show that

where \( \alpha _{m}^{(i)}\) denotes \(\lim _{N\rightarrow \infty } {\mathbb {E}}[\textrm{tr}(X_{N,i}^m) ]\) and is called the m-th limiting individual moment of \(\{X_{N,i}\}_{N=1}^{\infty }\). The relation above, and any other derived from asymptotic free independence to compute mixed moments, is called universal since it does not depend on any particular choice of \(i_{1}\) and \(i_{2}\) and it only requires \(\{X_{N,i_{1}}\}_{N=1}^{\infty }\) and \(\{X_{N,i_{2}}\}_{N=1}^{\infty }\) to be asymptotically freely independent.

At this point, one might wonder if there are universal rules for computing limiting mixed moments of higher order out of individual ones. A limiting moment of n-th order of the ensembles \(\{X_{N,i}\}_{N=1}^{\infty }\) with \(i \in I\) is defined to be a limit of the form

where \({\mathfrak {c}}_{n}[\cdot , \ldots , \cdot ]\) denotes the n-th classical cumulant and each \({\widetilde{X}}_{N,k}\) is of the form

for some integer \(m_{k}\ge 1\) and some indexes \(i^{(k)}_{1},i^{(k)}_{2},\ldots ,i^{(k)}_{m_{k}} \in I\) not depending on N. The choice of the normalization factor \(N^{n-2}\) appearing in (1.3) is due to what has been observed for the behavior of (1.3) when each \(X_{N,i}\) is a Gaussian unitary ensemble. Since the limiting moment (1.3) is just a generalization of (1.2), we call it mixed if at least two of the indexes \(i^{(1)}_{1},\ldots ,i^{(1)}_{m_{1}},i^{(2)}_{1},\ldots ,\) \(i^{(2)}_{m_{2}},\ldots ,i^{(n)}_{1},\ldots , i^{(n)}_{m_{n}}\) are distinct, and individual, otherwise.

The most studied moments of higher order are moments of second order, also known as fluctuation moments. A fluctuation moment of the ensembles \(\{X_{N,i}\}_{N=1}^{\infty }\) with \(i \in I\) is then a limit of the form

for some integers \(m_{1},m_{2}\ge 1\) and indexes \(i_{1},i_{2},\ldots ,i_{m_{1}},i_{m_{1}+1},i_{m_{1}+2},\ldots ,i_{m_{1}+m_{2}} \in I\). A common practice in free probability theory to determine combinatorially (1.3), or (1.4), is that of calculating limiting moments of products of cyclically alternating and centered random matrices, as in (AF.2) from Definition 1. For fluctuation moments, this means one must consider limits of the form

where \( Y_{N,k}\) and \(Z_{N,l}\) are given by

for all polynomials \(\textrm{p}_{1}, \textrm{p}_{2},\ldots , \textrm{p}_{m_{1}}, \textrm{q}_{1},\textrm{q}_{2}, \ldots , \textrm{q}_{m_{2}} \in {\mathbb {C}}[\textrm{x}]\) and all indexes \(i_1, i_2, \ldots , i_{m_{_1}}, j_{1},j_{2}, \ldots , j_{m_{_2}} \in I\) satisfying the condition

Analyzing the fluctuation moments of complex Gaussian and complex Wishart random matrix ensembles, J. Mingo and R. Speicher found a relation between individual and mixed moments of first and second order and introduced in [16] the notion of asymptotic free independence of second order.

Definition 3

We say that the random matrix ensembles \(\{X_{N,i}\}_{N=1}^{\infty }\) with \(i\in I\) are asymptotically freely independent of second order if they are asymptotically freely independent and the following three conditions are satisfied:

- (ASOF.1) for each index \(i \in I\) and all integers \(m,n \ge 1\), the limit

  $$\begin{aligned} \displaystyle \lim _{N\rightarrow \infty } \textrm{Cov} \left[ \textrm{Tr} ( {X_{N,i}^m} ), \textrm{Tr} ( {X_{N,i}^n} ) \right] \end{aligned}$$

  exists,

- (ASOF.2) for all integers \(m_{1},m_{2} \ge 1\), all indexes \(i_1, i_2, \ldots , i_{m_{1}}, j_{1},j_{2},\ldots ,j_{m_{2}} \in I\) satisfying (1.6), and all polynomials \(\textrm{p}_{1}, \textrm{p}_{2},\ldots , \textrm{p}_{m_{1}}, \textrm{q}_{1},\textrm{q}_{2}, \ldots , \textrm{q}_{m_{2}}\) in the algebra \({\mathbb {C}}[\textrm{x}]\), if we take

  $$\begin{aligned} Y_{N}=Y_{N,1} Y_{N,2} \cdots Y_{N,m_{1}} \quad \text { and } \quad Z_{N} = Z_{N,1} Z_{N,2} \cdots Z_{N,m_{2}} \end{aligned}$$

  with \(Y_{N,k}\) and \(Z_{N,l}\) given by (1.5) for \(1 \le k \le m_{1}\) and \(1 \le l \le m_{2}\), we have

  $$\begin{aligned} \lim _{N\rightarrow \infty } \textrm{Cov} \left[ \textrm{Tr} ( { Y_{N} } ), \textrm{Tr} ( { Z_{N} } ) \right] = \delta _{m_{1},m_{2}} \lim _{N\rightarrow \infty } \sum ^{m_{1}}_{l=1}\prod ^{m_{2}}_{k=1} {\mathbb {E}} \left[ {\textrm{tr} \left( {Y_{N,k}Z_{N,l-k}} \right) }\right] \end{aligned} \tag{1.7}$$

  where \(l-k\) is taken modulo \(m_{2}\), and

- (ASOF.3) for every integer \(n\ge 3\) and all polynomials \(\textrm{p}_{1}, \textrm{p}_{2},\ldots , \textrm{p}_{n}\) in the algebra of non-commutative polynomials \({\mathbb {C}} \left\langle \textrm{x}_{i} \mid i \in I \right\rangle \), letting \(Y_{N,k} = \textrm{p}_{k} \left( \{X_{N,i}\}_{i\in I} \right) \), we have

  $$\begin{aligned} \lim _{N\rightarrow \infty } {\mathfrak {c}}_{n} \left[ \textrm{Tr} \left( {Y_{N,1}} \right) , \textrm{Tr} \left( {Y_{N,2}} \right) ,\ldots ,\textrm{Tr} \left( {Y_{N,n}} \right) \right] = 0 \end{aligned}$$

Similar to asymptotic free independence, asymptotic free independence of second order provides us with universal rules, via the conditions (ASOF.1) and (ASOF.2) above, to calculate limiting mixed fluctuation moments out of individual ones. Moreover, independent Gaussian unitary ensembles are asymptotically freely independent of second order and conjugation by independent Haar-unitary random matrix ensembles leads to asymptotic free independence of second order, see [16] and [15], respectively.

However, in contrast to moments of first order, fluctuation moments induced by Haar-unitary random matrix ensembles and those induced by Haar-orthogonal random matrix ensembles differ. Investigating fluctuation moments of independent Gaussian orthogonal ensembles, E. Redelmeier proved in [21] that if each \(\{X_{N,i}\}_{i \in I}\) forms a family of independent Gaussian orthogonal ensembles for every \(N\ge 1\), then the ensembles \(\{X_{N,i}\}_{N=1}^{\infty }\) with \(i\in I\) satisfy (ASOF.1) and (ASOF.3) from Definition 3 but (ASOF.2) has to be replaced by the following:

- (ASOF.2’) for all integers \(m_{1},m_{2} \ge 1\), all indexes \(i_1, i_2, \ldots , i_{m_{1}}, j_{1},j_{2},\ldots ,j_{m_{2}} \in I\) satisfying (1.6), and all polynomials \(\textrm{p}_{1}, \textrm{p}_{2},\ldots , \textrm{p}_{m_{1}}, \textrm{q}_{1},\textrm{q}_{2}, \ldots , \textrm{q}_{m_{2}}\) in the algebra \({\mathbb {C}}[\textrm{x}]\), if we take

  $$\begin{aligned} Y_{N}=Y_{N,1} Y_{N,2} \cdots Y_{N,m_{1}} \quad \text { and } \quad Z_{N} = Z_{N,1} Z_{N,2} \cdots Z_{N,m_{2}} \end{aligned}$$

  with \(Y_{N,k}\) and \(Z_{N,l}\) given by (1.5) for \(1 \le k \le m_{1}\) and \(1 \le l \le m_{2}\), we then have

  $$\begin{aligned} \lim _{N\rightarrow \infty } \textrm{Cov} \left[ \textrm{Tr} ( {Y_{N}} ), \textrm{Tr} ( {Z_{N} } ) \right]&= \delta _{m_{1},m_{2}} \lim _{N\rightarrow \infty } \sum ^{m_{1}}_{l=1} \left( \prod ^{m_{2}}_{k=1} {\mathbb {E}} [ {\textrm{tr} ( {Y_{N,k}Z_{N,l-k}} ) } ] \right. \\&\quad \left. + \prod ^{m_{2}}_{k=1} {\mathbb {E}} [ {\textrm{tr} ( {Y^{}_{N,k}Z^{T}_{N,l+k}} ) } ] \right) \end{aligned} \tag{1.8}$$

  where \(l-k\) and \(l+k\) are taken modulo \(m_{2}\).

Asymptotically freely independent ensembles satisfying (ASOF.1), (ASOF.2’), and (ASOF.3) are called asymptotically freely independent of second order in the real sense. Generalizing the findings of E. Redelmeier in [21], J. Mingo and M. Popa showed in [13] that independent orthogonally invariant ensembles are asymptotically freely independent of second order in the real sense, and therefore, the fluctuation moments induced by Haar-orthogonal ensembles are described not by (1.7) but by (1.8).

1.2 Objectives and Main Results

The aim of this paper is to investigate the behavior of the fluctuation moments, and higher-order moments, resulting from conjugation by asymptotically liberating ensembles. Since independent Haar-unitary ensembles and independent Haar-orthogonal ensembles are both asymptotically liberating but the fluctuation moments each of them induces are distinct, we already know that the induced fluctuation moments depend on the specific liberating ensemble used for conjugation. However, it might well be the case that the relations in (1.7) and (1.8) cover all possible behaviors for fluctuation moments induced by liberating ensembles; our first result shows that this is actually not the case, adding even more evidence that fluctuation moments are more intricate than their first-order counterparts.

It is illustrative and good for comparison to restate what the relations in (1.7) and in (1.8) yield when Haar-unitary ensembles and Haar-orthogonal ensembles are used for conjugation. So, let us assume \(X_{N,1} = U^{}_{N,1} D^{}_{N,1} U^{*}_{N,1}\) and \(X_{N,2} = U^{}_{N,2} D^{}_{N,2} U^{*}_{N,2}\) for each integer \(N \ge 1\) where each sequence \(\{ D^{}_{N,i} \}_{N=1}^{\infty }\) satisfies (1.1) and \(\{ U_{N,1}, U_{N,2} \}_{N=1}^{\infty }\) is an asymptotically liberating ensemble. Note that if the random matrices \(Y_{N}\) and \(Z_{N}\) are as in (ASOF.2) from Definition 3, then we can write

and

where \(A^{}_{N,k}\) and \(B^{}_{N,l}\) are deterministic matrices of trace zero given by

for \(1 \le k \le 2m_{1}\) and \(1 \le l \le 2m_{2}\). For simplicity, and without loss of generality, let us assume \(i_{1} = j_{1}\). Now, if \(U_{N,1}\) and \(U_{N,2}\) are independent Haar-unitary ensembles, it follows from (AF.2) in Definition 1 and the relation in (1.7) that the covariance \( \textrm{Cov} \left[ \textrm{Tr} ( { Y_{N} } ), \textrm{Tr} ( { Z_{N} } ) \right] \) converges to

as N goes to infinity. On the other hand, if \(U_{N,1}\) and \(U_{N,2}\) are independent Haar-orthogonal ensembles, then (AF.2) and (1.8) imply that \( \textrm{Cov} \left[ \textrm{Tr} ( { Y_{N} } ), \textrm{Tr} ( { Z_{N} } ) \right] \) converges to

as N goes to infinity. Note that (1.1) alone guarantees the existence of each of the limits above if each matrix \(D_{N,i}\) equals its transpose, regardless of what \(U_{N,1}\) and \(U_{N,2}\) are.

Another ensemble shown to be asymptotically liberating, see Corollary 3.2 in [1], and a main focus in this paper, is the unitary random matrix ensemble \(\{W_{N}, H_{N}W_{N} /\sqrt{N}, X_{N}H_{N}W_{N}/\sqrt{N} \}\) where \(W_{N}\) is a random N-by-N signed permutation matrix, \(X_{N}\) is a random N-by-N signature matrix independent from \(W_{N}\), and \(H_{N}\) is the N-by-N discrete Fourier transform matrix. Our first result shows that if we take pairs of distinct unitary matrices \(U_{N,1}\) and \(U_{N,2}\) from \(\{W_{N}, H_{N}W_{N} /\sqrt{N}, X_{N}H_{N}W_{N}/\sqrt{N} \}\) and use them for conjugation, then the resulting fluctuation moments vary with each pair and differ from those in (1.12) and in (1.13).
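The three matrices in this ensemble are straightforward to build numerically. The sketch below is an illustration under stated assumptions rather than the paper's construction: the helper names are hypothetical, the signed permutation is taken uniform with independent uniform signs, the signature matrix is taken diagonal with independent uniform signs, and the DFT sign convention is the one used by `numpy.fft.fft`. It checks that each matrix is unitary and that every entry of \(U^{*}_{N,1}U^{}_{N,2}\) for the pair \(W_{N}\), \(H_{N}W_{N}/\sqrt{N}\) has modulus exactly \(N^{-1/2}\).

```python
import numpy as np

def signed_permutation(n, rng):
    """Random signed permutation matrix: one entry +1 or -1 in each row and column."""
    w = np.zeros((n, n))
    w[np.arange(n), rng.permutation(n)] = rng.choice([-1.0, 1.0], size=n)
    return w

def signature(n, rng):
    """Random signature matrix: diagonal with independent uniform signs (assumed law)."""
    return np.diag(rng.choice([-1.0, 1.0], size=n))

def dft(n):
    """Unnormalized DFT matrix with entries exp(-2*pi*i*j*k/n) (assumed sign convention)."""
    return np.fft.fft(np.eye(n))

rng = np.random.default_rng(1)  # fixed seed, chosen only for reproducibility
n = 64
w, x, h = signed_permutation(n, rng), signature(n, rng), dft(n)

# The ensemble {W, HW/sqrt(N), XHW/sqrt(N)}.
ensemble = [w, h @ w / np.sqrt(n), x @ h @ w / np.sqrt(n)]
unitary_checks = [np.allclose(u.conj().T @ u, np.eye(n)) for u in ensemble]

# Entrywise moduli of U_1^* U_2 for the first pair: all equal to N^{-1/2}.
moduli = np.abs(ensemble[0].conj().T @ ensemble[1])
print(unitary_checks, moduli.min(), moduli.max())
```

The flat modulus reflects the fact that conjugating the normalized DFT matrix by a signed permutation only permutes its entries and changes their signs.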

Theorem 1

Let \(D_{N,1}\) and \(D_{N,2}\) be N-by-N self-adjoint matrices for each integer \(N\ge 1\) so that each \(\{ D^{}_{N,i} \}_{N=1}^{\infty }\) satisfies (1.1).

Suppose \(X_{N,1} = U^{}_{N,1} D^{}_{N,1} U^{*}_{N,1} \) and \(X_{N,2} = U^{}_{N,2} D^{}_{N,2} U^{*}_{N,2} \) where \(U_{N,1}\) and \(U_{N,2}\) are distinct matrices from \(\{W_{N}, H_{N}W_{N} /\sqrt{N}, X_{N}H_{N}W_{N}/\sqrt{N} \}\).

If \(Y_{N}\) and \(Z_{N}\) are given by \(Y_{N}=Y_{N,1} Y_{N,2} \cdots Y_{N,2m_{1}}\) and \(Z_{N} = Z_{N,1} Z_{N,2} \cdots Z_{N,2m_{2}}\) where \(Y_{N,k}\) and \(Z_{N,l}\) are defined as in (1.5) for some polynomials \(\textrm{p}_{1}, \textrm{p}_{2},\ldots , \textrm{p}_{2m_{1}}, \textrm{q}_{1}, \textrm{q}_{2}, \ldots , \textrm{q}_{2m_{2}}\in {\mathbb {C}}[\textrm{x}]\) and some indexes \(i_1, i_2, \ldots , i_{2m_{_1}}, j_{1},j_{2},\ldots ,j_{2m_{_2}} \in \{1,2\}\) satisfying (1.6) and \(i_{1}=j_{1}\), then the following holds:

- (1) \(U_{N,1} = W_{N} \) and \(U_{N,2}= H_{N}W_{N}/\sqrt{N}\) implies

  $$\begin{aligned}&\textrm{Cov} \left[ \textrm{Tr} ( { Y_{N} } ), \textrm{Tr} ( { Z_{N} } ) \right] \\&\quad = \delta _{m_{1},m_{2}} \sum _{l=1}^{m_{1}} \left( \prod _{k=1}^{2m_{1}} \textrm{tr} \left( {A_{N,k} B_{N,2l-k}} \right) + \prod _{k=1}^{2m_{1}} \textrm{tr} \left( {A^{}_{N,k} B_{N,2l+k-1}^{T}} \right) \right) \\&\qquad + O \left( N^{-\frac{1}{2 }}\right) \end{aligned}$$

- (2) \(U_{N,1} = W_{N} \) and \(U_{N,2}= X_{N}H_{N}W_{N}/\sqrt{N}\) implies

  $$\begin{aligned}&\textrm{Cov} \left[ \textrm{Tr} ( { Y_{N} } ), \textrm{Tr} ( { Z_{N} } ) \right] \\&\quad = \delta _{m_{1},m_{2}} \sum _{l=1}^{m_{1}} \left( \prod _{k=1}^{2m_{1}} \textrm{tr} \left( {A_{N,k} B_{N,2l-k}} \right) + \prod _{k=1}^{2m_{1}} \textrm{tr} \left( {A_{N,k} \circ B_{N,2l+k-1}} \right) \right) \\&\qquad + O \left( N^{-\frac{1}{2 }}\right) \end{aligned}$$

- (3) \(U_{N,1} = H_{N}W_{N}/\sqrt{N} \) and \(U_{N,2}= X_{N}H_{N}W_{N}/\sqrt{N}\) implies

  $$\begin{aligned} \textrm{Cov} \left[ \textrm{Tr} ( { Y_{N} } ), \textrm{Tr} ( { Z_{N} } ) \right]&= \sum _{l_{1}=1}^{2m_{1}} \sum _{l_{2}=1}^{2m_{2}} \prod _{k_{1}=1}^{m_{1}} \textrm{tr} \left( {A_{N,l_{1}+k_{1}-1} A_{N,l_{1}-k_{1}}} \right) \cdot \prod _{k_{2}=1}^{m_{2}} \textrm{tr} \left( {B_{N,l_{2}+k_{2}-1} B_{N,l_{2}-k_{2}}} \right) \\&\quad + \delta _{m_{1},m_{2}} \sum _{l=1}^{2m_{1}} \left( \prod _{k=1}^{2m_{1}} \textrm{tr} \left( {A_{N,k}B_{N,l-k}} \right) \right) + O \left( N^{-\frac{1}{2 }}\right) \end{aligned}$$

with \(A_{N,k}\) and \(B_{N,l}\) defined as in (1.11), the indices \(2l-k\), \(2l+k-1\), \(l_{1}+k_{1}-1\), \(l_{1}-k_{1}\), and \(l-k\) interpreted modulo \(2m_{1}\), and the indices \(l_{2}+k_{2}-1\) and \(l_{2}-k_{2}\) interpreted modulo \(2m_{2}\).
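To make the index bookkeeping in case (1) concrete, the leading term can be evaluated directly for given trace-zero matrices. This is a minimal sketch under assumptions: the function names `ntr`, `cyc`, and `leading_term_case1` are hypothetical, and indices taken modulo \(2m_{1}\) are represented in \(\{1,\ldots ,2m_{1}\}\), so that an index congruent to 0 refers to the last matrix. The \(O(N^{-1/2})\) remainder is not modeled.

```python
import numpy as np

def ntr(a):
    """Normalized trace tr = Tr / N."""
    return np.trace(a) / a.shape[0]

def cyc(seq, idx):
    """Element of a 1-indexed list with the index read modulo len(seq)."""
    return seq[(idx - 1) % len(seq)]

def leading_term_case1(a, b):
    """Leading term of case (1) for lists a = [A_1..A_{2m1}], b = [B_1..B_{2m2}]."""
    if len(a) != len(b):
        return 0.0  # the Kronecker delta in m1, m2
    m = len(a) // 2
    ks = range(1, 2 * m + 1)
    total = 0.0
    for l in range(1, m + 1):
        # Product of tr(A_k B_{2l-k}) over k.
        direct = np.prod([ntr(cyc(a, k) @ cyc(b, 2 * l - k)) for k in ks])
        # Product of tr(A_k B_{2l+k-1}^T) over k.
        transposed = np.prod([ntr(cyc(a, k) @ cyc(b, 2 * l + k - 1).T) for k in ks])
        total += direct + transposed
    return total

# Usage with m1 = m2 = 1 and small random test matrices.
rng = np.random.default_rng(2)
a1, a2, b1, b2 = (rng.standard_normal((3, 3)) for _ in range(4))
value = leading_term_case1([a1, a2], [b1, b2])
print(value)
```

For \(m_{1}=m_{2}=1\) the sum reduces to \(\textrm{tr}(A_{1}B_{1})\,\textrm{tr}(A_{2}B_{2}) + \textrm{tr}(A_{1}B_{2}^{T})\,\textrm{tr}(A_{2}B_{1}^{T})\), which is a convenient check of the modular indexing.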

The discovery of second-order behaviors deviating from second-order free independence, and second-order free independence in the real sense, is not new. From a more algebraic setting, the authors of [8] and [9] analyze fluctuation moments of matrices with entries from a possibly non-commutative unital algebra and obtain different relations from those mentioned above. Additionally, the fluctuation moments of symplectically invariant random matrices have been fully determined in [22]. In particular, the relations (1.12) and (1.13) must be replaced by

when considering conjugation by independent Haar-symplectic random matrix ensembles. We recap in Table 1 the fluctuation moments induced by conjugation with matrices uniformly distributed on classical compact groups and in Table 2 the fluctuation moments induced by conjugation with the matrices considered in this paper.

Notice (1.1) alone is not enough to guarantee the existence of limiting second-order behaviors in Theorem 1, in contrast to (1.12) and (1.13). For instance, if we want to take the limit as N goes to infinity in (3) from Theorem 1, we need \(\{D_{N,1}\}_{N=1}^{\infty }\) and \(\{D_{N,2}\}_{N=1}^{\infty }\) to have a joint limiting distribution, i.e., we need that the limit \(\lim _{N \rightarrow \infty } \textrm{tr}(D_{N,i_{1}}^{} D_{N,i_{2}}^{} \cdots D_{N,i_{m}}^{} ) \) exists for all integers \(m\ge 1\) and all indexes \(i_{1},i_{2},\ldots ,i_{m} \in \{1,2\}\). This shows we cannot expect a classification for universal products of second order, in the spirit of [19] or [23], encompassing all of the second-order behaviors exhibited by random matrices.

It would be desirable to have a master theorem encompassing all three cases in Theorem 1. However, in our analysis of fluctuation moments, we arrive at combinatorics that seem already too intricate when we consider each case separately. In this regard, although we make no explicit use of the theory of traffic free independence of C. Male, see [11], it is likely that our results will find a nice expression in terms of traffic algebras. Tools from traffic algebras have already been used in [12] to describe joint fluctuation moments of Wigner random matrices and deterministic matrices and their lack of second-order independence. Finally, it is pointed out in [7] that traffic algebras are closely related to \({\mathcal {A}}\)-tracial algebras, with both notions generalizing classical and free independence. We hope to describe the results in this paper in terms of traffic or \({\mathcal {A}}\)-tracial algebras in a future work.

Despite the fact that no pair of distinct unitary matrices \(U_{N,1}\) and \(U_{N,2}\) from the ensemble \(\{W_{N}, H_{N}W_{N} /\sqrt{N}, X_{N}H_{N}W_{N}/\sqrt{N} \}_{N=1}^{\infty }\) leads to asymptotic free independence of second order when used for conjugation, it turns out not much more is needed to achieve this end, at least, partially. More concretely, if \(U_{N,1}=W_{N,1}\) and \(U_{N,2}=H_{N}W_{N,2}/\sqrt{N}\) where \(W_{N,1}\) and \(W_{N,2}\) are independent N-by-N uniformly distributed signed permutation matrices, then the fluctuation moments induced by \(\{U_{N,1},U_{N,2}\}_{N=1}^{\infty }\) are the same as if \(U_{N,1}\) and \(U_{N,2}\) were independent Haar-unitary, i.e., the induced fluctuation moments are described by (1.12). Thus, we can think of \(\{W_{N,1},H_{N}W_{N,2} /\sqrt{N} \}_{N=1}^{\infty }\) as an asymptotically liberating ensemble of second order.

Theorem 2

Let \(D_{N,1}\) and \(D_{N,2}\) be N-by-N self-adjoint matrices for each integer \(N\ge 1\) so that each \(\{ D^{}_{N,i} \}_{N=1}^{\infty }\) satisfies (1.1). Suppose \(X_{N,1} = U^{}_{N,1} D^{}_{N,1} U^{*}_{N,1} \) and \(X_{N,2} = U^{}_{N,2} D^{}_{N,2} U^{*}_{N,2} \) where \(U_{N,1}=W_{N,1}\) and \(U_{N,2}=H_{N}W_{N,2}/\sqrt{N}\). Then, \(\{X_{N,1}\}_{N=1}^{\infty }\) and \(\{X_{N,2}\}_{N=1}^{\infty }\) are asymptotically freely independent and they satisfy (ASOF.1) and (ASOF.2) from Definition 3. In particular, if \(Y_{N}\), \(Z_{N}\), \(A_{N,k}\), and \(B_{N,l}\) are given as in the previous theorem, then

The main result in [1] gives sufficient conditions on a unitary random matrix ensemble to be asymptotically liberating. Using a different approach than the one of [1], we have been able to prove that, under the same conditions, a unitary random matrix ensemble not only is asymptotically liberating but also satisfies a natural generalization of the boundedness condition in Definition 2 to cumulants of any order. More concretely, we have the following lemma.

Lemma 3

Let \( U_{N,i} \) be an N-by-N unitary random matrix for each index \(i \in I\) and each integer \(N\ge 1\).

Suppose the unitary random matrix ensemble \({\mathcal {U}}=\{\{U_{N,{i}}\}_{{i} \in I}\}_{N=1}^{\infty }\) satisfies the following two conditions:

- (I) the families of random matrices \( \{ U^{*}_{N,i_{1}}U^{}_{N,i_{2}} \}_{i_{1}, i_{2} \in I} \) and \( \{ \textrm{W}^{*} U^{*}_{N,i_{1}}U^{}_{N,i_{2}} \textrm{W} \}_{i_{1}, i_{2} \in I} \) are equal in distribution for every N-by-N signed permutation matrix \(\textrm{W}\), and

- (II) for each positive integer m and indexes \(i_{1}, i_{2} \in I\) with \(i_{1} \ne i_{2} \) there is a constant \(C_m(i_1,i_2)\) independent of N such that

  $$\begin{aligned} \Bigg \Vert \left( U^{*}_{N,i_{1}}U^{}_{N,i_{2}} \right) (j_{1},j_{2})\Bigg \Vert _{m} \le C_m(i_1,i_2) N^{-1/2} \end{aligned}$$

  for all integers \( j_{1},j_{2} \in \{1,2,\ldots , N\}\).

Now, given positive integers \(m_1, m_2, \ldots , m_n\), take \(m'_{k}=m'_{k-1}+m^{}_{k-1}\) for \(k=2,3,\ldots ,n\) with \(m'_{1}=0\) and consider the permutation \(\gamma = (1,2,\ldots , m'_{1}+ m_{1})(m'_{2}+1,m'_{2}+2, \ldots , m'_{2}+ m_{2}) \cdots (m'_{n}+1, \ldots , m'_{n}+ m_{n})\). If some indexes \({i}_1,{i}_2, \ldots , {i}_m \in I\) are such that \({i}_{k} \ne {i}_{\gamma (k)}\) for \(k=1, 2, \ldots , m\) where \(m = m_1 + m_2 + \cdots + m_n\), then there exists a constant \(C({i}_1, {i}_2, \ldots , {i}_m)\) such that for

with \(A_{1},A_{2},\ldots ,A_{m} \in \textrm{M}_N({\mathbb {C}})\) each of trace zero, we have

The fact that a unitary random matrix ensemble \( \{ \{ U_{N,i} \}_{i\in I} \}_{N=1}^{\infty }\) satisfying (I) and (II) above is asymptotically liberating can now be seen as a particular case of the previous lemma. Moreover, if \(U_{N,i_{1}}\) and \(U_{N,i_{2}}\) are independent Haar-unitary (resp. Haar-orthogonal), then \(U^{*}_{N,i_{1}}U^{}_{N,i_{2}}\) is also Haar-unitary (resp. Haar-orthogonal), and hence, \(U^{*}_{N,i_{1}}U^{}_{N,i_{2}}\) satisfies (I) and (II) above. Therefore, independent Haar-unitary (Haar-orthogonal) random matrix ensembles are asymptotically liberating.
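The permutation \(\gamma \) in Lemma 3 and the condition \(i_{k} \ne i_{\gamma (k)}\) can be made concrete with a few lines of code. The sketch below is only an illustration; the function names `gamma_permutation` and `cyclically_alternating` are hypothetical, and \(\gamma \) is stored as a dictionary on \(\{1,\ldots ,m\}\).

```python
def gamma_permutation(ms):
    """Permutation with one cycle (m'_k + 1, ..., m'_k + m_k) per entry m_k of ms."""
    gamma, offset = {}, 0  # offset plays the role of m'_k
    for m_k in ms:
        for j in range(1, m_k + 1):
            # Inside a cycle, j maps to j + 1 and the last element wraps to the first.
            gamma[offset + j] = offset + (j % m_k) + 1
        offset += m_k
    return gamma

def cyclically_alternating(indices, ms):
    """Check i_k != i_{gamma(k)} for every k, with indices = [i_1, ..., i_m]."""
    gamma = gamma_permutation(ms)
    return all(indices[k - 1] != indices[gamma[k] - 1] for k in gamma)

# Usage with m_1 = 4 and m_2 = 2, so gamma = (1,2,3,4)(5,6).
print(gamma_permutation([4, 2]))
print(cyclically_alternating([1, 2, 1, 2, 1, 2], [4, 2]))
print(cyclically_alternating([1, 2, 2, 1, 1, 2], [4, 2]))
```

With a single cycle (n = 1) the check is exactly the cyclic alternation of indices required in Definition 2. A cycle of length one is a fixed point of \(\gamma \), so the condition can never hold when some \(m_{k}=1\).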

The customary definition of asymptotic free independence for random matrix ensembles involves the convergence of a sequence of linear functionals on non-commutative polynomials, see Proposition 14 and the comment right after its proof. In a similar way, multi-linear functionals on non-commutative polynomials can be used to analyze the behavior of moments of higher order, allowing us to show that unitary random matrix ensembles satisfying (I) and (II) above induce the bounded cumulants property when used for conjugation.

Theorem 4

Let \(D_{N,i}\) be a self-adjoint N-by-N deterministic matrix, and let \( U_{N,i} \) be an N-by-N unitary random matrix for each index \(i \in I\) and each integer \(N\ge 1\). Suppose the unitary random matrix ensemble \( \{ \{ U_{N,i} \}_{i\in I} \}_{N=1}^{\infty }\) satisfies (I) and (II) from the previous lemma and (1.1) holds. Then, the ensemble \( \{ \{ U^{}_{N,i}D^{}_{N,i}U_{N,i}^{*} \}_{i \in I} \}_{N=1}^{\infty }\) has the bounded cumulants property; namely, for all polynomials \(\textrm{p}_{1}, \textrm{p}_{2}, \textrm{p}_{3}, \ldots \) in the algebra of non-commutative polynomials \({\mathbb {C}}\left\langle \textrm{x}_{i} \mid {i \in I} \right\rangle \) taking \(Y_{N,k}= \textrm{p}_{k} (\{U^{}_{N,i}D^{}_{N,i}U^{*}_{N,i} \}_{i \in I} )\) we have

for every integer \(n \ge 1\).

The term bounded cumulants property is borrowed from [14] where it is used to prove several results concerning the limiting behavior of unitarily invariant random matrix ensembles and some other random matrix ensembles with this property.

A few technical comments are in order before we present the organization of the paper. Bounds on graph sums of square matrices, see [17] or Sect. 3, and the relations (4.4) and (5.3) are key components of our results. In particular, (4.4) reveals that the n-th cumulant from Lemma 3 can be written as a sum where each term is a product of a cumulant \({\mathfrak {c}}_n \left[ \pi \right] \) of the random variables \(( U^{*}_{N,i_{1}}U^{}_{N,i_{2}} ) (j_{1},j_{2})\) and a graph sum of the deterministic matrices \(A_{l}\). Sharp bounds for graph sums are provided in Theorem 5, so the behavior and existence of higher-order moments depend largely on the cumulants \({\mathfrak {c}}_n \left[ \pi \right] \), at least in our approach. Estimates of \({\mathfrak {c}}_2 \left[ \pi \right] \) up to terms of order \(N^{-m-1/2}\) are enough for Theorems 1 and 2 and constitute some of our main technical results, see Proposition 17. The sharpness of our estimates for \({\mathfrak {c}}_2 \left[ \pi \right] \), and consequently of the terms of order \(N^{-1/2}\) appearing in Theorems 1 and 2, is addressed in the last section. For higher-order moments with \(n\ge 3\), a finer description of \({\mathfrak {c}}_n \left[ \pi \right] \) than the one given here is required. A full description of \({\mathfrak {c}}_n \left[ \pi \right] \) for the unitary matrices from Theorems 1 and 2 would mean writing graph sums of the discrete Fourier transform as a power series in \(N^{1/2}\); such an expression for arbitrary \(\pi \) and arbitrary N is unknown to us at the moment. For Haar-unitary and Haar-orthogonal ensembles, a full description of \({\mathfrak {c}}_n \left[ \pi \right] \) as a power series in \(N^{-2}\) is already available via the Weingarten Calculus from [4] and [5], so higher-order moments might be computed using (4.4) in this case.

1.3 Organization of this Paper

The rest of this paper is organized as follows. In Sect. 2, we introduce the main definitions and the main notation for partitions, classical cumulants, matrices, and non-commutative polynomials; we also establish the distribution of random signed permutation matrices and random signature matrices. In Sect. 3, we review and prove multiple results on graph sums of square matrices. Roughly speaking, a graph sum of square matrices is a sum of products of entries of square matrices with the constraint that some of the entries from distinct matrices are indexed by the same summation variable. Then, Sects. 4 and 5 are devoted to the proofs of our main results; concretely, Lemma 3 and Theorem 4 are proved in Sect. 4, whereas Theorems 1 and 2 are proved in Sect. 5. Finally, in Sect. 6, we give some concluding remarks including open questions and further research projects.

2 Preliminaries

2.1 Set Partitions, the Möbius Inversion Function, and Classical Cumulants

A partition of a non-empty set S is a set of non-empty and pair-wise disjoint subsets of S whose union is S, i.e., a set \(\pi \) is a partition of S if \(B \subset S\) and \(B \ne \emptyset \) for every \(B \in \pi \), \(B\cap B' \ne \emptyset \) implies \(B=B'\) for all \(B,B'\in \pi \), and \(\cup _{B \in \pi } B = S\). The elements of a partition are called blocks; a block is said to be even if it has even cardinality, and similarly, a block is said to be odd if it has odd cardinality. A partition containing only even blocks is called even, and if all of its blocks have exactly two elements, we refer to it as a pairing. The total number of blocks in a partition \(\pi \) is denoted by \(\#(\pi )\) and we let P(S), \(P_{\text {even}}(S)\), and \(P_{2}(S)\) denote the set of all partitions of S, the set of all even partitions of S, and the set of all pairing partitions of S, respectively.

Example

The sets \(\theta _{1} = \{\{-1,-3,-2,2\},\{1,3\}\}\), \(\theta _{2} = \{\{-1,-2\},\{2\},\{1,-3,3\}\}\), and \(\theta _{3} = \{\{-1,-3\},\{1,3\},\{-2,2\}\}\) are all partitions of \(\{-1,1,2,-2,-3,3\}\). The partitions \(\theta _{1}\) and \(\theta _{3}\) are both even, but while \(\theta _{3}\) is a pairing, \(\theta _{1}\) is not. The partition \(\theta _{2}\) is neither even nor odd since it contains two odd blocks, \(\{2\}\) and \(\{1,-3,3\}\), and one even block, \(\{-1,-2\}\).

We let [m] and \([\pm m ]\) denote the sets of integers \(\{1,2,\ldots , m\}\) and \(\{-1,1,-2,2,\ldots , -m, m \}\), respectively. The sets [m] and \([\pm m ]\) are used extensively in this paper, so we will omit the square brackets when referring to any of their sets of partitions. Thus, for instance, we write \(P_{\text {even}}(\pm m)\) instead of \(P_{\text {even}}([\pm m])\). Having fixed integers \(m_1, m_2 \ge 1\) and a partition \(\pi \in P(\pm (m_1 + m_2))\), we denote by \({\pi }_1\) and \({\pi }_2\) the restrictions of \({\pi }\) to \([\pm m_1]\) and \([\pm (m_1 + m_2) ] {\setminus } [\pm m_1]\), respectively.

Every partition \(\pi \in P(S)\) defines an equivalence relation, denoted by \(\sim _{\pi }\), that has the blocks of \(\pi \) as equivalence classes. Thus, given elements \(k,l \in S\), we write \(k \sim _{\pi } l\) only if k and l belong to the same block of \(\pi \). With this notation in mind, a partition \(\pi \in P( \pm m )\) is called symmetric if \(k \sim _{\pi } l\) implies \(-k \sim _{\pi } -l\).

The set of partitions P(S) becomes a partially ordered set with the partial order \(\le \) defined as follows: given partitions \(\pi \) and \(\theta \) in P(S), we write \(\pi \le \theta \), and say that \(\pi \) is a refinement of \(\theta \), if every block of \(\pi \) is contained in some block of \(\theta \). Note that \(\pi \le \theta \) if and only if \(k \sim _{\pi } l\) implies \(k \sim _{\theta } l \) for all \(k,l \in S\). In the previous example, the partition \(\theta _{3}\) is a refinement of \(\theta _{1}\), and there is no other refinement between \(\theta _{1}\), \(\theta _{2}\), and \(\theta _{3}\).
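The definitions of this subsection are easy to check mechanically. The following is a minimal sketch, with helper names (`is_partition`, `is_even`, `is_pairing`, `refines`) that are our own and not part of the paper, applied to the three partitions of the previous example.

```python
def is_partition(blocks, S):
    """True if the blocks are non-empty, pairwise disjoint, and cover S."""
    seen = set()
    for B in blocks:
        if not B or seen & B:
            return False
        seen |= B
    return seen == set(S)


def is_even(blocks):
    """Every block has even cardinality."""
    return all(len(B) % 2 == 0 for B in blocks)


def is_pairing(blocks):
    """Every block has exactly two elements."""
    return all(len(B) == 2 for B in blocks)


def refines(pi, theta):
    """pi <= theta: every block of pi is contained in some block of theta."""
    return all(any(B <= C for C in theta) for B in pi)


S = {-1, 1, -2, 2, -3, 3}
theta1 = [{-1, -3, -2, 2}, {1, 3}]
theta2 = [{-1, -2}, {2}, {1, -3, 3}]
theta3 = [{-1, -3}, {1, 3}, {-2, 2}]

even_flags = [is_even(t) for t in (theta1, theta2, theta3)]
pairing_flags = [is_pairing(t) for t in (theta1, theta2, theta3)]
theta3_refines_theta1 = refines(theta3, theta1)
```

Representing blocks as Python sets makes the refinement test a direct transcription of the definition; it is quadratic in the number of blocks, which is harmless for the small partitions considered here.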

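Consider now the function \(\zeta : P(S) \times P(S) \rightarrow \{1,0\}\) defined by

$$\begin{aligned} \zeta (\pi ,\theta ) = \left\{ \begin{array}{cl} 1 &{} \text { if } \pi \le \theta , \\ 0 &{} \text {otherwise}. \end{array}\right. \end{aligned}$$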

This function is called the zeta function of P(S). It turns out that if S is a finite set, then the system of equations

determines a function \(\mu : P(S) \times P(S) \rightarrow {\mathbb {Z}}\) called the Möbius function of P(S) which can be explicitly computed, but first, let us establish the convention that whenever we write \(\eta =\{B_{i_1},B_{i_2},\ldots ,B_{i_r}\}\) for a partition \(\eta \), it is always assumed that blocks \(B_{i_k}\) and \(B_{i_l}\) are the same only if \(i_k=i_l\). Suppose now we are given partitions \(\pi \) and \(\theta \) in P(S). If \(\pi \le \theta \), we can write \(\theta = \{B_1, B_2,\ldots , B_{r}\}\) and \(\pi =\{B_{1,1},B_{1,2}, \ldots , B_{1,m_1},\ldots , B_{r,m_r} \}\) with \(B_{k} = \cup _{l=1}^{m_k}B_{k,l}\) for each k, and, in this case, we have

On the other hand, if \(\pi \) is not a refinement of \(\theta \), we have \(\mu (\pi ,\theta ) = 0\). The Möbius inversion formula states that given arbitrary functions \(f,g:P(S) \rightarrow {\mathbb {C}}\), we have the relation

The computation of the Möbius function, Eq. (2.2), and the Möbius inversion formula, Eq. (2.3), are well known, and their proofs can be found in [20, Lecture 10].
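As a sanity check, the Möbius function can be computed on a small set. The sketch below assumes the standard closed form for the lattice of set partitions, namely \(\mu (\pi ,\theta ) = \prod _{k=1}^{r} (-1)^{m_k-1}(m_k-1)!\) when \(\pi \le \theta \), and verifies the defining property \(\sum _{\pi \le \eta \le \theta } \mu (\pi ,\eta ) = \delta _{\pi ,\theta }\) on all partitions of \(\{1,2,3,4\}\). Both the closed form as written here and the normalization of the defining sum are assumptions on our part, since the corresponding displays are not reproduced in this section; the function names are hypothetical.

```python
from math import factorial


def set_partitions(elements):
    """Yield every partition of the list `elements` as a list of frozensets."""
    if not elements:
        yield []
        return
    first, rest = elements[0], elements[1:]
    for smaller in set_partitions(rest):
        # `first` forms a block of its own ...
        yield [frozenset([first])] + smaller
        # ... or joins one of the existing blocks.
        for i, B in enumerate(smaller):
            yield smaller[:i] + [B | {first}] + smaller[i + 1:]


def refines(pi, theta):
    """pi <= theta in the refinement order."""
    return all(any(B <= C for C in theta) for B in pi)


def mobius(pi, theta):
    """Assumed closed form: product over the blocks of theta of
    (-1)^(m_k - 1) * (m_k - 1)!, where m_k blocks of pi lie in that block."""
    if not refines(pi, theta):
        return 0
    value = 1
    for C in theta:
        m = sum(1 for B in pi if B <= C)
        value *= (-1) ** (m - 1) * factorial(m - 1)
    return value


P = [frozenset(p) for p in set_partitions([1, 2, 3, 4])]


def mobius_sums_to_delta():
    """Check sum_{pi <= eta <= theta} mu(pi, eta) = 1 if pi == theta else 0."""
    for pi in P:
        for theta in P:
            if refines(pi, theta):
                total = sum(mobius(pi, eta) for eta in P
                            if refines(pi, eta) and refines(eta, theta))
                if total != (1 if pi == theta else 0):
                    return False
    return True
```

The exhaustive enumeration is only meant for small sets: the number of partitions grows like the Bell numbers, so the double loop becomes impractical beyond a handful of elements.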

Let \((\Omega ,{\mathcal {F}},P)\) be a classical probability space, and let \(L^{-\infty }(\Omega ,{\mathcal {F}},P)\) denote the set of complex-valued random variables on \((\Omega ,{\mathcal {F}},P)\) with finite moments of all orders. The classical n-th cumulant on \(L^{-\infty }(\Omega ,{\mathcal {F}},P)\) is the n-linear functional \({\mathfrak {c}}_{n}: L^{-\infty }(\Omega ,{\mathcal {F}},P) \times \cdots \times L^{-\infty }(\Omega ,{\mathcal {F}},P) \rightarrow {\mathbb {C}} \) defined by

for random variables \(x_1, x_2, x_3,\ldots , x_n \in L^{-\infty }(\Omega ,{\mathcal {F}},P)\) and where \({\mathbb {E}}[ \cdot ]\) denotes the corresponding expected value. Note that if \(x_{k}\) is a constant for some \(k \in [n]\) and \(n \ge 2\), then \( {\mathfrak {c}}_{n}[x_1, x_2,\ldots , x_n] = 0 \).
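The vanishing of cumulants with a constant entry can be observed on a finite probability space. The sketch below assumes the standard moment-cumulant formula \({\mathfrak {c}}_{n}[x_1,\ldots ,x_n] = \sum _{\pi \in P(n)} (-1)^{\#(\pi )-1}(\#(\pi )-1)! \prod _{B \in \pi } {\mathbb {E}}[\prod _{k \in B} x_k]\), which we take to be the definition intended above; the uniform three-point sample space and all function names are illustrative choices of ours.

```python
from fractions import Fraction
from math import factorial


def set_partitions(elements):
    """Yield every partition of the list `elements` as a list of frozensets."""
    if not elements:
        yield []
        return
    first, rest = elements[0], elements[1:]
    for smaller in set_partitions(rest):
        yield [frozenset([first])] + smaller
        for i, B in enumerate(smaller):
            yield smaller[:i] + [B | {first}] + smaller[i + 1:]


def expectation(values):
    """Expected value on a uniform finite sample space."""
    return sum(values, Fraction(0)) / len(values)


def cumulant(*xs):
    """Classical cumulant of random variables given as lists of values,
    one value per sample point (assumed moment-cumulant formula)."""
    n, size = len(xs), len(xs[0])
    total = Fraction(0)
    for pi in set_partitions(list(range(n))):
        weight = (-1) ** (len(pi) - 1) * factorial(len(pi) - 1)
        term = Fraction(1)
        for B in pi:
            prod = [Fraction(1)] * size
            for k in B:
                prod = [p * v for p, v in zip(prod, xs[k])]
            term *= expectation(prod)
        total += weight * term
    return total


x = [0, 0, 1]        # Bernoulli variable with success probability 1/3
const = [5, 5, 5]    # a constant random variable

variance = cumulant(x, x)
third = cumulant(x, x, x)
mixed = cumulant(x, const, x)
```

Exact rational arithmetic via `Fraction` avoids any rounding issue, so the cumulant with a constant argument comes out as an exact zero rather than a small floating-point number.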

2.2 The Kernel Notation, Tuples, and Permutations

Let \(S_{1}\) and \(S_{2}\) be non-empty sets. We make the convention that for a function \({\textbf{j}}: S_{1} \rightarrow S_{2}\), we put \(j_{k} = {\textbf{j}}(k)\) for every \(k \in S_{1}\); additionally, if \(S_{1} = [\pm m] \) for some integer \(m \ge 1\), we identify the function \({\textbf{j}}: S_{1} \rightarrow S_{2}\) with the tuple \((j_{-1},j_{1},j_{-2},\ldots ,j_{-m},j_{m})\). Moreover, the kernel of a function \({\textbf{j}}: S_{1} \rightarrow S_{2}\), denoted by \( \textrm{ker} \left( {{\textbf{j}}} \right) \), is defined as the partition of \(S_{1}\) whose blocks are all of the non-empty pre-images of \({\textbf{j}}\), i.e.,

$$\begin{aligned} \textrm{ker} \left( {{\textbf{j}}} \right) = \left\{ {\textbf{j}}^{-1}(s) \mid s \in S_{2} \text { and } {\textbf{j}}^{-1}(s) \ne \emptyset \right\} . \end{aligned}$$

The group of all permutations on a non-empty set S is denoted by \(\textrm{Sym}(S)\); however, if \(S=[m]\) or \(S = [\pm m]\) for some positive integer m, we simply write \(\textrm{Sym}(m)\) and \(\textrm{Sym}(\pm m)\), respectively. Given permutations \(\sigma _{l} \in \textrm{Sym}(S_l)\) for \(l=1,2\), we let \({\textbf{j}} \circ \varvec{\sigma }_{1}: S_{1} \rightarrow S_{2}\) and \( \varvec{\sigma }_{2} \circ {\textbf{j}}: S_{1} \rightarrow S_{2}\) denote the usual composition of functions, so we have

Example

The function \({\textbf{j}}:[\pm 3] \rightarrow [4]\) given by

or, equivalently, \( (j_{-1},j_{1},j_{-2},j_{2},j_{-3},j_{3}) = (4,1,3,4,1,4)\), has kernel

Additionally, if \(\sigma _{1} \in \textrm{Sym}(\pm 3)\) is given by \(\sigma _{1} (k)=-k\) for every \(k \in [\pm 3]\) and \(\sigma _{2} \in \textrm{Sym}(4)\) is the cyclic permutation (1, 2, 3, 4), then \( {\textbf{j}} \circ \varvec{\sigma }_{1} = (1,4,4,3,4,1) \) and \( \varvec{\sigma }_{2} \circ {\textbf{j}} = (1,2,4,1,2,1) \).
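The computations of this example can be reproduced with a few lines of code. In the sketch below, functions are stored as dictionaries and `kernel` is a hypothetical helper name of ours returning the partition into non-empty pre-images.

```python
def kernel(j):
    """Partition of the domain of j whose blocks are the non-empty pre-images."""
    blocks = {}
    for k, value in j.items():
        blocks.setdefault(value, set()).add(k)
    return {frozenset(B) for B in blocks.values()}


order = [-1, 1, -2, 2, -3, 3]            # tuple ordering (j_{-1}, j_1, ..., j_3)
j = dict(zip(order, (4, 1, 3, 4, 1, 4)))

sigma1 = {k: -k for k in order}          # sigma_1(k) = -k
sigma2 = {1: 2, 2: 3, 3: 4, 4: 1}        # the cycle (1, 2, 3, 4)

j_sigma1 = tuple(j[sigma1[k]] for k in order)   # j o sigma_1
sigma2_j = tuple(sigma2[j[k]] for k in order)   # sigma_2 o j
ker_j = kernel(j)
```

Here `ker_j` consists of the three blocks \(\{-1,2,3\}\), \(\{1,-3\}\), and \(\{-2\}\), the pre-images of 4, 1, and 3, respectively.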

Given a permutation \(\sigma \in \textrm{Sym}(S)\) and a partition \(\pi \in P(S)\), we let \(\sigma \circ \pi \) be the partition in P(S) given by

The map \(\pi \mapsto \sigma \circ \pi \) is a poset automorphism; in particular, it is order-preserving, so for all partitions \(\pi , \theta \in P(S_{})\) we get

Remark

Note that a partition \(\pi \in P(S_{1})\) and a function \({\textbf{j}}: S_{1} \rightarrow S_{2}\) satisfy \(\pi \le \textrm{ker} \left( {{\textbf{j}}} \right) \) if and only if the function \({\textbf{j}}\) is constant when restricted to each of the blocks of \(\pi \), i.e., \(j_{k} = j_{l}\) whenever \(k,l \in B\) for some block \(B \in \pi \).

Moreover, for any permutations \(\sigma _{l} \in \textrm{Sym}(S_{l})\) with \(l=1,2\), we have that \( \textrm{ker} \left( { {\textbf{j}} \circ \varvec{\sigma }_{1} } \right) = \sigma ^{-1}_{1} \circ \textrm{ker} \left( {{\textbf{j}}} \right) \) and \( \textrm{ker} \left( {{\textbf{j}}} \right) = \textrm{ker} \left( {\varvec{\sigma }_{2} \circ {\textbf{j}}} \right) \).
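Both statements of the remark lend themselves to a randomized check. The following sketch assumes that \(\sigma \circ \pi \) is the partition whose blocks are the images \(\sigma (B)\) of the blocks B of \(\pi \); the helper names, the fixed partition `pi`, and the seed are illustrative choices of ours.

```python
import random


def kernel(j):
    """Partition of the domain of j into non-empty pre-images."""
    blocks = {}
    for k, value in j.items():
        blocks.setdefault(value, set()).add(k)
    return {frozenset(B) for B in blocks.values()}


def act(sigma, pi):
    """sigma o pi, assumed to be the partition with blocks sigma(B)."""
    return {frozenset(sigma[k] for k in B) for B in pi}


def refines(pi, theta):
    return all(any(B <= C for C in theta) for B in pi)


def random_permutation(items, rng):
    images = list(items)
    rng.shuffle(images)
    return dict(zip(items, images))


def check_remark(trials=200, seed=0):
    rng = random.Random(seed)
    domain = [-1, 1, -2, 2, -3, 3]
    pi = {frozenset({-1, 2}), frozenset({1, -3, 3}), frozenset({-2})}
    for _ in range(trials):
        j = {k: rng.randint(1, 4) for k in domain}
        sigma1 = random_permutation(domain, rng)
        sigma2 = random_permutation([1, 2, 3, 4], rng)
        inverse1 = {v: k for k, v in sigma1.items()}
        j_sigma1 = {k: j[sigma1[k]] for k in domain}
        sigma2_j = {k: sigma2[j[k]] for k in domain}
        # pi <= ker(j) exactly when j is constant on every block of pi
        constant = all(len({j[k] for k in B}) == 1 for B in pi)
        if refines(pi, kernel(j)) != constant:
            return False
        # ker(j o sigma_1) = sigma_1^{-1} o ker(j) and ker(sigma_2 o j) = ker(j)
        if kernel(j_sigma1) != act(inverse1, kernel(j)):
            return False
        if kernel(sigma2_j) != kernel(j):
            return False
    return True
```

A randomized test of this kind does not replace the one-line proofs, but it is a cheap guard against sign or inverse mix-ups when these identities are used in longer computations.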

2.3 Some Random Matrices and the Joint Distribution of their Entries

Let I be a non-empty set. Suppose \(\{X_{i}\}_{i \in I}\) and \(\{Y_{i}\}_{i \in I}\) are two families of N-by-N random matrices defined on the same probability space. We say that \(\{X_{i}\}_{i \in I}\) and \(\{Y_{i}\}_{i \in I}\) are equal in distribution if we have

for all integers \(m\ge 1\), indexes \(i_{1},i_{2}, \ldots , i_{m} \in I\), and functions \({\textbf{j}}: [\pm m] \rightarrow [N]\).

A matrix \(\textrm{X} \in \textrm{Mat}_{N}({\mathbb {C}})\) is a signature matrix if there exist signs \(\epsilon _{1},\ldots ,\epsilon _{N} \in \{-1,1\}\) such that

An N-by-N random matrix X is a uniformly distributed signature matrix if it is uniformly distributed on the set of N-by-N signature matrices; in this case, for all functions \(\textbf{i,j}: S \rightarrow [N]\) we have

A matrix \( \textrm{W} \in \textrm{Mat}_{N}({\mathbb {C}})\) is a signed permutation matrix if there exist signs \(\epsilon _{1},\ldots ,\epsilon _{N} \in \{-1,1\}\) and a permutation \(\sigma \in \textrm{Sym}(N) \) such that

An N-by-N random matrix W is a uniformly distributed signed permutation matrix if it is uniformly distributed on the set of N-by-N signed permutation matrices; if that is the case, for all functions \(\textbf{i,j}: S \rightarrow [N]\) we get

Remark

Suppose \(\{V_{i}\}_{i \in I}\) is a family of N-by-N random matrices distribution-invariant under conjugation by signed permutation matrices, i.e., the families \(\{V_{i}\}_{i \in I}\) and \(\{W^{*} V_{i} W \}_{i \in I}\) are equal in distribution for every signed permutation matrix W. Then, for all integers \(m\ge 1\), indexes \(i_{1},i_{2}, \ldots , i_{m} \in I\), and functions \({\textbf{j}}: [\pm m] \rightarrow [N]\), we have

for all signs \(\epsilon _{1},\ldots ,\epsilon _{N} \in \{-1,1\}\) and permutations \(\sigma \in \textrm{Sym}(N)\).
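For the simplest ensemble of this subsection, the joint moments of the entries can be verified exhaustively. The sketch below takes a signature matrix to be \(\textrm{diag}(\epsilon _{1},\ldots ,\epsilon _{N})\) with independent uniform signs, and checks the elementary fact that \({\mathbb {E}}[\prod _{k} X(i_{k},j_{k})]\) equals 1 when \(i_{k}=j_{k}\) for all k and every index value occurs an even number of times, and equals 0 otherwise. This formulation is our own restatement of the entry-wise distribution and may differ in form from the displayed formula of the paper.

```python
from collections import Counter
from fractions import Fraction
from itertools import product


def expected_product(N, i, j):
    """Exact E[prod_k X(i_k, j_k)] for X = diag(eps), eps uniform on {-1, 1}^N."""
    total = 0
    for eps in product((-1, 1), repeat=N):
        term = 1
        for a, b in zip(i, j):
            # off-diagonal entries of a signature matrix vanish
            term *= eps[a - 1] if a == b else 0
        total += term
    return Fraction(total, 2 ** N)


def predicted(i, j):
    """1 if i == j and every index value appears an even number of times, else 0."""
    if tuple(i) != tuple(j):
        return 0
    return 1 if all(c % 2 == 0 for c in Counter(i).values()) else 0


def check_signature_moments(N=3, max_len=3):
    for n in range(1, max_len + 1):
        for i in product(range(1, N + 1), repeat=n):
            for j in product(range(1, N + 1), repeat=n):
                if expected_product(N, i, j) != predicted(i, j):
                    return False
    return True
```

In the language of Sect. 2.2, the condition on the index values says that \(\textrm{ker}({\textbf{i}})\) is an even partition.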

2.4 Non-commutative Polynomials and their Evaluation on Families of Random Matrices

Let I be a non-empty set. We denote by \({\mathbb {C}}\left\langle \textrm{x}_{i} \mid i \in I \right\rangle \) the algebra of non-commutative polynomials on the family of variables \(\{\textrm{x}_{i} \mid i \in I\}\). Let us recall that \({\mathbb {C}}\left\langle \textrm{x}_{i} \mid i \in I \right\rangle \) is the algebra over \({\mathbb {C}}\) with a basis consisting of all the words in the alphabet \(\{\textrm{x}_{i} \mid i \in I\}\), including the empty word which acts as multiplicative identity, and the product of two basis elements is given by concatenation. Thus, a basis element is a word of the form

for some integer \(r\ge 0\) and some indexes \(i_{1},i_{2},\ldots , i_{r} \in I\), and if \(\textrm{x}_{j_{1}}\textrm{x}_{j_{2}}\cdots \textrm{x}_{j_{r}}\) is another basis element, we have

Given polynomials \(\textrm{p}_{1},\textrm{p}_{2}, \ldots , \textrm{p}_{m}\) in the algebra \({\mathbb {C}}\left\langle \textrm{x}_{i} \mid i \in I \right\rangle \) and a set \(S=\{ k_{1}< k_{2}< \cdots < k_{n} \} \subset [m]\), we let

Suppose we are given random matrix ensembles \(\{X_{N,i}\}_{N=1}^{\infty }\) with \(i\in I\) where each \(X_{N,i}\) is an N-by-N random matrix. For each non-commutative polynomial \(\textrm{p} \in {\mathbb {C}}\left\langle \textrm{x}_{i} \mid i \in I \right\rangle \), we denote by

the random matrix obtained from replacing each \(\textrm{x}_{i}\) appearing in the polynomial \(\textrm{p}\) with the random matrix \(X_{N,i}\) for every \(i\in I\) and the constant term of \(\textrm{p}\), say \(\alpha \), with the scalar multiple of the identity matrix \(\alpha I_{N}\). For instance, if \(\textrm{p}(\textrm{x}_{1},\textrm{x}_{2}) = \textrm{x}_{1} \textrm{x}_{2} - \textrm{x}^{2}_{2} + 4 \), then

$$\begin{aligned} \textrm{p}(X_{N,1},X_{N,2}) = X_{N,1} X_{N,2} - X^{2}_{N,2} + 4 I_{N} . \end{aligned}$$

3 Graph Sums of Square Matrices

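In this section, we review and prove some useful results on graph sums of square matrices. A graph sum of given matrices \(A_{1},A_{2},\ldots ,A_{m} \in \textrm{Mat}_N({\mathbb {C}})\) is a sum of the form

$$\begin{aligned} \sum _{\begin{array}{c} {\textbf{j}} :[\pm m]\rightarrow [N] \\ \textrm{ker} \left( {{\textbf{j}}} \right) \ge \pi \end{array} } \prod _{k=1}^{m} A_{k}( j_{-k},j_{k}) \end{aligned}$$ (3.1)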

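for some partition \(\pi \in P(\pm m)\). Note that the condition \( \textrm{ker} \left( {{\textbf{j}}} \right) \ge \pi \) in the sum above is simply a restatement of a set of equalities between the indexes \(j_{\pm k}\). For example, if we let \(\pi = \{\{1,-2\},\{2,-3\},\ldots ,\{m-1,-m\},\{m,-1\}\}\), then \( \textrm{ker} \left( {{\textbf{j}}} \right) \ge \pi \) only if \(j_{1} = j_{-2},j_{2}=j_{-3},\ldots , j_{m-1}=j_{-m}\), and \(j_{m}=j_{-1}\), and thus, we get

$$\begin{aligned} \sum _{\begin{array}{c} {\textbf{j}} :[\pm m]\rightarrow [N] \\ \textrm{ker} \left( {{\textbf{j}}} \right) \ge \pi \end{array} } \prod _{k=1}^{m} A_{k}( j_{-k},j_{k}) = \sum _{j_{1},j_{2},\ldots ,j_{m}=1}^{N} A_{1}(j_{m},j_{1}) A_{2}(j_{1},j_{2}) \cdots A_{m}(j_{m-1},j_{m}) = \textrm{Tr} \left( {A_{1}A_{2}\cdots A_{m}} \right) . \end{aligned}$$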

It is worth mentioning that although the labeling of the entries of \(A_{k}\) in (3.1) is not customary, it has proven to be suitable for many of our calculations; moreover, for a bijection \(\sigma : [\pm m ] \rightarrow S \), the relation

provides the link between the labeling of the entries of \(A_{k}\) in (3.1) and any other labeling. For instance, if \(\sigma : [\pm m ] \rightarrow [2m] \) is given by \(\sigma (-k)=2k-1\) and \(\sigma (k)=2k\) for \(1 \le k \le m\), then

where \({{\hat{\pi }}} = \{\{1,2\},\{3,4\},\ldots ,\{2\,m-1,2\,m\}\}\) and \(\pi = \sigma ^{-1}\circ {{\hat{\pi }}} = \{\{-1,1\},\{-2,2\},\ldots ,\{-m,m\}\}\). The type of sums above are named graph sums because they can be associated with certain graphs that, as we will see next, help us analyze the corresponding sums.
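For small N, a graph sum can be evaluated by brute force directly from its definition. The sketch below uses the fact that summing over all \({\textbf{j}}\) with \(\textrm{ker}({\textbf{j}}) \ge \pi \) amounts to choosing one free index value per block of \(\pi \); it then compares the result with the trace formulas for the cyclic partition \(\{\{1,-2\},\{2,-3\},\{3,-1\}\}\) and for \(\{\{-1,1\},\{-2,2\},\{-3,3\}\}\). The function names and the test matrices are illustrative choices of ours.

```python
from itertools import product


def graph_sum(matrices, pi):
    """Sum over j: [+-m] -> [N] with ker(j) >= pi of prod_k A_k(j_{-k}, j_k)."""
    m, N = len(matrices), len(matrices[0])
    blocks = [sorted(B) for B in pi]
    total = 0
    # ker(j) >= pi means j is constant on each block: one free value per block
    for values in product(range(N), repeat=len(blocks)):
        j = {k: v for B, v in zip(blocks, values) for k in B}
        term = 1
        for k in range(1, m + 1):
            term *= matrices[k - 1][j[-k]][j[k]]
        total += term
    return total


def matmul(A, B):
    n = len(A)
    return [[sum(A[r][k] * B[k][c] for k in range(n)) for c in range(n)]
            for r in range(n)]


def trace(A):
    return sum(A[r][r] for r in range(len(A)))


A1 = [[1, 2], [3, 4]]
A2 = [[0, 1], [1, 0]]
A3 = [[2, 0], [1, 1]]

cyclic = [{1, -2}, {2, -3}, {3, -1}]
loops = [{-1, 1}, {-2, 2}, {-3, 3}]

cyclic_sum = graph_sum([A1, A2, A3], cyclic)     # Tr(A1 A2 A3)
loops_sum = graph_sum([A1, A2, A3], loops)       # Tr(A1) Tr(A2) Tr(A3)
```

The cost of this enumeration is \(N^{\#(\pi )}\) terms, so it is meant only as a reference implementation against which closed formulas can be tested.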

3.1 Bounds of Graph Sums of General Square Matrices

The main result in [17] concerns more general graph sums, allowing the matrices \(A_{k}\) in (3.1) to be rectangular and not necessarily square. For graph sums of square matrices, however, the result takes the following form.

Theorem 5

Suppose \(\pi \) is a partition in \(P(\pm m)\). Then, there exists a rational number \(\tau _{\pi } \in \{ 1, \frac{3}{2}, 2,\ldots \}\) depending only on the partition \(\pi \) such that for every integer \(N\ge 1\) the following two conditions hold:

(a) for all matrices \( A_{1}, A_{2}, \ldots ,A_{m}\in \textrm{Mat}_N({\mathbb {C}}) \), we have

$$\begin{aligned} \left| \sum _{\begin{array}{c} {\textbf{j}} :[\pm m]\rightarrow [N] \\ \textrm{ker} \left( {{\textbf{j}}} \right) \ge \pi \end{array} } \prod _{k=1}^{m} A_{k}( j_{-k},j_{k}) \right| \le N^{\tau _{\pi }} \prod _{k=1}^{m} \left\| {A_{k}} \right\| \end{aligned}$$

(b) there are some nonzero matrices \(B_{1}, B_{2}, \ldots ,B_{m} \in \textrm{Mat}_N({\mathbb {C}}) \) satisfying

$$\begin{aligned} \left| \sum _{\begin{array}{c} {\textbf{j}} :[\pm m]\rightarrow [N] \\ \textrm{ker} \left( {{\textbf{j}}} \right) \ge \pi \end{array} } \prod _{k=1}^{m} B_{k}( j_{-k},j_{k}) \right| = N^{\tau _{\pi }} \prod _{k=1}^{m} \left\| {B_{k}} \right\| \end{aligned}$$

Note that \(\tau _{\pi }\) is uniquely determined by (a) and (b). We call \(\tau _{\pi }\) the graph sum exponent of \(\pi \).

It is also shown in [17] that the graph sum exponent \(\tau _{\pi }\) can be algorithmically computed analyzing the two-edge connectedness of a graph associated with \(\pi \). For the reader’s convenience, we recount such algorithm next.

Step 1. Given a partition \(\pi \in P(\pm m)\), consider the undirected graph \({\mathcal {G}}_{\pi }\) resulting from, first, taking edges \(E_{1}, E_{2},\ldots ,E_{m}\) with endpoints \(-1,+1,-2,+2,\ldots ,-m,+m\), respectively, and, then, identifying endpoints when they belong to the same block of \(\pi \).

Step 2. Identify the cutting edges and the two-edge connected components of \({\mathcal {G}}_{\pi }\). Recall that a cutting edge of a graph, also known as a bridge, is an edge whose removal increases the number of connected components. Moreover, a graph is two-edge connected if it is connected and has no cutting edges, and, consequently, a two-edge connected component of a graph is a subgraph that is maximal, under the usual graph inclusion, in the set of all two-edge connected subgraphs.

Step 3. Letting \({\mathcal {F}}_{\pi }\) denote the graph with vertex set given by the set of all two-edge connected components of \({\mathcal {G}}_{\pi }\) and edge set given by the set of all cutting edges of \({\mathcal {G}}_{\pi }\), the graph sum exponent \(\tau _{\pi }\) is given by

$$\begin{aligned} \tau _{\pi } = \sum _{v \text { vertex in } {\mathcal {F}}_{\pi } } {\mathfrak {l}}(v) \quad \text {where} \quad {\mathfrak {l}}(v) := \left\{ \begin{array}{cl} \frac{1}{2} &{} \text { if } \deg (v) = 1, \\ 1 &{} \text { if } \deg (v) = 0, \\ 0 &{} \text {otherwise}. \end{array}\right. \end{aligned}$$ (3.3)

and \(\deg (v)\) denotes the degree of the vertex v in the graph \({\mathcal {F}}_{\pi }\).
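The three steps above translate into a short program. The following is a minimal sketch rather than an optimized implementation: cutting edges are detected naively by deleting one edge at a time and recounting connected components, and the two-edge connected components are obtained as the connected components that remain once all cutting edges are removed. The function names are hypothetical.

```python
from fractions import Fraction


def component_labels(n_vertices, edges):
    """Return a component label for each vertex, using a small union-find."""
    parent = list(range(n_vertices))

    def find(v):
        while parent[v] != v:
            parent[v] = parent[parent[v]]
            v = parent[v]
        return v

    for a, b in edges:
        parent[find(a)] = find(b)
    return [find(v) for v in range(n_vertices)]


def graph_sum_exponent(pi, m):
    """Graph sum exponent tau_pi of a partition pi of {-1, 1, ..., -m, m}."""
    blocks = [set(B) for B in pi]
    vertex = {k: idx for idx, B in enumerate(blocks) for k in B}
    n = len(blocks)
    # Step 1: the edge E_k joins the blocks containing -k and +k.
    edges = [(vertex[-k], vertex[k]) for k in range(1, m + 1)]
    # Step 2: E_e is a cutting edge if deleting it creates a new component.
    base = len(set(component_labels(n, edges)))
    bridges = [e for e in range(m)
               if len(set(component_labels(n, edges[:e] + edges[e + 1:]))) > base]
    kept = [edges[e] for e in range(m) if e not in bridges]
    comp = component_labels(n, kept)     # two-edge connected components
    # Step 3: degrees in F_pi and the contributions l(v) from (3.3).
    degree = {c: 0 for c in set(comp)}
    for e in bridges:
        a, b = edges[e]
        degree[comp[a]] += 1
        degree[comp[b]] += 1
    contribution = {0: Fraction(1), 1: Fraction(1, 2)}
    return sum(contribution.get(d, Fraction(0)) for d in degree.values())


star = [{1, 2, 3}, {-1}, {-2}, {-3}]          # three pendant edges at one vertex
path = [{-1}, {1, -2}, {2}]                   # a path with two edges
cycle = [{1, -2}, {2, -3}, {3, -4}, {4, -1}]  # a single cycle

tau_star = graph_sum_exponent(star, 3)
tau_path = graph_sum_exponent(path, 2)
tau_cycle = graph_sum_exponent(cycle, 4)
```

For the star, the central vertex has degree 3 in \({\mathcal {F}}_{\pi }\) and contributes nothing, while each of the three leaves contributes \(\frac{1}{2}\), giving \(\tau _{\pi } = \frac{3}{2}\); for the path and the cycle one gets \(\tau _{\pi } = 1\). A linear-time bridge-finding algorithm could replace the naive deletion test for large m.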

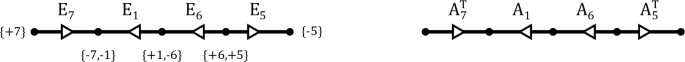

Example

The undirected graph \({\mathcal {G}}_{\pi }\) associated with the partition

can be represented as

Hence, the cutting edges of \({\mathcal {G}}_{\pi }\) are \(E_{3}\), \(E_{5}\), \(E_{6}\), \(E_{7}\), \(E_{8}\), and \(E_{9}\); moreover, the two-edge connected components of \({\mathcal {G}}_{\pi }\) are exactly what remains of \({\mathcal {G}}_{\pi }\) after removing all of its cutting edges. The graph \({\mathcal {F}}_{\pi }\) can be obtained from \({\mathcal {G}}_{\pi }\) by shrinking each of the two-edge connected components of \({\mathcal {G}}_{\pi }\) to a vertex, and thus, if we represent the cutting edges of \({\mathcal {G}}_{\pi }\) with dashed lines, we obtain

where \({\mathcal {F}}_{\pi }\) is the graph on the right and next to each of its vertices we have placed the corresponding contribution \({\mathfrak {l}}(v)\) to the graph sum exponent \(\tau _{\pi }\). Therefore, we have \(\tau _{\pi } = 4\).

Having described the algorithm to compute \(\tau _{\pi }\), we can now show that graph sum exponents of even partitions can be easily calculated.

Proposition 6

If \(\pi \in P( \pm m) \) is an even partition, then the graph sum exponent \(\tau _{\pi }\) equals the number of connected components of \({\mathcal {G}}_{\pi }\).

Proof

By Equation (3.3), it suffices to show that the graph \({\mathcal {G}}_{\pi }\) has no cutting edges. Since \(\pi \) is even, every vertex of \({\mathcal {G}}_{\pi }\) has even degree, where each loop is counted twice. Suppose \({\mathcal {G}}_{\pi }\) has a cutting edge. If we remove such a cutting edge, its connected component splits into two disjoint graphs, each of which has one single vertex of odd degree and the other vertices of even degree. But this contradicts the handshaking lemma, which states that in any graph the sum of degrees over all its vertices must be even. Thus, \({\mathcal {G}}_{\pi }\) has no cutting edges, and hence, all its connected components are two-edge connected. \(\square \)

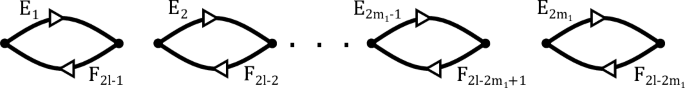

Now, the directed graph \(\vec {{\mathcal {G}}}_{\pi }\), which results from endowing each edge \(E_{k}\) in the graph \({\mathcal {G}}_{\pi }\) with the direction that goes from \(+k\) to \(-k\), can sometimes be used to describe the corresponding graph sum. In particular, a graph sum factors as a product of traces of matrices when all connected components of \({\mathcal {G}}_{\pi }\) are either bouquets, to which we refer as multiple loops, or cycles; each connected component then gives rise to a trace. For example, for the partition

and given matrices \(A_{1},A_{2},\ldots ,A_{8} \in \textrm{Mat}_N({\mathbb {C}})\), we have the graph sum

where the right-hand side can be deduced from analyzing the directed graph \(\vec {{\mathcal {G}}}_{\pi }\) as follows:

(1) The corresponding directed graph \(\vec {{\mathcal {G}}}_{\pi }\) has exactly three connected components, two cycles and one double-loop, and can be represented as

Each cycle and each multiple-loop gives rise to a trace in the right-hand side of (3.4).

(2) If a connected component of \(\vec {{\mathcal {G}}}_{\pi }\) is a cycle, we unfold it to obtain a horizontal line and replace each edge \(E_{k}\) by the matrix \(A_{k}\) if the direction of \(E_{k}\) goes from right to left in the horizontal line; otherwise, we replace \(E_{k}\) by \(A^{T}_{k}\), the transpose of \(A_{k}\).

We then put the matrices \(A_k\) or \(A_{k}^{T}\) in a trace \(\textrm{Tr} \left( {\cdot } \right) \) as they appear when we read the resulting horizontal line from left to right. For instance, the longest cycle of \(\vec {{\mathcal {G}}}_{\pi }\) gives

And so, we obtain the trace \(\textrm{Tr} \left( { A_{7}^{T} A_{1}^{} A_{6}^{} A_{5}^{T} } \right) \) in (3.4). Note that \(\textrm{Tr} \left( { A_{1}^{} A_{6}^{} A_{5}^{T} A_{7}^{T} } \right) \) and \(\textrm{Tr} \left( { A_{2}^{} A_{3}^{} } \right) \) do not depend on how the cycles in \(\vec {{\mathcal {G}}}_{\pi }\) are unfolded since for any matrices \(A,B \in \textrm{Mat}_N({\mathbb {C}})\) we have \(\textrm{Tr} \left( {AB} \right) = \textrm{Tr} \left( {BA} \right) \), \(\textrm{Tr} \left( {A} \right) = \textrm{Tr} \left( {A^{T}} \right) \), and \((AB)^{T} = B^{T}A^{T}\).

(3) On the other hand, a multiple-loop in \(\vec {{\mathcal {G}}}_{\pi }\) with edges \(E_{k_{1}},E_{k_{2}},\ldots ,E_{k_{n}}\) yields the trace of the Hadamard product of \(A_{k_{1}},A_{k_{2}},\ldots ,A_{k_{n}}\). This way, we get \(\textrm{Tr} \left( {A_{4} \circ A_{8}} \right) \) in (3.4).
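The rules (1) to (3) can be tested on the two smallest non-trivial cases by comparing a brute-force graph sum with the predicted traces. In the sketch below, the partition \(\{\{-1,1,-2,2\}\}\) is a double-loop and should give \(\textrm{Tr}(A_{1} \circ A_{2})\), the partition \(\{\{-1,-2\},\{1,2\}\}\) is a cycle of length two in which one edge is traversed against its direction and should give \(\textrm{Tr}(A_{1}A_{2}^{T})\), and \(\{\{1,-2\},\{2,-1\}\}\) is a consistently oriented cycle giving \(\textrm{Tr}(A_{1}A_{2})\). These partitions and the matrices are illustrative choices of ours and are not the ones of the example above.

```python
from itertools import product


def graph_sum(matrices, pi):
    """Brute-force graph sum: one free index value per block of pi."""
    m, N = len(matrices), len(matrices[0])
    blocks = [sorted(B) for B in pi]
    total = 0
    for values in product(range(N), repeat=len(blocks)):
        j = {k: v for B, v in zip(blocks, values) for k in B}
        term = 1
        for k in range(1, m + 1):
            term *= matrices[k - 1][j[-k]][j[k]]
        total += term
    return total


A1 = [[1, 2], [3, 4]]
A2 = [[5, 6], [7, 8]]
N = 2

# Tr(A1 o A2): trace of the entry-wise (Hadamard) product
hadamard_trace = sum(A1[d][d] * A2[d][d] for d in range(N))
# Tr(A1 A2^T) = sum_{a,b} A1(a,b) A2(a,b)
transposed_trace = sum(A1[a][b] * A2[a][b] for a in range(N) for b in range(N))
# Tr(A1 A2) = sum_{a,b} A1(a,b) A2(b,a)
plain_trace = sum(A1[a][b] * A2[b][a] for a in range(N) for b in range(N))

double_loop = graph_sum([A1, A2], [{-1, 1, -2, 2}])
reversed_cycle = graph_sum([A1, A2], [{-1, -2}, {1, 2}])
oriented_cycle = graph_sum([A1, A2], [{1, -2}, {2, -1}])
```

Using non-symmetric matrices matters here: for symmetric \(A_{2}\) the transposed and plain traces coincide, and the test would not distinguish the two cycle orientations.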

Thus, if \(\pi \) is now given by

the corresponding directed graph \(\vec {{\mathcal {G}}}_{\pi }\) can be represented as

and hence, we obtain

3.2 Bounds of Graph Sums of the Discrete Fourier Transform Matrix

Although the bound for graph sums given by Theorem 5 is optimal in the set of all square matrices, it might not be optimal for some graph sums involving the discrete Fourier transform matrix. Let us recall that the N-by-N discrete Fourier transform matrix is the symmetric matrix H with entries given by

where \(\omega = \exp (-\frac{2 \pi }{N} \sqrt{-1} ) \) is a primitive N-th root of unity. Now, letting \({\textbf{h}}({\textbf{j}})\) be given by

for each function \({\textbf{j}}: [\pm 2m] \rightarrow [N]\), Theorem 5 gives us that

for any partition \(\pi \in P(\pm 2m)\); on the other hand, since \( {\textbf{h}}({\textbf{j}})\) has absolute value 1, we also obtain

Thus, if \(\pi \) is the partition \(\{\{2k-1,-2k+1,2k,-2k\} \mid k = 1,2,\ldots , m\}\), then the graph sum exponent \(\tau _{\pi }\) equals m, and hence, \(\tau _{\pi } + m = 2m\), but also \(\#(\pi ) = m\), so (3.8) is a sharper bound than (3.7) in this case. In general, we prefer (3.8) over (3.7) since (3.8) is invariant under re-labeling of the entries of H and \(H^{*}\) in (3.6); namely, given a permutation \(\sigma \in \textrm{Sym}( \pm 2m )\) and letting

for any function \({\textbf{j}}: [\pm 2m] \rightarrow [N]\), the inequality in (3.8) implies

since we have the relation

Moreover, in the proof of Theorem 1, we will need to consider sums of the form

where \(m=m_{1}+m_{2}\) for some integers \(m_{1},m_{2} \ge 1\) and \(\sigma \in \textrm{Sym}(\pm 2m)\) is the permutation with cycle decomposition given by

Although the sum in (3.10) is not a graph sum, it can be determined up to a term of order \(N^{\#{\pi }-1}\) analyzing (3.9) since for every partition \( \pi \in P(\pm 2m)\) we have

The rest of this section is devoted to finding and classifying partitions \(\pi \) such that (3.8) becomes an equality. To do that, let us first associate a polynomial to each partition \(\pi \in P (\pm 2m)\).

The polynomial \(\varvec{\textrm{p}_{\pi }}\). Given a partition \(\pi =\{B_{1},B_{2},\ldots ,B_{r}\} \in P(\pm 2m)\), we let \(\textrm{p}_{\pi }(\textrm{x}_{1},\textrm{x}_{2},\ldots ,\textrm{x}_{r})\), or simply \(\textrm{p}_{\pi }\), be the polynomial obtained from the expression

after replacing each variable \(\textrm{x}_{k}\) by \(\textrm{x}_{l}\) whenever k belongs to the block \(B_{l}\). For instance, if \(\pi =\{B_{1}=\{-1,3\},B_{2}=\{-3,1\},B_{3}=\{-2,2\},B_{4}=\{-4,4\}\}\), then

Equivalently, the polynomial \(\textrm{p}_{\pi }(\textrm{x}_{1},\textrm{x}_{2},\ldots ,\textrm{x}_{r})\) is the image of (3.13) under the unique homomorphism from \({\mathbb {Z}}\left[ \textrm{x}_{-1},\textrm{x}_{1},\ldots ,\textrm{x}_{-2m},\textrm{x}_{2m}\right] \) to \({\mathbb {Z}}[\textrm{x}_{1}, \textrm{x}_{2}, \ldots , \textrm{x}_{r}]\) such that \(\textrm{x}_{k} \mapsto \textrm{x}_{l} \) whenever \(k \in B_{l}\). Note that \(\textrm{p}_{\pi }(\textrm{x}_{1},\textrm{x}_{2},\ldots ,\textrm{x}_{r})\) has degree either 0 or 2 and can also be explicitly defined as

where

moreover, \(\textrm{p}_{\pi }(\textrm{x}_{1},\textrm{x}_{2},\ldots ,\textrm{x}_{r})\) satisfies the relation

Therefore, (3.8) becomes an equality precisely when \(\textrm{p}_{\pi }(\textrm{x}_{1},\textrm{x}_{2},\ldots ,\textrm{x}_{r})\) is the zero polynomial. On the other hand, if \(\textrm{p}_{\pi }(\textrm{x}_{1},\textrm{x}_{2},\ldots ,\textrm{x}_{r})\) is a nonzero polynomial, we can then find a sharper bound than (3.8) via the reciprocity theorem for generalized Gauss sums, see [3, Section 1.2] for a proof of this theorem.
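The reciprocity theorem invoked here can be checked numerically on small integers. The sketch below assumes the standard form of the statement, with \(S(a,b,c) = \sum _{n=0}^{|c|-1} e^{\pi \sqrt{-1}(an^{2}+bn)/c}\) and \(S(a,b,c) = |c/a|^{1/2} e^{\pi \sqrt{-1}(|ac|-b^{2})/(4ac)} S(-c,-b,a)\) whenever \(ac \ne 0\) and \(ac+b\) is even; this normalization is written from the general literature and is an assumption as far as the notation of this paper is concerned. The function names are hypothetical.

```python
import cmath
import math


def gauss_sum(a, b, c):
    """Generalized quadratic Gauss sum S(a, b, c) (assumed normalization)."""
    return sum(cmath.exp(1j * math.pi * (a * n * n + b * n) / c)
               for n in range(abs(c)))


def reciprocity_rhs(a, b, c):
    """Right-hand side |c/a|^(1/2) e^{pi i (|ac| - b^2) / (4ac)} S(-c, -b, a)."""
    phase = cmath.exp(1j * math.pi * (abs(a * c) - b * b) / (4 * a * c))
    return math.sqrt(abs(c / a)) * phase * gauss_sum(-c, -b, a)


def max_defect(bound=6):
    """Largest |LHS - RHS| over all admissible triples with entries in [-bound, bound]."""
    worst = 0.0
    for a in range(-bound, bound + 1):
        for c in range(-bound, bound + 1):
            for b in range(-bound, bound + 1):
                if a != 0 and c != 0 and (a * c + b) % 2 == 0:
                    defect = abs(gauss_sum(a, b, c) - reciprocity_rhs(a, b, c))
                    worst = max(worst, defect)
    return worst
```

The point of the reciprocity relation for the bounds of this section is that it trades a sum with \(|c|\) terms for one with \(|a|\) terms: when \(c\) is proportional to N and \(a\) is fixed, the right-hand side makes the order \(N^{1/2}\) of the sum explicit.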

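The reciprocity theorem for generalized Gauss sums. Suppose a, b, c are integers with \(a, c\ne 0\) and \(ac+b\) even. Then,

$$\begin{aligned} S(a,b,c) := \sum _{n=0}^{|c|-1} e^{\pi \sqrt{-1} (a n^{2} + b n)/c} = \left| \frac{c}{a} \right| ^{1/2} e^{\pi \sqrt{-1} (|ac| - b^{2})/(4ac)} \, S(-c,-b,a) . \end{aligned}$$ (3.17)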

Proposition 7

If \(\textrm{p}(\textrm{x}_{1},\textrm{x}_{2},\ldots ,\textrm{x}_{r})\) is a nonzero polynomial of degree at most 2 in \({\mathbb {Z}} \left[ \textrm{x}_1, \textrm{x}_2, \ldots , \textrm{x}_r \right] \), then there exists a constant \(C_{\textrm{p}}\) independent of N such that

Proof

Suppose \(\textrm{p}(\textrm{x}_{1},\ldots ,\textrm{x}_{r}) \in {\mathbb {Z}} \left[ \textrm{x}_1, \ldots , \textrm{x}_r \right] \) is a nonzero polynomial of degree at most 2. Without loss of generality, we can assume that there is a nonzero linear polynomial \(\textrm{q}_{1}(\textrm{x}_{1},\ldots ,\textrm{x}_{r}) = \alpha _{1} \textrm{x}_{1} + \alpha _{2} \textrm{x}_{2} +\cdots + \alpha _{r} \textrm{x}_{r} \in {\mathbb {Z}} \left[ \textrm{x}_{1}, \textrm{x}_{2}, \ldots , \textrm{x}_{r} \right] \) and a polynomial \(\textrm{q}_{2}(\textrm{x}_{2},\ldots ,\textrm{x}_{r}) \in {\mathbb {Z}} \left[ \textrm{x}_{2}, \textrm{x}_{3}, \ldots , \textrm{x}_{r} \right] \) of degree at most 2 such that

Since we have the inequality

we only need to show that there is a constant \(C_{\textrm{p}}\) independent of N such that

Suppose \(\alpha _{1} \ne 0\). Then, we have that

where S(a, b, c) denotes the generalized Gauss quadratic sum as in (3.17). Thus, by the reciprocity theorem for generalized Gauss sums, we get

and therefore, we obtain

Now, suppose \(\alpha _{1} = 0\). Recall that

So, we have

But, since the polynomial \(\textrm{q}_{1}(\textrm{x}_{1},\ldots ,\textrm{x}_{r}) = \alpha _{1} \textrm{x}_{1} +\cdots + \alpha _{r} \textrm{x}_{r}\) is nonzero, we must have \(\alpha _{k} \ne 0\) for some \(k \ne 1\), and hence, the equation

has at most \(\left| {\alpha _{k}} \right| \) solutions in the set \(\{0,1,\ldots ,N-1\}\) for any given integer \(\beta \). Thus, we have

and therefore, we get

\(\square \)

As an immediate consequence of (3.12), (3.16), and Proposition 7, we have the following.

Corollary 8

If \(\textrm{p}_{\pi }(\textrm{x}_{1},\textrm{x}_{2},\ldots ,\textrm{x}_{r})\) is a nonzero polynomial for some partition \(\pi = \{B_{1},B_{2},\ldots ,B_{r}\} \in P(\pm 2\,m)\), then there is a constant \(C_{}\) independent of N so that

The next two propositions establish necessary and sufficient conditions for \(\textrm{p}_{\pi }(\textrm{x}_{1},\textrm{x}_{2},\ldots ,\textrm{x}_{r})\) to be the zero polynomial. Roughly speaking, the polynomial \(\textrm{p}_{\pi }(\textrm{x}_{1},\textrm{x}_{2},\ldots ,\textrm{x}_{r})\) is zero if and only if the blocks of the partition \(\pi \) group the elements of the set \([\pm 2m]\) in such a way that the positive and negative signs appearing in (3.13) cancel each other out.

Proposition 9

Suppose \(\pi = \{B_{1}, B_{2}, \ldots , B_{2m}\}\) is a pairing partition in \(P( \pm 2 m )\). Then the polynomial \(\textrm{p}_{\pi } (\textrm{x}_{1},\textrm{x}_{2},\ldots ,\textrm{x}_{2\,m})\) is zero if and only if \(\pi \) is a symmetric partition such that \(k \sim _\pi l\) implies \(k + l\) odd for all integers \(k,l \in [\pm 2 m]\).

Proof

Suppose \(\textrm{p}_{\pi } (\textrm{x}_{1},\textrm{x}_{2},\ldots ,\textrm{x}_{2\,m})\) is the zero polynomial and take \(a_{t,s}\) as in (3.15) for \(1 \le t \le s \le 2\,m\). To prove \(\pi \) is a symmetric partition such that \(k \sim _\pi l\) implies \(k + l\) odd for all integers \(k,l \in [\pm 2 m]\), it suffices to show that for every integer \(k \in [2m]\) there exists an integer \(l \in [2\,m]\) such that \(k+l\) is odd and either \(k \sim _\pi l\) and \(-k \sim _\pi -l\) or \(k \sim _\pi - l\) and \(-k \sim _\pi l\). Fix \(k \in [2m]\) and let \(t',s' \in [2\,m]\) be such that \(k \in B_{t'}\) and \(-k \in B_{s'}\). Since \(\textrm{p}_{\pi } (\textrm{x}_1, \ldots , \textrm{x}_{2\,m}) = \sum _{1 \le t \le s \le 2\,m} a_{t,s} \textrm{x}_{t} \textrm{x}_{s}\) is the zero polynomial, we must have \(a_{t',s'}= 0 \). Now, if \(t' \ne s'\), from (3.15) we get that

which implies there exists \(l \in [2\,m] \) such that \( (-1)^{k} + (-1)^{l}\) is zero and either \(l \in B_{t'}\) and \(-l \in B_{s'}\) or \(-l \in B_{t'}\) and \(l \in B_{s'}\). But this is equivalent to the desired conclusion. A similar argument works for the case \(s'=t'\).

Now, if the partition \(\pi \) is a symmetric pairing in \(P(\pm 2m)\) such that \(k \sim _\pi l\) implies \(k + l\) odd for all integers \(k,l \in [\pm 2\,m]\), we can write \(\pi =\{B_{1},B_{2},\ldots ,B_{2\,m}\}\) with \(B_{1}=\{-k_{1},-l_{1}\},B_{2}=\{k_{1},l_{1}\}, B_{3}=\{-k_{2},-l_{2}\},B_{4}=\{k_{2},l_{2}\},\ldots ,B_{2\,m}=\{k_{m},l_{m}\}\) and \(k_{1},l_{1},k_{2},l_{2},\ldots ,l_{m} \in [\pm 2m]\) satisfying \(k_{i}+l_{i}\) odd for \(i=1,2,\ldots , m\). Moreover, since \(\bigcup _{i=1}^{2\,m} B_{i}= [\pm 2\,m]\) and \((-1)^{k} = (-1)^{-k}\) for \(k \in [\pm 2m]\), we have

Therefore, from the definition of \(\textrm{p}_{\pi }(\textrm{x}_{1},\textrm{x}_{2},\ldots ,\textrm{x}_{2m})\) and the fact that \(k_{i}+l_{i}\) is odd for \(i=1,2,\ldots , m\), we get

\(\square \)
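Proposition 9 can be confirmed by enumeration for small m. Since the expression (3.13) is not reproduced in this section, the sketch below assumes that it has the form \(\sum _{k=1}^{2m} (-1)^{k} \textrm{x}_{-k}\textrm{x}_{k}\); this is only a guess on our part, chosen to be consistent with the signs \((-1)^{k}\) appearing in the proof above, and the conclusion is insensitive to an overall sign. All function names are hypothetical.

```python
from collections import defaultdict


def pairings(elements):
    """Yield all pairings of the list `elements` as lists of 2-tuples."""
    if not elements:
        yield []
        return
    first, rest = elements[0], elements[1:]
    for i, partner in enumerate(rest):
        for tail in pairings(rest[:i] + rest[i + 1:]):
            yield [(first, partner)] + tail


def p_coefficients(pi, m):
    """Nonzero coefficients a_{t,s} of p_pi under the ASSUMED form
    sum_{k=1}^{2m} (-1)^k x_{-k} x_k of expression (3.13)."""
    block_of = {k: idx for idx, B in enumerate(pi) for k in B}
    coeff = defaultdict(int)
    for k in range(1, 2 * m + 1):
        t, s = sorted((block_of[-k], block_of[k]))
        coeff[(t, s)] += (-1) ** k
    return {key: c for key, c in coeff.items() if c != 0}


def is_symmetric_with_odd_sums(pi):
    """pi is symmetric and k ~ l implies k + l odd (pi is a pairing)."""
    blocks = {frozenset(B) for B in pi}
    for B in blocks:
        if frozenset(-k for k in B) not in blocks:
            return False
        k, l = tuple(B)
        if (k + l) % 2 == 0:
            return False
    return True


def check_proposition_9(m):
    """Return (agreement, number of pairings with p_pi = 0) for P_2(+-2m)."""
    elements = [s * k for k in range(1, 2 * m + 1) for s in (-1, 1)]
    zero_count, agree = 0, True
    for pi in pairings(elements):
        is_zero = not p_coefficients(pi, m)
        zero_count += is_zero
        agree = agree and (is_zero == is_symmetric_with_odd_sums(pi))
    return agree, zero_count
```

For \(m=2\), the enumeration runs over the 105 pairings of \([\pm 4]\); under the stated assumption exactly 8 of them give the zero polynomial, namely those that match each odd magnitude with an even one and do so symmetrically.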

Proposition 10

Let \(\pi = \{B_1, B_2, \ldots , B_n\}\) be a partition in \(P( \pm 2\,m )\). If there is a partition \(\theta \in P( \pm 2\,m )\) such that \(\theta \le \pi \) and \(\textrm{p}_{\theta }\) is the zero polynomial, then \(\textrm{p}_{\pi }\) is also the zero polynomial. Conversely, if \(\textrm{p}_{\pi }\) is the zero polynomial, then there is a symmetric pairing partition \(\theta \le \pi \) such that \(\textrm{p}_{\theta }\) is the zero polynomial.

Proof

Suppose \(\theta \le \pi \) and \(\textrm{p}_{\theta }\) is the zero polynomial. Write \(\theta =\{B_{1,1},B_{1,2}, \ldots , B_{1,m_1},\ldots , B_{n,m_n} \}\) with \(B_{i} = \cup _{j=1}^{m_i}B_{i,j}\) for \(i=1,2,\ldots , n\). Take \({\mathcal {A}} = {\mathbb {Z}}\Big [\textrm{x}_{1},\textrm{x}_{-1},\ldots ,\textrm{x}_{2\,m},\textrm{x}_{-2\,m}\Big ]\), \({\mathcal {B}}={\mathbb {Z}}[\textrm{x}_{1,1}, \textrm{x}_{1,2}, \ldots , \textrm{x}_{1,m_1}, \ldots , \textrm{x}_{n,m_n}]\), and \({\mathcal {C}} = {\mathbb {Z}}[\textrm{x}_{1}, \textrm{x}_{2}, \ldots , \textrm{x}_{n}]\) and let \(\Phi : {\mathcal {A}} \rightarrow {\mathcal {B}}\) and \(\Psi : {\mathcal {B}} \rightarrow {\mathcal {C}}\) be the unique homomorphisms such that \( \Phi (\textrm{x}_{k})= \textrm{x}_{i,j}\) if \(k \in B_{i,j}\) and \(\Psi (\textrm{x}_{i,j}) = \textrm{x}_{i}\). Note that \((\Psi \circ \Phi ) (\textrm{x}_{k}) = \textrm{x}_{l}\) only if \(k \in B_{l}\), and thus, by definition of \(\textrm{p}_{\pi }\) and \(\textrm{p}_{\theta }\), we have that

Hence, if \(\textrm{p}_{\theta }\) is the zero polynomial, so is \(\textrm{p}_{\pi }\).

Suppose now \(\textrm{p}_{\pi }\) is the zero polynomial and let \(\theta \) be a minimal element of the set \( \{ {\widehat{\pi }} \in P(\pm 2\,m): {\widehat{\pi }} \le \pi \text{ and } \textrm{p}_{ {\widehat{\pi }}} = 0 \} \) endowed with the partial order inherited from \(P(\pm 2 m)\). Since \(\textrm{p}_{\theta }\) is the zero polynomial, it follows from the first part of Proposition 9’s proof that for every integer \(k \in [2m]\) there exists an integer \(l \in [2m]\) such that \(k+l\) is odd and either \(k \sim _\theta l\) and \(-k \sim _\theta -l\) or \(k \sim _\theta - l\) and \(-k \sim _\theta l\). Therefore, \(\theta \) lacks singletons and either \(\theta \) is a pairing partition or \(\theta \) has a block with at least three elements. Let us assume \(\theta = \{C_1, C_2, \ldots , C_n\}\) has a block with at least three elements, say \(C_{n}\). The previous property of \(\theta \)—borrowed from the first part of Proposition 9’s proof—implies there are integers \(k, l \in [2\,m]\) such that \(k+l\) is odd and at least one of the following conditions holds:

(1) \(+k, +l \in C_{n}\) and \(-k \sim _\theta -l\)

(2) \(+k, - l \in C_{n}\) and \(-k \sim _\theta +l\)

(3) \(-k, -l \in C_{n}\) and \(+k \sim _\theta +l\)

(4) \(-k, +l \in C_{n}\) and \(+k \sim _\theta -l\)

Assume (1) holds. Then, \( C_{n} {\setminus }\{k,l\}\) is not empty because \(C_{n}\) has at least three elements. Hence, letting \({\widehat{C}}_{i} = C_{i}\) for \(i=1,2,\ldots , n-1\), \({\widehat{C}}_{n} = C_{n} \setminus \{k,l\}\), and \({\widehat{C}}_{n+1}= \{k,l\}\), the collection \({{\widehat{\theta }}} = \{{\widehat{C}}_{1}, {\widehat{C}}_{2}, \ldots , {\widehat{C}}_{n+1} \}\) is a partition of \([\pm 2\,m ]\) such that \({{\widehat{\theta }}} \lneq \theta \), i.e., \(\theta \ge {{\widehat{\theta }}}\) but \(\theta \ne {{\widehat{\theta }}}\). Let us show that \(\textrm{p}_{{{\widehat{\theta }}}}\) must be the zero polynomial, contradicting the minimality of \(\theta \). Take \({\mathcal {A}} = {\mathbb {Z}}\left[ \textrm{x}_{1},\textrm{x}_{-1},\ldots ,\textrm{x}_{2\,m},\textrm{x}_{-2\,m}\right] \), \({\mathcal {B}}={\mathbb {Z}}[\textrm{x}_{1}, \textrm{x}_{2}, \ldots , \textrm{x}_{n}]\), and \(\widehat{{\mathcal {B}}} = {\mathbb {Z}}[\textrm{x}_{1}, \textrm{x}_{2}, \ldots , \textrm{x}_{n+1}]\), and let \(\Phi : {\mathcal {A}} \rightarrow {\mathcal {B}}\) and \({{\widehat{\Phi }}}: {\mathcal {A}} \rightarrow \widehat{{\mathcal {B}}}\) be the unique homomorphisms such that \( \Phi (\textrm{x}_{i})= \textrm{x}_{j}\) if \(i \in {C}_{j}\) and \({{\widehat{\Phi }}}(\textrm{x}_{i}) = \textrm{x}_{j}\) if \(i \in {\widehat{C}}_{j}\). Regarding \({\mathcal {B}}\) as a subring of \(\widehat{{\mathcal {B}}}\), and since \({\Phi }(\textrm{x}_{i}) = {\widehat{\Phi }}(\textrm{x}_{i})\) for \(i \in [\pm 2\,m] {\setminus } \{k,l\}\), we have

$$\begin{aligned} \textrm{p}_{\theta } - \textrm{p}_{{{\widehat{\theta }}}} = \sum _{i \in \{k,l\}} (-1)^{i} \Big [ \Phi (\textrm{x}_{-i}) \Phi (\textrm{x}_{i}) - {{\widehat{\Phi }}}(\textrm{x}_{-i}) {{\widehat{\Phi }}}(\textrm{x}_{i}) \Big ]. \end{aligned}$$

Moreover, since \(-k \sim _\theta -l\), we have \(\Phi ( \textrm{x}_{-k} ) = \Phi ( \textrm{x}_{-l} ) = {{\widehat{\Phi }}}( \textrm{x}_{-k} ) = {{\widehat{\Phi }}}( \textrm{x}_{-l} ) \), so we get

$$\begin{aligned} \textrm{p}_{\theta } - \textrm{p}_{{{\widehat{\theta }}}} = \Phi (\textrm{x}_{-k}) \Big [ (-1)^{k} + (-1)^{l} \Big ] \big ( \textrm{x}_{n} - \textrm{x}_{n+1} \big ) = 0 \end{aligned}$$

since \(k+l\) is odd, \(\Phi (\textrm{x}_{k}) = \Phi (\textrm{x}_{l}) = \textrm{x}_{n}\), and \({{\widehat{\Phi }}}(\textrm{x}_{k}) = {{\widehat{\Phi }}}(\textrm{x}_{l}) = \textrm{x}_{n+1}\). Thus, we obtain

$$\begin{aligned} \textrm{p}_{{{\widehat{\theta }}}} = \textrm{p}_{\theta } = 0. \end{aligned}$$

But then, \(\theta \) is not minimal, and therefore, (1) does not hold. Similar arguments show that neither (2), nor (3), nor (4) hold. Therefore, the partition \(\theta \) must be a pairing, and, in fact, a symmetric pairing by Proposition 9. \(\square \)
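The two halves of this proof can be checked by brute force in the smallest case \(m=1\). The sketch below is only an illustration, not part of the argument: it takes \(\textrm{p}_{\pi }\) to be the image of \(\sum _{i=1}^{2m} (-1)^{i} \textrm{x}_{-i} \textrm{x}_{i}\) under the homomorphism that identifies variables lying in the same block, and the helper names (`p_coefficients`, `is_zero`, `set_partitions`, `refines`) are hypothetical.

```python
from collections import Counter


def p_coefficients(partition, m):
    """Nonzero coefficients of p_pi, keyed by unordered pairs of block indices."""
    block_of = {e: j for j, block in enumerate(partition) for e in block}
    coeffs = Counter()
    for i in range(1, 2 * m + 1):
        # x_{-i} x_i is sent to the product of the two block variables.
        key = tuple(sorted((block_of[-i], block_of[i])))
        coeffs[key] += (-1) ** i
    return {key: c for key, c in coeffs.items() if c != 0}


def is_zero(partition, m):
    """True when p_pi is the zero polynomial."""
    return not p_coefficients(partition, m)


def set_partitions(elements):
    """Yield every partition of a list, each as a list of blocks."""
    if not elements:
        yield []
        return
    first, rest = elements[0], elements[1:]
    for part in set_partitions(rest):
        yield [[first]] + part
        for idx in range(len(part)):
            yield part[:idx] + [[first] + part[idx]] + part[idx + 1:]


def refines(theta, pi):
    """True when theta <= pi: every block of theta lies inside a block of pi."""
    return all(any(set(b) <= set(c) for c in pi) for b in theta)


m = 1
partitions = list(set_partitions([1, -1, 2, -2]))
zero_partitions = [pi for pi in partitions if is_zero(pi, m)]
pairings = [pi for pi in partitions if all(len(b) == 2 for b in pi)]

for pi in partitions:
    below = [th for th in partitions if refines(th, pi)]
    # First half: a zero polynomial below pi forces p_pi = 0.
    if any(is_zero(th, m) for th in below):
        assert is_zero(pi, m)
    # Second half: p_pi = 0 forces a pairing below pi with zero polynomial.
    if is_zero(pi, m):
        assert any(is_zero(th, m) for th in below if th in pairings)
```

The enumeration is exponential in \(m\), so this is meant only as a sanity check for very small cases.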

As mentioned earlier, in proving Theorem 1, we need to consider sums as in (3.10). Note that if \(\textrm{p}_{\sigma ^{-1} \circ \pi }\) is the zero polynomial for some permutation \(\sigma \in \textrm{Sym}(\pm 2m)\), then \({\textbf{h}}({\textbf{j}}) = 1\) for any function \({\textbf{j}}:[\pm 2\,m]\rightarrow [N] \) satisfying \( \textrm{ker} \left( {{\textbf{j}}} \right) \ge \sigma ^{-1} \circ \pi \), and hence, we would get

On the other hand, if the polynomial \(\textrm{p}_{\sigma ^{-1} \circ \pi }\) is nonzero, we have that (3.10) is of order \(N^{\#(\pi ) - 1/2}\) by Corollary 8. We will now use the previous results to classify all symmetric pairing partitions \(\pi \) for which \(\textrm{p}_{\sigma ^{-1} \circ \pi }\) is the zero polynomial.

Lemma 11

Let \(m=m_{1} + m_{2}\) for some integers \(m_{1}, m_{2}\ge 1\), and let \(\sigma \) be the permutation given by (3.11). Suppose k and l are integers in \([2\,m]\) and \(\pi \) is a symmetric pairing partition in \(P(\pm 2\,m)\) such that \(\textrm{p}_{\sigma ^{-1} \circ \pi }\) is the zero polynomial. If \(-k \sim _\pi l\), then \(\sigma ^{-t}(-k) \sim _\pi \sigma ^{t}(l)\) for every integer \(t \ge 0\). On the other hand, if \(-k \sim _\pi -l\), then \(\sigma ^{t}(-k) \sim _\pi \sigma ^{t}(-l)\) for every integer \(t \ge 0\).

Proof

Note that \({\hat{k}} \sim _{\pi } {\hat{l}} \) implies \(\sigma (- \sigma ^{-1} ({\hat{k}}) ) \sim _{\pi } \sigma (- \sigma ^{-1} ({\hat{l}}) )\). Indeed, by Proposition 10, the partition \(\sigma ^{-1} \circ \pi \) is symmetric since \(\textrm{p}_{\sigma ^{-1} \circ \pi }\) is the zero polynomial, and hence, \(- \sigma ^{-1} ({\hat{k}}) \sim _{\sigma ^{-1} \circ \pi } - \sigma ^{-1} ({\hat{l}}) \) provided \({\hat{k}} \sim _{\pi } {\hat{l}} \), but in that case we must have \(\sigma (- \sigma ^{-1} ({\hat{k}}) ) \sim _{\pi } \sigma (- \sigma ^{-1} ({\hat{l}}) )\). Note also that for every integer \({\hat{k}} \in [\pm 2m]\) we have

$$\begin{aligned} \sigma (- \sigma ^{-1} ({\hat{k}}) ) = \begin{cases} \sigma ({\hat{k}}) & \text {if } {\hat{k}} > 0, \\ \sigma ^{-1} ({\hat{k}}) & \text {if } {\hat{k}} < 0, \end{cases} \end{aligned}$$

since for \(1 \le k \le 2\,m \) we have \(\sigma ^{-1}(k)=-k\), \(-\sigma ^{-1}(-k)<0\), and \(\sigma (-k)=k\).

Now, suppose \(\sigma ^{-t}(-k) \sim _{\pi } \sigma ^{t}(l)\) for some integer \(t \ge 0\). If t is even, then \({\hat{k}}=\sigma ^{-t}(-k)< 0 < \sigma ^{t}(l)={\hat{l}}\), and hence \( \sigma ^{-t-1}(-k) = \sigma (- \sigma ^{-1} ({\hat{k}}) ) \sim _{\pi } \sigma (- \sigma ^{-1} ({\hat{l}}) ) = \sigma ^{t+1}(l)\). On the other hand, if t is odd, we have \(\sigma ^{-t}(-k)> 0 > \sigma ^{t}(l)\), and hence \( \sigma ^{-t-1}(-k) = - \sigma ^{-t}(-k) \sim _{\pi } - \sigma ^{t}(l) = \sigma ^{t+1}(l)\) since \(\pi \) is symmetric. Thus, \(-k \sim _{\pi } l\) implies \(\sigma ^{-t}(-k) \sim _\pi \sigma ^{t}(l)\) for every integer \(t \ge 0\) by induction on t. Similarly, assuming \(-k \sim _{\pi } -l\), we get \(\sigma ^{t}(-k) \sim _{\pi } \sigma ^{t}(-l)\) for all \(t\ge 0\). \(\square \)

Remark 12

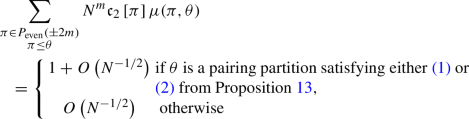

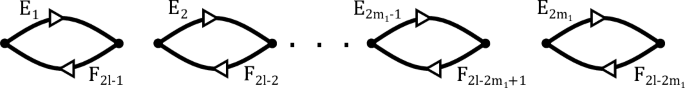

The results regarding the polynomials \(\textrm{p}_{\pi }\) and \(\textrm{p}_{\sigma ^{-1} \circ {\pi }}\) being zero can be restated in terms of the graphs \({\mathcal {G}}_{\pi }\) and \(\vec {{\mathcal {G}}}_{\pi }\) from Sect. 3.1. For instance, in the following proposition we show that the polynomial \(\textrm{p}_{\sigma ^{-1} \circ {\pi }}\) is zero for a given symmetric partition \(\pi \in P(\pm 2m)\) with \(m=m_1 + m_2\) if and only if one of the following conditions for the directed graph \(\vec {{\mathcal {G}}}_{\pi }\), where \(F_{t}\) denotes the edge \(E_{2m_{1}+t}\) for \(t=1,2,\ldots ,2m_{2}\), holds:

(1) \(m_{1}=m_{2}\) and there is an integer \(1 \le l \le m_{2}\) so that the graph \(\vec {{\mathcal {G}}}_{\pi }\) can be represented as

(2) \(m_{1}=m_{2}\) and there is an integer \(1 \le l \le m_{2}\) so that the graph \(\vec {{\mathcal {G}}}_{\pi }\) can be represented as

(3) \(\vec {{\mathcal {G}}}_{\pi }\) is the disjoint union of \(\vec {{\mathcal {G}}}_{\pi _{1}}\) and \(\vec {{\mathcal {G}}}_{\pi _{2}}\), there is an integer \(1 \le k \le 2m_{1}\) so that \(\vec {{\mathcal {G}}}_{\pi _{1}}\) can be represented as

and there is an integer \(2m_{1}+1 \le l \le 2m_{1} + 2m_{2}\) so that \(\vec {{\mathcal {G}}}_{\pi _{2}}\) can be represented as

(4) \(m_{1}\) and \(m_{2}\) are odd integers, the graph \(\vec {{\mathcal {G}}}_{\pi }\) is the disjoint union of \(\vec {{\mathcal {G}}}_{\pi _{1}}\) and \(\vec {{\mathcal {G}}}_{\pi _{2}}\), the graph \(\vec {{\mathcal {G}}}_{\pi _{1}}\) can be represented as

and the graph \(\vec {{\mathcal {G}}}_{\pi _{2}}\) can be represented as

(5) \(m_{2}\) is odd, \(\vec {{\mathcal {G}}}_{\pi }\) is the disjoint union of \(\vec {{\mathcal {G}}}_{\pi _{1}}\) and \(\vec {{\mathcal {G}}}_{\pi _{2}}\), there is an integer \(1 \le k \le 2m_{1}\) so that \(\vec {{\mathcal {G}}}_{\pi _{1}}\) can be represented as

and the graph \(\vec {{\mathcal {G}}}_{\pi _{2}}\) can be represented as

(6) \(m_{1}\) is odd, the graph \(\vec {{\mathcal {G}}}_{\pi }\) is the disjoint union of \(\vec {{\mathcal {G}}}_{\pi _{1}}\) and \(\vec {{\mathcal {G}}}_{\pi _{2}}\), the graph \(\vec {{\mathcal {G}}}_{\pi _{1}}\) can be represented as

and there is an integer \(1 \le l \le 2m_{1}\) so that \(\vec {{\mathcal {G}}}_{\pi _{2}}\) can be represented as

In the graphs above, \(2\,l-t\), \(2\,l+t-1\), and \(k \pm t \) are taken modulo \(2m_{1}\) for \(t=1,2,\ldots ,2m_{1}\) and \(l \pm t \) is taken modulo \(2m_{2}\) for \(t=1,2,\ldots ,2m_{2}\).

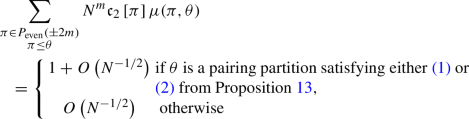

Proposition 13

Let \(m=m_{1} + m_{2}\) for some integers \(m_{1}, m_{2}\ge 1\), and let \(\sigma \) be the permutation given by (3.11). Suppose \({\pi }\) is a symmetric pairing partition of \([\pm 2m ]\) and denote by \({\pi }_1\) and \({\pi }_2\) the restrictions of \({\pi }\) to \([\pm 2m_1]\) and \([\pm 2\,m ] {\setminus } [\pm 2m_1]\), respectively. Then, \(\textrm{p}_{\sigma ^{-1} \circ {\pi }}\) is the zero polynomial if and only if one of the following conditions holds:

(1) \({\pi } \ne {\pi }_1 \sqcup {\pi }_2\), \(m_{1}=m_{2}\), and there are integers \(1 \le k \le 2m_{2}\) and \(2m_{1}+1 \le l \le 2m_{1}+2m_{2}\) such that \(k+l\) is even and

$$\begin{aligned} {\pi } = \left\{ \{\sigma ^{t}(-k),\sigma ^{-t}(l)\} \mid t=1,2,\ldots , 4m_{1} \right\} . \end{aligned}$$

(2) \({\pi } \ne {\pi }_1 \sqcup {\pi }_2\), \(m_{1}=m_{2}\), and there are integers \(1 \le k \le 2m_{2}\) and \(2m_{1}+1 \le l \le 2m_{1}+2m_{2}\) such that \(k+l\) is odd and

$$\begin{aligned} {\pi } = \left\{ \{\sigma ^{t}(-k),\sigma ^{t}(-l)\} \mid t=1,2,\ldots , 4m_{1} \right\} . \end{aligned}$$

(3) \({\pi } = {\pi }_1 \sqcup {\pi }_2\) and there are integers \(1 \le k \le 2m_{1}\) and \(2m_{1}+1 \le l \le 2m_{1}+2m_{2}\) such that

$$\begin{aligned} {\pi }_{1} = \left\{ \{\sigma ^{t_{1}}(-k),\sigma ^{-t_{1}}(k) \} \mid t_{1}=1,2,\ldots , 2m_{1} \right\} \end{aligned}$$

and

$$\begin{aligned} {\pi }_{2} = \left\{ \{\sigma ^{t_{2}}(-l),\sigma ^{-t_{2}}(l) \} \mid t_{2}=1,2,\ldots , 2m_{2} \right\} . \end{aligned}$$

(4) \({\pi } = {\pi }_1 \sqcup {\pi }_2\), \(m_{1}\) and \(m_{2}\) are odd integers,

$$\begin{aligned} {\pi }_{1} = \left\{ \{\sigma ^{t_{1}}(-1),\sigma ^{t_{1}}(-m_1-1)\} \mid t_{1}=1,\ldots , 2m_{1} \right\} , \end{aligned}$$

and

$$\begin{aligned}{\pi }_{2} = \left\{ \{\sigma ^{t_{2}}(-2m_1-1),\sigma ^{t_{2}}(-2m_1-m_2-1)\} \mid t_{2}=1,\ldots , 2m_{2} \right\} . \end{aligned}$$

(5) \({\pi } = {\pi }_1 \sqcup {\pi }_2\), \(m_{2}\) is odd, there is an integer \(1 \le k \le 2m_{1}\) such that

$$\begin{aligned} {\pi }_{1} = \left\{ \{\sigma ^{t_{1}}(-k),\sigma ^{-t_{1}}(k) \} \mid t_{1}=1,2,\ldots , 2m_{1} \right\} , \end{aligned}$$

and

$$\begin{aligned} {\pi }_{2} = \left\{ \{\sigma ^{t_{2}}(-2m_1-1),\sigma ^{t_{2}}(-2m_1-m_2-1)\} \mid t_{2}=1,\ldots , 2m_{2} \right\} . \end{aligned}$$

(6) \({\pi } = {\pi }_1 \sqcup {\pi }_2\), \(m_{1}\) is odd,

$$\begin{aligned} {\pi }_{1} = \left\{ \{\sigma ^{t_{1}}(-1),\sigma ^{t_{1}}(-m_1-1)\} \mid t_{1}=1,\ldots , 2m_{1} \right\} , \end{aligned}$$

and there is an integer \(2m_{1}+1 \le l \le 2m_{1}+2m_{2}\) such that

$$\begin{aligned} {\pi }_{2} = \left\{ \{\sigma ^{t_{2}}(-l),\sigma ^{-t_{2}}(l) \} \mid t_{2}=1,2,\ldots , 2m_{2} \right\} . \end{aligned}$$
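Condition (3) can be explored numerically with the sketch below. It rests on a loud assumption: the permutation (3.11) is not reproduced in this section, so `make_sigma` builds a stand-in that only satisfies the properties quoted in the proof of Lemma 11 (\(\sigma (-k)=k\), and \(\sigma \) sends positive integers to negative ones, here cyclically within each of the two index ranges). It is not claimed to coincide with (3.11). The polynomial is taken to be the image of \(\sum _{i=1}^{2m} (-1)^{i} \textrm{x}_{-i} \textrm{x}_{i}\) under block identification, and \(\sigma ^{-1} \circ \pi \) is read as the partition with blocks \(\sigma ^{-1}(B)\). All function names are hypothetical.

```python
from collections import Counter


def make_sigma(m1, m2):
    """Stand-in permutation of [+-2m] (an assumption, not (3.11) itself):
    sigma(-k) = k and sigma(k) = -(cyclic successor of k in its own range)."""
    sigma = {}
    for start, size in ((1, 2 * m1), (2 * m1 + 1, 2 * m2)):
        for k in range(start, start + size):
            successor = start + (k - start + 1) % size
            sigma[-k] = k
            sigma[k] = -successor
    return sigma


def power(perm, t):
    """Return x -> perm^t(x); a negative t iterates the inverse permutation."""
    base = perm if t >= 0 else {v: k for k, v in perm.items()}

    def apply(x):
        for _ in range(abs(t)):
            x = base[x]
        return x

    return apply


def p_is_zero(partition, m):
    """True when the block-identified image of sum (-1)^i x_{-i} x_i vanishes."""
    block_of = {e: j for j, block in enumerate(partition) for e in block}
    coeffs = Counter()
    for i in range(1, 2 * m + 1):
        coeffs[tuple(sorted((block_of[-i], block_of[i])))] += (-1) ** i
    return all(c == 0 for c in coeffs.values())


def condition3_partition(m1, m2, k, l):
    """Blocks {sigma^t(-k), sigma^-t(k)} and {sigma^t(-l), sigma^-t(l)}."""
    sigma = make_sigma(m1, m2)
    blocks = set()
    for t in range(1, 2 * m1 + 1):
        blocks.add(frozenset({power(sigma, t)(-k), power(sigma, -t)(k)}))
    for t in range(1, 2 * m2 + 1):
        blocks.add(frozenset({power(sigma, t)(-l), power(sigma, -t)(l)}))
    return blocks


def pull_back(partition, sigma):
    """Apply sigma^{-1} to every element of every block."""
    inverse = {v: k for k, v in sigma.items()}
    return {frozenset(inverse[e] for e in block) for block in partition}


sigma = make_sigma(2, 1)
pi = condition3_partition(2, 1, k=3, l=5)
print(sorted(sorted(block) for block in pi))
print(p_is_zero(pull_back(pi, sigma), m=3))
```

Under the stand-in \(\sigma \), every admissible choice of \(k\) and \(l\) for small \(m_{1}, m_{2}\) gives a symmetric pairing whose pulled-back polynomial vanishes; this is a consistency check under the stated assumption rather than a verification of the proposition.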

Proof

Put \({{{\hat{\pi }}}} = \sigma ^{-1} \circ \pi \). Suppose \({{{\hat{\pi }}}} = \{B_{1}, B_{2},\ldots , B_{r}\}\) and let \(\Phi \) be the unique homomorphism from \({\mathbb {Z}}[\textrm{x}_{-1},\textrm{x}_{1},\ldots ,\textrm{x}_{-2m},\textrm{x}_{2m}]\) to \({\mathbb {Z}}[\textrm{x}_{1},\textrm{x}_{2},\ldots ,\textrm{x}_{r}]\) such that \(\Phi (\textrm{x}_{i}) = \textrm{x}_{j}\) if \(i \in B_{j}\). If condition (1) holds, then \({{{\hat{\pi }}}} = \left\{ \{\sigma ^{t}(-k),\sigma ^{-t-2}(l)\} \mid t = 1,2,\ldots , 4m_{1} \right\} \) and \(\sigma ^{t}(-k) + \sigma ^{-t-2}(l)\) is odd for \(t=1,2,\ldots , 4m_{1}\). Thus, since we can write

we get \( \textrm{p}_{{{{\hat{\pi }}}}} = \Phi ( \sum _{i=1}^{2\,m} (-1)^{i} \textrm{x}_{-i} \textrm{x}_{i} ) = 0. \) It follows from similar arguments that \(\textrm{p}_{{{{\hat{\pi }}}}}\) is the zero polynomial if (2) holds. Now, if (4) holds and we take \(l=2m_{1}+1\), we have that \(\sigma ^{t}(-k) + \sigma ^{-t-2}(k)\) and \(\sigma ^{t}(-l) + \sigma ^{t}(-m_{2}-l)\) are odd and \({{{\hat{\pi }}}} = \left\{ \{\sigma ^{t}(-k),\sigma ^{-t-2}(k)\}, \{\sigma ^{t}(-l),\sigma ^{t}(-m_{2}-l)\}\mid t \ge 0 \right\} \). Thus, since we can write

we get \(\displaystyle \textrm{p}_{{{\hat{\pi }}}}= 0\). Similar arguments show that if either (3), (5), or (6) holds, then \(\textrm{p}_{{{\hat{\pi }}}}\) is the zero polynomial.

Suppose now \(\textrm{p}_{{{{\hat{\pi }}}}}\) is the zero polynomial and let \({{{\hat{\pi }}}}_1\) and \({{{\hat{\pi }}}}_2\) be the restrictions of \({{{\hat{\pi }}}}\) to \([\pm 2m_1]\) and \([\pm (2m_1 + 2m_2)] {\setminus } [\pm 2m_1]\), respectively. We will consider two cases \({{{\hat{\pi }}}} \ne {{{\hat{\pi }}}}_{1} \sqcup {{{\hat{\pi }}}}_{2}\) and \({{{\hat{\pi }}}} = {{{\hat{\pi }}}}_{1} \sqcup {{{\hat{\pi }}}}_{2}\). Assume first \({{{\hat{\pi }}}} \ne {{{\hat{\pi }}}}_{1} \sqcup {{{\hat{\pi }}}}_{2}\). By Proposition 9, there are integers \(1 \le k \le 2m_{1}\) and \(2m_{1}+1 \le l \le 2m_{1}+2m_{2}\) such that \(k+l\) is odd and one of the following holds:

(1’) \(k \sim _{{{\hat{\pi }}}} - l\) and \(-k \sim _{{{\hat{\pi }}}} l\).

(2’) \(k \sim _{{{\hat{\pi }}}} l\) and \(-k \sim _{{{\hat{\pi }}}} -l\).