Abstract

Computational thinking (CT) is believed to be a critical factor in facilitating STEM learning and a vital learning objective in itself. Therefore, researchers continue to explore effective ways to improve and assess it. Makerspaces feature various hands-on activities that can attract students with diverse interests from different backgrounds. If well designed, scaffolded maker activities have the potential to improve students’ CT skills and STEM learning. In this study, we explore ways to improve and assess CT skills integrated with physics and engineering by developing maker activities and assessments that are applicable in both informal and formal educational settings. This paper has two primary goals. First, it introduces the maker activities and instruments we developed to improve and assess CT integrated with physics and engineering learning. Second, it presents changes in students’ CT skills and dispositions from pretest to posttest in two summer academies with CT-enhanced maker activities, held in a public library and led by after-school educators and formal educators, respectively.

Introduction

Computation has become such an indispensable component of STEM disciplines (Henderson et al. 2007) that it is nearly impossible to do research or solve practical problems in any scientific or engineering discipline without thinking computationally. Not surprisingly, the Next Generation Science Standards (NGSS) include using mathematics and computational thinking (CT) as one of eight core science and engineering practices (NGSS 2013). However, the NGSS give little guidance on how to incorporate CT in the classroom, and researchers are still exploring effective ways to improve and assess CT. Our study contributes to these efforts. In particular, we developed maker activities and formative assessment strategies that promote physics and engineering learning as well as CT skills and dispositions in makerspaces. Informal (after-school) and formal (classroom) educators then implemented these activities and formative assessments with high school students in two summer camps, one camp each. In this paper, we first introduce the CT-related maker activities, implementation strategies, and instruments developed to assess CT in our project. We then present the possible impact of the activities on students’ CT skills, frequency of using CT skills, and CT-related dispositions in the two summer academies.

Background

In this section, we review the literature on CT, makerspaces, and formative assessment, which lays the foundation for our study.

Computational Thinking

In 1980, Papert first used the term “Computational Thinking” in the book Mindstorms: Children, computers, and powerful ideas, suggesting that computers might enhance thinking and change patterns of access to knowledge. Wing (2006) echoed these ideas, promoting the concept of CT and its broad application to problem solving. She stated that CT should be core to K-12 curricula and called for research on effective ways of teaching and learning CT. Since then, CT has drawn increasing attention from educators and educational researchers and has grown in importance in science, technology, engineering, and mathematics (STEM) education. Researchers have defined CT in various ways. For example, taking a more specific, cognitive perspective focused on CT concepts, Brennan and Resnick (2012) developed a definition of CT with three key dimensions: (a) computational concepts, (b) computational practices, and (c) computational perspectives. After reviewing the literature, interviewing mathematicians and scientists, and studying CT materials, Weintrop et al. (2016) formulated a taxonomy of 22 subskills grouped into four main categories: data practices, modeling and simulation practices, computational problem solving practices, and systems thinking practices. ISTE and CSTA (2018) provided a CT vocabulary list that includes algorithms and procedures, automation, simulation, and parallelization. Selby and Woollard (2013) synthesized the terms on which the CT literature reaches consensus and proposed the following definition of CT:

An activity, often product oriented, associated with, but not limited to, problem solving. It is a cognitive or thought process that reflects: the ability to think in abstractions, the ability to think in terms of decomposition, the ability to think algorithmically, the ability to think in terms of evaluations, and the ability to think in generalizations (p. 5).

Incorporating CT has immense potential to transform how STEM subjects are approached in the classroom. Malyn-Smith and Lee (2012) facilitated the exploration of CT as a foundational skill for STEM professionals and of how professionals engage in CT in routine work and problem solving. Later, in the formal context of K-8 classrooms, Lee et al. (2014) integrated CT into classroom instruction through three types of fine-grained computational activities: digital storytelling, data collection and analysis, and computational science investigations. Research has recently increased on professional development workshops about CT teaching strategies and on teachers’ CT understanding and dispositions (Bower et al. 2017; Leonard et al. 2017; Mouza et al. 2017). To better understand the state of CT research, Grover and Pea (2013) systematically reviewed how CT has been defined in pertinent K-12 studies. They found that few studies had been conducted on K-12 CT learning and assessment and that many schools had not incorporated CT into their curricula, implying that more empirical studies are needed to inform CT curriculum design.

To reach more students and help them learn how to use CT to solve problems, researchers have developed and studied various interventions to introduce and develop CT skills across subjects such as journalism and expository writing (Wolz et al. 2010, 2011), science (Basu et al. 2016, 2017; Gero and Levin 2019; Sengupta et al. 2013; Wilensky et al. 2014), sciences and arts (Sáez-López et al. 2016), and mathematics (Wilkerson-Jerde 2014). Despite this broad spectrum of CT interventions, the research community is still struggling to develop assessment tools that address the uncertainty about how best to measure CT skills across STEM and non-STEM subjects (Grover and Pea 2013). Researchers have explored various types of CT assessments: knowledge tests, performance assessments, interviews, and surveys (Tang et al. 2018). Some studies employed CT knowledge tests. For example, Shell and Soh (2013) developed a pen-and-paper test to assess college students’ CS knowledge and CT skills, and Chen et al. (2017) designed an instrument with 15 multiple-choice and 8 open-ended questions to assess students’ application of CT knowledge to solve daily-life problems and their use of predefined syntax to program a robot. Another example of a CT knowledge test is the Bebras International Contest, a competition developed in Lithuania in 2003 that aims to promote CT learning among K-12 students around the world (Cartelli et al. 2012; Dagienė and Futschek 2008). Bebras tasks do not rely on any software or subject content; instead, they consist of multiple-choice items with daily-life prompts and are intended to measure five components of CT (abstraction, decomposition, algorithmic thinking, pattern generalization, and evaluation), consistent with Selby and Woollard’s (2013) framework. Performance assessments are another major tool for measuring CT skills. Bers et al. (2014) used grading rubrics to examine the key variables of debugging, correspondence, sequencing, and control flow by assigning a target level of achievement to each student’s robotic project. Some performance assessments are based on visual programming languages, e.g., the Fairy Assessment in Alice (Werner et al. 2012) and Scrape analysis of Scratch projects (Albert 2016). Lee (2011) interpreted students’ think-aloud results in terms of their use of abstraction, automation, and analysis.

Besides assessing CT skill outcomes, researchers have also been interested in students’ attitudes and dispositions toward CT. Grover et al. (2015) used a system of assessments, including formative and summative assessments with directed and open-ended programming assignments, as well as a survey on perceptions of CT. Their findings revealed that students became curious about and positive toward the application of computer science and CT in the world. CSTA suggested that the following dispositions are essential dimensions that support and enhance CT skills: “confidence in dealing with complexity, persistence in working with difficult problems, tolerance for ambiguity, the ability to deal with open ended problems, the ability to communicate and work with others to achieve a common goal or solution” (CSTA 2018, p. 7).

Given the variety of CT research, we see CT-related assessment, curriculum, and instruction as varying along two dimensions: the involvement of computer programming and the integration of other content knowledge (Fig. 1). As shown in Fig. 1, CT can be used, promoted, and assessed with levels of computer programming involvement ranging from lean to rich and levels of integration of other content subjects ranging from lean to rich. The two dimensions form quadrants I to IV. For instance, work emphasizing both content knowledge and computer programming can be placed in quadrant I (Garneli and Chorianopoulos 2018). The assessments developed by the Northwestern University team (Weintrop et al. 2014, 2016) can be placed in quadrant II, where students are not required to write computer programs but are expected to apply content knowledge when solving CT-related problems. The Bebras items (Cartelli et al. 2012; Dagienė and Futschek 2008) can be classified in quadrant III. Activities and assessments focusing only on computer programming can be placed in quadrant IV (Bers et al. 2014; Sherman and Martin 2015; Werner et al. 2012).

It should be noted that “being lean” on one or both dimensions does not mean being inferior to “being rich.” Instead, each quadrant serves different learning purposes. Researchers and educators can focus on any one of the quadrants or move from one quadrant to another. For example, activities in quadrant III fit young children and CT beginners best, because they can focus on CT skills without worrying about content knowledge or computer programming. After they have developed their CT skills further, they can be led to incorporate more content knowledge and/or computer programming. Figure 1 shows some possible paths for integrating CT, computer programming, and other content knowledge; all the paths start with CT involving little content knowledge or computer programming (quadrant III). Path 1 moves to CT integrated with content knowledge (quadrant II), e.g., activities that can be used in a science or math class. Path 2 moves to CT integrated with computer programming (quadrant IV), which can be used in a computer class. Path 3 moves to CT with computer programming first and then to CT with both content and computer programming (quadrant I). Path 4 moves to CT with content first and then to CT with both content and computer programming. Both paths 3 and 4 can be used in interdisciplinary fields where both computer programming and other content subjects are critical. Note that the other content subjects can be STEM or non-STEM subjects.
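To make the two-dimensional scheme of Fig. 1 concrete, the following minimal Python sketch encodes each dimension as simply lean or rich, maps each combination to a quadrant as described above, and lists the four paths as sequences of quadrants. Fig. 1 presents the dimensions as ranging from lean to rich, so the binary encoding is a simplifying assumption, and the names `Level`, `quadrant`, `PATHS`, and `is_stepwise` are hypothetical.

```python
from enum import Enum


class Level(Enum):
    """Hypothetical binary encoding of one dimension in Fig. 1."""
    LEAN = "lean"
    RICH = "rich"


def quadrant(programming: Level, content: Level) -> str:
    """Return the quadrant (I-IV) for a programming/content combination."""
    if programming is Level.RICH and content is Level.RICH:
        return "I"    # both content knowledge and programming emphasized
    if content is Level.RICH:
        return "II"   # content applied, no programs written
    if programming is Level.RICH:
        return "IV"   # programming only, little other content
    return "III"      # lean on both, e.g., Bebras-style items


L, R = Level.LEAN, Level.RICH

# Each path is a sequence of (programming, content) settings starting in quadrant III.
PATHS = {
    1: [(L, L), (L, R)],          # add content knowledge
    2: [(L, L), (R, L)],          # add computer programming
    3: [(L, L), (R, L), (R, R)],  # programming first, then content
    4: [(L, L), (L, R), (R, R)],  # content first, then programming
}


def path_quadrants(path_id: int) -> list[str]:
    """Translate a path into its sequence of quadrant labels."""
    return [quadrant(p, c) for p, c in PATHS[path_id]]


def is_stepwise(path_id: int) -> bool:
    """True if every step moves exactly one dimension from lean to rich."""
    steps = PATHS[path_id]
    for (p0, c0), (p1, c1) in zip(steps, steps[1:]):
        changed = [new for old, new in ((p0, p1), (c0, c1)) if old is not new]
        if len(changed) != 1 or changed[0] is not Level.RICH:
            return False
    return True


for pid in PATHS:
    print(f"Path {pid}: " + " -> ".join(path_quadrants(pid)))
```

The loop prints `Path 1: III -> II` through `Path 4: III -> II -> I`. Under this encoding every path enriches only one dimension per step, which mirrors the gradual progression from quadrant III that the text describes.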

Integrating CT in STEM

Researchers have explored ways to integrate CT with STEM. For example, in Sengupta et al. (2013), 6th grade students constructed simulation models in the Computational Thinking using Simulation and Modeling (CTSiM) environment to learn topics and concepts in kinematics and ecology, integrating CT and science learning. In their study, 15 students each worked with a researcher who provided one-on-one verbal scaffolds, while the other 9 students were taught in a lecture format and conducted the activities individually, receiving assistance only when they raised their hands to ask for help. The scaffolded group had greater learning gains in both topics. In a follow-up publication, Basu et al. (2016) identified the challenges students faced when using CTSiM to integrate CT and science learning in the previous study. In a more recent study, the research team compared an experimental group that used CTSiM with learner modeling and adaptive scaffolding to a control group that used CTSiM alone. The group with scaffolding had better learning outcomes, including modeling strategies and behaviors and understanding of science and CT concepts. The Northwestern University group (Wilensky et al. 2014) introduced computational literacy and science inquiry through agent-based modeling in various science fields, such as physics, chemistry, and earth sciences. Similarly, Garneli and Chorianopoulos (2018) compared two ways of improving students’ CT in a science learning context by asking students to create either a simulation or a video game about electric circuits. The video game construction group improved significantly in both CT skills and coding scores, while the simulation group improved significantly only in CT skills. The game design group was also more motivated than the simulation group. Gero and Levin (2019) proposed using electronic spreadsheets to help students perform calculations and develop computational thinking in physics education.

The studies above integrated CT with STEM. However, most of them heavily emphasized the integration of computer programming and STEM (quadrant I). To explore integrating CT into non-computing STEM subjects, this study designed assessment tools measuring students’ physics and engineering knowledge integrated with CT skills, as well as dispositions that support and enhance CT (CSTA 2018), and examined their effectiveness. Regarding CT skills, we focused on the widely used skills of abstraction, decomposition, algorithmic thinking, and pattern generalization (Selby and Woollard 2013). In particular, we designed the activities following path 4, starting from quadrant III, adding more content knowledge to move to quadrant II, and finally involving more computer programming to move to quadrant I. By doing so, we expected to reduce students’ cognitive load and help them build their CT skills and thinking habits step by step, so that they could eventually integrate CT into their STEM learning. In addition to CT skills, we focused on the dispositions that CSTA suggests support and enhance CT skills (CSTA 2018), discussed above. We designed and applied the intervention in the context of makerspaces.

Makerspaces

Since the first Maker Faire in 2006, the maker movement has expanded dramatically, not just as a phenomenon but as a philosophy, with makerspaces, activities, conferences, and studies multiplying each year worldwide (Bevan et al. 2015). Emerging from the do-it-yourself (DIY) tradition, the maker movement emphasizes making in learning-oriented environments and also promotes design thinking, computational concepts, collaborative work, and innovation (Papavlasopoulou et al. 2017). The environment where making and learning happen is commonly referred to as a makerspace: a physical space where people with common interests create DIY projects together using technology, digital art, science, computers, etc. (Rivas 2014). As Grover and Pea (2013) noted in their literature review on CT, informal educational contexts such as makerspaces play a critical role in implementing CT education. Many makerspaces today resemble studio arts learning environments where participants work independently or collaboratively, using materials to design and make (Halverson and Sheridan 2014). Makerspaces exist in both formal school settings and informal afterschool programs.

The core of the makerspace is the concept of making, which, although broad, focuses on designing, building, modifying, or fabricating physical and/or digital artifacts. Most commonly, making refers to creating objects with technological resources, including fabrication, physical computing, and programming (Martin 2015; Sheridan et al. 2014); however, it can also include low-tech creations such as knitting or weaving. Therefore, students in makerspaces can engage in activities ranging from sewing, 3D printing, and laser cutting to game design. Influenced by the concept of making, maker activities have become increasingly popular in education during the last few years because of their ability to engage young people in relevant and creative explorations of the physical world and social connections (Blikstein 2014; Martin and Dixon 2013; Martinez and Stager 2013). Maker activities attract students with various interests from different backgrounds and build mainly on students’ ability to creatively produce artifacts and to share their process and products with others in physical or digital forums. For example, e-textiles combine the physical and digital worlds by introducing electronic components into fabric and jewelry (Buechley et al. 2013). Makey Makey is a construction kit that connects computers to everyday objects (Kafai et al. 2014; Silver et al. 2012). Rapid prototyping technologies such as 3D printing allow for accessible manufacturing of complicated models and artifacts.

Although learning through making is complex, several categories of learning outcomes have been identified in the literature. Makerspaces hold the promise of helping learners gain knowledge and skills in engineering, circuitry, design, and computer programming (Kafai et al. 2009; Peppler and Glosson 2013; Resnick et al. 2009; Sheridan et al. 2013). Furthermore, maker activities have the potential to change students’ attitudes toward making, computing, and STEM subject domains. Searle et al. (2015) took a crafts-oriented approach to expand students’ views of computing and broaden participation in computer science by introducing students to computational concepts and letting them design and program electronic artifacts. Pre- and post-interviews showed that students’ views shifted in ways that allowed them to see computing as accessible, transparent, personal, and creative. Maker activities have also increased students’ confidence and capabilities in solving difficult problems (Burge et al. 2013; Jacobs and Buechley 2013; Wagner et al. 2013), which aligns with the essential dispositions that enhance CT skills.

In addition to the cognitive and affective learning outcomes, many studies examined other practices that are elicited in maker activities, e.g., engagement and collaboration (Barton et al. 2016; Jacobs and Buechley 2013). By engaging students in constructing creative artifacts, maker activities provide an opportunity to explore and learn content knowledge, cultivate positive feelings and attitudes toward making and computing, as well as enhance cooperation and communication with peers (Lin et al. 2018).

In reviewing the literature, we found that maker activities provide a platform that promotes not only the learning of content knowledge but also the development of skills and abilities, including abstract thinking, basic mathematics and algorithmic skills, CT skills, and the dispositions that enhance CT skills. However, few studies have developed assessments to measure CT skills and dispositions in maker activities. To support students’ CT practice in classrooms, Lee et al. (2011) proposed the “use-modify-create” learning progression from a practice perspective and outlined the components of CT as “abstraction, automation and analysis” in the contexts of modeling and simulation, robotics, and game design. In this progression, students move from using someone else’s creation, to modifying the model or program, and finally to developing ideas for their own new projects. Makerspaces provide an ideal environment in which this three-stage progression can be employed to engage youth in developing CT, and if well designed, maker activities can improve students’ CT skills and STEM learning. Therefore, our study was designed to improve students’ STEM learning, CT skills, and dispositions in the context of makerspaces using the three-stage progression.

Formative Assessment

To help enhance students’ CT skills and improve their dispositions that support CT skills, we implemented formative assessment in our study. Summative assessment often occurs after learning and provides information to judge or evaluate learning and teaching, whereas formative assessment often occurs during learning and provides information to help learning and teaching. Summative assessments help differentiate students, teachers, and schools. However, they are not helpful for students labeled “low performers” and can have a negative effect by causing them to lose confidence and to believe in “fixed intelligence” and “inborn ability” rather than in the possibility that effort contributes to improvement (Black and Wiliam 1998b). Formative assessment provides information that helps students identify where their learning gaps are and how to address them, so it holds promise for improving students’ motivation and learning.

Formative assessments take different formats depending on the situation. Black and Wiliam (1998a) defined formative assessment as “all those activities undertaken by teachers, and/or students, which provide information to be used as feedback to modify the teaching and learning activities in which they are engaged” (p. 7). Shavelson et al. (2008) classified formative assessment techniques into three categories on a continuum based on the amount of planning involved and the formality of the technique used: (a) on-the-fly formative assessment, which occurs when teachable moments unexpectedly arise in the classroom; (b) planned-for-interaction formative assessment, which is used during instruction but prepared deliberately before class to align closely with instructional goals; and (c) formal-and-embedded-in-curriculum formative assessment, which is designed to be implemented after a curriculum unit to ensure that students have reached important goals before moving to the next unit.

If well implemented, any form of formative assessment is beneficial for students’ learning and motivation (Ruiz-Primo and Furtak 2007). However, on-the-fly formative assessment relies heavily on teachers’ experience and instinct, which makes it elusive rather than reliable. Therefore, in our study, we aimed to create formal-and-embedded-in-curriculum and planned-for-interaction formative assessments for the implementation of makerspace activities.

Our Study

Our study is intended to improve CT skills and dispositions integrated with STEM subjects, in particular, physics and engineering learning. To achieve these goals, (a) we defined the key CT constructs, including both skills and dispositions, that can be applied and enhanced in makerspace activities; (b) we developed a series of maker activities that can improve students’ CT skills as well as physics and engineering learning; (c) we identified the moments in the maker activities where students could demonstrate or apply CT skills; (d) we designed formative assessments that mentors or teachers can use to elicit CT; and finally, (e) instructors and mentors scaffolded instruction for students to apply CT skills in maker activities, so that students could improve their physics and engineering learning as well as their CT skills and dispositions. We designed the activities and strategies so that they can be used in both formal and informal educational settings.

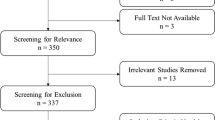

We further collected data to examine the quality of the assessments and the effectiveness of our approach (maker activities with formative assessments). We implemented the approach in two summer academies for secondary school students. In year 1, two after-school educators led the activities; in year 2, two formal educators led the activities. Using pre- and posttests, we aimed to answer two major research questions: (a) What are the psychometric characteristics of the measures that assess CT skills and dispositions in a makerspace context, including CT-related physics and engineering knowledge, CT dispositions, frequency of using CT skills, self-rated maker knowledge, and self-rated CT knowledge (psychometric features)? (b) Did the students who engaged in the maker activities improve on these learning outcomes from pretest to posttest (pretest-posttest comparison)?
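The two research questions imply two kinds of computation: a reliability-type index for each multi-item measure and a test of change from pretest to posttest. As an illustration only, the minimal Python sketch below shows two common choices, Cronbach’s alpha for internal consistency and a paired-samples t statistic for pretest-posttest gains. This section does not state which statistics the study used, so both choices are assumptions, and the function names and toy scores are hypothetical.

```python
import math
import statistics


def cronbach_alpha(responses: list[list[float]]) -> float:
    """Cronbach's alpha for a respondents x items score matrix.

    alpha = k / (k - 1) * (1 - sum of item variances / variance of total scores),
    using sample variances (n - 1 denominator) throughout.
    """
    k = len(responses[0])
    if k < 2:
        raise ValueError("alpha needs at least two items")
    items = list(zip(*responses))  # transpose rows (respondents) into item columns
    item_vars = [statistics.variance(col) for col in items]
    total_var = statistics.variance([sum(row) for row in responses])
    return k / (k - 1) * (1 - sum(item_vars) / total_var)


def paired_t(pre: list[float], post: list[float]) -> tuple[float, int]:
    """Paired-samples t statistic and degrees of freedom for post - pre gains."""
    if len(pre) != len(post) or len(pre) < 2:
        raise ValueError("need at least two matched pretest/posttest pairs")
    gains = [b - a for a, b in zip(pre, post)]
    se = statistics.stdev(gains) / math.sqrt(len(gains))  # standard error of the mean gain
    return statistics.mean(gains) / se, len(gains) - 1


# Hypothetical toy scores for illustration only; not data from this study.
scale = [[3, 4, 3], [2, 2, 3], [4, 5, 4], [1, 2, 2]]
print(f"alpha = {cronbach_alpha(scale):.3f}")
t, df = paired_t(pre=[10, 12, 9, 14], post=[12, 15, 10, 16])
print(f"t({df}) = {t:.2f}")
```

The sketch does not guard against zero variance (identical total scores or identical gains), and it stops at the t statistic; a p-value requires the t distribution, which a package such as SciPy (`scipy.stats.ttest_rel`) provides.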

Method

Procedures

We took three major iterative steps to develop and implement maker activities in our study: (a) developing and refining activities and formative assessment strategies, (b) providing professional training for mentors and teachers, and (c) implementing maker activities with students and collecting data from them. The same steps were taken in year 1 and year 2. Our study was approved by the Institutional Review Board at both of the authors’ institutions, and consent was obtained from all students and their parents. The following sections describe the procedures in each step.

Developing/Refining Maker Activities

To create maker activities, we formed a development team with 12 regular adult members, including 4 researchers, 4 after-school educators, 3 high school physics teachers, and 1 program coordinator. The adult members ranged in age from 23 to 54 (M = 34, SD = 9.34). Five members were male and seven were female. Nine of the adult members reported having a master's degree or higher; among them, one had a Ph.D. and five were doctoral students at the time of the study. Two team members had a degree in computer science, one in mechanical engineering, one in science, and one in film, video, and media. The other members had degrees in social sciences, such as education, psychology, and business. Among the adult members, four identified as white, two as African, four as Hispanic, and four as Asian. Because some members identified with more than one group, the total is higher than 12.

Besides the adult members, five high school students from various public schools in a large urban city were on our development team. When they first joined the program, two were 16 years old, two were 17, and one was 18. Four of them received free or reduced-price lunch at school. Among the five participants, one identified as white, two as African American, and three as Asian. Two were female, two were male, and one did not report gender information. Overall, our development team was intentionally diverse, because we wanted to develop activities for students with diverse backgrounds. We invited both high school teachers and students to join the development team because we wished to develop activities that fit their needs and interests.

Over 5 months, the development team met nine times for 4 h each and also worked independently at home to develop maker activities that were expected to enhance physics and engineering learning and CT. After we introduced CT and discussed each CT component with the team members, they used various methods to develop maker activities: some suggested activities that they had used in school, some modified activities that they found online, and some created new activities from scratch. After each team member developed activities, we collected the potential activities. The team discussed them, chose the ones that the majority were interested in experimenting with, and tried them out. Initially, we started with broad physics topics (such as electricity, magnetism, force, and motion) and corresponding maker activities. After rounds of discussion and experimentation, we narrowed the topic down to a theme that is coherent, manageable, and appropriate for maker activities: electrical circuits.

In addition, considering that having too many CT components would be overwhelming for students and instructors, we focused on four CT subskills that are the most relevant to the maker activities: decomposition, abstraction, algorithmic thinking, and pattern generalization (Selby and Woollard 2013).

As our project developed the maker activities from scratch, we constantly refined them. For example, at the end of year 1 development, we settled on seven major maker activities related to electricity and magnetism. However, students in the year 1 summer academy suggested that we reduce the number of activities and increase the time for each, so that they would have enough time to work on their projects. Based on this suggestion, we trimmed the activities further and focused only on electrical circuits by removing the activities related to magnetism. In addition, to strengthen the connection among physics, engineering, and CT, we invited a doctoral student in electrical engineering to extend the engineering-related activities in year 2, and we added more activities that involve computers. Finally, we extended the summer academy from 9 days to 11 days to provide sufficient time for students.

Professional Development

Two after-school educators and two high school physics teachers led the two summer academies, respectively. All four educators went through the process of developing the maker activities. Because they were part of the development team, we expected them to have a deep understanding of CT and the activities. In addition, we wanted them to have a sense of ownership of the activities, rather than asking them to implement something designed by others. During the summer academy, we discussed teaching practices with the instructors, and they provided feedback to improve the activities when necessary.

In addition, after we developed the draft activities in year 1, the leadership team provided a 4-day professional development training for 13 librarians from public libraries. During those 4 days, the librarians tried out the developed maker activities and learned about CT skills, related physics knowledge, and formative assessment strategies. After the professional development, we further refined and finalized the maker activities based on their feedback. We also recruited three librarians to join the year 1 summer academy as mentors for students.

Activity Implementation

Nineteen secondary public school students in year 1 and 16 secondary public school students in year 2 participated in summer academies held in a public library of a large Midwest city. The year 1 academy lasted 9 days and the year 2 academy lasted 11 days; in both years, sessions lasted 4 h per day. Following the “use-modify-create” learning progression proposed by Lee et al. (2011), the students in both years first conducted our designed maker activities and then modified the projects in student-driven making opportunities. In this process, we encouraged students to bring what was interesting and relevant to them into the makerspace when they created their own projects. In the last 2 days of both academies, the students synthesized what they had learned in earlier days and created their own products. In year 1, two after-school educators from our development team led the summer academy, and three librarians participated in the study as facilitators for small groups. In year 2, two high school teachers from the development team led the summer academy. The following section provides more details about the participants.

Participants

Because the two years had different arrangements, we describe the participants separately for year 1 and year 2.

Year 1 Students

The demographic information for year 1 students is as follows: (a) Ethnicity: 14 Hispanic, 3 African American, 1 Asian, 1 Middle Eastern; (b) Gender: 16 females and 3 males; (c) Age: from 13 to 18 (M = 15.84, SD = 1.12); (d) Free/reduced-price lunch: 18 (95%) students; (e) 16 out of 19 students (84%) indicated that at least one of their favorite subjects in school was related to STEM. Of the 19 participants, 1 student dropped out of the study due to other obligations.

Year 1 Educators

Two after-school educators from the development team served as lead instructors in the year 1 summer academy, and three librarians and one additional after-school educator from the development team served as small-group facilitators (mentors). The lead instructors were a white male and a Hispanic male. Both had extensive experience working with students in informal settings, one in an after-school program and the other in a public library. Both participated in developing the maker activities, and one took the lead in writing the curriculum. During the summer academy, they took turns leading whole-class activities. Of the three librarian facilitators, two were Hispanic males and one was an Asian/White female. Because these librarians had very limited knowledge of and experience with the maker activities and CT, we invited them to join the summer program for two purposes: (a) to work with small groups of students, simulating how makerspaces work in a public library (an informal educational setting), and (b) as part of their professional development.

Year 2 Students

The demographic information for year 2 students is as follows: (a) Ethnicity: 3 White, 6 African American, 4 Hispanic, and 3 Asian; (b) Gender: 9 males, 6 females; (c) Age: from 14 to 18 (M = 15.57, SD = 1.16); (d) Free/reduced-price lunch: 15 (100%) students; (e) 10 out of 15 students (67%) indicated that at least one of their favorite subjects in school was related to STEM. Of all the students, 1 student dropped out of the study due to other obligations.

Year 2 Educators

Two high school physics teachers participated in the year 2 summer academy: one led the activities and the other acted as a teaching assistant. Teacher A, the lead instructor, was a Caucasian female with 9 years of experience teaching physics. Teacher B, the assistant teacher, was an African American male with 18 years of teaching experience, including 12 years of teaching physics. Both teachers held a master’s degree as their highest degree. Both were part of the development team and were therefore familiar with the activities and implementation strategies.

Because students volunteered to participate in the summer academy right after finishing their school year, the sample was to some degree self-selected. As the descriptions above show, students in both years came from diverse demographic backgrounds, and the majority came from low-income families. According to the survey, most students were also interested in STEM-related subjects.

Maker Activities

As introduced earlier, after multiple rounds of brainstorming, designing, and refining, we developed seven maker activities on electricity and magnetism for the year 1 summer academy: circuits, e-textiles, electromagnets, simple motors, Makey Makeys (http://www.makeymakey.com/), circuits on breadboards, and Arduinos (https://www.arduino.cc/). Based on feedback from year 1 students, we removed the electromagnet and simple motor activities and focused on electrical circuits. We also extended the Arduino activity so that students had more opportunities to learn engineering-related content and the computing element of CT was more apparent. We implemented the resulting five maker activities in the year 2 summer academy.

Below is a brief description of the five maker activities. Table 1 summarizes the major learning outcomes of each activity that are aligned with CT. Readers interested in the details of each activity can visit our project website (https://actmaproject.wordpress.com/) for additional materials, such as materials lists and implementation procedures.

Electric Circuits

In this lesson, students learn what circuits are and how they work. Using wires, batteries, switches, alligator clips, light bulbs, and other components (such as buzzers and motors), students set up various circuits, including simple circuits, series circuits, and parallel circuits. Through these activities, students come to understand the necessary components of a working circuit and how series and parallel circuits work. After students master the basic skills, they design and create their own circuits to serve a particular function. For example, our students created projects based on their own interests, such as a dollhouse with a lighting system, a quiz game buzzer, an egg-hatching house, a chair for Santa (which sounds an alarm and sets off lights when someone sits on a chair in front of a plate of cookies), and an amusement park.
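The difference between series and parallel circuits that students explore can be made concrete with the standard resistance formulas. The sketch below is an illustration added here, not part of the project curriculum; it assumes an ideal battery and treats each bulb as a fixed resistor (real bulb resistance rises as the filament heats), and the 3 V and 10 ohm values are hypothetical.

```python
from typing import Sequence


def series_resistance(resistances: Sequence[float]) -> float:
    """Equivalent resistance in series: R = R1 + R2 + ..."""
    return sum(resistances)


def parallel_resistance(resistances: Sequence[float]) -> float:
    """Equivalent resistance in parallel: 1/R = 1/R1 + 1/R2 + ..."""
    return 1.0 / sum(1.0 / r for r in resistances)


def circuit_current(voltage: float, resistance: float) -> float:
    """Ohm's law: total current drawn from the source, I = V / R."""
    return voltage / resistance


# Hypothetical values: a 3 V battery pack and two identical 10-ohm bulbs.
battery_volts = 3.0
bulbs = [10.0, 10.0]

r_series = series_resistance(bulbs)      # bulbs share the battery voltage
r_parallel = parallel_resistance(bulbs)  # each bulb sees the full voltage
print("series:", r_series, "ohm,", circuit_current(battery_volts, r_series), "A")
print("parallel:", r_parallel, "ohm,", circuit_current(battery_volts, r_parallel), "A")
```

Under these idealized assumptions, each bulb in the parallel circuit receives the full battery voltage, which is why parallel bulbs stay brighter and why one burned-out branch does not turn the others off.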

E-Textiles

E-textiles are circuits sewn onto materials such as clothes, hats, shoes, or crafts. Instead of regular wires with alligator clips and light bulbs, e-textile activities use conductive thread, small flat LEDs, button batteries, and push-button switches, which can be sewn into wearable objects such as clothes, caps, and accessories. Compared with circuits built with alligator clips or plain wires, e-textiles are more challenging: (a) sewing tiny LEDs with thin conductive thread requires great patience, and the thread can easily short-circuit the design (especially since many students have no sewing experience); (b) students need to design their circuits before sewing them; otherwise, they may spend a lot of time creating an artifact only to find that the circuit does not work and they have to start over; (c) LEDs work only with the correct electrical polarity, whereas incandescent light bulbs light up regardless of polarity. However, these challenges make e-textiles particularly helpful for developing students’ CT dispositions, such as persistence (CSTA 2018). In addition, research has shown that e-textiles can help narrow the gender gap, as female students tend to be more interested in circuits in the context of e-textiles (Searle and Kafai 2015). Our students made various projects, such as an illuminated dog collar, a hair band that lights up, a bandana that lights up when its ends are tied together, and a maze that lights up when fingers wearing a conductive glove trace the right path.

Makey Makey

Makey Makey is an electronic device that lets users connect everyday conductive objects to a computer and use them to control computer programs. This flexibility allows students to think creatively about computing and bring their personal interests into making. Students made various projects, such as game controllers made from fruit, a dance pad that uses the human body, and a steering wheel for a driving game. One student connected a painting she had made to a computer via Makey Makey so that touching different parts of the painting (e.g., a butterfly or a flower) activated different music or lights. Another student used a Makey Makey to create a mineral detector that tests the conductivity of rocks from his collection. During this activity, we encouraged students to use CT skills, such as abstraction and pattern generalization, to find the similarities between Makey Makey and basic circuits. As shown on the right in Fig. 2, the connections on a Makey Makey are essentially parallel circuits: when the ground and a particular key connection are linked by a conductive object, the loop for that key or click is completed and the corresponding signal is sent to the computer. In addition, being able to touch an orange or a shoe covered in aluminum foil to control a computer broadens students’ conceptions of what computing is and how circuits work.
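The parallel-circuit abstraction described above can be expressed as a toy model. This is a hypothetical sketch of the idea, not the Makey Makey firmware or any real API: the input names and the objects clipped to them are invented for illustration, and the model simply treats each input as an independent branch that closes only when the user also touches ground.

```python
from typing import Dict, Set

# Hypothetical wiring: which everyday object is clipped to which input.
CONNECTIONS: Dict[str, str] = {
    "up": "banana",
    "down": "orange",
    "space": "foil_covered_shoe",
    "click": "painted_butterfly",
}


def active_inputs(touched_objects: Set[str], touching_ground: bool) -> Set[str]:
    """Return the inputs whose loop is closed in this simplified model.

    Every input is its own branch, wired in parallel with the others and
    sharing one ground. A branch closes only when the person also holds the
    ground connection, so the loop runs input -> object -> body -> ground.
    """
    if not touching_ground:
        return set()
    return {key for key, obj in CONNECTIONS.items() if obj in touched_objects}


print(sorted(active_inputs({"banana", "orange"}, touching_ground=True)))  # ['down', 'up']
print(active_inputs({"banana"}, touching_ground=False))  # set()
```

Because the branches are independent, touching two objects closes two loops at once, which mirrors how each branch of a parallel circuit operates on its own.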

Circuits on Breadboards

A breadboard is a construction base for prototyping electronics. Using jumper wires, LEDs, resistors, and a power source, students can build a circuit on the breadboard. Metal strips beneath the holes act as built-in wires: when a jumper wire is inserted into a hole, it connects to the metal strip underneath without soldering. This feature makes it easy to build temporary, compact prototypes and to experiment with circuit designs. After introducing the breadboard, its structure and function, and example circuits on a breadboard, we guided students to design their own breadboard circuits. The breadboard activity helped students further understand electrical circuits and prepared them for maker activities that involve computers.
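The idea that metal strips under the holes act as hidden wires can be sketched as a connectivity rule. The layout below is an assumption (it matches many common solderless breadboards, but boards differ, so check your own): rows a-e of a numbered column share one strip, rows f-j of that column share another, and the power rails are not modeled.

```python
from typing import Tuple


def strip_of(hole: str) -> Tuple[str, int]:
    """Map a terminal hole label such as 'c12' to the metal strip beneath it.

    Assumed layout: rows a-e of a numbered column share one strip, rows f-j
    of the same column share another, and the center channel separates them.
    """
    row, column = hole[0].lower(), int(hole[1:])
    if row in "abcde":
        return ("a-e", column)
    if row in "fghij":
        return ("f-j", column)
    raise ValueError(f"unknown row in hole label {hole!r}")


def connected(hole_1: str, hole_2: str) -> bool:
    """Two holes are electrically joined if they sit on the same strip."""
    return strip_of(hole_1) == strip_of(hole_2)


print(connected("a5", "e5"))  # True: same column, same half
print(connected("a5", "f5"))  # False: opposite sides of the center channel
print(connected("a5", "a6"))  # False: different columns
```

Drawing this rule as a diagram is itself an abstraction exercise: students replace the physical board with a model of which points are electrically the same node.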

Arduinos (https://www.arduino.cc/)

Arduino is an inexpensive, easy-to-use platform consisting of a small computer board (hardware) and programming software, designed to make learning physics and programming more fun and intuitive. Working with Arduino involves two major steps: hardware design and implementation, and software design and implementation. In year 1, we introduced only a basic Arduino activity, demonstrating how to use software to control hardware (the circuits on a breadboard). In year 2, we decided to extend the Arduino activity and invited a doctoral student in engineering to develop three more Arduino projects through which students could learn Arduino while improving their CT skills. The goal of these activities is to control real physical systems (in our study, LEDs on a breadboard) using Arduino. In these activities, students create simple projects that combine knowledge of electrical circuits on breadboards (hardware) with basic Arduino instructions (software) to turn the light(s) on and off and to specify how long they stay on.

In Arduino activity 1, students built a simple circuit with one LED on a breadboard and wrote a program to control how long the LED stays lit. In Arduino activity 2, students again built a one-LED circuit but programmed the light to turn on and off in a pattern that signals SOS in Morse code: three short (S), three long (O), and three short (S) flashes. In Arduino activity 3, students built a parallel circuit with two LEDs, both controlled by the Arduino program. In Arduino activity 4, students built two sets of three LEDs (green, red, and yellow) and programmed them to simulate the traffic lights at an intersection. Unlike in all the previous activities, the lights in the Arduino activities are controlled by a computer program rather than a physical switch. Arduino provides a natural connection between physics and engineering: the circuits on the breadboard are similar to traditional circuits, while controlling them requires computer programming. Although basic, the Arduino activities introduce the essence of engineering, that is, hardware design, software design, and using software to control hardware.
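The algorithm behind Arduino activity 2 can be sketched independently of the hardware as a timed on/off schedule. This Python sketch is an illustration, not the project’s Arduino program: it assumes standard Morse timing (dot = 1 unit, dash = 3 units, 1 unit between flashes in a letter, 3 units between letters), and the 200 ms unit is a hypothetical choice. On the board itself, each step would correspond to a `digitalWrite()` call followed by `delay()`.

```python
from typing import Dict, List, Tuple

UNIT_MS = 200  # hypothetical duration of one Morse "unit" in milliseconds

# Only the two letters needed for SOS; extend the table for other messages.
MORSE: Dict[str, str] = {"S": "...", "O": "---"}


def morse_schedule(message: str, unit_ms: int = UNIT_MS) -> List[Tuple[bool, int]]:
    """Return a list of (led_on, duration_ms) steps for a message.

    Standard Morse timing: a dot is 1 unit on, a dash is 3 units on,
    flashes within a letter are separated by 1 unit off, and letters are
    separated by 3 units off.
    """
    steps: List[Tuple[bool, int]] = []
    for letter_index, letter in enumerate(message.upper()):
        if letter_index > 0:
            steps.append((False, 3 * unit_ms))  # gap between letters
        for symbol_index, symbol in enumerate(MORSE[letter]):
            if symbol_index > 0:
                steps.append((False, unit_ms))  # gap between flashes in a letter
            steps.append((True, unit_ms if symbol == "." else 3 * unit_ms))
    return steps


if __name__ == "__main__":
    for led_on, duration in morse_schedule("SOS"):
        print("ON " if led_on else "off", duration, "ms")
```

Separating the schedule from the hardware calls mirrors the decomposition students practice: first decide the pattern (algorithm design), then map each step to an instruction that drives the LED.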

As discussed in the literature review, we believe that CT skills can be used and promoted in various contexts, with different levels of computer programming and other content knowledge involved (Fig. 1). Consistent with this belief, we designed maker activities that range from integrating no content knowledge to integrating more content knowledge, and from involving no computing at all to involving progressively more computing. Common to all the activities are the application of CT skills and electrical circuits: CT skills can be used to solve the problems in every activity, and every activity is essentially related to electrical circuits. In the following section, we discuss the activity implementation strategies.

Formative Assessments

During implementation, facilitators encouraged students to use CT skills to solve problems by asking formative assessment questions. We developed both formal, embedded formative assessments and planned formative assessments. For each maker activity, we gave students a one-page worksheet with questions prompting them to use CT skills. For example, for the Arduino activity, we asked students to complete five tasks: (a) What is your goal? (b) Convert the breadboard circuit to a visible one and draw the electrical circuit diagram for your design (abstraction). (c) Record the code you used in the final design (algorithm design). (d) Record the major steps you took to accomplish the task, so that others who have not done it can follow your instructions and do what you did (decomposition and algorithm design). (e) Discuss how circuits on Arduino differ from previous circuits (pattern generalization).

Besides the formal formative assessments, we also developed formative assessment questions that teachers can ask orally during each activity. Table 2 summarizes the formative assessment questions aligned with each activity. Note that the first row of Table 2 encourages students to provide everyday examples of each CT component. During the summer academies, we introduced students to the concept of CT and its components. As suggested in Fig. 1, we began by guiding students to use CT without introducing much subject content. By asking formative assessment questions, we encouraged students to connect CT skills with their everyday experience. For instance, we guided students to realize that a subway map is an abstraction of the routes in a city, and that giving directions to someone walking on the street is providing an algorithm. When planning a birthday party, we determine the location, time, and guest list and prepare party supplies (such as food, drinks, and decorations); in doing so, we use decomposition to break a big task into smaller, manageable ones. When we observe when (e.g., 8 to 9 AM or 5 to 6 PM) and where (e.g., on a certain street) traffic jams occur so that we can avoid them, we are using pattern generalization. By connecting CT components with everyday experience, we aimed to help students feel more comfortable with each component. We guided students to realize that CT is nothing new: they use CT all the time in their everyday lives, problem solving, and studying, but typically do so unconsciously. If they use these skills intentionally, they may be able to solve some problems using the power of a computer.

The maker activities give students opportunities to practice using CT intentionally, ideally until it becomes a habit of thinking. While students participated in the maker activities, the instructors and mentors used informal formative assessments to encourage students to apply CT skills to makerspace challenges. Taking the circuits topic as an example (Table 2), students were encouraged to draw circuit diagrams to represent their circuits (abstraction), compare different types of circuits to find their similarities and differences (pattern generalization), break a circuit down into its components to locate a problematic branch or component (decomposition), and describe the procedures they used to build their circuits so that other teams could replicate them (algorithm design). Instructors used these formative assessment questions, or similar ones, in class discussions, small-group discussions, and one-on-one interactions during the summer academies.

Instruments

We gave students a demographic survey at the beginning of each summer academy. In addition, we gave students CT-integrated achievement tests and self-report surveys at the beginning and end of each summer academy to examine its possible impact on students. Students completed all instruments anonymously, but we assigned each student an ID so that we could link data collected across time. Each instrument is described below.

CT Integrated Achievement Test

Appendix 1 includes the CT-integrated achievement test used in our study. The test integrates the physics and engineering content and the CT skills emphasized in the maker activities, namely circuits, e-textiles, Makey Makey, breadboards, and Arduino. It includes both a knowledge test and a performance assessment. Because the year 1 summer academy also covered electromagnets and motors, the year 1 achievement test had more items than the year 2 test. Because the year 2 summer academy included more Arduino activities, we developed an Arduino-specific performance assessment for it. In addition, students in year 2 completed six external CT items adapted from the international Bebras test (Cartelli et al. 2012; Dagienė and Futschek 2008). These items were selected according to the CT skill each item is designed to assess. In this paper, we used the Bebras items as a criterion test to examine the validity of the CT test. However, we examine students’ performance change only on the items common to both years. Items unique to year 1 or year 2 are not included in the appendices or the main analyses. Readers interested in the excluded items may consult the full instruments on our website (https://actmaproject.wordpress.com/).

Self-Report Survey

The survey (see Appendix 2) includes the following dimensions: 12 items measuring CT dispositions (e.g., I have high confidence in dealing with complex problems), 9 items measuring the frequency of using CT skills (e.g., I break down a complex problem or system into smaller parts that are more manageable and easier to understand), and items asking students to self-evaluate their knowledge of each maker activity used in the summer academy. Finally, we asked students to evaluate different aspects of the summer academy quantitatively and qualitatively.

Results and Discussion

To address the research questions, we investigated the psychometric features of the instruments, examined the possible impact of the summer academies on participants, and tentatively compared the two summer academies. Before analyzing the data for the pretest-posttest comparison, we examined the assumptions of the statistical tests.

Research Question 1: Psychometric Features

Inter-Rater Agreement

The first, third, and fourth authors scored the CT-integrated achievement test, which consisted mostly of open-ended questions. First, we drafted an analytic scoring rubric based on the expected learning objectives. We then used the draft rubric to score five students’ pretests and posttests. After scoring each student’s work, we compared our scores, discussed any discrepancies, clarified scoring rules, and refined the rubric. Once the rubric was finalized (the final rubric is in Appendix 3), authors 3 and 4 independently scored the work of five randomly selected students; the inter-rater agreement across all items was .96. Authors 3 and 4 then independently scored the rest of the student work. When they disagreed, they discussed the score until they reached a consensus, which was used as the final score.
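The paper does not state which agreement index produced .96 and .92. As one common option, exact percent agreement is the share of items on which two raters give identical scores; the sketch below assumes that index, and the scores are hypothetical, not the study’s data. Chance-corrected indices such as Cohen’s kappa are a stricter alternative.

```python
from typing import Sequence


def percent_agreement(rater_1: Sequence[int], rater_2: Sequence[int]) -> float:
    """Proportion of scored items on which two raters assign the same score."""
    if len(rater_1) != len(rater_2) or len(rater_1) == 0:
        raise ValueError("both raters must score the same non-empty set of items")
    matches = sum(1 for a, b in zip(rater_1, rater_2) if a == b)
    return matches / len(rater_1)


# Hypothetical rubric scores for ten items (not data from the study).
scores_rater_1 = [2, 1, 0, 3, 2, 2, 1, 0, 1, 2]
scores_rater_2 = [2, 1, 0, 3, 2, 1, 1, 0, 1, 2]
print(percent_agreement(scores_rater_1, scores_rater_2))  # 0.9
```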

In year 2, authors 3 and 4 reviewed the scoring rubric from year 1 to refresh their memory of the scoring rules and then scored another five randomly selected students’ posttests to calibrate their inter-rater agreement. The inter-rater agreement was .92. Given that this agreement was satisfactory, authors 3 and 4 scored the pretests and posttests of all the students. The scoring process and results indicate that the CT-integrated achievement test was scored with satisfactory reliability.

Internal Consistency

In addition to inter-rater reliability, we examined the internal consistency of each measure and the correlations among measures, including the CT-integrated achievement test and the subscales of the self-report survey, at both pretest and posttest. Table 3 shows the results. The values on the diagonal of Table 3 indicate that all measures have satisfactory internal consistency, all higher than .70. We then computed a composite score for each measure at pretest and posttest for use in the subsequent analyses.
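The paper does not name its internal-consistency coefficient; Cronbach’s alpha is the index most often reported against a .70 threshold, so the sketch below assumes it. It applies the standard formula, alpha = k / (k - 1) × (1 − sum of item variances / variance of total scores), to hypothetical ratings rather than the study’s data.

```python
from statistics import variance
from typing import List, Sequence


def cronbach_alpha(items: Sequence[Sequence[float]]) -> float:
    """Cronbach's alpha for a scale.

    items[i][j] is respondent j's score on item i. Sample variances are used
    throughout; the ratio is the same if population variances are used
    consistently instead.
    """
    k = len(items)
    if k < 2:
        raise ValueError("alpha needs at least two items")
    totals: List[float] = [sum(scores) for scores in zip(*items)]
    sum_item_variances = sum(variance(item) for item in items)
    return k / (k - 1) * (1 - sum_item_variances / variance(totals))


# Hypothetical 1-5 ratings: 3 items answered by 5 respondents.
ratings = [
    [4, 5, 3, 4, 2],
    [4, 4, 3, 5, 2],
    [5, 5, 2, 4, 3],
]
print(round(cronbach_alpha(ratings), 2))  # 0.89
```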

Convergent Validity

Because the different scales all measure CT-related constructs, we expected their scores to be positively correlated. The correlation coefficients in triangle A show that, at pretest, self-rated CT knowledge is positively and significantly correlated with students’ CT disposition, CT frequency, and self-rated content knowledge. Pretest CT disposition is also positively and significantly correlated with frequency of using CT. In addition, self-rated CT knowledge is positively and significantly correlated with the CT-integrated achievement test. The correlation coefficients in triangle B show that the posttest pattern is similar to the pretest pattern overall, except that self-rated CT knowledge is not significantly correlated with CT frequency. At posttest, CT disposition is positively and significantly correlated with CT frequency, self-rated content knowledge, and self-rated CT knowledge. Although some pairs of measures are not significantly correlated, all correlation coefficients within pretest and within posttest are positive, which provides evidence of convergent validity. That is, students with higher CT dispositions also tend to have higher self-rated content knowledge and CT knowledge and to use CT more frequently, and vice versa.

In addition, year 2 students completed the Bebras items, and we correlated their pretest and posttest Bebras scores with their achievement scores. The achievement test and the Bebras items are positively and significantly correlated at both pretest (.61) and posttest (.56). That is, the internal achievement test aligned with CT skills is positively correlated with an external CT test, which provides validity evidence.
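The convergent-validity and criterion correlations reported here (for example, .61 and .56 between the achievement test and the Bebras items) are presumably Pearson correlations, although the paper does not say so explicitly. The sketch below computes Pearson’s r from paired scores; it omits the significance test, and in Python 3.10+ `statistics.correlation` returns the same coefficient.

```python
from math import sqrt
from typing import Sequence


def pearson_r(x: Sequence[float], y: Sequence[float]) -> float:
    """Pearson product-moment correlation between two paired score lists."""
    n = len(x)
    if n != len(y) or n < 2:
        raise ValueError("need two equally long lists with at least two scores")
    mean_x, mean_y = sum(x) / n, sum(y) / n
    # Sum of cross-products of deviations (proportional to the covariance).
    cross = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    ss_x = sum((a - mean_x) ** 2 for a in x)
    ss_y = sum((b - mean_y) ** 2 for b in y)
    return cross / sqrt(ss_x * ss_y)


# Hypothetical achievement and Bebras scores for six students (not study data).
achievement = [12, 18, 9, 22, 15, 17]
bebras = [2, 4, 1, 5, 3, 3]
print(round(pearson_r(achievement, bebras), 2))
```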

Test-Retest Reliability

The values on the diagonal between triangles C and D show the reliability of the measures from pretest to posttest. Pretest and posttest scores on the achievement, CT disposition, and CT frequency measures are all positively and significantly correlated. However, the test-retest correlations for self-rated content knowledge and CT knowledge are positive but low. This is understandable: because an intervention emphasizing content knowledge and CT knowledge took place between the two tests, the pretest-posttest correlation for these measures does not reflect the stability of the test alone.

Discriminant Validity

Compared with the correlation coefficients in triangles A and B, those in triangles C and D are lower overall, and some are even negative. Because these pairs of measures differ in both occasion (pretest vs. posttest) and construct (related but different constructs), their lower correlations relative to those in triangles A and B provide evidence of discriminant validity.

Overall, the tests and survey appear to show acceptable technical characteristics and can be used to examine the possible impact of the treatment on students.

Research Question 2: Pretest-Posttest Comparison

Assumption Tests

To answer research question 2, we performed paired t tests comparing posttest scores with the corresponding pretest scores, for the two summer academies both together and separately. Because the two groups may differ, we also conducted a multivariate analysis of covariance (MANCOVA), with posttest scores as the dependent variables, the corresponding pretest scores as covariates, and year as the factor. In addition, we conducted an analysis of covariance (ANCOVA) for each measure separately to locate differences on specific outcome variables. We tested the corresponding assumptions for these tests. According to both descriptive statistics and Shapiro-Wilk tests, pretest and posttest CT achievement, CT disposition, and CT frequency scores all follow a normal distribution. In contrast, pretest and posttest perceived value of physics and engineering, interest in physics and engineering, and self-efficacy in physics and engineering did not follow a normal distribution because of ceiling effects; that is, the majority of students rated 4 or 5 on those measures. This is not surprising, given that most participants indicated that at least one STEM-related subject was their favorite and that these students chose to join a summer program related to physics and engineering learning.
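As a sketch of what the paired t test computes, the function below takes each student’s posttest-minus-pretest gain and divides the mean gain by its standard error, with n − 1 degrees of freedom. The scores are hypothetical. In practice, `scipy.stats.ttest_rel(post, pre)` returns the same statistic together with a p-value; this hand-rolled version omits the p-value and fails if every gain is identical (zero variance).

```python
from math import sqrt
from statistics import mean, stdev
from typing import Sequence, Tuple


def paired_t(pre: Sequence[float], post: Sequence[float]) -> Tuple[float, int]:
    """Paired t statistic and degrees of freedom for post - pre differences."""
    if len(pre) != len(post) or len(pre) < 2:
        raise ValueError("need matched pretest/posttest pairs (at least two)")
    gains = [b - a for a, b in zip(pre, post)]
    n = len(gains)
    standard_error = stdev(gains) / sqrt(n)  # sample SD of gains / sqrt(n)
    return mean(gains) / standard_error, n - 1


# Hypothetical pretest/posttest scores for four students (not study data).
t_stat, df = paired_t(pre=[1, 2, 3, 4], post=[2, 4, 5, 7])
print(f"t({df}) = {t_stat:.2f}")  # t(3) = 4.90
```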

For the ANCOVA-related tests, Levene’s tests show that homogeneity of variance holds for all posttest scores except self-rated maker activity knowledge and self-rated CT skills, on which year 2 students had a larger variance than year 1 students. Regression analysis shows no significant interaction between pretest scores and year; that is, the homogeneity of regression assumption is met for all tests. Based on the assumption tests, the t tests, ANCOVA, and MANCOVA on the CT achievement test, CT dispositions, and CT frequency are mostly warranted.
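Levene’s test asks whether groups differ in spread by running a one-way ANOVA on each score’s absolute deviation from its group mean. The sketch below computes the classic mean-centered statistic W and its degrees of freedom; it is an illustration, the paper does not say which centering its software used, and the groups are hypothetical. `scipy.stats.levene(*groups, center='mean')` computes the same W with a p-value (SciPy’s default, `center='median'`, is the Brown-Forsythe variant).

```python
from statistics import mean
from typing import Sequence, Tuple


def levene_w(groups: Sequence[Sequence[float]]) -> Tuple[float, int, int]:
    """Mean-centered Levene statistic W with (df_between, df_within)."""
    k = len(groups)
    n_total = sum(len(g) for g in groups)
    # Absolute deviation of each score from its own group's mean.
    z = [[abs(y - mean(g)) for y in g] for g in groups]
    z_group_means = [mean(zg) for zg in z]
    z_grand_mean = sum(sum(zg) for zg in z) / n_total
    between = sum(len(zg) * (m - z_grand_mean) ** 2 for zg, m in zip(z, z_group_means))
    within = sum((zij - m) ** 2 for zg, m in zip(z, z_group_means) for zij in zg)
    w = (n_total - k) / (k - 1) * between / within
    return w, k - 1, n_total - k


# Hypothetical posttest scores: same means would not matter, only spread does.
w, df1, df2 = levene_w([[1, 2, 3], [0, 10, 20]])
print(f"W({df1}, {df2}) = {w:.2f}")
```

A large W (compared with an F distribution on df1 and df2 degrees of freedom) signals unequal variances, as the paper reports for the two self-rated knowledge measures.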

Two Groups Together

Table 4 shows the total score, descriptive statistics, and paired t test results for the CT-integrated achievement test, CT dispositions, frequency of using CT, self-reported maker knowledge, and self-reported CT skills for the two groups combined. From pretest to posttest, students across both years significantly increased their average scores on all five measures. The improvements remain statistically significant even after a Bonferroni adjustment with an alpha level of .01 (.05 divided across the five measures). These results suggest that, overall, the maker activities used in our study were effective in improving students’ physics and engineering knowledge, CT skills, and CT dispositions.
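The Bonferroni adjustment divides the family-wise alpha by the number of tests, so five outcome measures at .05 give a per-test cutoff of .01. A minimal sketch of that decision rule follows; the p-values are hypothetical, not the values in Table 4.

```python
from typing import List, Sequence


def bonferroni_alpha(family_alpha: float, n_tests: int) -> float:
    """Per-test alpha that keeps the family-wise error rate at or below family_alpha."""
    return family_alpha / n_tests


def significant_after_bonferroni(p_values: Sequence[float], family_alpha: float = 0.05) -> List[bool]:
    """Flag which tests remain significant at the Bonferroni-adjusted cutoff."""
    cutoff = bonferroni_alpha(family_alpha, len(p_values))
    return [p < cutoff for p in p_values]


# Hypothetical p-values for five outcome measures (not the study's results).
print(significant_after_bonferroni([0.001, 0.004, 0.02, 0.0005, 0.009]))
# [True, True, False, True, True]
```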

Two Groups Separately

Table 5 shows the results when the two groups are analyzed separately. Year 1 students significantly improved their scores on four measures: the CT-integrated achievement test, frequency of using CT, self-rated maker activity knowledge, and self-rated CT skills. In contrast, year 2 students significantly improved on only three measures at the .05 level: the CT-integrated achievement test, self-rated maker activity knowledge, and self-rated CT skills.

Two Group Comparison

Systematically comparing the two groups is not the focus of our study, as the groups differed in the length of treatment, some maker activities, the facilitators, and the use of adult mentors. We tentatively compared the two groups using a multivariate analysis of covariance (MANCOVA) and found that, after controlling for all pretest scores (both achievement and affective), year 1 students scored significantly higher than year 2 students, Wilks’ Lambda = 0.43, F(5, 16) = 4.29, p = .013. Univariate ANCOVAs show that year 1 students performed significantly better on the achievement test (partial eta squared = .267) and on self-rated CT skills (partial eta squared = .298), even after a Bonferroni adjustment and a stricter criterion were applied because the homogeneity of variance assumption was violated for some measures (Table 5). This result is consistent with the separate analyses: students in year 1 outperformed those in year 2, although both groups made progress overall.
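Partial eta squared, the effect size reported for the univariate ANCOVAs, is the share of variance attributable to the effect after excluding other modeled sources: SS_effect / (SS_effect + SS_error). Equivalently, it can be recovered from an F statistic as F·df_effect / (F·df_effect + df_error). The sketch below shows both standard forms; the inputs are hypothetical, since the paper does not report the F values or sums of squares behind .267 and .298.

```python
def partial_eta_squared_from_sums(ss_effect: float, ss_error: float) -> float:
    """Partial eta squared from sums of squares."""
    return ss_effect / (ss_effect + ss_error)


def partial_eta_squared_from_f(f_value: float, df_effect: int, df_error: int) -> float:
    """Partial eta squared recovered from an F statistic and its degrees of freedom."""
    return (f_value * df_effect) / (f_value * df_effect + df_error)


# Hypothetical inputs (not recomputed from the study).
print(partial_eta_squared_from_sums(ss_effect=2.0, ss_error=6.0))   # 0.25
print(round(partial_eta_squared_from_f(4.0, df_effect=1, df_error=20), 3))  # 0.167
```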

Students’ Comments

Students’ responses to the open-ended questions on the posttest survey further supported the effectiveness of the summer academies. Overwhelmingly, students were enthusiastic about the activities and mentors. Students commented that the hands-on activities and mentors were helpful and that they had improved their physics and engineering learning, their confidence in physics and engineering, and their critical thinking skills. These positive comments are consistent with the possible impact found in the quantitative analyses. Students also suggested improvements: more time to work on their projects, more materials to work with, more topics, more freedom to work on their own projects, and opportunities to work with students from more schools. Some students also suggested using fewer lectures and worksheets. These comments suggest that students valued the hands-on nature of the makerspace, which differs from the conventional STEM education they typically experience.

Conclusion and Discussion

Our study designed maker activities and formative assessment strategies and used them to improve students’ CT skills and dispositions. We also created a CT-enriched, content-loaded theoretical framework and explored a route for integrating CT with STEM learning. We expected that maker activities and formative assessment strategies following this route could be implemented in both informal and formal educational settings. Accordingly, after-school educators implemented the maker activities and formative assessment strategies in the year 1 summer academy, and formal educators implemented them in the year 2 summer academy.

We developed instruments to measure CT skills and dispositions, including a CT-integrated achievement test aligned with the maker activities, a CT disposition measure, a measure of the frequency of using CT skills, and self-rated content and CT knowledge. Overall, these measures show satisfactory psychometric features. The open-ended CT-integrated achievement test can be scored reliably. All measures have high internal consistency and are positively intercorrelated, indicating convergent validity. The achievement test is also positively correlated with an external CT test at both pretest and posttest. In addition, the CT-integrated achievement test, CT disposition, and CT frequency measures show acceptable test-retest reliability.

We examined the effectiveness of the activities by comparing posttest scores with pretest scores for the two groups together. Students significantly improved their scores on all measures, which suggests that the activities were effective in general. When the two groups were analyzed separately, students in year 1 significantly improved their scores on the CT-integrated achievement test, frequency of using CT, self-rated maker activity knowledge, and self-rated CT skills. Students in year 2 significantly improved on three of these four measures, all except frequency of using CT. Although a full, thorough comparison of the two groups is beyond the scope of this study, a tentative comparison shows that year 1 students outperformed year 2 students. We suspect that three factors may have contributed to this difference: (a) both after-school educators in year 1 were major developers of the activities and were more familiar with the activities, the CT components, and the maker environment than the two formal teachers in year 2, so implementing the activities came more naturally to them; (b) three librarians mentored the students in year 1, and even though the librarians were new to the activities themselves, as adults and after-school educators they likely gave students some additional help; (c) given the small sample size and non-random assignment, pre-existing differences between the students in the two groups may also have led to differences in their learning outcomes.

Students’ comments on the open-ended portion of the posttest survey were consistent with the quantitative survey data: students found the maker activities and mentors helpful and reported improvements in both cognitive and affective learning outcomes.

Our study has the following limitations which can be studied in future studies. First, we could not randomly assign students to different conditions. Instead, we conducted the study among the students recruited for the particular year and educators who were available to administer the activity. Future study may randomly assign teachers and students to different conditions to examine the impact of the treatment and systematically compare different treatments. Second, we only analyzed the pre and posttest data in this paper and focus on the products. Future study should examine the fidelity of implementation (process) and connect it with the student outcomes (products), e.g., how the formative assessment was implemented, and how students responded to teachers’ prompts, and how students’ learning outcomes are associated with different interactions between teachers and students. Third, the assessment validation method is limited in our study. With a larger sample size, future studies can validate the CT measures with refined sub-scales and validate them with methods, such as factor analyses. Also, using cognitive interview method, future studies can also examine whether the CT items tap CT skills when students solve the problems. Fourth, in both years, we administered the activities in public library, which is an informal educational setting with limited number of students. Although the instructors in year 2 are high school physics teachers and we intentionally did not provide any additional help to them during their implementation, many contextual factors differ between formal and informal educational settings, e.g., the ratio of teacher and students, the amount of time that students can take for each activity in different settings. 
Future studies should implement the activities in school settings, examine the effectiveness of the treatment, and explore possible challenges and solutions, so that the treatment can benefit more students and its generalizability can be tested with more teachers and students. Finally, we chose specific equipment, such as Makey Makey, breadboards, and Arduino, for students to conduct their maker activities. However, these are certainly not the only tools available to achieve similar goals; many others, with different features and advantages, may also be used. For example, Raspberry Pi (https://www.raspberrypi.org/) can be an alternative to Arduino and may be a more powerful tool. With the rapid development of technology, even more equipment may become available. We did not explore other equipment or compare different options in our study; future studies can explore how such tools may be used to integrate and improve CT and STEM learning.

Our study contributes to the field a way to improve and assess CT-integrated STEM learning in makerspaces. In particular, we (a) created a theoretical framework for integrating CT and content learning that can potentially be used to guide the design of curriculum, instruction, and assessment; (b) developed maker activities and formative assessment strategies to enhance CT skills together with physics and engineering learning; (c) developed an achievement test and a self-report survey to measure CT; (d) had both formal and after-school educators implement the maker activities and formative assessment strategies; and (e) empirically examined the instruments and the possible impact of the treatment. We hope that our work will inform and inspire researchers with similar interests to further explore ways to promote CT skills embedded in STEM learning and to measure the potential impact of maker activities on students’ CT skills and STEM learning.

Change history

03 April 2020

The original version of this article unfortunately contained mistakes.

References

Albert, J. (2016). Adding computational thinking to your science lesson: what should it look like? Paper presented at the Association for Science Teacher Education Conference, Reno, Nevada.

Barton, A. C., Tan, E., & Greenberg, D. (2016). The makerspace movement: sites of possibilities for equitable opportunities to engage underrepresented youth in STEM. Teachers College Record.

Basu, S., Biswas, G., Sengupta, P., Dickes, A., Kinnebrew, J. S., & Clark, D. (2016). Identifying middle school students' challenges in computational thinking-based science learning. Research and Practice in Technology Enhanced Learning, 11(13), 1–35.

Basu, S., Biswas, G., & Kinnebrew, J. S. (2017). Learner modeling for adaptive scaffolding in a computational thinking-based science learning environment. User Modeling and User-Adapted Interaction, 27(1), 5–53.

Bers, M. U., Flannery, L., Kazakoff, E. R., & Sullivan, A. (2014). Computational thinking and tinkering: exploration of an early childhood robotics curriculum. Computers & Education, 72, 145–157.

Bevan, B., Gutwill, J. P., Petrich, M., & Wilkinson, K. (2015). Learning through STEM-rich tinkering: findings from a jointly negotiated research project taken up in practice. Science Education, 99(1), 98–120.

Black, P., & Wiliam, D. (1998a). Assessment and classroom learning. Assessment in Education, 5(1), 7–74.

Black, P., & Wiliam, D. (1998b). Inside the black box: raising standards through classroom assessment. Phi Delta Kappan, 80(2), 139–148.

Blikstein, P. (2014). Digital fabrication and ‘making’ in education: the democratization of invention. In J. Walter-Herrmann & C. Büching (Eds.), FabLabs: of machines, makers and inventors. Bielefeld: Transcript Publishers.

Bower, M., Wood, L. N., Lai, J. W. M., Howe, C., Lister, R., Mason, R., … Veal, J. (2017). Improving the computational thinking pedagogical capabilities of school teachers. Australian Journal of Teacher Education, 42(3), 53–72.

Brennan, K., & Resnick, M. (2012). New frameworks for studying and assessing the development of computational thinking. Paper presented at the annual meeting of the American Educational Research Association, Vancouver.

Buechley, L., Peppler, K., Eisenberg, M., & Kafai, Y. (2013). Textile messages: dispatches from the world of E-textiles and education. New York, NY: Peter Lang Inc., International Academic Publishers.

Burge, J. E., Gannod, G. C., Doyle, M., & Davis, K. C. (2013). Girls on the go: a CS summer camp to attract and inspire female high school students. Paper presented at the 44th ACM technical symposium on computer science education.

Cartelli, A., Dagiene, V., & Futschek, G. (2012). Bebras contest and digital competence assessment: analysis of frameworks. In Current trends and future practices for digital literacy and competence (pp. 35–46). Hershey, PA: IGI Global.

Chen, G., Shen, J., Barth-Cohen, L., Jiang, S., Huang, X., & Eltoukhy, M. (2017). Assessing elementary students’ computational thinking in everyday reasoning and robotics programming. Computers & Education, 109, 162–175.

CSTA. (2018). Computational thinking teacher resources. Retrieved from http://www.iste.org/docs/ct-documents/ct-teacher-resources_2ed-pdf.pdf?sfvrsn=2

Dagienė, V., & Futschek, G. (2008). Bebras international contest on informatics and computer literacy: criteria for good tasks. Paper presented at the 3rd international conference on informatics in secondary schools - evolution and perspectives: Informatics education - supporting computational thinking, Torun, Poland.

Garneli, V., & Chorianopoulos, K. (2018). Programming video games and simulations in science education: exploring computational thinking through code analysis. Interactive Learning Environments, 26(3), 386–401.

Gero, A., & Levin, L. (2019). Computational thinking and constructionism: creating difference equations in spreadsheets. International Journal of Mathematical Education in Science and Technology, 50(5), 779–787.

Grover, S., & Pea, R. (2013). Computational thinking in K–12: a review of the state of the field. Educational Researcher, 42(1), 38–43.

Grover, S., Pea, R., & Cooper, S. (2015). Designing for deeper learning in a blended computer science course for middle school students. Computer Science Education, 25(2), 199–237.

Halverson, E. R., & Sheridan, K. (2014). The maker movement in education. Harvard Educational Review, 84(4), 495–504.

Jacobs, J., & Buechley, L. (2013). Codeable objects: computational design and digital fabrication for novice programmers. Paper presented at the SIGCHI Conference on Human Factors in Computing Systems.

Kafai, Y., Peppler, K., & Chapman, R. (2009). The computer clubhouse: constructionism and creativity in youth communities. New York, NY: Teachers College Press.

Kafai, Y., Fields, D., & Searle, K. (2014). Electronic textiles as disruptive designs: supporting and challenging maker activities in schools. Harvard Educational Review, 84(4), 532–556.

Lee, I. (2011). Assessing youth’s computational thinking in the context of modeling & simulation. Paper presented at the annual meeting of the American Educational Research Association, New Orleans, LA.

Lee, I., Martin, F., Denner, J., Coulter, B., Allan, W., Erickson, J., Malyn-Smith, J., & Werner, L. (2011). Computational thinking for youth in practice. ACM Inroads, 2(1), 32–37.

Lee, I., Martin, F., & Apone, K. (2014). Integrating computational thinking across the K-8 curriculum. ACM Inroads, 5(4), 64–71.

Leonard, J., Barnes-Johnson, J., Mitchell, M., Unertl, A., Stubbe, C. R., & Ingraham, L. (2017). Developing teachers’ computational thinking beliefs and engineering practices through game design and robotics. North American Chapter of the International Group for the Psychology of Mathematics Education.

Lin, Q., Yin, Y., Tang, X., & Hadad, R. (2018). A systematic review of empirical research on maker activity assessment. Paper presented at the annual meeting of the American Educational Research Association, New York.

Malyn-Smith, J., & Lee, I. (2012). Application of the occupational analysis of computational thinking-enabled STEM professionals as a program assessment tool. Journal of Computational Science Education, 3(1), 2–10.

Martin, L. (2015). The promise of the maker movement for education. Journal of Pre-College Engineering Education Research, 5(1), 30–39.

Martin, L., & Dixon, C. (2013). Youth conceptions of making and the maker movement. Paper presented at the Interaction Design and Children conference, New York.

Martinez, S. L., & Stager, G. (2013). Invent to learn: making, tinkering, and engineering in the classroom. Torrance, CA: Constructing Modern Knowledge Press.

Mouza, C., Yang, H., Pan, Y.-C., Ozden, S. Y., & Pollock, L. (2017). Resetting educational technology coursework for pre-service teachers: a computational thinking approach to the development of technological pedagogical content knowledge (TPACK). Australasian Journal of Educational Technology, 33(3), 61–76.

NGSS. (2013). Appendix F - Science and engineering practices in the NGSS. Retrieved from http://www.nextgenscience.org/sites/ngss/files/Appendix%20F%20%20Science%20and%20Engineering%20Practices%20in%20the%20NGSS%20-%20FINAL%20060513.pdf

Papavlasopoulou, S., Giannakos, M. N., & Jaccheri, L. (2017). Empirical studies on the maker movement, a promising approach to learning: a literature review. Entertainment Computing, 18, 57–78.

Papert, S. (1980). Mindstorms: children, computers, and powerful ideas. New York, NY: Basic Books.

Peppler, K., & Glosson, D. (2013). Stitching circuits: Learning about circuitry through e-textile materials. Journal of Science Education and Technology, 22(5), 751–763.

Resnick, M., Maloney, J., Monroy-Hernández, A., Rusk, N., Eastmond, E., Brennan, K., … Silverman, B. (2009). Scratch: programming for all. Communications of the ACM, 52(11), 60–67.

Rivas, L. (2014). Creating a classroom makerspace. Educational Horizons, 93(1), 25–26.

Ruiz-Primo, M., & Furtak, E. (2007). Informal formative assessment and scientific inquiry: exploring teachers’ practices and student learning. Educational Assessment, 11(3 & 4), 205–235.

Sáez-López, J.-M., Román-González, M., & Vázquez-Cano, E. (2016). Visual programming languages integrated across the curriculum in elementary school: a two year case study using “Scratch” in five schools. Computers & Education, 97, 129–141. https://doi.org/10.1016/j.compedu.2016.03.003.

Searle, K. A., & Kafai, Y. B. (2015). Boys’ needlework: understanding gendered and indigenous perspectives on computing and crafting with electronic textiles. Paper presented at the international computing education research conference, Omaha, Nebraska.

Selby, C., & Woollard, J. (2013). Computational thinking: the developing definition. Paper presented at the special interest group on computer science education (SIGCSE).

Sengupta, P., Kinnebrew, J. S., Basu, S., Biswas, G., & Clark, D. (2013). Integrating computational thinking with K-12 science education using agent-based computation: a theoretical framework. Education and Information Technologies, 18(2), 351–380.

Shavelson, R. J., Yin, Y., Furtak, E. M., Ruiz-Primo, M. A., Ayala, C. C., Young, B., … Pottenger, F. M. (2008). On the role and impact of formative assessment on science inquiry teaching and learning. In J. Coffey, R. Douglas, & C. Stearns (Eds.), Assessing science learning: perspectives from research and practice (pp. 21–36). Arlington, VA: National Science Teachers Association Press.

Shell, D. F., & Soh, L.-K. (2013). Profiles of motivated self-regulation in college computer science courses: differences in major versus required non-major courses. Journal of Science Education and Technology, 22(6), 899–913.

Sheridan, K., Clark, K., & Williams, A. (2013). Designing games, designing roles: a study of youth agency in an urban informal education program. Urban Education, 48(5), 734–758.

Sheridan, K., Halverson, E. R., Litts, B., Brahms, L., Jacobs-Priebe, L., & Owens, T. (2014). Learning in the making: a comparative case study of three makerspaces. Harvard Educational Review, 84(4), 505–531.

Sherman, M., & Martin, F. (2015). The assessment of mobile computational thinking. Journal of Computing Sciences in Colleges, 30(6), 53–59.

Silver, J., Rosenbaum, E., & Shaw, D. (2012). Makey Makey: improvising tangible and nature-based user interfaces. Paper presented at the ACM conference on tangible, embedded and embodied interaction, Kingston, Ontario, Canada.

Tang, X., Yin, Y., Lin, Q., & Hadad, R. (2018). Assessing computational thinking: a systematic review of the literature. Paper presented at the annual meeting of the American Educational Research Association, New York.

Wagner, A., Gray, J., Corley, J., & Wolber, D. (2013). Using app inventor in a K-12 summer camp. Paper presented at the 44th ACM technical symposium on computer science education, Denver, CO.

Weintrop, D., Beheshti, E., Horn, M. S., Orton, K., Trouille, L., Jona, K., & Wilensky, U. (2014). Interactive assessment tools for computational thinking in high school STEM classrooms. Paper presented at INTETAIN, Chicago, IL.

Weintrop, D., Beheshti, E., Horn, M., Orton, K., Jona, K., Trouille, L., & Wilensky, U. (2016). Defining computational thinking for mathematics and science classrooms. Journal of Science Education and Technology, 25(1), 127–147.