Abstract

We first establish a law of large numbers and a convergence theorem in distribution to show the rate of convergence of the non-local means filter for removing Gaussian noise. Based on the convergence theorems, we propose a patch-based weighted means filter for removing an impulse noise and its mixture with a Gaussian noise by combining the essential idea of the trilateral filter and that of the non-local means filter. Experiments show that our filter is competitive compared to recently proposed methods. We also introduce the notion of degree of similarity to measure the impact of the similarity among patches on the non-local means filter for removing a Gaussian noise, as well as on our new filter for removing an impulse noise or a mixed noise. Using again the convergence theorem in distribution, together with the notion of degree of similarity, we obtain an estimation for the PSNR value of the denoised image by the non-local means filter or by the new proposed filter, which is close to the real PSNR value.

Similar content being viewed by others

Avoid common mistakes on your manuscript.

1 Introduction

Images are produced to record or display useful information. Due to the visibility of images and the rapid development of science and technology, images play an increasingly important role in our lives. However, because of imperfections in the imaging and capturing process, the recorded image invariably represents a degraded version of the original scene [3, 5]. The undoing of these imperfections is crucial to many of the subsequent image processing tasks.

There exists a wide range of different degradations. A very important example is the existence of noise. Noise may be introduced by the medium through which the image is created and transmitted. In this paper, we concentrate on removing impulse noise and its mixture with Gaussian noise.

We present a numerical image by a \(M\times N\) matrix \(u = \{u(i): i\in I \}\), where \(I=\{0, 1,\dots , M-1\} \times \{0, 1, \dots , N-1\}\) is the image domain, and \( u(i) \in \{0,1,2,\dots , 255\}\) represents the gray value at the pixel i for 8-bit gray images. The additive Gaussian noise model is:

where \(u=\{ u(i): i\in I\}\) is the original image, \(v=\{ v(i): i\in I\}\) is the noisy one, and \(\eta \) is the Gaussian noise: \(\eta (i)\) are independent and identically distributed Gaussian random variables with mean 0 and standard deviation \(\sigma \). In the sequel we always denote by u the original image, and v the noisy one. The random impulse noise model is:

where \(0<p<1\) is the impulse probability (the proportion of the occurrence of the impulse noise), and \(\eta (i)\) are independent random variables uniformly distributed on \([\text{ min }\{u(i):i\in I\},\text{ max }\{u(i):i\in I\}]\), generally taken as [0,255] for 8-bit gray images. For the mixture of Gaussian noise and impulse noise model, an image is first added by Gaussian noise and then contaminated by impulse noise. The task of image denoising is to recover the unknown original image u as well as possible from the degraded one v.

Many denoising methods have been developed in the literature. To remove a Gaussian noise, there are approaches based on wavelets [7, 10, 15], approaches based on variational models [16, 32], and weighted means approaches [4, 23, 33, 34, 41], etc. An important progress was marked by the proposition of the non-local means filter [4], abbreviated as NL-means; this filter estimates original images by weighted means along similar local patches. Since then, many researchers have combined the basic idea of NL-means with some other methods to remove noise, see for instance [11, 18, 20, 27]. There are also many methods to remove an impulse noise, including median based filters [1, 8, 30], fuzzy filters [42], and variational based methods [6, 14, 29].

The above-mentioned methods can only be applied to remove one kind of noise (a Gaussian noise or an impulse noise), and can not be used to remove a mixture of a Gaussian noise and an impulse noise. To remove a mixed noise, a successful method is the trilateral filter proposed in [19], where an interesting statistic called ROAD (Rank of Ordered Absolute Differences) is introduced to detect the impulse noisy pixels; this filter combines the ROAD statistic with the bilateral filter [33, 34] to remove the noise. The trilateral filter [19] is also effective for removing an impulse noise; a variant of the ROAD statistic, named ROLD (Rank-Ordered Logarithmic Difference), has been proposed in [14], where it is combined with the edge-preserving variational method [6] for removing impulse noise. Other methods have also been developed recently to remove a mixed noise. The papers [12, 13, 21, 24, 38] use the patch-based idea of NL-means to remove an impulse noise and a mixed noise. In [21, 24], generalizations of NL-means are proposed for removing an impulse noise and its mixture with a Gaussian noise, using the ROAD statistic [19]; the main idea therein is to define weights in terms of the ROAD statistic and the similarity of local patches, which are nearly zero for impulse noisy points. In [38], NL-means is adapted by estimating the similarity of patches with the reference image obtained in an impulse noise detection mechanism. The papers [12, 13] also use a patch-based approach, where a robust distance is introduced (inspired by order statistics) to estimate the similarity between patches using the tail of the binomial distribution, and the maximum likelihood estimator (MLE) is used to estimate the original image. The methods in [40] and [37, 43] use the ideas of BM3D [11] and K-SVD [18] (which are the state-of-the-art algorithms for Gaussian noise removal) respectively to remove a mixed noise; the algorithm proposed in [25] is based on a Bayesian classification of the input pixels, which is combined with the kernel regression framework.

The NL-means filter [4] explores in a nice way the similarity phenomenon existing very often in natural images. As stated above, many filters have been proposed based on the basic idea of NL-means. But the theoretic aspects have not been so much studied. A probabilistic explanation called similarity principle was given in [24]. In this paper we will improve this principle by proving a Marcinkiewicz law of large numbers and a convergence theorem in distribution, which describe the rate of convergence of NL-means.

Based on the convergence theorems, we will propose a new filter, called Patch-based Weighted Means Filter (PWMF) to improve the Mixed Noise Filter (MNF) introduced in [24]. Compared to MNF, the new filter simplifies the joint impulse factor in MNF, adds a spatial factor so that the filter extends entirely both the trilateral filter and the NL-means filter, and adjusts the choice of parameters. Experimental results show that our new filter is competitive for removing both the impulse noise and the mixed noise, compared to recently developed filters [13, 19, 37, 38, 40].

To well understand the impact of the similarity on the quality of NL-means, we will introduce the notion of degree of similarity. We will see that, in general, the larger the value of degree of similarity, the better the restoration result by NL-means. Furthermore, using the degree of similarity together with the convergence theorem in law, we will give a good estimation of the PSNR value of the denoised image (without knowing the original image). Our simulations show that the estimated PSNR value is quite close to the real one when the original image is known.

This paper is an extended and improved version of our conference paper [21]; it also develops and improves the earlier work [24].

The rest of this paper is organized as follows. In Sect. 2, we recall the non-local means filter [4], and present two convergence theorems (Theorems 1 and 2) to show the rate of convergence of NL-means. The proofs of the convergence theorems are postponed in an appendix by the end of the paper. In Sect. 3, we recall the trilateral filter [19] and introduce our new patch-based weighted means filter. Experiments are presented in Sect. 4 to compare the new filter with some recently proposed ones. In Sect. 5, the notion of degree of similarity is first introduced, and then applied to estimations of PSNR values, using the convergence theorem in law. Conclusions are made in Sect. 6. The paper is ended by an appendix in which we give the proofs of the convergence theorems, by establishing two more general convergence theorems for random weighted means of l-dependent random variables.

2 Convergence Theorems for Non-local Means

In this section, we present a Marcinkiewicz law of large numbers and a convergence theorem in distribution for NL-means [4] to remove Gaussian noise.

2.1 Non-local Means

Begin with some notation. For \(i \in I\) and \(d>1\) an odd integer, let

with \(\Vert \cdot \Vert _\infty \) denoting the sup norm:

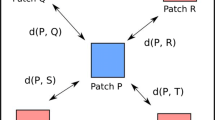

In other words, \( \mathcal {N}_{i}(d) \) is the window with center i and size \(d \times d\), and \(\mathcal {N}_{i}^{0}(d)\) is the same window but with the center i deleted. We sometimes simply write \(\mathcal {N}_{i}\) and \(\mathcal {N}_{i}^{0}\) for \(\mathcal {N}_{i}(d)\) and \(\mathcal {N}_{i}^{0}(d)\), respectively. Denote by

the vector composed of the gray values of v in the window \(\mathcal {N}_{i}\) arranged lexicographically; it represents the local oriented image patch defined on the window \(\mathcal {N}_{i}\).

For another odd integer D, let \(\mathcal {N}_{i} (D) \) be the window with center i and size \(D \times D\), defined in the same way as we did for \(\mathcal {N}_{i} (d) \). The denoised image by NL-means is given by

with

where \(\sigma _r>0\) is a control parameter,

\( a(i,k)>0\) being some fixed weights usually chosen to be a decreasing function of the Euclidean norm \(\Vert i-k\Vert \) or the sup norm \(\Vert i-k\Vert _\infty \), and \(\mathcal {T}=\mathcal {T}_{ij}\) is the translation mapping i to j (thus mapping \(\mathcal {N}_i\) onto \(\mathcal {N}_j\)):

We call \(\mathcal {N}_{i}(D)\) search windows, and \(\mathcal {N}_i=\mathcal {N}_{i}(d)\) local patches. Theoretically, the search window \(\mathcal {N}_{i}(D)\) in (1) can be chosen as the whole image I; but in practice, it is better to choose \(\mathcal {N}_{i}(D)\) with an appropriate number D not too large.

2.2 Convergence Theorems

We now present two convergence theorems for NL-means using probability theory. Following [24], first give a definition of similarity and recall the notion of l-dependence.

For simplicity, use the same notation \(v(\mathcal {N}_{i})\) to denote both the observed image patch centered at i and the corresponding random variable (in fact the observed image is just a realization of the corresponding variable). Therefore the distribution of the observed image \(v(\mathcal {N}_{i})\) is just that of the corresponding random variable.

Definition 1

(Similarity) Two patches \(v(\mathcal {N}_{i})\) and \(v(\mathcal {N}_{j})\) are called similar if they have the same probability distribution.

Definition 1 is a probabilistic interpretation of the similarity phenomenon. According to this definition, two observed patches \(v(\mathcal {N}_{i})\) and \(v(\mathcal {N}_{j})\) are similar if they are issued from the same probability distribution. In practice, we consider that two patches \(v(\mathcal {N}_i)\) and \(v(\mathcal {N}_j)\) are similar if their Euclidean distance is small enough, say \(\Vert v(\mathcal {N}_i)-v(\mathcal {N}_i)\Vert <T\) for some threshold T. We will come back to this point later in Sect. 5 when we consider the notion of degree of similarity.

Note that Definition 1 is equivalent to say that the non-noisy patches \(u(\mathcal {N}_i)\) and \(u(\mathcal N_j)\) are equal. In fact, when \(v(\mathcal N_i)\) and \(v(\mathcal N_j)\) have the same distribution, they have the same expected value, so that \(u(\mathcal N_i) = u(\mathcal N_j)\). The converse is also easy: when \(u(\mathcal N_i) = u(\mathcal N_j)\), then \(v(\mathcal N_i)\) and \(v(\mathcal N_j)\) have the same distribution, as so do \(\eta (\mathcal N_i)\) and \(\eta (\mathcal N_j)\). The definition seems to be somehow restrictive, but this models the ideal situation, and coincides with our intuition that \(u(\mathcal N_i) \) and \(u(\mathcal N_j) \) are very close when the patches are similar.

Definition 2

(l-dependence) For an integer \(l\ge 0\), a sequence of random variables \(X_1, X_2, \dots \) is called to be l-dependent if each subsequence \(X_{k_1}, X_{k_2}, \dots \) is independent whenever \(|k_m-k_n|>l\) for all \(m,n\ge 1.\) (That is, random variables with distances strictly greater than l are independent of each other.)

Fix two odd integers \(d>1\) and \(D>1\). For \(i\in I\), define

For convenience, we write \( I_i\) in the form

(throughout the paper for a set S we write |S| for the cardinality of S). The elements \(j \in I_i\) are ordered according to the increasing order of \(\Vert j-i\Vert _{\infty }\), and for the \(j's\) with the same distance \(\Vert j-i\Vert _{\infty }\), say \(\Vert j-i\Vert _{\infty }=c\) for some constant c, they are ordered anticlockwise beginning from the upper left corner of the quadrilateral \(\{x \in \mathbb {R}^2: \Vert x-i\Vert _{\infty }=c\}\). Notice that \(v(\mathcal {N}_{i})\) and \(v(\mathcal {N}_{j})\) are independent if \( \mathcal {N}_{i} \cap \mathcal {N}_{j} = \emptyset \). Since \(\mathcal {N}_{j} \cap \mathcal {N}_{j} \not = \emptyset \) if and only if \(\Vert j-i\Vert _{\infty } \le d-1\), there are precisely \((2d-1)^2 - 1\) windows \( \mathcal {N}_{j} \) with \(j\not = i \) which intersect \(\mathcal {N}_{i}\) (as the elements \(j \not = i\) with \(\Vert j-i\Vert _{\infty } \le d-1\) constitute a window centered at i of size \((2d-1) \times (2d-1)\), whose center is deleted). Therefore, we have:

Lemma 1

For each \(i\in I\), with the order of \(I_i\) that we defined above, the sequence of random vectors \(\{ v( \mathcal {N}_{j}): j \in I_i\}\) is l-dependent for \(l= (2d-1)^2 -1\).

Actually, very often we can take l smaller, as we only consider similar patches. Notice that if we choose the lexicographical order for \(I_i\), then we need to choose l much larger for the l-dependence. This is why we ordered \(j \in I_i \) according to the increasing order of \(\Vert j-i\Vert _{\infty }\).

As usual, for two sequences of real numbers \(a_n\) and \(b_n\), we write

Accordingly, when \(a_n\) and \(b_n\) are random, \(a_n = o (b_n)\) (resp. \(a_n = O (b_n)\)) almost surely means that

The following theorem is a kind of Marcinkiewicz law of large numbers. It gives an estimation of the almost sure convergence rate of the estimator to the original image for the non-local means filter.

Theorem 1

Let \(i\in I \) and let \(I_i\) be the set of j such that the patches \(v(\mathcal {N}_{i})\) and \(v(\mathcal {N}_{j})\) are similar (in the sense of Definition 1). Set

where

Then for any \(\epsilon \in (0,\frac{1}{2}]\), as \(|I_i|\rightarrow \infty \),

where \(|I_i|\) denotes the cardinality of \(I_i\).

Notice that when \(\epsilon =\frac{1}{2}\), (8) means that

which is the similarity principle in [24].

Recall that \(\mathcal {N}_i^0=\mathcal {N}_i\backslash \{i\}\). Theorem 1 shows that \(v^0(i)\) is a good estimator of the original image u(i) if the number of similar patches \(|I_i|\) is sufficiently large. Here we use the weight \(w^0 (i,j)\) instead of w(i, j), as \(w^0 (i,j)\) has the nice property that it is independent of v(j) if \(j\not \in \mathcal {N}_{i}\). This property is used in the proof, and makes the estimator \(v^0 (i)\) to be nearly non-biased: in fact, if the family \(\{v(j)\}_j\) is independent of the family \(\{w^0(i,j)\}_j \) (e.g. this is the case when the similar patches are disjoint), then it is evident that \(\mathbb {E}v^0(i) = u(i)\). We can consider that this non-biased property holds approximately as for each j there are few k such that \(w^0 (i,k)\) is dependent of v(j). A closely related explanation about the biased estimation of NL-means can be found in [39].

Notice that when \(v(\mathcal {N}_{j})\) is not similar to \(v(\mathcal {N}_{i})\), the weight \(w^0(i,j)\) is small and negligible. Therefore it is also reasonable to take all patches for the calculation. Indeed, taking just similar patches or all patches does not make much difference for the denoising results, as shown in Table 1 of [2]. However, selecting only similar patches can slightly improve the restoration result, and can also speed up the algorithm, as shown in [2, 26] where a pre-classification of patches is proposed so that we only need calculate the weights for similar patches. On the other hand, as illustrated by [39], the difference between \( w^0(i,j)\) and w(i, j) is also small, but \(w^0(i)\) often gives a little better restoration result. Therefore, Theorem 1 not only gives a mathematical justification of the original non-local means filter (showing that NLM(v)(i) is a reasonable estimator of u(i)), but also suggests some improvements by taking just similar patches instead of all patches, and using the weights \( w^0(i,j)\) instead of w(i, j).

The next result is a convergence theorem in distribution. It states that \(v^0(i)-u(i) \rightarrow 0\) with a rate as \(1/\sqrt{|I_i|}\) in the sense of distribution.

Theorem 2

Under the condition of Theorem 1, assume additionally that \(\{v(\mathcal {N}_{j}): j\in I_i\}\) is a stationary sequence of random vectors. Then as \( |I_i|\rightarrow \infty \),

where \(\mathop {\rightarrow }\limits ^{d}\) means the convergence in distribution, and \(\mathcal {L}\) is a mixture of centered Gaussian laws in the sense that it has a density of the form

\(\mu \) being the law of \(v(\mathcal {N}_i^0)\) and

with

Moreover, writing \(m=|\mathcal {N}_{i}^{0}|=d^2-1, \nu =\mathbb {E}(v(\mathcal {N}_{j_k}^0))\) and

we have the approximations

and

To apply Theorem 2 we often need to calculate the probability of the form \(\mathcal {L}(a,b) = \int _a^b f(t)dt\), where a, b are real numbers such that \(a<b\). In practice, to this end we can replace the density function f(t) by its approximation \({\tilde{f}} (t)\). But a direct calculation of \({\tilde{f}} (t) \) is not easy when m is large (as we have a multiple integral of order m whose numerical calculation is not easy). An efficient way for the calculation is to use the Monte-Carlo simulation as follows.

Remark 1

(Calculation of \(\int _a^b f(t)dt\) by Monte-Carlo simulation) Let \(-\infty < a < b < \infty \), and let \(X_i\) and \(U_i\) be independent random variables such that each \(X_i\) has the normal law \( N(0,\sigma ^2 Id_m)\) on \(\mathbb {R}^{m} \) (with \(Id_m\) denoting the identity matrix of size \(m \times m\)), and each \(U_i\) has the uniform law on (a, b). Then for \(a<b\) and k large enough,

To see the approximation, it suffices to notice that, by the law of large numbers,

By Theorems 1 and 2, the larger the value of \(|I_i|\), the better the approximation of \(v^0(i)\) to u(i), that is to say, the larger the number of similar patches, the better the restored result. This will be confirmed in Sect. 5 where we shall introduce the notion of degree of similarity for images, showing that the larger the degree of similarity, the better the quality of restoration.

The proofs of the theorems will be given in Appendix.

3 Patch-Based Weighted Means Filter

In this section, we first introduce our new filter which combines the basic idea of NL-means [4] and that of the trilateral filter [19]. We then analyse the convergence of this new filter for removing mixed noise.

3.1 Trilateral Filter

The authors of [19] proposed a neighborhood filter called the trilateral filter as an extension of the bilateral filter [33, 34] to remove random impulse noise and its mixture with Gaussian noise. Firstly, they introduced the statistic ROAD (Rank of Ordered Absolute Differences) to measure how like a point is an impulse noisy point defined by

\(r_{k}(i)\) being the k-th smallest term in the set \(\{ | u(i)-u(j)|: j\in \mathcal {N}_{i}(d)\backslash \{i\}\}, d\) and m two constants taken as \(d=3, m=4\) in [19]. If i is an impulse noisy point, then ROAD(i) is large; otherwise it is small. Therefore, the ROAD statistic serves to detect impulse noisy points.

Secondly, with the ROAD statistic, they defined the impulse factor \(w_{I}(i)\) and the joint impulse factor \(J_I(i,j)\):

where \(\sigma _{I}\) and \(\sigma _{J}\) are control parameters Footnote 1. If i is an impulse noisy point, then the value of \(w_{I}(i)\) is close to 0; otherwise it is close to 1. Similarly, if either i or j is an impulse noisy point, then the value of \(J_I(i,j)\) is close to 0; otherwise it is close to 1.

Finally, the restored image by the trilateral filter is

where

with

3.2 Patch-Based Weighted Means Filter

As in the non-local means filter [4], our filter estimates each point by the weighed means of its neighbors, and the weight for each neighbor is determined by the similarity of local patches centered at the estimated point and the neighbor. Due to the existence of impulse noise, some points are totally destroyed, so that noisy values are not related to original values at all. So we have to diminish the influence of impulse noisy points. Similarly to [21, 24], we introduce the following weighted norm:

where

Recall that here \(k=(k_1, k_2)\) represents a two-dimensional spatial location of a pixel, \(w_{I}\) is defined in (19), and \(\mathcal {T}\) is the translation mapping i to j (and thus \(\mathcal {N}_i(d)\) onto \(\mathcal {N}_j(d)\)). \(F\big (k,\mathcal {T}(k)\big )\) is a joint impulse factor: if k or \(\mathcal {T}(k)\) is an impulse noisy point, then \(F\big (k,\mathcal {T}(k)\big )\) is close to 0, so that these points contribute little to the weighted norm; otherwise \(F\big (k,\mathcal {T}(k)\big )\) is close to 1.

We now define our filter that we call Patch-Based Weighted Means Filter (PWMF). The restored image by PWMF is defined as

where

with

and \(w_{I}(j)\) is defined in (19). In addition, we mention that, in this paper, we use the joint impulse factor \(F\big (k,\mathcal {T}(k)\big )\) \(=w_I(k)w_I\big (\mathcal {T}(k)\big )\), which is different from the choice in [24] and [21], where \(F\big (k,\mathcal {T}(k)\big )\) \(=J_I\big (k,\mathcal {T}(k)\big )\). In fact, we can see that with this new choice, we simplify the methods in [24] and [21] by eliminating a parameter and speeding up the implementation. Furthermore, we empirically find that the new choice leads to an improvement of the quality of restored images, especially for impulse noise.

3.3 Convergence Analysis

Our new filter can be regarded as an application of the mathematical justifications of the non-local means filter stated in Sect. 2 to the remained image obtained after filtering the impulse noisy points by the weighted norm (22), which can be considered to contain only Gaussian noise.

Let us explain this more precisely. For a fixed pixel i, let

be the set of impulse noisy points, and \({P^c}=\mathcal {N}_{i}(D) \backslash P\) be its complementary. We will show that

which demonstrates that the estimated value by this filter is close to the weighted average of gray value of the non-impulse noisy points. Since \( w_I(j)\approx 0\) for impulse noisy point j, and \( w_I(j)\approx 1\) for other points, we can think that PWMF is close to NL-means applied to images containing only Gaussian noisy points. By Theorem 1, the right-hand side of (27) is close to u(i), which indicates the convergence \(\text{ PWMF }(v)(i)\) to u(i).

To obtain (27), set

then it holds that

Since

it follows that

We claim that \({J_2}/{J_1}\) is very small, and so is \( {J_2}/({J_1+J_2})\). In fact,

For \(j\in P\), we can approximately replace the ROAD statistic by its mean value \({\bar{R}} (P)\) over P; for \(j\in P^c\), we do the same by the mean value \({\bar{R}} (P^c)\) over \(P^c\). Therefore writing

we get

where the last step holds by Theorem 1 of [31]. Therefore, it follows that

The value of right-hand side of (29) is generally small. For example, for impulse noise \(p=0.2\), by results in [19], \({\bar{R}} (P)\) is about 242, and \({\bar{R}} (P^c)\) is about 17. Therefore, with the parameter \(\sigma _I=50\) used in the experiments,

Thus, by (28), the value \(\text{ PWMF }(v)(i)\) is very close to \(\frac{R_1}{J_1} \): we have approximately

4 Choices of Parameters and Comparison with Other Filters by Simulations

4.1 Choices of Parameters

Notice that PWMF reduces to NL-means when \(\sigma _I= \sigma _S=\infty \). So for removing Gaussian noise, a reasonable choice is to take \(\sigma _I\) and \(\sigma _S\) large enough. Now present the choices of parameters for removing impulse noise and mixed noise, which are determined empirically and important for our filter. The noise level \(\sigma \) and p, are supposed to be known, otherwise, there are methods to estimate them in the literature, for example [22, 28, 40].

In the calculation of ROAD [cf.(18)], we choose \(3\times 3\) neighborhoods and \(m=4\). For impulse noise or mixed noise with \(p=0.4, 0.5\), to further improve the results, \(5\times 5\) neighborhoods and \(m=12\) are used to calculate ROAD.

The patch size \(d=9\) is used in all cases; the search window sizes D are shown in Table 1.

Now come to the choice of \(\sigma _I, \sigma _M, \sigma _{S}, \sigma _{S,M}\) appearing in (19), (25) and (23). To apply our filter easily in practice, simple and uniform formulas in terms of p and \(\sigma \) are searched empirically. Assume that there is some simple relation between the desired parameters and the given parameters (the values of \(\sigma \) and p). We try some simple relations of the form \( a \sigma + bp +c\), then determine a, b, c by experiments in different cases, and then verify their validity again by many experiments.

-

To remove impulse noise, use \(\sigma _M=3+20p, \sigma _{S}=0.6+p\), and omit the factor \(w_{S,M}\) (i.e. \(\sigma _{S,M}\) can be taken a value large enough); \(\sigma _I=50\) for \(p=0.2, 0.3\), and \(\sigma _I=160\) for \(p=0.4, 0.5\).

-

For mixed noise, choose \(\sigma _I=50+5\sigma /3, \sigma _M=3+0.4\sigma +20p, \sigma _{S,M}=2\), and omit the factor \(w_{S}\), while \(\sigma = 10, 20, 30\) and \(p=0.2,0.3\).

-

For other values of \(\sigma \) or p, choose parameters by linear interpolation, linear extension or according to the adjacent values of \(\sigma \) or p.

It is not easy to find appropriate parameters for a filter. Different choices of parameters can have great influence to the restored images. See also [13]. Some similar research for NL-means can be found in [17, 35, 39].

Note that our choice of parameters is different from [24]: for the patch size, we use \(d=9\), while [24] uses \(d=3\) in most cases; for impulse noise with \(p=0.4,0.5\), we use \(5\times 5\) neighborhoods for ROAD, while [24] always uses \(3\times 3\) neighborhoods.

4.2 Experiments and Comparisons

We use standard gray images to test the performance of our filterFootnote 2. Original images are shown in Fig. 1.Footnote 3 As usual we use PSNR (Peak Signal-to-Noise Ratio)

to measure the quality of a restored image, where u is the original image, and \({\bar{v}}\) the restored one. For the simulations, the gray value of impulse noise is uniformly distributed on the interval [0,255]. We add Gaussian noise and then add impulse noise for the simulation of mixed noise. The same realizations of noisy images for comparisons of different methods are used when codes are available, that is, for TriF [19], ROLD-EPR [14], NLMixF [21] and MNF [24]. For other methods, the reported results in corresponding papers are listed.

The results for TriF are obtained by the program made by ourselves. To compare the performance of our filter with those of TriF fairly, we make our effort to obtain the best results as we can according to the suggestion of [19].

-

Use \(\sigma _I=40, \sigma _J=50, \sigma _S=0.5,\) and \(\sigma _R=2\sigma _{\textit{QGN}}\), where \(\sigma _{\textit{QGN}}\) is an estimator for the standard deviation of “quasi-Gaussian” noise defined in [19].

-

For impulse noise, apply one iteration for \(p=0.2\), two iterations for \(p=0.3, 0.4\), and four iterations for \(p=0.5\). For mixed noise, apply TriF twice with different values of \(\sigma _S\) as suggested in [19]: with all impulse noise levels p, for \(\sigma =10\), first use \(\sigma _{S}=0.3\), then \(\sigma _{S}=1\); for \(\sigma =20\), first \(\sigma _{S}=0.3\), then \(\sigma _{S}=15\); for \(\sigma =30\), first \(\sigma _{S}=15\), then \(\sigma _{S}=15\).

For ROLD-EPR, the listed values are the best PSNR values along iterations with the code from the authors of [14].

Table 2 demonstrates the performances of PWMF for removing impulse noise by comparing with TriF [19], ROLD-EPR [14], PARIGI [13], and NLMixF [21]. For ease of comparison, in this and following tables, the best results and the results where the differences from the best ones are less than 0.1 dB are displayed in bold. We can observe that our filter PWMF attains the best performance in term of PSNR. Some visual comparisons are shown in Figs. 2 and 3. Carefully comparing these images, we observe that TriF loses some small textured details, while ROLD-EPR is not smooth enough. PARIGI and PWMF show better results.

Different papers consider different mixtures of Gaussian noise and impulse noise. We demonstrate the performance of PWMF for removing mixed noise in Tables 3, 4, 5, and 6 by comparing it with TriF [19], NLMixF [21], PARIGI [13] IPAMF + BM [40], Xiao [37], MNF [24], and Zhou [43]. All these comparisons illustrate good performance of our filter except for Barbara when comparing with PARIGI. Our method does not work very well as PARIGI for Barbara, because the ROAD statistics is a very local statistics and can not use the redundancy of this image very well to detect impulse noisy pixels, while PARIGI is particularly powerful for the restoration of textured regions. From Fig. 4, it can be seen that the results of our filter are visually better than TriF. From Fig. 5, we can see that when the standard deviation \(\sigma \) is high, our filter is smoother than PARIGI, while PARIGI seems to preserve more weak textured details, but it has evident artifacts throughout the whole image (see the electronic version of this paper at full resolution).

Finally, we compare the CPU time of TriF [19], NLMixF [21], and our method PWMF for removing mixed nose in seconds in the platform of MATLAB R2011a with unoptimized mex files. The computer is equipped with 2.13GHZ Intel (R) Core (TM) i3 CPU and 3.0 GB memory. The results are presented in Table 7, which demonstrate that PWMF is rather fast: much faster than NLMixF and even faster than TriF when the noise level is low, thanks to the simplified joint impulse factor \(F\big (k, \mathcal {T}(k)\big )\) defined in (23). The results also show that our method is faster than Zhou [43].

5 Degree of Similarity and Estimated PSNR Values

In this section, we introduce the notion of degree of similarity (DS) of images corrupted by Gaussian noise and mixed noise. With this notion and Theorem 2, estimations of the PSNR values of denoised images are obtained. For simplicity, in this section, impulse noise is considered as particular mixed noise with \(\sigma =0\).

For Gaussian noise model, if two patches \(v(\mathcal {N}_{i})\) and \(v(\mathcal {N}_j)\) have the same distribution and are independent of each other, i.e. \(u(\mathcal {N}_{i})=u(\mathcal {N}_{j})\), then \(\{v(k)-v(\mathcal {T}(k)): k\in \mathcal {N}_{i}\}\) are independent variables with the same law \(N(0,2\sigma ^2)\) (recall that \(\mathcal {T}\) is the translation mapping of \(\mathcal {N}_i\) onto \(\mathcal {N}_j\)). Therefore

Comparison of the performances of TriF [19] and our filter PWMF for removing mixed noise

where X is the sum of squares of independent random variables with normal law N(0, 1), and has the law \(\chi ^2\) of density function \(f_{\kappa }\) with \(\kappa = d^2\), where

Let \(\alpha \in (0,1)\) represent the risk probability, chosen to be small enough. And let \(T_{\alpha }>0\) be determined by

so that

When \(\Vert v(\mathcal {N}_{i})-v(\mathcal {N}_j)\Vert \le T_{\alpha }\), we consider that \(v(\mathcal {N}_{i})\) and \(v(\mathcal {N}_{j})\) are similar with confidence level \(1-\alpha \). This leads us to the following definition of the degree of similarity.

Definition 3

(Degree of similarity of images corrupted by Gaussian noise) Let \(\alpha \in (0,1)\) and let \(T_{\alpha } >0 \) be defined as above. For \(i\in I,\) let

be the proportion of patches \(v(\mathcal {N}_j)\) similar to \(v(\mathcal {N}_{i})\) in the search window \(\mathcal {N}_{i}(D)\), and let

be their mean over the whole image. We call DS the degree of similarity of the image v with confidence level \(1-\alpha \).

Comparison of the performances of our filter PWMF and PARIGI [13] for removing mixed noise with Lena

For the mixed noise model, if the original patches satisfy \(u(\mathcal {N}_{i})=u(\mathcal {N}_{j})\), then, letting

we have

where \(P^c\) represents the set of non-impulse noisy points contained in \(\mathcal {N}_{i}\), and the right-hand side has the law \(\chi ^2\) with density function \(f_{\kappa }(t)\) with \(\kappa \approx (1-p)^2d^2\).

Similar to Definition 3, let \(T'_{\alpha }>0\) be determined by

Definition 4

(Degree of similarity of images corrupted by mixed noise) Let \(\alpha \in (0,1)\) and let \(T'_{\alpha }>0\) be defined as above. For \(i\in I,\) let

and

We call DS the degree of similarity of the image v with confidence level \(1-\alpha \).

Note that, on average, the proportion of significant pixels in a search window \(\mathcal N_i (D)\) is \((1-p)\), which explains the factor \((1-p)\) in the right-hand side of (32).

For each pixel \(i\in I\), denote by \({\bar{v}}(i)\) the gray value of the restored image at pixel i by NL-means for Gaussian noise or by PWMF for mixed noise. Then by Theorem 2, it holds that

where n represents the number of similar patches in the search window \(\mathcal N_i(D)\).

Definition 5

(Estimated PSNR value) For \(\beta \in (0,1)\), let \(A_{\beta }>0\) be such that

where \({\tilde{f}}(t)\) is defined by (15). Define

where \(n= D^2\cdot \textit{DS}\) is the estimated number of similar patches by means of the degree of similarity DS. We call \(\text{ PSNR }_{e}\) the estimated PSNR value with confidence level \(1-\beta \).

The value of \(\beta \) is chosen to be small enough, representing the risk probability. For mixed noise, we can replace \(\sigma _r\) by \(\sigma _M\) for the calculation of c(x) in \({\tilde{f}}(t)\). For given \(\beta \), we can use the Monte-Carlo simulation to obtain the approximate value of \(A_{\beta }\) (see Remark 1 in Sect. 2.2).

We compute the DS values and estimated PSNR values for different images corrupted by Gaussian noise with \(\sigma =10, 20, 30\), and mixed noise with \(\sigma =20, p=0.2\) or 0.3 shown in Tables 8 and 9. Note that the DS value depends on the choice of \(\alpha \), and the estimated PSNR value depends on the choice of \(\beta \) and \(\alpha \). To have a good approximation to the true PSNR value, we take \(\beta =0.03\); \(\alpha =0.03,0.3,0.5\) for Gaussian noise \(\sigma =10,20,30\) respectively, and \(\alpha =0.04\) for mixed noise. It can be seen that, generally, the larger the DS value, the larger the estimated PSNR value and the true PSNR value, and the estimated PSNR value is close to the true PSNR value.

6 Conclusions

Two convergence theorems, one for the almost sure convergence and the other for the convergence in law, are established to show the rate of convergence of NL-means [4]. Based on the convergence theorems, a new filter called patch-based weighted means filter (PWMF) is proposed to remove mixed noise, leading to an extension of NL-means. The choice of parameters has been carefully discussed. Simulation results show that the new proposed filter is competitive compared to recently developed known algorithms. The notion of degree of similarity is introduced to describe the influence of the proportion of similar patches in the application of NL-means or PWMF. With the notion of degree of similarity and the convergence theorem in law, we obtain a good estimation for PSNR values of denoised images by NL-means or PWMF.

Notes

The code of our method and the images can be downloaded at

https://www.dropbox.com/s/oylg9to8n6029hh/to_j_sci_comput_paper_code.zip.

The images Lena, Peppers256 and Boats are originally downloaded from

Fig. 1 Original \(512\times 512\) images of Lena, Bridge, Peppers512, Boats. Since in the original Peppers images, there are black boundaries of width of one pixel in the left and top which can be considered as impulse noise, to make an impartial comparison, we compute PSNR for Peppers images after removing all the four boundaries, that is with images of size \(510\times 510\) for Peppers512 and \(254\times 254\) for Peppers256

http://decsai.ugr.es/~javier/denoise/test_images/index.htm; the image Peppers512 is from http://perso.telecom-paristech.fr/~delon/Demos/Impulse and the image Bridge is from www.math.cuhk.edu.hk/~rchan/paper/dcx/.

References

Akkoul, S., Ledee, R., Leconge, R., Harba, R.: A new adaptive switching median filter. IEEE Signal Process. Lett. 17(6), 587–590 (2010)

Bilcu, R.C., Vehvilainen, M.: Fast nonlocal means for image denoising. In: Proceedings of SPIE, Digital Photography III 6502, 65020R (2007)

Bovik, A.: Handbook of Image and Video Processing. Academic Press, London (2005)

Buades, A., Coll, B., Morel, J.: A review of image denoising algorithms, with a new one. Multiscale Model. Simul. 4(2), 490–530 (2005)

Castleman, K.: Digital Image Processing. Prentice Hall Press, Upper Saddle River (1996)

Chan, R., Hu, C., Nikolova, M.: An iterative procedure for removing random-valued impulse noise. IEEE Signal Process. Lett. 11(12), 921–924 (2004)

Chang, S.G., Yu, B., Vetterli, M.: Adaptive wavelet thresholding for image denoising and compression. IEEE Trans. Image Process. 9, 1532–1546 (2000)

Chen, T., Wu, H.: Adaptive impulse detection using center-weighted median filters. IEEE Signal Process. Lett. 8(1), 1–3 (2001)

Chow, Y., Teicher, H.: Probability Theory: Independence, Interchangeability, Martingales. Springer, Berlin (2003)

Coifman, R., Donoho, D.: Translation-invariant denoising. Wavel. Stat. 103, 125–150 (1995)

Dabov, K., Foi, A., Katkovnik, V., Egiazarian, K.: Image denoising by sparse 3-D transform-domain collaborative filtering. IEEE Trans. Image Process. 16(8), 2080–2095 (2007)

Delon, J., Desolneux, A.: A patch-based approach for random-valued impulse noise removal. In: 2012 IEEE international conference on acoustics, speech and signal processing (ICASSP), pp. 1093–1096. IEEE (2012)

Delon, J., Desolneux, A.: A patch-based approach for removing impulse or mixed gaussian-impulse noise. SIAM J. Imaging Sci. 6(2), 1140–1174 (2013)

Dong, Y., Chan, R., Xu, S.: A detection statistic for random-valued impulse noise. IEEE Trans. Image Process. 16(4), 1112–1120 (2007)

Donoho, D., Johnstone, J.: Ideal spatial adaptation by wavelet shrinkage. Biometrika 81(3), 425–455 (1994)

Durand, S., Froment, J.: Reconstruction of wavelet coefficients using total variation minimization. SIAM J. Sci. Comput. 24(5), 1754–1767 (2003)

Duval, V., Aujol, J., Gousseau, Y.: A bias-variance approach for the nonlocal means. SIAM J. Imaging Sci. 4, 760–788 (2011)

Elad, M., Aharon, M.: Image denoising via sparse and redundant representations over learned dictionaries. IEEE Trans. Image Process. 15(12), 3736–3745 (2006)

Garnett, R., Huegerich, T., Chui, C., He, W.: A universal noise removal algorithm with an impulse detector. IEEE Trans. Image Process. 14(11), 1747–1754 (2005)

Hu, H., Froment, J.: Nonlocal total variation for image denoising. In: Symposium on Photonics and Optoelectronics (SOPO), pp. 1–4. IEEE (2012)

Hu, H., Li, B., Liu, Q.: Non-local filter for removing a mixture of gaussian and impulse noises. In: Csurka, G., Braz, J. (eds.) VISAPP, vol. 1, pp. 145–150. SciTePress (2012)

Johnstone, I.M., Silverman, B.W.: Wavelet threshold estimators for data with correlated noise. J. R. Stat. Soc. B B 59(2), 319–351 (1997)

Kervrann, C., Boulanger, J.: Local adaptivity to variable smoothness for exemplar-based image regularization and representation. Int. J. Comput. Vis. 79(1), 45–69 (2008)

Li, B., Liu, Q., Xu, J., Luo, X.: A new method for removing mixed noises. Sci. China Inf. Sci. 54, 51–59 (2011)

López-Rubio, E.: Restoration of images corrupted by gaussian and uniform impulsive noise. Pattern Recognit. 43(5), 1835–1846 (2010)

Mahmoudi, M., Sapiro, J.: Fast image and video denoising via nonlocal means of similar neighborhoods. IEEE Signal. Process. Lett. 12(12), 839–842 (2005)

Mairal, J., Sapiro, G., Elad, M.: Learning multiscale sparse representations for image and video restoration. Multiscale Model. Simul. 7, 214–241 (2008)

Muresan, D., Parks, T.: Adaptive principal components and image denoising. In: IEEE International Conference on image processing (2003)

Nikolova, M.: A variational approach to remove outliers and impulse noise. J. Math. Imaging Vis. 20(1), 99–120 (2004)

Pratt, W.: Median filtering. Semiannual Report, Image Processing Institute, University of Southern California, Los Angeles, Tech. Rep pp. 116–123 (1975)

Pruitt, W.: Summability of independent random variables. J. Math. Mech. 15, 769–776 (1966)

Rudin, L.I., Osher, S., Fatemi, E.: Nonlinear total variation based noise removal algorithms. Phys. D Nonlinear Phenom. 60(1–4), 259–268 (1992)

Smith, S., Brady, J.: SUSAN—a new approach to low level image processing. Int. J. Comput. Vis. 23(1), 45–78 (1997)

Tomasi, C., Manduchi, R.: Bilateral filtering for gray and color images. In: Proceedings of the international confernce on computer vision, pp. 839–846. IEEE (1998)

Van De Ville, D., Kocher, M.: Sure-based non-local means. IEEE Signal Process. Lett. 16(11), 973–976 (2009)

Wen, Z.Y.: Sur quelques théorèmes de convergence du processus de naissance avec interaction des voisins. Bull. Soc. Math. Fr. 114, 403–429 (1986)

Xiao, Y., Zeng, T., Yu, J., Ng, M.: Restoration of images corrupted by mixed gaussian-impulse noise via l sub (1-l) sub (0) minimization. Pattern Recognit. 44(8), 1708–1720 (2011)

Xiong, B., Yin, Z.: A universal denoising framework with a new impulse detector and nonlocal means. IEEE Trans. Image Process. 21(4), 1663–1675 (2012)

Xu, H., Xu, J., Wu, F.: On the biased estimation of nonlocal means filter. In: International conference on multimedia computing and systems/international conference on multimedia and expo, pp. 1149–1152 (2008)

Yang, J.X., Wu, H.R.: Mixed gaussian and uniform impulse noise analysis using robust estimation for digital images. In: Proceedings of the 16th international conference on digital signal processing, pp. 468–472 (2009)

Yaroslavsky, L.P.: Digital Picture Processing. Springer, Secaucus (1985)

Yuksel, M.: A hybrid neuro-fuzzy filter for edge preserving restoration of images corrupted by impulse noise. IEEE Trans. Image Process. 15(4), 928–936 (2006)

Zhou, Y., Ye, Z., Xiao, Y.: A restoration algorithm for images contaminated by mixed gaussian plus random-valued impulse noise. J. Vis. Commun. Image Represent. 24(3), 283–294 (2013)

Acknowledgments

The work has been supported by the Fundamental Research Funds for the Central Universities in China (No.N130323016), the Research Funds of Northeastern University at Qinhuangdao (No. XNB201312, China), the Scientific Research Foundation for the Returned Overseas Chinese Scholars, State Education Ministry(48-2), the National Natural Science Foundation of China (Grant Nos. 11171044 and 11401590), and the Science and Technology Research Program of Zhongshan (Grant No. 20123A351, China). The authors are very grateful to the reviewers for their valuable remarks and comments which led to a significant improvement of the manuscript. They are also grateful to Prof. Raymond H. Chan and Dr. Yiqiu Dong for kindly providing the code of ROLD-EPR.

Author information

Authors and Affiliations

Corresponding author

Appendix

Appendix

In this appendix we will prove Theorems 1 and 2.

1.1 Convergence Theorems for Random Weighted Means

We first show a Marcinkiewicz law of large numbers (Theorem 3) and a convergence theorem in distribution for random weighted means (Theorem 4) for l-dependent random variables, which we will use to prove Theorems 1 and 2.

Theorem 3

Let \(\{(a_k, v_k)\}\) be a sequence of l-dependent identically distributed random variables, with \(\mathbb {E}|a_1|^p<\infty \) and \( \mathbb {E}|a_1v_1|^p<\infty \) for some \( p\in [1,2),\) and \(\mathbb {E} a_1\ne 0\). Then

We need the following lemma to prove it.

Lemma 2

[24] If \(\{X_n\}\) are l-dependent and identically distributed random variables with \( \mathbb {E}X_1=0\) and \(\mathbb {E}|X_1|^p<\infty \) for some \(p\in [1,2)\), then

This lemma is a direct consequence of Marcinkiewicz law of large numbers for independent random variables (see e.g. [9], p. 118), since for all \(k\in \{1,\ldots ,l+1\}, \{X_{i(l+1)+k}:i\ge 0\}\) is a sequence of i.i.d. random variables, and for each positive integer n, we have

where \(m,k_0\) are positive integers determined by \(n=m(l+1)+k_0, 0\le k_0 \le l\).

Proof of Theorem 3

Notice that

where

Since \(\mathbb {E}|a_1|\le (\mathbb {E}|a_1|^p)^{1/p}<\infty \), by Lemma 2 with \(p=1\), we have

Since \(\mathbb {E}a_1z_1=0\), and \(\mathbb {E}|a_1z_1|^p<\infty \), again by Lemma 2, we get

Thus the conclusion follows. \(\square \)

Theorem 4

Let \(\{(a_k,v_k)\}\) be a stationary sequence of l-dependent and identically distributed random variables with \(\mathbb {E}a_1\ne 0, \mathbb {E}a_1^2<\infty ,\) and \(\mathbb {E}(a_1v_1)^2<\infty \). Then

that is,

where \(\varPhi (z)\) is the cumulative distribution function of the standard normal distribution,

and

with \(\lambda =\sum _{k=1}^l\mathbb {E}a_1z_1a_{1+k}z_{1+k}, \; z_k=v_k \mathbb {E}a_1-\mathbb {E}a_1 v_1 \).

We need the following lemma to prove the theorem.

Lemma 3

[36] Let \(\{X_n\}\) be a stationary sequence of l-dependent and identically distributed random variables with \(\mathbb {E}X_1=0\) and \(\mathbb {E}X_1^2<\infty \). Set \(S_n=X_1+ \cdots +X_n (n\ge 1)\),

Then var\((S_n)=c_1n-c_2\) for \(n > l\), and as \(n\rightarrow \infty \),

Proof of Theorem 4

As in the proof of Theorem 3, we have

where

Notice that the l-dependence of \(\{(a_k,v_k)\}\) and the stationarity imply those of \(\{(a_k,z_k)\}\). Therefore by Lemma 3, we get

where

Since \(\mathbb {E}|a_1|\le (\mathbb {E}|a_1|^p)^{1/p}\), by Lemma 2 with \(p=1\), we obtain

Thus the conclusion follows with \(c=c_0 /(\mathbb {E}a_1)^2\). \(\square \)

1.2 Proofs of Theorems 1 and 2

We now come to the proofs of Theorems 1 and 2, using Theorems 3 and 4.

For Theorem 1, we need to prove that for any \(\epsilon \in (0,\frac{1}{2}]\), as \(n\rightarrow \infty \),

where

We will apply Theorem 3 to prove this. Note that the sequence \(\{{w^{0}}(i,j_k),v(j_k)\}\) \({(k=1,2,\ldots ,n)}\) is usually not l-dependent, since the central random variable \(v(\mathcal {N}^{0}_{i})\) is contained in all the terms. To make use of Theorem 3, we first take a fixed vector to replace the central random variable.

Proof of Theorem 1

Fix \(x\in \mathbb {R}^{|\mathcal {N}_i^0|}\). Let

Then \(a_{k}\) and \(v(j_k)\) are independent since \(j_k\not \in \mathcal {N}^{0}_{j_k}\), so that

By Lemma 1, the sequence \( \{v(\mathcal {N}_{j_k})\} \) is l-dependent for \( l=(2d-1)^2 -1\); thus the sequence \(\{\big (a_{k},v(j_k)\big )\}\) is also l-dependent. Since \(v=u+\eta \), with the range of u being bounded and \(\eta \) being Gaussian, we have \(\mathbb {E}|v(j_k)|^p<\infty \) for \(p\in [1,2)\). (In fact, it holds for all \(p\ge 1\).) Hence \(\mathbb {E}|a_{k}v(j_k)|^p<\infty \), as \(a_k\le 1\).

Applying Theorem 3, we have, for any fixed \(x=v(\mathcal {N}^0_i)\in \mathbb {R}^{|\mathcal {N}_i^0|}\) and any positive integer \(k_0\),

Let \(k_0 > l\), so that \(v(\mathcal {N}_i^0)\) is independent of \(v(\mathcal {N}^{0}_{j_k})\) for all \(k\ge k_0\). By Fubini’s theorem, we can replace \(w^{0}(x,j_k)\) in (36) by

That is,

To prove the theorem, we need to estimate the difference between the left-hand sides of (8) and (37). Let

Then as before, fixing \(x\in \mathbb {R}^{|\mathcal {N}_i^0|}\), applying Theorem 3 with \(p=1\) and Fubini’s theorem, and replacing x by \(v(\mathcal {N}_i^0)\), we obtain

Using this and the fact that

we see that

Therefore, (37) implies that

As (39) holds for any \(p \in [1,2)\), we see that (8) holds for all \(\epsilon \in (0,\frac{1}{2}]\). \(\square \)

We will prove Theorem 2, which demonstrates that as \( n\rightarrow \infty \),

where

and \(\mathcal {L}\) is a mixture of centered Gaussian laws in the sense that it has a density of the form (15).

Proof of Theorem 2

The procedure of the proof is similar to that of the proof of Theorem 1. Fix \(x\in \mathbb {R}^{|\mathcal {N}_i^0|}\), and set

Then \(a_{k}\) and \(v(j_k)\) are independent, \( {\mathbb {E}a_{k}v(j_k)}/{\mathbb {E}a_{k}}=\mathbb {E}v(j_k)=u(i)\), and \(\{(a_{k},v(j_k))\}\) is a sequence of l-dependent and identically distributed random vectors with \(l= (2d-1)^2 -1\), and \( \mathbb {E}|a_{k}v(j_k)|^2\le \mathbb {E}|v(j_k)|^2<\infty \). Hence applying Theorem 4, we get, for any fixed x and any positive integer \(k_0\),

where \(c_x>0\) will be calculated by the end of the proof. This means that for any \(t\in \mathbb {R}\),

Let \(k_0>l\) be the positive integer such that \(v(\mathcal {N}_i^0)\) is independent of \(v(\mathcal {N}^{0}_{j_k})\) for all \(k\ge k_0\). Then by Fubini’s theorem and Lebesgue’s dominated convergence theorem, we have

where

with \(\mu \) being the law of \(v(\mathcal {N}_i^0)\). In other words,

where \(\mathcal {L}\) is the law with density f. This together with (38) prove the equation (15) of Theorem 2.

We now turn to the calculation of \(c_x\). Let \(v_k=v(j_k)\) and \( z_k=v_k \mathbb {E}a_1-\mathbb {E}(a_1 v_1)\). Because of the independence of \(a_1\) and \(v_1\), we get \(z_k=(v_k-\mathbb {E} v_1)\mathbb {E}a_1\). Then, it follows that

and

Note that if \((a_1,a_k)\) is independent of \((v_1,v_k)\), it holds that

by the independence of \(v_1\) and \(v_k\). If \(v_1\) is not contained in \(v(\mathcal {N}^{0}_{j_k})\), then \((a_1,a_k)\) is independent of \((v_1,v_k)\). Notice that according to the order of \(I_i\) defined in Sect. 2.2, when \(k>d^2\), \(v_1\) is not contained in \(v(\mathcal {N}^{0}_{j_k})\), so that (40) holds; therefore by Theorem 4,

We finally give an approximation of \(c_x\). Recall that \(a_{k}=e^{-\Vert x-v(\mathcal {N}^{0}_{j_k})\Vert ^2/(2\sigma _r^2)}\), and \(v_k=v(j_k)\). Let \(\mathcal {T}(j)=j-j_1+j_k\) be the translation mapping \(j_1\) to \(j_k\) (thus mapping \(\mathcal {N}^{0}_{j_1}\) onto \(\mathcal {N}^{0}_{j_k}\)).

If \(v( j_1)\) is not contained in \(v(\mathcal {N}^{0}_{j_k})\), we have already seen that \(\mathbb {E} (a_1z_1a_kz_k)= 0\). If \(v(j_1)\) is contained in \(v(\mathcal {N}^{0}_{j_k})\), to make \((a_1,a_k)\) independent of \((v_1,v_k)\), we can remove \(v(j_1)\) from \(v(\mathcal {N}^{0}_{j_k})\) and the corresponding term \(v(\mathcal {T}^{-1}(j_1))\) from \(v(\mathcal {N}^{0}_{j_1})\); remove \(v(j_k)\) from \(v(\mathcal {N}^{0}_{j_1})\) and the corresponding term \(v(\mathcal {T}(j_k))\)from \(v(\mathcal {N}^{0}_{j_k})\). The obtained values of \(a_1,a_k\) are very close to the initial values of \(a_1,a_k\) respectively. Hence, we can consider that \(\mathbb {E} (a_1z_1a_kz_k)\approx 0\). Therefore

where \(v=v(\mathcal {N}^{0}_{j_k})\) and \(m=|\mathcal {N}_{j_k}^0|\). Recall that \(\mu (dv)\) is the law of \(v(\mathcal {N}^{0}_{i})\), so it is also the law of \(v(\mathcal {N}^{0}_{j_k})\). Let

then \(v\sim N(\nu , \sigma ^2Id_m)\), where \(Id_m\) denotes the identity matrix of size \(m\times m\). Since

we get

This ends the proof of Theorem 2. \(\square \)

Rights and permissions

About this article

Cite this article

Hu, H., Li, B. & Liu, Q. Removing Mixture of Gaussian and Impulse Noise by Patch-Based Weighted Means. J Sci Comput 67, 103–129 (2016). https://doi.org/10.1007/s10915-015-0073-9

Received:

Revised:

Accepted:

Published:

Issue Date:

DOI: https://doi.org/10.1007/s10915-015-0073-9