Abstract

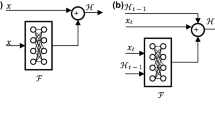

A common way to obtain the computational benefits of depth in recurrent neural networks (RNNs) is to stack multiple recurrent layers hierarchically. These gains, however, come at the cost of difficult optimization, because hierarchical RNNs (HRNNs) are deep both hierarchically and temporally. Prior work has highlighted, separately, the importance of shortcut connections for learning deep hierarchical representations and for learning deep temporal dependencies, but no significant effort has been made to unify these findings into a single framework for learning deep HRNNs. We propose the residual recurrent highway network (R2HN), which places highways within the temporal structure of the network for unimpeded information propagation, thereby alleviating the vanishing gradient problem. Learning of the hierarchical structure is posed as a residual learning problem to prevent performance degradation. The proposed R2HN contains significantly fewer data-dependent parameters than related methods. Experiments on language modeling (LM) tasks demonstrate that the proposed architecture yields effective models. On the Penn TreeBank LM task, the model achieved a perplexity of 60.3 and outperformed the baseline and related models that we tested.
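The abstract does not give the R2HN equations, so the following is only a minimal sketch of the two ideas it names, not the authors' formulation. It assumes a highway-style recurrent step with a coupled transform/carry gate along the time axis, and an identity skip connection that adds each layer's state to its input along the hierarchy. All function names, parameter names (`W_h`, `R_h`, `W_t`, `R_t`, `b_h`, `b_t`), and the negative gate-bias initialization are hypothetical choices made for illustration.

```python
import numpy as np


def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))


def init_layer(rng, dim):
    """Create parameters for one layer (hypothetical names and scales)."""
    scale = 1.0 / np.sqrt(dim)
    p = {k: rng.normal(0.0, scale, (dim, dim))
         for k in ("W_h", "R_h", "W_t", "R_t")}
    p["b_h"] = np.zeros(dim)
    # Assumption: a negative gate bias so the layer initially favors carrying
    # the previous state forward in time.
    p["b_t"] = np.full(dim, -2.0)
    return p


def highway_step(x, h_prev, p):
    """One time step with a temporal highway: gate t mixes a candidate
    state with the previous state (carry gate coupled as 1 - t)."""
    cand = np.tanh(x @ p["W_h"] + h_prev @ p["R_h"] + p["b_h"])
    t = sigmoid(x @ p["W_t"] + h_prev @ p["R_t"] + p["b_t"])
    return t * cand + (1.0 - t) * h_prev


def forward(xs, layers):
    """Run a stack of layers over a sequence xs of shape (T, dim).

    Along the hierarchy, each layer's state is added to its input
    (identity skip), so a layer only has to learn a residual.
    """
    T, dim = xs.shape
    states = [np.zeros(dim) for _ in layers]
    outputs = []
    for step in range(T):
        inp = xs[step]
        for i, p in enumerate(layers):
            states[i] = highway_step(inp, states[i], p)
            inp = inp + states[i]  # residual connection between layers
        outputs.append(inp)
    return np.stack(outputs)


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    layers = [init_layer(rng, 8) for _ in range(4)]
    xs = rng.normal(size=(10, 8))
    print(forward(xs, layers).shape)
```

In this sketch, when the transform gate is nearly closed each layer's state stays close to its previous value, and the stack reduces to approximately the identity over its input. That illustrates why both kinds of shortcut can ease optimization: information and gradients have a direct path through time and through depth.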

Cite this article

Zia, T., Razzaq, S. Residual Recurrent Highway Networks for Learning Deep Sequence Prediction Models. J Grid Computing 18, 169–176 (2020). https://doi.org/10.1007/s10723-018-9444-4

DOI: https://doi.org/10.1007/s10723-018-9444-4