Abstract

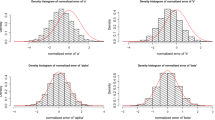

Regularly varying stochastic processes model extreme dependence between process values at different locations and/or time points. For such stationary processes we propose a two-step parameter estimation of the extremogram, when some part of the domain of interest is fixed and another increasing. We provide conditions for consistency and asymptotic normality of the empirical extremogram centred by a pre-asymptotic version for such observation schemes. For max-stable processes with Fréchet margins we provide conditions, such that the empirical extremogram (or a bias-corrected version) centred by its true version is asymptotically normal. In a second step, for a parametric extremogram model, we fit the parameters by generalised least squares estimation and prove consistency and asymptotic normality of the estimates. We propose subsampling procedures to obtain asymptotically correct confidence intervals. Finally, we apply our results to a variety of Brown-Resnick processes. A simulation study shows that the procedure works well also for moderate sample sizes.


References

Asadi, P., Davison, A.C., Engelke, S.: Extremes on river networks. Ann. Appl. Stat. 9(4), 2023–2050 (2015)

Beirlant, J., Goegebeur, Y., Segers, J., Teugels, J.: Statistics of extremes, theory and applications. Wiley, Chichester (2004)

Blanchet, J., Davison, A.: Spatial modeling of extreme snow depth. Ann. Appl. Stat. 5(3), 1699–1724 (2011)

Bolthausen, E.: On the central limit theorem for stationary mixing random fields. Ann. Probab. 10(4), 1047–1050 (1982)

Brown, B., Resnick, S.: Extreme values of independent stochastic processes. J. Appl. Probab. 14(4), 732–739 (1977)

Buhl, S., Klüppelberg, C.: Anisotropic Brown-Resnick space-time processes: estimation and model assessment. Extremes 19, 627–660 (2016). https://doi.org/10.1007/s10687-016-0257-1

Buhl, S., Klüppelberg, C.: Limit theory for the empirical extremogram of random fields. Stoch. Process. Appl. 128(6), 2060–2082 (2018)

Buhl, S., Davis, R., Klüppelberg, C., Steinkohl, C.: Semiparametric estimation for isotropic max-stable space-time processes. Bernoulli, in press, arXiv:1609.04967v3[stat.ME] (2018)

Cho, Y., Davis, R., Ghosh, S.: Asymptotic properties of the spatial empirical extremogram. Scand. J. Stat. 43(3), 757–773 (2016)

Davis, R., Mikosch, T.: The extremogram: a correlogram for extreme events. Bernoulli 15(4), 977–1009 (2009)

Davis, R., Klüppelberg, C., Steinkohl, C.: Max-stable processes for extremes of processes observed in space and time. J. Korean Stat. Soc. 42(3), 399–414 (2013a)

Davis, R., Klüppelberg, C., Steinkohl, C.: Statistical inference for max-stable processes in space and time. JRSS B 75(5), 791–819 (2013b)

Davis, R., Mikosch, T., Zhao, Y.: Measures of serial extremal dependence and their estimation. Stoch. Process. Appl. 123(7), 2575–2602 (2013c)

Davison, A. C., Padoan, S. A., Ribatet, M.: Statistical modeling of spatial extremes. Stat. Sci. 27(2), 161–186 (2012c)

de Fondeville, R., Davison, A.: High-dimensional peaks-over-threshold inference for the Brown-Resnick process. Biometrika 105(3), 575–592 (2018)

de Haan, L.: A spectral representation for max-stable processes. Ann. Probab. 12(4), 1194–1204 (1984)

de Haan, L., Ferreira, A.: Extreme value theory: An introduction. Springer Series in Operations Research and Financial Engineering, New York (2006)

Dombry, C., Eyi-Minko, F.: Strong mixing properties of max-infinitely divisible random fields. Stoch. Process. Appl. 122(11), 3790–3811 (2012)

Dombry, C., Engelke, S., Oesting, M.: Exact simulation of max-stable processes. Biometrika 103, 303–317 (2016)

Dombry, C., Genton, M. G., Huser, R., Ribatet, M.: Full likelihood inference for max-stable data. arXiv:1703.08665 (2018)

Drees, H.: Bootstrapping empirical processes of cluster functionals with application to extremograms. arXiv:1511.00420v1[math.ST] (2015)

Einmahl, J., Kiriliouk, A., Segers, J.: A continuous updating weighted least squares estimator of tail dependence in high dimensions. Extremes 21(2), 205–233 (2018)

Embrechts, P., Koch, E., Robert, C.: Space-time max-stable models with spectral separability. Adv. Appl. Probab. 48(A), 77–97 (2016)

Engelke, S., Malinowski, A., Kabluchko, Z., Schlather, M.: Estimation of Hüsler-Reiss distributions and Brown-Resnick processes. JRSS B 77(1), 239–265 (2015)

Fasen, V., Klüppelberg, C., Schlather, M.: High-level dependence in time series models. Extremes 13(1), 1–33 (2010)

Giné, E., Hahn, M. G., Vatan, P.: Max-infinitely divisible and max-stable sample continuous processes. Probab. Theory Rel. Fields 87, 139–165 (1990)

Hult, H., Lindskog, F.: Extremal behavior of regularly varying stochastic processes. Stoch. Process. Appl. 115, 249–274 (2005)

Hult, H., Lindskog, F.: Regular variation for measures on metric spaces. Publications de l’Institut Mathématique (Beograd) 80, 121–140 (2006)

Huser, R.: Statistical Modeling and Inference for Spatio-Temporal Extremes. Ph.D. Thesis, École Polytechnique Fédérale de Lausanne, Lausanne (2013)

Huser, R., Davison, A.: Composite likelihood estimation for the Brown-Resnick process. Biometrika 100(2), 511–518 (2013)

Huser, R., Davison, A.: Space-time modelling of extreme events. JRSS B 76(2), 439–461 (2014)

Huser, R., Genton, M.G.: Non-stationary dependence structures for spatial extremes. Journal of Agricultural Biological and Environmental Statistics 21(3), 470–491 (2016)

Ibragimov, I., Linnik, Y.: Independent and Stationary Sequences of Random Variables. Wolters-Noordhoff, Groningen (1971)

Kabluchko, Z., Schlather, M., de Haan, L.: Stationary max-stable fields associated to negative definite functions. Ann. Probab. 37(5), 2042–2065 (2009)

Lahiri, S. N., Lee, Y., Cressie, N.: On asymptotic distribution and asymptotic efficiency of least squares estimators of spatial variogram parameters. J. Stat. Plan. Inf. 103(1), 65–85 (2002)

Li, B., Genton, M., Sherman, M.: On the asymptotic joint distribution of sample space-time covariance estimators. Bernoulli 14(1), 208–248 (2008)

Opitz, T.: Extremal t processes: Elliptical domain of attraction and a spectral representation. J. Multivar. Anal. 122, 409–413 (2013)

Padoan, S., Ribatet, M., Sisson, S.: Likelihood-based inference for max-stable processes. JASA 105(489), 263–277 (2009)

Politis, D. N., Romano, J. P., Wolf, M.: Subsampling. Springer, New York (1999)

Resnick, S.: Point processes, regular variation and weak convergence. Adv. Appl. Probab. 18(1), 66–138 (1986)

Resnick, S.: Heavy-tail phenomena, probabilistic and statistical modeling. Springer, New York (2007)

Schlather, M.: RandomFields, contributed package on random field simulation for R. http://cran.r-project.org/web/packages/RandomFields/

Steinkohl, C.: Statistical modelling of extremes in space and time using max-stable processes. Ph.D. Thesis. Technische Universität München, München (2013)

Thibaud, E., Aalto, J., Cooley, D. S., Davison, A. C., Heikkinen, J.: Bayesian inference for the Brown-Resnick process, with an application to extreme low temperatures. Ann. Appl. Stat. 10(4), 2303–2324 (2016)

Wadsworth, J., Tawn, J.: Efficient inference for spatial extreme value processes associated to log-Gaussian random functions. Biometrika 101(1), 1–15 (2014)

Acknowledgments

Sven Buhl acknowledges support by the Deutsche Forschungsgemeinschaft (DFG) through the TUM International Graduate School of Science and Engineering (IGSSE).


Appendix A


A.1 α-mixing with respect to the increasing dimensions

We need the concept of α-mixing for the process \(\{X(\boldsymbol {s}): \boldsymbol {s}\in \mathbb {R}^{d}\}\) with respect to \(\mathbb {R}^{w}\). In a space-time setting with a fixed spatial domain and an increasing temporal domain, this is called temporal α-mixing.

Definition 1 (α-mixing and α-mixing coefficients)

Consider a strictly stationary process \(\left \{X(\boldsymbol {s}): \boldsymbol {s}\in \mathbb {R}^{d}\right \}\) and let ∥⋅∥ be some norm on \(\mathbb {R}^{d}\). For \({\Lambda }_{1}, {\Lambda }_{2} \subset \mathbb {Z}^{w}\) define

Further, for i = 1, 2 denote by \(\sigma _{\mathscr{F}\times {\Lambda }_{i}}= \sigma \left \{X(\boldsymbol {s}): \boldsymbol {s}\in \mathscr{F} \times {\Lambda }_{i}\right \}\) the σ-algebra generated by \(\{X(\boldsymbol {s}): \ \boldsymbol {s}\in \mathscr{F} \times {\Lambda }_{i}\}\).

(i) We define the α-mixing coefficients with respect to \(\mathbb {R}^{w}\) for \(k_{1},k_{2} \in \mathbb {N}\) and z ≥ 0 as

$$\alpha_{k_{1},k_{2}}(z) := \sup\left\{\left|\mathbb{P}(A_{1}\cap A_{2}) - \mathbb{P}(A_{1})\mathbb{P}(A_{2})\right|: \ A_{i} \in \sigma_{\mathscr{F} \times{\Lambda}_{i}}, |{\Lambda}_{i}|\leq k_{i}, d({\Lambda}_{1},{\Lambda}_{2}) \geq z\right\}. \tag{A.1}$$

(ii) We call \(\{X(\boldsymbol {s}): \boldsymbol {s}\in \mathbb {R}^{d}\}\) α-mixing with respect to \(\mathbb {R}^{w}\), if \(\alpha _{k_{1},k_{2}}(z) \to 0\) as z → ∞ for all \(k_{1},k_{2}\in \mathbb {N}\).

We have to control the dependence between vector processes \(\{\boldsymbol Y(\boldsymbol s)=X_{\mathscr{B}(\boldsymbol s,\gamma )}: \boldsymbol s \in {\Lambda }_{1}^{\prime }\}\) and \(\{\boldsymbol Y(\boldsymbol s)=X_{\mathscr{B}(\boldsymbol s,\gamma )}: \boldsymbol s \in {\Lambda }_{2}^{\prime }\}\) for subsets \({\Lambda }_{i}^{\prime } \subset \mathbb {Z}^{w}\) with cardinalities \(|{\Lambda }_{1}^{\prime }| \leq k_{1}\) and \(|{\Lambda } _{2}^{\prime }| \leq k_{2}\). This entails dealing with unions of balls \({\Lambda }_{i}=\cup _{\boldsymbol s \in \mathscr{F}\times {\Lambda }_{i}^{\prime }} \mathscr{B}(\boldsymbol s,\gamma )\). Since γ > 0 is some predetermined finite constant independent of n, we keep notation simple by redefining the α-mixing coefficients corresponding to the vector processes for \(k_{1},k_{2} \in \mathbb {N}\) and z ≥ 0 as

A.2 Proof of Theorem 1

The proof of Theorem 1 is divided into two parts. In the first part we prove a LLN and a CLT in Lemmas A.1 and A.2 for the estimators \(\widehat {\mu }_{\mathscr{B}(\boldsymbol 0,\gamma ),m_{n}}\) in Eq. 3.4. In the second part of the proof we derive the CLT for the empirical extremogram \(\widehat \rho _{AB,m_{n}}\) in Eq. 3.2, and compute the asymptotic covariance matrix Π. The proof generalizes corresponding proofs in Buhl and Klüppelberg (2018) (where the observation area increases in all dimensions) in a non-trivial way. We recall from Assumption 2 the separation of every point and every lag into its components corresponding to the fixed domain, indicated by the subindex \(\mathscr{F}\), and the remaining components, indicated by \(\mathscr{I}\). In particular, we decompose \(\boldsymbol h^{(i)}=(\boldsymbol h_{\mathscr{F}}^{(i)},\boldsymbol h_{\mathscr{I}}^{(i)})\in \mathscr{H}\).

The separation of the observation space into a fixed and an increasing domain has to be built into the proofs given in Buhl and Klüppelberg (2018), which is highly non-trivial even in the regular grid situation. We give detailed references to those proofs wherever possible to support the understanding. Where an argument follows a previous proof line by line, we omit the details.

Part I: LLN and CLT for \(\widehat {\mu }_{\mathscr{B}(\boldsymbol 0,\gamma ),m_{n}}\)

As in Buhl and Klüppelberg (2018), Section 5, we make use of a large/small block argument. For simplicity we assume that \(n^{w}/{m_{n}^{d}}\) is an integer and subdivide \(\mathscr{D}_{n}\) into \(n^{w}/{m_{n}^{d}}\) non-overlapping d-dimensional large blocks \(\mathscr{F} \times \mathscr{B}_{i}\) for \(i = 1,\ldots ,n^{w}/{m_{n}^{d}}\), where the \(\mathscr{B}_{i}\) are w-dimensional cubes with side lengths \(m_{n}^{d/w}\). From those large blocks we then cut off smaller blocks, which consist of the first \(r_{n}\) elements in each of the w increasing dimensions. The large blocks are then separated (by these small blocks) by a distance of at least \(r_{n}\) in all w increasing dimensions and shown to be asymptotically independent.
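The block construction can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the function name `trimmed_blocks`, the 0-based indexing, and the parameter `side` (standing in for \(m_{n}^{d/w}\), assumed to be an integer dividing n) are not taken from the paper, and the fixed domain \(\mathscr{F}\) is left out because it is carried along unchanged.

```python
from itertools import product

def trimmed_blocks(n, side, r, w):
    """Index ranges of the large blocks after cutting off the first r indices per dimension.

    n    : grid length in each of the w increasing dimensions (indices 0..n-1, an assumption)
    side : side length of a large block (stands in for m_n^{d/w}; assumed to divide n)
    r    : width of the small separating block (stands in for r_n), 0 <= r < side
    """
    if n % side != 0 or not 0 <= r < side:
        raise ValueError("need side to divide n and 0 <= r < side")
    blocks = []
    # One large block per origin on the coarse grid {0, side, 2*side, ...}^w.
    for origin in product(range(0, n, side), repeat=w):
        # Drop the first r indices in every increasing dimension.
        blocks.append(tuple(range(o + r, o + side) for o in origin))
    return blocks

blocks = trimmed_blocks(n=12, side=4, r=1, w=2)
print(len(blocks))   # number of large blocks, (n / side) ** w
print(blocks[0])     # index ranges of the first trimmed block
```

With these toy values, neighbouring trimmed blocks are separated by more than r indices along the dimension in which they differ, which is the separation the mixing argument relies on.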

We divide the lags in \(L_{n}\) into different sets according to the large and small blocks. Recall the notation of Eq. 3.5 and the surrounding text. Observe that a lag \((\boldsymbol \ell _{\mathscr{F}},\boldsymbol \ell _{\mathscr{I}})\) with \(\boldsymbol \ell _{\mathscr{I}}=(\ell _{\mathscr{I}}^{(1)},\ldots ,\ell _{\mathscr{I}}^{(w)})\) appears in \(L_{\mathscr{F}}^{(i,i)} \times L_{n}\) exactly \(\text {N}_{\mathscr{F}}^{(i,i)}(\boldsymbol \ell _{\mathscr{F}}){\prod }_{j = 1}^{w}(n-|\ell _{\mathscr{I}}^{(j)}|)\) times, where \(\text {N}_{\mathscr{F}}^{(i,i)}(\boldsymbol \ell _{\mathscr{F}})\) is defined in Eq. 3.6. This term will replace \({\prod }_{j = 1}^{d} (n-|h_{j}|)\) in the proofs of Buhl and Klüppelberg (2018).
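The product over the increasing dimensions in this count can be checked by brute force. The sketch below is a hedged illustration: it covers only the factor \({\prod }_{j = 1}^{w}(n-|\ell _{\mathscr{I}}^{(j)}|)\) on the grid \(\{1,\ldots ,n\}^{w}\), ignores the fixed-domain factor from Eq. 3.6, and the function names are hypothetical.

```python
from itertools import product

def count_lag_occurrences(n, lag):
    """Brute-force count of points s in {1..n}^w such that s + lag is also in {1..n}^w."""
    w = len(lag)
    count = 0
    for s in product(range(1, n + 1), repeat=w):
        if all(1 <= s[j] + lag[j] <= n for j in range(w)):
            count += 1
    return count

def closed_form(n, lag):
    """Product of (n - |l_j|) over the increasing dimensions, floored at zero."""
    result = 1
    for l in lag:
        result *= max(n - abs(l), 0)
    return result

n, lag = 5, (2, -1)
print(count_lag_occurrences(n, lag), closed_form(n, lag))
```

Both functions agree for every lag on a small grid, which is the counting fact used when the lag sums are normalised by \(n^{w}\).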

Lemma A.1

Let \(\{X(\boldsymbol s): \boldsymbol s \in \mathbb {R}^{d}\}\) be a strictly stationary regularly varying process observed on \(\mathscr{D}_{n}=\mathscr{F} \times \mathscr{I}_{n}\) as in Eq. 2.4. For i ∈ {1,…,p}, let \(\boldsymbol h^{(i)}=(\boldsymbol h_{\mathscr{F}}^{(i)},\boldsymbol h_{\mathscr{I}}^{(i)}) \in \mathscr{H}\subseteq \mathscr{B}(\boldsymbol 0, \gamma )\) for some γ > 0 be a fixed lag vector and use as before the convention that \((\boldsymbol h_{\mathscr{F}}^{(p + 1)},\boldsymbol h_{\mathscr{I}}^{(p + 1)})=\boldsymbol 0\). Suppose that the following mixing conditions are satisfied.

(1) \(\{X(\boldsymbol {s}): \boldsymbol {s}\in \mathbb {R}^{d}\}\) is α-mixing with respect to \(\mathbb {R}^{w}\) with mixing coefficients \(\alpha _{k_{1},k_{2}}(\cdot )\) defined in Eq. A.1.

(2) There exist sequences \(m := m_{n}, r := r_{n} \to \infty \) with \({m_{n}^{d}}/n^{w} \to 0\) and \({r_{n}^{w}}/{m_{n}^{d}} \to 0\) as n → ∞ such that (M3) and (M4i) hold.

Then for every fixed i = 1,…,p + 1, as n → ∞,

with \(\sigma _{\mathscr{B}(\boldsymbol 0,\gamma )}^{2}(D_{i})\) specified in Eq. 3.8. If \(\mu _{\mathscr{B}(\boldsymbol 0,\gamma )}(D_{i})= 0\), then Eq. A.4 is interpreted as \(\mathbb {V}ar\left [\widehat {\mu }_{\mathscr{B}(\boldsymbol 0,\gamma ),m_{n}}(D_{i})\right ] = o({m_{n}^{d}}/n^{w})\). In particular,

Proof of Lemma A.1.

We suppress the superscript (i) of \(\boldsymbol h^{(i)}\) (respectively \(\boldsymbol h_{\mathscr{F}}^{(i)}\)) for notational ease. Strict stationarity and relation (2.5) imply that

As to the asymptotic variance, we start from Eq. 3.7, where it has been calculated that

By Eq. 2.5 and since \(\mathbb {P}(\boldsymbol Y(\boldsymbol 0)/a_{m} \in D_{i}) \to 0\),

Counting the lags as explained before this proof, for fixed \(k \in \mathbb {N}\) we have by stationarity the analogue of Eq. 5.6 in Buhl and Klüppelberg (2018)

Concerning \(A_{21}\) we have

With Eqs. 2.5 and 2.6 we obtain by dominated convergence,

As to \(A_{22}\), observe that for all n ≥ 0 we have \(\prod \limits _{j = 1}^{w} (1-\frac {|\ell _{\mathscr{I}}^{(j)}|}{n})\leq 1\) for \(\boldsymbol \ell _{\mathscr{I}}\in L_{n}\). Furthermore, since \(D_{i}\) is bounded away from \(\boldsymbol 0\), there exists \(\epsilon > 0\) such that \(D_{i} \subset \{\boldsymbol x \in \overline {\mathbb {R}}^{|\mathscr{B}(\boldsymbol 0,\gamma )|}: \| \boldsymbol x \| > \epsilon \}\). Hence, we obtain

which differs from the corresponding expression in Buhl and Klüppelberg (2018) only by finite factors. Thus by an obvious modification of the arguments in that paper it follows that, using \({r_{n}^{w}}/{m_{n}^{d}} \to 0\) and condition (M3),

Using the definition (A.2) of α-mixing for \(A_1=\{\boldsymbol Y(\boldsymbol 0)/a_m \in D_i\}\) and \(A_2=\{\boldsymbol Y(\boldsymbol \ell _{\mathscr{F}}, \boldsymbol \ell _{\mathscr{I}})/a_m \in D_i\}\), we obtain by (M4i),

Summarising these computations, we conclude from Eqs. A.7 and A.8 that for n → ∞,

and, therefore, Eq. A.6 implies Eq. A.4. Since \({m_n^d/n^w\to 0}\) as n → ∞, Eqs. A.3 and A.4 imply Eq. A.5. □

Lemma A.2

Let \(\{X(\boldsymbol s): \boldsymbol s \in \mathbb {R}^d\}\) be a strictly stationary regularly varying process observed on \(\mathscr{D}_n=\mathscr{F} \times \mathscr{I}_n\). For i ∈ {1,…,p}, let \(\boldsymbol h^{(i)}=(\boldsymbol h_{\mathscr{F}}^{(i)},\boldsymbol h_{\mathscr{I}}^{(i)}) \in \mathscr{H}\subseteq \mathscr{B}(\boldsymbol 0, \gamma )\) for some γ > 0 be a fixed lag vector and take as before the convention that \((\boldsymbol h_{\mathscr{F}}^{(p + 1)},\boldsymbol h_{\mathscr{I}}^{(p + 1)})=\boldsymbol 0\). Let the assumptions of Theorem 1 hold. Then for every fixed i = 1,…,p + 1,

with \(\widehat {\mu }_{\mathscr{B}(\boldsymbol 0,\gamma ),m_n}(D_i)\) as in Eq. ??, \(\mu _{\mathscr{B}(\boldsymbol 0,\gamma ),m_n}(D_i):=m_n^d \mathbb {P}(\boldsymbol Y(\boldsymbol 0)/a_m \in D_i)\) and \(\sigma _{\mathscr{B}(\boldsymbol 0,\gamma )}^2(D_i)\) given in Eq. ??.

Proof

Again we suppress the superscript (i) of \(\boldsymbol h^{(i)}\) and \(\boldsymbol h_{\mathscr{F}}^{(i)}\). As for the proof of consistency above, we generalise the proof of the CLT in Buhl and Klüppelberg (2018) (based on Bolthausen (1982)) to the new setting. We consider the process

observed on the w-dimensional regular grid \(\mathscr{I}_n\). In analogy to Eq. 5.11 in Buhl and Klüppelberg (2018) define

and note that by stationarity,

The boundary condition required in Eq. (1) in Bolthausen (1982) is satisfied for the regular grid \(\mathscr{I}_n\). By the same arguments as in Buhl and Klüppelberg (2018),

such that \({\sum }_{\boldsymbol i,\boldsymbol i^{\prime } \in \mathbb {Z}^w} \mathbb {C}ov[I(\boldsymbol i), I(\boldsymbol i^{\prime })]>0\). Replacing \(\mathscr{S}_n\) in Buhl and Klüppelberg (2018) by \(\mathscr{I}_n\) and \(n^d\) by \(n^w\), we define

and obtain by the same arguments that

Now note that

as in Eq. A.9, with mixing coefficients defined in Eq. A.2. Therefore,

The standardized quantities are defined, again as in Buhl and Klüppelberg (2018) with \(\mathscr{S}_n\) replaced by \(\mathscr{I}_n\) and \(n^d\) by \(n^w\), by

The proof continues as in Buhl and Klüppelberg (2018), with \(n^d\) replaced by \(n^w\), by estimating the quantities \(B_1\), \(B_2\) and \(B_3\). The estimation of \(B_1\) follows the same lines of the proof, resulting in

We use definition (A.2) of the α-mixing coefficients for

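then \(|{\Lambda }_{1}^{\prime }|, |{\Lambda }_{2}^{\prime }| \leq 2\) and for \(d({\Lambda }_{1}^{\prime },{\Lambda }_{2}^{\prime })\) we consider the following two cases:

(1) \(\|\boldsymbol i-\boldsymbol j\| \geq 3r_{n}\). Then \(2r_{n} \leq (2/3)\|\boldsymbol i-\boldsymbol j\|\) and \(d({\Lambda }_{1}^{\prime },{\Lambda }_{2}^{\prime }) \geq \|\boldsymbol i-\boldsymbol j\| - 2r_{n}\). Since indicator variables are bounded and \(\alpha _{2,2}\) is a decreasing function,

$$\left|\mathbb{C}ov\left[I(\boldsymbol i)I(\boldsymbol i^{\prime}),I(\boldsymbol j)I(\boldsymbol j^{\prime}) \right]\right| \leq 4 \alpha_{2,2}\left( \|\boldsymbol i-\boldsymbol j\|-2r_{n}\right) \leq 4 \alpha_{2,2}\left( \frac1{3} \|\boldsymbol i-\boldsymbol j\|\right).$$

(2) \(\|\boldsymbol i-\boldsymbol j\| < 3r_{n}\). Set \(z := \min \{\|\boldsymbol i-\boldsymbol j\|, \|\boldsymbol i-\boldsymbol j^{\prime }\|, \|\boldsymbol i^{\prime }-\boldsymbol j\|, \|\boldsymbol i^{\prime }-\boldsymbol j^{\prime }\|\}\), then \(d({\Lambda }_{1}^{\prime },{\Lambda }_{2}^{\prime }) \geq z\) and, hence,

$$\mathbb{C}ov\left[I(\boldsymbol i)I(\boldsymbol i^{\prime}),I(\boldsymbol j)I(\boldsymbol j^{\prime}) \right] \leq 4 \alpha_{k_{1},k_{2}}(z), \quad 2 \leq k_{1}+k_{2} \leq 4.$$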
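The second inequality in case (1) uses only the case assumption \(\|\boldsymbol i-\boldsymbol j\| \geq 3r_{n}\) and the monotonicity of \(\alpha _{2,2}\). Spelled out as a worked step:

$$\|\boldsymbol i-\boldsymbol j\| - 2r_{n} \;\geq\; \|\boldsymbol i-\boldsymbol j\| - \frac{2}{3}\|\boldsymbol i-\boldsymbol j\| \;=\; \frac{1}{3}\|\boldsymbol i-\boldsymbol j\| \quad \Longrightarrow \quad \alpha_{2,2}\left(\|\boldsymbol i-\boldsymbol j\| - 2r_{n}\right) \;\leq\; \alpha_{2,2}\left(\frac{1}{3}\|\boldsymbol i-\boldsymbol j\|\right).$$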

Therefore,

An argument analogous to that in Buhl and Klüppelberg (2018) yields

Next, \(\mathbb {E}[|B_2|] \to 0\) as n → ∞ by the same arguments as in Buhl and Klüppelberg (2018), replacing \(\mathscr{S}_n\) by \(\mathscr{I}_n\) and \(n^d\) by \(n^w\). Then we find for \(B_3\) with the same replacements

We use definition (A.2) of the α-mixing coefficients for

such that \(|{\Lambda }_{1}^{\prime }| = 1\), \(|{\Lambda }_{2}^{\prime }| \leq n^{w}\) and \(d({\Lambda }_{1}^{\prime },{\Lambda }_{2}^{\prime }) > r_{n}\). Abbreviate

then \(I(\boldsymbol 0)\) and \(\eta (r_{n})\) are measurable with respect to \({\sigma }_{{\Lambda }_{1}}\) and \({\sigma }_{{\Lambda }_{2}}\), respectively, where \({\Lambda }_{i}=\cup _{\boldsymbol s \in \mathscr{F}\times {\Lambda }_{i}^{\prime }} \mathscr{B}(\boldsymbol s,\gamma )\). Now we apply Theorem 17.2.1 of Ibragimov and Linnik (1971) to obtain

where convergence to 0 is guaranteed by condition (M4iii).

Part II: CLT for \(\widehat \rho _{AB,m_n}\) and limit covariance matrix

Recall the definition of \(\mathscr{H}=\{\boldsymbol h^{(1)},\ldots ,\boldsymbol h^{(p)}\}\). For i ∈{1,…,p}, write \(\boldsymbol h^{(i)}=(\boldsymbol h_{\mathscr{F}}^{(i)},\boldsymbol h_{\mathscr{I}}^{(i)})\) with respect to the fixed and increasing domains \(\mathscr{F}\) and \(\mathscr{I}_{n}\). Write further \(\boldsymbol h_{\mathscr{F}}^{(i)}=(h_{\mathscr{F}}^{(i,1)},\ldots ,h_{\mathscr{F}}^{(i,q)})\) and \(\boldsymbol h_{\mathscr{I}}^{(i)}=(h_{\mathscr{I}}^{(i,1)},\ldots ,h_{\mathscr{I}}^{(i,w)})\). Now we define the ratio

and the corresponding empirical estimator

using that \({\mathscr{F}}(\boldsymbol 0)=\mathscr{F}\). Observe that

Then the empirical extremogram as defined in Eq. 3.2 for μ-continuous Borel sets A,B in \(\overline {\mathbb {R}}\backslash \{0\}\) satisfies as n → ∞,

by definition (2.7) of the sets \(D_{i}\) for i = 1,…,p. The remaining proof follows exactly as that of Theorem 4.2 in Buhl and Klüppelberg (2018), where in the last part the decomposition into a fixed and increasing grid has to be taken into account. □
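For intuition about the ratio structure used in Part II, the following sketch computes a purely temporal empirical extremogram in the spirit of Davis and Mikosch (2009) with A = B = (threshold, ∞). It is a simplified analogue under stated assumptions, not the space-time estimator of Eq. 3.2: there is no fixed domain, the threshold is supplied by the user rather than derived from \(a_{m}\), and the function name is hypothetical.

```python
def empirical_extremogram(x, threshold, lag):
    """Joint exceedance count at the given lag divided by the marginal exceedance count."""
    n = len(x)
    # Numerator: time points t where both x[t] and x[t + lag] exceed the threshold.
    joint = sum(1 for t in range(n - lag)
                if x[t] > threshold and x[t + lag] > threshold)
    # Denominator: all exceedances of the threshold.
    marginal = sum(1 for v in x if v > threshold)
    if marginal == 0:
        raise ValueError("no exceedances of the threshold")
    return joint / marginal

series = [5, 6, 1, 7, 8, 1, 9, 1]
print(empirical_extremogram(series, threshold=4, lag=1))
```

Numerator and denominator are both counts of extreme events, which is why the proof first establishes limit results for the count-type estimators \(\widehat {\mu }_{\mathscr{B}(\boldsymbol 0,\gamma ),m_{n}}\) and then passes to their ratio.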

A.3 Proof of Theorem 3

Throughout this proof, we suppress the subindex \(m_n\) of \(\widehat {{\rho }}_{AB,m_n}\) and \(\widehat {{\rho }}_{AB,m_n}\) for notational ease. The case where \(n^w/m_n^{3d} \to 0\) as n → ∞ is covered by Theorem 2, so we assume that \(n^w/m_n^{3d} \not \to 0\). Hence, by definition (3.16) we have to consider

Observe that for \(\boldsymbol h \in \mathscr{H}=\{\boldsymbol h^{(1)},\ldots ,\boldsymbol h^{(p)}\}\), as n →∞,

Since the conditions of Theorem 1 are satisfied we have that

and thus, by the continuous mapping theorem, it remains to show that for \(\boldsymbol h \in \mathscr{H}\),

We rewrite the latter as

As to \(A_1\), we calculate

By Theorem 1, the first term converges weakly to a normal distribution. Since \(\widehat \rho _{AB}(\boldsymbol h) \overset {P}{\rightarrow } \rho _{AB}(\boldsymbol h)\) and \(\rho _{AB,m_n}(\boldsymbol h) \to \rho _{AB}(\boldsymbol h)\) as n → ∞, the second term converges to 1 in probability. Slutsky’s theorem hence yields that \(A_1 \overset {P}{\rightarrow } 0\). As to \(A_2\), observe that

Therefore \(A_2\) converges to 0 if and only if \(\sqrt {n^w/m_n^{3d}} m_n^{-d}=\sqrt {n^w/m_n^{5d}}\) converges to 0.
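The identity between the two rates is a one-line computation, obtained by moving the factor \(m_n^{-d}\) under the square root:

$$\sqrt{\frac{n^w}{m_n^{3d}}}\; m_n^{-d} \;=\; \sqrt{\frac{n^w}{m_n^{3d}}\cdot \frac{1}{m_n^{2d}}} \;=\; \sqrt{\frac{n^w}{m_n^{5d}}}.$$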

A.4 Proof of Theorem 4

We start with the proof of consistency and use a subsequence argument. Let n′ = n′(n) be some arbitrary subsequence of n. We show that there exists a further subsequence n″ = n″(n′) such that \(\widehat {\boldsymbol \theta }_{n^{\prime \prime },V} \overset {\text {a.s.}}{\rightarrow } \boldsymbol \theta ^{\star }\) as n →∞, which in turn implies Eq. 4.6.

By (G1) we have for i = 1,…,p that \(\widehat {\rho }_{AB,m_n}(\boldsymbol h^{(i)}) \overset {P}{\rightarrow } \rho _{AB,\boldsymbol \theta ^{\star }}(\boldsymbol h^{(i)})\) as n →∞. Hence, there exists a subsequence n″ of n′ such that

as n → ∞. For \(\boldsymbol \theta \in {\Theta }\), we define the column vector and the quadratic forms

where we recall from Eq. 4.3 that \(\widehat {\boldsymbol g}_n(\boldsymbol \theta )=\left [\widehat {\rho }_{AB,m_n}(\boldsymbol h^{(i)})-\rho _{AB, \boldsymbol \theta }(\boldsymbol h^{(i)})\right ]^{\intercal }_{i = 1,\ldots ,p}\). Assumptions (G1) and (G3) imply that \(Q(\boldsymbol \theta ) > 0\) for \(\boldsymbol \theta ^{\star } \neq \boldsymbol \theta \in {\Theta }\) and that \(Q(\boldsymbol \theta ^{\star }) = 0\), so \(\boldsymbol \theta ^{\star }\) is the unique minimizer of Q. Smoothness and continuity of the functions \(\rho _{AB,\boldsymbol \theta }(\boldsymbol h^{(i)})\) and \(V(\boldsymbol \theta )\) (Assumptions (G4) and (G5) with \(z_1 = z_2 = 0\)) and Eq. A.16 yield

Now assume that there exists some ω ∈Ω such that Eq. A.17 holds, but \(\widehat {\boldsymbol \theta }_{n^{\prime \prime },V}(\omega )\not \rightarrow \boldsymbol \theta ^{\star }\). Then there exist 𝜖 > 0 and a subsequence n″′ = n″′(n″) such that for all n ≥ 1,

Thus,

for all n ≥ n0 for some n0 ≥ 1. But this contradicts the definition of \(\widehat {\boldsymbol \theta }_{n^{\prime \prime \prime },V}\) as the minimizer of \(\widehat {Q}_{n^{\prime \prime \prime }}(\boldsymbol \theta ), \boldsymbol \theta \in {\Theta }\). Hence \(\widehat {\boldsymbol \theta }_{n^{\prime \prime },V} \overset {\text {a.s.}}{\rightarrow } \boldsymbol \theta ^{\star }\) as n →∞ and this shows Eq. ??.

To prove the CLT (4.7), we introduce the following notation:

- We set \(\rho _{AB,\boldsymbol \theta }^{(\ell )}(\boldsymbol h^{(i)}):=\frac {\partial }{\partial \theta _{\ell }}\rho _{AB,\boldsymbol \theta }(\boldsymbol h^{(i)})\) for 1 ≤ i ≤ p, 1 ≤ ℓ ≤ k and \(\boldsymbol \rho _{AB}^{(\ell )}(\boldsymbol \theta ):=(\rho _{AB,\boldsymbol \theta }^{(\ell )}(\boldsymbol h^{(i)}): i = 1,\ldots ,p)^{\intercal }\) for 1 ≤ ℓ ≤ k. The Jacobian matrix \(\mathrm {P}_{AB}(\boldsymbol \theta )\) (4.5) can then be written as

  $$\mathrm{P}_{AB}(\boldsymbol \theta)=(-\boldsymbol \rho_{AB}^{(1)}(\boldsymbol \theta),\ldots, -\boldsymbol \rho_{AB}^{(k)}(\boldsymbol \theta)).$$

- We denote by \(\boldsymbol e_{\ell } \in \mathbb {R}^k\) the ℓth unit vector.

- For 1 ≤ i,j ≤ p, let \(v_{ij}(\boldsymbol \theta ) := (V(\boldsymbol \theta ))_{ij}\) be the entry in the ith row and jth column of \(V(\boldsymbol \theta )\).

- Set \(v_{ij}^{(\ell )}(\boldsymbol \theta ):= \frac {\partial }{\partial \theta _{\ell }} v_{ij}(\boldsymbol \theta )\) and \(V^{(\ell )}(\boldsymbol \theta ):=(v_{ij}^{(\ell )}(\boldsymbol \theta ))_{1 \leq i,j \leq p}, \quad 1 \leq \ell \leq k\).

As \(\widehat {\boldsymbol \theta }_{n,V}\) minimizes \(\widehat {\boldsymbol g}_n(\boldsymbol \theta )^{\intercal } V(\boldsymbol \theta ) \widehat {\boldsymbol g}_n(\boldsymbol \theta )\) with respect to \(\boldsymbol \theta \), we obtain for 1 ≤ ℓ ≤ k,

Now define the p × k-matrix \(\widehat {\mathrm {P}}_{AB,n}:={\int }_0^1 \mathrm {P}_{AB}(u \boldsymbol \theta ^{\star } + (1-u)\widehat {\boldsymbol \theta }_{n,V}) \mathrm {d}u\), where the integral is taken componentwise. Assumptions (G4) and (G5) with \(z_1 = z_2 = 1\) allow for a multivariate Taylor expansion of order 0 with integral remainder term of \(\widehat {\boldsymbol g}_n(\widehat {\boldsymbol \theta }_{n,V})\) around the true parameter vector \(\boldsymbol \theta ^{\star }\), which yields

Plugging this into Eq. A.18 and rearranging terms, we find

for 1 ≤ ℓ ≤ k. Defining \(\widehat {R}_{n,V}\) as the k × k-matrix whose ℓth row is given by

the system of equations (A.19) can be written in compact matrix form as

Hence, multiplying Eq. A.20 by \(\sqrt {n^w/m_n^d}\) and rearranging terms, we have,

Observe that the smoothness conditions (G4) and (G5) and the rank condition (G6) ensure invertibility of the term in curly brackets and boundedness of its inverse. For the remainder of the proof, we can hence use Slutsky’s theorem; to this end note that, as n → ∞:

- By conditions (G4) and (G5ii) with \(z_1 = z_2 = 1\), the matrices \(V(\boldsymbol \theta )\) and \(\mathrm {P}_{AB}(\boldsymbol \theta )\) are continuous in \(\boldsymbol \theta \), hence \(V(\widehat {\boldsymbol \theta }_{n,V}) \overset {P}{\rightarrow } V(\boldsymbol \theta ^{\star })\) and \(\mathrm {P}_{AB}(\widehat {\boldsymbol \theta }_{n,V}) \overset {P}{\rightarrow } \mathrm {P}_{AB}(\boldsymbol \theta ^{\star })\) by continuous mapping.

- Using Eq. 4.6, we find that \((\widehat {\boldsymbol \theta }_{n,V}-\boldsymbol \theta ^{\star }) \overset {P}{\rightarrow } \boldsymbol 0\), \(\widehat {R}_{n,V} \overset {P}{\rightarrow } (\boldsymbol 0, \ldots , \boldsymbol 0)\) and \(\widehat {\mathrm {P}}_{AB,n} \overset {P}{\rightarrow } \mathrm {P}_{AB}(\boldsymbol \theta ^{\star })\).

- The previous bullet point directly implies that \(C \overset {P}{\rightarrow } \boldsymbol 0\).

- As to A, condition (G2) directly yields \(\sqrt {\frac {n^w}{m_n^d}}\widehat {\boldsymbol g}_n(\boldsymbol \theta ^{\star }) \overset {\mathscr{D}}{\rightarrow } \mathscr{N}(\boldsymbol 0, {\Pi })\).

- Furthermore, \(\widehat {\boldsymbol g}_n(\boldsymbol \theta ^{\star }) \overset {P}{\rightarrow } \boldsymbol 0\) by (G1) and therefore \(B \overset {P}{\rightarrow } \boldsymbol 0\).

Finally, summarising those results, with \(B(\boldsymbol \theta ^{\star })=(\mathrm {P}_{AB}(\boldsymbol \theta ^{\star }){}^{^{\intercal }}[V(\boldsymbol \theta ^{\star })+V(\boldsymbol \theta ^{\star }){}^{^{\intercal }}] \mathrm {P}_{AB} (\boldsymbol \theta ^{\star }))^{-1}\), we obtain Eq. 4.7.
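The estimator analysed in this proof minimises the quadratic form \(\widehat {\boldsymbol g}_n(\boldsymbol \theta )^{\intercal } V(\boldsymbol \theta ) \widehat {\boldsymbol g}_n(\boldsymbol \theta )\). The sketch below illustrates that minimisation on a toy problem. Everything specific in it is an assumption made for illustration: the one-parameter model `rho_model` (an exponential decay, not one of the Brown-Resnick models of the paper), the constant weight matrix `V` (the paper allows V to depend on the parameter), the grid search in place of a proper optimiser, and the noiseless stand-in for the empirical extremogram.

```python
import math

def rho_model(theta, lags):
    """Hypothetical parametric extremogram model, used only for illustration."""
    return [math.exp(-theta * h) for h in lags]

def gls_objective(theta, rho_hat, lags, V):
    """Quadratic form g(theta)^T V g(theta) with g = rho_hat - rho_model(theta)."""
    g = [rh - rm for rh, rm in zip(rho_hat, rho_model(theta, lags))]
    p = len(g)
    return sum(g[i] * V[i][j] * g[j] for i in range(p) for j in range(p))

def gls_fit(rho_hat, lags, V, grid):
    """Grid-search minimiser of the GLS objective (a stand-in for a real optimiser)."""
    return min(grid, key=lambda t: gls_objective(t, rho_hat, lags, V))

lags = [1.0, 2.0, 3.0, 4.0]
theta_star = 0.5
rho_hat = rho_model(theta_star, lags)            # noiseless stand-in for the estimates
V = [[1.0 if i == j else 0.0 for j in range(4)] for i in range(4)]  # identity weights
grid = [0.01 * k for k in range(1, 201)]         # candidate values 0.01, ..., 2.00
theta_fit = gls_fit(rho_hat, lags, V, grid)
print(theta_fit)
```

With the identity weight matrix this reduces to ordinary least squares; a non-diagonal V would reweight the lags jointly, which is the generalised case treated in Theorem 4.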


Buhl, S., Klüppelberg, C. Generalised least squares estimation of regularly varying space-time processes based on flexible observation schemes. Extremes 22, 223–269 (2019). https://doi.org/10.1007/s10687-018-0340-x


Keywords

- Brown-Resnick process

- Extremogram

- Generalised least squares estimation

- Max-stable process

- Observation schemes

- Regularly varying process

- Semiparametric estimation

- Space-time process