Abstract

Prompting students to construct multiple solutions for modelling problems with vague conditions has been found to be an effective way to improve students’ performance on interest-oriented measures. In the current study, we investigated the influence of this teaching element on students’ performance. To assess the impact of prompting multiple solutions in mathematics instruction compared with the prompting of a single solution, we conducted an experimental study with 144 ninth graders from six German classes from middle track schools. We had two experimental groups: In one experimental group, students were required to provide two solutions for modelling problems related to the topic of Pythagoras’ theorem; in the other group, they were asked to find one solution for each problem. Students’ performance in solving tasks with and without a connection to the real world was assessed before and after a five-lesson teaching unit. In addition, the number of solutions developed and students’ experience of competence were assessed with a questionnaire during the teaching unit. The findings showed that, similar to previous studies, prompting students to find multiple solutions does not improve their performance directly. However, using path analysis, we found indirect effects of the treatment on students’ performance via the number of solutions they developed and their experience of competence.


1 Introduction

The development and comparison of multiple solutions are important instructional elements that are part of high-quality teaching standards in different countries (National Council of Teachers of Mathematics, 2000). Indeed, the analysis of the TIMSS 95 video study showed that posing problems that require students to construct several solutions and the subsequent presentation and discussion of solutions are teaching methods that are typically applied in high-performing countries, whereas in other countries, students are asked to find only one solution.

Japanese teachers frequently posed mathematics problems that were new for their students and then asked them to develop a solution method on their own. After allowing time to work on the problem, Japanese teachers engaged students in presenting and discussing alternative solution methods and then teachers summarized the mathematical points of the lesson. (…) These features of practice offer an alternative to those seen in the United States and Germany. (Hiebert, Gallimore, Garnier, Givvin, Hollingsworth, & Jacobs, 2003, p. 16)

These observations led us to assume that prompting students to provide multiple solutions can foster their performance. However, the design of the TIMSS video study did not allow causal inferences to be made about the effects of this teaching element. The first aim of the current study was to clarify the benefits of prompting and constructing multiple solutions compared to instructional settings in which only one solution has to be developed, using a randomised pretest-instruction-posttest design. The second aim was to understand how the assumed positive effects of multiple solutions on performance come about, that is, which motivational and cognitive variables mediate the effects of prompting multiple solutions on students’ performance. In the current paper, we addressed these two research aims using demanding modelling problems.

Solving modelling problems is an important ability in mathematics education (Niss, Blum, & Galbraith, 2007). Because of their connection to reality, modelling problems often include vague conditions that have to be specified by the problem solver during the solution process. Hence, this paper investigated how prompting students to construct multiple solutions to modelling problems with vague conditions would affect students’ performance.

2 Theoretical background and research questions

2.1 Multiple solutions

The development and comparison of multiple solutions are important elements of constructivist learning theories and conceptions such as cognitive flexibility theory (Spiro, Coulson, Feltovich, & Anderson, 1988) or cognitive apprenticeship (Collins, Brown, & Newman, 1989). From the experience of solving problems in more than one way, students are expected to develop a broad range of strategies and representations that foster their cognitive flexibility and can be used to solve unknown problems (Rittle-Johnson & Star, 2009; Silver, Ghousseini, Gosen, Charalambous, & Font Strawhun, 2005). Other benefits from developing multiple solutions are the strengthening of connections between different elements of knowledge (Dhombres, 1993; Levav-Waynberg & Leikin, 2012); a deeper understanding of the learning subject (Neubrand, 2006); the support of students’ creativity (Leikin & Levav-Waynberg, 2007; Levav-Waynberg & Leikin, 2012); the fostering of metacognitive activities (Schukajlow & Krug, 2013b), self-regulation (Schukajlow & Krug, 2012); preferences for solving problems with multiple solutions; and experiences of autonomy, competence, and interest (Schukajlow & Krug, 2014b). Prompting students to construct multiple solutions and its impact on students’ learning have been investigated in the context of problem solving activities for several decades.

Empirical evidence of the effects of prompting multiple solutions on students’ mathematics achievements has emerged from research on expert teachers and from international comparative studies. Expert teachers encourage students to develop multiple solutions and to compare them in the classroom (Ball, 1993). Solving tasks that allow multiple solutions improves the professional development of teachers in problem solving (Guberman & Leikin, 2013). The ability to find different solutions is part of pedagogical content knowledge, which is an important predictor of students’ academic achievements in mathematics (Baumert et al., 2010). Moreover, teachers in Hong Kong and Japan, where students outperform US students, often compare different solutions in their teaching practice (Richland, Zur, & Holyoak, 2007).

However, only a few randomised empirical studies have been conducted on the effects of the development of multiple solutions on students’ achievements. Most of these studies were based on the assumption that the construction of multiple solutions is important for learning mathematics. Thus, they investigated which instructional method has to be used to teach students to find multiple solutions. The findings have supported theoretical considerations from cognitive science about the central role of linking together the different solutions that were prompted in class. Students who compared and contrasted different solutions of the same problem were found to outperform their classmates who reflected on each solution one at a time (Rittle-Johnson & Star, 2007). Prior knowledge, kind of representations, and complexity of the problems were identified as contextual factors that influence the effect of comparing different solutions on students’ progress in mathematics (Große & Renkl, 2006; Reed, Stebick, Comey, & Carroll, 2012; Rittle-Johnson, Star, & Durkin, 2009).

Comparing examples of two solution methods was shown to be preferable for specific aspects of learning to solve linear equations. Students in one group learned to solve linear equations such as 3(x + 1) = 15 using the “traditional” solution method: “distribute, combine like terms, subtract constants and variables from both sides, and then divide both sides by the coefficient” (Rittle-Johnson & Star, 2009). In the other group, students were instructed also to use an alternative solution method. They were taught to see such expressions as “x + 1” as one composite variable and, for example, to first divide both sides of the expression by 3 in the presented sample equation. Comparing examples of two solution methods was found to positively affect students’ conceptual knowledge of solving equations, which includes “the ability to recognize and to explain key concepts in the domain”, and their procedural flexibility, which refers to the “flexible use of solution methods on the procedural knowledge assessment” (Rittle-Johnson & Star, 2009, p. 532). However, the groups did not differ in procedural knowledge, which was measured as their accuracy in solving equations. Similar results regarding the accuracy of solutions were found for solving geometry problems (Levav-Waynberg & Leikin, 2012). Moreover, in a previous study carried out by Star and Rittle-Johnson (2008), students who solved linear equation problems using one solution method achieved higher scores on procedural knowledge on familiar but not on transfer problems than students who were instructed to solve the same tasks in multiple ways or to discover multiple ways of solving these problems on their own. This result indicates the importance of the kind of task used to examine students’ performance at posttest. 
If tasks that are used for instruction are similar to the tasks used on the posttest, and if the tasks can be solved by recognising a memorised mathematical procedure, then prompting one solution may be enough or even better for the short-time enhancement of students’ procedural knowledge.
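
To make the contrast concrete, the two solution methods for the sample equation can be written out step by step. This is an illustrative worked example, not material reproduced from the cited studies.

```latex
\begin{align*}
\text{Traditional method:}\quad & 3(x+1) = 15 \;\Rightarrow\; 3x + 3 = 15 \;\Rightarrow\; 3x = 12 \;\Rightarrow\; x = 4 \\
\text{Alternative method:}\quad & 3(x+1) = 15 \;\Rightarrow\; x + 1 = 5 \;\Rightarrow\; x = 4
\end{align*}
```

Both methods lead to the same result; the alternative method treats x + 1 as a single unit and therefore needs fewer steps.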

The effects of encouraging students to find different solutions for a given problem have been investigated and implemented in different countries in the framework of the so-called open-ended approach (Becker & Shimada, 1997; Stacey, 1995). Teachers pose problems, such as the addition of two fractions, that can be solved in different ways or can have different solutions. One example is the problem: “What numbers can we sum together to get 21 as the result?” (see more about open problems by Silver, 1995). Students analyse the problem that is posed, find one solution, and then compare and discuss their solutions and the other solutions that are developed and presented by classmates. Another important part of this approach is to encourage students to change some of the task information and then to solve the new self-posed problem. Evaluations of the open-ended approach have confirmed the great opportunities for learning mathematics provided by these problems for students and pre-service teachers (Becker & Shimada, 1997; Klavir & Hershkovitz, 2008; Lin, Becker, Ko, & Byun, 2013). As prompting students to find multiple solutions can be viewed as the heart of the open-ended approach, adopting this approach can be expected to produce positive effects on students’ performance as a result of this instructional element.

In instructional settings that aim to improve students’ performance via the development of multiple solutions, instructors are encouraged to do the following:

- Pose demanding mathematical problems that require students to construct different solutions.
- Encourage students to develop several solutions to the posed problem on their own.
- Have students discuss their individual solution paths with other students and the teacher.
- Encourage students to compare and contrast different solutions.

Whereas instructional support of students with regard to the links between different solution methods has not been found to have considerable effects on students’ performance (Große & Renkl, 2006; Reed et al., 2012), the complexity of the problems (Reed et al., 2012), the autonomous development of different solutions, or students’ experience of competence during problem solving may be valuable factors that inspire students’ learning and performance. Thus, we posit that the number of solutions developed by students and their experience of competence have to be taken into account when investigating the effects of prompting students to construct multiple solutions.

2.2 Solving modelling problems

To investigate the effects of prompting students to provide multiple solutions, we chose to focus on students’ ability to solve modelling problems on the topic of Pythagoras’ theorem. The ability to solve modelling problems has importance for students’ current and future lives. Modelling is a part of curriculum programs in different countries and is a core component of “mathematical literacy” that has been examined in the Programme for International Student Assessment (PISA) studies (OECD, 2004). Solving modelling problems mainly includes the ability to realise a demanding mathematising process between the real world and mathematics (Blum, Galbraith, Henn, & Niss, 2007; Pollak, 1979). However, successfully solving modelling problems requires much more from students than only such mathematising processes. The wide range of students’ modelling activities is described in modelling circles (Galbraith & Stillman, 2006; Blum & Leiss, 2007; Verschaffel, Greer, & De Corte, 2000) and implies, among other practices, the correct use of mathematical procedures or so-called intra-mathematical performance (Niss et al., 2007; Schukajlow, Leiss, Pekrun, Blum, Müller, & Messner, 2012). Profound intra-mathematical knowledge can help students to construct a mathematical model, to apply correct mathematical procedures, and to obtain mathematical results. Working on modelling problems can be expected to improve students’ intra-mathematical performance (Blum et al., 2007) because students practise mathematical procedures while solving these problems.

2.2.1 Multiple solutions for modelling problems

There are different features of modelling problems (see summary by Maaß, 2010). In some modelling problems, information that is important for solving a problem is missing, and students need to make assumptions to solve this problem (Galbraith & Stillman, 2001). These tasks may be called modelling problems with vague conditions (Schukajlow & Krug, 2014b). Making assumptions is necessary for solving modelling problems with vague conditions and sets this kind of problem apart from open-ended problems whose solutions may simply involve providing samples such as a pair (1, 20) for the sum 21. Further, modelling problems can contain information that students do not need to use to solve the problem, so-called superfluous information.

The possibility of constructing multiple solutions is another feature of modelling problems. Three categories of multiple solutions can be distinguished with respect to solving modelling problems with vague conditions (Schukajlow & Krug, 2014b; Tsamir, Tirosh, Tabach, & Levenson, 2010). The first category includes multiple solutions that differ in the mathematical procedures that are applied but lead to the same mathematical result. The second kind of multiple solutions results from making different assumptions about missing data and obtaining different results but using the same mathematical procedure. The third category combines the first and the second kinds of multiple solutions. It contains solutions that vary in the mathematical solution method and are based on different assumptions about missing information. In this paper, we report on the effects of prompting the second kind of multiple solutions—different assumptions about missing information, different results, and the same solution method—on students’ academic achievements.

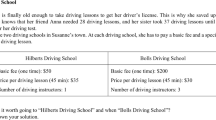

We will show different ways in which multiple solutions for real-world problems can be developed using the “Fire-brigade” task (Fig. 1).

Fig. 1 Modelling problem “Fire-brigade” (Blum, 2011)

First, students read the “Fire-brigade” problem and construct a situation model. The situation model includes, among others, a burning house and an engine with a ladder that is driven out the back side. Students have to calculate the maximal height from which occupants of the house can be rescued. The maximal height depends on the position of the engine. If the engine backs up to the house, the fire-brigade can rescue persons from a greater height than if it drives forward towards the house or parks alongside the house. Thus, an assumption has to be made about the position of the engine with respect to the house for the calculation of maximal height. Depending on this assumption, students will obtain different results. They will need to calculate a leg in the right-angled triangle using Pythagoras’ theorem, for example, and add the height of the engine to their result. The ability to solve intra-mathematical problems according to Pythagoras’ theorem is needed to perform these calculations. Then the mathematical result has to be interpreted with respect to reality and validated as to whether it makes sense. Variations in the applied mathematical methods can occur if students calculate the length of the leg in the triangle using a scale drawing instead of Pythagoras’ theorem.
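
The dependence of the result on the assumed engine position can be sketched in a few lines of Python. The function name, the parameter names, and all numerical values below are hypothetical and were chosen for easy arithmetic; they are not the data given in the task (Fig. 1). The sketch assumes that the fully extended ladder forms the hypotenuse of a right-angled triangle whose horizontal leg is the distance between the base of the ladder and the house.

```python
import math


def max_rescue_height(ladder_length, horizontal_distance, mount_height):
    """Return the height (in metres) at which the ladder meets the house.

    ladder_length:       length of the fully extended ladder (hypotenuse)
    horizontal_distance: assumed distance between ladder base and house
    mount_height:        height of the ladder base above the ground
    All three parameters are illustrative assumptions, not task data.
    """
    if horizontal_distance > ladder_length:
        raise ValueError("The ladder cannot reach the house from this distance.")
    # Pythagoras' theorem: vertical leg = sqrt(hypotenuse^2 - horizontal leg^2)
    vertical_leg = math.sqrt(ladder_length**2 - horizontal_distance**2)
    # Add the height of the engine on which the ladder is mounted
    return vertical_leg + mount_height


# Two different assumptions about the engine position give two results,
# although the mathematical procedure is the same in both cases.
print(max_rescue_height(25.0, 15.0, 3.0))  # engine further away: 23.0
print(max_rescue_height(25.0, 7.0, 3.0))   # engine closer to the house: 27.0
```

The two calls correspond to the second kind of multiple solutions: the assumption about the missing information changes and so does the result, but the solution method does not.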

One important factor that can influence students’ performance as they solve modelling problems is the experience of competence.

2.3 The experience of competence as an intervening variable

The experience of competence plays an important role in self-determination theory (Deci & Ryan, 2000), in social cognitive models of achievement motivation (Eccles & Wigfield, 2002), or in learning theories regarding self-regulation (Boekaerts & Corno, 2005; Zimmerman & Schunk, 2001). It is closely related to other motivational constructs such as intrinsic motivation (Deci & Ryan, 2000), ability beliefs (Wigfield & Eccles, 2000), or goals (Hannula, 2006). In self-determination theory, the need for competence is one of three basic psychological needs that have to be fulfilled in order to initiate and maintain motivation, engagement, and achievement (Ryan & Deci, 2009; 2000). The experience of competence increases intrinsic motivation and interest in the learning activity (Krapp, 2005; Kunter, Baumert, & Köller, 2007), and to a lesser extent, it enhances achievement (Miserandino, 1996). Thus, learning environments in which mathematics is taught have to create opportunities for fulfilling this basic need of students in order to positively influence their motivation and to increase their achievements.

Instructions that aim to fulfil basic needs and to improve students’ motivation are assumed to be student-centred (Hannula, 2006). Indeed, there is some empirical evidence for the better development of motivational variables in student-centred teaching in which cooperative learning environments are typically used than in teacher-centred methods of instruction (Slavin, Hurley, & Chamberlain, 2003; Webb & Palincsar, 1996). Schukajlow et al. (2012) showed that a student-oriented teaching method of modelling problems tended to have stronger effects on students’ enjoyment, value, interest, and self-efficacy than “directive” teaching. Similar results have been revealed by studies about the effects of self-regulated learning or self-monitoring activities, which are closely connected to experience of competence (Minnaert, Boekaerts, & Opdenakker, 2008), on students’ affect, achievements, and their perceptions of their abilities (Marcou & Lerman, 2007; Panaoura, Gagatsis, & Demetriou, 2009). On the basis of these findings, we used student-centred instructions to investigate the effects of prompting students to construct multiple solutions on students’ performance.

2.4 Research questions

The present study was designed to answer the following research questions:

- Research question 1: Does prompting students to construct multiple solutions for modelling problems affect their performance in solving modelling and intra-mathematical problems?
- Research question 2: Does prompting students to construct multiple solutions affect students’ performance at posttest indirectly via the number of solutions developed and their experience of competence?

As prompting students to construct multiple solutions should positively influence performance in solving modelling and intra-mathematical problems, we expected similar effects on both dependent measures.

2.5 Hypothesised path model

As we wanted to investigate whether and how our intervention would work, we applied a path model to the data. It allowed us to test predictions about the possible factors that transmit the effects of experimentally manipulated treatment conditions on the outcome.

Path models are derived from theories or previous empirical results and connect the treatment condition (e.g., the teaching method) with the outcomes (e.g., performance after intervention) via mediators or intervening variables (e.g., the number of solutions developed and students’ experience of competence).

On the basis of theoretical considerations about the effects of prompting students to construct multiple solutions on students’ competence and performance, we hypothesised a path analytic model (Fig. 2). This model predicts how prompting multiple solutions leads to higher performance in mathematics. We predicted that prompting multiple solutions in the class would increase the number of solutions developed by students, that the number of solutions developed would have a positive impact on students’ experience of competence, and that experience of competence would lead to higher performance on the posttest. Although we found some studies that had investigated most of the direct single paths hypothesised in our model (see Sections 2.5.3 and 2.5.4), no study had applied a path model to the data to investigate indirect effects.
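
The logic of an indirect effect along such a chain can be illustrated with a minimal Python sketch. It is a simplification under stated assumptions and not the analysis used in this study: each variable is regressed only on its immediate predecessor, the paths involving prior performance are omitted, the coefficients are unstandardised, and no significance test is performed. The function names and the toy data are hypothetical.

```python
def slope(x, y):
    """Ordinary least-squares slope of y regressed on a single predictor x."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    if sxx == 0:
        raise ValueError("The predictor has no variance.")
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    return sxy / sxx


def serial_indirect_effect(treatment, n_solutions, competence, posttest):
    """Estimate the three paths of the chain and their product.

    path 1: treatment   -> number of solutions developed
    path 2: n_solutions -> experience of competence
    path 3: competence  -> performance at posttest
    The indirect effect of the treatment is the product of the three paths.
    """
    path1 = slope(treatment, n_solutions)
    path2 = slope(n_solutions, competence)
    path3 = slope(competence, posttest)
    return path1, path2, path3, path1 * path2 * path3


# Synthetic toy data (0 = one-solution, 1 = multiple-solution condition)
treatment = [0, 0, 0, 0, 1, 1, 1, 1]
n_solutions = [1, 1, 2, 1, 2, 2, 2, 1]
competence = [2.0, 2.5, 3.0, 2.0, 3.5, 3.0, 4.0, 2.5]
posttest = [0.1, 0.4, 0.8, 0.0, 1.1, 0.7, 1.5, 0.3]

p1, p2, p3, indirect = serial_indirect_effect(
    treatment, n_solutions, competence, posttest
)
print(p1, p2, p3, indirect)
```

Because the indirect effect is a product, it vanishes as soon as any single path in the chain is zero, which is why each path is considered separately in the following sections.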

2.5.1 Linking the treatment condition to the number of solutions developed

The teaching method (by which the development of multiple vs. one solution was prompted) was expected to influence the number of solutions developed by students (path 1). In the “multiple solutions” treatment condition, two solutions had to be developed by each student. In the “one solution” condition, the development of only one solution was required by students. We predicted that students in the “multiple solutions” condition would develop significantly more solutions than students in the “one solution” condition.

2.5.2 Links between the number of solutions developed and students’ experience of competence

The number of solutions developed provides students with feedback about their competence in solving problems. Thus, the number of solutions developed by students should be strongly connected to their experience of competence in the model (path 2). This prediction was tested and confirmed in a previous study (Schukajlow & Krug, 2014b).

2.5.3 Linking the experience of competence to performance

Path 3 illustrates the link between the experience of competence and students’ performance on the posttest. A high experience of competence may be important for students’ motivation (Dörnyei, 2005; Ryan & Deci, 2000), which has a positive impact on academic outcomes (for meta-analysis cf. Hattie, 2009, p. 48). The experience of competence is a valuable part of self-efficacy beliefs (Bandura, 2003; Bong & Skaalvik, 2003), and self-efficacy is closely connected to students’ achievements (Malmivuori, 2006; Pietsch, Walker, & Chapman, 2003; Zan, Brown, Evans, & Hannula, 2006). Further evidence for the connection between experience of competence and performance comes from the analysis of a longitudinal study that tested competence beliefs and performance in seventh and ninth graders (Meece, Wigfield, & Eccles, 1990). Year-1 competence beliefs predicted year-2 expectancies for success and the year-2 expectancies influenced students’ year-2 achievements in mathematics. The direct connection between experience of competence and students’ performance was found by Hänze and Berger (2007) in physics. Students who reported higher competence during instruction achieved higher scores at posttest.

2.5.4 Linking prior performance to the number of solutions developed and to performance at posttest

Prior knowledge is an important factor that influences students’ learning. Students who achieve higher scores in mathematics are more interested in solving problems, they report more self-efficacy with regard to doing mathematics, and they frequently feel more positive emotions such as enjoyment while working on mathematical tasks than other students (Goetz, Frenzel, Pekrun, Hall, & Lüdtke, 2007; Heinze, Reiss, & Rudolph, 2005; Pekrun, Goetz, Frenzel, Barchfeld, & Perry, 2011; Malmivuori, 2006; Schukajlow & Krug, 2014a). These factors, in turn, allow such students to learn more in mathematics classes. Further, students with deep content knowledge may have less difficulty constructing several solutions to a problem, rarely interrupt their solution process, and improve their performance more strongly than other students.

Empirical studies on this issue involving word and intra-mathematical problems without vague conditions have focussed on the investigation of instructional designs for prompting multiple solutions. These designs involved presenting and comparing solution methods vs. sequentially presenting different solution methods. In some studies, researchers did not find any differences between approaches for students with different levels of prior knowledge, but in other studies, students with better prior knowledge in the respective domain benefitted more than other students from presenting and comparing multiple-solution methods (Große, 2014; Reed et al., 2012; Rittle-Johnson et al., 2009, 2012). The rationale behind the finding that novice students benefit from the sequential presentation of two solutions is that presenting and comparing two new solution methods on an unfamiliar task can be too much for them. The results from the case study on solving modelling problems with vague conditions indicate that students do not have any difficulties finding a second solution if they have already found a first solution (Schukajlow & Krug, 2013a). Indeed, the first and the second solutions for this kind of problem may differ only in the assumptions about missing information, but they can involve the same mathematical model, the application of identical mathematical procedures, and the same interpretation. Thus, it is possible that only low or even zero effects of prompting students to construct multiple solutions on the number of solutions developed or on students’ performance at posttest could be found for the specific kind of modelling problems that we investigated in the current study.

3 Method

3.1 Sample

One hundred forty-four German ninth graders (43 % female; mean age 15 years) from three comprehensive schools (German Gesamtschule) that each had two middle-track classes took part in this study. Middle-track classes were selected for this study in order to increase its representativeness: Students with different levels of knowledge in mathematics can be found in middle-track classes. Students from the current sample had not participated in any specific educational program, and thus, they did not have any formal experience in dealing with genuine modelling problems.

3.2 Study design

On the basis of students’ final grades in mathematics, each of the six classes was divided into two parts with the same number of students in each part in such a way that the average achievements in the two parts did not differ. The ratio of males and females was approximately equal in each part. One part of each classroom was assigned to the multiple-solution condition (students were prompted to find multiple solutions to modelling problems); the other part was assigned to the one-solution condition (similar tasks that each had one possible solution were presented). The teaching unit consisted of five 45-min lessons that were presented across three sessions: the first and the second lessons, the third and the fourth lessons, and the fifth lesson. After the first and the second sessions, students filled out questionnaires about the number of solutions they developed in the previous lessons and about their experience of competence during the teaching unit (cf. Fig. 3). Before and after the teaching unit, the participants completed a performance test.
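
One possible way to obtain two halves of a class with comparable average grades is a matched-pairs split, sketched below in Python. The exact procedure used for the division is not described here, so this is only an assumption about how such a split could be carried out; the function name and the sample grades are hypothetical, and the balancing of gender is not modelled.

```python
import random


def split_class(final_grades, seed=0):
    """Split one class into two halves with similar average grades.

    final_grades: dict mapping a student identifier to a final grade.
    Students are ranked by grade, neighbouring students form a pair,
    and the two members of each pair are randomly assigned to
    different conditions.
    """
    rng = random.Random(seed)
    ranked = sorted(final_grades, key=final_grades.get)
    multiple_solution, one_solution = [], []
    for i in range(0, len(ranked) - 1, 2):
        pair = [ranked[i], ranked[i + 1]]
        rng.shuffle(pair)  # random assignment within the matched pair
        multiple_solution.append(pair[0])
        one_solution.append(pair[1])
    if len(ranked) % 2 == 1:
        # An unpaired student is assigned to one condition at random
        rng.choice([multiple_solution, one_solution]).append(ranked[-1])
    return multiple_solution, one_solution


# Hypothetical grades on the German scale (1 = best, 6 = worst)
grades = {"s1": 2, "s2": 3, "s3": 1, "s4": 4, "s5": 3, "s6": 2}
group_a, group_b = split_class(grades)
print(group_a, group_b)
```

Pairing neighbours in the ranking keeps the difference between the group means small, while the random choice within each pair preserves the experimental character of the assignment.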

Four mathematics teachers between 25 and 54 years of age (two females) instructed students to solve modelling problems in the multiple- and one-solution conditions in separate rooms. Each teacher instructed the same number of student groups in the multiple-solution condition and in the one-solution condition, so that any influence of teachers’ personality on students’ learning was balanced across the two treatments. The research team provided the teachers with the modelling problems, the solution spaces, and the instruction manuals with lesson plans. The key points of each instruction condition were discussed before the teaching experiment began.

Treatment programs were based on the student-centred teaching method, which had demonstrated a positive influence on students’ learning and affect (Schukajlow et al., 2009; Schukajlow et al., 2012). In addition, students were instructed at the beginning about the goals of the respective treatment program and the key features of the problems that would be presented during the teaching unit. The same order was applied in the two treatment conditions and included the following:

- Information about the goals of the teaching unit (the enhancement of solving modelling problems using multiple solutions vs. one solution)
- Group work according to the fixed cooperation script. The cooperation script consisted of three parts: (a) individual work: working individually and developing the first ideas about how to solve a problem; (b) cooperative work: discussing ideas for solving the problem in group work; (c) individual work: writing down one’s own individual solution or multiple solutions (Schukajlow et al., 2012)
- Presentation of information about how the first problem could be solved after the group work. Multiple solutions (multiple-solution condition) or one solution (one-solution condition) of other problems were presented by students and discussed by the whole class
- A summary of the focal features of the modelling problems with or without multiple solutions

In order to stimulate the development of multiple solutions in one condition and to prevent their construction in the other condition, two similar versions of six tasks about Pythagoras’ theorem were developed. The topic of Pythagoras’ theorem had already been taught using intra-mathematical tasks before the applied teaching unit. In the multiple-solution condition, students had to find two different solutions for four of the six problems and to record their solutions. For example, in the sample problem “Fire-brigade”, the modification was “Find two possible solutions. Write down both solution methods.”

A further instructional key point in the multiple-solution condition was to compare and link different solutions. Students discussed the differences between solutions and causes of these differences in group work. Teachers underlined this point while discussing the solution with the whole class and while summarising the focal features of the modelling problems. Because one solution to a problem typically has to be found in the real world, we did not modify the two problems that were given in the last lesson. In these problems, some important data were missing, but, like the “Fire-brigade” problem (cf. Fig. 1), they required the development of only one solution.

In the one-solution condition, all information about missing data was given in the problems so that students could develop only one solution. In this condition, the modified “Fire-brigade” problem included the following text: “Today a high-rise building on Main Street is burning. The engine is backed up to the tower, and the ladder is completely driven out. At what height does the ladder meet the house? Write down your solution method.” Other problems that were taught in the classroom are presented by Schukajlow and Krug (2014b).

The fidelity of the treatment was ensured by using the following procedures. All teachers had at least 2 years of teaching experience and participated in the study voluntarily. They were familiar with the teaching of modelling problems and received a 1-day training session in the teaching method they were supposed to apply in the intervention. In each lesson, at least one person from the research group was present. The members of the research group administered the tests and observed the implementation of the treatment. All lessons were videotaped, and all students’ solutions were collected. Analyses of these materials revealed that time on task did not differ between the two conditions, and all students were asked to solve the problems as specified in the instruction manuals. However, in the multiple-solution condition, students used the time for solving problems in two different ways. In the one-solution condition, students discussed the solution longer than in the multiple-solution condition.

3.3 Measures

3.3.1 Performance tests

The two types of problems for the topic of Pythagoras’ theorem, modelling and intra-mathematical ones, were used in order to test students’ progress. All the tests were administered by trained administrators. Students’ responses were marked with 1 for a correct solution and with 0 for an incorrect solution. Most of the items were taken from the sizeable pool of items from other studies (Leiss, Schukajlow, Blum, Messner, & Pekrun, 2010; Rakoczy, Harks, Klieme, Blum, & Hochweber, 2013; Schukajlow et al., 2009). All items were examined in previous studies; thus, their approximate degree of difficulty and discriminatory power for the population of ninth graders were known. The time allocated for each test booklet was 1 h.

The item difficulties of the two mathematics scales can be approximated by a two-dimensional Rasch model (Bond & Fox, 2001). This model allowed us to construct parallel test versions (with no item overlap) for each scale (here, performance in solving modelling or intra-mathematical problems) at two measurement points (pre- and posttest) so that students’ achievements could be compared. Students’ performance in solving modelling problems and intra-mathematical problems was examined using 18 and 8 items, respectively. As each student solved similar but not identical items at pretest and at posttest, students’ performance could be measured accurately, and memorisation effects were minimised. The ConQuest software (Wu, Adams, & Wilson, 1998) was used to scale students’ performance data. Weighted likelihood estimator (WLE) parameters (Warm, 1989) were estimated for each student. The WLEs characterise students’ performance using continuous scales for modelling and for intra-mathematical performance.

The EAP/PV test reliabilities, which are comparable to Cronbach’s α reliabilities, were .66 for modelling and .63 for intra-mathematical performance. Sample items from the tests are given in Fig. 4.

3.3.2 Scales for number of solutions developed and experience of competence

The number of solutions developed by students was assessed two times during the teaching unit using a 4-point scale. After the first session, students were asked about the number of solutions they developed for the first and second problems and after the second session about the number of solutions they developed for the third and fourth problems. One sample item was “While solving the ‘Fire-brigade’ problem, I developed (0 = no solution; 1 = one solution; 2 = two solutions; 3 = more than two solutions) today.” In order to simplify the interpretation of this scale, the last two categories were aggregated into the category “two and more solutions” (see Notes). Thus, this scale ranged from 0 (no solutions) to 2 (two and more solutions).
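The aggregation of the last two response categories described above can be sketched as a simple recoding step. This is only an illustration under stated assumptions: the function name `collapse_solution_count` is hypothetical and is not taken from the study's analysis scripts.

```python
def collapse_solution_count(raw_response: int) -> int:
    """Recode the 4-point item (0, 1, 2, 3) to the 3-point scale (0, 1, 2).

    Categories 2 ("two solutions") and 3 ("more than two solutions")
    are merged into a single category 2 ("two and more solutions").
    """
    if raw_response not in (0, 1, 2, 3):
        raise ValueError(f"unexpected response code: {raw_response}")
    return min(raw_response, 2)


# Recode every possible raw response code.
recoded = [collapse_solution_count(code) for code in (0, 1, 2, 3)]
print(recoded)
```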

Students’ experience of competence was also assessed after the first and second teaching sessions (Table 1). The 5-point Likert scale used in the current study ranged from 1 (not at all true) to 5 (completely true). This scale comprised four items that asked about understanding and mastering the assigned work during the teaching unit. Two items for this scale were taken from the study by Hänze and Berger (2007). The other two items were developed for the present study. The reliabilities of this scale (Cronbach’s α) measured after the second and fourth lessons were .91 and .90, respectively.

3.4 Data analysis

We began the data analysis by examining the influence of the treatment conditions on students’ performance in solving modelling and intra-mathematical problems. Then we tested the hypothesised model for performance with regard to each type of problem. In all regression analyses, dummy codes for the treatment factor (0 = one solution; 1 = multiple solutions) were used.

To answer the research questions, two path models with 11 free parameters and 144 subjects were applied to the data. The ratio of subjects to parameters was approximately 13 (144/11 ≈ 13.1), which was above the critical value of 5 for obtaining correct results (Kline, 2005). Thus, the sample of 144 participants was sufficient for addressing the research questions.
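The sample-size check above is plain arithmetic and can be reproduced as follows. This is a minimal sketch; the function name and the `critical_ratio` argument are illustrative choices, and the threshold of 5 is the value the text attributes to Kline (2005).

```python
def subjects_per_parameter(n_subjects: int, n_free_parameters: int) -> float:
    """Return the ratio of subjects to freely estimated parameters."""
    return n_subjects / n_free_parameters


critical_ratio = 5  # threshold cited in the text (Kline, 2005)
ratio = subjects_per_parameter(144, 11)

# The ratio is about 13.1, well above the critical value.
print(f"ratio = {ratio:.1f}, sufficient = {ratio > critical_ratio}")
```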

3.4.1 Clustering of the data

To increase the external validity of the current study, the students were instructed at school in groups of 11–14 students from the same mathematics class rather than individually. Groups of students attending the same class were randomly assigned to the two treatment conditions, taking into account their achievement and gender. This procedure produced hierarchical data such that the individual observations were more or less dependent. To examine the degree of dependence within the groups (n = 12) for performance at pretest, we calculated the intraclass correlation coefficient (ICC) using the statistical program Mplus (L. K. Muthén & Muthén, 1998–2012). The ICC was .137 for modelling and .204 for intra-mathematical performance. ICCs can be transformed into design effects (B. O. Muthén & Satorra, 1995) to indicate the loss of statistical power due to the dependence of observations. The design effect was 2.36 for modelling and 3.02 for intra-mathematical performance. Thus, we calculated fit statistics and assessed the effects using maximum-likelihood estimations with adjusted standard errors (MLR) using the type = complex analysis in Mplus. This statistical method takes into account the dependence of observations for parameter estimates and goodness-of-fit model testing (B. O. Muthén & Satorra, 1995; L. K. Muthén & Muthén, 1998–2012).
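The conversion of an ICC into a design effect is commonly written as 1 + (average cluster size − 1) × ICC. The sketch below applies this formula to the reported ICCs. The text does not state the average cluster size that was used, so the value of about 10.9 is an assumption obtained by solving the formula for the reported design effects; with exactly 12 students per group (144/12) the results would be somewhat larger.

```python
def design_effect(icc: float, avg_cluster_size: float) -> float:
    """Design effect for clustered data: 1 + (m - 1) * ICC."""
    return 1.0 + (avg_cluster_size - 1.0) * icc


def implied_cluster_size(icc: float, deff: float) -> float:
    """Solve the design-effect formula for the average cluster size m."""
    return 1.0 + (deff - 1.0) / icc


# Reported values: (ICC, design effect) for each pretest scale.
reported = {"modelling": (0.137, 2.36), "intra-mathematical": (0.204, 3.02)}

for scale, (icc, deff) in reported.items():
    m = implied_cluster_size(icc, deff)
    # Assumed cluster size of 10.9 (inferred, not stated in the article).
    check = design_effect(icc, 10.9)
    print(f"{scale}: implied m = {m:.2f}, deff at m = 10.9 -> {check:.2f}")
```

Both scales imply nearly the same average cluster size, which suggests that the reported ICCs and design effects are mutually consistent.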

3.4.2 Missing values

In longitudinal studies, some values are often missing. In the present study, the percentage of missing values ranged from 1.3 % for experience of competence to 9 % for performance. Multiple imputation and full information maximum-likelihood estimation (Peugh & Enders, 2004) are the preferred solutions for this problem. The missing values in the current study were handled using the maximum-likelihood algorithm implemented in Mplus (L. K. Muthén & Muthén, 1998–2012). This algorithm uses the full information from the covariance matrices to estimate the missing values.

4 Results

4.1 Preliminary analysis and analysis of treatment effects on performance

The means and standard deviations for modelling and intra-mathematical performance are shown in Table 2. The negative values in modelling indicate that the modelling test was more difficult for students than the intra-mathematical test. Students’ performances at pretest differed slightly between the multiple-solution and one-solution groups. Students in the group in which multiple solutions were prompted showed slightly better results on the modelling and intra-mathematical pretests, but t tests revealed that these differences were not statistically significant (modelling: t(142) = 1.623, p = .11; intra-mathematical: t(142) = 1.593, p = .11).

To compare students’ final performance between the two groups, students’ measures at posttest were adjusted using the respective pretest values. The analysis showed that students in the multiple-solution condition and the one-solution condition did not differ in their performance measured after the teaching unit. Thus, prompting students to construct multiple solutions for modelling problems with vague conditions did not directly improve their performance.

4.2 Indirect effects via number of solutions and experience of competence

4.2.1 Statistical procedure

The maximum-likelihood algorithm implemented in Mplus was used for the statistical analyses of the path model (Muthén & Muthén, 1998–2012). This algorithm allows the fit values to be calculated for the path models. The goodness-of-fit values show whether the data provide a good fit to the hypothesised model. The comparative fit index (CFI) and the standardised root mean squared residual (SRMR) are most adequate for sample sizes with fewer than 250 persons (Hu & Bentler, 1999). We applied the combination of cutoff values of CFI >0.95 and SRMR <0.09 to examine the goodness of the fit of the model. We also calculated the chi-square goodness of fit. The same structure of the path model and the same intervening variables used for the investigation of the effects of treatment on performance in solving the two different types of problems (modelling and intra-mathematical ones) allowed us to cross-validate the hypothesised model.

4.2.2 Model fit

The calculated correlation matrix of the variables measured in the current study is presented in Table 3. The analysis of the values showed that all the correlations were in the expected direction (e.g., all performance measures were significantly correlated with each other, and all of the correlations between performance, number of solutions developed, and experience of competence were positive).

Model fit values were computed for the path model with regard to modelling and intra-mathematical performance (illustrated in Fig. 2). The hypothesised model fit the data well according to all fit indexes for both measures that were investigated: for performance in solving modelling problems as well as for performance in solving intra-mathematical problems. Twenty-eight percent of the variance in modelling and 24 % of the variance in intra-mathematical performance at posttest could be explained by this model (Table 4).

4.2.3 Direct and indirect effects on students’ performance

In this section, we will present the direct and indirect effects estimated in the hypothesised model. Parameter estimates and significance levels for each hypothesised path for the models with regard to modelling and intra-mathematical performance are presented in Table 5. The direct effects of the path model are also illustrated in Fig. 5.

In the previous analysis of path models of students’ interest, we found that the treatment was a strong predictor of the number of solutions developed (β = 0.96), and the number of solutions predicted experience of competence (β = 0.28) (Schukajlow & Krug, 2014b). In the current analysis of the path model of students’ performance, nearly the same coefficients were calculated for modelling (β = 0.98, β = 0.26) and intra-mathematical performance (β = 0.98, β = 0.26). The minor differences in estimates may have resulted in part from using other measures (performance instead of interest) or from taking into account the design effect for students’ performance.

One of the main results from the analysis of the path model was a significant prediction of students’ performance on the posttest from their experience of competence during the teaching unit. This result was found for both modelling problems (β = 0.22) and intra-mathematical problems (β = 0.26). Students who reported feeling more competent during the teaching unit achieved higher scores on the posttest. Next, we analysed indirect effects of the treatment and of the number of solutions developed on students’ performance at posttest. This analysis showed that both prompting multiple solutions and the number of solutions improved students’ performance in solving modelling problems at posttest (β = 0.06). Similar indirect effects were also found for intra-mathematical performance. Teaching method had a nearly statistically significant influence on students’ intra-mathematical performance at posttest (β = 0.07), and the number of solutions they developed significantly affected this performance (β = 0.07). Thus, prompting students to develop multiple solutions for modelling problems was found to affect their performance indirectly—through the number of solutions they developed and their experience of competence—as we assumed on the basis of the theoretical considerations.
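In a path model, an indirect effect along a chain of paths is conventionally the product of the standardised coefficients on that chain. The sketch below applies this rule to the coefficients reported above and reproduces the rounded indirect effects. It is an illustration only: the estimates and significance tests in the study came from Mplus, and the dictionary keys are hypothetical labels.

```python
from math import prod

# Standardised path coefficients as reported in the text.
paths = {
    "modelling": {
        "treatment_to_solutions": 0.98,
        "solutions_to_competence": 0.26,
        "competence_to_posttest": 0.22,
    },
    "intra_mathematical": {
        "treatment_to_solutions": 0.98,
        "solutions_to_competence": 0.26,
        "competence_to_posttest": 0.26,
    },
}


def indirect_effect(coefficients):
    """Product-of-coefficients estimate of an indirect effect."""
    return prod(coefficients)


for outcome, p in paths.items():
    chain = [
        p["treatment_to_solutions"],
        p["solutions_to_competence"],
        p["competence_to_posttest"],
    ]
    via_treatment = indirect_effect(chain)       # treatment -> ... -> posttest
    via_solutions = indirect_effect(chain[1:])   # solutions -> ... -> posttest
    print(f"{outcome}: treatment {via_treatment:.2f}, solutions {via_solutions:.2f}")
```

Because the path from treatment to number of solutions is close to 1, the two indirect effects for each outcome are almost identical after rounding.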

Prior performance is an important factor that directly predicted performance at posttest for modelling (β = 0.43) and intra-mathematical performance (β = 0.28). However, students’ performance in modelling or in solving intra-mathematical problems at pretest did not influence the number of solutions they developed during the teaching unit. We also did not find significant indirect effects of prior performance on students’ performance at posttest. Although students who showed better performance at pretest outperformed low achievers at posttest, this did not result from the indirect effects of prior performance on the number of solutions they developed during the teaching unit.

5 Discussion

Prompting students to construct multiple solutions for a given problem is an important topic in mathematics education, and this practice can be applied in teaching in order to improve students’ learning. The effects of this element of teaching on students’ learning are based on case studies and analyses of the teaching practices of novice and expert teachers. However, only a few randomised experimental studies have investigated the effects of prompting students to find multiple solutions. Moreover, we could not find any studies that had investigated the impact of prompting students to construct multiple solutions for modelling problems with vague conditions, which should be used in the mathematics classroom because of the importance of modelling for students’ current and future lives. Furthermore, there has been a lack of information on how prompting multiple solutions or prior performance influences students’ performance at posttest. This information is important for researchers and teachers as it illustrates which intervening variables should be taken into account when investigating or practising this kind of teaching method. We assessed these questions using path analysis to determine the impact of prompting multiple solutions on students’ achievements in solving modelling and intra-mathematical problems with regard to the topic of Pythagoras’ theorem.

5.1 Direct treatment effects

On the basis of theoretical considerations, we predicted that prompting students to construct multiple solutions for modelling problems would directly affect students’ performance. However, the results of our study did not confirm this prediction. Students in the multiple-solution and one-solution conditions produced similar results when solving modelling and intra-mathematical problems at posttest after the five-lesson teaching unit. This result is in line with findings from previous studies that did not identify effects of prompting multiple solutions for intra-mathematical problems on students’ performance, measured using accuracy in solving problems (Levav-Waynberg & Leikin, 2012; Star & Rittle-Johnson, 2008). Our findings extended this result to modelling problems with vague conditions and specifically for the prompting of multiple solutions for modelling problems with missing information. As this was the first randomised empirical study to use this kind of multiple-solution scenario, this result needs to be replicated in future studies. Previous studies that compared multiple-solution methods or different ways of presenting multiple-solution methods (on the same or on different problems) and that used word problems with a connection to reality as the content have produced inconsistent results and have underlined the importance of context factors—such as type of problems, kind of instructions, and prior knowledge—for learning (Große, 2014; Große & Renkl, 2006; Reed et al., 2012). The comparison of arithmetic word problems, for example, had a positive influence on students’ achievements, whereas algebra performance did not differ between a group that compared solutions and a group that did not compare solutions (Reed et al., 2012). The investigation of factors that support the learning of complex problems with a connection to reality is an important research issue that we analysed using path models and will discuss below.

One possible reason for the absence of direct effects of prompting multiple solutions on students’ performance is the way in which the problems were formulated on the posttest. We argue that when we solve problems in real life, we look for one solution to a problem; thus, the problems on the posttest required the students to develop only one solution. In the one-solution group, students had to develop one solution during the teaching unit and also had to develop one solution for each problem on the performance test. In the multiple-solution condition, however, students had to find two solutions during the treatment in two of the three sessions but only one solution for each task on the posttest. Given that a crucial point in the measurement of treatment effects is that the treatment tasks need to provide a good match to the performance test (Hattie, Biggs, & Purdie, 1996), the way in which the problems were formulated on the posttest may have negatively impacted the performance of the students in the multiple-solution group. In future research, the development of multiple solutions should be examined on the posttest to investigate the effects of prompting students to construct multiple solutions on students’ performance. A similar approach was already used in other studies and showed advantages of prompting multiple solutions of intra-mathematical problems with respect to mathematical knowledge, creativity, conceptual knowledge, and flexibility (Levav-Waynberg & Leikin, 2012; Rittle-Johnson & Star, 2009; Star & Rittle-Johnson, 2008).

Future studies should explore whether students who are instructed to use different mathematical solution methods to solve given modelling problems (e.g., Pythagoras’ theorem or scale drawing for the “Fire-brigade” problem) improve in their performance. The first analysis of prompting this kind of multiple solutions to modelling problems with regard to the topic “linear functions” was conducted for students’ self-regulation and did not reveal any positive effects (Achmetli, Schukajlow, & Krug, 2014). The investigation of different kinds of solutions that can be used to solve modelling problems can contribute to our knowledge about multiple solutions and provide information about how to enhance students’ ability to solve these kinds of problems.

5.2 Indirect effects via number of solutions developed and experience of competence

In order to explore how prompting students to find multiple solutions and students’ prior performance would influence students’ performance on the posttest, we examined the hypothesised path model. As expected, the predicted path model provided a good fit to the data for modelling and for intra-mathematical performance. Thus, the model adequately described the influence of treatment and prior performance on students’ performance at posttest.

Indirect effects of prompting students to find multiple solutions for modelling problems were identified. For the effects of the treatment on performance, two factors—the number of solutions developed and students’ experience of competence—were found to be crucial. Prompting multiple solutions increased the number of solutions developed, the number of solutions developed influenced the experience of competence, and the experience of competence improved students’ final performance. In addition to comparing, reflecting on, and discussing multiple solutions (Rittle-Johnson & Star, 2009; Silver et al., 2005), the number of solutions developed and experience of competence are valuable factors that play roles in producing the effect that prompting students to find multiple solutions has on students’ performance. We argue that the number of solutions developed during the teaching unit is important because it indicated the impact of the individual solution activities on students’ learning. Combined with the group and class discussions about the various solutions that different students found, the activities that we applied with our teaching method had positive influences on experience of competence and students’ final performance.

Schukajlow and Krug (2014b) showed that the experience of competence positively influenced students’ interest at posttest and mediated the impact of prompting multiple solutions and the number of solutions developed on students’ final interest. In the current study, we found similar effects on students’ performance. The experience of competence was a strong predictor of students’ performance in solving modelling and intra-mathematical problems as hypothesised in the self-determination theory of human behaviour (Deci & Ryan, 2000). This finding is in line with empirical results in other domains: The experience of competence predicted students’ performance in physics to a comparably strong degree (Hänze & Berger, 2007). Moreover, the experience of competence was found to be important for the effects of teaching method and the number of solutions students developed on students’ performance as we predicted on the basis of theoretical and empirical considerations from previous studies. These results underline the importance of the experience of competence for students’ cognitive and affective development. Learning settings that improve students’ experience of competence contribute to the achievements of cognitive and affective goals in mathematics education, both of which are important for students’ learning (Zan et al., 2006; McLeod, 1992).

To summarise these results and tie them to practical implications in the classroom, we suggest that teachers who instruct students to find multiple solutions should pay close attention to the number of solutions developed by every student and to their individual experience of competence. To improve students’ performance (and interest), it is not sufficient to simply pose problems that require students to construct different solutions. Scaffolding students’ learning using cooperative settings such as the one used in our study (alone-together-alone) and comparing and contrasting multiple solutions with the whole class are important elements that allow students to produce more solutions. Moreover, we posit that students who do not find any solutions and merely observe how other students or the teacher present solutions will not improve their knowledge and interest. Thus, in order to help students to progress in mathematics and to improve their interest, the teacher cannot accept such passive behaviour from his or her students in the classroom.

Another element of the examined path model was students’ prior performance. The results of the current study showed that, according to the path model, students’ prior performance did not influence the number of solutions they developed and it did not have any indirect impact on students’ performance at posttest. This finding may be the result of students’ sufficient prior knowledge with regard to the content taught in the classroom and is in line with results from the study by Levav-Waynberg and Leikin (2012). They used case study observations and found that solving tasks with multiple solutions was beneficial for students who performed at both advanced and regular levels. To investigate the impact of prior knowledge on students’ performance, our study should be replicated with students who have limited knowledge of Pythagoras’ theorem and modelling. We assume that in that case, the influence of prior knowledge may be more powerful, similar to the study about comparing multiple solutions of linear equations by students who were not familiar with equations (Rittle-Johnson et al., 2009).

Another explanation for our findings can be found in the kind of multiple solution that was prompted in the classroom. The influence of prior knowledge might be limited because students did not need special mathematical knowledge to increase the number of solutions they developed when the solutions were based on different assumptions about missing data such as the position of the fire vehicle in the “Fire-brigade” task. This assumption is in line with a finding from our previous case study: Students who found one solution often had only minor difficulties in constructing a second solution using the same mathematical model and the same mathematical procedure to solve modelling tasks with vague conditions (Schukajlow & Krug, 2013a).

The limited impact of prior knowledge on the effect of prompting multiple solutions to modelling problems with vague conditions, and thus also on performance at posttest, can be seen as a positive result for students’ learning because students at different levels benefitted in comparable ways from being prompted to construct multiple solutions to modelling problems with vague conditions. Thus, the dispersion of students across different levels of mathematics did not increase after students practised this teaching method.

5.3 Strengths and limitations

The effects of the treatment and prior performance on students’ performance at posttest were examined using inferential and path analyses. The active manipulation of the treatment conditions in our study (prompting multiple solutions vs. prompting one solution) using a randomised experimental design allowed us to causally interpret the treatment effects on students’ performance. However, the causal interpretation of paths in the hypothesised path model should be made with caution. The validity of the analysis of path models depends strongly on the times at which the data were collected and on evidence from previous research about the possibilities of directed effects such as the impact of experience of competence on students’ performance at posttest.

We collected data before, during, and after the teaching unit so that the data collected in our study would be ordered along a timeline. Thus, using these data, it was possible to determine the direction of the effects (e.g., from experience of competence during the teaching unit to students’ performance after the teaching unit) and thus also to examine the hypothesised path model. However, a path model cannot replace the use of experimental studies (Spencer, Zanna, & Fong, 2005).

The assumed path model was derived from constructivist learning theories (Collins et al., 1989; Spiro et al., 1988) and the self-determination theory of human behaviour (Deci & Ryan, 2000). The results of previous empirical studies inspired the hypothesised paths between the applied teaching method, the number of solutions developed, experience of competence, and prior and final performances. However, this path model may be incomplete as other intervening variables such as interest, teachers’ feedback, or the quality of the students’ discussions (Große & Renkl, 2006; Rittle-Johnson & Star, 2009) could affect the number of solutions students developed, their experience of competence, and their performance on the posttest. The investigation of the role of these intervening variables for prompting students to find multiple solutions is an important issue for future research. Moreover, research on multiple-solution learning settings should include not only performance but also affective and meta-cognitive factors. We argue that including emotions, interest, values, self-efficacy, planning, and monitoring activities as intervening variables or dependent measures can significantly improve knowledge about the effects of multiple solutions (Guberman & Leikin, 2013; Schukajlow & Krug, 2012, 2013b, 2013c, 2014b).

Finally, we would like to note three other important limitations of the current study. First, the results may be different for the long-term practice of prompting multiple solutions in the classroom. We investigated the effects of an intervention across a relatively short time period because more studies of short duration in mathematics education were recently requested (Stylianides & Stylianides, 2014). But such effects can be expected to be even stronger across a longer period of instruction. Second, the reliabilities of .66 and .63 for modelling and intra-mathematical performance tests, respectively, were slightly below the limit of .70. The effects on students’ performance may be stronger if scales with higher reliabilities are applied. Third, investigations of the stability of changes in students’ interest using follow-up measures should be implemented in future research (see e.g., Rittle-Johnson & Star, 2009).

5.4 Conclusion

Prompting students to find multiple solutions is an important theme that has been investigated in research frameworks about open(-ended) problems (Becker & Shimada, 1997; Lin et al., 2013; Silver, 1995; Stacey, 1995), the implementation of multiple-solution tasks (Guberman & Leikin, 2013; Leikin & Levav-Waynberg, 2007; Levav-Waynberg & Leikin, 2012), and the impact of comparison on students’ learning (Reed et al., 2012; Rittle-Johnson & Star, 2007, 2009; Star & Rittle-Johnson, 2008, 2009). Our study extended this research using modelling problems with vague conditions and identified factors that are important for producing effects of prompting multiple solutions on students’ performance. The results of the current study showed that the number of solutions developed and experience of competence are factors that mediate the effects of the investigated teaching method not only on students’ interest (Schukajlow & Krug, 2014b) but also on students’ performance. Thus, these factors should be taken into account when teaching students to find multiple solutions for modelling problems. The results of other studies on prompting students to construct multiple solutions for modelling problems have also shown effects on students’ metacognition, preferences for finding multiple solutions, and self-regulation (Schukajlow & Krug, 2012, 2013b, 2013c, 2014b) and have extended the list of positive effects of this teaching method on students’ learning. Moreover, the positive effects of a self-regulated teaching method on students’ performance in solving modelling problems and on affect shown in previous studies (Schukajlow et al., 2009; Schukajlow et al., 2012) were found to increase when multiple solutions were prompted. On the basis of these findings, we encourage teachers to test the teaching method examined in our study in their mathematics classrooms.

Notes

In the multiple-solution condition, the number of students who reported developing more than two solutions varied between 9 and 16 % for each of four problems presented to the class.

References

Achmetli, K., Schukajlow, S., & Krug, A. (2014). Effects of prompting students to use multiple solution methods while solving real-world problems on students’ self-regulation. In C. Nicol, S. Oesterle, P. Liljedahl, & D. Allan (Eds.), Proceedings of the joint meeting of PME 38 and PME-NA 36 (Vol. 2, pp. 1–8). Vancouver, Canada: PME.

Ball, D. L. (1993). With an eye on the mathematical horizon: Dilemmas of teaching elementary school mathematics. The Elementary School Journal, 93, 373–397.

Bandura, A. (2003). Self-efficacy: The exercise of control (6th printing). New York: Freeman.

Baumert, J., Kunter, M., Blum, W., Brunner, M., Dubberke, T., Jordan, A., et al. (2010). Teachers’ mathematical knowledge, cognitive activation in the classroom, and student progress. American Educational Research Journal, 47, 133–180.

Becker, J. P., & Shimada, S. (Eds.). (1997). The open-ended approach: A new proposal for teaching mathematics. Reston, VA: National Council of Teachers of Mathematics.

Blum, W. (2011). Can modelling be taught and learnt? Some answers from empirical research. In G. Kaiser, W. Blum, R. Borromeo Ferri, & G. Stillman (Eds.), Trends in the teaching and learning of mathematical modelling - Proceedings of ICTMA14 (pp. 15–30). New York: Springer.

Blum, W., Galbraith, P. L., Henn, H.-W., & Niss, M. (2007). Modelling and applications in mathematics education. The 14th ICMI study. New York: Springer.

Blum, W., & Leiss, D. (2007). How do students and teachers deal with mathematical modelling problems? The example sugarloaf and the DISUM project. In C. Haines, P. L. Galbraith, W. Blum, & S. Khan (Eds.), Mathematical modelling (ICTMA 12): Education, engineering and economics (pp. 222–231). Chichester: Horwood.

Boekaerts, M., & Corno, L. (2005). Self-regulation in the classroom: A perspective on assessment and intervention. Applied Psychology, 54(2), 199–231.

Bond, T. G., & Fox, C. M. (2001). Applying the Rasch model: Fundamental measurement in the human sciences. Mahwah, NJ: Lawrence Erlbaum.

Bong, M., & Skaalvik, E. M. (2003). Academic self-concept and self-efficacy: How different are they really? Educational Psychology Review, 15, 1–40.

Collins, A., Brown, J. S., & Newman, S. E. (1989). Cognitive apprenticeship: Teaching the crafts of reading, writing, and mathematics. In L. B. Resnick (Ed.), Knowing, learning and instruction: Essays in honor of Robert Glaser (pp. 453–492). Hillsdale, NJ: Erlbaum.

Deci, E. L., & Ryan, R. M. (2000). The “what” and “why” of goal pursuits: Human needs and the self-determination of behavior. Psychological Inquiry, 11(4), 227–268.

Dhombres, J. (1993). Is one proof enough? Travels with a mathematician of the baroque period. Educational Studies in Mathematics, 24(4), 401–419.

Dörnyei, Z. (2005). Teaching and researching motivation. Harlow: Longman.

Eccles, J. S., & Wigfield, A. (2002). Motivational beliefs, values, and goals. Annual Review of Psychology, 53, 109–132.

Galbraith, P. L., & Stillman, G. (2001). Assumptions and context: Pursuing their role in modelling activity. In J. Matos, W. Blum, K. Houston, & S. Carreira (Eds.), Modelling and mathematics education, ICTMA 9: Applications in science and technology (pp. 300–310). Chichester: Horwood Publishing.

Galbraith, P. L., & Stillman, G. (2006). A framework for identifying student blockages during transitions in the modelling process. ZDM The International Journal on Mathematics Education, 38(2), 143–162.

Goetz, T., Frenzel, A. C., Pekrun, R., Hall, N. C., & Lüdtke, O. (2007). Between- and within-domain relations of students’ academic emotions. Journal of Educational Psychology, 99, 715–733.

Große, C. S. (2014). Mathematics learning with multiple solution methods: Effects of types of solutions and learners’ activity. Instructional Science, 42, 1–31.

Große, C. S., & Renkl, A. (2006). Effects of multiple solution methods in mathematics learning. Learning and Instruction, 16(2), 122–138.

Guberman, R., & Leikin, R. (2013). Interesting and difficult mathematical problems: Changing teachers’ views by employing multiple-solution tasks. Journal of Mathematics Teacher Education, 16(1), 33–56.

Hannula, M. S. (2006). Motivation in mathematics: Goals reflected in emotions. Educational Studies in Mathematics, 63, 165–178.

Hänze, M., & Berger, R. (2007). Cooperative learning, motivational effects, and student characteristics: An experimental study comparing cooperative learning and direct instruction in 12th grade physics classes. Learning and Instruction, 17(1), 29–41.

Hattie, J. (2009). Visible learning: A synthesis of meta-analyses relating to achievement. London: Routledge.

Hattie, J., Biggs, J. B., & Purdie, N. (1996). Effects of learning skills interventions on student learning: A meta-analysis. Review of Educational Research, 66, 99–136.

Heinze, A., Reiss, K., & Rudolph, F. (2005). Mathematics achievement and interest in mathematics from a differential perspective. ZDM The International Journal on Mathematics Education, 37(3), 212–220.

Hiebert, J., Gallimore, R., Garnier, H., Givvin, K. B., Hollingsworth, H., Jacobs, J., et al. (2003). Teaching mathematics in seven countries. Results from the TIMSS 1999 video study. Washington, DC: NCES.

Hu, L., & Bentler, P. M. (1999). Cutoff criteria for fit indexes in covariance structure analysis: Conventional criteria versus new alternatives. Structural Equation Modeling, 6(1), 1–55.

Klavir, R., & Hershkovitz, S. (2008). Teaching and evaluating ‘open-ended’ problems. International Journal for Mathematics Teaching and Learning, 20(5), 23.

Kline, R. B. (2005). Principles and practice of structural equation modeling. New York, NY: Guilford Press.

Krapp, A. (2005). Basic needs and the development of interest and intrinsic motivational orientations. Learning and Instruction, 15, 381–395.

Kunter, M., Baumert, J., & Köller, O. (2007). Effective classroom management and the development of subject-related interest. Learning and Instruction, 17, 494–509.

Leikin, R., & Levav-Waynberg, A. (2007). Exploring mathematics teacher knowledge to explain the gap between theory-based recommendations and school practice in the use of connecting tasks. Educational Studies in Mathematics, 66(3), 349–371.

Leiss, D., Schukajlow, S., Blum, W., Messner, R., & Pekrun, R. (2010). The role of the situation model in mathematical modelling – task analyses, student competencies, and teacher interventions. Journal für Mathematikdidaktik, 31(1), 119–141.

Levav-Waynberg, A., & Leikin, R. (2012). The role of multiple solution tasks in developing knowledge and creativity in geometry. Journal of Mathematical Behavior, 31, 73–90.

Lin, C.-Y., Becker, J., Ko, Y.-Y., & Byun, M.-R. (2013). Enhancing pre-service teachers’ fraction knowledge through open approach instruction. Journal of Mathematical Behavior, 32(3), 309–330.

Maaß, K. (2010). Classification scheme for modelling tasks. Journal für Mathematikdidaktik, 31(2), 285–311.

Malmivuori, M.-L. (2006). Affect and self-regulation. Educational Studies in Mathematics, 63, 149–164.

Marcou, A., & Lerman, S. (2007). Changes in students’ motivational beliefs and performance in a self-regulated mathematical problem-solving environment. In D. Pitta-Pantazi, & G. Philippou (Eds.), Proceedings of the Fifth Congress of the European Society for Research in Mathematics Education (pp. 288–297). Larnaca, Cyprus: University of Cyprus.

McLeod, D. B. (1992). Research on affect in mathematics education: A reconceptualization. In D. A. Grouws (Ed.), Handbook of research on mathematics, teaching and learning (pp. 575–596). New York: Macmillan.

Meece, J. L., Wigfield, A., & Eccles, J. S. (1990). Predictors of math anxiety and its consequences for young adolescents’ course enrollment intentions and performances in mathematics. Journal of Educational Psychology, 82, 60–70.

Minnaert, A., Boekaerts, M., & Opdenakker, M.-C. (2008). The relationship between students’ interest development and their conceptions and perceptions of group work. Unterrichtswissenschaft, 36(3), 216–236.

Miserandino, M. (1996). Children who do well in school: Individual differences in perceived competence and autonomy in above-average children. Journal of Educational Psychology, 88, 203–214.

Muthén, L. K., & Muthén, B. O. (1998–2012). Mplus user’s guide (5th ed.). Los Angeles, CA: Muthén & Muthén.

Muthén, B. O., & Satorra, A. (1995). Complex sample data in structural equation modeling. Sociological Methodology, 25, 267–316.

National Council of Teachers of Mathematics. (2000). Principles and standards for school mathematics. Reston, VA: Author.

Neubrand, M. (2006). Multiple Lösungswege für Aufgaben: Bedeutung für Fach, Lernen, Unterricht und Leistungserfassung [Multiple solution methods for problems: Importance for content, learning, teaching and measurement of performance]. In W. Blum, C. Drüke-Noe, R. Hartung, & O. Köller (Eds.), Bildungsstandards Mathematik: konkret. Sekundarstufe I: Aufgabenbeispiele, Unterrichtsanregungen, Fortbildungsideen [Standards for school mathematics at the lower secondary level: Tasks, ideas for teaching and teacher training] (pp. 162–177). Berlin: Cornelsen.

Niss, M., Blum, W., & Galbraith, P. L. (2007). Introduction. In W. Blum, P. L. Galbraith, H.-W. Henn, & M. Niss (Eds.), Modelling and applications in mathematics education: The 14th ICMI study (pp. 1–32). New York: Springer.

OECD (2004). Learning for tomorrow’s world: First results from PISA 2003. Paris, France: OECD.

Panaoura, A., Gagatsis, A., & Demetriou, A. (2009). An intervention to the metacognitive performance: Self-regulation in mathematics and mathematical modeling. Acta Didactica Universitatis Comenianae Mathematics, 9, 63–79.

Pekrun, R., Goetz, T., Frenzel, A. C., Barchfeld, P., & Perry, R. P. (2011). Measuring emotions in students’ learning and performance: The Achievement Emotions Questionnaire (AEQ). Contemporary Educational Psychology, 36, 36–48.

Peugh, J. L., & Enders, C. K. (2004). Missing data in educational research: A review of reporting practices and suggestions for improvement. Review of Educational Research, 74(4), 525–556.

Pietsch, J., Walker, R., & Chapman, E. (2003). The relationship among self-concept, self-efficacy, and performance in mathematics during secondary school. Journal of Educational Psychology, 95(3), 589–603.

Pollak, H. (1979). The interaction between mathematics and other school subjects. In H.-G. Steiner & B. Christiansen (Eds.), New trends in mathematics teaching (Vol. IV, pp. 232–248). Paris, France: United Nations Educational Scientific and Cultural Organization.

Rakoczy, K., Harks, B., Klieme, E., Blum, W., & Hochweber, J. (2013). Written feedback in mathematics: Mediated by students’ perception, moderated by goal orientation. Learning and Instruction, 27, 63–73.

Reed, S. K., Stebick, S., Comey, B., & Carroll, D. (2012). Finding similarities and differences in the solutions of word problems. Journal of Educational Psychology, 104, 636–646.

Richland, L. E., Zur, O., & Holyoak, K. J. (2007). Cognitive supports for analogies in the mathematics classroom. Science, 316, 1128–1129.

Rittle-Johnson, B., & Star, J. R. (2007). Does comparing solution methods facilitate conceptual and procedural knowledge? An experimental study on learning to solve equations. Journal of Educational Psychology, 99(3), 561–574.

Rittle-Johnson, B., & Star, J. R. (2009). Compared with what? The effects of different comparisons on conceptual knowledge and procedural flexibility for equation solving. Journal of Educational Psychology, 101(3), 529–544.

Rittle-Johnson, B., Star, J. R., & Durkin, K. (2009). The importance of prior knowledge when comparing examples: Influences on conceptual and procedural knowledge of equation solving. Journal of Educational Psychology, 101(4), 836–852.

Rittle-Johnson, B., Star, J. R., & Durkin, K. (2012). Developing procedural flexibility: Are novices prepared to learn from comparing procedures? British Journal of Educational Psychology, 82(3), 436–455.

Ryan, R. M., & Deci, E. L. (2000). Self-determination theory and the facilitation of intrinsic motivation, social development, and well-being. American Psychologist, 55, 68–78.

Ryan, R. M., & Deci, E. L. (2009). Promoting self-determined school engagement: Motivation, learning, and well-being. In K. R. Wentzel & A. Wigfield (Eds.), Handbook on motivation at school (pp. 171–196). New York: Routledge.

Schukajlow, S., Blum, W., Messner, R., Pekrun, R., Leiss, D., & Müller, M. (2009). Unterrichtsformen, erlebte Selbständigkeit, Emotionen und Anstrengung als Prädiktoren von Schüler-Leistungen bei anspruchsvollen mathematischen Modellierungsaufgaben [Teaching methods, perceived self-regulation, emotions, and effort as predictors for students’ performance while solving mathematical modelling tasks]. Unterrichtswissenschaft, 37(2), 164–186.

Schukajlow, S., & Krug, A. (2012). Effects of treating multiple solutions on students’ self-regulation, self-efficacy and value. In T. Y. Tso (Ed.), Proceedings of the 36th Conference of the International Group for the Psychology of Mathematics Education (Vol. 4, pp. 59–66). Taipei, Taiwan: PME.

Schukajlow, S., & Krug, A. (2013a). Considering multiple solutions for modelling problems - design and first results from the MultiMa-Project. In G. Stillman, G. Kaiser, W. Blum, & J. Brown (Eds.), International Perspectives on the Teaching and Learning of Mathematical Modelling (ICTMA 15 Proceedings) (pp. 207–216). Heidelberg: Springer.

Schukajlow, S., & Krug, A. (2013b). Planning, monitoring and multiple solutions while solving modelling problems. In A. M. Lindmeier, & A. Heinze (Eds.), Proceedings of the 37th Conference of the International Group for the Psychology of Mathematics Education (Vol. 4, pp. 177–184). Kiel, Germany: PME.

Schukajlow, S., & Krug, A. (2013c). Uncertainty orientation, preferences for solving tasks with multiple solutions and modelling. In B. Ubuz, Ç. Haser, & M. A. Mariotti (Eds.), Proceedings of the Eighth Congress of the European Society for Research in Mathematics Education (pp. 1429–1438). Ankara, Turkey: Middle East Technical University.

Schukajlow, S., & Krug, A. (2014a). Are interest and enjoyment important for students’ performance? In C. Nicol, S. Oesterle, P. Liljedahl, & D. Allan (Eds.), Proceedings of the Joint Meeting of PME 38 and PME-NA 36 (Vol. 5, pp. 129–136). Vancouver, Canada: PME.

Schukajlow, S., & Krug, A. (2014b). Do multiple solutions matter? Prompting multiple solutions, interest, competence, and autonomy. Journal for Research in Mathematics Education, 45(4), 497–533.

Schukajlow, S., Leiss, D., Pekrun, R., Blum, W., Müller, M., & Messner, R. (2012). Teaching methods for modelling problems and students’ task-specific enjoyment, value, interest and self-efficacy expectations. Educational Studies in Mathematics, 79(2), 215–237.

Silver, E. A. (1995). The nature and use of open problems in mathematics education: Mathematical and pedagogical perspectives. ZDM The International Journal on Mathematics Education, 27(2), 67–72.

Silver, E. A., Ghousseini, H., Gosen, D., Charalambous, C. Y., & Font Strawhun, B. T. (2005). Moving from rhetoric to praxis: Issues faced by teachers in having students consider multiple solutions for problems in the mathematics classroom. Journal of Mathematical Behavior, 24(3–4), 287–301.

Slavin, R. E., Hurley, E. A., & Chamberlain, A. (2003). Cooperative learning and achievement: Theory and research. In W. M. Reynolds & G. E. Miller (Eds.), Handbook of psychology: Educational psychology (Vol. 7, pp. 177–198). New York: Wiley.