Abstract

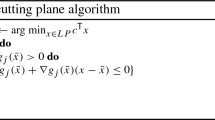

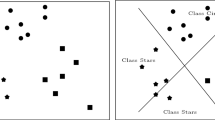

In this paper we study the relationship between separating hyperplanes and the Radon partitions of their support vectors. This study is relevant for maximal margin separations, which appear in Support Vector Machines (SVM), as well as for separations that optimize a Chebyshev norm. We propose a new version of the Stiefel exchange algorithm where we exploit the property that each Stiefel exchange is in fact a Radon exchange. Originally, the Stiefel exchange algorithm was developed to find Chebyshev approximations, but we show that it is also suited for finding hyperplane separations. We also show that many important properties in approximation theory are closely related to fundamental results in convex set theory, in particular to Helly’s, Radon’s and Caratheodory’s Theorem. Within this context, we prove a new result that generalizes both Radon’s and Caratheodory’s Theorem.

Article PDF

Similar content being viewed by others

Explore related subjects

Discover the latest articles, news and stories from top researchers in related subjects.Avoid common mistakes on your manuscript.

References

Bialon, P.: A linear support vector machine solver for a large number of training examples. Control Cybern. 38(1), 281–300 (2009)

Boser, B.E., Guyon, I., Vapnik, V.: A training algorithm for optimal margin classifiers. In: Haussler, D. (ed.) COLT, pp. 144–152. ACM (1992)

Burges, C.J.C.: A tutorial on support vector machines for pattern recognition. Data Min. Knowl. Discov. 2(2), 121–167 (1998)

Cadzow, J.A.: Minimum l 1, l 2, and l\(_{\infty }\) norm approximate solutions to an overdetermined system of linear equations. Digital Signal Process. 12 (4), 524–560 (2002)

Chapelle, O.: Training a support vector machine in the primal. Neural Comput. 19(5), 1155–1178 (2007)

Cheney, E.W.: Introduction to Approximation Theory. McGraw-Hill (1966)

Ewald, G.: Combinatorial Convexity and Algebraic Geometry. Springer (1996)

Gilbert, E.G., Johnson, D.W., Keerthi, S.S.: A fast procedure for computing the distance between complex objects in three-dimensional space. IEEE J Robot Autom 4, 193–203 (1988)

Goodman, J., Pollack, R.: Hadwiger’s transversal theorem in higher dimensions. J. Amer. Math. Soc. 1, 301–309 (1988)

Hawkins, D.M., Bradu, D., Kass, G.: Location of several outliers in multiple regression data using elemental sets. Technometrics 26, 197–208 (1984)

Joachims, T.: Advances in Kernel Methods - Support Vector Learning, chap. Making Large-Scale SVM Learning Practical, pp. 169–184. MIT Press (1999)

Joachims, T.: Training linear svms in linear time. In: Eliassi-Rad, T., Ungar, L.H., Craven, M., Gunopulos D. (eds.) KDD, pp. 217–226. ACM (2006)

Keerthi, S.S., Shevade, S.K., Bhattacharyya, C., Murthy, K.R.K.: A fast iterative nearest point algorithm for support vector machine classifier design. IEEE Trans Neural Netw 11, 124–136 (2000)

Keerthi, S.S., Chapelle, O., DeCoste, D.: Building support vector machines with reduced classifier complexity. J. Mach. Learn. Res. 7, 1493–1515 (2006)

Mehlhorn, K., Osbild, R., Sagraloff, M.: Reliable and efficient computational geometry via controlled perturbation. In: Bugliesi, M., Preneel, B., Sassone, V., Wegener, I. (eds.) ICALP (1), Lecture Notes in Computer Science, vol. 4051, pp. 299–310. Springer (2006)

Mitchell, B.F., Amd, V.N., Malozemov, V.F.D.: Finding the point of a polyhedron closest to the origin. SIAM J. Control 12, 19–26 (1974)

Musicant, D.R., Feinberg, A.: Active set support vector regression. IEEE Trans Neural Netw 15(2), 268–275 (2004)

Osborne, M.R., Watson, G.A.: On the best linear Chebyshev approximation. Comput. J. 10, 172–177 (1967)

Osuna, E., Freund, R., Girosi, F.: An improved training algorithm for support vector machines. In: Principe, J., Abd L.G., Morgan, N., Wilson, E. (eds.) Proceedings of the 1997 IEEE Workshop on Neural Networks for Signal Processing, pp. 276–285 (1997)

Platt, J.: Advaces in Kernel Methods: Support Vector Machines, chap. Fast Training of Support Vector Machines Using Sequential Minimal Optimization. MIT Press (1998)

Rousseeuw, P., Leroy, A.: Robust Regression and Outlier Detection. Wiley, New York (1987)

Stiefel, E.: über diskrete und lineare tschebyscheff-approximationen. Numerische Mathematik 1, 1–28 (1959)

Tsang, I.W., Kwok, J.T., Cheung, P.M.: Core vector machines: Fast SVM training on very large data sets. J. Mach. Learn. Res. 6, 363–392 (2005)

de la Vallée Poussin, C.J.: Sur la methode de l’ approximation minimum. Societe Scientifique de Bruxellles. Annales Memoires 35, 1–16 (1911)

Veelaert, P.: Constructive fitting and extraction of geometric primitives. Graph. Models Image Process. 59 (4), 233–251 (1997)

Veelaert, P.: Digital Geometry Algorithms, Lecture Notes in Computational Vision and Biomechanics, vol. 2, chap. Separability and Tight Enclosure of Point Sets, pp. 215–243. Springer (2012)

Veelaert, P.: Fast combinatorial algorithm for tightly separating hyperplanes. In: Barneva, R.P., Brimkov, V.E., Aggarwal, J.K. (eds.) IWCIA, Lecture Notes in Computer Science, vol. 7655, pp. 31–44. Springer (2012)

Watson, G.A.: Approximation in normed linear spaces. J. Comput. Appl. Math. 121, 1–36 (2000)

Ziegler, G.M.: Lectures on Polytopes. Graduate Texts in Mathematics, Vol. 152. Springer-Verlag, New York (1995)

Author information

Authors and Affiliations

Corresponding author

Rights and permissions

About this article

Cite this article

Veelaert, P. Combinatorial properties of support vectors of separating hyperplanes. Ann Math Artif Intell 75, 89–115 (2015). https://doi.org/10.1007/s10472-014-9430-x

Published:

Issue Date:

DOI: https://doi.org/10.1007/s10472-014-9430-x