Abstract

We present a spectral method for one-sided linear fractional integral equations on a closed interval that achieves exponentially fast convergence for a variety of equations, including ones with irrational order, multiple fractional orders, non-trivial variable coefficients, and initial-boundary conditions. The method uses an orthogonal basis that we refer to as Jacobi fractional polynomials, which are obtained from an appropriate change of variable in weighted classical Jacobi polynomials. New algorithms for building the matrices used to represent fractional integration operators are presented and compared. Even though these algorithms are unstable and require the use of high-precision computations, the spectral method nonetheless yields well-conditioned linear systems and is therefore stable and efficient. For time-fractional heat and wave equations, we show that our method (which is not sparse but uses an orthogonal basis) outperforms a sparse spectral method (which uses a basis that is not orthogonal) due to its superior stability.

Article PDF

Similar content being viewed by others

Avoid common mistakes on your manuscript.

Code availability

Julia code for all figures presented in this paper are available at https://github.com/putianyi889/JFP-demo

Notes

Recall that a multiplication in q-bit precision has \(\mathcal {O}(q\log q\log \log q)\) complexity with the Schönhage–Strassen algorithm.

References

Hilfer, R.: Applications of Fractional Calculus in Physics. World Scientific, Singapore (2000)

Povstenko, Y.: Linear Fractional Diffusion-Wave Equation for Scientists and Engineers. Springer, Berlin (2015)

Benson, D.A., Wheatcraft, S.W., Meerschaert, M.M.: Application of a fractional advection-dispersion equation. Water Resour. Res. 36(6), 1403–1412 (2000)

Bagley, R.L., Torvik, P.J.: Fractional calculus–a different approach to the analysis of viscoelastically damped structures. AIAA J. 21(5), 741–748 (1983)

Mostafanejad, M.: Fractional paradigms in quantum chemistry. Int. J. Quantum Chem. 121(20), 26762 (2021)

Magin, R.L.: Fractional calculus models of complex dynamics in biological tissues. Comput. Math. Appl. 59(5), 1586–1593 (2010)

Fallahgoul, H.A., Focardi, S.M., Fabozzi, F.J.: 2 - Fractional Calculus. Academic Press, New York (2017). https://doi.org/10.1016/B978-0-12-804248-9.50002-4, https://www.sciencedirect.com/science/article/pii/B9780128042489500024

Li, B., Xie, W.: Adaptive fractional differential approach and its application to medical image enhancement. Comput. Electr. Eng. 45, 324–335 (2015)

Treeby, B.E., Cox, B.T.: Modeling power law absorption and dispersion for acoustic propagation using the fractional Laplacian. J. Acoust. Soc. Am. 127(5), 2741–2748 (2010)

Cui, M.: Compact finite difference method for the fractional diffusion equation. J. Comput. Phys. 228(20), 7792–7804 (2009)

Ainsworth, M., Glusa, C.: Hybrid finite element–spectral method for the fractional Laplacian: Approximation theory and efficient solver. SIAM J. Sci. Comput. 40(4), 2383–2405 (2018)

Diethelm, K.: An algorithm for the numerical solution of differential equations of fractional order. Electron. Trans. Numer. Anal. 5(1), 1–6 (1997)

Kilbas, A.A., Marichev, O.I., Samko, S.G.: Fractional Integrals and Derivatives (Theory and Applications). Gordon and Breach, Switzerland (1993)

Olver, S., Slevinsky, R.M., Townsend, A.: Fast algorithms using orthogonal polynomials. Acta Numer. 29, 573–699 (2020)

Jiao, Y., Wang, L.L., Huang, C.: Well-conditioned fractional collocation methods using fractional Birkhoff interpolation basis. J. Comput. Phys. 305, 1–28 (2016)

Zayernouri, M., Karniadakis, G.E.: Fractional spectral collocation method. SIAM J. Sci. Comput. 36(1), 40–62 (2014)

Mao, Z., Shen, J.: Efficient spectral–Galerkin methods for fractional partial differential equations with variable coefficients. J. Comput. Phys. 307, 243–261 (2016)

Hale, N., Olver, S.: A fast and spectrally convergent algorithm for rational-order fractional integral and differential equations. SIAM J. Sci. Comput. 40 (4), 2456–2491 (2018)

Chen, S., Shen, J., Wang, L.L.: Generalized Jacobi functions and their applications to fractional differential equations. Math. Comput. 85(300), 1603–1638 (2016)

Olver, S., Townsend, A.: A fast and well-conditioned spectral method. SIAM Rev. 55(3), 462–489 (2013)

Bhrawy, A.H., Zaky, M.A.: Shifted fractional-order Jacobi orthogonal functions: application to a system of fractional differential equations. Appl. Math. Model. 40(2), 832–845 (2016)

Kazem, S., Abbasbandy, S., Kumar, S.: Fractional-order Legendre functions for solving fractional-order differential equations. Appl. Math. Model. 37(7), 5498–5510 (2013)

Bailey, D.H., Barrio, R., Borwein, J.M.: High-precision computation: mathematical physics and dynamics. Appl. Math. Comput. 218(20), 10106–10121 (2012)

Horn, R.A., Johnson, C.R.: Matrix Analysis. Cambridge University Press, Cambridge (2012)

Szegö, G.: Orthogonal Polynomials. American Mathematical Soc (1939)

Alfaro, M., de Morales, M.A., Rezola, M.L.: Orthogonality of the Jacobi polynomials with negative integer parameters. J. Comput. Appl. Math. 145(2), 379–386 (2002)

NIST Digital Library of Mathematical Functions. http://dlmf.nist.gov/, Release 1.1.0 of 2020-12-15. F. W. J. Olver, A. B. Olde Daalhuis, D. W. Lozier, B. I. Schneider, R. F. Boisvert, C. W. Clark, B. R. Miller, B. V. Saunders, H. S. Cohl, and M. A. McClain, eds. http://dlmf.nist.gov/

Fousse, L., Hanrot, G., Lefèvre, V., Pélissier, P., Zimmermann, P.: MPFR: A multiple-precision binary floating-point library with correct rounding. ACM Trans. Math. Softw. 33(2), 13 (2007). https://doi.org/10.1145/1236463.1236468

Gogovcheva, E., Boyadjiev, L.: Fractional extensions of Jacobi polynomials and Gauss hypergeometric function. Fract. Calc. Appl. Anal. 8(4), 431–438 (2005)

Gutleb, T.S., Carrillo, J.A., Olver, S.: Computing equilibrium measures with power law kernels. Math. Comput. 91(337), 2247–2281 (2022)

Gautschi, W.: The condition of polynomials in power form. Math. Comp. 33(145), 343–352 (1979)

Fürer, M.: Faster integer multiplication. SIAM J. Comput. 39 (3), 979–1005 (2009)

Harvey, D., Van Der Hoeven, J.: Integer multiplication in time o (n log n). Ann. Math. 193(2), 563–617 (2021)

Haubold, H.J., Mathai, A.M., Saxena, R.K.: Mittag–Leffler functions and their applications. J. Appl. Math. 2011 (2011)

Garrappa, R.: Numerical evaluation of two and three parameter Mittag–Leffler functions. SIAM J. Numer. Anal. 53(3), 1350–1369 (2015)

Gorenflo, R., Loutchko, J., Luchko, Y.: Computation of the Mittag–Leffler function eα, β (z) and its derivative. In: Fract. Calc. Appl Anal. Citeseer (2002)

Hilfer, R., Seybold, H.J.: Computation of the generalized Mittag–Leffler function and its inverse in the complex plane. Integral Transforms Spec. Funct. 17(9), 637–652 (2006)

Ortigueira, M.D., Lopes, A.M., Machado, J.T.: On the numerical computation of the Mittag–Leffler function. Int. J. Nonlinear Sci. Numer. Simul. 20 (6), 725–736 (2019)

Zhao, X., Wang, L.L., Xie, Z.: Sharp error bounds for Jacobi expansions and Gegenbauer–Gauss quadrature of analytic functions. SIAM J. Numer. Anal. 51(3), 1443–1469 (2013)

Trefethen, L.N.: Approximation Theory and Approximation Practice, Extended Edition. SIAM (2019)

Tricomi, F.G., Erdélyi, A.: The asymptotic expansion of a ratio of gamma functions. Pac. J. Math. 1(1), 133–142 (1951)

Gautschi, W.: The condition of orthogonal polynomials. Math. Comput. 26(120), 923–924 (1972)

Olver, S., Xu, Y.: Orthogonal structure on a quadratic curve. IMA J. Numer. Anal. 41(1), 206–246 (2021)

Fasondini, M., Olver, S., Xu, Y.: Orthogonal polynomials on planar cubic curves. Found. Comput. Math., 1–31 (2021)

Fasondini, M., Olver, S., Xu, Y.: Orthogonal polynomials on a class of planar algebraic curves. arXiv:2211.06999 (2022)

Adcock, B., Huybrechs, D.: Frames and numerical approximation. SIAM Rev. 61(3), 443–473 (2019)

Torvik, P.J., Bagley, R.L.: On the appearance of the fractional derivative in the behavior of real materials. J. Appl. Mech. 51, 294–298 (1984)

Birkisson, A., Driscoll, T.A.: Automatic Fréchet differentiation for the numerical solution of boundary-value problems. ACM Trans. Math. Softw. 38(4), 1–29 (2012)

Crespo, S., Fasondini, M., Klein, C., Stoilov, N., Vallée, C.: Multidomain spectral method for the gauss hypergeometric function. Numer. Algoritm. 84(1), 1–35 (2020)

Acknowledgements

We thank Sheehan Olver for inspiring our interest in spectral methods for FIEs and FDEs and for many useful suggestions.

Funding

The first author was supported by the Roth scholarship from the Department of Mathematics, Imperial College London. The second author was supported by the Leverhulme Trust Research Project Grant RPG-2019-144.

Author information

Authors and Affiliations

Corresponding author

Ethics declarations

Competing interests

The authors declare no competing interests.

Additional information

Communicated by: Bangti Jin

Publisher’s note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Appendix A. Pseudo-stabilisation

Appendix A. Pseudo-stabilisation

1.1 A.1 Introduction

Pseudo-stabilisation is a technique that uses high-precision computations to ensure that the output of an unstable algorithm has a specified accuracy. Pseudo-stabilisation is called thus because it makes an unstable algorithm appear stable by outputting accurate results. This technique is algorithm-specific and here we illustrate it for Algorithm 2 (see Section 5.2), which computes the entries of fractional integration matrices via the recurrence relation (28) (with \(A = I^{(\alpha ,\beta )}_{b,p,\mu }\)) in which errors grow exponentially. Since high-precision computations are more expensive than standard double precision, our aim is to use as low a precision as possible while ensuring the integration matrices are accurate to the specified tolerance. To choose this optimal precision, we need to know how fast errors grow in (28), examples of which are shown in Fig. 14. Since the exact values of the entries of \( I^{(\alpha ,\beta )}_{b,p,\mu }\) are not known, throughout this appendix, errors in a given precision are computed with reference to results computed in a much higher precision.

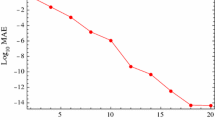

The errors from computing \(I^{(0,0)}_{0,2,1/2}\) using Algorithm 2. For the hybrid precision results, we computed the first p = 2 columns of \(I^{(0,0)}_{0,2,1/2}\) in double precision (using Algorithm 1), while the recurrence coefficients (i.e. the entries of B and C in (28)) were computed with 256-bit precision (see Table 2). In Fig. 14(b), the growth rate is the maximum error in a column divided by that in the previous column

1.2 A.2 Growth of errors

The error in the first p columns of \(I^{(\alpha ,\beta )}_{b,p,\mu }\) is determined by the precision used in Algorithm 1, while the growth rate of the error in subsequent columns depends on the recurrence coefficients (i.e. the entries of B and C in (28)) and hence also on the precision with which they are computed, as confirmed by Fig. 14. For recurrence coefficients computed in double precision, Fig. 14(b) shows that the errors grow roughly at a constant rate for the first circa 150 columns, after which the growth rate starts shooting up. The errors in higher (256-bit) precision grow at roughly a constant rate in Fig. 14(b); however, we shall find in Fig. 16 that if a sufficiently large number of columns are computed, the growth rate of the error also increases (for any precision). Due to the complicated behaviour of the growth rate of the error, we shall adopt an empirical approach to model and predict the growth of the error.

Let q be the precision and 𝜖 the corresponding machine epsilon, then 𝜖(q) = 21−q. We let e0 and E(q, n; e0) denote, respectively, the maximum errors in the initial p columns and in the n th column of \(I^{(\alpha ,\beta )}_{b,p,\mu }\), with n ≥ 0. Figure 14(a) shows examples of E(q, n; e0) for q = 53 and q = 256. We let E(q, n) = E(q, n; e0)/e0; hence, by definition, E(q, p − 1) = 1 and therefore we simulate the normalised maximum error E(q, n) by \(\widetilde {E}(q,n)\), which is defined as the maximum value in the n th column of A when one or more of the initial values in the recurrence (28) are set to 1 and the rest are set to zero. We have found that

holds, see Fig. 15 for an example. This implies that we can model the growth of errors with \(\widetilde {E}(q,n)\).

A plot of \(E(q,n;e_{0})/\widetilde {E}(q,n)\) in double precision (hence, q = 53 and e0 ≈ 10− 16) for the integration matrix \(I_{0,2,1/2}^{(0,0)}\), which illustrates the approximation (A1). \(\widetilde {E}(q,n)\) is not unique since there are multiple initial values which can be set to 0 or 1; the legend indicates the entry of A in (28) that is set to 1 to compute \(\widetilde {E}(q,n)\)

Next we consider the estimated growth rate of the error,

Figure 14(b) shows r(q, n) for q = 53, 256 and Fig. 16 shows r(q, n) for much larger n (up to 104). In Fig. 14(b), r(256,n) is approximately constant; however, in Fig. 16, it is seen that r(256,n) also increases in a step-like manner for large enough n. Figure 16(b) shows that r can be very noisy; however, the denoised or averaged version of r, which we denote by r0(q, n), takes the form of a superposition of step functions satisfying the scaling property (see Fig. 16(a) and (b))

Taking λ = 2 as an example, we have

where we used the fact that \(\widetilde {E}(q,0) = 1\). By (A1), this implies that E(2q, 2n) ≈ E2(q, n). In general, we use the approximation

which means that we can use a simulation on lower precision and fewer iterations to predict error propagation on higher precisions through a larger number of iterations. The approximation (A5) is exact for the simplest case in which r(q, n) and r(λq, λn) are constant and equal (e.g. for double precision with q = 53, n ≤ 150, see Fig. 14(b), and λ > 1). If we let e0(q) = 2−q, then for constant r, E(n, q; e0) = rn2−q and E(λn, λq; e0) = rλn2−λq = Eλ(n, q; e0).

Remark 6

In Fig. 16, the height of the steps of r0 for different q is the same but the lengths are proportional to q (hence the property (A3)). Except for the first step, which is the longest for every q, every step has the same length. We are surprised by the step-like behaviour of the growth rate. It seems to be caused by the errors in computing the integration matrix \(I_{b,p}^{(\alpha ,\beta )}\). We conjecture that if we could compute the integration matrix exactly, then the first step of r(q, n) would be infinitely long, i.e. the growth rate would be constant, which is what we would expect for a linear recurrence problem.

Remark 7

We have found that even though the approximations (A3) and (A5) are rather crude, especially when r is noisy, there is enough “noise cancellation” in the products of growth rates in (A4) (with r0 replaced by the actual growth rates r defined in (A2)) for the approximation (A5) to yield useful estimates.

1.3 A.3 Implementation

We now use the empirical estimate (A5) for the growth of errors to estimate the lowest precision required to meet a given tolerance. These estimates were used to compute the fractional integration matrices that appear in the problems in Section 6.

Suppose we aim to achieve a maximum error of at most δ in the computation of the first N + 1 columns of \(A = I^{(\alpha ,\beta )}_{b,p,\mu }\) using the recurrence (28). Setting e0 = 2−q and using (A2), we seek a q such that

Now the idea is to simulate E with \(\widetilde {E}\) on a chosen lower precision q0 and a smaller number of iterations m < N (which is to be determined) and use (A5) to estimate the smallest q satisfying (A6).

Using (A5) with λ = q/q0, we have \(E(q,N) \approx E(q_{0},Nq_{0}/q)^{q/q_{0}}\), hence, we require

Setting m = Nq0/q and since we aim to minimise q, we seek the largest m (say m∗) such that

Typical parameter choices are q0 = 53 and δ = 10− 16 which implies results in double precision, while N, the polynomial degree in the JFP basis required to resolve the solution to a given accuracy (which is typically the same as δ), is problem-dependent. To estimate m∗ in practice, we simulate E(q0,m) with \(\widetilde {E}(q_{0},m)\) and increase m until the first value of m (say m1) is found for which (A7) is not valid, then we set m∗ = m1 − 1 and set the precision to q = Nq0/m∗.

1.4 A.4 Computational complexity

Now we analyse the cost of the pseudo-stabilised Algorithm 2 with inputs p, N, and δ ≪ 1, where \(p \in \mathbb {N}_{+}\) satisfies \(\mu p = k_{*} \in \mathbb {N}_{+}\). Using (A7) and (A5), it follows that \(\log _{2} E(1,m/q_{0})<1\), so \(m=\mathcal {O}(q_{0})\) and since \(q=\frac {q_{0}}{m}N\), \(q=\mathcal {O}(N)\). The first N columns of \(I^{(\alpha ,\beta )}_{b,p,\mu }\) involve the computation of \(\mathcal {O}(N^{2})\) entries, each of which requiring up to 4p + 3 multiplications, leading to a total cost of \(\mathcal {O}(pN^{2}q\log q\log \log q)\) in q-bit precision.Footnote 1 Recalling that \(q=\mathcal {O}(N)\), we conclude that Algorithm 2 has an overall complexity of \(\mathcal {O}(pN^{3}\log N\log \log N)\).

Remark 8

Since the accuracy drops during the recurrence, the precision can be lowered gradually to reduce the complexity by a constant factor. However, that does not change the conclusions with big O notations.

Remark 9

There are other computational costs that are negligible compared to the main recurrence process:

-

There are divisions involving entries of the banded integration matrices which means that we can compute the inverse first with a cost of \(\mathcal {O}(pN^{2}\log N(\log \log N)^{2})\) using Newton–Raphson division.

-

The computation of \(\widetilde {E}(q_{0},m)\) has \(\mathcal {O}(p{q_{0}^{3}}\log q_{0}\log \log q_{0})\) complexity.

Remark 10

It is also possible to pseudo-stabilise Algorithm 1 (see Section 5) since the growth of errors follow similar patterns. However, the complexity of Algorithm 1 is higher, making the overall complexity of the pseudo-stabilised version \(\mathcal {O}(N^{4})\). We therefore only use Algorithm 1 if μ is irrational (recall that Algorithm 2 is only applicable for rational μ).

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made. The images or other third party material in this article are included in the article's Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article's Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Pu, T., Fasondini, M. The numerical solution of fractional integral equations via orthogonal polynomials in fractional powers. Adv Comput Math 49, 7 (2023). https://doi.org/10.1007/s10444-022-10009-9

Received:

Accepted:

Published:

DOI: https://doi.org/10.1007/s10444-022-10009-9

Keywords

- Fractional integral

- Spectral method

- Jacobi polynomials

- Riemann–Liouville

- Caputo

- Bagley–Torvik

- Mittag–Leffler

- High-precision