Abstract

We study multifrequency Hebbian plasticity by analyzing phenomenological models of weakly connected neural networks. We start with an analysis of a model for single-frequency networks previously shown to learn and memorize phase differences between component oscillators. We then study a model for gradient frequency neural networks (GrFNNs) which extends the single-frequency model by introducing frequency detuning and nonlinear coupling terms for multifrequency interactions. Our analysis focuses on models of two coupled oscillators and examines the dynamics of steady-state behaviors in multiple parameter regimes available to the models. We find that the model for two distinct frequencies shares essential dynamical properties with the single-frequency model and that Hebbian learning results in stronger connections for simple frequency ratios than for complex ratios. We then compare the analysis of the two-frequency model with numerical simulations of the GrFNN model and show that Hebbian plasticity in the latter is locally dominated by a nonlinear resonance captured by the two-frequency model.


1 Introduction

Hebbian learning is a widely accepted principle of synaptic plasticity which attributes strengthening of synaptic efficacies to correlated activation of pre- and post-synaptic neurons (Hebb 1949; Caporale and Dan 2008). While various mathematical formulations of the Hebb rule have been studied (Gerstner and Kistler 2002; Shouval 2007), here we investigate Hebbian plasticity in networks of neural oscillators (Maslennikov and Nekorkin 2017). Previous work showed that adaptive networks, in which the states of oscillatory elements (nodes) and the coupling weights between them (links) interact and co-evolve, exhibit collective dynamical effects, such as self-assembled multiclusters (Aoki and Aoyagi 2011), chimera states (Kasatkin et al. 2017), emergence of modular topology (Assenza et al. 2011) and transient switching dynamics (Maslennikov and Nekorkin 2015). However, previous models have mainly accounted for synchronization in a 1:1 frequency ratio, while multifrequency learning between distinct but resonant frequencies (e.g., harmonics and integer ratios) has not received much attention, despite its implications for auditory processing (Humphries et al. 2010) and cross-frequency coupling (Hyafil et al. 2015). In this paper, we study a model of a multifrequency adaptive network (Large et al. 2010; Large 2010), which is an extension of a model of single-frequency Hebbian learning (Hoppensteadt and Izhikevich 1996a, b).

Hoppensteadt and Izhikevich (1996a, 1997) derived a generic model for weakly connected neural networks near a multiple Andronov–Hopf bifurcation (Andronov et al. 1971; Guckenheimer and Holmes 1983) when all oscillators have equal or \(\epsilon \)-close natural frequencies,

where \(' = d/d\tau \), \(\tau = \epsilon t\) is ‘slow’ time, \(\epsilon > 0\) represents the strength of synaptic connections in the original weakly connected system, and z, b, d and c are complex numbers. They called it a canonical model which they defined to be a simple mathematical model that can be derived using normal form theory from a family of biophysically more detailed models that share certain dynamic properties (Hoppensteadt and Izhikevich 2001). For example, they showed that weakly connected Wilson–Cowan-type models (Wilson and Cowan 1972), when each of them is near an Andronov–Hopf bifurcation, can be transformed to the above canonical model via a continuous change of variables (Hoppensteadt and Izhikevich 1996a, Theorem 1).

Hoppensteadt and Izhikevich (1996b, 1997) showed that the canonical network can memorize the phase differences between oscillators \(z_i\) and \(z_j\) in the phase of complex-valued connection \(c_{ij}\) if \(c_{ij}\) evolves in time according to a Hebbian learning rule of the form,

where \(\gamma \) and k are positive real numbers. They demonstrated that a plastic network consisting of equal-frequency oscillators can serve as a model of associative memory and pattern recognition (Hoppensteadt and Izhikevich 2000).

Large et al. (2010) extended the single-frequency network of Hoppensteadt and Izhikevich into a gradient frequency neural network (GrFNN), a network of neural oscillators tuned to a range of distinct frequencies. A canonical model for GrFNNs consisting of oscillators poised near an Andronov–Hopf bifurcation or a Bautin bifurcation (Kuznetsov 2004) is given by

where z, a, b, d and c are complex numbers. Unlike the single-frequency model which describes only the interactions between oscillators tuned to identical frequencies, the GrFNN model includes a full expansion of higher-order terms to capture nonlinear resonances between distinct frequencies (e.g., harmonics and integer ratios).

Large (2010, 2011) proposed a Hebbian learning rule of the form,

which enables the GrFNN model to learn and remember the frequency and phase relationships in multi-frequency signals. It has been shown that the GrFNN model can predict and explain nonlinearities in auditory peripheral and neural processing (Lerud et al. 2014, 2019), the universal structural properties found in musical cultures (Large 2010, 2011; Large et al. 2016), and the perception and learning of musical patterns (Large 2011; Large et al. 2015; Kim 2017; Tichko and Large 2019).

In this paper, we study the dynamic properties of multifrequency Hebbian learning by analyzing the above canonical models, which are mathematically simple and tractable. Our analysis focuses on Hebbian learning in two coupled oscillators, which we use to examine numerical simulations of larger networks. We start with an analysis of the single-frequency model of Hoppensteadt and Izhikevich (Sects. 2.1, 2.2) since, to our knowledge, no detailed analysis of the model has been given before. We extend the single-frequency model by stabilizing it for the entire range of parameters (Sect. 2.3) and by introducing frequency detuning (Sect. 2.4). Next, we study multifrequency learning by analyzing a model for two distinct frequencies (Sect. 3.1) and by extending it to a gradient frequency network with arbitrary frequency relationships (Sect. 3.3). We also study frequency scaling for networks with logarithmically spaced frequencies (Sect. 3.2) and end with a discussion of the findings (Sect. 4).

2 Analysis of single-frequency learning

We first study Hoppensteadt and Izhikevich’s canonical model for single-frequency neural networks. As will be shown, the single-frequency model shares many dynamical properties with the multifrequency GrFNN model, but they also exhibit distinct behaviors. Here we analyze the simplest case of a single-frequency network, namely two coupled equal-frequency oscillators.

The dynamics of two weakly coupled equal-frequency oscillators, each near an Andronov–Hopf bifurcation (or simply, a Hopf bifurcation), is described by the canonical model (Hoppensteadt and Izhikevich 1996a, 1997),

where \(z_i \in {\mathbb {C}}\) represents the state of the ith oscillator, \(c_{ij} \in {\mathbb {C}}\) is the coupling coefficient from the jth to the ith oscillator, \(\alpha _i \in {\mathbb {R}}\) is the bifurcation parameter, \(\omega \in {\mathbb {R}}\) is the common natural frequency, and the roman i is the imaginary unit. Since our goal is the analysis of the canonical model, not its derivation using averaging theory, we use \(\dot{} = d/dt\) instead of \(' = d/d\tau \) for slow time \(\tau \). When uncoupled (i.e., \(c_{ij} = 0\)), the equations become the normal form of a Hopf bifurcation (Murdock 2003), which is also known as the Stuart–Landau equation (Stuart 1958). An autonomous oscillator exhibits spontaneous limit-cycle oscillation if \(\alpha > 0\) or an equilibrium at zero if \(\alpha \le 0\).

The complex-valued Hebbian learning rule (Hoppensteadt and Izhikevich 1996b, 1997),

allows the connection \(c_{ij}\) to learn and memorize the phase difference between the oscillators \(z_i\) and \(z_j\), where \(\gamma > 0\) is the decay rate, and \(\kappa _{ij} > 0\) is the learning rate. For simplicity of analysis, we assume \(\alpha _1 = \alpha _2 = \alpha \) and \(\kappa _{12} = \kappa _{21} = \kappa \), and write

where \(i,j = 1,2\), which is a shorthand for \((i,j) = (1,2)\) for the equations for \(z_1\) and \(c_{12}\), and \((i,j) = (2,1)\) for the equations for \(z_2\) and \(c_{21}\).

2.1 Neutral stability of connection phase

Let us transform the system to polar coordinates using \(z_i = r_i e^{\text {i}\phi _i}\) and \(c_{ij} = A_{ij} e^{\text {i}\theta _{ij}}\),

where \(i,j = 1,2\). Since the angular variables appear only as \(\theta _{ij} - \phi _i + \phi _j\), we define \(\psi _{ij} = \theta _{ij} - \phi _i + \phi _j\) and call them system phases. This turns the above eight-dimensional system into a six-dimensional one,

where \(i,j = 1,2\).
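As a worked sketch of this reduction, the block below assumes a Cartesian form of the model with unit cubic damping (suggested by the intrinsic term \(-r^3\) mentioned in Sect. 2.3) and the learning rule with decay rate \(\gamma \) and learning rate \(\kappa \) described above. It is a reconstruction from the surrounding definitions, so the layout and numbering of the authors' Eqs. (3) to (5) may differ. Substituting the polar forms and separating real and imaginary parts gives:

```latex
% Assumed Cartesian form (reconstruction, not quoted from the source)
\begin{align*}
\dot{z}_i &= z_i\left(\alpha + \mathrm{i}\omega - |z_i|^2\right) + c_{ij} z_j, \\
\dot{c}_{ij} &= -\gamma c_{ij} + \kappa z_i \bar{z}_j .
\end{align*}
% Polar form, eight variables (r_i, \phi_i, A_{ij}, \theta_{ij})
\begin{align*}
\dot{r}_i &= \alpha r_i - r_i^3 + A_{ij} r_j \cos(\theta_{ij} - \phi_i + \phi_j), \\
\dot{\phi}_i &= \omega + \frac{A_{ij} r_j}{r_i} \sin(\theta_{ij} - \phi_i + \phi_j), \\
\dot{A}_{ij} &= -\gamma A_{ij} + \kappa r_i r_j \cos(\theta_{ij} - \phi_i + \phi_j), \\
\dot{\theta}_{ij} &= -\frac{\kappa r_i r_j}{A_{ij}} \sin(\theta_{ij} - \phi_i + \phi_j).
\end{align*}
% Reduced form, six variables (r_i, A_{ij}, \psi_{ij}), using
% \dot{\psi}_{ij} = \dot{\theta}_{ij} - \dot{\phi}_i + \dot{\phi}_j
\begin{align*}
\dot{r}_i &= \alpha r_i - r_i^3 + A_{ij} r_j \cos\psi_{ij}, \\
\dot{A}_{ij} &= -\gamma A_{ij} + \kappa r_i r_j \cos\psi_{ij}, \\
\dot{\psi}_{ij} &= -\left(\frac{\kappa r_i r_j}{A_{ij}} + \frac{A_{ij} r_j}{r_i}\right)\sin\psi_{ij}
                  + \frac{A_{ji} r_i}{r_j}\sin\psi_{ji}.
\end{align*}
```

In this form the common frequency \(\omega \) cancels in \({\dot{\psi }}_{ij}\), and every term of \({\dot{\psi }}_{ij}\) and \({\dot{\theta }}_{ij}\) carries a factor \(\sin \psi _{12}\) or \(\sin \psi _{21}\), so both vanish when \(\psi _{12} = \psi _{21} = 0\).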

Equation (5) indicates that \({\dot{\psi }}_{ij} = 0\) when the system is in a steady state. As will be shown below, an obvious solution is \(\psi _{12}^* = \psi _{21}^* = 0\) (the asterisk denotes a steady-state solution), in which case \({\dot{\theta }}_{ij} = 0\) and \(\theta _{12} = -\theta _{21} = \phi _1 - \phi _2\) (see Eq. 4 and the definition of \(\psi _{ij}\) above). The solution only requires that connection phases \(\theta _{12}\) and \(\theta _{21}\) match the relative phase of the oscillators \(\pm (\phi _1 - \phi _2)\), without specifying steady-state values of the connection phases or the relative phase (or phase difference).

Fig. 1 Numerical simulations of coupled equal-frequency oscillators (3) for five different initial conditions. Parameters used: \(\alpha = 1\), \(\gamma = 1\), \(\kappa = 0.5\), and \(\omega = 1\)

Figure 1 shows numerical simulations of the system starting from five different randomly generated initial conditions. Connection amplitudes \(A_{ij}\) and system phases \(\psi _{ij}\) converge to identical steady-state values across simulations, indicating the presence of an attractor. Connection phases \(\theta _{ij}\), on the other hand, converge to different values, but the steady state of each simulation satisfies the aforementioned condition \(\theta _{12} = -\theta _{21} = \phi _1 - \phi _2\). This suggests that plastic connection phases are neutrally stable, that is, they converge to a value which is not attracting (Strogatz 1994). A simulation of perturbation confirms this (Fig. 2). When the system is perturbed, \(\psi _{ij}\) are pulled back to the attractor at zero while \(\theta _{ij}\) converge to new values (which are again symmetric to each other), instead of being attracted back to the previous steady-state values (Footnote 1).
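The behavior described above can be reproduced with a short simulation. The following is a minimal sketch, not the authors' code: it assumes the Cartesian form \({\dot{z}}_i = z_i(\alpha + \text {i}\omega - |z_i|^2) + c_{ij} z_j\), \({\dot{c}}_{ij} = -\gamma c_{ij} + \kappa z_i {\bar{z}}_j\) (a reconstruction from the definitions in this section), uses a hand-written fourth-order Runge–Kutta integrator, and replaces the random initial conditions with two fixed, hypothetical ones. The function names are illustrative.

```python
import cmath
import math

# Two fixed, hypothetical initial conditions (z1, z2, c12, c21).
INITIAL_CONDITIONS = [
    (0.3 + 0.1j, -0.2 + 0.4j, 0.1 - 0.2j, 0.05 + 0.3j),
    (-0.5 + 0.2j, 0.1 - 0.6j, 0.0 + 0.3j, -0.2 + 0.1j),
]


def rhs(state, alpha, omega, gamma, kappa):
    """Right-hand side of the assumed single-frequency model with Hebbian learning."""
    z1, z2, c12, c21 = state
    dz1 = z1 * (alpha + 1j * omega - abs(z1) ** 2) + c12 * z2
    dz2 = z2 * (alpha + 1j * omega - abs(z2) ** 2) + c21 * z1
    dc12 = -gamma * c12 + kappa * z1 * z2.conjugate()
    dc21 = -gamma * c21 + kappa * z2 * z1.conjugate()
    return (dz1, dz2, dc12, dc21)


def rk4_step(state, dt, params):
    """One classical Runge-Kutta step on a tuple of complex variables."""
    def shifted(k, h):
        return tuple(s + h * ki for s, ki in zip(state, k))

    k1 = rhs(state, *params)
    k2 = rhs(shifted(k1, dt / 2), *params)
    k3 = rhs(shifted(k2, dt / 2), *params)
    k4 = rhs(shifted(k3, dt), *params)
    return tuple(s + dt / 6 * (a + 2 * b + 2 * c + d)
                 for s, a, b, c, d in zip(state, k1, k2, k3, k4))


def simulate(state, t_end=100.0, dt=0.01,
             alpha=1.0, omega=1.0, gamma=1.0, kappa=0.5):
    """Integrate from `state` to t_end with the parameters listed for Fig. 1."""
    params = (alpha, omega, gamma, kappa)
    for _ in range(int(round(t_end / dt))):
        state = rk4_step(state, dt, params)
    return state


def polar_summary(state):
    """Amplitudes, connection phases, and system phases psi_ij = theta_ij - phi_i + phi_j."""
    z1, z2, c12, c21 = state
    return {
        "r1": abs(z1), "r2": abs(z2),
        "A12": abs(c12), "A21": abs(c21),
        "theta12": cmath.phase(c12), "theta21": cmath.phase(c21),
        "psi12": cmath.phase(c12 * z1.conjugate() * z2),
        "psi21": cmath.phase(c21 * z2.conjugate() * z1),
    }


if __name__ == "__main__":
    for ic in INITIAL_CONDITIONS:
        print(polar_summary(simulate(ic)))
```

Under the assumed model, the amplitudes and system phases should agree between runs while `theta12` and `theta21` generally differ from run to run, which is the signature of neutral stability discussed in the text.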

The neutral stability of connection phases makes sense given that the learning rule (2) allows plastic connections to memorize the phase difference between the oscillators they connect (Hoppensteadt and Izhikevich 1996b, 1997). When the oscillators are not forced to have certain phase differences, as is the case for (3), the connection phases can have arbitrary steady-state values because the oscillators are free to have arbitrary phase differences (Fig. 1). However, when certain phase differences are forced on the oscillators, for instance, by external forcing, the connection phases are not neutrally stable but are attracted to the forced phase differences. Figure 3 shows that when the oscillators are forced to have the phase difference \(\phi _1 - \phi _2 = \frac{\pi }{2}\), connection phases \(\theta _{12}\) and \(\theta _{21}\) are attracted to \(\frac{\pi }{2}\) and \(-\frac{\pi }{2}\), respectively.

Fig. 3 Externally forced and mutually coupled equal-frequency oscillators simulated with five different initial conditions. In addition to the coupling terms in (3), each oscillator \(z_i\) is driven by external forcing \(F_i e^{\text {i}(\omega t + \vartheta _i)}\) where \(F_1 = F_2 = 2\), \(\vartheta _1 = \frac{\pi }{2}\) and \(\vartheta _2 = 0\). The initial conditions and other parameter values are identical to those used for Fig. 1

2.2 Stability analysis

We study the single-frequency model (3) further by examining the existence and stability of steady-state solutions. Below we discuss zero, asymmetric, and symmetric solutions.

Stability of zero and asymmetric solutions. First, it is obvious that \(z_i = c_{ij} = 0\) is a solution of (3) regardless of parameter values. We find that the zero solution is stable for \(\alpha < 0\) and unstable for \(\alpha > 0\), which can be shown by examining the signs of \({\dot{r}}_i\) and \({\dot{A}}_{ij}\) for small perturbations from zero (\(\psi _{ij}\) is not defined at zero). Thus, zero is stable or attracting when autonomous (uncoupled) oscillators have an equilibrium at zero (\(\alpha < 0\)), and it is unstable or repelling when they exhibit spontaneous oscillations (\(\alpha > 0\)). For \(\alpha = 0\), zero is stable if \(\gamma > \kappa \) (strong forgetting), unstable if \(\gamma < \kappa \) (strong learning), and neutrally stable if \(\gamma = \kappa \) (along with infinitely many other solutions, see below). We also find that asymmetric solutions do not exist for (5). From the steady-state equations, we can show \(A_{12}^* = A_{21}^*\), which leads to \(r_1^* = r_2^*\).

Nonzero symmetric solution. When the oscillators and the learning rules have identical parameters as we assume here, it is likely that the system has symmetric solutions with \(r_1^* = r_2^*\) and \(A_{12}^* = A_{21}^*\). Examining (5), we find that the symmetric plane \(r_1 = r_2\), \(A_{12} = A_{21}\), \(\psi _{12} = -\psi _{21}\) is an invariant manifold because \({\dot{r}}_1 = {\dot{r}}_2\), \({\dot{A}}_{12} = {\dot{A}}_{21}\), and \({\dot{\psi }}_{12} = -{\dot{\psi }}_{21}\) at any point on the plane. Once the system is on the symmetric plane, it remains there indefinitely.

To examine the dynamics on the symmetric plane, we substitute \(r_i = r\), \(A_{ij} = A\) and \(\psi _{12} = -\psi _{21} = \psi \) in (5) and get

We obtain a nonzero symmetric solution by solving steady-state equations \({\dot{r}} = 0\), \({\dot{A}} = 0\), and \({\dot{\psi }} = 0\),

Since r and A are positive real numbers, the solution exists if \(\alpha > 0\) and \(\gamma > \kappa \), or if \(\alpha < 0\) and \(\gamma < \kappa \). In either case, both \(r^*\) and \(A^*\) diverge at \(\gamma = \kappa \) (Fig. 4). When \(\alpha = 0\), no nonzero solution exists unless \(\gamma = \kappa \), for which infinitely many solutions satisfying \(r^{*2} = A^*\) exist (including the zero solution as discussed above). In the parameter regimes without nonzero solutions, the system either decays to zero or diverges to infinity depending on the stability of zero (see Table 1).
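As a worked check of these existence conditions, the sketch below assumes that the symmetric-plane equations have unit cubic damping and the input terms \(A r \cos \psi \) and \(\kappa r^2 \cos \psi \). It is a reconstruction from the surrounding definitions rather than a quotation of Eqs. (6) and (7).

```latex
% Assumed symmetric-plane dynamics (r_i = r, A_{ij} = A, \psi_{12} = -\psi_{21} = \psi)
\begin{align*}
\dot{r} &= \alpha r - r^3 + A r \cos\psi, \\
\dot{A} &= -\gamma A + \kappa r^2 \cos\psi, \\
\dot{\psi} &= -\left(\frac{\kappa r^2}{A} + 2A\right)\sin\psi .
\end{align*}
% For r, A > 0 the bracket is positive, so \dot{\psi} = 0 requires \sin\psi^* = 0.
% \psi^* = \pi would give A^* = -\kappa r^{*2}/\gamma < 0 from \dot{A} = 0, leaving \psi^* = 0.
\begin{align*}
A^* = \frac{\kappa}{\gamma}\, r^{*2}, \qquad
\alpha - r^{*2} + A^* = 0
\quad\Longrightarrow\quad
r^{*2} = \frac{\alpha\gamma}{\gamma - \kappa}, \qquad
A^* = \frac{\alpha\kappa}{\gamma - \kappa}, \qquad
\psi^* = 0 .
\end{align*}
```

Both expressions are positive only when \(\alpha \) and \(\gamma - \kappa \) have the same sign, and both diverge as \(\kappa \rightarrow \gamma \). For \(\alpha = 0\) and \(\gamma = \kappa \), the two steady-state conditions collapse to the single relation \(r^{*2} = A^*\), in line with the cases listed above.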

Fig. 4 Symmetric steady-state oscillator amplitude \(r^*\) as a function of learning rate \(\kappa \) for the original single-frequency model (3), two stabilized models (8) and (9), and the fully expanded model (15). Parameters used: \(\alpha = 0.1\), \(\gamma = -\lambda = 1\), \(\beta = \beta _1 = \beta _2 = -1\), and \(k = m = 1\)

We can determine the linear stability of the nonzero symmetric solution (7) by evaluating the Jacobian matrix at the solution (Arnold 1978; Strogatz 1994). By solving the characteristic equation, we obtain the following eigenvalues of the Jacobian matrix,

Thus, if \(\gamma > \kappa \) (and \(\alpha > 0\)), all three eigenvalues are negative, indicating the solution is a stable node (note that \(A_{ij}\) and \(\psi _{ij}\) approach their fixed points monotonically in Fig. 1). In this case, the nonzero solution is the only stable solution because zero is not stable. If \(\gamma < \kappa \) (and \(\alpha < 0\)), the Jacobian matrix has two negative eigenvalues and one positive eigenvalue, indicating the solution is a saddle point. The two-dimensional stable manifold of this saddle point serves as a separatrix between the stable zero and the divergence to infinity.
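The sign pattern can be checked with a short calculation. The sketch below assumes the symmetric-plane equations \({\dot{r}} = \alpha r - r^3 + A r \cos \psi \), \({\dot{A}} = -\gamma A + \kappa r^2 \cos \psi \), \({\dot{\psi }} = -(\kappa r^2/A + 2A)\sin \psi \), which are inferred from the surrounding text, so the expressions may differ in form from the eigenvalues given by the authors.

```latex
% Jacobian at (r^*, A^*, \psi^* = 0), using \alpha + A^* = r^{*2} and \kappa r^{*2}/A^* = \gamma
\[
J = \begin{pmatrix}
-2 r^{*2} & r^* & 0 \\
2\kappa r^* & -\gamma & 0 \\
0 & 0 & -(\gamma + 2A^*)
\end{pmatrix}
\]
\begin{align*}
\lambda_{1,2} &= \frac{-(2r^{*2} + \gamma) \pm \sqrt{(2r^{*2} + \gamma)^2 - 8\alpha\gamma}}{2},
\qquad
\lambda_3 = -(\gamma + 2A^*), \\
\lambda_1 \lambda_2 &= 2 r^{*2}(\gamma - \kappa) = 2\alpha\gamma,
\qquad
\lambda_1 + \lambda_2 = -(2r^{*2} + \gamma) < 0, \\
(2r^{*2} + \gamma)^2 - 8\alpha\gamma &= (2r^{*2} - \gamma)^2 + 8\kappa r^{*2} > 0 .
\end{align*}
```

The discriminant is positive, so \(\lambda _{1,2}\) are real. For \(\alpha > 0\) their product is positive and their sum negative, so both are negative. For \(\alpha < 0\) their product is negative, so exactly one is positive. \(\lambda _3\) is negative in both cases.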

Table 1 summarizes the steady states of the original single-frequency model for different regimes of parameters \(\alpha \), \(\gamma \) and \(\kappa \). In many parameter regimes, the original model does not have a stable steady-state solution but diverges to infinity unless it is precisely at zero. Below we discuss ways to stabilize the model in all its parameter regimes, and we extend it further by introducing frequency detuning.

2.3 Stabilization of learning dynamics

The nonzero steady-state solution of the single-frequency model, given in (7), diverges at \(\gamma = \kappa \) (see Fig. 4) because the input term \(A r \cos \psi \) in the oscillator amplitude equation in (6) is, with \(A^*\) growing linearly with \(r^{*2}\), effectively of the same order in r as the highest-order intrinsic term \(-r^3\). We can prevent the system from diverging by adding higher-order stabilizing terms in the oscillator equations and/or the learning equations. Let us first consider adding a quintic term to the oscillator equations (and making the cubic coefficient \(\beta \)),

where \(i,j = 1,2\) (Footnote 2). Now, the oscillators can be near a double limit cycle bifurcation when \(\beta > 0\) (also known as saddle-node or fold bifurcation of periodic orbits; Arnold 1988; Kuznetsov 2004). In the interest of space, however, here we limit our analysis to the parameter regimes around a Hopf bifurcation by restricting \(\beta < 0\).

By transforming the system to polar coordinates and solving symmetric steady-state equations, we get

Thus, unlike the original model (3), the stabilized model (8) does not have a singularity (Fig. 4). For \(\alpha > 0\) (the supercritical regime of a Hopf bifurcation), one positive real \(r^*\) always exists, and a linear stability analysis indicates that it is a stable node (see Fig. 5 where \(\varOmega = 0\)). For \(\alpha = 0\) (the critical point), one positive \(r^*\) exists if \(\frac{\kappa }{\gamma } > -\beta \) (none otherwise, see Fig. 6). For \(\alpha < 0\) (the subcritical regime), two positive solutions exist if \(\frac{\kappa }{\gamma } \ge - \beta + 2\sqrt{-\alpha }\) (they coincide at equality), which are a stable node and a saddle point (Fig. 7). (The stability analysis shown in Figs. 5, 6 and 7 will be discussed more fully in the next section.)
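These existence conditions can be recovered from a quadratic in \(r^{*2}\). The sketch below assumes that the stabilized oscillator equation has the form \({\dot{z}}_i = z_i(\alpha + \text {i}\omega + \beta |z_i|^2 - |z_i|^4) + c_{ij} z_j\) with unit quintic damping. This form is an assumption, chosen because it reproduces the thresholds stated above, and the authors' expression may be written differently.

```latex
% Symmetric steady state of the assumed stabilized model (\psi^* = 0)
\begin{align*}
0 &= \alpha + \beta r^{*2} - r^{*4} + A^*, \qquad
A^* = \frac{\kappa}{\gamma}\, r^{*2}, \\
r^{*2} &= \frac{1}{2}\left[\left(\beta + \frac{\kappa}{\gamma}\right)
 \pm \sqrt{\left(\beta + \frac{\kappa}{\gamma}\right)^{2} + 4\alpha}\,\right].
\end{align*}
% \alpha > 0: the square root exceeds |\beta + \kappa/\gamma|, so exactly one root is positive.
% \alpha = 0: r^{*2} = \beta + \kappa/\gamma, which is positive iff \kappa/\gamma > -\beta.
% \alpha < 0: two positive roots require \beta + \kappa/\gamma > 0 and
%             (\beta + \kappa/\gamma)^2 \ge -4\alpha, i.e. \kappa/\gamma \ge -\beta + 2\sqrt{-\alpha}.
```

Unlike \(r^{*2} = \alpha \gamma /(\gamma - \kappa )\) for the original model, this expression stays finite at \(\gamma = \kappa \).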

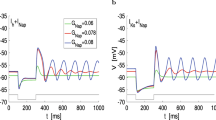

Fig. 5 Nonzero symmetric steady-state solutions of the stabilized single-frequency model with frequency detuning (10) in the supercritical Hopf regime (\(\alpha = 0.1\), \(\beta = -1\), \(\gamma = 1\)). a The stability type of stable solutions in the \((\varOmega ,\kappa )\) parameter space. b Stability type and steady-state values for \(\kappa = 1.5\) and c for \(\kappa = 2.5\). The horizontal dashed lines in the plots of \(r^*\) indicate the spontaneous amplitude of the oscillators (i.e., the steady-state amplitude of an uncoupled oscillator). The diagonal dashed lines in the plots of \({\dot{\theta }}_{12}^*\) indicate frequency detuning \(\varOmega \)

Fig. 6 Nonzero symmetric steady-state solutions of the stabilized single-frequency model (10) at the critical point of a Hopf bifurcation (\(\alpha = 0\), \(\beta = -1\), \(\gamma = 1\)). a The stability type of stable solutions. b Stability type and steady-state values for \(\kappa = 1.3\) and c for \(\kappa = 2.5\). A nonzero solution exists for \(\varOmega = 0\) if \(\kappa > \kappa _0 = -\beta \gamma \). See the text for details

Fig. 7 Nonzero symmetric steady-state solutions of the stabilized single-frequency model (10) in the subcritical Hopf regime (\(\alpha = -0.1\), \(\beta = -1\), \(\gamma = 1\)). a The stability type of stable solutions. b Stability type and steady-state values for \(\kappa = 2.5\). \(\kappa _0 = \gamma (-\beta + 2\sqrt{-\alpha })\) is the smallest \(\kappa \) for which a nonzero solution exists at \(\varOmega = 0\)

Alternatively, we can add a cubic damping term in the learning rule to stabilize the system for all ranges of learning parameters,

where we denote the linear coefficient in the learning rule by \(\lambda \in {\mathbb {R}}\) instead of \(-\gamma \) to emphasize that it can now be positive or zero, given the stabilizing cubic term added to the equation. Thus, the addition of a cubic term not only stabilizes the system (Fig. 4) but also introduces new parameter regimes to the learning rule. In this paper, we limit our analysis to the learning rule with only a linear damping term \(-\gamma c_{ij}\) and study the first stabilized model (8) further below. An analysis of nonlinear learning regimes will be given elsewhere (Footnote 3).

2.4 Effects of frequency detuning

We extend the stabilized single-frequency model (8) by introducing frequency detuning (i.e., \(\omega _1 \ne \omega _2\)),

where \(i,j = 1,2\). As before, we convert the system to polar coordinates using \(z_i = r_i e^{\text {i}\phi _i}\), \(c_{ij} = A_{ij} e^{\text {i}\theta _{ij}}\), define \(\psi _{ij} = \theta _{ij} - \phi _i + \phi _j\), and get

where \(\varOmega _{ij} = \omega _i - \omega _j\) is frequency detuning, and \(i,j = 1,2\).

Stability analysis. As with the original model (5), the symmetric plane \(r_1 = r_2\), \(A_{12} = A_{21}\), \(\psi _{12} = -\psi _{21}\) is an invariant manifold. Here we focus on the dynamics on the symmetric plane (numerical simulations suggest that no stable asymmetric solution exists). Substituting \(r_i = r\), \(A_{ij} = A\) and \(\psi _{12} = -\psi _{21} = \psi \), we get

where \(\varOmega = \omega _1 - \omega _2\). Combining steady-state equations and using \(\sin ^2\psi ^* + \cos ^2\psi ^* = 1\), we obtain a sixth-order equation for \(r^*\) (not shown due to its length), which we solve by numerical root finding. We determine the linear stability of each obtained nonzero solution \((r^*, A^*, \psi ^*)\) by evaluating the Jacobian matrix at the solution.
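The elimination and root finding described above can be sketched in a few lines. The block below is a hedged illustration, not the authors' procedure: it assumes the symmetric-plane equations \({\dot{r}} = \alpha r + \beta r^3 - r^5 + A r\cos \psi \), \({\dot{A}} = -\gamma A + \kappa r^2\cos \psi \), \({\dot{\psi }} = -\varOmega - (\kappa r^2/A + 2A)\sin \psi \) (a reconstruction from the surrounding text), eliminates \(A^*\) and \(\psi ^*\) to obtain a single polynomial condition on \(X = r^{*2}\), and locates its roots by a grid scan followed by bisection. The function names, the search bound `x_max`, and the grid size are illustrative choices, and the stability step is omitted.

```python
import math


def symmetric_rhs(r, A, psi, alpha, beta, gamma, kappa, Omega):
    """Assumed symmetric-plane equations with frequency detuning (reconstruction)."""
    dr = alpha * r + beta * r**3 - r**5 + A * r * math.cos(psi)
    dA = -gamma * A + kappa * r * r * math.cos(psi)
    dpsi = -Omega - (kappa * r * r / A + 2.0 * A) * math.sin(psi)
    return dr, dA, dpsi


def residual(X, alpha, beta, gamma, kappa, Omega):
    """Condition on X = r*^2 obtained from sin^2(psi*) + cos^2(psi*) = 1."""
    P = X * X - beta * X - alpha      # P = A* cos(psi*) = gamma A*^2 / (kappa X)
    s = gamma + 2.0 * P
    return gamma * P * (Omega**2 + s * s) - kappa * X * s * s


def bisect(f, lo, hi, iters=200):
    """Plain bisection; assumes f(lo) and f(hi) have opposite signs."""
    flo = f(lo)
    for _ in range(iters):
        mid = 0.5 * (lo + hi)
        fmid = f(mid)
        if (flo < 0) == (fmid < 0):
            lo, flo = mid, fmid
        else:
            hi = mid
    return 0.5 * (lo + hi)


def symmetric_solutions(alpha, beta, gamma, kappa, Omega,
                        x_max=10.0, n_grid=20000):
    """Return a list of nonzero symmetric steady states (r*, A*, psi*)."""
    def f(X):
        return residual(X, alpha, beta, gamma, kappa, Omega)

    solutions = []
    step = x_max / n_grid
    for i in range(1, n_grid):
        lo, hi = i * step, (i + 1) * step
        if f(lo) * f(hi) >= 0.0:
            continue                   # no sign change in this cell
        X = bisect(f, lo, hi)
        P = X * X - beta * X - alpha
        if P <= 0.0:
            continue                   # would require A*^2 <= 0
        A = math.sqrt(kappa * X * P / gamma)
        cos_psi = gamma * A / (kappa * X)
        sin_psi = -Omega * A / (kappa * X + 2.0 * A * A)
        solutions.append((math.sqrt(X), A, math.atan2(sin_psi, cos_psi)))
    return solutions


if __name__ == "__main__":
    # Parameters of the supercritical example (alpha = 0.1, beta = -1, gamma = 1).
    for Omega in (0.0, 0.5, 1.0):
        print(Omega, symmetric_solutions(0.1, -1.0, 1.0, 1.5, Omega))
```

Each returned triple can be passed to `symmetric_rhs` to confirm that all three derivatives vanish. Classifying a solution as a node, spiral, or saddle would additionally require the eigenvalues of the Jacobian at that point.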

Figures 5a, 6a, and 7a show the stability type of stable nonzero symmetric solutions in the parameter space \((\varOmega , \kappa )\) for three different regimes of oscillator parameters: the supercritical (\(\alpha > 0\)), the critical (\(\alpha = 0\)), and the subcritical regime (\(\alpha < 0\)) of a Hopf bifurcation [see Kim and Large (2015, 2019) for the distinct characteristics of each regime]. Panels b and c of the figures show the steady-state values of both stable and unstable solutions for select values of \(\kappa \). In all three regimes, both oscillator amplitude \(r^*\) and connection amplitude \(A^*\) are maximum at \(\varOmega = 0\), indicating that neural networks with plastic connections resonate when there is no frequency detuning, as do networks with fixed coupling (Kim and Large 2015). In the supercritical regime (Fig. 5a, c), at least one stable nonzero solution exists for the entire range of \(\varOmega \) and \(\kappa \), and two stable solutions exist for intermediate \(|\varOmega |\) and large \(\kappa \). As discussed in the previous section (2.3), the region of \((\varOmega , \kappa )\) with at least one stable nonzero solution, often called an Arnold tongue, is lifted off \(\kappa = 0\) for the critical and subcritical regimes (\(\alpha \le 0\)), with the tongue tip at \(\kappa _0 = \gamma (-\beta + 2\sqrt{-\alpha })\) (Figs. 6a and 7a). This is because autonomous (uncoupled) oscillators with \(\alpha \le 0\) have a sole attractor at zero, and a high learning rate \(\kappa \) is needed to get them to develop nonzero connections. When nonzero solutions exist, zero is stable only if the solution with the smallest amplitude is a saddle point which acts as a separatrix (e.g., the saddle points in Figs. 6c and 7b, but not Fig. 5c). When no nonzero solution exists (the white regions in Figs. 6a, 7a), zero is always stable.

Rotating connection phase. Equation (11) indicates that when \(\omega _1 \ne \omega _2\) (i.e., \(\varOmega _{12} = -\varOmega _{21} \ne 0\)), the steady-state system phases \(\psi _{12}^*\) and \(\psi _{21}^*\) cannot both be zero. Since \({\dot{\theta }}_{ij} = -\frac{\kappa r_i r_j}{A_{ij}} \sin \psi _{ij}\), nonzero \(\psi _{ij}^*\) means that connection phase \(\theta _{ij}\) is not constant over time but advances at a constant rate when the system is in a steady state. Since \({\dot{\psi }}_{ij} = {\dot{\theta }}_{ij} - {\dot{\phi }}_i + {\dot{\phi }}_j = 0\) in a steady state, the frequency of oscillating connection \({\dot{\theta }}_{ij}\) is equal to the difference of the oscillators’ instantaneous frequencies \({\dot{\phi }}_i - {\dot{\phi }}_j\). In other words, oscillating connections compensate for the instantaneous frequency difference of the oscillators (see Fig. 8 for a simulation with frequency detuning; Footnote 4).

Fig. 8 Numerical simulation of the stabilized model with frequency detuning (10). In a steady state, the frequency of oscillating connections matches the difference of the oscillators’ instantaneous frequencies. Parameters used: \(\alpha = 0.1\), \(\beta = -1\), \(\omega _1 = 2\pi \) (or 1 Hz), \(\omega _2 = 0.8 \times 2\pi \) (0.8 Hz), \(\gamma = 1\) and \(\kappa = 1\)

Panels b and c of Figs. 5, 6 and 7 show that the steady-state oscillation frequency of plastic connection \({\dot{\theta }}_{ij}^*\) falls between 0 and \(\varOmega _{ij}\) (the latter is indicated by a diagonal dashed line in the figures; note \({\dot{\theta }}_{12}^* = -{\dot{\theta }}_{21}^*\) for symmetric solutions). For small frequency detuning \(|\varOmega |\), plastic connections have large amplitudes \(A^*\) and slow (near zero) oscillation frequencies \({\dot{\theta }}_{ij}^*\). In this case, the instantaneous frequencies of the oscillators are brought close to each other because the compensation by plastic connections is small. For large frequency detuning, connection frequencies are close to \(\varOmega _{ij}\) if steady-state solutions exist (see Fig. 5b, c), which means that oscillating connections compensate for most of the frequency detuning so that the oscillators’ instantaneous frequencies are near their natural frequencies. In this case, the connections have small steady-state amplitudes because plastic connections can be considered as oscillators tuned to the frequency of zero (Footnote 5). They assume small amplitudes when forced to oscillate at a nonzero frequency.
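The bounds on \({\dot{\theta }}_{ij}^*\) can be made explicit on the symmetric plane. The short derivation below uses the expression for \({\dot{\theta }}_{ij}\) quoted above and assumes that the oscillator phases obey \({\dot{\phi }}_i = \omega _i + \frac{A_{ij} r_j}{r_i}\sin \psi _{ij}\), which is inferred from the form of the coupling rather than taken from Eq. (11).

```latex
% Steady state on the symmetric plane (r_i = r, A_{ij} = A, \psi_{12} = -\psi_{21} = \psi)
\begin{align*}
0 = \dot{\psi} &= -\varOmega - \left(\frac{\kappa r^{2}}{A} + 2A\right)\sin\psi
\quad\Longrightarrow\quad
\sin\psi^* = -\frac{\varOmega A^*}{\kappa r^{*2} + 2A^{*2}}, \\
\dot{\theta}_{12}^* &= -\frac{\kappa r^{*2}}{A^*}\sin\psi^*
 = \frac{\varOmega}{1 + 2A^{*2}/(\kappa r^{*2})}, \\
\dot{\phi}_1^* - \dot{\phi}_2^* &= \varOmega + 2A^*\sin\psi^* = \dot{\theta}_{12}^* .
\end{align*}
```

Under these assumptions \({\dot{\theta }}_{12}^*\) has the sign of \(\varOmega \) and a strictly smaller magnitude. It approaches \(\varOmega \) when \(A^{*2} \ll \kappa r^{*2}\) (weak connections at large detuning) and approaches zero when \(A^{*2} \gg \kappa r^{*2}\).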

3 Hebbian learning in multifrequency networks

Now we turn our attention to multifrequency learning. To study Hebbian learning in multifrequency neural networks, we first analyze a canonical model for two coupled oscillators with distinct frequencies. We use the same analytic methods used above for single-frequency models. As shown below, many findings for single-frequency learning hold for multifrequency learning since the former is a particular instance of the latter.

3.1 Two distinct frequencies

When two oscillators have distinct natural frequencies that approximate an integer ratio k : m, where k, \(m \in {\mathbb {N}}\), the dynamics of coupled oscillators can be described by

where \(a_i = \alpha _i + \text {i}\omega _i\), \(b_i = \beta _{1i}+\text {i}\delta _{1i}\), \(d_i = \beta _{2i}+\text {i}\delta _{2i}\), \(\alpha \), \(\omega \), \(\beta \), \(\delta \in {\mathbb {R}}\), and \(\epsilon > 0\) is a small number representing the strength of synaptic connections in the original weakly connected system (Large et al. 2010; Kim and Large 2019; see Footnote 6). This is a generalization of the models derived in Hoppensteadt and Izhikevich (1997) for specific ratios (2:1 and 3:1) to a general integer ratio k : m or a resonant relation of \(m\omega _1 = k\omega _2\).

The coupling terms \(z_2^k {\bar{z}}_1^{m-1}\) and \(z_1^m {\bar{z}}_2^{k-1}\) are the lowest-order resonant monomials for \(m\omega _1 = k\omega _2\), which satisfy the resonant conditions between the eigenvalues,

The coupling terms are of order \({\mathcal {O}}(\sqrt{\epsilon }^{k+m-2})\), indicating the model for distinct frequencies is weakly connected compared to the single-frequency model for which \(k = m = 1\) (Hoppensteadt and Izhikevich 1997). Intrinsic terms are fully expanded in the form of a geometric series (with the coefficient \(d_i\)), instead of being truncated, to stabilize the system for arbitrarily large k and m (\(\beta _{2i} < 0\) for stability). For the convergence of the geometric series, oscillator amplitudes are restricted to \(|z_i| < \frac{1}{\sqrt{\epsilon }}\) (Large et al. 2010).
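As a brief worked check of why these monomials are resonant, the block below applies the standard normal-form resonance condition to the eigenvalues \(\pm \text {i}\omega _1\) and \(\pm \text {i}\omega _2\) of the uncoupled oscillators at the bifurcation point. The notation \(\lambda _i\) is introduced here for illustration and is not taken from the source.

```latex
% Eigenvalues at the bifurcation: \lambda_i = \mathrm{i}\omega_i, \bar{\lambda}_i = -\mathrm{i}\omega_i
\begin{align*}
z_2^{k}\bar{z}_1^{\,m-1} \text{ in } \dot{z}_1:&\quad
\lambda_1 = k\lambda_2 + (m-1)\bar{\lambda}_1
\;\Longleftrightarrow\;
\mathrm{i}\omega_1 = \mathrm{i}k\omega_2 - \mathrm{i}(m-1)\omega_1
\;\Longleftrightarrow\;
m\omega_1 = k\omega_2, \\
z_1^{m}\bar{z}_2^{\,k-1} \text{ in } \dot{z}_2:&\quad
\lambda_2 = m\lambda_1 + (k-1)\bar{\lambda}_2
\;\Longleftrightarrow\;
\mathrm{i}\omega_2 = \mathrm{i}m\omega_1 - \mathrm{i}(k-1)\omega_2
\;\Longleftrightarrow\;
k\omega_2 = m\omega_1 .
\end{align*}
% Example, k = 2, m = 1 (\omega_1 = 2\omega_2):
% z_2^2 oscillates at 2\omega_2 = \omega_1, and z_1\bar{z}_2 at \omega_1 - \omega_2 = \omega_2.
```

Both monomials have degree \(k + m - 1\), which matches the order \({\mathcal {O}}(\sqrt{\epsilon }^{k+m-2})\) quoted above once each factor of \(z\) is counted as \({\mathcal {O}}(\sqrt{\epsilon })\) relative to the linear coupling of the 1:1 case.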

We generalize the original single-frequency learning rule (2) to a general resonant relation of \(m\omega _1 = k\omega _2\) with

which becomes (2) when \(k = m = 1\). The coupling terms \(z_1^m {\bar{z}}_2^k\) and \(z_2^k {\bar{z}}_1^m\) become stationary (to which plastic connections resonate, see footnote 5) when \(z_1\) and \(z_2\) are mode-locked in the frequency ratio k : m (i.e., when their relative phase \(m\phi _1 - k\phi _2\) is constant over time).

For simplicity of analysis, we assume that the oscillators have identical parameters except their natural frequencies (e.g., \(\alpha _i = \alpha \)) and that intrinsic frequencies do not depend on amplitudes (i.e., \(\delta _{1i} = \delta _{2i} = 0\)). Also, in the interest of space, we limit our analysis to the parameter regimes around a Hopf bifurcation by restricting \(\beta _{1i} < 0\).

By rescaling \(z_i \rightarrow \frac{z_i}{\sqrt{\epsilon }}\), \(\beta _1 \rightarrow \frac{\beta _1}{\epsilon }\), \(\beta _2 \rightarrow \frac{\beta _2}{\epsilon }\), and \(\kappa \rightarrow \frac{\kappa }{\epsilon }\) (thus, now \(|z_i| < 1\), see Fig. 4), we get

Again, we transform the system to polar coordinates using \(z_i = r_i e^{\text {i}\phi _i}\) and \(c_{ij} = A_{ij} e^{\text {i}\theta _{ij}}\), define \(\psi _{ij} = \theta _{ij} - m_{ij}\phi _i + k_{ij}\phi _j\) where \((k_{12}, m_{12}) = (k, m)\) and \((k_{21}, m_{21}) = (m, k)\), and get

where \(\varOmega _{ij} = m_{ij}\omega _i - k_{ij}\omega _j\) (or \(\varOmega _{12} = -\varOmega _{21} = m\omega _1 - k\omega _2\)). Since the dynamics of the model are determined by system phases \(\psi _{ij}\), as is the case for the single-frequency models discussed above, connection phases \(\theta _{ij}\) converge to neutrally stable steady-state values when \(\varOmega _{ij} = 0\) (while satisfying \(\theta _{12}^* = -\theta _{21}^* = m\phi _1 - k\phi _2\)), and they rotate when \(\varOmega _{ij} \ne 0\) (see Sects. 2.1, 2.4).

Symmetric solutions. The dynamics on the symmetric plane \(r_i = r\), \(A_{ij} = A\) and \(\psi _{12} = -\psi _{21} = \psi \) are governed by

where \(\varOmega = m\omega _1 - k\omega _2\). Again, we calculate nonzero symmetric steady-state solutions \((r^*, A^*, \psi ^*)\) by numerically solving a polynomial equation in \(r^*\) (of the order determined by \(k + m\)) we obtain by combining steady-state equations \({\dot{r}} = 0\), \({\dot{A}} = 0\) and \({\dot{\psi }} = 0\). The linear stability of the solutions is determined by evaluating the Jacobian matrix.

Fig. 9 Nonzero symmetric steady-state solutions of the two-frequency (2:1) model (15) in the supercritical Hopf regime with small positive \(\alpha = 0.05\) (\(\beta _1 = \beta _2 = -1\), \(\gamma = 1\)). a The stability type of stable solutions in the \((\varOmega ,\kappa )\) parameter space. b Stability type and steady-state values for \(\kappa = 2\), c \(\kappa = 5\), and d for \(\kappa = 8\)

Steady-state solutions and their stability for multifrequency learning (k : m ratio) show similarities to single-frequency learning (1:1 ratio), but there are notable differences. Let us take the 2:1 model (i.e., Eq. 15 with \(k = 2\), \(m = 1\)) as an example and compare it with what we found above for the 1:1 single-frequency model. In the supercritical Hopf regime (\(\alpha > 0\)), the 2:1 model with a large \(\alpha \) has a set of steady-state solutions that are topologically equivalent to the 1:1 model shown in Fig. 5. However, for small positive values of \(\alpha \), the 2:1 model shows a different set of behaviors, with two stable solutions at \(\varOmega = 0\) for intermediate values of \(\kappa \) (Fig. 9). In the critical and subcritical Hopf regimes (\(\alpha = 0\) and \(\alpha < 0\), respectively), the 2:1 model has the same set of solutions as the 1:1 model in the subcritical Hopf regime (Fig. 7): a pair of nonzero solutions (a stable node and a saddle point) exist for small \(|\varOmega |\) for \(\kappa \) greater than a critical value \(\kappa _0\). Multifrequency models with \(k + m > 2\) share the same set of steady-state solutions as the 2:1 model examined here.

Relative strength of k : m learning. Although multifrequency models with \(k + m > 2\) show qualitatively identical behaviors, the strength of resonance varies with k and m. To compare the strength of k : m learning, we perform a further analysis at \(\varOmega = 0\). Using \(\psi ^* = 0\) at \(\varOmega = 0\) and combining steady-state equations \({\dot{r}} = 0\) and \({\dot{A}} = 0\) from (17), we get

Thus, defining \(X = r^{*2}\), the steady-state solutions at \(\varOmega = 0\) are the intersections of functions \(y_1\) and \(y_2\), defined as

Note that \(y_1\) depends only on oscillator parameters \(\alpha \), \(\beta _1\) and \(\beta _2\), while \(y_2\) depends on learning parameters \(\gamma \) and \(\kappa \) and the order of nonlinear resonance \(k + m\).

Figure 10a demonstrates that in the supercritical Hopf regime (\(\alpha > 0\)), \(y_1\) and \(y_2\) have an intersection at a higher \(X = r^{*2}\) when \(k + m\) is smaller (see Fig. 10b for a comparison over a range of \(\kappa \)). This shows that low-order multifrequency learning (i.e., learning of a simple frequency ratio with small k and m) exhibits stronger resonance than high-order learning (a complex ratio with large k and m), which is consistent with the previous finding for a periodically forced GrFNN model (Kim and Large 2019). For the critical and subcritical Hopf regimes (\(\alpha \le 0\)), we compare \(\kappa _0\), the smallest \(\kappa \) with nonzero stable solution (see Sect. 2.4), which we obtain by solving \(y_1 = y_2\) and \(y_1' = y_2'\) simultaneously because \(y_1\) and \(y_2\) touch at a single point when \(\kappa = \kappa _0\). Figure 10c shows that \(\kappa _0\) is higher for greater \(k + m\), indicating that a higher learning rate is required for high-order multifrequency learning.

Fig. 10 Comparison of two-frequency learning in the ratio k : m. a The intersection of functions \(y_1\) and \(y_2\) in (18). Parameters: \(\alpha = 1\), \(\beta _1 = \beta _2 = -1\), \(\gamma = 1\), \(\kappa = 5\). b Symmetric steady-state oscillator amplitude \(r^*\) as a function of \(\kappa \). The parameters are identical to those used in Panel a. The dashed line indicates the spontaneous amplitude. c \(\kappa _0\), the minimum \(\kappa \) required for nonzero solutions, as a function of \(\alpha \le 0\) (\(\beta _1 = \beta _2 = -1\), \(\gamma = 1\))

3.2 Frequency scaling for logarithmic frequency networks

Next, we consider the bandwidth of the coupled oscillators, which we define as follows: Let \(\varGamma \) be the amount of frequency detuning \(|\varOmega |\) for which \(r^*\) is half of the maximum value \(r_0^*\) at \(\varOmega = 0\). Then, for fixed \(\omega _1\), the range of \(\omega _2\) for which \(r^* \ge \frac{r_0^*}{2}\) is

\[\frac{m\omega _1 - \varGamma }{k} \le \omega _2 \le \frac{m\omega _1 + \varGamma }{k} \tag{19}\]

since \(|\varOmega | = |m\omega _1 - k\omega _2| \le \varGamma \). Thus, the full bandwidth \(\frac{2\varGamma }{k}\) is constant across frequencies, as long as other parameters remain the same.
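As a quick numerical check of this statement, the sketch below solves \(|m\omega _1 - k\omega _2| \le \varGamma \) for \(\omega _2\) at two different values of \(\omega _1\). The function name and the value of \(\varGamma \) are illustrative assumptions, not values taken from the analysis.

```python
import math

def omega2_range(omega1, k, m, half_width):
    """Interval of omega2 satisfying |m*omega1 - k*omega2| <= half_width.

    half_width plays the role of Gamma (assumed value, for illustration only).
    """
    lower = (m * omega1 - half_width) / k
    upper = (m * omega1 + half_width) / k
    return lower, upper

k, m, half_width = 2, 1, 0.5
widths = []
for f1 in (1.0, 4.0):                      # natural frequency of oscillator 1 in Hz
    lo, hi = omega2_range(2 * math.pi * f1, k, m, half_width)
    widths.append(hi - lo)
    print(f"f1 = {f1} Hz: omega2 in [{lo:.4f}, {hi:.4f}], width = {hi - lo:.4f}")
```

Both intervals have width \(2\varGamma /k\) regardless of \(\omega _1\), which is the constant (linear-frequency) bandwidth of the unscaled model.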

However, we previously showed that scaling oscillator parameters by natural frequency makes the bandwidth grow linearly with natural frequency, a behavior called “constant Q” which is often desirable when natural frequencies are equally spaced on a logarithmic scale as found in the tonotopic organization in the auditory system (Humphries et al. 2010):

where \(2\pi f_i = \omega _i\) (Large et al. 2010; Kim and Large 2015, 2019).

We introduce a frequency-scaled version of the learning rule,

where

\[f_c = \frac{m f_1 + k f_2}{k + m} \tag{22}\]

is the internal division of \(f_1\) and \(f_2\) in the ratio k : m. Transforming (20) and (21) into polar coordinates, we show that the frequency-scaled equation for symmetric system phase \(\psi \),

is identical to the unscaled equation (17) except that the left-hand side and \(\varOmega \) are each multiplied by the scaling factor \(\frac{1}{f_c}\). This means that when the unscaled bandwidth is \(|\varOmega | = \varGamma \), the bandwidth of the frequency-scaled model is \(\left| \frac{\varOmega }{f_c}\right| = \varGamma \). Thus, with frequency scaling, \(r^* \ge \frac{r_0^*}{2}\) when

The bandwidth is now expressed as a ratio of natural frequencies, and thus it grows with natural frequencies and is constant on a logarithmic scale (Fig. 11a).
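The difference between the two behaviors can be illustrated by measuring a resonance width in octaves. The sketch below is a generic illustration, not the model's bandwidth formula: it assumes a symmetric band around a center frequency, with a half-width that is either fixed in Hz (unscaled case) or proportional to the center frequency (scaled case). The function name and the constant 0.1 are hypothetical.

```python
import math

def width_in_octaves(f_center, half_bw_hz):
    """Width of the band [f_center - half_bw_hz, f_center + half_bw_hz] in octaves."""
    return math.log2((f_center + half_bw_hz) / (f_center - half_bw_hz))

centers = (1.0, 2.0, 4.0)                  # center frequencies in Hz
# Unscaled: the half-width is the same number of Hz at every center frequency.
unscaled = [width_in_octaves(f, 0.1) for f in centers]
# Scaled: the half-width grows in proportion to the center frequency.
scaled = [width_in_octaves(f, 0.1 * f) for f in centers]

for f, u, s in zip(centers, unscaled, scaled):
    print(f"{f} Hz: unscaled {u:.4f} oct, scaled {s:.4f} oct")
```

The unscaled widths shrink on the logarithmic axis as frequency increases, while the scaled widths stay the same, which is the "constant Q" property.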

3.3 Gradient frequency neural networks

In order to capture the interaction between arbitrary frequencies, the canonical model for GrFNNs with plastic connections (Large et al. 2010; Large 2010, 2011),

where \(a_i = \alpha _i + \text {i}\omega _i\), \(b_i = \beta _{1i}+\text {i}\delta _{1i}\), and \(d_i = \beta _{2i}+\text {i}\delta _{2i}\), includes the monomials for all possible two-frequency resonant relationships (i.e., all possible k : m),

(cf. Eq. 15). Depending on the oscillators’ instantaneous (actual) frequencies (which could be different from natural frequencies), a subset of the monomials become resonant and affect the long-term dynamics of the model, while the effects of other, nonresonant monomials are canceled out over time (Arnold 1988; Guckenheimer and Holmes 1983). (See Large et al. 2010; Kim and Large 2019, for discussions on the GrFNN model).
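To make the notion of a resonant subset concrete, the following sketch lists the low-order ratios k : m for which a pair of frequencies is nearly resonant, using the detuning \(m f_1 - k f_2\) from the definition of \(\varOmega \). The function name, the relative tolerance, and the example frequencies are assumptions made for illustration. The order limit of 9 mirrors the range of \(k + m\) plotted in the comparison with simulations.

```python
from math import gcd

def low_order_resonances(f1, f2, max_order=9, tol=0.02):
    """List (k, m, relative detuning) with k + m <= max_order and m*f1 ~ k*f2.

    Only coprime pairs are kept, so each ratio k:m appears once in lowest terms.
    tol is an assumed relative tolerance, not a quantity from the model.
    """
    hits = []
    for order in range(2, max_order + 1):
        for k in range(1, order):
            m = order - k
            if gcd(k, m) != 1:
                continue
            detuning = abs(m * f1 - k * f2) / max(m * f1, k * f2)
            if detuning <= tol:
                hits.append((k, m, detuning))
    return hits

# A 2 Hz oscillator paired with a 1.01 Hz oscillator is close to the 2:1 ratio.
resonances = low_order_resonances(2.0, 1.01)
for k, m, d in resonances:
    print(f"{k}:{m} with relative detuning {d:.4f}")
```

In the model itself no such search is performed; all monomials are present and the nonresonant ones average out over time. The enumeration only shows which monomials would be expected to matter for a given frequency pair.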

For logarithmically spaced natural frequencies, we scale the oscillator equation by natural frequency as shown above (Sect. 3.2). Since the resonant relations between oscillators are not specified in the GrFNN model, we use an approximated scaling factor for the learning rule,

assuming \(f_i:f_j \approx k_{ij}:m_{ij}\) (cf. Eq. 22). Hence, the frequency-scaled GrFNN model is given by

where \(a_i' = \alpha _i + 2\pi \text {i}\).

Fig. 11 Numerical simulations of the frequency-scaled GrFNN model (26). a Time-averaged connection amplitudes from 10 simulations with different random initial conditions. b Average connection amplitudes for a source oscillator (1 Hz) obtained from the GrFNN simulations shown in Panel a (thick line) compared with the analysis of the two-frequency model (20, 21) for simple integer ratios (thin lines). The natural frequencies \(f_i\) of 601 oscillators are equally spaced on a logarithmic scale, ranging from 1 Hz to 4 Hz. Parameters: \(\alpha _i = 2\), \(\beta _{1i} = \beta _{2i} = -1\), \(\delta _{1i} = \delta _{2i} = 0\), \(\gamma _{ij} = 0.5\), \(\kappa _{ij} = 8.33 \times 10^{-5} = 0.05/(n-1)\), \(n = 601\). See text for details

Figure 11a shows time-averaged connection amplitudes from numerical simulations of the frequency-scaled GrFNN model in the supercritical Hopf regime (see the figure caption for parameters). The diagonals of the connection matrix \((c_{ij})\) with high amplitudes indicate resonances at simple frequency ratios, such as 1:1 and 2:1 as marked in the figure (n.b. self-connections at the main diagonal \(c_{ii}\) are not included in the model, see Eq. 26). As predicted from the analysis given above (Sect. 3.1), the peak amplitude of a resonance decreases with the order of resonance \(k+m\), with the strongest resonance at the ratio 1:1, followed by 2:1, 3:1, etc. The bandwidth of a resonance also decreases with increasing \(k+m\), but the width of each resonance is constant across logarithmically spaced frequencies due to frequency scaling (Sect. 3.2). Without frequency scaling, the bandwidth of a resonance decreases with logarithmic frequency (i.e. the widths of bright-colored diagonals would get narrower toward the upper right corner of the figure) because unscaled bandwidths are constant in linear frequency.
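For readers who want to reproduce the network layout, the frequency grid and the learning-rate value quoted in the figure caption can be generated as follows. This is only a setup sketch with hypothetical variable names; it does not run the GrFNN simulation.

```python
n = 601                                    # number of oscillators
f_min, f_max = 1.0, 4.0                    # range of natural frequencies in Hz

# Equal spacing on a logarithmic scale: a constant ratio between neighbors.
freqs = [f_min * (f_max / f_min) ** (i / (n - 1)) for i in range(n)]

# Learning parameter from the caption, scaled by the number of inputs per oscillator.
kappa = 0.05 / (n - 1)

step_ratio = freqs[1] / freqs[0]
print(f"{len(freqs)} oscillators from {freqs[0]} to {freqs[-1]:.4f} Hz")
print(f"ratio between neighbors = {step_ratio:.6f}, kappa = {kappa:.3e}")
```

With this grid, two octaves are covered by 600 steps, so the oscillator at index 300 has a natural frequency of approximately 2 Hz and sits on the 2:1 diagonal relative to the 1 Hz source oscillator.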

Figure 11b compares the connection amplitudes for a single source oscillator (1 Hz) with the analysis of the two-frequency model (20, 21). The thick line in Fig. 11b corresponds to the color-coded connection amplitudes at the lower edge of Fig. 11a. Near each major resonance in the GrFNN simulations (the thick line), the steady-state connection amplitude \(A^*\) of the two-frequency, k : m model (the thin lines, plotted for \(k + m\) up to 9) fits well with the simulations. The small peaks in the simulations (at 4:3, 5:3 and 7:2) are significantly higher than the analysis due to the influence of stronger resonances nearby. The good fit between the simulations and the analysis demonstrates that the lowest-order resonant monomial for the ratio k : m dominates the dynamics of the GrFNN model near that frequency ratio even though the model includes an infinite series of monomials for other frequency ratios.

Notice the dip in connection amplitude near the peak of the 2:1 resonance (Fig. 11b). The local variability near resonance peaks arises because the GrFNN model includes not only the lowest-order resonant monomial for the ratio k : m but also higher-order resonant monomials for the ratio pk : pm, \(p \in {\mathbb {N}}\). Thus, an infinite number of resonant monomials contribute to the local dynamics near k : m, and depending on their phase relationships, their combined effects can make the resonance in the GrFNN model significantly stronger or weaker than that of the two-frequency model which includes only the lowest-order resonant monomial. We leave the detailed analysis of this effect to future studies.

4 Discussion

In this paper, we studied Hebbian plasticity in oscillatory neural networks which can learn phase relationships between component oscillators with complex-valued coupling coefficients. We performed a dynamical systems analysis of three coupled oscillator models: the original single-frequency model of Hoppensteadt and Izhikevich (1996a, 1996b, 1997), a single-frequency model with frequency detuning and a stabilizing high-order term, and a two-frequency model for a general frequency ratio k : m. We found that the models have different sets of steady-state solutions in three parameter regimes around an Andronov–Hopf bifurcation. We also found that plastic connections converge to neutrally stable phases in the absence of external forcing and frequency detuning and that they oscillate in the presence of frequency detuning and compensate for the difference in oscillators’ instantaneous frequencies. An analysis of the two-frequency model showed that learning is stronger for simple frequency ratios (small integers k and m) and that the minimum learning rate required to achieve learning is smaller for simple ratios. Finally, we compared the analysis of the two-frequency model with numerical simulations of a GrFNN model and showed that the dynamics of the GrFNN model near a frequency ratio k : m is locally dominated by the resonant monomials for that ratio.

The present work is, to our knowledge, the first to analyze multifrequency Hebbian plasticity in oscillator networks. The GrFNN model includes higher-order coupling terms to capture nonlinear resonance and multifrequency learning, whereas previous models of adaptive networks include only coupling terms for 1:1 synchronization (see previous works featuring Stuart–Landau oscillators for a direct comparison, e.g., Aoyagi 1995; Maslennikov and Nekorkin 2018). The learning rules for complex-valued connection coefficients studied here are different from the learning rules for real-valued weights in the previous studies in that the former enable adaptive networks to learn and remember relative phases between oscillators in connection phases, while the latter only strengthen or weaken the connections. The dynamic behaviors of connection phase reported in this study, such as neutral stability in the absence of external forcing, and rotation in the presence of frequency detuning, are unique to the complex-valued learning rules. We also presented a detailed analysis of the original single-frequency model of Hoppensteadt and Izhikevich (1996a, 1996b, 1997) because no such analysis has been given before and because single-frequency learning is a special case of k : m learning which shares essential dynamics with two-frequency learning. This work also adds a new set of results to our previous studies of the GrFNN model which analyzed phase locking (1:1) and mode locking (k : m) to periodic external signal via fixed coupling (Kim and Large 2015, 2019).

In this work, we limited the analysis of multifrequency Hebbian learning to simple, tractable cases. We mostly analyzed two coupled oscillators as the simplest case of oscillator networks. To carry the analysis further, we focused on the cases where oscillators have identical parameters except for their natural frequencies. Although these cases are unrealistic for the neural networks in the brain, they allow us to investigate the complex dynamics of multifrequency learning using analytic methods. Our future work will address dispersion among non-frequency parameters in larger networks (\(N \gg 2\)), which would result in more complex dynamics than the simple, degenerate cases analyzed here. Our modeling efforts, however, have not been restricted to oscillators with identical parameters. In one study (Lerud et al. 2019), we modeled the cochlear dynamics in macaque monkeys by fitting the parameters of individual oscillators in a two-layer GrFNN model to the tuning-curve data from the auditory nerves. Finally, in the interest of space, we did not present the analysis for all parameter regimes available to the GrFNN model (see Kim and Large 2015, for all regimes) and limited our analysis to linear learning rules. An analysis of the oscillator regimes near a double limit cycle bifurcation as well as nonlinear learning rules will be given elsewhere.

Since the GrFNN model is a generic mathematical model that is not bound to any particular timescale, it can serve as a model of both short-term and long-term plasticity by controlling the magnitude of the learning parameters \(\gamma \) and \(\kappa \), which determine the rate of learning. The GrFNN model has been employed to predict and explain the nature and constraints of developmental changes in rhythm perception (Tichko and Large 2019) and enculturation in musical tonality (Large et al. 2016), which typically span years or decades. On the other hand, GrFNN models with short-term plasticity have been studied as a neural mechanism for auditory scene analysis (Bregman 1990) in which individual frequencies originating from the same acoustic source form a coherent pattern of synchronized oscillations, segregated from frequencies from other sources (Large 2011). The pattern formation in nonlinear multifrequency plastic networks provides a novel method for processing and learning audio signals such as speech and music, an alternative to traditional linear signal processing techniques (Kim 2017).

Hebbian plasticity in multifrequency systems studied in this paper also provides a systems-level explanation for the learning of coordinated movements. In an ongoing study, we successfully modeled human data for the acquisition and retention of polyrhythmic bimanual movements (Park et al. 2013). The model, which consists of two coupled oscillators with adaptive natural frequencies, includes two resonant monomials with plastic coupling coefficients of their own. One monomial is for the frequency ratio to be learned (e.g., a 3:1 ratio between hand movements), and the other is for the default, 1:1 mode of bimanual coordination. Simulations of the model replicated various aspects of learning and retention in the human data, including wide individual differences in the acquired relative phase between hands, which were explained by the neutral stability of plastic connection phases. We are also investigating the learning of particular relative phases in bimanual coordination with canonical models (Zanone and Kelso 1992). Such modeling efforts will benefit from the analysis given in this paper, which provides a useful reference for understanding the dynamics of learning in oscillatory systems as well as for choosing adequate parameters for given modeling goals.

Notes

The amount of change in connection phases \(\theta _{ij}\) after a perturbation depends on connection amplitudes \(A_{ij}\). Once the connections grow strong enough compared to the magnitude of perturbation, and when learning is slow (with small \(\gamma \) and \(\kappa \)), the plastic connections act like fixed coupling and are not altered significantly by sporadic perturbations of small amplitudes. Accordingly, the oscillators are attracted back to the previous relative phase after a small perturbation. Thus, plastic connections which are neutrally stable on a long timescale constitute an attractor on a short timescale. For the purpose of demonstrating neutral stability, the simulation shown in Fig. 2 used fast learning and a strong perturbation.

We chose the quintic coefficient to be \(-1\) because here we want to examine the stabilization of amplitude dynamics without altering phase dynamics. In fully expanded models (13) and (24), the quintic coefficient \(d_i = \beta _{2i}+\text {i}\delta _{2i}\) has both amplitude (radial) and phase (azimuthal) components.

Note that the oscillation frequency of plastic connection \({\dot{\theta }}_{ij} = -\frac{\kappa r_i r_j}{A_{ij}} \sin \psi _{ij}\) is proportional to \(\kappa \). When learning is slow (with small \(\gamma \) and \(\kappa \)), plastic connections oscillate at slow frequencies and behave like fixed coupling on a short timescale. See footnote 2 for a related discussion on the timescale of learning.

See Eq. (9), for example, where the intrinsic part of the learning equation takes a form similar to that of the oscillator equation, except that the former does not have any imaginary terms like \(\text {i}\omega \), which can be interpreted as the natural frequency being zero. Thus, plastic connections resonate when the oscillators maintain a fixed phase difference (i.e., are phase-locked) because that is when the input term \(\kappa z_i {\bar{z}}_j\) is stationary.
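The point about a stationary input term can be illustrated with a small simulation. The sketch below is not the model as written in Eq. (9), which is not reproduced here. It assumes two identical oscillators \({\dot{z}}_i = z_i(\alpha + \text {i}\omega + \beta _1|z_i|^2 + \beta _2|z_i|^4) + c_{ij}z_j\) with a linear learning rule \({\dot{c}}_{ij} = -\gamma c_{ij} + \kappa z_i{\bar{z}}_j\). All parameter values, initial conditions, and function names are illustrative choices.

```python
import cmath
import math

ALPHA, BETA1, BETA2 = 1.0, -1.0, -1.0      # oscillator parameters (assumed values)
OMEGA = 2.0 * math.pi                      # shared natural frequency, so detuning is zero
GAMMA, KAPPA = 1.0, 0.5                    # learning parameters (assumed values)

def deriv(state):
    """Right-hand side of the assumed two-oscillator system with plastic coupling."""
    z1, z2, c12, c21 = state
    def osc(z, coupling_input):
        a2 = abs(z) ** 2
        return z * (ALPHA + 1j * OMEGA + BETA1 * a2 + BETA2 * a2 * a2) + coupling_input
    return (
        osc(z1, c12 * z2),
        osc(z2, c21 * z1),
        -GAMMA * c12 + KAPPA * z1 * z2.conjugate(),   # Hebbian input z_i * conj(z_j)
        -GAMMA * c21 + KAPPA * z2 * z1.conjugate(),
    )

def rk4_step(state, dt):
    """One classical fourth-order Runge-Kutta step on a tuple of complex numbers."""
    def shift(s, d, h):
        return tuple(a + h * b for a, b in zip(s, d))
    k1 = deriv(state)
    k2 = deriv(shift(state, k1, dt / 2))
    k3 = deriv(shift(state, k2, dt / 2))
    k4 = deriv(shift(state, k3, dt))
    return tuple(s + dt / 6 * (a + 2 * b + 2 * c + d)
                 for s, a, b, c, d in zip(state, k1, k2, k3, k4))

# Start with a phase difference of 1 rad and no connections.
state = (0.5 + 0j, 0.5 * cmath.exp(1j), 0j, 0j)
for _ in range(20000):                     # 100 time units with dt = 0.005
    state = rk4_step(state, 0.005)
z1, z2, c12, c21 = state

print(f"|z1| = {abs(z1):.4f}, |c12| = {abs(c12):.4f}")
print(f"connection phase = {cmath.phase(c12):.4f}, "
      f"relative phase = {cmath.phase(z1 * z2.conjugate()):.4f}")
```

Because both oscillators rotate at the same frequency, the product \(z_1{\bar{z}}_2\) is constant once the amplitudes settle, and under the assumed rule the connection relaxes to \(c_{12} = \kappa z_1{\bar{z}}_2/\gamma \): its phase stores the relative phase of the oscillators and its amplitude equals \(\kappa r_1 r_2/\gamma \).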

Note that (13) has \(\epsilon \) in the intrinsic higher-order terms (with the coefficient \(d_i\)) as well as in the coupling terms (with \(c_{ij}\)). The original weakly connected system is considered \(\epsilon \)-perturbation of the uncoupled system, from which the canonical model is derived using averaging theory (Hoppensteadt and Izhikevich 1996a). Here, to capture resonance between distinct frequencies, the canonical model is expanded to include higher-order perturbation terms (see Hoppensteadt and Izhikevich 1997, p. 172). Hence, both the higher-order intrinsic terms and the coupling terms are expressed as powers of \(\epsilon \).

References

Andronov AA, Leontovich EA, Gordon IE, Maier AG (1971) The theory of bifurcations of dynamical systems on a plane. Israel Program of Scientific Translations, Jerusalem

Aoki T, Aoyagi T (2011) Self-organized network of phase oscillators coupled by activity-dependent interactions. Phys Rev E 84(6):066109. https://doi.org/10.1103/PhysRevE.84.066109

Aoyagi T (1995) Network of neural oscillators for retrieving phase information. Phys Rev Lett 74(20):4075–4078. https://doi.org/10.1103/PhysRevLett.74.4075

Arnold VI (1978) Ordinary differential equations. MIT Press, Cambridge

Arnold VI (1988) Geometrical methods in the theory of ordinary differential equations, Grundlehren der mathematischen Wissenschaften, vol 250. Springer, New York. https://doi.org/10.1007/978-1-4612-1037-5

Assenza S, Gutiérrez R, Gómez-Gardeñes J, Latora V, Boccaletti S (2011) Emergence of structural patterns out of synchronization in networks with competitive interactions. Sci Rep 1(1):99. https://doi.org/10.1038/srep00099

Bregman AS (1990) Auditory scene analysis: the perceptual organization of sound. MIT Press, Cambridge

Caporale N, Dan Y (2008) Spike timing–dependent plasticity: a Hebbian learning rule. Annu Rev Neurosci 31(1):25–46. https://doi.org/10.1146/annurev.neuro.31.060407.125639

Gerstner W, Kistler WM (2002) Mathematical formulations of Hebbian learning. Biol Cybern 87(5–6):404–415. https://doi.org/10.1007/s00422-002-0353-y

Guckenheimer J, Holmes P (1983) Nonlinear oscillations, dynamical systems, and bifurcations of vector fields. No. 42 in Applied Mathematical Sciences. Springer, New York

Hebb DO (1949) The organization of behavior: a neuropsychological theory. Wiley, New York

Hoppensteadt FC, Izhikevich EM (1996a) Synaptic organizations and dynamical properties of weakly connected neural oscillators. I. Analysis of a canonical model. Biol Cybernet 75:117–127. https://doi.org/10.1007/s004220050279

Hoppensteadt FC, Izhikevich EM (1996b) Synaptic organizations and dynamical properties of weakly connected neural oscillators. II. Learning phase information. Biol Cybernet 75:129–135. https://doi.org/10.1007/s004220050280

Hoppensteadt FC, Izhikevich EM (1997) Weakly connected neural networks. No. 126 in Applied Mathematical Sciences. Springer, New York

Hoppensteadt FC, Izhikevich EM (2000) Pattern recognition via synchronization in phase-locked loop neural networks. IEEE Trans Neural Netw 11(3):734–738. https://doi.org/10.1109/72.846744

Hoppensteadt FC, Izhikevich EM (2001) Canonical neural models. In: Arbib MA (ed) The handbook of brain theory and neural networks, 2nd edn. MIT Press, Cambridge, pp 181–186

Humphries C, Liebenthal E, Binder JR (2010) Tonotopic organization of human auditory cortex. NeuroImage 50(3):1202–1211. https://doi.org/10.1016/j.neuroimage.2010.01.046

Hyafil A, Giraud AL, Fontolan L, Gutkin B (2015) Neural cross-frequency coupling: connecting architectures, mechanisms, and functions. Trends Neurosci 38(11):725–740. https://doi.org/10.1016/j.tins.2015.09.001

Kasatkin DV, Yanchuk S, Schöll E, Nekorkin VI (2017) Self-organized emergence of multilayer structure and chimera states in dynamical networks with adaptive couplings. Phys Rev E 96(6):062211. https://doi.org/10.1103/PhysRevE.96.062211

Kim JC (2017) A dynamical model of pitch memory provides an improved basis for implied harmony estimation. Front Psychol 8:666. https://doi.org/10.3389/fpsyg.2017.00666

Kim JC, Large EW (2015) Signal processing in periodically forced gradient frequency neural networks. Front Comput Neurosci 9:152. https://doi.org/10.3389/fncom.2015.00152

Kim JC, Large EW (2019) Mode locking in periodically forced gradient frequency neural networks. Phys Rev E 99(2):022421. https://doi.org/10.1103/PhysRevE.99.022421

Kuznetsov YA (2004) Elements of applied bifurcation theory. Applied Mathematical Sciences, vol 112. Springer, New York. https://doi.org/10.1007/978-1-4757-3978-7

Large EW (2010) Neurodynamics of music. In: Jones MR, Fay RR, Popper AN (eds) Music perception. Springer, New York, pp 201–231

Large EW (2011) Musical tonality, neural resonance and Hebbian learning. In: Agon C, Andreatta M, Assayag G, Amiot E, Bresson J, Mandereau J (eds) Mathematics and Computation in Music, no. 6726 in Lecture Notes in Artificial Intelligence. Springer, Berlin, pp 115–125

Large EW, Almonte FV, Velasco MJ (2010) A canonical model for gradient frequency neural networks. Physica D 239:905–911. https://doi.org/10.1016/j.physd.2009.11.015

Large EW, Herrera JA, Velasco MJ (2015) Neural networks for beat perception in musical rhythm. Front Syst Neurosci 9:159. https://doi.org/10.3389/fnsys.2015.00159

Large EW, Kim JC, Flaig NK, Bharucha JJ, Krumhansl CL (2016) A neurodynamic account of musical tonality. Music Percept 33(3):319–331. https://doi.org/10.1525/mp.2016.33.3.319

Lerud KD, Almonte FV, Kim JC, Large EW (2014) Mode-locking neurodynamics predict human auditory brainstem responses to musical intervals. Hear Res 308:41–49. https://doi.org/10.1016/j.heares.2013.09.010

Lerud KD, Kim JC, Almonte FV, Carney LH, Large EW (2019) A canonical oscillator model of cochlear dynamics. Hear Res 380:100–107. https://doi.org/10.1016/j.heares.2019.06.001

Maslennikov OV, Nekorkin VI (2015) Evolving dynamical networks with transient cluster activity. Commun Nonlinear Sci Numer Simul 23(1–3):10–16. https://doi.org/10.1016/j.cnsns.2014.11.019

Maslennikov OV, Nekorkin VI (2017) Adaptive dynamical networks. Phys Usp 60(7):694–704. https://doi.org/10.3367/UFNe.2016.10.037902

Maslennikov OV, Nekorkin VI (2018) Hierarchical transitions in multiplex adaptive networks of oscillatory units. Chaos Interdiscipl J Nonlinear Sci 12:121101. https://doi.org/10.1063/1.5077075

Murdock J (2003) Normal forms and unfoldings for local dynamical systems. Springer monographs in mathematics. Springer, New York

Park SW, Dijkstra TMH, Sternad D (2013) Learning to never forget-time scales and specificity of long-term memory of a motor skill. Front Comput Neurosci 7:111. https://doi.org/10.3389/fncom.2013.00111

Shouval H (2007) Models of synaptic plasticity. Scholarpedia 2(7):1605. https://doi.org/10.4249/scholarpedia.1605

Strogatz SH (1994) Nonlinear dynamics and chaos: with applications to physics, biology, chemistry, and engineering. Perseus Books, Cambridge

Stuart JT (1958) On the non-linear mechanics of hydrodynamic stability. J Fluid Mech 4(1):1–21. https://doi.org/10.1017/S0022112058000276

Tichko P, Large EW (2019) Modeling infants’ perceptual narrowing to musical rhythms: neural oscillation and Hebbian plasticity. Ann N Y Acad Sci 1453(1):125–139. https://doi.org/10.1111/nyas.14050

Wilson HR, Cowan JD (1972) Excitatory and inhibitory interactions in localized populations of model neurons. Biophys J 12:1–24. https://doi.org/10.1016/S0006-3495(72)86068-5

Zanone PG, Kelso JAS (1992) Evolution of behavioral attractors with learning: Nonequilibrium phase transitions. J Exp Psychol Hum Percept Perform 18(2):403–421. https://doi.org/10.1037/0096-1523.18.2.403

Acknowledgements

The authors thank Parker Tichko, Karl Lerud, and two anonymous reviewers for their helpful comments on the previous versions of the paper.

Funding

Early stages of this work were supported by National Science Foundation BCS-1027761 and Air Force Office of Scientific Research FA9550-12-10388.

Ethics declarations

Conflict of interest

The authors declare that they have no conflict of interest.

Additional information

Communicated by Benjamin Lindner.

Publisher's Note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Cite this article

Kim, J.C., Large, E.W. Multifrequency Hebbian plasticity in coupled neural oscillators. Biol Cybern 115, 43–57 (2021). https://doi.org/10.1007/s00422-020-00854-6

DOI: https://doi.org/10.1007/s00422-020-00854-6