Abstract

In this paper we obtain the following stability result for periodic multi-solitons of the KdV equation: We prove that under any given semilinear Hamiltonian perturbation of small size \(\varepsilon > 0\), a large class of periodic multi-solitons of the KdV equation, including ones of large amplitude, are orbitally stable for a time interval of length at least \(O(\varepsilon ^{-2})\). To the best of our knowledge, this is the first stability result of such type for periodic multi-solitons of large size of an integrable PDE.


1 Introduction

The Korteweg-de Vries (KdV) equation

is one of the most important model equations for describing dispersive phenomena. It is named after the two Dutch mathematicians Korteweg and de Vries [29] (cf. also Boussinesq [14], Rayleigh [40]) and was originally proposed as a model equation in one space dimension for long surface waves of water in a narrow and shallow channel. Today it is used in many branches of physics as well as in the engineering sciences. The seminal discovery in the late sixties that (1.1) admits infinitely many conservation laws ([34, 38]), and the development of the inverse scattering transform method ([24]) led to the modern theory of integrable systems of finite and infinite dimension (see e.g. [20, 22], and references therein). More recently, as one of the most prominent examples among dispersive equations, (1.1) played a major role in the development of the theory of dispersive PDEs to which many of the leading analysts of our times contributed. In particular, the (global in time) well-posedness theory of (1.1) has been established in various setups in great detail; see [19].

A distinguished feature of Eq. (1.1) is the existence of sharply localized traveling wave solutions of arbitrarily large amplitude and particle-like properties. Kruskal and Zabusky, who studied them in numerical experiments in the early sixties (cf. [30]), coined the name ‘soliton’ for them. More generally, they found solutions which are localized near finitely many points in space, referred to as multi-solitons. In the periodic setup, these solutions are often referred to as periodic multi-solitons or finite gap solutions. Due to their importance in applications, various stability aspects have been considered, such as the long time asymptotics of solutions with initial data near (periodic) multi-solitons (orbital stability, soliton resolution conjecture). Two major questions arise in connection with the structural stability of (1.1). One of them concerns the persistence of the (periodic) multi-solitons under perturbations of (1.1), and the other one concerns the long time asymptotics of solutions of perturbations of (1.1) with initial data close to a (periodic) multi-soliton. In the periodic setup, the first question has been studied quite extensively by developing for PDEs the KAM methods pioneered by Kolmogorov, Arnold, and Moser to treat perturbations of finite dimensional integrable systems (cf. [1, 8, 12, 15, 28, 31, 32, 33, 35, 39, 41], and references therein), whereas the second one turned out to be quite challenging and little is known so far. Our goal is to address this longstanding open problem.

The aim of this paper is to study in the periodic setup the long time asymptotics of the solutions of Hamiltonian perturbations of (1.1) with initial data close to a periodic multi-soliton of arbitrarily large amplitude. To describe the class of perturbations considered, recall that (1.1) with the space periodic variable \(x \in {\mathbb {T}}_1:= {\mathbb {R}}/ {\mathbb {Z}}\) can be written in Hamiltonian form

where \(\nabla H^{kdv}(u)\) denotes the \(L^2-\)gradient of \(H^{kdv}\) and where \(\partial _x\) is the Poisson structure, corresponding to the Poisson bracket defined for functionals F, G by

We consider semilinear Hamiltonian perturbations of (1.1) of the form

where \(0< \varepsilon <1\) is a small parameter and F is a semilinear Hamiltonian vector field

Here \(P_f\) is a Hamiltonian of the form

and f a \(C^{\infty }-\)smooth density

so that with \(f'(x, \zeta ) := \partial _\zeta f(x, \zeta )\) and \(f''(x, \zeta ) := \partial ^2_\zeta f(x, \zeta )\),
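The vector field \(F\) can be made explicit by a short computation. The following is a sketch, assuming (consistently with item (v) of the Comments on Theorem 1.1) that \(P_f(u) = \int_0^1 f(x, u(x))\, d x\) and that \(F(u) = \partial _x \nabla P_f(u)\). The mean-value correction of the gradient on \(L^2_0\) is constant in x and is therefore annihilated by \(\partial _x\).

\[
\begin{aligned}
d P_f(u)[h] &= \int _0^1 f'(x, u(x))\, h(x)\, d x , \qquad \nabla P_f(u) = f'(x, u(x)) \quad (\text{up to an additive constant}),\\
F(u) &= \partial _x \big ( f'(x, u(x)) \big ) = (\partial _x f')(x, u(x)) + f''(x, u(x))\, \partial _x u(x) .
\end{aligned}
\]

Under these assumptions, \(F(u)\) contains at most one derivative of u, which is presumably the sense in which the perturbation is called semilinear.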

To state our main results, we first need to introduce some more notations. Since \(u \mapsto \langle u \rangle _x := \int _0^1 u \, d x\) is a Casimir for the Poisson bracket (1.3) and hence a prime integral of (1.4), we restrict our attention to spaces of functions with zero mean (cf. [28], Section 13) and choose as phase spaces of (1.4) the scale of Sobolev spaces \(H^s_0({\mathbb {T}}_1)\), \(s \in {\mathbb {Z}}_{\ge 0}\),

where

and

On \(L^2_0({\mathbb {T}}_1)\), the Poisson structure \(\partial _x\) is nondegenerate and the corresponding symplectic form is given by

Note that the Hamiltonian vector field \( X_H (u) = \partial _x \nabla H (u) \), associated with the Hamiltonian H, satisfies \( d H (u)[ \cdot ] = {{\mathcal {W}}}_{L^2_0} ( X_H , \cdot ) \).
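As a consistency check of these conventions, here is a minimal sketch. It assumes that the Poisson bracket has the standard Gardner form \(\{ F, G \} = \int _0^1 \nabla F \, \partial _x \nabla G \, d x\) and that the symplectic form is \({{\mathcal {W}}}_{L^2_0}(u, v) = \int _0^1 (\partial _x^{-1} u)\, v \, d x\), where \(\partial _x^{-1}\) denotes the zero-mean antiderivative. Both formulas are assumptions made for this illustration.

\[
\begin{aligned}
\{ F, \langle u \rangle _x \} &= \int _0^1 \nabla F \, \partial _x (1) \, d x = 0 \qquad \text{for any functional } F,\\
{{\mathcal {W}}}_{L^2_0}\big ( X_H(u), h \big ) &= \int _0^1 \partial _x^{-1}\big ( \partial _x \nabla H(u) \big )\, h \, d x = \int _0^1 \nabla H(u)\, h \, d x = d H(u)[h], \qquad h \in L^2_0({\mathbb {T}}_1).
\end{aligned}
\]

The first line expresses that the mean is a Casimir, since its \(L^2\)-gradient is the constant function 1. In the second line, \(\partial _x^{-1} \partial _x \nabla H(u)\) differs from \(\nabla H(u)\) only by a constant, which drops out because h has zero mean.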

Our results can informally be stated as follows: for any \(f \in C^{\infty }({\mathbb {T}}_1 \times {\mathbb {R}})\), s sufficiently large, \( \varepsilon > 0\) sufficiently small, and for most of the finite gap solutions \(q: t \mapsto q(t, \cdot )\) of (1.1), the following holds: for any initial data \(u_0 \in H^s_0({\mathbb {T}}_1)\), which is \(\varepsilon \)-close in \(H^s_0({\mathbb {T}}_1)\) to the orbit \({\mathcal {O}}_q := \{ q(t, \cdot ) : \, t \in {\mathbb {R}}\}\) of q, the perturbed equation (1.4) admits a unique solution \(t \mapsto u(t, \cdot )\) in \(H^s_0({\mathbb {T}}_1)\) with initial data \(u(0, \cdot ) = u_0\) and life span at least \([- T, T]\), \(T = O(\varepsilon ^{-2})\). The solution \(u(t, \cdot )\) stays \(\varepsilon \)-close in \(H^s_0({\mathbb {T}}_1)\) to the orbit \({\mathcal {O}}_q \).

To state our results in precise terms, we need to define the notion of finite gap solution and the invariant tori, on which they evolve, and explain for which of these solutions the above stability results hold. Since these finite gap solutions are not small, we need to introduce coordinates to describe them. Most conveniently, this can be done in terms of a Euclidean version of action angle coordinates, referred to as Birkhoff coordinates. Let us now explain this in detail.

According to [28], the KdV equation (1.2) on \({\mathbb {T}}_1\) is an integrable PDE in the strongest possible sense, meaning that it admits globally defined canonical coordinates on \(L^2_0({\mathbb {T}}_1)\) so that when expressed in these coordinates, (1.2) can be solved by quadrature.

To describe these coordinates in more detail, we introduce for any \(s \in {\mathbb {Z}}_{\ge 0}\) the weighted \(\ell ^2-\)sequence spaces

where \( h^s_{0, c} \equiv h^s({\mathbb {Z}}{\setminus } \{ 0 \}, {\mathbb {C}}) \) is given by

By [28] there exists a real analytic diffeomorphism, referred to as (complex) Birkhoff map,

which is canonical in the sense that

whereas the brackets between all other coordinate functions vanish, and which has the property that for any \(s \in {\mathbb {N}}\), the restriction of \(\Phi ^{kdv}\) to \(H^s_0({\mathbb {T}}_1)\) is a real analytic diffeomorphism with range \(h^s_0\), \(\Phi ^{kdv} : H^s_0({\mathbb {T}}_1) \rightarrow h^s_0\), so that the KdV Hamiltonian, when expressed in the coordinates \(w_n,\) \(n \ne 0,\) is in normal form. More precisely,

is a real analytic function \( {{{\mathcal {H}}}}^{kdv }\) of the actions \(I(w)= (I_n(w))_{n \ge 1}\) alone,

where \(\ell ^{1,3}_+\) denotes the positive quadrant of the weighted \(\ell ^1-\)sequence space,

Equation (1.2), when expressed in the coordinates \(w_n\), \(n \ne 0\), then takes the form

where \(\omega _n^{kdv}(I)\), \(n \ne 0\), denote the KdV frequencies

Since by (1.10) the action variables Poisson commute, \(\{ I_n, I_m \} = 0\), \(\forall n, m \ge 1\), it follows that they are prime integrals of (1.2) and so are the frequencies \( \omega _n^{kdv}(I)\), \(n \ne 0\). As a consequence, (1.11) can be solved by quadrature. Finally, the differential \(d_0 \Phi ^{kdv} : L^2_0({\mathbb {T}}_1) \rightarrow \ell ^2_0\) of \(\Phi ^{kdv}\) at \(q = 0\) is the Fourier transform (cf. [28], Theorem 9.8)

and hence \(d_0 \Psi ^{kdv}\) is given by the inverse Fourier transform \({{\mathcal {F}}}^{- 1}\). We remark that the coordinates \(w_{\pm n} \equiv w_{\pm n}(q)\), referred to as (complex) Birkhoff coordinates, are related to the (real) Birkhoff coordinates \(x_n,\) \(y_n\), \(n \ge 1\), introduced in [28], by

where \(\sqrt{\cdot }\) denotes the principal branch of the square root, \(\sqrt{\cdot } \equiv \root + \of {\cdot }\,\).
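The solution by quadrature mentioned above can be written out explicitly. The following is a sketch, assuming that (1.11) has the form \(\partial _t w_n = \mathrm{i}\, \omega _n^{kdv}(I)\, w_n\), \(n \ne 0\); the sign convention is an assumption.

\[
\frac{d}{d t}\, I_n(w(t)) = 0 \ \ \Longrightarrow \ \ \omega _n^{kdv}\big ( I(w(t)) \big ) = \omega _n^{kdv}\big ( I(w(0)) \big ) =: \omega _n , \qquad w_n(t) = e^{\mathrm{i}\, \omega _n t}\, w_n(0), \quad n \ne 0 .
\]

Since the frequencies are constant along each solution, every coordinate solves a linear scalar equation with constant coefficient and rotates with the frequency determined by the initial actions.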

The Birkhoff coordinates are well suited to describe the finite gap solutions of (1.2). For any finite subset \(S_+ \subseteq {\mathbb {N}}\), let

We denote by \(M_S\) the submanifold of \(L^2_0({\mathbb {T}}_1) \), given by

whose elements are referred to as S-gap potentials, and by \(M_S^o\) the open subset of \(M_S\), consisting of the so called proper S-gap potentials,

Note that \(M_S\) is contained in \(\cap _{s \ge 0} H^s_0({\mathbb {T}}_1)\) and hence consists of \(C^\infty \)-smooth potentials and that \(M_S^o\) can be parametrized by the action-angle coordinates \( \theta = (\theta _k)_{k \in S_+} \in {\mathbb {T}}^{S_+},\) and \(I = (I_k)_{k \in S_+} \in {\mathbb {R}}^{S_+}_{> 0}\),

where \({\mathbb {T}}:= {\mathbb {R}}/ 2 \pi {\mathbb {Z}}\) and \(w(\theta , I) = (w_n(\theta , I))_{n \ne 0}\) is defined by

Introduce

For notational convenience, we view \({{\mathcal {M}}}_S^o \times h^s_\bot \) as a subset of \(h^s_0\). Its elements are denoted by

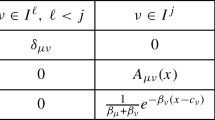

and it is endowed with the canonical Poisson bracket, given by

whereas the brackets between all other coordinate functions vanish.

It is convenient to introduce the frequency vector \(\omega (I)\) (cf. (1.12)),

By [11], the action to frequency map \(\omega : {\mathbb {R}}^{S_+}_{> 0} \rightarrow {\mathbb {R}}^{S_+},\) \(I \mapsto \omega (I)\), is a local diffeomorphism. Throughout the paper, we denote by \(\Xi \subset {\mathbb {R}}^{S_+}_{> 0}\) the closure of a bounded, open, nonempty set so that the restriction of \(\omega \) to \(\Xi \) is a diffeomorphism onto its image \(\Pi := \omega (\Xi )\) and so that for some \(\delta > 0,\)

where \(B_{S_+}(\delta )\) is the ball in \({\mathbb {R}}^{S_+}\) of radius \(\delta > 0\), centered at the origin. We remark that for any \(I \in \Xi + B_{S_+}(\delta )\), the nth action \(I_n = I_n(w)\), \(n \in S_+\), is of the form \( I_n(w) = I_n^{(0)} +y\) where \(I_n^{(0)}:= 2 \pi n w^{(0)}_n w^{(0)}_{- n} \in \Xi \) and

The inverse of \(\omega : \Xi \rightarrow \Pi \) is denoted by \(\mu \),

In what follows, we will consider the frequency vector \(\omega \) as a parameter. For any \(\omega \in \Pi ,\) an \(S-\)gap solution of (1.2) is defined as a solution of the form

whereas a finite gap solution of (1.2) is a solution of the form (1.16) for some \(S = S_+ \cup (- S_+)\) with \(S_+ \subset {\mathbb {N}}\) finite. The \(S-\)gap solution \(t \mapsto q(t, x; \omega )\) is a curve on the \(|S_+|-\)dimensional torus

We note that \({\mathfrak {T}}_{\mu (\omega )}\) is invariant under (1.2) and Lyapunov stable in \(H^s_0({\mathbb {T}}_1)\) for any \( s \ge 0\). More precisely, for any \(\varepsilon > 0\) there exists \(\delta > 0\), depending on s, so that for any initial data \(u_0 \in H^s_0({\mathbb {T}}_1)\) with

the solution \(u(t, \cdot )\) of (1.2) with \(u(0, \cdot ) = u_0\) satisfies

Finally, we introduce the so called normal frequencies,

and for any given \(\tau > |S_+| \), the subsets \(\Pi _\gamma \) of \(\Pi \),

where \(\Pi _\gamma ^{(i)}\), \(0 \le i \le 3\), are given by

Here we used the standard notation for vectors y in \({\mathbb {R}}^n\),

We refer to \(\Pi ^{(j)}_\gamma \), \(0 \le j \le 3\), as the jth Melnikov conditions and note that the third Melnikov conditions allow for ’a loss of derivatives in space’—see item (ii) in Comments on Theorem 1.1 below.

The goal of this paper is to prove a long time stability result for finite gap solutions (1.16) of the Korteweg-de Vries equation on \({\mathbb {T}}_1\). To state it, we denote for any Banach space X with norm \(\Vert \cdot \Vert _X\), integer \(m \ge 0\), and interval \(J \subset {\mathbb {R}}\), by \(C^m(J, X)\) the Banach space of functions \(f: J \rightarrow X\), which are m times continuously differentiable, endowed with the supremum norm, \(\Vert f\Vert _{C^m_t} := \max _{0 \le j \le m} \sup _{t \in J} \Vert \partial _t^j f(t) \Vert _X\).

Theorem 1.1

Let f be a function in \( C^{\infty }({\mathbb {T}}_1 \times {\mathbb {R}})\) (cf. (1.6)), \(S_+\) be a finite subset of \({\mathbb {N}}\), and \(\tau \) be a number with \(\tau > |S_+| \) (cf. (1.20)). Then for any integer s sufficiently large and any \(\omega \in \Pi _\gamma \), \(0< \gamma < 1\), there exists \(0< \varepsilon _0 \equiv \varepsilon _0(s, \gamma ) < 1\) with the following properties: for any \(0 < \varepsilon \le \varepsilon _0\) and any initial data \(u_0 \in H^s_0({\mathbb {T}}_1)\), satisfying

equation (1.4) admits a unique solution

with initial data \(u(0, x) = u_0(x)\) and \(T \equiv T_{\varepsilon , s, \gamma } = O(\varepsilon ^{- 2})\). Moreover, u satisfies the estimate

where the distance function \(\mathrm{dist}_{H^s}\) is defined in (1.17). Furthermore, there exists \(0< \mathtt {a} < 1\) so that for any \(0< \gamma < 1,\) the Lebesgue measure \(|\Pi {\setminus } \Pi _\gamma |\) of \( \Pi {\setminus } \Pi _\gamma \) satisfies

Here and in the sequel, the notation \( h \lesssim _{\alpha , \ldots } g\) means that the real valued function h, depending on various variables, satisfies an estimate of the form \(h \le C g\) where g is also a real valued function, typically small, and the constant \(C>0\) only depends on the parameters \(\alpha , \ldots \). For notational convenience, the dependence of the constant C on f, \(S_+\), and \(\tau \) is not indicated.

Comments on Theorem 1.1

(i) Initial data. Note that the size of the distance of the initial value \(u_0\) to the considered \(S-\)gap solution of the KdV equation (cf. (1.22)) is assumed to be of the same order of magnitude as the size of the perturbation \(\varepsilon F(u)\) in (1.4).

(ii) Measure estimate (1.23). The proof of the measure estimates (1.23) requires that the third Melnikov conditions \(\Pi ^{(3)}_\gamma \) in (1.20) allow for a loss of derivatives in space. Furthermore, a key ingredient of the proof of (1.23) is the case \(n=3\) of Fermat’s Last Theorem, proved by Euler [21] (cf. Lemma 8.3).

(iii) Assumptions in Theorem 1.1. The results of Theorem 1.1 hold for any density \(f(x, \zeta )\) of class \({\mathcal {C}}^{\sigma }\) with \(\sigma \) sufficiently large. Furthermore, corresponding results hold for (invariant tori of) finite gap solutions of the KdV equation in the affine spaces \(c + H^s_0({\mathbb {T}}_1)\), \(c \in {\mathbb {R}}\). We assume in this paper that f is \({\mathcal {C}}^\infty -\)smooth and that \(c=0\) merely to simplify the exposition. In order to limit the size of the paper, we assume the perturbation \(\varepsilon F(u)\) to be semilinear (cf. (1.5)), leaving the case of a quasilinear one for future work. Most likely, the elaborate method designed in [23] will allow one to transform quasilinear perturbations into normal form while preserving the Hamiltonian structure of the equation.

(iv) Time of stability. It seems unlikely that the stability results of Theorem 1.1 in the generality stated are valid for time intervals of size larger than \(O(\varepsilon ^{-2})\) since the conditions, required to hold for the frequencies \(\Omega _j\), \(j \in S^\bot \), so that the normal form procedure could be implemented, are too strong. See Remark 8.1 at the end of Sect. 8. Actually, it might be possible that the (almost) resonances of the KdV frequencies of degree four can be used to prove instability results for solutions of the perturbed equation (1.4); see [16, 25] and references therein for related results for Schrödinger equations in two space dimensions.

(v) Conservation of momentum. If the density f of the perturbation \({P_f}(u) = \int _0^1 f(x, u(x))\, d x\) does not explicitly depend on x, then the momentum \(M(u) := \frac{1}{2} \int _{{\mathbb {T}}_1} u^2\, d x\) is a prime integral of Eq. (1.4). We plan to prove in future work that the stability time can be improved in such a case.

(vi) Integrable PDEs. The method of proof of Theorem 1.1 is quite general. We expect that for any integrable PDE, admitting coordinates of the type constructed in [27], a corresponding version of Theorem 1.1 holds, up to the measure estimates related to the nonresonance conditions for the frequencies of the integrable PDE considered. These estimates might require specific arithmetic properties of the frequencies; see item (ii) above.

To explain the main ideas of the proof, we first need to introduce some terminology and additional notations. They will be used throughout the paper.

Notations and terminology. For any finite subset \(S_+ \subset {\mathbb {N}}\), \(L^2_\bot ({\mathbb {T}}_1)\) is the subspace, given by

and \(\Pi _\bot \) denotes the \(L^2-\)orthogonal projector onto the subspace \( L^2_\bot ({\mathbb {T}}_1) \). For any \(s > 0\), we set

By \( {{\mathcal {E}}}_s\) we denote the phase space and by \(E_s\) the corresponding tangent space, given by

where \({\mathbb {T}}_1 = {\mathbb {R}}/ {\mathbb {Z}}\) and \({\mathbb {T}}= {\mathbb {R}}/ 2\pi {\mathbb {Z}}\). Elements of \({{\mathcal {E}}}\) are denoted by \({\mathfrak {x}} = (\theta , y , w)\) and the ones of its tangent space E by \( \widehat{ {\mathfrak {x}}} = ({{\widehat{\theta }}}, {\widehat{y}},{\widehat{w}})\). For \(s > 0\), \( H^{s}_\bot ({\mathbb {T}}_1)^*\) denotes the dual space of \( H^{s}_\bot ({\mathbb {T}}_1)\), which is canonically identified with the Sobolev space \( H^{-s}_\bot ({\mathbb {T}}_1)\) of distributions. The spaces \( {{\mathcal {E}}}_{-s} \) and \( E_{-s}\) are then defined as in (1.26). On E, we denote by \( \langle \cdot , \cdot \rangle _E\) the inner product defined by

where \( \langle \cdot , \cdot \rangle \) is the standard real scalar product on \(L^2_\bot \). For notational convenience, \(\Pi _\bot \) also denotes the projector of \(E_s\) onto its third component,

For any \(0< \delta < 1\), we denote by \(B_{S_+}(\delta )\) the open ball in \({\mathbb {R}}^{S_+}\) of radius \(\delta \) centered at 0 and by \(B_\bot ^s(\delta )\), \(s \ge 0\), the corresponding one in \(H^s_\bot ({\mathbb {T}}_1)\). For \(s=0\), we also write \(B_\bot (\delta )\) instead of \(B^0_\bot (\delta )\). These balls are used to define the following open neighborhoods in \({\mathcal {E}}_s\), \(s \ge 0\),

For notational convenience, often without stating it explicitly, \(\delta > 0\) will take on different values in the course of our arguments. In particular, \(\delta > 0\) typically will depend on s. (Note that by (1.15), the coordinates \(y= (y_n)_{n \in S_+}\) are of the same order as the coordinates \(w= (w_n)_{n \in S^\bot }\).)

For any \(k \ge 1, \) \(\partial _x^{-k} : L^2({\mathbb {T}}_1) \rightarrow L^2_0({\mathbb {T}}_1)\) is the linear operator, defined by
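In Fourier terms, a natural reading of this definition is the following. It is stated here as an assumption, chosen to be consistent with the range \(L^2_0({\mathbb {T}}_1)\) of the operator.

\[
\partial _x^{-k} \big [ e^{\mathrm{i} 2 \pi n x} \big ] = \frac{1}{(\mathrm{i} 2 \pi n)^k}\, e^{\mathrm{i} 2 \pi n x} \quad (n \ne 0), \qquad \partial _x^{-k} [ 1 ] = 0 .
\]

With this choice, \(\partial _x^{k}\, \partial _x^{-k} u = u - \langle u \rangle _x\) for any \(u \in L^2({\mathbb {T}}_1)\), so \(\partial _x^{-k}\) inverts \(\partial _x^k\) on functions of zero mean.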

The space \( {{\mathcal {V}}}^s(\delta )\) is endowed with the symplectic form

where \( {{\mathcal {W}}}_\bot \) is the restriction to \( L^2_\bot ({\mathbb {T}}_1) \) of the symplectic form \( {{\mathcal {W}}}_{L^2_0} \) defined in (1.9). Throughout the paper, the Hamiltonians considered depend on the small parameter \(\varepsilon \in [0, \varepsilon _0]\), \(0< \varepsilon _0 < 1\), and are \(C^\infty \)-smooth maps, \({{\mathcal {V}}}^s(\delta ) \times [0, \varepsilon _0] \rightarrow {\mathbb {R}}\). Given such a Hamiltonian H, we often do not indicate the dependence of H on the parameter \(\varepsilon \). The Hamiltonian vector field of H is denoted by \(X_H\). It is given by

where \({\mathcal {J}}\) is the Poisson structure, associated to the symplectic form \({\mathcal {W}}\),

and where \(\nabla _\bot H({\mathfrak {x}}) \equiv \nabla _w H({\mathfrak {x}})\) denotes the \(L^2-\)gradient of H with respect to the variable w. For notational convenience, we denote by \( \{ F, G \}\) the Poisson bracket corresponding to \({\mathcal {J}},\)

Given a Hamiltonian vector field \(X_F : {{\mathcal {V}}}^s(\delta ) \times [0, \varepsilon _0] \rightarrow E_s\) with Hamiltonian F, we denote by \(\Phi _F(\tau , \cdot )\) or \(\Phi _{X_F}(\tau , \cdot ) \) the flow generated by \(X_F\). For the vector fields \(X_F\) considered in this paper, there exists \(0< \delta ' < \delta \) so that for any \(\tau \in [- 1, 1]\), the flow map \( {\mathcal {V}}^s(\delta ') \rightarrow {\mathcal {V}}^s(\delta )\), \({\mathfrak {x}} \mapsto \Phi _F(\tau , {\mathfrak {x}})\) is well defined. The Taylor expansion of \(\tau \mapsto H \circ \Phi _F(\tau , {\mathfrak {x}})\) at \(\tau = 0\) can be computed as
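For orientation, this expansion can be sketched as follows, assuming the convention \(\{ H, F \} = d H [X_F]\) for the Poisson bracket; with the opposite sign convention, the odd-order terms change sign. By the chain rule, \(\frac{d}{d \tau } \big ( H \circ \Phi _F(\tau , {\mathfrak {x}}) \big ) = \{ H, F \} \circ \Phi _F(\tau , {\mathfrak {x}})\), and iterating this identity in Taylor's formula with integral remainder gives

\[
H \circ \Phi _F(\tau , {\mathfrak {x}}) = H({\mathfrak {x}}) + \tau \{ H, F \}({\mathfrak {x}}) + \frac{\tau ^2}{2} \{ \{ H, F \}, F \}({\mathfrak {x}}) + \frac{1}{2} \int _0^\tau (\tau - t)^2 \, \{ \{ \{ H, F \}, F \}, F \} \circ \Phi _F(t, {\mathfrak {x}}) \, d t .
\]

The order at which the expansion is truncated is a choice made for this illustration.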

We will also need to consider \(C^\infty \)-smooth vector fields, which are not necessarily Hamiltonian,

where \(X^{(\theta )}\), \(X^{(y)}\), and \(X^\bot \) are the components of X,

The corresponding flow is denoted by \(\Phi _X(\tau , \cdot )\). Again we will only consider vector fields X with the property that there exists \(0< \delta ' < \delta \) so that for any \(\tau \in [- 1, 1]\), \(\Phi _X(\tau , \cdot )\) is well defined on \({{\mathcal {V}}}^s(\delta ')\). Given two \(C^\infty \)-smooth vector fields \(X, Y : {{\mathcal {V}}}^s(\delta ) \times [0, \varepsilon _0] \rightarrow E_s\), the commutator [X, Y] is defined as

The pull-back of a vector field \(X : {{\mathcal {V}}}^s(\delta ) \rightarrow E_s\) by a \(C^\infty \)-smooth diffeomorphism \(\Phi : {{\mathcal {V}}}^s(\delta ') \rightarrow {{\mathcal {V}}}^s(\delta )\) is defined as,

If \( \Phi _\tau (\cdot ) \equiv \Phi _Y(\tau , \cdot )\) is the flow of a vector field Y, then the Taylor expansion of \(\tau \mapsto \Phi _\tau ^* X ({\mathfrak {x}})\) at \(\tau = 0\) reads

In the case \(\tau =1\), we will often write \(\Phi _Y^*X\) instead of \(\Phi _1^* X\). Clearly if \(X = X_H\), \(Y = X_F\) are Hamiltonian vector fields, then
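The relation between pull-backs and commutators can be sketched as follows. The conventions \(\Phi ^* X({\mathfrak {x}}) = (d \Phi ({\mathfrak {x}}))^{-1} X(\Phi ({\mathfrak {x}}))\) and \([X, Y] = d X [Y] - d Y [X]\) are assumptions made for this illustration; other sign conventions are in use.

\[
\begin{aligned}
\frac{d}{d \tau } \Phi _\tau ^* X &= \Phi _\tau ^* [X, Y], \qquad \Phi _\tau ^* X = X + \tau [X, Y] + \frac{\tau ^2}{2} [[X, Y], Y] + \frac{1}{2} \int _0^\tau (\tau - t)^2 \, \Phi _t^* [[[X, Y], Y], Y] \, d t ,\\
\Phi _{X_F}^* X_H &= X_{H \circ \Phi _F}, \qquad [X_H, X_F] = X_{\{ H, F \}} \quad \text{with } \{ H, F \} = d H[X_F].
\end{aligned}
\]

The first line follows from the group property \(\Phi _{\tau + h} = \Phi _h \circ \Phi _\tau \) and from \(\frac{d}{d \tau } (d \Phi _\tau )^{-1} \big |_{\tau = 0} = - d Y\). The second line uses that the flow of a Hamiltonian vector field is canonical, and compares the terms of first order in \(\tau \).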

Given two linear operators A, B, acting on \(L^2({\mathbb {T}}_1)\) (or \(L^2_\bot ({\mathbb {T}}_1)\)), their commutator is conveniently denoted by \([A, B]_{lin}\),

Moreover, given a densely defined linear operator \(A : L^2_\bot ({\mathbb {T}}_1) \rightarrow L^2_\bot ({\mathbb {T}}_1)\), whose domain contains the elements of the Fourier basis \(e^{\mathrm{i} 2 \pi j x}\), \(j \in S^\bot \), we denote by \(A_j^{j'}\) or \([A]_j^{j'}\) the (Fourier) matrix coefficients of A,

Given a Banach space \((X, \Vert \cdot \Vert _X)\), we denote by \(C^\infty _b ({{\mathcal {V}}}^s(\delta ) \times [0, \varepsilon _0], X)\) the space of \(C^\infty \) functions \({{\mathcal {V}}}^s(\delta ) \times [0, \varepsilon _0] \rightarrow X\) with all derivatives bounded.

In our normal form procedure, we need to take into account the order of vanishing with respect to the variables y, w and the small parameter \(\varepsilon \). The following definition turns out to be convenient.

Definition 1.1

Let \((B, \Vert \cdot \Vert _B)\) be a Banach space and \(p \in {\mathbb {Z}}_{\ge 0}\). A \(C^\infty \)-smooth map

is said to be small of order p if for any \( \beta \in {\mathbb {Z}}_{\ge 0}^{S_+}\) and \(k_1, k_2 \in {\mathbb {Z}}_{\ge 0}\) with \(|\beta | + k_1 + k_2 \le p - 1\)

Note that if g is small of order p, then

and for any \(\alpha \in {\mathbb {Z}}_{\ge 0}^{S_+}\), \(\partial _\theta ^\alpha g\) is small of order p as well.
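A map that is small of order \(p \ge 1\) is bounded by the pth power of the distance to the set \(\{ y = 0, \, w = 0, \, \varepsilon = 0 \}\). A sketch of why, assuming that the condition in Definition 1.1 is the vanishing \(\partial _y^\beta d_w^{k_1} \partial _\varepsilon ^{k_2} g(\theta , 0, 0, 0) = 0\) for \(|\beta | + k_1 + k_2 \le p - 1\), that the derivatives of g of order p are bounded, and writing \(\Vert w \Vert \) for the norm of the w-component:

\[
g(\theta , y, w, \varepsilon ) = \frac{1}{(p-1)!} \int _0^1 (1 - t)^{p-1} \, \frac{d^p}{d t^p}\, g(\theta , t y, t w, t \varepsilon ) \, d t , \qquad \Vert g(\theta , y, w, \varepsilon ) \Vert _B \lesssim \big ( |y| + \Vert w \Vert + \varepsilon \big )^p .
\]

The identity is Taylor's formula for \(t \mapsto g(\theta , t y, t w, t \varepsilon )\) on \([0, 1]\): all terms of order at most \(p - 1\) vanish by assumption, and the pth derivative in t is the pth differential of g in \((y, w, \varepsilon )\), evaluated p times on the vector \((y, w, \varepsilon )\).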

Given two Banach spaces \((X, \Vert \cdot \Vert _X)\), \((Y, \Vert \cdot \Vert _Y)\), we denote by \({{\mathcal {B}}}(X, Y)\) the space of bounded linear operators \(X \rightarrow Y\). If \(X = Y\), we write \({{\mathcal {B}}}(X)\) instead of \({{\mathcal {B}}}(X, X)\). Moreover for any integer \(p \ge 2\), we denote by \({{\mathcal {B}}}_p(X, Y)\), the space of bounded, p-multilinear maps \(M : X^p \rightarrow Y\), equipped with the standard norm,
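The standard norm referred to here is presumably the operator norm of a multilinear map. It is recalled as an assumption:

\[
\Vert M \Vert _{{{\mathcal {B}}}_p(X, Y)} := \sup \big \{ \Vert M[x_1, \ldots , x_p] \Vert _Y \, : \, \Vert x_1 \Vert _X, \ldots , \Vert x_p \Vert _X \le 1 \big \} ,
\]

so that \(\Vert M[x_1, \ldots , x_p] \Vert _Y \le \Vert M \Vert _{{{\mathcal {B}}}_p(X, Y)} \Vert x_1 \Vert _X \cdots \Vert x_p \Vert _X\) for all \(x_1, \ldots , x_p \in X\).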

If \(X = Y\), we write \({{\mathcal {B}}}_p(X)\) instead of \({{\mathcal {B}}}_p(X, X)\). Furthermore, given open sets \(U \subset X\) and \(V \subset Y\), we denote by \(C^\infty _b \big (U, V \big )\) the space of maps \(f: U \rightarrow V\) which are \(C^\infty \)-smooth and bounded together with all their derivatives.

Overview of the proof of Theorem 1.1. We prove Theorem 1.1 by means of a normal form procedure. A key ingredient is a set of canonical coordinates near a torus \({\mathfrak {T}}_{\mu (\omega )}\) of arbitrary size, constructed in [27]. They are obtained by first linearizing the Birkhoff map \(\Phi ^{kdv}\) at \({\mathfrak {T}}_{\mu (\omega )}\) and then constructing a symplectic corrector. The new coordinates yield a family of canonical transformations \(\Phi ^{kdv}_{\mu }\), parametrized by \(\mu \equiv \mu (\omega )\), \(\omega \in \Pi \). One of the main features of these transformations is that they admit expansions in terms of pseudo-differential operators up to a remainder of arbitrary negative order. To prove Theorem 1.1 we then follow a strategy developed in [7] in the context of water waves.

In a first step, referred to as Step 1, we write the perturbed Hamiltonian \(H^{kdv} + \varepsilon P_f\) in the new coordinates (cf. Theorem 4.1). More precisely, in Theorem 4.1, we rephrase [27, Theorem 1.1] in a form tailored to our needs and in Corollary 4.1, we compute for any given \(\mu \equiv \mu (\omega )\), \(\omega \in \Pi \), and \({\mathfrak {x}} = (\theta , y, w) \in {\mathcal {V}}^1(\delta )\) the Taylor expansion of \({{\mathcal {H}}}_{\varepsilon , \mu } := (H^{kdv} + \varepsilon P_f) \circ \Phi ^{kdv}_\mu \) at \((\theta , 0, 0)\) up to order three in the variables y, w, and \(\varepsilon \),

where \(\Omega _{S_+}(\omega )\) is given by the \(S_+ \times S_+\) matrix \((\partial _{I_j} \omega _i^{kdv}(\mu , 0))_{i, j \in S_+}\) and where \(D^{- 1}_\bot : L^2_\bot ({\mathbb {T}}_1) \rightarrow L^2_\bot ({\mathbb {T}}_1)\) and \(\Omega _\bot (\omega ) \equiv \Omega _{S^\bot }(\omega ): \, L^2_\bot ({\mathbb {T}}_1) \rightarrow L^2_\bot ({\mathbb {T}}_1)\) are Fourier multipliers in diagonal form,

with \( \Omega _n(\omega )\) given by (1.18). In order to simplify notation, in the sequel, we often will not indicate the dependence of quantities such as \({\mathcal {H}}_{\varepsilon , \mu }\), \({\mathcal {P}}_{\varepsilon , \mu }\), \(\Omega _\bot (\omega )\), \(\ldots \) on \(\varepsilon \), \(\mu \equiv \mu (\omega )\), and \(\omega \).

We note that \(\Omega _\bot \) is an unbounded operator. For any \({\mathfrak {x}} = (\theta , y, w)\), \({{\mathcal {P}}}({\mathfrak {x}}) \) can be expanded as

where \({{\mathcal {P}}}_{e}({\mathfrak {x}})\) is small of order three (cf. Definition 1.1). The Hamiltonian vector field \(X_{{\mathcal {H}}}\), associated to \({{\mathcal {H}}}\), is given at any point \({\mathfrak {x}} = (\theta , y, w)\) by

We also show that the normal component \(\partial _x \nabla _\bot {{\mathcal {P}}}_{e}\) of the Hamiltonian vector field \(X_{{\mathcal {P}}_{e}}\) is the sum of a para-differential vector field of order one (cf. Definition 3.1 in Sect. 3) and a smoothing vector field (cf. Definition 3.3 in Sect. 3), i.e., for \({\mathfrak {x}} = (\theta , y, w)\),

where for any \(0 \le k \le N + 1\), \(T_{a_{1 - k}({\mathfrak {x}})}\) is the operator of para-multiplication with \(a_{1 - k}({\mathfrak {x}}) \in H^s({\mathbb {T}}_1)\) (cf. (2.1) in Sect. 2), which is small of order one, and where \( {{\mathcal {R}}}^\bot _{N}({\mathfrak {x}})\) is a regularizing vector field, which is small of order two.

In Step 2, we apply a regularization procedure, which conjugates the vector field (1.44) to another one, which is a smoothing perturbation of a vector field in diagonal form. Since the torus \({\mathfrak {T}}_{\mu (\omega )}\) in the coordinates \((\theta , y, w)\) is described by \(\{ y = 0, w = 0 \}\), the variables y, w can be used to measure the distance of a solution of the equation

from \({\mathfrak {T}}_{\mu (\omega )}\). Theorem 1.1 follows from Theorem 4.2 in Sect. 4, which states that for \(\mu \) in a large subset of \(\Xi \) and for any initial data \({\mathfrak {x}}_0 = (\theta _0, y_0, w_0)\), satisfying \(|y_0|, \Vert w_0 \Vert _s \le \varepsilon \) with \(s > 0\) large enough, the solution \(t \mapsto {\mathfrak {x}}(t) = (\theta (t), y(t), w(t))\) of (1.46) exists on a time interval of the form \([- T, T]\) with \(T \equiv T_{\varepsilon , s, \gamma } = O(\varepsilon ^{- 2})\) and

We deduce Theorem 4.2 from Theorem 4.3 and a local existence Theorem (cf. Appendix C), using energy estimates (cf. Sect. 7). Theorem 4.3 provides coordinates having the property that the vector field in (1.46), when expressed in these coordinates, is a vector field \(X = (X^{(\theta )}, X^{(y)}, X^{\bot })\) with the following two features: (F1) The y-component \(X^{(y)}\) of X is small of order three. (F2) The normal component \(X^\bot ({\mathfrak {x}})\) of \(X({\mathfrak {x}})\) at \({\mathfrak {x}} = (\theta , y, w)\) reads

where \({\mathtt {D}}^\bot ({\mathfrak {x}})\) is a skew-adjoint Fourier multiplier of order one (depending nonlinearly on \({\mathfrak {x}}\)), \(a({\mathfrak {x}}) \in H^s({\mathbb {T}}_1)\) is small of order two, and the remainder \({{\mathcal {R}}}^\bot ({\mathfrak {x}})\) is small of order three. In broad terms, our normal form procedure diagonalizes the normal component \(X^\bot \) of the vector field X up to a term, which is small of order three and which can be controlled by energy estimates. The procedure consists in eliminating/normalizing the terms of the Taylor expansion (1.40)–(1.43) of \(X_{{\mathcal {H}}}\), which are p-homogeneous in y, w, \(\varepsilon \) with \(0 \le p \le 2\) (cf. Definition 1.1).
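The features (F1) and (F2) explain heuristically why the stability time is of size \(\varepsilon ^{-2}\). The following back-of-the-envelope sketch is not the argument of Sect. 7. It assumes that the energy estimates yield a differential inequality for a quantity \(M(t)\) comparable to \(|y(t)| + \Vert w(t) \Vert _s + \varepsilon \), with a hypothetical constant \(C > 0\). The reasoning behind this assumption is that a skew-adjoint Fourier multiplier does not contribute to \(\frac{d}{d t} \Vert w \Vert _s^2\), that a para-product term with a coefficient small of order two is expected to contribute at most \(M^2 \Vert w \Vert _s^2\), and that the terms small of order three contribute at most \(M^3\) to the growth of \(|y|\) and \(\Vert w \Vert _s\).

\[
\Big | \frac{d}{d t} M \Big | \le C M^3, \quad M(0) \le 2 \varepsilon \quad \Longrightarrow \quad \frac{1}{M(t)^2} \ge \frac{1}{4 \varepsilon ^2} - 2 C |t| \ge \frac{1}{8 \varepsilon ^2} \quad \text{for } |t| \le \frac{1}{16\, C\, \varepsilon ^{2}} ,
\]

so that \(M(t) \le 2 \sqrt{2}\, \varepsilon \) on a time interval of length of order \(\varepsilon ^{-2}\). If terms of order two were still present, the same computation would only give a time of order \(\varepsilon ^{-1}\), which is why the normal form procedure removes them.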

Based on the normal form procedure, developed in Sects. 5 and 6, Theorem 4.3 is proved in Sect. 7. In Sect. 8 we show that the Lebesgue measure \(|\Pi {\setminus } \Pi _\gamma |\) of \( \Pi {\setminus } \Pi _\gamma \) (cf. (1.20)) satisfies \(|\Pi {\setminus } \Pi _\gamma | \lesssim \gamma ^{\mathtt {a}} \) for some \(0< \mathtt {a} < 1\). As already mentioned in item (ii) of Comments on Theorem 1.1, a key ingredient of the proof is the case \(n=3\) of Fermat’s Last Theorem, proved by Euler [21] (cf. Lemma 8.3). Sections 2 and 3 are preliminary; there, para-differential calculus and para-differential vector fields are discussed to the extent needed in the paper.

We finish our overview of the proof of Theorem 1.1 by describing in some more detail the normal form procedure, developed in Sects. 5–6, to prove Theorem 4.3. In order to set up such a procedure in an effective way, we introduce, in the spirit of [7, 18, 23], various classes of para-differential and smoothing vector fields, which possibly depend in a nonlinear fashion on \({\mathfrak {x}} = (\theta , y, w)\), and develop a symbolic calculus for them (see Sect. 3). The order of homogeneity in our symbol classes is computed with respect to y, w, \(\varepsilon \) where we recall that y, w (together with \(\theta \)) are phase space variables and \(\varepsilon \) is the perturbation parameter appearing in (1.4) and (1.22). Our normal form procedure is split into two steps which we now describe.

In a first step, presented in Sect. 5, we normalize the terms in the Taylor expansion of the Hamiltonian \({{\mathcal {H}}}\), which are linear with respect to the normal variable w and homogeneous of order at most three in \((y, w, \varepsilon )\). Equivalently, this means that we normalize the terms in the Taylor expansion of the Hamiltonian vector field \(X_{{{\mathcal {H}}}}\) which do not contain w and are homogeneous of order at most two. This is achieved by a standard normal form procedure which consists in constructing a canonical transformation, given by the time one flow map \(\Phi _{\mathcal {F}}\) of a Hamiltonian vector field \(X_{{\mathcal {F}}}\) with a Hamiltonian \({\mathcal {F}}\) of the form

with the property that \(X_{{\mathcal {F}} }\) is a smoothing Hamiltonian vector field (cf. Lemma 3.19). Hence its flow is a smoothing perturbation of the identity, implying that the Hamiltonian vector field of the Hamiltonian \({{\mathcal {H}}} \circ \Phi _{{\mathcal {F}}}\) has a normal component, which is again of the form (1.45) (cf. Lemma 3.17). To construct \({\mathcal {F}}\), we only need to impose zeroth and first Melnikov conditions on \(\omega \), i.e., \(\omega \in \Pi _\gamma ^{(0)} \cap \Pi _\gamma ^{(1)}\) (cf. (1.20)). For notational convenience, the Hamiltonian vector field obtained in this way is again denoted by \(X = (X^{(\theta )}, X^{(y)}, X^\bot )\). The \(y-\)component \(X^{(y)}\) is small of order three and the normal component \(X^\bot \) of X at \({\mathfrak {x}} = (\theta , y, w)\) has the form

where

and for any \(0 \le k \le N + 1\), \(a_{1 - k} (\theta , y)\) is small of order one, \(w \mapsto A_{1 - k}(\theta )[w]\) is a linear operator, whereas \(w \mapsto {{\mathcal {R}}}^\bot _{N, 1}(\theta , y)[w]\) is a linear smoothing operator (smoothing of order \(N+1\)), and \(w \mapsto {{\mathcal {R}}}^\bot _{N, 2}(\theta )[w,w]\) is a quadratic smoothing operator (smoothing of order \(N+1\)). The term in (1.49), which is small of order three, is the sum of a para-differential vector field of order one and a smoothing vector field.

The second step of our normal form procedure is developed in Sect. 6. Since \(\Pi _\gamma ^{(3)}\) (cf. (1.20)) allows for a loss of derivatives in space, we first need to reduce the terms in the Taylor expansion of the normal component \(X^\bot \) of X, which are linear and quadratic in w, to constant coefficients up to smoothing terms—see Sect. 6.1. This regularization procedure is achieved by constructing a transformation which is not canonical, but nevertheless preserves the following important property, needed for the energy estimates: the linearization of \(X^\bot \) at \(w = 0\) equals \(X^\bot _1(\theta , y)\) and hence is Hamiltonian. In particular, the diagonal elements of the Fourier matrix representation of the linear operator \(X_1^\bot (\theta , y)\) are purely imaginary,

We remark that in the spirit of [23], one could construct a canonical transformation, but the construction of the one in Sect. 6.1 is technically simpler and due to (1.51) suffices for our purposes.

We now describe the second step of our normal form procedure in more detail. We begin by normalizing the operator

in the expansion of the vector field \(X^\bot _1(\theta , y)[w] + X^\bot _2(\theta )[w, w]\) (cf. (1.49), (1.50)). We transform the vector field in (1.49) by means of the time one flow map \(\Phi _{Y}\) of the vector field

with b and B given by

(Recall that for \(a \in L^2({\mathbb {T}}_1)\), \(\langle a \rangle _x = \int _0^1 a\, d x\).) Note that b and B satisfy

For notational convenience, we denote the transformed vector field also by \(X_{1} = (X_{1}^{(\theta )}, \, X_{1}^{(y)}, \, X_{1}^\bot )\). We show that \(X_{1}^{(y)}\) is small of order three and that \( X_{1}^\bot (\theta , y, w)\) has the form

with

where for any \(1 \le k \le N+1 \), \(a_{1, 1 - k}(\theta , y)\) is small of order one and \(w \mapsto A_{1, 1 - k}(\theta )[w]\) is a linear operator. Furthermore, \({{\mathcal {R}}}_{N, 1}^{\bot }(\theta , y)\) is a smoothing linear operator and \({{\mathcal {R}}}_{N, 2}^{\bot }(\theta )\) is a smoothing bilinear operator. The term in (1.54), which is small of order three, is the sum of a para-differential vector field of order one and a smoothing vector field. We also show that the linear vector field \(X_{1, 1}^{\bot }(\theta , y)[w] \) in (1.54) satisfies the property (1.51), i.e., \([X_{1, 1}^{\bot }(\theta , y)]_j^j \in \mathrm{i} {\mathbb {R}}\) for any \(j \in S^\bot \), and that the Fourier multiplier \({{\mathcal {D}}}^{\bot }_{1, 1}(\theta , y)\) is skew-adjoint. By iterating this procedure \(N + 2\) times, one gets a vector field, which we denote by \(X_{4} = (X_{4}^{(\theta )}, X_{4}^{(y)}, X_{4}^\bot )\) (cf. Proposition 6.1), with the following properties: \(X_{4}^{(y)}\) is small of order three and \(X_{4}^\bot (\theta , y, w) \) has the form

Here \({{\mathcal {D}}}_{4, 1}^{\bot }(\theta , y)\) and \({{\mathcal {D}}}_{ 4, 2}^{\bot }(\theta , w)\) are Fourier multipliers of the form

where for any \(0 \le k \le N +1\), \(\lambda _{1 - k}(\theta , y) \in {\mathbb {R}}\) is small of order one and \(w \mapsto \Lambda ^\bot _{1 - k}(\theta )[w] \in {\mathbb {R}}\) is a linear operator. The remainder \({{\mathcal {R}}}_{N, 1}^{\bot }(\theta , y)\) is a smoothing linear operator and \({{\mathcal {R}}}_{N, 2}^{\bot }(\theta )\) is a smoothing bilinear operator. In addition, the Fourier multiplier \({{\mathcal {D}}}_{4, 1}^{\bot }(\theta , y)\) is skew-adjoint. Moreover we show that

Since the transformation \(\Phi _Y\) and the subsequent transformations constructed in the iterative procedure are not canonical, the linear operator \( {{\mathcal {D}}}_{4, 2}^{\bot }(\theta , w)\) is not necessarily skew-adjoint. However, the leading order term \(\Lambda ^\bot _1(\theta )[w] \partial _x\) of \( {{\mathcal {D}}}_{4, 2}^{\bot }(\theta , w)\) is skew-adjoint since \(\Lambda ^\bot _1(\theta )[w]\in {\mathbb {R}}\).
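The role of the parity of the order in this observation can be made explicit by a short computation. It is only a sketch under two assumptions made here for illustration: the Fourier convention \(\partial _x^m e^{\mathrm{i} 2 \pi n x} = (\mathrm{i} 2 \pi n)^m e^{\mathrm{i} 2 \pi n x}\) for \(n \ne 0\), and a real, x-independent coefficient \(\lambda \). Under these assumptions, for any \(m \in {\mathbb {Z}}\) and \(n \ne 0\),

$$\begin{aligned} \overline{\lambda \, (\mathrm{i} 2 \pi n)^m} = (-1)^m \, \lambda \, (\mathrm{i} 2 \pi n)^m , \qquad \text {hence} \qquad (\lambda \partial _x^m)^\top = (-1)^m \, \lambda \partial _x^m . \end{aligned}$$

Thus a Fourier multiplier \(\lambda \partial _x^m\) with \(\lambda \in {\mathbb {R}}\) is skew-adjoint when m is odd (as for the leading term \(\Lambda ^\bot _1(\theta )[w] \partial _x\)) and symmetric when m is even, which is consistent with the fact that real coefficients alone do not force the lower order terms of even order to be skew-adjoint.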

In Sect. 6.2 we design a normal form procedure to remove

from \( {{\mathcal {D}}}_{4, 2}^{\bot }(\theta , w)\), which requires imposing first Melnikov conditions on \(\omega \) (cf. definition (1.20) of \(\Pi _\gamma ^{(1)}\)). We transform the vector field \(X_{4}\) (cf. (1.55)) by means of the time one flow map of a vector field, which in view of (1.58) is chosen to be of the form

where for any \(1 \le k \le N +1\), the linear functional \(w \mapsto \Xi ^\bot _{1 - k}(\theta )[w]\) is a solution of

The latter equation can be solved if \(\omega \in \Pi _\gamma ^{(1)}\) (first Melnikov conditions). The transformed vector field is denoted by \(X_{5} = (X_{5}^{(\theta )}, X_{5}^{(y)}, X_{5}^\bot )\). We show that \(X_{5}^{(y)}\) is small of order three and that \(X_{5}^\bot (\theta , y, w)\) has the form

where

and \({{\mathcal {R}}}_{N, 1}^\bot \), \({{\mathcal {R}}}_{N, 2}^\bot \) are as in (1.55). Clearly, the Fourier multiplier \({{\mathcal {D}}}^\bot _{5}({\mathfrak {x}})\) is skew-adjoint.
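The way a nonresonance condition enters the solution of an equation such as the one for \(\Xi ^\bot _{1 - k}\) can be illustrated on a simplified model. The sketch below is not the equation of this paper: it solves the scalar model problem \(\omega \cdot \partial _\theta f = g\) on a torus, with functions expanded in \(e^{\mathrm{i} \ell \cdot \theta }\) and a Diophantine-type lower bound \(|\omega \cdot \ell | \ge \gamma / |\ell |^\tau \) standing in for the Melnikov conditions. The function names, the Fourier convention, and the form of the lower bound are assumptions made for illustration.

```python
def small_divisor(omega, ell):
    """Return omega . ell for a frequency vector omega and an integer vector ell."""
    return sum(w * k for w, k in zip(omega, ell))


def solve_homological(g, omega, gamma, tau):
    """Solve the model equation omega . d_theta f = g mode by mode.

    g maps integer tuples ell to Fourier coefficients (convention exp(i ell.theta)).
    A ValueError is raised if g has a nonzero average or if a divisor violates
    the illustrative condition |omega . ell| >= gamma / |ell|^tau.
    """
    f = {}
    for ell, g_ell in g.items():
        if all(k == 0 for k in ell):
            if g_ell != 0:
                raise ValueError("g must have zero average")
            continue
        divisor = small_divisor(omega, ell)
        size = sum(abs(k) for k in ell)
        if abs(divisor) < gamma / size ** tau:
            raise ValueError(f"nonresonance condition violated at ell={ell}")
        # d_theta acts on exp(i ell.theta) as multiplication by i * ell
        f[ell] = g_ell / (1j * divisor)
    return f


def apply_omega_dtheta(f, omega):
    """Apply omega . d_theta to a function given by its Fourier coefficients."""
    return {ell: 1j * small_divisor(omega, ell) * c for ell, c in f.items()}
```

Applying `apply_omega_dtheta` to the output of `solve_homological` recovers `g`, while a resonant frequency vector such as `(1.0, 1.0)` with a mode `(1, -1)` makes the solver fail, which is the situation the restriction \(\omega \in \Pi _\gamma ^{(1)}\) is designed to exclude.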

Finally in Sect. 6.3 we normalize the term in the Taylor expansion of the \(\theta \)-component \(X_{5}^{(\theta )}\) of \(X_{5}\), which is quadratic in w, and normalize the smoothing vector fields \({{\mathcal {R}}}_{N, 1}^\bot \) and \({{\mathcal {R}}}_{N, 2}^\bot \) in \(X^\bot _5\). Let us explain in more detail how to achieve the latter. We transform the vector field \(X_{5}\) by the time one flow map generated by the vector field

where \({{\mathcal {S}}}^\bot _1(\theta , y)\) is a smoothing linear operator and \({{\mathcal {S}}}^\bot _2(\theta )\) is a smoothing bilinear operator. They are chosen to be solutions of

and, respectively,

where

Equation (1.64) can be solved by imposing the second Melnikov conditions on \( \omega \), i.e., \(\omega \in \Pi _\gamma ^{(2)}\), and Eq. (1.65) by imposing the third Melnikov conditions, \(\omega \in \Pi _\gamma ^{(3)}\) (see Lemma 6.1). Note that in Eq. (1.65), the right hand side vanishes, meaning that the left hand side does not contain any resonant terms. Finally we get a vector field \(X_{6} = (X_{6}^{(\theta )}, X_{6}^{(y)}, X_{6}^\bot )\) where \(X_{6}^{(y)}\) is small of order three and \(X_{6}^\bot ({\mathfrak {x}})\) has the form

By the property (1.57) and the definition (1.66) of \({{\mathcal {Z}}}^\bot (y)\), it follows that \({{\mathcal {Z}}}^\bot (y)\) and hence \( {{\mathcal {D}}}^\bot _{5}({\mathfrak {x}}) + {{\mathcal {Z}}}^\bot (y)\) are skew-adjoint Fourier multipliers. Finally one shows that \(X_{6}^\bot \) in (1.67) has the form stated in (1.47).

Related work. Prior to our work, no results have been obtained on the long time asymptotics of the solutions of Hamiltonian perturbations of integrable PDEs such as the KdV or the nonlinear Schrödinger equation on \({\mathbb {T}}_1\) with initial data close to a periodic multi-soliton of possibly large amplitude. For Hamiltonian perturbations of linear integrable PDEs on \({\mathbb {T}}_1\), which satisfy nonresonance conditions, a by now standard normal form method has been developed allowing to prove the stability of the equilibrium solution \(u\equiv 0\) of (Hamiltonian) perturbations for time intervals of large size—see e.g. [2,3,4, 7, 13, 17, 18, 23, 37] and references therein. More recently, these techniques have been refined so that in specific cases, such results can also be proved for Hamiltonian perturbations of resonant linear integrable PDEs by approximating the perturbed equation by nonlinear integrable systems, satisfying nonresonance conditions—see [5, 13] for Hamiltonian perturbations of the linear Schrödinger equation and [6] for such perturbations of the Airy equation as well as the linearized Benjamin-Ono equation. We remark that for the Airy equation, the Hamiltonian perturbations considered in [6] are of the form \(\partial _x \nabla P_f\) (cf. (1.6)–(1.7)) with the density f(u(x)) not explicitly depending on x and f(z) being analytic in a neighborhood of \(z=0\) in \({\mathbb {C}}\).

Finally, we mention the recent paper [8] where it is proved by KAM methods that many periodic multi-solitons persist under quasi-linear perturbations of the KdV equation. As in this paper, the normal form coordinates constructed in [27] are a key ingredient.

2 Para-Differential Calculus

In this section we review some standard notions and results of the para-differential calculus, needed throughout the paper. For details we refer to [37].

We begin by reviewing the notion of para-product. To this end we need the following

Definition 2.1

A function \(\psi \in C^\infty ({\mathbb {R}}\times {\mathbb {R}})\) is said to be an admissible cut-off function, if there exist \(0< \varepsilon '< \varepsilon <1\) so that

and

where by (1.21) \(\langle \xi \rangle = \max \{1, |\xi | \}\).

Given a cut-off function \(\psi \) as in Definition 2.1, the para-product \(T_a u\) of a function \(a\in H^{1}({\mathbb {T}}_1)\) with a function \(u \in H^s({\mathbb {T}}_1)\), \(s \ge 1\), is defined as

where \({\widehat{a}}(\eta )\), also denoted by \(a_\eta \), is the \(\eta \)th Fourier coefficient of a,

Lemma 2.1

For any \(a \in H^{1}({\mathbb {T}}_1)\) and \(s \ge 1\), \(T_a\) is in \({{\mathcal {B}}}(H^s({\mathbb {T}}_1), H^s({\mathbb {T}}_1))\) and

Furthermore, for any \(s \ge 1\), the map \(H^1({\mathbb {T}}_1) \rightarrow {{\mathcal {B}}}(H^s({\mathbb {T}}_1), H^s({\mathbb {T}}_1)), \, a \mapsto T_a\), is linear.
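The action of \(T_a\) on Fourier coefficients can be sketched numerically. The code below is only an illustration under stated assumptions: functions on \({\mathbb {T}}_1\) are represented by dictionaries mapping a frequency to its Fourier coefficient, the para-product is taken to act as \(\sum _{\eta , \xi } \psi (\eta , \xi )\, a_\eta \, u_\xi \, e^{\mathrm{i} 2 \pi (\eta + \xi ) x}\), and the smooth admissible cut-off \(\psi \) is replaced by a sharp cut-off (which is not \(C^\infty \) and hence not admissible in the sense of Definition 2.1). The names `bracket`, `sharp_cutoff`, and `paraproduct` are hypothetical.

```python
def bracket(xi):
    """Return <xi> = max(1, |xi|)."""
    return max(1.0, abs(xi))


def sharp_cutoff(eta, xi, eps=0.25):
    """Stand-in for psi(eta, xi): 1 if |eta| <= eps * <xi>, else 0.

    This sharp cut-off is an assumption for illustration; the paper uses a
    smooth admissible cut-off function.
    """
    return 1.0 if abs(eta) <= eps * bracket(xi) else 0.0


def paraproduct(a, u, psi=sharp_cutoff):
    """Fourier coefficients of T_a u for a, u given as {frequency: coefficient}.

    Only interactions in which the frequency eta of a is small compared with
    the frequency xi of u are kept.
    """
    out = {}
    for eta, a_eta in a.items():
        for xi, u_xi in u.items():
            weight = psi(eta, xi)
            if weight != 0.0:
                out[eta + xi] = out.get(eta + xi, 0) + weight * a_eta * u_xi
    return out
```

In this sketch a constant function `a = {0: c}` acts as multiplication by `c`, a low frequency of `a` against a high frequency of `u` is kept, and a high frequency of `a` against a low frequency of `u` is discarded. The map `a -> paraproduct(a, u)` is linear by construction, in line with the last statement of Lemma 2.1.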

Given two functions \(a, u \in H^s({\mathbb {T}}_1)\) with \(s \ge 1\), their product can be split as

where the remainder \({{\mathcal {R}}}^{(B)}(a, u)\) is given by

Note that the support \(\mathrm{supp}(\omega )\) of \(\omega : {\mathbb {Z}}\times {\mathbb {Z}}\rightarrow {\mathbb {R}}\) satisfies

The main feature of \({{\mathcal {R}}}^{(B)}(a, u)\) is that it is a regularizing bilinear operator in the following sense.

Lemma 2.2

For any \(s_1, s_2 \ge 0\),

is a bilinear map, satisfying

Next, we discuss the standard symbolic calculus for para-differential operators to the extent needed in this paper. It suffices to consider operators of the form

where we recall that for any \(m \in {\mathbb {Z}}\), the Fourier multiplier \(\partial _x^m\) is defined by

Alternatively, \(\partial _x^m\) can be written as the pseudo-differential operator \(\mathrm{Op}( ( \mathrm{i} 2 \pi \xi )^m \chi (\xi ))\) with symbol \(( \mathrm{i} 2 \pi \xi )^m \chi (\xi )\) where \(\chi : {\mathbb {R}}\rightarrow {\mathbb {R}}\) is a \(C^\infty -\)smooth cut-off function, satisfying

The symbol of an operator of the form (2.7) is given by

Lemma 2.3

Let \(a, b \in H^{N+3}({\mathbb {T}}_1)\) with \(N \in {\mathbb {N}}\). Then

where for any \(s \ge 0\),

is a bilinear map, satisfying

Lemma 2.4

Let \(m \in {\mathbb {Z}}\), \(N \in {\mathbb {N}}\). Then there exist an integer \(\sigma _N > N + m\) and combinatorial constants \((K_{n, m})_{1 \le n \le N + m}\), with \(K_{1, m} = m\) so that for any \(a \in H^{\sigma _N}({\mathbb {T}}_1)\)

where for any \(s \ge 0\), the map

is linear and satisfies the estimate

and where we use the customary convention that the sum \(\sum _{n = 1}^{N+m}\) equals 0 if \(N+m < 1\).
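For orientation, the normalization \(K_{1, m} = m\) can be compared with the classical Leibniz rule. The following identity is an analogy and not the definition of the constants \(K_{n, m}\): it assumes \(m \ge 0\), a smooth function a, and ordinary multiplication by a in place of the para-product \(T_a\),

$$\begin{aligned} \partial _x^m ( a u ) = a \, \partial _x^m u + \sum _{n = 1}^{m} \left( {\begin{array}{c}m\\ n\end{array}}\right) (\partial _x^n a) \, \partial _x^{m - n} u , \qquad \left( {\begin{array}{c}m\\ 1\end{array}}\right) = m . \end{aligned}$$

In this model case the coefficient of the term of order \(m - 1\) is m, the same value as \(K_{1, m}\).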

Combining Lemmas 2.3 and 2.4 yields the following

Lemma 2.5

Let \(m, m' \in {\mathbb {Z}}\), \(N \in {\mathbb {N}}\). Then there exists an integer \(\sigma _N > N + m\) so that for any \(a, b \in H^{\sigma _N}({\mathbb {T}}_1)\),

where \(K_{n, m}\) are the combinatorial constants of Lemma 2.4 and where for any \(s \ge 0\), the map

is bilinear and satisfies the estimate

According to Lemma 2.3, in the case \(m=0\), a possible choice is \(\sigma _N= N+ 3\), \(K_{n, 0} = 0\) for \(1 \le n \le N+m'\).

Using that \(K_{1,m} = m\), one infers from Lemma 2.5 an expansion of the commutator \([T_a \partial _x^m \,,\, T_b \partial _x^{m'} ]_{lin}\).

Corollary 2.1

(Commutator expansion). Let \(m, m' \in {\mathbb {Z}}\), \(N \in {\mathbb {N}}\). Then there exists \(\sigma _N > N +m +m'\) so that for any \(a, b \in H^{\sigma _N}({\mathbb {T}}_1)\), \([T_a \partial _x^m \,,\, T_b \partial _x^{m'} ]_{lin} \) has an expansion of the form

where for any \(s \ge 0\), the map

is bilinear and satisfies

According to Lemma 2.3, in the case \(m=0, m'=0\), \([T_a \, ,\, T_b ]_{lin} = {{\mathcal {R}}}_N(a, b) - {{\mathcal {R}}}_N(b, a)\). Hence a possible choice is \(\sigma _N= N+ 3\), \(K_{n, 0} = 0\) for \(1 \le n \le N\).
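The gain of one derivative in a commutator can be checked in the simplest classical case. The sketch below replaces para-multiplication by ordinary multiplication and takes \(m = m' = 1\), so it is an analogue of Corollary 2.1 rather than an instance of it: for smooth a, b one has the exact identity \([a \partial _x , b \partial _x] = (a\, \partial _x b - b\, \partial _x a) \partial _x\), an operator of order \(m + m' - 1 = 1\). Functions are trigonometric polynomials stored as dictionaries of Fourier coefficients with respect to \(e^{\mathrm{i} 2 \pi n x}\); all helper names are hypothetical.

```python
import math

TWO_PI_I = 2j * math.pi


def deriv(u):
    """Fourier coefficients of the derivative of u (multiply by i 2 pi n)."""
    return {n: TWO_PI_I * n * c for n, c in u.items()}


def multiply(a, u):
    """Fourier coefficients of the ordinary product a * u (convolution)."""
    out = {}
    for eta, a_eta in a.items():
        for xi, u_xi in u.items():
            out[eta + xi] = out.get(eta + xi, 0) + a_eta * u_xi
    return out


def subtract(u, v):
    """Fourier coefficients of u - v."""
    out = dict(u)
    for n, c in v.items():
        out[n] = out.get(n, 0) - c
    return out


def distance(u, v):
    """Largest modulus of a Fourier coefficient of u - v."""
    return max((abs(c) for c in subtract(u, v).values()), default=0.0)


def first_order(a):
    """Return the operator u -> a * u' (ordinary multiplication, not T_a)."""
    return lambda u: multiply(a, deriv(u))


def commutator(A, B):
    """Return the operator u -> A(B(u)) - B(A(u))."""
    return lambda u: subtract(A(B(u)), B(A(u)))
```

Comparing `commutator(first_order(a), first_order(b))(u)` with `multiply(subtract(multiply(a, deriv(b)), multiply(deriv(a), b)), deriv(u))` shows that the second order terms cancel, up to rounding errors.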

Finally, we discuss the adjoint \(T_a^\top \) of \(T_a\) with respect to the standard \(L^2-\)inner product.

Lemma 2.6

Let \(a \in H^{N+1}({\mathbb {T}}_1)\) with \(N \in {\mathbb {N}}\). Then \(T_a^\top = T_{ a} + {{\mathcal {R}}}_\top ( a)\) where for any \(s \ge 0\), the map

is linear and for any \(a \in H^{N+1}({\mathbb {T}}_1)\) satisfies \(\Vert {{\mathcal {R}}}_\top (a)\Vert _{{{\mathcal {B}}}(H^s, H^{s + N+1})} \lesssim _{s, N} \Vert a \Vert _{N+1}\).

Combining Lemmas 2.4 and 2.6 yields the following

Corollary 2.2

Let \(m \in {\mathbb {Z}}\), \(N \in {\mathbb {N}}\). Then there exists an integer \(\sigma _N > N + m\) so that for any \(a \in H^{\sigma _N}({\mathbb {T}}_1)\), \((T_a \partial _x^m)^\top \) admits the expansion

where \(K_{n, m}\) are the combinatorial constants of Lemma 2.4, and where for any \(s \ge 0\), the map

is linear and for any \(a \in H^{\sigma _N}({\mathbb {T}}_1)\) satisfies \(\Vert {{\mathcal {R}}}_{\top , N, m}(a)\Vert _{{{\mathcal {B}}}(H^s, H^{s + N + 1})} \lesssim _{s, N} \Vert a \Vert _{\sigma _N}\).

3 Para-Differential Vector Fields

In this section we introduce several classes of vector fields, compute the commutators between vector fields from these classes and study their flows. As part of the proof of Theorem 1.1, these vector fields are used to transform equation (1.4) into normal form.

3.1 Definitions

Definition 3.1

(Para-differential vector fields). Let N, \(p \in {\mathbb {N}}\) and \(m \in {\mathbb {Z}}\). A vector field \(X^\bot \) in normal direction, defined on a subset of \({\mathcal {E}}\) and depending on the parameters \(\varepsilon \) and \(\mu \), is said to be of class \({{\mathcal {O}} B}(m, N)\), \(X^\bot \in {{\mathcal {O}} B}(m, N)\), if it is of the form

and has the following property: there are integers \(\sigma _N, s_N \ge 0\) so that for any \(s \ge s_N\) there exist \(0< \delta \equiv \delta (s, N) < 1\) and \(0< \varepsilon _0 \equiv \varepsilon _0(s, N) < 1\) so that for any \(0 \le k \le N + m\)

is \(C^\infty -\)smooth and together with each of its derivatives bounded. \(X^{\bot }\) is said to be of class \({\mathcal {OB}}^p(m, N)\) if it is in \({\mathcal {OB}}(m, N)\) and in addition, the functions \(a_{m -k}\) are small of order \(p - 1\).

Remark 3.1

(i) If \(N+ m < 0\) in (3.1), the sum is defined to be the zero vector field. As a consequence, \({\mathcal {OB}}(m, N) = \{ 0 \}\) if \(N + m < 0\). Throughout the paper, the same convention holds for any sum of terms, indexed by an empty set, and for any of the used classes of vector fields.

(ii) We point out that the bounds are uniform in the parameter \(\mu \), but no regularity assumptions with respect to \(\mu \) are required. Throughout the paper, the same convention holds.

Definition 3.2

(Fourier multiplier vector fields). Let N, \(p \in {\mathbb {N}}\) and \(m \in {\mathbb {Z}}\). A vector field \({\mathcal {M}}^\bot \) in normal direction, defined on a subset of \({\mathcal {E}}\) and depending on the parameters \(\varepsilon \) and \(\mu \), is said to be of class \({{\mathcal {O}} F}(m, N)\), \({\mathcal {M}}^\bot \in {{\mathcal {O}} F}(m, N)\), if it is of the form

and has the following property: there exist an integer \(\sigma _N \ge 0\), \(0< \delta \equiv \delta (N) <1\), and \(0< \varepsilon _0 \equiv \varepsilon _0(N) < 1\) so that for any \(0 \le k \le N + m\),

is \(C^\infty \)-smooth and together with each of its derivatives bounded. \({\mathcal {M}}^{\bot }\) is said to be of class \({\mathcal {OF}}^p(m, N)\) if it is in \({\mathcal {OF}}(m, N)\) and in addition, the functions \(\lambda _{m -k}\) are small of order \(p - 1\).

Definition 3.3

(Smoothing vector fields). Let N, \(p \in {\mathbb {N}}\). A vector field \({\mathcal {R}}\), defined on a subset of \({\mathcal {E}}\) and depending on the parameters \(\varepsilon \) and \(\mu \), is said to be of class \({\mathcal {OS}}( N)\), \({\mathcal {R}} \in {\mathcal {OS}}(N)\), if there exist \(s_N \ge 0\) so that for any \(s \ge s_N\), there exist \(0< \delta \equiv \delta (s, N) <1\) and \(0< \varepsilon _0 \equiv \varepsilon _0(s, N) < 1\) with the property that

is \(C^\infty \)-smooth and together with each of its derivatives bounded. \({{\mathcal {R}}}\) is said to be of class \({\mathcal {OS}}^p(N)\) if it is in \({\mathcal {OS}}(N)\) and in addition is small of order p.

Remark 3.2

For notational convenience, in the sequel, we refer to a function, which is \(C^\infty \)-smooth and together with each of its derivatives bounded, as a function which is \(C^\infty \)-smooth and bounded.

Next we introduce special classes of vector fields which are small of order 2 with respect to y, w, \(\varepsilon \).

Definition 3.4

Let \(N \in {\mathbb {N}}\) and \(m \in {\mathbb {Z}}\).

(i) Assume that \(X^\bot ({\mathfrak {x}}) = \Pi _\bot \sum _{k = 0}^{m + N} T_{a_{m - k}({\mathfrak {x}})} \partial _x^{m - k} w\) is of class \({\mathcal {OB}}^2(m, N)\).

(i1) \(X^\bot \) is said to be of class \({\mathcal {OB}}^2_{w}(m , N)\) if it is linear with respect to w. As a consequence, for any \(0 \le k \le m + N\), the coefficient \(a_{m - k}\) is small of order one and independent of w. More precisely, there is an integer \(s_N \ge 0\) so that for any \(s \ge s_N\), there exist \(0< \delta \equiv \delta (s, N) < 1\) and \(\varepsilon _0 \equiv \varepsilon _0(s, N) > 0\) with the property that

$$\begin{aligned} a_{m - k} : {\mathbb {T}}^{S_+} {\times } B_{S_+}(\delta ) \times [0, \varepsilon _0] {\rightarrow } H^s({\mathbb {T}}_1), \, (\theta , y, \varepsilon ) \mapsto a_{m - k}(\theta , y) \equiv a_{m - k}(\theta , y, \varepsilon ) \end{aligned}$$is \(C^\infty \)-smooth and bounded (cf. Remark 3.2). In this case, we often write \(X^\bot (\theta , y)[w]\) instead of \(X^\bot ({\mathfrak {x}})\) where

$$\begin{aligned} X^\bot (\theta , y) := \Pi _\bot \sum _{k = 0}^{N+m} T_{a_{m - k}(\theta , y)} \partial _x^{m - k}\, . \end{aligned}$$

(i2) \(X^\bot \) is said to be of class \({\mathcal {OB}}^2_{ww}(m, N)\) if it is quadratic with respect to w and independent of y. As a consequence, for any \(0 \le k \le m + N\), the coefficient \(a_{m - k}\) is linear with respect to w and independent of y. More precisely, there are integers \(s_N \ge 0\), \(\sigma _N \ge 0\) so that for any \(s \ge s_N\) there exist \(0< \delta \equiv \delta (s, N) < 1\) and \(0< \varepsilon _0 \equiv \varepsilon _0(s, N) < 1\) with the property that

$$\begin{aligned} a_{m - k} : {\mathbb {T}}^{S_+} {\times } H_\bot ^{s + \sigma _N} \times [0, \varepsilon _0] \rightarrow H^s({\mathbb {T}}_1), \, (\theta , w, \varepsilon ) \mapsto a_{m - k}(\theta , w) \equiv A_{m - k}(\theta )[w] \, , \end{aligned}$$with

$$\begin{aligned} A_{m - k}: {\mathbb {T}}^{S_+} \times [0, \varepsilon _0] \rightarrow {{\mathcal {B}}}(H^{s + \sigma _N}_\bot ({\mathbb {T}}_1), H^s({\mathbb {T}}_1)), \, (\theta , \varepsilon ) \mapsto A_{m - k}(\theta ) \equiv A_{m - k}(\theta , \varepsilon ) \ \end{aligned}$$being \(C^\infty \)-smooth and bounded. In this case we often write \(X^\bot (\theta , w)[w]\) instead of \(X^\bot ({\mathfrak {x}})\) where

$$\begin{aligned} X^\bot (\theta , w) = \Pi _\bot \sum _{k = 0}^{N+m} T_{A_{m - k}(\theta )[w]} \partial _x^{m - k} \, . \end{aligned}$$

(ii) Assume that \({\mathcal {M}}^\bot ({\mathfrak {x}}) = \sum _{k = 0}^{N + m} \lambda _{m - k}({\mathfrak {x}}) \partial _x^{m - k} w\) is of class \({\mathcal {OF}}^2(m, N)\).

(ii1) \({\mathcal {M}}^\bot \) is said to be of class \({\mathcal {OF}}^2_w(m, N)\) if it is linear with respect to w. More precisely, there exist \(0< \delta \equiv \delta (N) < 1\) and \(0< \varepsilon _0 \equiv \varepsilon _0(N) < 1\) with the property that for any \(0 \le k \le m + N\),

$$\begin{aligned} \lambda _{m - k} : {\mathbb {T}}^{S_+} \times B_{S_+}(\delta ) \times [0, \varepsilon _0] \rightarrow {\mathbb {R}}, \, (\theta , y, \varepsilon ) \mapsto \lambda _{m-k}(\theta , y) \equiv \lambda _{m-k}(\theta , y, \varepsilon ) \end{aligned}$$is \(C^\infty \)-smooth and bounded.

(ii2) \({\mathcal {M}}^\bot \) is said to be of class \({\mathcal {OF}}^2_{ww}(m, N)\) if it is quadratic with respect to w and independent of y. More precisely, there exist an integer \(\sigma _N \ge 0\), \(0< \varepsilon _0 \equiv \varepsilon _0(N) < 1\), and for any \(0 \le k \le m + N\) a \(C^\infty -\)smooth map

$$\begin{aligned} \Lambda _{m -k} : {\mathbb {T}}^{S_+} \times [0, \varepsilon _0] \rightarrow {{\mathcal {B}}}(H^{\sigma _N}_\bot ({\mathbb {T}}_1), {\mathbb {R}}), \, \theta \mapsto \Lambda _{m -k} (\theta ) \equiv \Lambda _{m -k} (\theta , \varepsilon ), \end{aligned}$$so that \(\lambda _{m - k}({\mathfrak {x}}) = \Lambda _{m - k}(\theta )[w]\).

(iii) Assume that \({{\mathcal {R}}}\) is a smoothing vector field of class \({\mathcal {OS}}^2(N)\).

(iii1) \({{\mathcal {R}}}\) is said to be of class \({\mathcal {OS}}_{w}^2(N)\) if \({{\mathcal {R}}}({\mathfrak {x}})\) is of the form \({{\mathfrak {R}}}(\theta , y)[w]\) with \({\mathfrak {R}}\) having the following property: there is an integer \(s_N \ge 0\) so that for any \(s \ge s_N\), there exist \(0< \delta \equiv \delta (s, N) < 1 \) and \(0< \varepsilon _0 \equiv \varepsilon _0(s, N) < 1\) with the property that

$$\begin{aligned}&{{\mathfrak {R}}} : {\mathbb {T}}^{S_+} \times B_{S_+}(\delta ) \times [0, \varepsilon _0] \rightarrow {{\mathcal {B}}}(H^s({\mathbb {T}}_1), H^{s + N + 1}({\mathbb {T}}_1)), (\theta , y, \varepsilon )\\&\quad \mapsto {{\mathfrak {R}}}(\theta , y) \equiv {{\mathfrak {R}}}(\theta , y; \varepsilon ) \end{aligned}$$is \(C^\infty \)-smooth, bounded, and small of order one. In the sequel, we will also write \({{\mathcal {R}}}(\theta , y)[w]\) for \({{\mathfrak {R}}}(\theta , y)[w]\).

(iii2) \({{\mathcal {R}}}\) is said to be of class \({\mathcal {OS}}^2_{ww}(N)\) if \({{\mathcal {R}}}\) is quadratic with respect to w and independent of y. More precisely, \({{\mathcal {R}}}({\mathfrak {x}})\) is of the form \({{\mathfrak {R}}}(\theta )[w, w]\) with \({\mathfrak {R}}\) having the following property: there is an integer \(s_N \ge 0\) so that for any \(s \ge s_N\) there exists \(0< \varepsilon _0 \equiv \varepsilon _0(s, N) < 1\) with the property that

$$\begin{aligned} {{\mathfrak {R}}} : {\mathbb {T}}^{S_+} \times [0, \varepsilon _0] \rightarrow {{\mathcal {B}}}_2\big (H^s_\bot ({\mathbb {T}}_1) , H^{s + N + 1}_\bot ({\mathbb {T}}_1)\big ), \, (\theta , \varepsilon ) \mapsto {{\mathfrak {R}}}(\theta ) \equiv {{\mathfrak {R}}}(\theta , \varepsilon ) \end{aligned}$$is \(C^\infty \)-smooth and bounded. In the sequel, we will often write \({{\mathcal {R}}}(\theta )[w, w]\) instead of \({{\mathfrak {R}}}(\theta )[w, w]\).

Remark 3.3

For any \(N \in {\mathbb {N}}\) and \(m \in {\mathbb {Z}}\), the following inclusions between the classes of vector fields introduced above hold:

These inclusions hold since by (2.1) the operator \(T_\lambda \) of para-multiplication with any constant \(\lambda \in {\mathbb {R}}\) satisfies \(\Pi _\bot T_\lambda = \lambda \Pi _\bot \).

For notational convenience, we will often not distinguish between a vector field X of the form \((0, 0, X^\bot )\) and its normal component \(X^\bot \). Given two vector fields X and Y, defined on a subset of \({\mathcal {E}}\) and depending on the parameters \(\varepsilon \) and \(\mu \), we write

if for any \(1 \le j \le n,\) there exists a vector field \(X_j \in {{\mathcal {O}}}_j\) so that \(X = Y + X_1 + \cdots + X_n\). Here \({{\mathcal {O}}}_j\) denotes any of the classes of vector fields introduced above.

3.2 Commutators

Lemma 3.1

(Commutators I). Let N, p, and q be in \({\mathbb {N}}\).

(i) For any smoothing vector fields \({{\mathcal {R}}}\), \({{\mathcal {Q}}} \in {{\mathcal {OS}}}(N)\), the commutator \([{{\mathcal {R}}}, {{\mathcal {Q}}}]\) is also in \({{\mathcal {OS}}}(N)\).

(ii) For any vector fields \({{\mathcal {R}}} {\in } {{\mathcal {OS}}}^p(N)\) and \({{\mathcal {Q}}} \in {{\mathcal {OS}}}^q(N)\), one has \([{{\mathcal {R}}}, {{\mathcal {Q}}}] \in {{\mathcal {OS}}}^{p + q - 1}(N)\).

Proof

The two items follow from Definition 3.3 (smoothing vector fields) and the definition (1.34) of the commutator. \(\square \)
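The exponent \(p + q - 1\) in item (ii) can be seen in a one-dimensional toy example. It is an illustration only, assuming scalar monomial vector fields \(X(w) = w^p\), \(Y(w) = w^q\) on \({\mathbb {R}}\) and the convention \([X, Y] = d X [Y] - d Y [X]\) for the commutator:

$$\begin{aligned} {[}X, Y](w) = p \, w^{p - 1} \cdot w^{q} - q \, w^{q - 1} \cdot w^{p} = (p - q) \, w^{p + q - 1} . \end{aligned}$$

Each differentiation lowers the order of homogeneity by one and the evaluation at the other vector field raises it by q, respectively p, so that the commutator is homogeneous of order \(p + q - 1\).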

Lemma 3.2

(Commutators II). Let N, p, \(q \in {\mathbb {N}}\) and \(m \in {\mathbb {Z}}\).

If \(X= (0, 0, X^\bot )\) with \(X^\bot \in {{\mathcal {O}} B}(m, N)\) and \({{\mathcal {R}}} = ({\mathcal {R}}^{(\theta )}, {\mathcal {R}}^{(y)}, {\mathcal {R}}^\bot ) \in {{\mathcal {OS}}}(N)\), then

If \(X^\bot \in {{\mathcal {O}} B}^p(m, N)\) and \({{\mathcal {R}}} \in {{\mathcal {OS}}}^q(N)\), then \( {\mathcal {C}}^\bot _{[X, {\mathcal {R}}]} \in {{\mathcal {OB}}}^{p + q -1}(m, N)\) and \({{\mathcal {R}}}_{[X, {\mathcal {R}}]} \in {{\mathcal {OS}}}^{p + q - 1}(N - m)\).

Proof

By (3.1), X can be written as \(X({\mathfrak {x}}) := \sum _{k = 0}^{N + m} X_k({\mathfrak {x}})\) where

For any \(0 \le k \le N+m\), the commutator \( [X_k, {{\mathcal {R}}}] ({\mathfrak {x}})= d X_k({\mathfrak {x}})[{{\mathcal {R}}}({\mathfrak {x}})] - d {{\mathcal {R}}}({\mathfrak {x}}) [X_k({\mathfrak {x}})]\) can be computed as

where \({\mathcal {R}} = ({\mathcal {R}}^{(\theta )}, {\mathcal {R}}^{(y)}, {\mathcal {R}}^\bot )\). Note that \(d {{\mathcal {R}}}({\mathfrak {x}}) [X_k({\mathfrak {x}})] \in {{\mathcal {OS}}}(N - m)\) and that for any \(0 \le k \le N+m\),

Formula (3.3) then follows by setting \( {\mathcal {C}}^\bot _{[X, {\mathcal {R}}]}({\mathfrak {x}}) := \Pi _\bot \sum _{k = 0}^{m + N} T_{d a_{m - k}({\mathfrak {x}})[{{\mathcal {R}}}({\mathfrak {x}})]} \partial _x^{m - k} w\), and

The remaining part of the lemma is proved by using similar arguments. \(\square \)

Lemma 3.3

(Commutators III). Let N, p, \(q \in {\mathbb {N}}\), m, \(m' \in {\mathbb {Z}}\), and let \(m_* := \max \{ m + m' - 1, \, m, \, m' \}\). For any \(X^\bot \in {{\mathcal {OB}}}(m, N)\) and \(Y^\bot \in {{\mathcal {OB}}}(m', N)\), one has

If in fact \(X^\bot \in {{\mathcal {OB}}}^p(m, N)\) and \(Y^\bot \in {{\mathcal {OB}}}^q(m', N)\), then

Proof

By formula (3.1), \(X^\bot \in {{\mathcal {OB}}}(m, N)\) and \(Y^\bot \in {{\mathcal {OB}}}(m', N)\) are of the form

With \(X^\bot = \sum _{k = 0}^{N + m} X^\bot _k\) and \(Y^\bot = \sum _{j = 0}^{N + m'} Y^\bot _j\) one gets

where

To compute \([X^\bot _k, Y^\bot _j] \) for k, j in the corresponding ranges, for notational convenience we let

One computes

Using the formula

and the corresponding one for \(\Pi _\bot T_{b} \partial _x^{n'} \circ \Pi _\bot T_{a} \partial _x^{n}\), one obtains \([X^\bot _*, \, Y^\bot _*] = {{\mathcal {C}}}^\bot _1 + {{\mathcal {R}}}^\bot _1\) where

and

Since by assumption, there exist integers \(s_N \ge 0\), \(\sigma _N \ge 0\), so that for any \(s \ge s_N\) there is \(0< \delta \equiv \delta (s, N) < 1\) and \(0< \varepsilon _0 \equiv \varepsilon _0(s, N) < 1\) with the property that \(a, b: {{\mathcal {V}}}^{s + \sigma _N}(\delta ) \times [0, \varepsilon _0] \rightarrow H^s({\mathbb {T}}_1)\) are \(C^\infty \)-smooth and bounded, it then follows that

and, in view of Corollary 2.1, that

Furthermore, since \(\Pi _\bot - \mathrm{Id}\) is a smoothing operator, one concludes that \( {{\mathcal {R}}}^\bot _1 \in {{\mathcal {OS}}}(N)\). Altogether, we have proved that \([X^\bot _*, \, Y^\bot _*]\) is of the form \({{\mathcal {C}}}^\bot _{[X^\bot _*, \, Y^\bot _*]} + {{\mathcal {R}}}^\bot _{[X^\bot _*, \, Y^\bot _*]}\) where

If in fact \(X_*^\bot \in {{\mathcal {OB}}}^p(m, N)\) and \(Y_*^\bot \in {{\mathcal {OB}}}^q(m', N)\), then a is small of order \(p - 1\), b is small of order \(q - 1\) and it follows that

One then infers that \({{\mathcal {C}}}^\bot _{[X^\bot _*, \, Y^\bot _*]} \in {{\mathcal {OB}}}^{p + q - 1}(n_*, N)\) and \({{\mathcal {R}}}^\bot _{[X^\bot _*, \, Y^\bot _*]} \in {{\mathcal {OS}}}^{p + q - 1}(N)\). \(\square \)

Lemma 3.4

(Commutators IV). Let N, p, \(q \in {\mathbb {N}}\), m, \(m' \in {\mathbb {Z}}\), and let \(m_* := \max \{ m + m' - 1, \, m, \, m' \}\).

(i) For any \({\mathcal {M}}^\bot \in {{\mathcal {OF}}}(m, N)\) and \({\mathcal {M}}'^\bot \in {{\mathcal {OF}}}(m', N)\),

$$\begin{aligned} {[}{\mathcal {M}}^\bot , \, {\mathcal {M}}'^\bot ] \in {{\mathcal {OF}}}\big (m\vee m' , N \big ). \end{aligned}$$If in fact \( {\mathcal {M}}^\bot \in {{\mathcal {OF}}}^p(m, N)\) and \({\mathcal {M}}'^\bot \in {{\mathcal {OF}}}^q(m', N)\), then \([{\mathcal {M}}^\bot , {\mathcal {M}}'^\bot ] \in {{\mathcal {OF}}}^{p + q - 1}(m \vee m' , N )\).

(ii) For any \(X^\bot \in {{\mathcal {OB}}}(m, N)\) and \({\mathcal {M}}^\bot \in {{\mathcal {OF}}}(m', N)\),

$$\begin{aligned} {[}X^\bot , {\mathcal {M}}^\bot ] {=} {{\mathcal {C}}}^\bot _{[X^\bot , {\mathcal {M}}^\bot ]} + {{\mathcal {R}}}^\bot _{[X^\bot , {\mathcal {M}}^\bot ]}, \quad {{\mathcal {C}}}^\bot _{[X^\bot , {\mathcal {M}}^\bot ]} \in {{\mathcal {OB}}}(m_* ,N), \qquad {{\mathcal {R}}}^\bot _{[X^\bot , {\mathcal {M}}^\bot ]} \in {{\mathcal {OS}}}(N). \end{aligned}$$If \(X^\bot \in {{\mathcal {OB}}}^p(m, N)\) and \({\mathcal {M}}^\bot \in {{\mathcal {OF}}}^q(m', N)\), then

$$\begin{aligned} {{\mathcal {C}}}^\bot _{[X^\bot , {\mathcal {M}}^\bot ]} \in {{\mathcal {OB}}}^{p + q - 1}(m_*, N),\qquad {{\mathcal {R}}}^\bot _{[X^\bot , {\mathcal {M}}^\bot ]} \in {{\mathcal {OS}}}^{p + q - 1}(N). \end{aligned}$$

(iii) For any \({\mathcal {M}}= (0, 0, {\mathcal {M}}^\bot )\) with \({\mathcal {M}}^\bot \in {{\mathcal {OF}}}(m, N)\) and \({\mathcal {R}} = \big ( {\mathcal {R}}^{(\theta )}, {\mathcal {R}}^{(y)}, {\mathcal {R}}^\bot \big ) \in {{\mathcal {OS}}}(N)\),

$$\begin{aligned} {[}{\mathcal {M}}, {\mathcal {R}}] = (0,0, {{\mathcal {C}}}^\bot _{[{\mathcal {M}}, {\mathcal {R}}]}) + {{\mathcal {R}}}_{[{\mathcal {M}}, {\mathcal {R}}]}, \qquad {{\mathcal {C}}}^\bot _{[{\mathcal {M}}, {\mathcal {R}}]} \in {{\mathcal {OF}}}(m, N), \qquad {{\mathcal {R}}}_{[{\mathcal {M}}, {\mathcal {R}}]} \in {{\mathcal {OS}}}(N - m). \end{aligned}$$If \({\mathcal {M}}^\bot \in {{\mathcal {OF}}}^p(m, N)\) and \({\mathcal {R}} \in {{\mathcal {OS}}}^q(N)\), then \({{\mathcal {C}}}^\bot _{[{\mathcal {M}}, {\mathcal {R}}]} \in {{\mathcal {OF}}}^{p + q - 1}(m, N)\) and \({{\mathcal {R}}}_{[{\mathcal {M}}, {\mathcal {R}}]} \in {{\mathcal {OS}}}^{p + q - 1}(N)\).

Proof

Since the claims of the lemma follow by arguing as in the proofs of Lemma 3.2 and Lemma 3.3, the details of the proofs are omitted. \(\square \)

3.3 Flows of para-differential vector fields

In this subsection we study the flow of para-differential vector fields of the form \(Y = (0, 0, \, Y^\bot )\) with

By Definition 3.1, there are integers \(s_N \ge 0\), \(\sigma _N\ge 0\) so that for any \(s \ge s_N\) there exist \(0< \delta \equiv \delta (s, N) < 1\) and \(0< \varepsilon _0 \equiv \varepsilon _0(s, N) < 1\) with the property that

is \(C^\infty -\)smooth and bounded. In the sequel, we will often tacitly increase \(s_N\), \(\sigma _N\) and decrease \(\delta \equiv \delta (s, N)\), \(\varepsilon _0\equiv \varepsilon _0(s, N)\), whenever needed.

Denote by \(\Phi _Y (\tau , \cdot )\) the flow associated with Y. By the standard existence and uniqueness theorem for ODEs in Banach spaces, for any \(s \ge s_N\), there exist \(0< \delta \equiv \delta (s, N) < 1\), and \(0 < \varepsilon _0 \equiv \varepsilon _0(s, N) \ll \delta \), so that for any \(-1 \le \tau \le 1\),

It then follows that for \(-1 \le \tau \le 1\) and \({\mathfrak {x}} \in {\mathcal {V}}^s(\delta ),\) one has \(\Phi _Y(- \tau , \Phi _Y(\tau , {\mathfrak {x}})) = {\mathfrak {x}}\).

Remark 3.4

For notational convenience, \(\Phi _Y(- \tau , \cdot )\) is referred to as the inverse of \(\Phi _Y(\tau , \cdot )\) and we write \(\Phi _Y(\tau , \cdot )^{-1} = \Phi _Y(- \tau , \cdot )\). In particular, \(\Phi _Y(1, \cdot )^{-1} = \Phi _Y(- 1, \cdot )\). Using our convention of tacitly decreasing \(\delta \) and \(\varepsilon _0\), if needed, \(\Phi _Y(\tau , \cdot )^{-1}\) is defined for \(({\mathfrak {x}}, \varepsilon ) \in {{\mathcal {V}}}^s(\delta ) \times [0, \varepsilon _0]\). More generally, a similar convention is used for diffeomorphisms between neighborhoods of \({\mathbb {T}}^{S_+} \times 0 \times 0\) in \({\mathcal {E}}_s\) throughout the paper.
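
The corresponding formula for the inverse of the differential follows from the chain rule; the short computation is recorded here only for convenience and is valid on the (possibly smaller) neighborhoods on which both compositions are defined. Differentiating the identity \(\Phi _Y(- \tau , \Phi _Y(\tau , {\mathfrak {x}})) = {\mathfrak {x}}\) with respect to \({\mathfrak {x}}\) in direction \(\widehat{{\mathfrak {x}}}\) yields

$$\begin{aligned} d \Phi _Y\big (- \tau , \Phi _Y(\tau , {\mathfrak {x}})\big ) \big [ d \Phi _Y(\tau , {\mathfrak {x}})[\widehat{{\mathfrak {x}}}] \big ] = \widehat{{\mathfrak {x}}}. \end{aligned}$$

Differentiating in the same way the identity \(\Phi _Y(\tau , \Phi _Y(- \tau , {\mathfrak {x}}')) = {\mathfrak {x}}'\) at \({\mathfrak {x}}' = \Phi _Y(\tau , {\mathfrak {x}})\) shows that \(d \Phi _Y(- \tau , \Phi _Y(\tau , {\mathfrak {x}}))\) is also a right inverse of \(d \Phi _Y(\tau , {\mathfrak {x}})\), and hence \(d \Phi _Y(\tau , {\mathfrak {x}})^{-1} = d \Phi _Y(- \tau , \Phi _Y(\tau , {\mathfrak {x}}))\).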

The following lemma provides a para-differential expansion of the flow \(\Phi _Y (\tau , \cdot )\).

Lemma 3.5

Let N, \(p \in {\mathbb {N}}\) and assume that the normal component \(Y^\bot \) of \(Y = (0, 0, \, Y^\bot )\) satisfies (3.4). Then for any \(-1 \le \tau \le 1\), \(\Phi _Y(\tau , {\mathfrak {x}})\) admits an expansion of the form

where

Proof

The normal component \(\Phi _Y^\bot (\tau , {\mathfrak {x}})\) of the flow \(\Phi _Y(\tau , {\mathfrak {x}})\) satisfies the integral equation

To solve it, we make the ansatz that \(\Phi _Y^\bot (\tau , {\mathfrak {x}})\) admits an expansion of the form

with the property that there exist \(s_N \ge 0\), \(\sigma _N \ge 0\) so that the following holds: for any \(s \ge s_N\), there exist \(0< \delta \equiv \delta (s, N) < 1\) and \(0< \varepsilon _0 \equiv \varepsilon _0(s, N) < 1\) so that for any \(-1 \le \tau \le 1\) and \( 0 \le k \le N+m\),

To determine \(\big ( b_{m-k}\big )_{0 \le k \le N+m}\) and \( {{\mathcal {R}}}^\bot _{N}\), in terms of the coefficient \(a_{m}\) of \(Y^\bot \) in (3.4), we compute the expansion of the right hand side of the Eq. (3.6) by substituting the ansatz (3.7) into the integrand \(Y^\bot (\Phi _{Y}(t, {\mathfrak {x}}))\). In view of definition (3.4) of \(Y^\bot \), one gets for any \(-1 \le t \le 1\),

Using that \(\Pi _\bot - \mathrm{Id}\) is a smoothing operator and that

one gets

where we recall that \(m \le 0\) and that by our convention, a sum of terms over an empty index set equals 0. Moreover, by increasing \(s_N, \sigma _N\) if needed, it follows that for any \(s \ge s_N\) and \(-1 \le t \le 1,\) the map \(A(t, {\mathfrak {x}}) := \Pi _\bot T_{a_{m}(\Phi _Y(t, {\mathfrak {x}}))} \partial _x^{m}\) satisfies (after decreasing \(\delta \) and \(\varepsilon _0\) if necessary)

and hence in view of (3.8),

In view of (3.10)–(3.11), we rewrite (3.9) as

Since \(a_{m}\) and \(b_{m-k}\) are small of order \(p - 1\) (cf. (3.8)), it follows from Lemma 2.5 that for any \(0 \le k \le N + 2m\), the term \(T_{a_{m}(\Phi _Y(t, {\mathfrak {x}}))} \partial _x^{m} T_{b_{m-k}(t, {\mathfrak {x}})} \partial _x^{m-k} w \) has an expansion of the form

with the constants K(j, m) given as in Lemma 2.5, implying that

where \(g_{2m}(t, {\mathfrak {x}}) = a_{m}(\Phi _{Y}(t, {\mathfrak {x}})) b_{m}(t, {\mathfrak {x}})\) and for any \(1 \le i \le N + 2m\),

Combining (3.6)–(3.15) then yields the following identity,

Let us first consider the case where \(m \le -1\). We then require that the coefficients \(b_{m-k}\), \(0 \le k \le N+m\), satisfy the following system of equations,

Since for any \( |m| + 1 \le k \le N + 2m\), \(g_{m-k}\) only depends on \(b_{m-k'}\) with \(k' \le k + m \le k -1\) (cf. (3.15)), the coefficients \(b_{m-k}\) are determined inductively in terms of \(a_{m}\). One then verifies that the properties of the coefficients \(b_{m-k}\), stated in ansatz (3.8), are satisfied. The remainder \({{\mathcal {R}}}^\bot _{N}\) then satisfies the following integral equation

where \({{\mathcal {Q}}}^\bot _{N}(\tau , \cdot ) \in {{\mathcal {OS}}}^{2 p- 1}(N)\) is given by the sum of the two terms in (3.10) and the operator \(A(t, {\mathfrak {x}})\) is defined in (3.11). By increasing \(s_N\) if needed, it follows that for any \(s \ge s_N\),

and hence by the Gronwall Lemma, one infers that \({{\mathcal {R}}}^\bot _N\) satisfies

implying that \( \Vert {{\mathcal {R}}}^\bot _N(\tau , {\mathfrak {x}}) \Vert _{s + N + 1} \lesssim _{s, N} (\varepsilon + \Vert y \Vert + \Vert w \Vert _s)^{2 p - 1}\). Similar estimates hold for the derivatives of \({{\mathcal {R}}}^\bot _N\). Altogether we have shown that \({{\mathcal {R}}}^\bot _N \in {{\mathcal {OS}}}^{2 p - 1}(N)\).
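
For the reader's convenience, we recall the integral form of the Gronwall Lemma that suffices for an estimate of this type; the symbols f, \(\alpha \), and \(\beta \) are introduced here for illustration only. Let \(f : [-1, 1] \rightarrow [0, \infty )\) be continuous and assume that there exist constants \(\alpha , \beta \ge 0\) so that \(f(\tau ) \le \alpha + \beta \big | \int _0^\tau f(t) \, dt \big |\) for any \(-1 \le \tau \le 1\). Then

$$\begin{aligned} f(\tau ) \le \alpha \, e^{\beta |\tau |}, \qquad -1 \le \tau \le 1. \end{aligned}$$

Assuming that the integral equation for \({{\mathcal {R}}}^\bot _N\) is of the form "\({{\mathcal {Q}}}^\bot _N\) plus the integral of \(A(t, {\mathfrak {x}})\) applied to \({{\mathcal {R}}}^\bot _N\)", as described above, one would take f to be the relevant Sobolev norm of \({{\mathcal {R}}}^\bot _N(\tau , {\mathfrak {x}})\), \(\alpha \) a bound for the same norm of \({{\mathcal {Q}}}^\bot _N(\tau , {\mathfrak {x}})\), uniform in \(\tau \), and \(\beta \) a bound for the operator norm of \(A(t, {\mathfrak {x}})\), uniform in t.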

Finally, let us consider the case \(m=0\). We then require that the coefficients \(b_{-k}\), \(0 \le k \le N\), satisfy the following system of equations,

The solution \(b_0\) then reads \(b_{0}(\tau , {\mathfrak {x}}) = e^{\int _0^\tau a_{0}(\Phi _Y(t, {\mathfrak {x}})) \, dt} -1\). The remaining part of the proof then follows as in the case \(m \le -1\). \(\square \)
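
The formula for \(b_0\) in the case \(m = 0\) can be checked directly. As a sketch, assume that the equation for \(b_0\) is the scalar linear integral equation \(b_0(\tau , {\mathfrak {x}}) = \int _0^\tau a_0(\Phi _Y(t, {\mathfrak {x}})) \big ( 1 + b_0(t, {\mathfrak {x}}) \big ) \, dt\); this is what one obtains by comparing the terms of order 0 after substituting the ansatz into the integral equation for \(\Phi _Y^\bot \) and using that \(T_{a_0} T_{b_0}\) coincides with \(T_{a_0 b_0}\) up to terms of lower order. Setting \(u := 1 + b_0\) (a symbol used only here), this equation is equivalent to

$$\begin{aligned} \partial _\tau u(\tau , {\mathfrak {x}}) = a_0(\Phi _Y(\tau , {\mathfrak {x}})) \, u(\tau , {\mathfrak {x}}), \qquad u(0, {\mathfrak {x}}) = 1, \end{aligned}$$

whose unique solution is \(u(\tau , {\mathfrak {x}}) = e^{\int _0^\tau a_{0}(\Phi _Y(t, {\mathfrak {x}})) \, dt}\), in agreement with the stated expression for \(b_0 = u - 1\). Since \(e^z - 1 = z + O(z^2)\) as \(z \rightarrow 0\), \(b_0\) inherits the smallness of \(a_0\).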

Lemma 3.6

Let N, \(p \in {\mathbb {N}}\) and let \(\Phi _Y(\tau , {\mathfrak {x}})\) denote the flow map considered in Lemma 3.5, corresponding to the vector field \(Y = (0, 0, \, Y^\bot )\), with \(Y^\bot ({\mathfrak {x}}) = \Pi _\bot T_{a_{m}({\mathfrak {x}})} \partial _x^{ m} w\) and \(m \le 0\), satisfying (3.4). Then for any \(-1\le \tau \le 1,\) \(d \Phi _Y( \tau , {\mathfrak {x}})^{- 1}[\widehat{{\mathfrak {x}}}]\) admits an expansion of the form

with the following properties: there exist \(s_N\), \(\sigma _N \ge N\) so that for any \(s \ge s_N\), there exist \(\delta \equiv \delta (s, N) > 0\) and \(0< \varepsilon _0 \equiv \varepsilon _0(s, N) < 1\) so that the following holds: for any \(0 \le k \le N + m\) and \(-1 \le \tau \le 1\),

with \(b_{m- k}(\tau , \cdot )\), \(B_{m- k}(\tau , \cdot )\), and \({{\mathcal {R}}}^\bot _N(\tau , \cdot )\) being small of order \(p - 1\), and the expansion above holds for any \({\mathfrak {x}} \in {\mathcal {V}}^{s+\sigma _N}(\delta )\) and \(\widehat{{\mathfrak {x}}} \in E_{s+\sigma _N}\).

Proof

First we note that for any \(-1 \le \tau \le 1\), \(d \Phi _Y(\tau , {\mathfrak {x}})^{- 1} = d \Phi _Y(- \tau , \Phi _Y(\tau , {\mathfrak {x}})) \) and that by Lemma 3.5,

with \(b_{m-k}(\tau , \cdot ; \Phi _Y) \in C^\infty _b\big ( {{\mathcal {V}}}^{s + \sigma _N}(\delta ) \times [0, \varepsilon _0], \, H^s({\mathbb {T}}_1) \big )\) being small of order \(p - 1\) and \({{\mathcal {R}}}^\bot _N(\tau , \cdot ; \Phi _Y) \in {{\mathcal {OS}}}^p(N)\). To simplify notation, let \({\widetilde{b}}_{m-k}(\tau , {\mathfrak {x}}):= b_{m-k}(\tau , {\mathfrak {x}}; \Phi _Y)\) and \(\widetilde{{\mathcal {R}}}^\bot _N(\tau , {\mathfrak {x}}) := {{\mathcal {R}}}^\bot _N(\tau , {\mathfrak {x}}; \Phi _Y)\). Then the normal component of \(d \Phi _Y( \tau , {\mathfrak {x}})^{- 1}[\widehat{{\mathfrak {x}}}] - \widehat{{\mathfrak {x}}}\) can be computed as follows

By expanding the terms \(T_{d {\widetilde{b}}_{m-k}(- \tau , \Phi _Y(\tau , {\mathfrak {x}}))[\widehat{{\mathfrak {x}}}]} \partial _x^{m-k} \Phi _Y^\bot (\tau , {\mathfrak {x}})\) with the help of Lemma 2.5, one is led to define \(b_{m-k}(\tau , {\mathfrak {x}})\), \(B_{m-k}(\tau , {\mathfrak {x}})\), and \({{\mathcal {R}}}^\bot _N(\tau , {\mathfrak {x}})\) with the claimed properties. \(\square \)

Combining Lemmas 3.5 and 3.6, one obtains an expansion of the pullback of various types of vector fields by the time one flow map \(\Phi _Y(1, \cdot )\):

Lemma 3.7

Let N, p, \(q \in {\mathbb {N}}\) and let \(\Phi _Y(1, {\mathfrak {x}})\) denote the time one flow map, corresponding to the vector field \(Y = (0, 0, \, Y^\bot )\), with \(Y^\bot ({\mathfrak {x}}) = \Pi _\bot T_{a_{m}({\mathfrak {x}})} \partial _x^{ m} w\) and \(m \le 0\), satisfying (3.4) (cf. Lemma 3.5). Then the following holds:

(i)

For any \(X := (0,0, X^\bot )\) with \(X^\bot \in {{\mathcal {OB}}}^q(n, N)\) and \(n \ge 0\), the pullback \(\Phi _Y^* X({\mathfrak {x}}) = d\Phi _Y(1, {\mathfrak {x}})^{-1} X(\Phi _Y(1, {\mathfrak {x}}))\) of X by \(\Phi _Y(1, \cdot )\) admits an expansion of the form

$$\begin{aligned} \Phi _Y^* X ({\mathfrak {x}})= \big (0, 0, \, X^\bot ({\mathfrak {x}}) + \Upsilon ^\bot ({\mathfrak {x}}) + {{\mathcal {R}}}_N^\bot ({\mathfrak {x}}) \big ) \end{aligned}$$where \(\Upsilon ^\bot \in {{\mathcal {OB}}}^{p + q - 1}(n, N)\) and \({{\mathcal {R}}}_N^\bot \in {{\mathcal {OS}}}^{p + q - 1}(N)\).

(ii)

For any X in \({{\mathcal {OS}}}^q(N)\), the pullback \(\Phi _Y^* X\) of X by \(\Phi _Y(1, \cdot )\) admits an expansion of the form

$$\begin{aligned} \Phi _Y^* X({\mathfrak {x}}) = X({\mathfrak {x}}) + \big (0, 0, \, \Upsilon ^\bot ({\mathfrak {x}}) \big ) + {{\mathcal {R}}}_N({\mathfrak {x}}) \end{aligned}$$where \( \Upsilon ^\bot \in {{\mathcal {OB}}}^{p + q - 1}(m, N)\) and \({{\mathcal {R}}}_N \in {{\mathcal {OS}}}^{p + q - 1}(N)\).

Proof

We only prove item (i) since item (ii) can be proved by similar arguments. Since by (1.36) with \(\tau =1\)

we analyze for any \(t \in [0, 1]\) the vector field

Recall that \(Y^\bot ({\mathfrak {x}}) = \Pi _\bot T_{a_{m}({\mathfrak {x}})} \partial _x^{m} w \in {{\mathcal {OB}}}^p(m, N)\). Taking into account that \(m_* = \max \{n+m-1, m, n\} = n\) (since \(n \ge 0 \ge m\)), it follows from Lemma 3.3 that \([X, Y] = \big (0, 0, [X^\bot , Y^\bot ]\big )\) satisfies

By Definitions 3.1–3.3, and Lemma 3.5, Lemma 3.6, as well as Lemma 2.5, one obtains

with \(\Upsilon ^\bot ({\mathfrak {x}}) \in {{\mathcal {OB}}}^{p + q - 1}(n, N)\) and \({{\mathcal {R}}}_N^\bot ({\mathfrak {x}}) \in {{\mathcal {OS}}}^{p + q - 1}(N)\). \(\square \)
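
The identity \(m_* = n\) used in the proof above follows directly from \(n \ge 0 \ge m\): since \(m \le 0\), one has

$$\begin{aligned} n + m - 1 \le n - 1 < n, \qquad m \le 0 \le n, \qquad \text {hence} \quad \max \{ n + m - 1, \, m, \, n \} = n. \end{aligned}$$

For instance, with values chosen purely for illustration, \(n = 1\) and \(m = -2\) give \(\max \{ -2, -2, 1 \} = 1\).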

Next we analyze the pullback \(\Phi _Y^* X_{{\mathcal {N}}}\) of the Hamiltonian vector field \(X_{{{\mathcal {N}}}}({\mathfrak {x}})\) with \({\mathcal {N}}\) being the following Hamiltonian in normal form (cf. (4.15)),

where the Fourier multipliers \(D^{- 1}_\bot \) and \(\Omega _\bot \equiv \Omega _\bot (\omega )\) are given by (1.42) and Q is assumed to be a map in \(C^\infty _b(B_{S_+}(\delta ) \times [0, \varepsilon _0], \, {\mathbb {R}})\) with \(Q(0) = 0\) and \(\nabla _y Q(0) = 0\). Since \(\partial _x D_\bot ^{- 1} \Omega _\bot = \mathrm{i} \Omega _\bot \), the vector field \(X_{{{\mathcal {N}}}}({\mathfrak {x}})\) then reads

and its differential is given by

Note that \({{\mathcal {N}}}({\mathfrak {x}})\) does not depend on \(\theta \), but only on y, w, and \(\varepsilon \). For notational convenience, we will often write \({{\mathcal {N}}}(y, w)\) instead of \({{\mathcal {N}}}({\mathfrak {x}})\). The following result on the expansion of \(\mathrm{i} \Omega _\bot \) can be found in [27].

Lemma 3.8

([27, Lemma C.7]). For any \(N \in {\mathbb {N}}\), the Fourier multiplier \(\mathrm{i} \Omega _\bot \) has an expansion of the form

where \(c_{- k}\equiv c_{-k}(\omega )\) are real constants, depending only on the parameter \(\omega \in \Pi \), and \({{\mathcal {R}}}^\bot _N \equiv {\mathcal {R}}_N^\bot (\omega )\) is in \({{\mathcal {B}}}(H^s_\bot ({\mathbb {T}}_1), H^{s + N + 1}_\bot ({\mathbb {T}}_1))\) for any \(s \in {\mathbb {R}}\).

Lemma 3.9

Let \(X_{{\mathcal {N}}}\) be the vector field given by (3.22) and \(Y = (0, 0, \, Y^\bot )\) be the vector field with \(Y^\bot ({\mathfrak {x}}) = \Pi _\bot T_{a_{m}({\mathfrak {x}})} \partial _x^{ m} w\) and \(m \le 0\), satisfying (3.4) with \(p, N \in {\mathbb {N}}\). Furthermore let \(\Phi _Y(1, {\mathfrak {x}})\) be the time one flow map corresponding to the vector field Y (cf. Lemma 3.5). Then the following holds:

(i)

If in addition \(Y^\bot ({\mathfrak {x}}) = \Pi _\bot T_{a_{m}({\mathfrak {x}})} \partial _x^{ m} w\) is in \({{\mathcal {OB}}}^2_{w}(m, N)\), hence \(a_m({\mathfrak {x}}) \equiv a_m(\theta , y)\) independent of w, and if \(\langle a_{m}({\mathfrak {x}}) \rangle _x = 0\), then \([X_{{\mathcal {N}}}, Y]\) is of the form \(\big ( 0, 0 , \, [X_{{\mathcal {N}}}, Y]^\bot \big )\) with \([X_{{\mathcal {N}}}, Y]^\bot \in {{\mathcal {OB}}}^2(2 + m, N)\) and admits an expansion of the form

$$\begin{aligned} {[}X_{{\mathcal {N}}}, Y]^\bot ({\mathfrak {x}}) = \Pi _\bot T_{-3\partial _x a_{m}({\mathfrak {x}})} \partial _x^{2 + m} w + {\mathcal {C}}^\bot ( {\mathfrak {x}}) + {{\mathcal {R}}}^\bot _{N}( {\mathfrak {x}}) + {{\mathcal {OB}}}^3(m, N), \end{aligned}$$where \({\mathcal {C}}^\bot ( {\mathfrak {x}}) \in {{\mathcal {OB}}}^2_{w}(1 + m, N)\) and \({{\mathcal {R}}}^\bot _{N}( {\mathfrak {x}}) \in {{\mathcal {OS}}}_{w}^2(N)\). Moreover \({\mathcal {C}}^\bot ( {\mathfrak {x}})\) and \({{\mathcal {R}}}^\bot _{N}( {\mathfrak {x}})\) are of the form \({\mathcal {C}}^\bot ( {\mathfrak {x}}) = {\mathcal {C}}^\bot ( \theta , y) [w]\) and, respectively, \({{\mathcal {R}}}^\bot _{N}( {\mathfrak {x}}) = {{\mathcal {R}}}^\bot _{N}(\theta , y)[w] \), and the diagonal matrix elements of \({\mathcal {C}}^\bot ( \theta , y)\) and \({{\mathcal {R}}}^\bot _{N}(\theta , y)\) vanish,